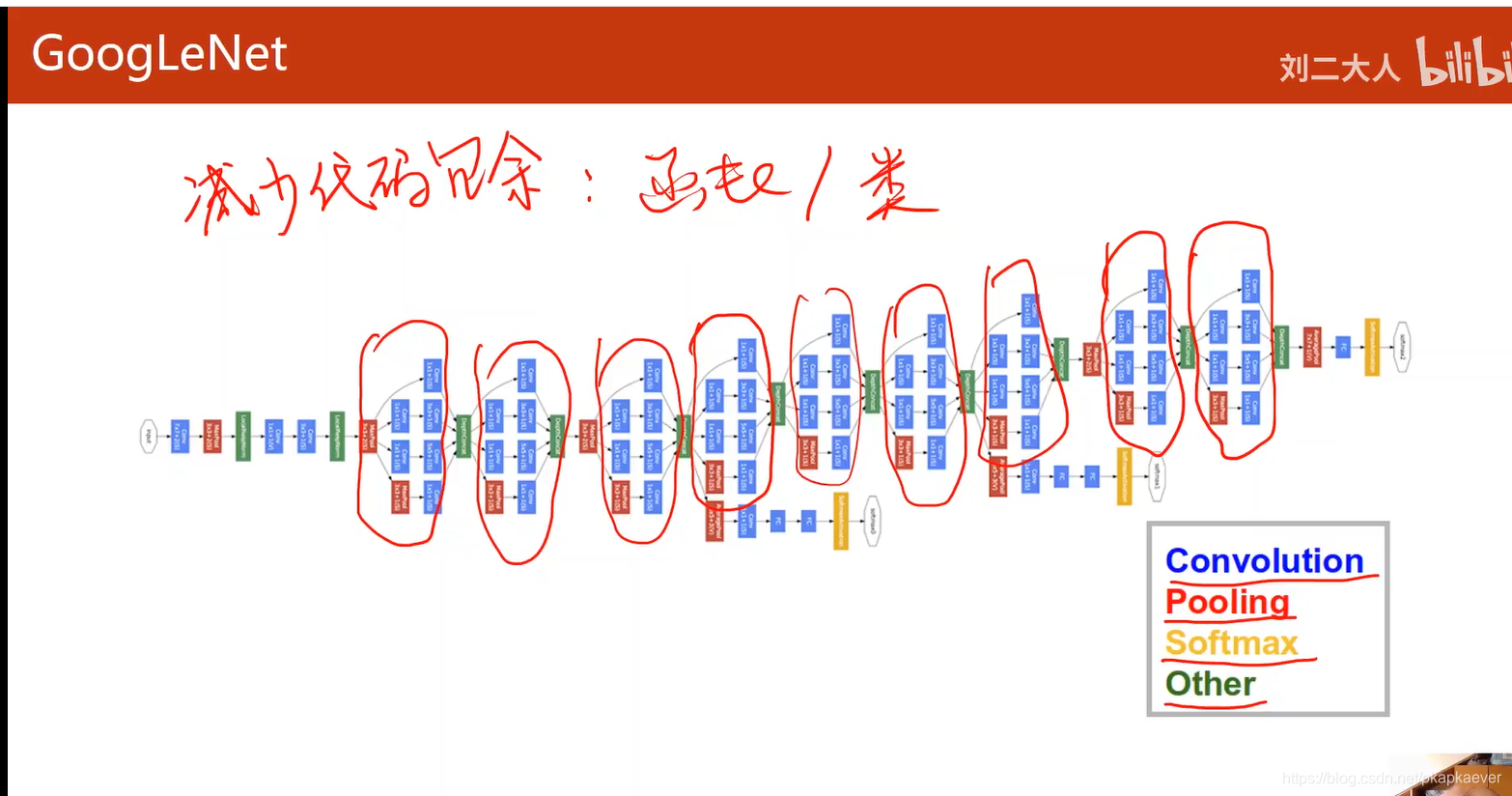

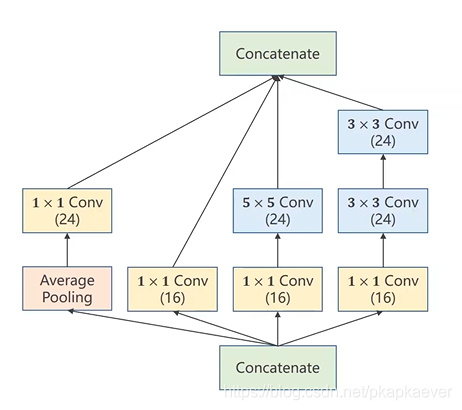

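1. The block circled in red is called an Inception module.

2. Concatenate joins the outputs of the branches into a single tensor (same height and width, different numbers of channels).

3. Average pooling: stride=1 and padding=1 are set explicitly so that, with a 3x3 window, the output keeps the height and width of the input (it behaves like a convolution with an averaging kernel).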

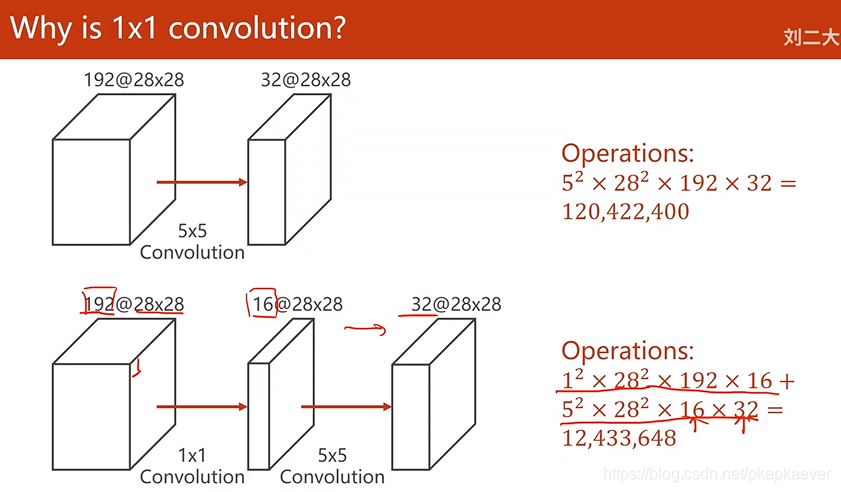

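4. A 1x1 convolution uses a kernel of size 1x1. It combines the values found at the same pixel position in different channels, which amounts to fusing information across channels.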

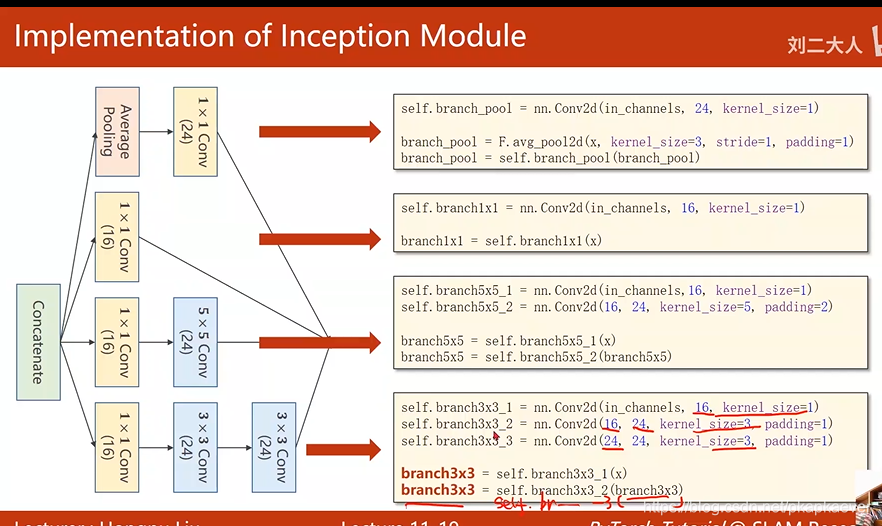
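The channel-fusion view of a 1x1 convolution can be checked with a small sketch. The tensor sizes below are hypothetical, chosen only for illustration; the point is that the convolution gives the same result as multiplying the channel vector at each pixel by a weight matrix.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical sizes chosen only for illustration.
x = torch.randn(2, 3, 5, 5)                         # batch=2, 3 channels, 5x5 pixels
conv = nn.Conv2d(3, 4, kernel_size=1, bias=False)   # 1x1 convolution, 3 -> 4 channels

y = conv(x)

# The same result computed by hand: at every pixel, multiply the channel
# vector by the (out_channels x in_channels) weight matrix.
w = conv.weight.view(4, 3)                          # drop the trailing 1x1 kernel dims
y_manual = torch.einsum('oc,bchw->bohw', w, x)

print(y.shape)                                      # torch.Size([2, 4, 5, 5])
print(torch.allclose(y, y_manual, atol=1e-5))
```

Height and width are untouched; only the channel dimension changes, which is why 1x1 convolutions are a cheap way to adjust the channel count before a larger kernel.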

Implementing the Inception module

The branch outputs are concatenated along the channel dimension (dim=1).
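As a quick shape check, here is a minimal sketch of the two operations that make the concatenation possible. The input size is an assumption for illustration, and the zero tensors are stand-ins for the real branch outputs.

```python
import torch
import torch.nn.functional as F

x = torch.randn(1, 10, 12, 12)   # hypothetical input: 10 channels, 12x12

# 3x3 average pooling with stride=1 and padding=1 keeps height and width.
pooled = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)
print(pooled.shape)              # torch.Size([1, 10, 12, 12])

# Stand-ins for the four branch outputs: same H and W, different channels.
branches = [torch.zeros(1, c, 12, 12) for c in (16, 24, 24, 24)]
out = torch.cat(branches, dim=1)
print(out.shape)                 # torch.Size([1, 88, 12, 12])
```

`torch.cat` requires every dimension except the one being concatenated to match, which is why each branch has to preserve height and width.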

import torch
from torch.utils.data import DataLoader
from torchvision import transforms
from torchvision import datasets
import torch.nn.functional as F
import matplotlib.pyplot as plt
import torch.nn as nn
import time
import datetime

batch_size = 64

# ToTensor scales the raw pixel values to [0, 1]; Normalize then standardizes
# them with mean 0.1307 and standard deviation 0.3081.
transform = transforms.Compose([transforms.ToTensor(), transforms.Normalize((0.1307,), (0.3081,))])

# Download (if needed) and load the training set, then split it into batches.
train_dataset = datasets.MNIST(root='../dataset/mnist', train=True, download=True, transform=transform)
train_loader = DataLoader(train_dataset, shuffle=True, batch_size=batch_size)

# Test set
test_dataset = datasets.MNIST(root='../dataset/mnist', train=False, download=True, transform=transform)
test_loader = DataLoader(test_dataset, shuffle=False, batch_size=batch_size)

class InceptionA(nn.Module):
    def __init__(self, in_channels):
        super(InceptionA, self).__init__()
        # Branch 1: 1x1 convolution
        self.branch1x1 = nn.Conv2d(in_channels, 16, kernel_size=1)

        # Branch 2: 1x1 convolution followed by a 5x5 convolution
        self.branch5x5_1 = nn.Conv2d(in_channels, 16, kernel_size=1)
        self.branch5x5_2 = nn.Conv2d(16, 24, kernel_size=5, padding=2)

        # Branch 3: 1x1 convolution followed by two 3x3 convolutions
        self.branch3x3_1 = nn.Conv2d(in_channels, 16, kernel_size=1)
        self.branch3x3_2 = nn.Conv2d(16, 24, kernel_size=3, padding=1)
        self.branch3x3_3 = nn.Conv2d(24, 24, kernel_size=3, padding=1)

        # Branch 4: average pooling (applied in forward) followed by a 1x1 convolution
        self.branch_pool = nn.Conv2d(in_channels, 24, kernel_size=1)

    def forward(self, x):
        branch1x1 = self.branch1x1(x)

        branch5x5 = self.branch5x5_1(x)
        branch5x5 = self.branch5x5_2(branch5x5)

        branch3x3 = self.branch3x3_1(x)
        branch3x3 = self.branch3x3_2(branch3x3)
        branch3x3 = self.branch3x3_3(branch3x3)

        branch_pool = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)
        branch_pool = self.branch_pool(branch_pool)

        # Concatenate along the channel dimension.
        # Output shape: batch_size x C x H x W, with C = 16 + 24 + 24 + 24 = 88.
        outputs = [branch1x1, branch5x5, branch3x3, branch_pool]
        return torch.cat(outputs, dim=1)

class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = nn.Conv2d(1, 10, kernel_size=5)
        self.conv2 = nn.Conv2d(88, 20, kernel_size=5)

        self.incep1 = InceptionA(in_channels=10)
        self.incep2 = InceptionA(in_channels=20)

        self.mp = nn.MaxPool2d(2)
        self.fc = nn.Linear(1408, 10)  # 88 channels x 4 x 4 = 1408 features

    def forward(self, x):
        in_size = x.size(0)
        x = F.relu(self.mp(self.conv1(x)))  # 10 output channels
        x = self.incep1(x)                  # 88 output channels
        x = F.relu(self.mp(self.conv2(x)))  # 20 output channels
        x = self.incep2(x)                  # 88 output channels
        x = x.view(in_size, -1)             # flatten to (batch_size, 1408)
        x = self.fc(x)
        return x

model = Net()
device = torch.device('cuda:0' if torch.cuda.is_available() else 'cpu')  # use the GPU if one is available
model.to(device)  # move the model parameters to the selected device

# --- Loss function and optimizer
criterion = torch.nn.CrossEntropyLoss()  # cross-entropy loss
optimizer = torch.optim.SGD(model.parameters(), lr=0.01, momentum=0.5)

def train(epoch):

running_loss = 0.0 #计算loss的累积

#num = []

#loss_list = []

for batch_idx, data in enumerate(train_loader, 0):

inputs, target = data

inputs, target = inputs.to(device), target.to(device)#将数据加载到GPU

optimizer.zero_grad()

#forward + backward + update

outputs = model(inputs)

loss = criterion(outputs, target)

loss.backward()

optimizer.step()

running_loss += loss.item()

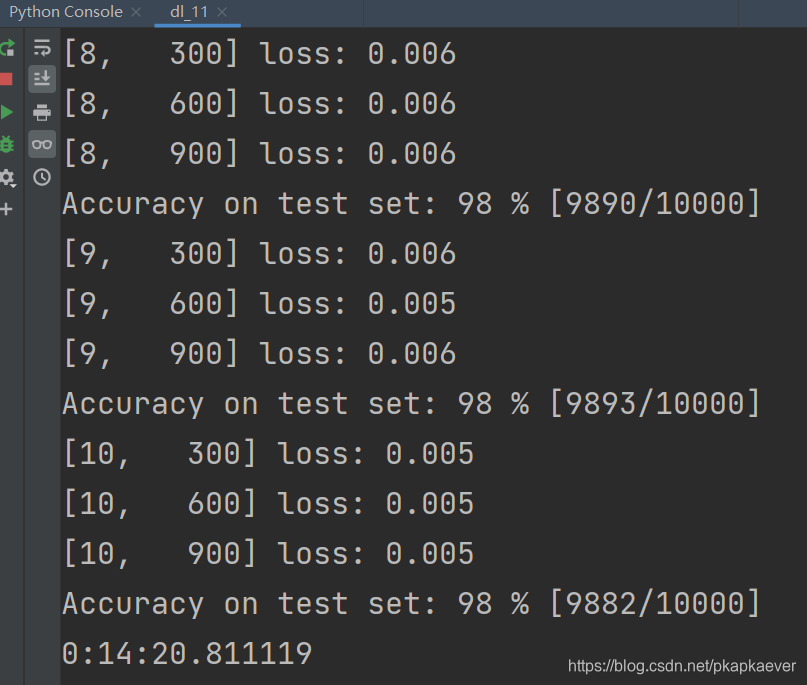

if batch_idx % 300 == 299:#每训练300个数据输出一次

print('[%d, %5d] loss: %.3f' % (epoch + 1, batch_idx + 1, running_loss / 2000))

running_loss = 0.0

'''num.append(batch_idx)

loss_list.append(loss.item())

plt.plot(num, loss_list)

plt.xlabel('num')

plt.ylabel('loss')

plt.show() # 绘图'''

def test():
    correct = 0
    total = 0
    with torch.no_grad():  # gradients are not needed for evaluation
        for data in test_loader:
            images, labels = data
            images, labels = images.to(device), labels.to(device)  # move the batch to the device
            outputs = model(images)
            _, predicted = torch.max(outputs, dim=1)  # index of the largest score is the predicted class
            total += labels.size(0)
            correct += (predicted == labels).sum().item()
    print('Accuracy on test set: %d %% [%d/%d]' % (100 * correct / total, correct, total))
    return correct / total

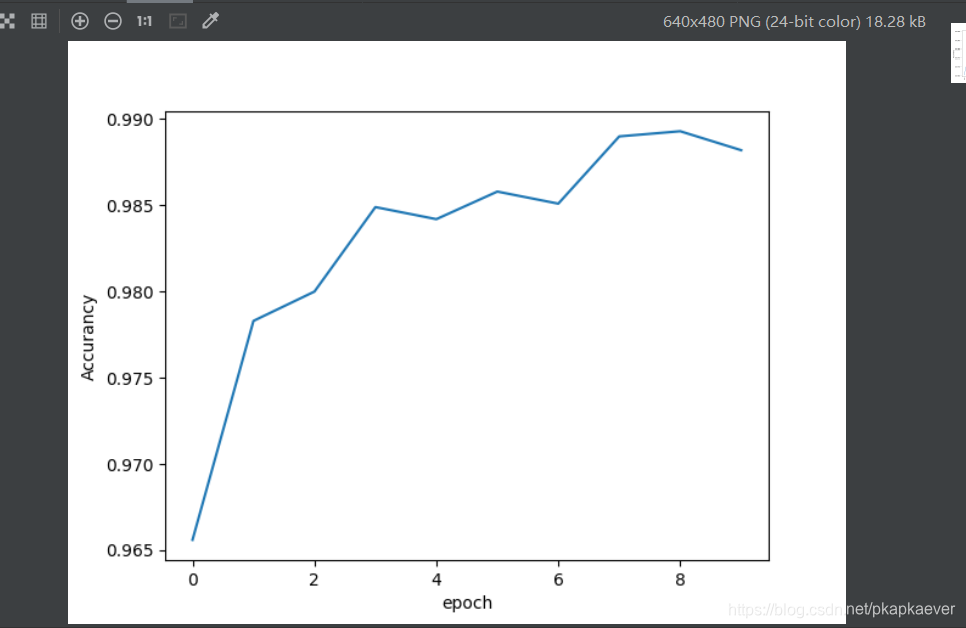

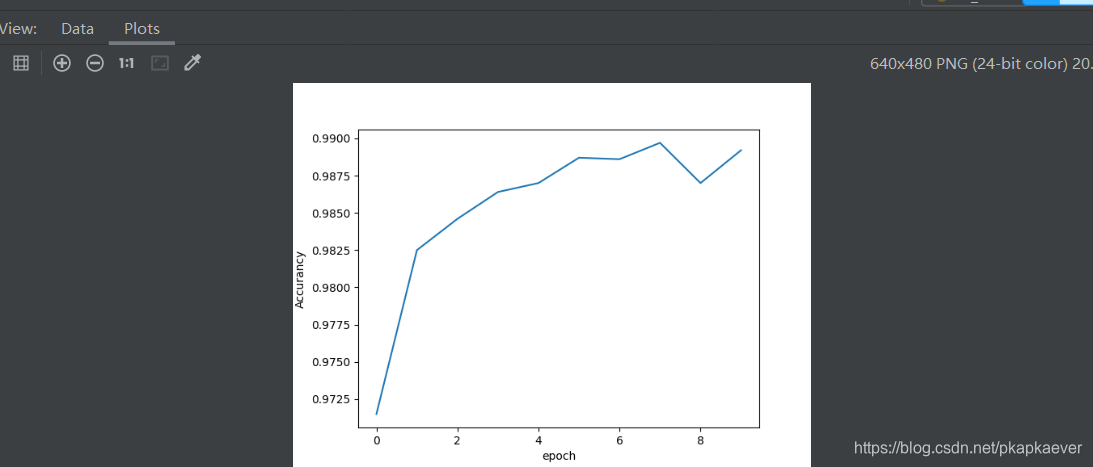

if __name__ == '__main__':
    time_start = time.time()  # start timing
    accuracy = []
    epoch_list = []
    for epoch in range(10):
        train(epoch)
        accuracy.append(test())
        epoch_list.append(epoch)
    time_end = time.time()  # stop timing
    print(datetime.timedelta(seconds=(time_end - time_start)))

    # Plot test accuracy per epoch.
    plt.plot(epoch_list, accuracy)
    plt.xlabel('epoch')
    plt.ylabel('accuracy')
    plt.show()
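The `1408` passed to `nn.Linear` in the listing above follows from the layer sizes. A minimal sketch of the arithmetic, assuming 28x28 MNIST inputs; the helper `conv_out` is a hypothetical name introduced here and applies the standard output-size formula for convolution and pooling:

```python
def conv_out(size, kernel, padding=0, stride=1):
    """Output side length of a square convolution or pooling window."""
    return (size + 2 * padding - kernel) // stride + 1

size = 28                                  # MNIST images are 28x28
size = conv_out(size, kernel=5)            # conv1: 28 -> 24
size = conv_out(size, kernel=2, stride=2)  # max pool: 24 -> 12
# InceptionA keeps height and width (every branch is padded), so still 12
size = conv_out(size, kernel=5)            # conv2: 12 -> 8
size = conv_out(size, kernel=2, stride=2)  # max pool: 8 -> 4

channels = 16 + 24 + 24 + 24               # InceptionA output channels
features = channels * size * size
print(size, channels, features)            # 4 88 1408
```

If any kernel size, padding, or channel count in the network changes, this number has to be recomputed before the fully connected layer will accept the flattened tensor.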

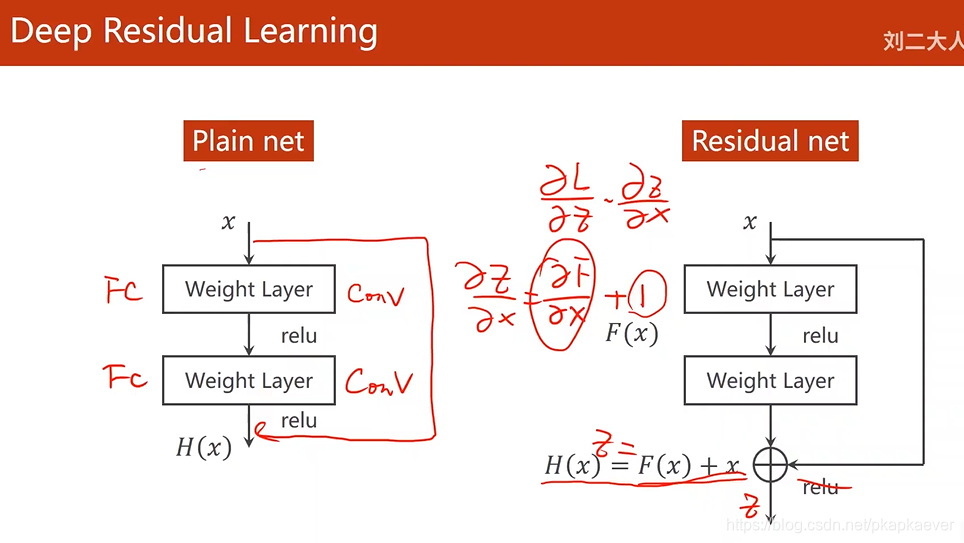

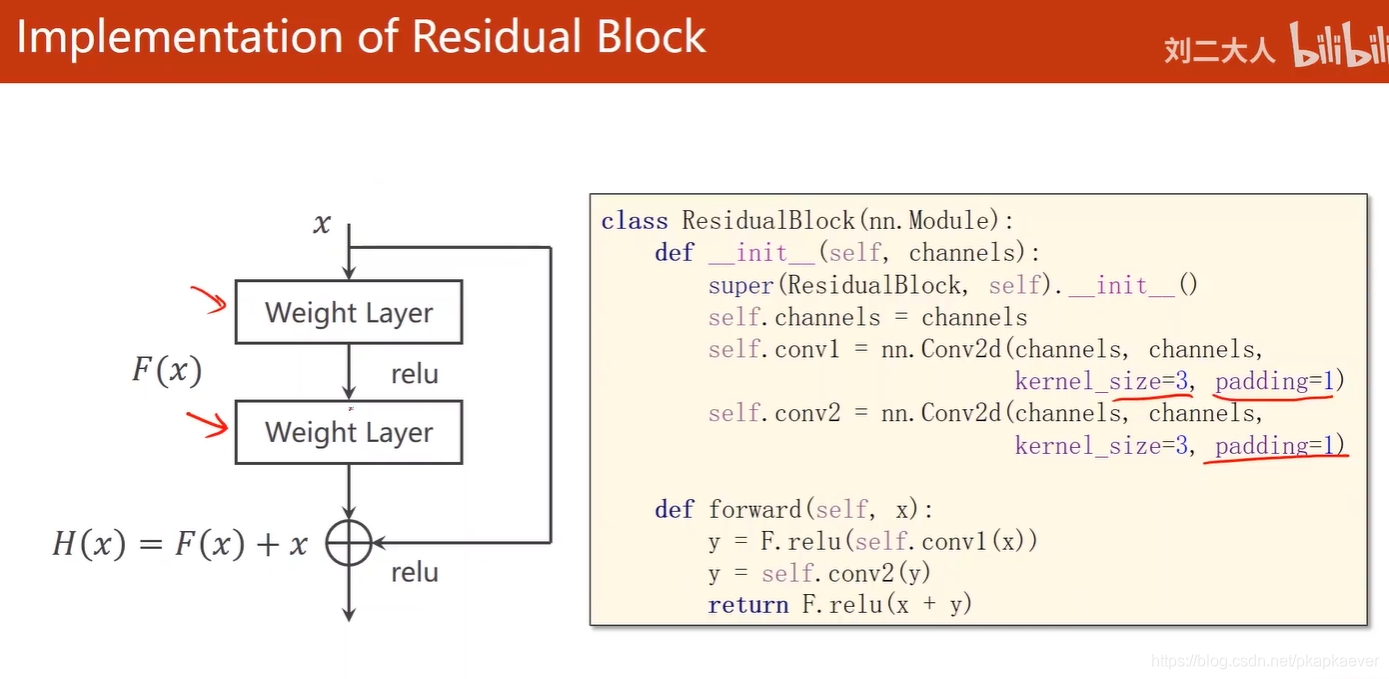

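Residual connections address the vanishing gradients caused by stacking too many convolutional layers.

Deep Residual Learning

With a skip connection the block computes H(x) = F(x) + x, so its local gradient is ∂H/∂x = ∂F/∂x + 1. Even when ∂F/∂x is small, this factor stays close to 1, so the product of many such factors during backpropagation does not shrink to 0. Because x is added to the output, the output of the weight layers must have the same dimensions as the tensor x; otherwise the addition is not possible. A block of this kind is called a Residual Block.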
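The "near 1" claim can be illustrated with autograd. This is only a sketch: the function `f` below is a hypothetical stand-in for the weight layers, chosen to have a deliberately tiny gradient.

```python
import torch

x = torch.tensor(2.0, requires_grad=True)

# Hypothetical residual branch with a deliberately tiny gradient.
def f(t):
    return 1e-3 * t ** 2

plain = f(x)                      # no skip connection
grad_plain, = torch.autograd.grad(plain, x)

residual = f(x) + x               # with skip connection
grad_residual, = torch.autograd.grad(residual, x)

print(grad_plain.item())          # about 0.004
print(grad_residual.item())       # about 1.004
```

Chaining many layers multiplies these factors together: values around 0.004 collapse toward zero after a few layers, while values around 1 do not.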

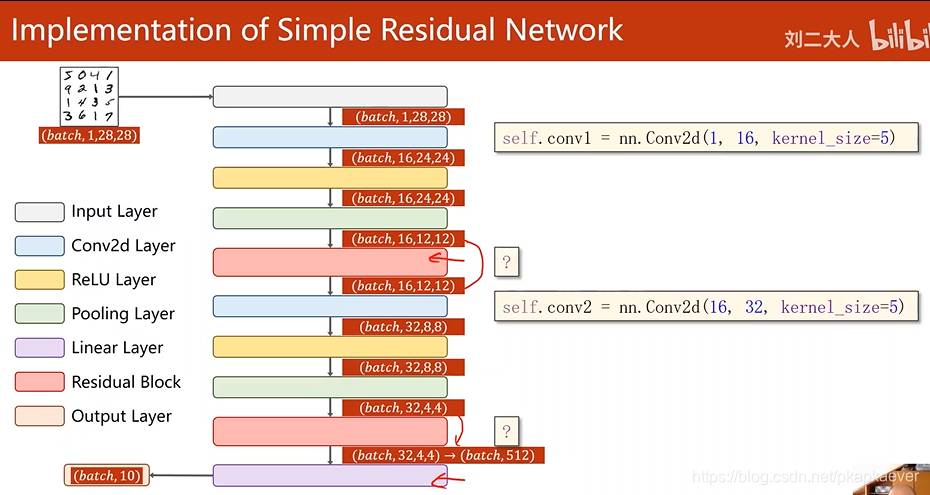

Implementing a simple Residual Network

import torch
from torch.utils.data import DataLoader
from torchvision import transforms
from torchvision import datasets
import torch.nn.functional as F
import matplotlib.pyplot as plt
import torch.nn as nn
import time
import datetime

batch_size = 64

# ToTensor scales the raw pixel values to [0, 1]; Normalize then standardizes
# them with mean 0.1307 and standard deviation 0.3081.
transform = transforms.Compose([transforms.ToTensor(), transforms.Normalize((0.1307,), (0.3081,))])

# Load the training set (already downloaded), then split it into batches.
train_dataset = datasets.MNIST(root='../dataset/mnist', train=True, download=False, transform=transform)
train_loader = DataLoader(train_dataset, shuffle=True, batch_size=batch_size)

# Test set
test_dataset = datasets.MNIST(root='../dataset/mnist', train=False, download=False, transform=transform)
test_loader = DataLoader(test_dataset, shuffle=False, batch_size=batch_size)

class ResidualBlock(nn.Module):
    def __init__(self, channels):
        super(ResidualBlock, self).__init__()
        self.channels = channels
        # The number of output channels must equal the number of channels of the input x.
        # padding=1 keeps the feature-map size unchanged for a 3x3 kernel.
        self.conv1 = nn.Conv2d(channels, channels, kernel_size=3, padding=1)
        self.conv2 = nn.Conv2d(channels, channels, kernel_size=3, padding=1)

    def forward(self, x):
        y = F.relu(self.conv1(x))
        y = self.conv2(y)
        return F.relu(x + y)  # add first, then apply the activation

class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = nn.Conv2d(1, 16, kernel_size=5)
        self.conv2 = nn.Conv2d(16, 32, kernel_size=5)
        self.mp = nn.MaxPool2d(2)

        self.rblock1 = ResidualBlock(16)
        self.rblock2 = ResidualBlock(32)

        self.fc = nn.Linear(512, 10)  # 32 channels x 4 x 4 = 512 features

    def forward(self, x):
        in_size = x.size(0)
        x = self.mp(F.relu(self.conv1(x)))
        x = self.rblock1(x)
        x = self.mp(F.relu(self.conv2(x)))
        x = self.rblock2(x)
        x = x.view(in_size, -1)  # flatten to (batch_size, 512)
        x = self.fc(x)
        return x

model = Net()
device = torch.device('cuda:0' if torch.cuda.is_available() else 'cpu')  # use the GPU if one is available
model.to(device)  # move the model parameters to the selected device

# --- Loss function and optimizer
criterion = torch.nn.CrossEntropyLoss()  # cross-entropy loss
optimizer = torch.optim.SGD(model.parameters(), lr=0.01, momentum=0.5)

def train(epoch):
    running_loss = 0.0  # loss accumulated since the last report
    # num = []
    # loss_list = []
    for batch_idx, data in enumerate(train_loader, 0):
        inputs, target = data
        inputs, target = inputs.to(device), target.to(device)  # move the batch to the device
        optimizer.zero_grad()

        # forward + backward + update
        outputs = model(inputs)
        loss = criterion(outputs, target)
        loss.backward()
        optimizer.step()

        running_loss += loss.item()
        if batch_idx % 300 == 299:  # report once every 300 batches
            # average loss over the 300 batches in this window
            print('[%d, %5d] loss: %.3f' % (epoch + 1, batch_idx + 1, running_loss / 300))
            running_loss = 0.0

    # Optional loss curve, left disabled:
    # num.append(batch_idx)
    # loss_list.append(loss.item())
    # plt.plot(num, loss_list)
    # plt.xlabel('num')
    # plt.ylabel('loss')
    # plt.show()

def test():
    correct = 0
    total = 0
    with torch.no_grad():  # gradients are not needed for evaluation
        for data in test_loader:
            images, labels = data
            images, labels = images.to(device), labels.to(device)  # move the batch to the device
            outputs = model(images)
            _, predicted = torch.max(outputs, dim=1)  # index of the largest score is the predicted class
            total += labels.size(0)
            correct += (predicted == labels).sum().item()
    print('Accuracy on test set: %d %% [%d/%d]' % (100 * correct / total, correct, total))
    return correct / total

if __name__ == '__main__':
    time_start = time.time()  # start timing
    accuracy = []
    epoch_list = []
    for epoch in range(10):
        train(epoch)
        accuracy.append(test())
        epoch_list.append(epoch)
    time_end = time.time()  # stop timing
    print(datetime.timedelta(seconds=(time_end - time_start)))

    # Plot test accuracy per epoch.
    plt.plot(epoch_list, accuracy)
    plt.xlabel('epoch')
    plt.ylabel('accuracy')
    plt.show()
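The `512` in `nn.Linear(512, 10)` can also be found empirically by pushing a dummy input through the layers that change the tensor shape. The sketch below is an assumption-laden shortcut rather than part of the original script: it leaves out the residual blocks, since they preserve channels, height, and width.

```python
import torch
import torch.nn as nn

# Only the layers that change the tensor shape; the residual blocks are
# omitted because they preserve channels, height and width.
features = nn.Sequential(
    nn.Conv2d(1, 16, kernel_size=5), nn.ReLU(), nn.MaxPool2d(2),
    nn.Conv2d(16, 32, kernel_size=5), nn.ReLU(), nn.MaxPool2d(2),
)

with torch.no_grad():
    dummy = torch.zeros(1, 1, 28, 28)   # one MNIST-sized image
    out = features(dummy)

print(out.shape)                        # torch.Size([1, 32, 4, 4])
print(out.flatten(1).shape[1])          # 512
```

Running a dummy forward pass like this avoids hand-computing the flattened size whenever the convolutional layers are modified.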

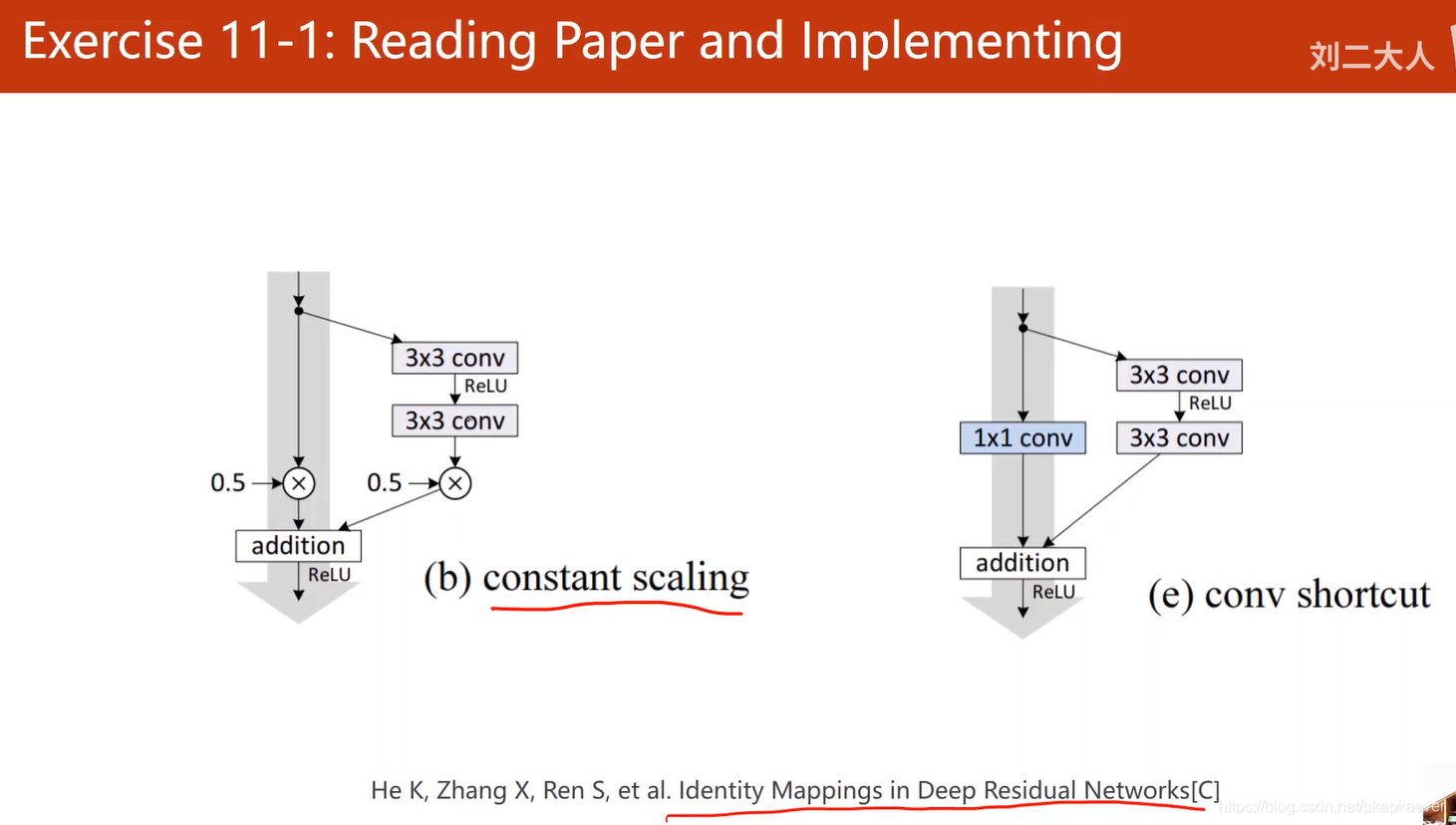

Different designs

This paper proposes many different designs for the residual block.
