Regression parameter prediction: the model needs to predict several parameters.

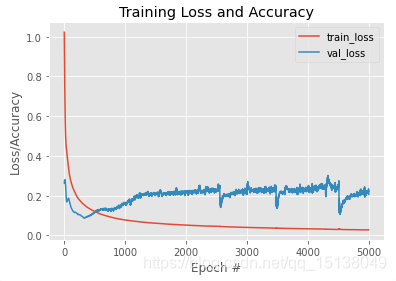

ReLU activation function

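```python
from keras import regularizers
from keras.layers import Dense, Dropout
from keras.models import Sequential
from keras.optimizers import Adam

model = Sequential()
# First hidden layer: 7 input features, 7 units
model.add(Dense(7, input_shape=(7,), activation='relu',
                kernel_initializer='random_normal', bias_initializer='random_normal'))
# model.add(Dropout(0.5))
model.add(Dense(units=7, activation='relu',
                kernel_regularizer=regularizers.l2(0.01), bias_regularizer=regularizers.l1(0.01),
                activity_regularizer=regularizers.l1(0.015)))
# model.add(Dropout(0.6))
# Output layer: 2 regression targets, no activation (linear by default)
model.add(Dense(units=2))
# Recent Keras releases use `learning_rate`; `lr` is the deprecated spelling
model.compile(loss='mse', optimizer=Adam(learning_rate=0.005), metrics=['mae', 'mse'])
model.summary()
```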

=======

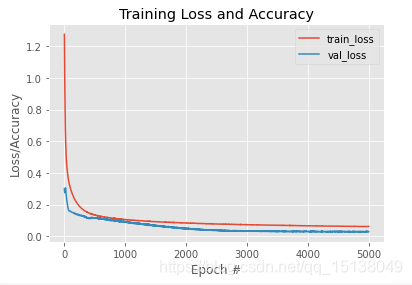

Linear activation function

```python
model = Sequential()
# Same architecture as before, but the two hidden layers use activation='linear'
model.add(Dense(7, input_shape=(7,), activation='linear',
                kernel_initializer='random_normal', bias_initializer='random_normal'))
# model.add(Dropout(0.5))
model.add(Dense(units=7, activation='linear',
                kernel_regularizer=regularizers.l2(0.01), bias_regularizer=regularizers.l1(0.01),
                activity_regularizer=regularizers.l1(0.015)))
# model.add(Dropout(0.6))
model.add(Dense(units=2))
# Recent Keras releases use `learning_rate`; `lr` is the deprecated spelling
model.compile(loss='mse', optimizer=Adam(learning_rate=0.005), metrics=['mae', 'mse'])
model.summary()
```

Does this mean that, for training and optimizing this particular model, linear is a better fit than relu?

----------------------

https://stackoverflow.com/questions/51023739/multidimensional-regression-with-keras

I came across a similar question on Stack Overflow. One of the answers there says:

Try changing the activation on your first two layers to activation='relu' and see if that improves things at all by introducing non-linearity. You're currently just performing a series of linear transformations, so you're not really leveraging the power of a neural net in any way. There are a lot of other reasons why things might not be working as well as you hope, but they are a bit beyond the scope of a stackoverflow answer. If you have a big enough dataset, then regularization would be a good first thing to start reading up on though.
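The "series of linear transformations" point can be checked numerically. Below is a minimal pure-Python sketch, not the Keras model itself. It assumes random weights with the same 7 → 7 → 7 → 2 layer shapes as above, and the helper names (`matvec`, `matmul`, `dense_linear`, `random_matrix`) are made up for this illustration. It shows that three stacked layers with linear activation give the same output as one layer with a combined weight matrix and bias.

```python
import random


def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def matmul(A, B):
    """Multiply matrix A by matrix B (both lists of rows)."""
    cols = list(zip(*B))
    return [[sum(a * b for a, b in zip(row, col)) for col in cols] for row in A]


def dense_linear(W, b, x):
    """A dense layer with a linear activation: y = W x + b."""
    return [y + bi for y, bi in zip(matvec(W, x), b)]


def random_matrix(rows, cols, rng):
    """Random Gaussian matrix, stored as (outputs, inputs)."""
    return [[rng.gauss(0, 1) for _ in range(cols)] for _ in range(rows)]


rng = random.Random(0)  # fixed seed so the run is repeatable

# Hypothetical weights; only the shapes mirror the 7 -> 7 -> 7 -> 2 network
W1, b1 = random_matrix(7, 7, rng), [rng.gauss(0, 1) for _ in range(7)]
W2, b2 = random_matrix(7, 7, rng), [rng.gauss(0, 1) for _ in range(7)]
W3, b3 = random_matrix(2, 7, rng), [rng.gauss(0, 1) for _ in range(2)]

x = [rng.gauss(0, 1) for _ in range(7)]  # one input sample with 7 features

# Forward pass, layer by layer
stacked = dense_linear(W3, b3, dense_linear(W2, b2, dense_linear(W1, b1, x)))

# Collapsed form: W = W3 W2 W1 and b = W3 (W2 b1 + b2) + b3
W = matmul(W3, matmul(W2, W1))
b = dense_linear(W3, b3, dense_linear(W2, b2, b1))
collapsed = dense_linear(W, b, x)

# The two outputs differ only by floating-point rounding
max_diff = max(abs(s - c) for s, c in zip(stacked, collapsed))
print(max_diff)
```

So the all-linear network can only represent what an ordinary multi-output linear regression can. If it still fits better in a given experiment, one possible reading is that the inputs and targets are close to linearly related. Another is that the relu version is hampered by something else, such as its initialization, regularization strength or learning rate.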
