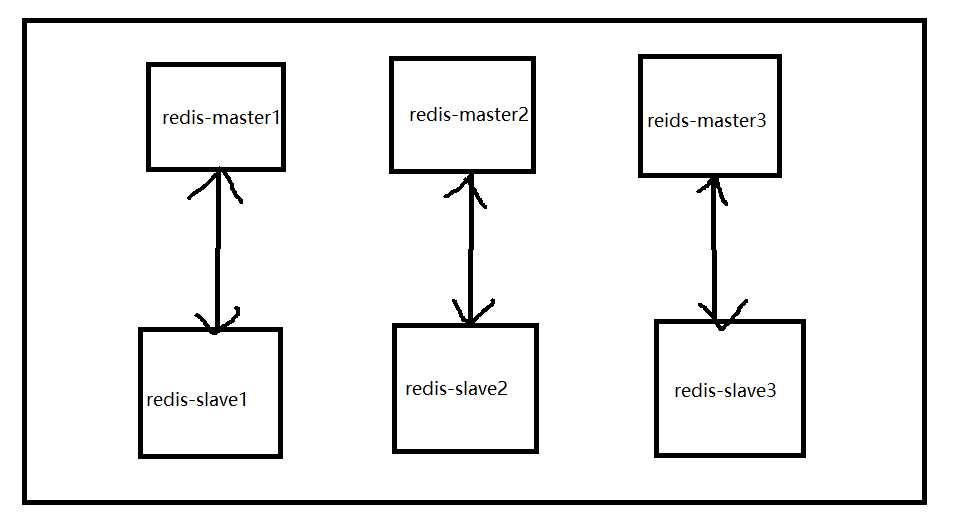

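A simple diagram of the Redis cluster

We want the Redis cluster to provide sharding, high availability, and load balancing.

1. Install Docker (see the Docker installation blog post)

2. Create a network. Instead of connecting the containers with Docker's --link option, we define a custom network.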

docker network create --driver bridge --subnet 172.77.0.0/16 --gateway 172.77.0.1 redis

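#--driver bridge: use bridge mode, which is already the default

#--subnet: the subnet of the network, in CIDR notation

#--gateway: the gateway address

#redis: the name we give this custom network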

docker network ls

docker network inspect redis

[root@qwh ~]# docker network ls
NETWORK ID     NAME      DRIVER    SCOPE
7824a4fe0564   bridge    bridge    local
5e248a90ad9f   host      host      local
b70935957a82   none      null      local
49dd4d92fd8e   redis     bridge    local

[root@qwh ~]# docker network inspect redis
[
    {
        "Name": "redis",
        "Id": "49dd4d92fd8efc60cbdf2f30e92c4340071355c1fd26cbbb3cb8bb14f486a6eb",
        "Created": "2022-11-13T22:44:48.756136319+08:00",
        "Scope": "local",
        "Driver": "bridge",
        "EnableIPv6": false,
        "IPAM": {
            "Driver": "default",
            "Options": {},
            "Config": [
                {
                    "Subnet": "172.77.0.0/16",
                    "Gateway": "172.77.0.1"
                }
            ]
        },
        "Internal": false,
        "Attachable": false,
        "Ingress": false,
        "ConfigFrom": {
            "Network": ""
        },
        "ConfigOnly": false,
        "Containers": {},
        "Options": {},
        "Labels": {}
    }
]
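If you run the setup more than once, `docker network create` will complain that the network already exists. A minimal sketch of a guard follows; `ensure_network` is a hypothetical helper name that is not part of the original steps, and it only relies on `docker network inspect` returning a non-zero status for an unknown network.

```shell
#!/bin/sh
# Hypothetical helper (not from the original post): create the bridge
# network only when no network with that name exists yet.
ensure_network() (
    name=$1
    subnet=$2
    gateway=$3
    if docker network inspect "$name" >/dev/null 2>&1; then
        echo "network $name already exists"
    else
        docker network create --driver bridge \
            --subnet "$subnet" --gateway "$gateway" "$name"
    fi
)

# Usage with the values from this post:
# ensure_network redis 172.77.0.0/16 172.77.0.1
```

The function body runs in a subshell, so its variables do not leak into your interactive shell.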

3. Create the six Redis configuration files with a script

vim redis.sh

cat redis.sh

for port in $(seq 1 6);
do
mkdir -p /mydata/redis/node-${port}/conf
touch /mydata/redis/node-${port}/conf/redis.conf
cat << EOF >> /mydata/redis/node-${port}/conf/redis.conf
port 6379
bind 0.0.0.0
cluster-enabled yes
cluster-config-file nodes.conf
cluster-node-timeout 5000
cluster-announce-ip 172.77.0.7${port}
cluster-announce-port 6379
cluster-announce-bus-port 16379
appendonly yes
EOF
done
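Because the script appends with `>>`, running it a second time duplicates every line in each redis.conf. One possible variation is sketched below: it overwrites the file instead, and writes under a configurable base directory so you can try it without touching /mydata. `BASE_DIR`, its default of /tmp/redis-cluster-demo, and `write_node_conf` are assumptions made for this illustration, not part of the original setup.

```shell
#!/bin/sh
# Sketch: generate redis.conf for nodes 1..6 under BASE_DIR.
# BASE_DIR and write_node_conf are hypothetical names; the post itself
# writes straight to /mydata/redis.
BASE_DIR=${BASE_DIR:-/tmp/redis-cluster-demo}

write_node_conf() {
    node=$1
    conf_dir="$BASE_DIR/node-$node/conf"
    mkdir -p "$conf_dir"
    # '>' overwrites the file, so re-running never duplicates settings.
    cat > "$conf_dir/redis.conf" << EOF
port 6379
bind 0.0.0.0
cluster-enabled yes
cluster-config-file nodes.conf
cluster-node-timeout 5000
cluster-announce-ip 172.77.0.7$node
cluster-announce-port 6379
cluster-announce-bus-port 16379
appendonly yes
EOF
}

for n in 1 2 3 4 5 6; do
    write_node_conf "$n"
done
```

To reproduce the layout used in the rest of this post, run it as `BASE_DIR=/mydata/redis sh redis.sh`.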

chmod 755 redis.sh

./redis.sh

4. Run the six Redis containers

docker run -p 6371:6379 -p 16671:16379 --name redis-1 \
-v /mydata/redis/node-1/data:/data \
-v /mydata/redis/node-1/conf/redis.conf:/etc/redis/redis.conf \
-d --net redis --ip 172.77.0.71 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

Parameter notes

#-d: run the container in the background

#-p: map a host port to a container port

#-v: mount a host path into the container (here the data directory and redis.conf)

#--name: the name of the container

#--net: use the custom network

#--ip: assign a fixed IP address

#redis-server /etc/redis/redis.conf: start Redis with this configuration file

Unable to find image 'redis:5.0.9-alpine3.11' locally
5.0.9-alpine3.11: Pulling from library/redis
cbdbe7a5bc2a: Pull complete
dc0373118a0d: Pull complete
cfd369fe6256: Pull complete
3e45770272d9: Pull complete
558de8ea3153: Pull complete
a2c652551612: Pull complete
Digest: sha256:83a3af36d5e57f2901b4783c313720e5fa3ecf0424ba86ad9775e06a9a5e35d0
Status: Downloaded newer image for redis:5.0.9-alpine3.11
8dec8461ac577ac1bd7203a173e354c970d038cb166c54cf119ebb2a64d735a2

docker run -p 6372:6379 -p 16672:16379 --name redis-2 \
-v /mydata/redis/node-2/data:/data \
-v /mydata/redis/node-2/conf/redis.conf:/etc/redis/redis.conf \
-d --net redis --ip 172.77.0.72 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

docker run -p 6373:6379 -p 16673:16379 --name redis-3 \
-v /mydata/redis/node-3/data:/data \
-v /mydata/redis/node-3/conf/redis.conf:/etc/redis/redis.conf \
-d --net redis --ip 172.77.0.73 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

docker run -p 6374:6379 -p 16674:16379 --name redis-4 \
-v /mydata/redis/node-4/data:/data \
-v /mydata/redis/node-4/conf/redis.conf:/etc/redis/redis.conf \
-d --net redis --ip 172.77.0.74 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

docker run -p 6375:6379 -p 16675:16379 --name redis-5 \
-v /mydata/redis/node-5/data:/data \
-v /mydata/redis/node-5/conf/redis.conf:/etc/redis/redis.conf \
-d --net redis --ip 172.77.0.75 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

docker run -p 6376:6379 -p 16676:16379 --name redis-6 \
-v /mydata/redis/node-6/data:/data \
-v /mydata/redis/node-6/conf/redis.conf:/etc/redis/redis.conf \
-d --net redis --ip 172.77.0.76 redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf

docker ps
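The six `docker run` commands differ only in the node number, so they can be collapsed into a loop. The following is a rough sketch under that assumption; `start_nodes` is a hypothetical function name, and the ports, paths, IPs, and image tag are copied from the commands above.

```shell
#!/bin/sh
# Sketch: start redis-1 .. redis-6 in one loop instead of six commands.
# start_nodes is a hypothetical name; all values mirror the commands above.
start_nodes() (
    for n in 1 2 3 4 5 6; do
        docker run -d --name "redis-$n" \
            -p "637$n:6379" -p "1667$n:16379" \
            -v "/mydata/redis/node-$n/data:/data" \
            -v "/mydata/redis/node-$n/conf/redis.conf:/etc/redis/redis.conf" \
            --net redis --ip "172.77.0.7$n" \
            redis:5.0.9-alpine3.11 redis-server /etc/redis/redis.conf
    done
)

# Usage (requires the redis network and the config files to exist):
# start_nodes
```

Writing the commands out one by one, as this post does, is easier to follow the first time; the loop is mainly useful once you start rebuilding the cluster repeatedly.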

5. Enter the redis-1 container

[root@qwh ~]# docker exec -it redis-1 /bin/bash
OCI runtime exec failed: exec failed: unable to start container process: exec: "/bin/bash": stat /bin/bash: no such file or directory: unknown

# This Alpine-based Redis image does not ship bash, so use sh instead

docker exec -it redis-1 /bin/sh

6. Create the Redis cluster

redis-cli --cluster create 172.77.0.71:6379 172.77.0.72:6379 172.77.0.73:6379 172.77.0.74:6379 172.77.0.75:6379 172.77.0.76:6379 --cluster-replicas 1

Performing hash slots allocation on 6 nodes...
Master[0] -> Slots 0 - 5460
Master[1] -> Slots 5461 - 10922
Master[2] -> Slots 10923 - 16383
Adding replica 172.77.0.75:6379 to 172.77.0.71:6379
Adding replica 172.77.0.76:6379 to 172.77.0.72:6379
Adding replica 172.77.0.74:6379 to 172.77.0.73:6379
M: 764e25bf621e4a43f7c1d44213e2b898118f3765 172.77.0.71:6379
   slots:[0-5460] (5461 slots) master
M: 19d86a0a92252d04ee05b0ca642c3e586eb62794 172.77.0.72:6379
   slots:[5461-10922] (5462 slots) master
M: d59aea1e087f65e9cf8f2c7cbb110abfae429b20 172.77.0.73:6379
   slots:[10923-16383] (5461 slots) master
S: c3a45eedffeea52d60addf387571c446f72f0f47 172.77.0.74:6379
   replicates d59aea1e087f65e9cf8f2c7cbb110abfae429b20
S: 58fffd90cb8e4c96fc01101a61496d3e314e1290 172.77.0.75:6379
   replicates 764e25bf621e4a43f7c1d44213e2b898118f3765
S: 35e0cb6b794a5b83f7edb454ca9caae39802e8fa 172.77.0.76:6379
   replicates 19d86a0a92252d04ee05b0ca642c3e586eb62794
Can I set the above configuration? (type 'yes' to accept): yes
Nodes configuration updated
Assign a different config epoch to each node
Sending CLUSTER MEET messages to join the cluster
Waiting for the cluster to join
....
Performing Cluster Check (using node 172.77.0.71:6379)
M: 764e25bf621e4a43f7c1d44213e2b898118f3765 172.77.0.71:6379
   slots:[0-5460] (5461 slots) master
   1 additional replica(s)
M: d59aea1e087f65e9cf8f2c7cbb110abfae429b20 172.77.0.73:6379
   slots:[10923-16383] (5461 slots) master
   1 additional replica(s)
S: 58fffd90cb8e4c96fc01101a61496d3e314e1290 172.77.0.75:6379
   slots: (0 slots) slave
   replicates 764e25bf621e4a43f7c1d44213e2b898118f3765
S: c3a45eedffeea52d60addf387571c446f72f0f47 172.77.0.74:6379
   slots: (0 slots) slave
   replicates d59aea1e087f65e9cf8f2c7cbb110abfae429b20
M: 19d86a0a92252d04ee05b0ca642c3e586eb62794 172.77.0.72:6379
   slots:[5461-10922] (5462 slots) master
   1 additional replica(s)
S: 35e0cb6b794a5b83f7edb454ca9caae39802e8fa 172.77.0.76:6379
   slots: (0 slots) slave
   replicates 19d86a0a92252d04ee05b0ca642c3e586eb62794
[OK] All nodes agree about slots configuration.
Check for open slots...
Check slots coverage...
[OK] All 16384 slots covered.
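The three slot ranges in the output above come from splitting the 16384 hash slots as evenly as possible across the masters. The arithmetic can be sketched in a few lines of shell; `slot_ranges` is a hypothetical helper written for this post, and the rounding rule is an assumption chosen to match the ranges printed above rather than a copy of the redis-cli source.

```shell
#!/bin/sh
# Sketch: split 16384 hash slots across N masters.
# slot_ranges is a hypothetical helper; the rounding is chosen to match
# the ranges redis-cli printed above.
slot_ranges() (
    masters=$1
    total=16384
    i=0
    first=0
    while [ "$i" -lt "$masters" ]; do
        # Nearest integer to (i+1)*total/masters - 1, using integer math only.
        last=$(( ((i + 1) * total * 2 - masters) / (2 * masters) ))
        # The last master always takes whatever remains.
        if [ "$i" -eq $((masters - 1)) ]; then
            last=$((total - 1))
        fi
        echo "Master[$i] -> Slots $first - $last"
        first=$((last + 1))
        i=$((i + 1))
    done
)

slot_ranges 3
# Master[0] -> Slots 0 - 5460
# Master[1] -> Slots 5461 - 10922
# Master[2] -> Slots 10923 - 16383
```

Since 16384 is not divisible by 3, one master ends up with 5462 slots and the other two with 5461, which is exactly what the cluster check reports.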

7. Test the high availability of the Redis cluster

redis-cli -c

#-c: use this when connecting to a cluster node; redis-cli then follows MOVED and ASK redirections instead of reporting them as errors

127.0.0.1:6379> cluster nodes # show the cluster's node information
d59aea1e087f65e9cf8f2c7cbb110abfae429b20 172.77.0.73:6379@16379 master - 0 1668352765000 3 connected 10923-16383
58fffd90cb8e4c96fc01101a61496d3e314e1290 172.77.0.75:6379@16379 slave 764e25bf621e4a43f7c1d44213e2b898118f3765 0 1668352764593 5 connected
c3a45eedffeea52d60addf387571c446f72f0f47 172.77.0.74:6379@16379 slave d59aea1e087f65e9cf8f2c7cbb110abfae429b20 0 1668352764994 4 connected
19d86a0a92252d04ee05b0ca642c3e586eb62794 172.77.0.72:6379@16379 master - 0 1668352764493 2 connected 5461-10922
35e0cb6b794a5b83f7edb454ca9caae39802e8fa 172.77.0.76:6379@16379 slave 19d86a0a92252d04ee05b0ca642c3e586eb62794 0 1668352765495 6 connected
764e25bf621e4a43f7c1d44213e2b898118f3765 172.77.0.71:6379@16379 myself,master - 0 1668352764000 1 connected 0-5460

# Set the key gang to the value 123

127.0.0.1:6379> set gang 123

OK

127.0.0.1:6379> get gang

"123"

exit

exit

docker stop redis-1 # stop the redis-1 container

docker exec -it redis-2 /bin/sh

redis-cli -c # connect to Redis again, this time from redis-2

127.0.0.1:6379> cluster nodes

19d86a0a92252d04ee05b0ca642c3e586eb62794 172.77.0.72:6379@16379 myself,master - 0 1668353178000 2 connected 5461-10922
764e25bf621e4a43f7c1d44213e2b898118f3765 172.77.0.71:6379@16379 master,fail - 1668352980393 1668352979190 1 connected
35e0cb6b794a5b83f7edb454ca9caae39802e8fa 172.77.0.76:6379@16379 slave 19d86a0a92252d04ee05b0ca642c3e586eb62794 0 1668353180000 6 connected
c3a45eedffeea52d60addf387571c446f72f0f47 172.77.0.74:6379@16379 slave d59aea1e087f65e9cf8f2c7cbb110abfae429b20 0 1668353180635 4 connected
d59aea1e087f65e9cf8f2c7cbb110abfae429b20 172.77.0.73:6379@16379 master - 0 1668353179000 3 connected 10923-16383
58fffd90cb8e4c96fc01101a61496d3e314e1290 172.77.0.75:6379@16379 master - 0 1668353179632 7 connected 0-5460

# 172.77.0.71:6379@16379 is now flagged master,fail: the node is down, and 172.77.0.75 has been promoted to master for slots 0-5460
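The raw `cluster nodes` output is dense, so it can help to boil it down to address, role, state, and slots. Here is a small sketch using awk; `summarize_nodes` is a hypothetical helper, and it assumes the field layout seen in the output above (address in field 2, flags in field 3, slot ranges from field 9 onward).

```shell
#!/bin/sh
# Sketch: condense `cluster nodes` output to "address role state slots".
# summarize_nodes is a hypothetical helper; it assumes the field layout
# shown in the output above.
summarize_nodes() {
    awk '{
        split($2, addr, "@")              # drop the @bus-port suffix
        n = split($3, flag, ",")          # flags such as myself,master or master,fail
        role = "replica"; state = "ok"
        for (k = 1; k <= n; k++) {
            if (flag[k] == "master") role = "master"
            if (flag[k] == "fail") state = "FAILED"
        }
        slots = "-"
        if (NF >= 9) {
            slots = $9
            for (k = 10; k <= NF; k++) slots = slots " " $k
        }
        print addr[1], role, state, slots
    }'
}

# Two lines taken from the output above:
summarize_nodes << 'EOF'
764e25bf621e4a43f7c1d44213e2b898118f3765 172.77.0.71:6379@16379 master,fail - 1668352980393 1668352979190 1 connected
58fffd90cb8e4c96fc01101a61496d3e314e1290 172.77.0.75:6379@16379 master - 0 1668353179632 7 connected 0-5460
EOF
# 172.77.0.71:6379 master FAILED -
# 172.77.0.75:6379 master ok 0-5460
```

Against a live cluster you would pipe the real output in, for example `redis-cli cluster nodes | summarize_nodes`.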

127.0.0.1:6379> get gang

-> Redirected to slot [4288] located at 172.77.0.75:6379

"123"

# The master 172.77.0.71 went down, but its slave 172.77.0.75 took over its slots and keeps serving requests. High availability works!
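The redirect to slot [4288] follows from how Redis Cluster places keys: the slot is the CRC16 of the key modulo 16384, and each master owns a range of slots. A minimal sketch of that calculation in plain shell follows; `key_slot` is a hypothetical helper, it uses the CRC16 XMODEM variant (polynomial 0x1021, initial value 0), and it deliberately ignores hash tags such as `{user}:1`, which real Redis handles specially.

```shell
#!/bin/sh
# Sketch: compute the Redis Cluster hash slot of a key.
# key_slot is a hypothetical helper; hash tags ({...}) are NOT handled.
key_slot() (
    crc=0
    # od prints each byte of the key as an unsigned decimal number.
    for byte in $(printf '%s' "$1" | od -An -v -tu1); do
        crc=$(( crc ^ (byte << 8) ))
        bit=0
        while [ "$bit" -lt 8 ]; do
            if [ $(( crc & 0x8000 )) -ne 0 ]; then
                crc=$(( ((crc << 1) ^ 0x1021) & 0xFFFF ))
            else
                crc=$(( (crc << 1) & 0xFFFF ))
            fi
            bit=$((bit + 1))
        done
    done
    echo $(( crc % 16384 ))
)

key_slot gang
# 4288
```

Slot 4288 lies in the range 0-5460, which belonged to 172.77.0.71 before the failover and to 172.77.0.75 afterwards. That is why the `get gang` issued from redis-2 is redirected to 172.77.0.75.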
