GeoTrellis: Tiling Raster Data

Preface

Versions: GeoTrellis 2.3.3, Scala 2.11.12, Java 1.8, Hadoop 3.0.0

Goal: tile raster data using the pipeline module in GeoTrellis

Steps

1. Create a Maven project

An ordinary Maven Java project is enough.

2. Add the required libraries to the pom

<properties>
  <geotrellis.version>2.3.3</geotrellis.version>
  <scala.version>2.11</scala.version>
  <spark.version>2.4.0</spark.version>
</properties>

<dependencies>
  <!-- https://mvnrepository.com/artifact/org.locationtech.geotrellis/geotrellis-raster -->
  <dependency>
    <groupId>org.locationtech.geotrellis</groupId>
    <artifactId>geotrellis-raster_${scala.version}</artifactId>
    <version>${geotrellis.version}</version>
  </dependency>
  <!-- https://mvnrepository.com/artifact/org.locationtech.geotrellis/geotrellis-spark -->
  <dependency>
    <groupId>org.locationtech.geotrellis</groupId>
    <artifactId>geotrellis-spark_${scala.version}</artifactId>
    <version>${geotrellis.version}</version>
  </dependency>
  <!-- https://mvnrepository.com/artifact/org.locationtech.geotrellis/geotrellis-proj4 -->
  <dependency>
    <groupId>org.locationtech.geotrellis</groupId>
    <artifactId>geotrellis-proj4_${scala.version}</artifactId>
    <version>${geotrellis.version}</version>
  </dependency>
  <!-- https://mvnrepository.com/artifact/org.locationtech.geotrellis/geotrellis-spark-pipeline -->
  <dependency>
    <groupId>org.locationtech.geotrellis</groupId>
    <artifactId>geotrellis-spark-pipeline_${scala.version}</artifactId>
    <version>${geotrellis.version}</version>
  </dependency>
  <dependency>
    <groupId>org.apache.spark</groupId>
    <artifactId>spark-core_${scala.version}</artifactId>
    <version>${spark.version}</version>
  </dependency>
</dependencies>

<build>
  <plugins>
    <plugin>
      <groupId>org.apache.maven.plugins</groupId>
      <artifactId>maven-shade-plugin</artifactId>
      <executions>
        <execution>
          <phase>package</phase>
          <goals>
            <goal>shade</goal>
          </goals>
          <configuration>
            <filters>
              <filter>
                <artifact>*:*</artifact>
                <excludes>
                  <exclude>META-INF/*.SF</exclude>
                  <exclude>META-INF/*.DSA</exclude>
                  <exclude>META-INF/*.RSA</exclude>
                </excludes>
              </filter>
            </filters>
            <transformers>
              <transformer
                  implementation="org.apache.maven.plugins.shade.resource.ManifestResourceTransformer">
                <!-- Set this to the fully qualified name of your own main class -->
                <mainClass>core.OffLineRecommendHandle</mainClass>
              </transformer>
              <transformer
                  implementation="org.apache.maven.plugins.shade.resource.AppendingTransformer">
                <resource>reference.conf</resource>
              </transformer>
            </transformers>
          </configuration>
        </execution>
      </executions>
    </plugin>
    <plugin>
      <groupId>org.scala-tools</groupId>
      <artifactId>maven-scala-plugin</artifactId>
      <version>2.15.2</version>
      <executions>
        <execution>
          <goals>
            <goal>compile</goal>
            <goal>testCompile</goal>
          </goals>
        </execution>
      </executions>
    </plugin>
  </plugins>
</build>

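The pom above also adds two plugins. The first, maven-shade-plugin, is used for packaging. The second, maven-scala-plugin, adds support for compiling Scala inside a Java project.

3. Write the code

Because I need to run this in batch, the input and output locations are passed in as parameters. The pipeline runs step by step as described by the JSON, and each step's result is handed to the next step.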
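To make "each step's result is handed to the next step" concrete, here is a toy model in plain Scala. It is only a sketch and is not the GeoTrellis API: `Step`, `ToyPipeline` and the string payloads are hypothetical names made up for illustration.

```scala
// Toy model of a linear pipeline. This is NOT the GeoTrellis API.
// Each step carries a type name (like the "type" field in the JSON)
// and a function that is applied to the previous step's result.
final case class Step(stepType: String, run: List[String] => List[String])

object ToyPipeline {
  // Run the steps in order, feeding each result into the next step.
  def eval(steps: List[Step], input: List[String]): List[String] =
    steps.foldLeft(input)((acc, step) => step.run(acc))

  val steps: List[Step] = List(
    Step("read", _ => List("raster")),
    Step("tile-to-layout", in => in.map(_ + " -> tiled")),
    Step("reproject", in => in.map(_ + " -> reprojected"))
  )

  def main(args: Array[String]): Unit =
    eval(steps, Nil).foreach(println)
}
```

The real pipeline works on RDDs rather than strings, but the ordering constraint is the same: a step can only consume what the step before it produced.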

import geotrellis.spark._
import geotrellis.spark.tiling._
import geotrellis.spark.pipeline._
import geotrellis.spark.pipeline.json._
import geotrellis.spark.pipeline.json.read._
import geotrellis.spark.pipeline.json.transform._
import geotrellis.spark.pipeline.json.write._
import geotrellis.spark.pipeline.ast._
import geotrellis.spark.pipeline.ast.untyped.ErasedNode
import org.apache.spark.SparkContext

def insertTileToHdfs(implicit sc: SparkContext, input: String, output: String, layerName: String): Unit = {
  // Pipeline description: read, tile to layout, reproject, pyramid, write
  val maskJson: String =
    """
      |[
      |  {
      |    "uri" : "{input}",
      |    "type" : "singleband.spatial.read.hadoop"
      |  },
      |  {
      |    "resample_method" : "nearest-neighbor",
      |    "type" : "singleband.spatial.transform.tile-to-layout"
      |  },
      |  {
      |    "crs" : "EPSG:3857",
      |    "scheme" : {
      |      "crs" : "epsg:3857",
      |      "tileSize" : 256,
      |      "resolutionThreshold" : 0.1
      |    },
      |    "resample_method" : "nearest-neighbor",
      |    "type" : "singleband.spatial.transform.buffered-reproject"
      |  },
      |  {
      |    "end_zoom" : 0,
      |    "resample_method" : "nearest-neighbor",
      |    "type" : "singleband.spatial.transform.pyramid"
      |  },
      |  {
      |    "name" : "{layerName}",
      |    "uri" : "{output}",
      |    "key_index_method" : {
      |      "type" : "zorder"
      |    },
      |    "scheme" : {
      |      "crs" : "epsg:3857",
      |      "tileSize" : 256,
      |      "resolutionThreshold" : 0.1
      |    },
      |    "type" : "singleband.spatial.write"
      |  }
      |]
    """.stripMargin

  // Fill the placeholders with the actual input, output and layer name
  val maskJsonStr = maskJson
    .replace("{input}", input)
    .replace("{output}", output)
    .replace("{layerName}", layerName)

  // parse the JSON above
  val list: Option[Node[Stream[(Int, TileLayerRDD[SpatialKey])]]] = maskJsonStr.node

  list match {
    case None => println("Couldn't parse the JSON")
    case Some(node) =>
      // eval evaluates the pipeline
      // the result type of evaluation in this case would be Stream[(Int, TileLayerRDD[SpatialKey])]
      node.eval.foreach { case (zoom, rdd) =>
        println(s"ZOOM: ${zoom}")
        println(s"COUNT: ${rdd.count}")
      }
  }
}
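The function prints one ZOOM/COUNT pair per pyramid level. To help read those numbers, the sketch below works out, in plain Scala, how many 256-pixel tiles a zoom level can hold at most and the approximate pixel size at that level. It assumes the usual web-mercator zoomed layout, where zoom z splits the world into 2^z by 2^z tiles. `ZoomMath` and its members are hypothetical helper names, not part of GeoTrellis.

```scala
object ZoomMath {
  // Width of the EPSG:3857 world extent in metres
  // (2 * pi * the WGS84 semi-major axis).
  val WorldWidth: Double = 2 * math.Pi * 6378137.0

  // Number of tile columns (and rows) at a zoom level.
  def tilesPerAxis(zoom: Int): Long = 1L << zoom

  // Upper bound on the number of tiles at a zoom level.
  def maxTiles(zoom: Int): Long = tilesPerAxis(zoom) * tilesPerAxis(zoom)

  // Approximate ground size of one pixel, in metres, at a zoom level.
  def cellSize(zoom: Int, tileSize: Int = 256): Double =
    WorldWidth / (tileSize.toDouble * tilesPerAxis(zoom))

  def main(args: Array[String]): Unit =
    (0 to 5).foreach { z =>
      println(f"zoom $z%2d: at most ${maxTiles(z)}%5d tiles, about ${cellSize(z)}%.2f m per pixel")
    }
}
```

The COUNT printed for a real layer is normally far below this bound, since only tiles that intersect the source raster are produced.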

Calling it from the main method

def main(args: Array[String]): Unit = {

val conf =

new SparkConf()

// .setMaster("local") //本机跑起来这里就设置,要放到集群中跑就不用,后面打成jar包 spark-submit的时候在指定 --master yarn

.setAppName("Spark Tiler")

.set("spark.serializer", "org.apache.spark.serializer.KryoSerializer")

.set("spark.kryo.registrator", "geotrellis.spark.io.kryo.KryoRegistrator")

implicit val sc: SparkContext = SparkUtils.createSparkContext("IngestTestRasterSourceToHadoop",conf)

//tif数据源使用本地磁盘写如 “file:///d:/data/”

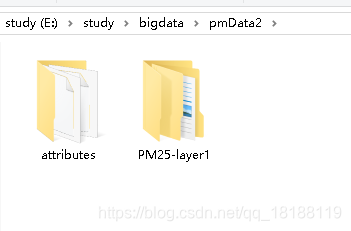

var sour = "file:/d:/data/atmors/sour/FJ-PM25/PM25-layer1.tif"

//瓦片保存地址,也是保存到hdfs中

var layers = "file:/d:/data/atmors/tiles/pm25/"

insertTileToHdfs(sc,input,layers,layername)

}
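The paths passed in from main are spliced into the JSON with plain `String.replace`. That works for URIs like `file:/d:/data/...`, but a Windows-style path with backslashes or a name containing a double quote would produce invalid JSON. A minimal sketch of a safer substitution follows, assuming the same `{name}` placeholder convention; `PipelineTemplate`, `escapeJson` and `fill` are hypothetical helpers, not GeoTrellis functions.

```scala
object PipelineTemplate {
  // Escape the characters that would otherwise break a JSON string literal.
  def escapeJson(value: String): String = {
    val sb = new StringBuilder
    value.foreach {
      case '\\' => sb.append("\\\\")
      case '"'  => sb.append("\\\"")
      case '\n' => sb.append("\\n")
      case c    => sb.append(c)
    }
    sb.toString
  }

  // Replace every {name} placeholder with its escaped value.
  def fill(template: String, values: Map[String, String]): String =
    values.foldLeft(template) { case (acc, (name, value)) =>
      acc.replace(s"{$name}", escapeJson(value))
    }

  def main(args: Array[String]): Unit = {
    // Illustrative template and values only
    val template = """{ "uri" : "{input}", "name" : "{layerName}" }"""
    println(fill(template, Map("input" -> """d:\data\in.tif""", "layerName" -> "pm25")))
  }
}
```

With something like this in place, the three chained `replace` calls could become a single `fill(maskJson, Map("input" -> input, "output" -> output, "layerName" -> layerName))`.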

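Result:

Summary

1. Tiling and ingesting this way takes far less code than my previous approach.

2. The pipeline module is described in the official documentation: https://geotrellis.readthedocs.io/en/v3.5.1/guide/pipeline.html (note that this link is for v3.5.1, while this post uses 2.3.3).

3. Read the documentation, work to understand it, experiment, and don't give up. Along the way you may run into problems with pom dependencies, imports and so on. Be patient: there is always a way through, and it usually comes down to reading a bit more documentation.

4. Feel free to get in touch to learn from each other and discuss. My WeChat: huangchuanxiaa.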
