Kafka cluster machines:

Hostname

hadoop01001

hadoop01002

hadoop01003

[Install directory]: /home/hadoop/app

1. Copy Scala to the other machines in the cluster (Scala 2.11)

[hadoop@hadoop software]$ scp scala-2.11.8.tgz hadoop01001:/home/hadoop/software

[hadoop@hadoop software]$ scp scala-2.11.8.tgz hadoop01002:/home/hadoop/software

[hadoop@hadoop software]$ scp scala-2.11.8.tgz hadoop01003:/home/hadoop/software

Extract it into /home/hadoop/app

[hadoop@hadoop01001 software]$ tar -zxvf scala-2.11.8.tgz -C /home/hadoop/app

[hadoop@hadoop01002 software]$ tar -zxvf scala-2.11.8.tgz -C /home/hadoop/app

[hadoop@hadoop01003 software]$ tar -zxvf scala-2.11.8.tgz -C /home/hadoop/app
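
The copy and extract commands above are the same for each host, so they can be driven by a loop. The following is only a sketch: `run`, `distribute`, and `DRY_RUN` are hypothetical names that do not appear in the original steps, and the non-dry-run mode assumes passwordless SSH from the source machine to every host. By default it only prints the commands so they can be reviewed first.

```shell
#!/usr/bin/env bash
# Sketch: print (or run) the copy and extract commands for every broker host.
# run, distribute and DRY_RUN are hypothetical names used for illustration.
HOSTS="hadoop01001 hadoop01002 hadoop01003"
DRY_RUN="${DRY_RUN:-1}"   # 1 = only print the commands; 0 = execute them

run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

distribute() {
  # $1 is the archive file name in the current directory.
  local pkg="$1" host
  for host in $HOSTS; do
    run scp "$pkg" "$host:/home/hadoop/software"
    run ssh "$host" "tar -zxf /home/hadoop/software/$pkg -C /home/hadoop/app"
  done
}

distribute scala-2.11.8.tgz
```

Setting `DRY_RUN=0` before calling `distribute` executes the commands instead of printing them. The same function can be reused for the Kafka archive later in this guide.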

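Add the environment variables (SCALA_HOME below assumes the extracted scala-2.11.8 directory has been renamed or symlinked to /home/hadoop/app/scala)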

[hadoop@hadoop01001 ~]$ vi .bash_profile

export SCALA_HOME=/home/hadoop/app/scala

export PATH=$SCALA_HOME/bin:$PATH

Do the same on hadoop01002 and hadoop01003.

When finished, run source .bash_profile

Check the installation with scala -version

[hadoop@hadoop01001 ~]$ scala -version

Scala code runner version 2.11.8 -- Copyright 2002-2016, LAMP/EPFL

2. Download the Kafka build for Scala 2.11, then configure and deploy it (kafka_2.11-0.10.1.0)

https://archive.apache.org/dist/kafka/0.10.1.0/kafka_2.11-0.10.1.0.tgz

1. Copy the Kafka package to the other machines in the cluster

[hadoop@hadoop software]$ scp kafka_2.11-0.10.1.0.tgz hadoop01001:/home/hadoop/software

[hadoop@hadoop software]$ scp kafka_2.11-0.10.1.0.tgz hadoop01002:/home/hadoop/software

[hadoop@hadoop software]$ scp kafka_2.11-0.10.1.0.tgz hadoop01003:/home/hadoop/software

2. Extract it into /home/hadoop/app

[hadoop@hadoop01001 software]$ tar -zxvf kafka_2.11-0.10.1.0.tgz -C /home/hadoop/app/

[hadoop@hadoop01002 software]$ tar -zxvf kafka_2.11-0.10.1.0.tgz -C /home/hadoop/app/

[hadoop@hadoop01003 software]$ tar -zxvf kafka_2.11-0.10.1.0.tgz -C /home/hadoop/app/

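3. Create the log directory and edit server.properties (prerequisite: the ZooKeeper cluster is already deployed)

Go to the kafka directory under app (this assumes the extracted kafka_2.11-0.10.1.0 directory has been renamed or symlinked to /home/hadoop/app/kafka)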

[hadoop@hadoop01001 kafka]$ cd config/

[hadoop@hadoop01001 config]$ vi server.properties

Change the following settings:

broker.id=1

port=9092

host.name=<IP of this machine>

log.dirs=/home/hadoop/kafka/logs

zookeeper.connect=hadoop01001:2181,hadoop01002:2181,hadoop01003:2181/kafka

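Repeat step 3 on the remaining machines. Only broker.id (2 and 3) and host.name (each machine's own IP) differ.

4. Add Kafka to the environment variables (on all three machines)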
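
Since only two values change per broker, the settings from step 3 can be generated from a broker id and an IP. This is a minimal sketch: `broker_overrides` is a hypothetical helper, and the address in the example call is a documentation placeholder, not one of the cluster's real IPs.

```shell
#!/usr/bin/env bash
# Sketch: print the server.properties settings for one broker.
# broker_overrides is a hypothetical helper; $1 = broker id, $2 = broker IP.
broker_overrides() {
  local id="$1" ip="$2"
  cat <<EOF
broker.id=$id
port=9092
host.name=$ip
log.dirs=/home/hadoop/kafka/logs
zookeeper.connect=hadoop01001:2181,hadoop01002:2181,hadoop01003:2181/kafka
EOF
}

# Example: settings for the second broker (192.0.2.12 is a placeholder IP).
broker_overrides 2 192.0.2.12
```

The printed lines still have to be merged into config/server.properties by hand, replacing the existing values for the same keys. The log directory named in log.dirs can be created beforehand with `mkdir -p /home/hadoop/kafka/logs`.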

[hadoop@hadoop01001 ~]$ vi .bash_profile

export KAFKA_HOME=/home/hadoop/app/kafka

export PATH=$KAFKA_HOME/bin:$PATH

[hadoop@hadoop01001 ~]$ . .bash_profile

[hadoop@hadoop01001 ~]$ echo $KAFKA_HOME

/home/hadoop/app/kafka
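
Because the same two export lines are added on three machines, it can help to append them only when they are missing, so that repeating the step does not duplicate them. A minimal sketch, where `append_once` is a hypothetical helper name:

```shell
#!/usr/bin/env bash
# Sketch: append a line to a file only if that exact line is not already there.
# append_once is a hypothetical helper; $1 = line to add, $2 = target file.
append_once() {
  local line="$1" file="$2"
  touch "$file"
  # -x matches whole lines, -F treats the pattern as a fixed string.
  grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}
```

For this step it would be called as `append_once 'export KAFKA_HOME=/home/hadoop/app/kafka' ~/.bash_profile` followed by `append_once 'export PATH=$KAFKA_HOME/bin:$PATH' ~/.bash_profile`. The single quotes keep `$KAFKA_HOME` unexpanded in the file.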

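5. Start Kafka

The config path is relative, so run the command from the kafka directory so that config/server.properties resolves correctly.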

[hadoop@hadoop01001 kafka]$ nohup kafka-server-start.sh config/server.properties &

[hadoop@hadoop01002 kafka]$ nohup kafka-server-start.sh config/server.properties &

[hadoop@hadoop01003 kafka]$ nohup kafka-server-start.sh config/server.properties &

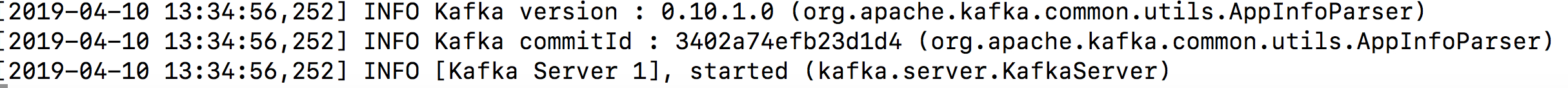

Now check the nohup.out file.

The output looks like this:

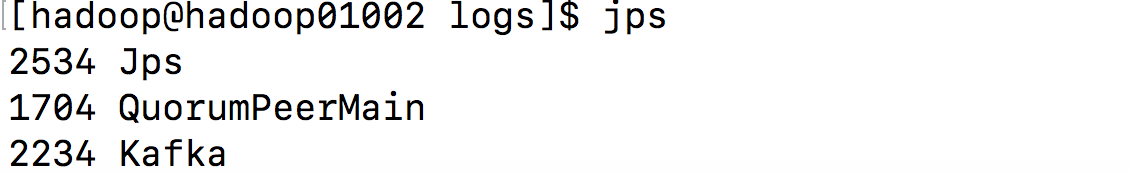

Check with jps:

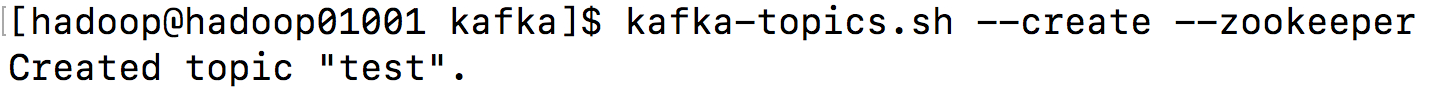
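
The jps check can also be scripted. The sketch below reads jps output on standard input and succeeds when a process named Kafka is listed. `kafka_running` is a hypothetical helper, and the process ids in the sample input are made up for illustration.

```shell
#!/usr/bin/env bash
# Sketch: succeed if jps output (read from stdin) lists a Kafka broker process.
# kafka_running is a hypothetical helper name.
kafka_running() {
  # jps prints "<pid> <main class>"; the broker's main class shows up as "Kafka".
  awk '$2 == "Kafka" { found = 1 } END { exit !found }'
}

# Sample input with made-up pids; on a real host use: jps | kafka_running
printf '2101 QuorumPeerMain\n2450 Kafka\n2790 Jps\n' | kafka_running \
  && echo "Kafka broker process found"
```

On a broker host, `jps | kafka_running || echo "Kafka is not running"` gives a quick yes or no without reading the full process list.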

6. Topic operations: create a topic. If the topic is created successfully, the cluster installation is complete.

[hadoop@hadoop01001 kafka]$ kafka-topics.sh --create --zookeeper 172.19.164.205:2181/kafka --replication-factor 3 --partitions 1 --topic test

The topic was created, so the Kafka cluster installation is complete.

A problem encountered while creating the topic:
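
To look at the new topic afterwards, kafka-topics.sh also accepts --list and --describe with the same --zookeeper argument. The sketch below builds the ZooKeeper connection string from the three hostnames and the /kafka chroot used in server.properties, then prints the two commands. `zk_connect` is a hypothetical helper, and using hostnames instead of the IP from the create command is an assumption that the hostnames resolve on the machine running it.

```shell
#!/usr/bin/env bash
# Sketch: build "host:2181,host:2181,.../chroot" and print follow-up commands.
# zk_connect is a hypothetical helper; $1 = chroot path, remaining args = hosts.
zk_connect() {
  local chroot="$1" out="" host
  shift
  for host in "$@"; do
    out="${out:+$out,}$host:2181"   # add a comma only between entries
  done
  printf '%s%s\n' "$out" "$chroot"
}

ZK="$(zk_connect /kafka hadoop01001 hadoop01002 hadoop01003)"

# Printed rather than executed, so they can be reviewed before running.
echo "kafka-topics.sh --list --zookeeper $ZK"
echo "kafka-topics.sh --describe --zookeeper $ZK --topic test"
```

With a replication factor of 3, the describe output should list three replicas for partition 0 once all brokers have registered.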

https://blog.csdn.net/qq_24363849/article/details/89183675
