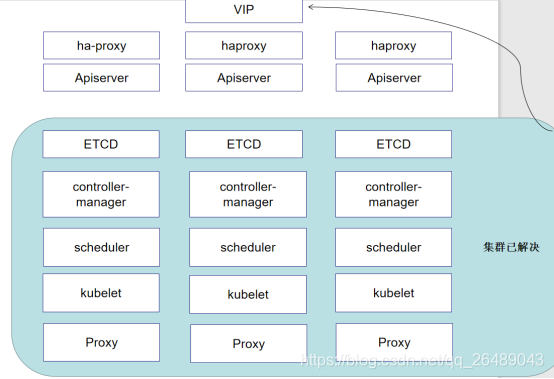

1. Cluster initialization

(1) Configure the Aliyun yum mirror, then install the dependency packages

yum install -y conntrack ntpdate ntp ipvsadm ipset jq iptables curl sysstat libseccomp wget vim net-tools git

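(2) Set the hostname (run the matching command on each node; `hostname` alone only lasts until the next reboot, while hostnamectl makes the change persistent)

hostnamectl set-hostname k8s-master1 && bash   # on 192.168.74.238

hostnamectl set-hostname k8s-master2 && bash   # on 192.168.74.248

hostnamectl set-hostname k8s-master3 && bash   # on 192.168.74.229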

vim /etc/hosts

192.168.74.238 k8s-master1

192.168.74.248 k8s-master2

192.168.74.229 k8s-master3

192.168.74.100 VIP
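All three masters need the same four entries in /etc/hosts, so you can also append them with a script instead of editing the file in vim. Below is a minimal bash sketch. `add_hosts_entries` is a hypothetical helper name. It skips entries that are already present, so it is safe to re-run:

```shell
#!/bin/bash
# Hypothetical helper: append the cluster's /etc/hosts entries, skipping any
# entry that is already present so the function can be run more than once.
add_hosts_entries() {
    local hosts_file="${1:-/etc/hosts}"
    local entries=(
        "192.168.74.238 k8s-master1"
        "192.168.74.248 k8s-master2"
        "192.168.74.229 k8s-master3"
        "192.168.74.100 VIP"
    )
    local entry
    for entry in "${entries[@]}"; do
        # -x: whole-line match, -F: fixed string, -s: no error if the file is missing
        grep -qsxF "$entry" "$hosts_file" || echo "$entry" >> "$hosts_file"
    done
}
# Usage (on every node): add_hosts_entries
```

Pass a different file path as the first argument to try it without touching /etc/hosts.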

(3) Disable firewalld (replacing it with an empty iptables ruleset), SELinux and swap

systemctl stop firewalld && systemctl disable firewalld

yum -y install iptables-services && systemctl start iptables && systemctl enable iptables && iptables -F && service iptables save

setenforce 0 && sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config

swapoff -a && sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab

(4) Configure a static IP (set IPADDR to each node's own address; the example below is k8s-master2)

vim /etc/sysconfig/network-scripts/ifcfg-ens33

IPADDR=192.168.74.248

NETMASK=255.255.255.0

GATEWAY=192.168.74.2

BOOTPROTO=static

Don't forget to set the DNS server:

vim /etc/resolv.conf

nameserver 202.106.0.20

(5) Stop services the system doesn't need

systemctl stop postfix && systemctl disable postfix

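(6) Tune kernel parameters for Kubernetes

Put comments on their own lines. sysctl.d files only treat lines that start with # or ; as comments, so a trailing "# ..." after a value is not treated as a comment.

cat > kubernetes.conf <<EOF

net.bridge.bridge-nf-call-iptables=1

net.bridge.bridge-nf-call-ip6tables=1

net.ipv4.ip_forward=1

net.ipv4.tcp_tw_recycle=0

# Avoid swap; only allow it when the system is about to run out of memory

vm.swappiness=0

# Don't check whether enough physical memory is available (always allow overcommit)

vm.overcommit_memory=1

# Don't panic on OOM; let the OOM killer handle it

vm.panic_on_oom=0

fs.inotify.max_user_instances=8192

fs.inotify.max_user_watches=1048576

fs.file-max=52706963

fs.nr_open=52706963

net.ipv6.conf.all.disable_ipv6=1

net.netfilter.nf_conntrack_max=2310720

EOF

# The net.bridge.* keys only exist once br_netfilter is loaded, and nf_conntrack_max needs nf_conntrack

modprobe br_netfilter && modprobe nf_conntrack

cp kubernetes.conf /etc/sysctl.d/kubernetes.conf

sysctl -p /etc/sysctl.d/kubernetes.conf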
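To confirm that the settings took effect, you can compare each key with its live value under /proc/sys. A key maps to a path by turning dots into slashes, so net.ipv4.ip_forward becomes /proc/sys/net/ipv4/ip_forward. Below is a bash sketch. `verify_sysctl_conf` is a hypothetical name, and its optional second argument (which replaces /proc/sys) exists only to make it testable:

```shell
#!/bin/bash
# Hypothetical helper: compare every key=value line of a sysctl.d file with the
# live value. A key maps to a path by replacing dots with slashes, e.g.
# net.ipv4.ip_forward -> /proc/sys/net/ipv4/ip_forward.
# The optional second argument replaces /proc/sys (only useful for testing).
verify_sysctl_conf() {
    local conf="$1" proc_root="${2:-/proc/sys}"
    local key value path current rc=0
    while IFS='=' read -r key value || [[ -n "$key" ]]; do
        key="${key//[[:space:]]/}"
        # skip blank lines and comment lines (starting with # or ;)
        [[ -z "$key" || "$key" == \#* || "$key" == \;* ]] && continue
        path="$proc_root/${key//.//}"
        if [[ ! -r "$path" ]]; then
            echo "MISSING $key ($path)"
            rc=1
            continue
        fi
        current="$(<"$path")"
        # compare with all whitespace removed (multi-value keys use tabs in /proc)
        if [[ "${current//[[:space:]]/}" == "${value//[[:space:]]/}" ]]; then
            echo "OK      $key = $current"
        else
            echo "DIFF    $key: expected '$value', got '$current'"
            rc=1
        fi
    done < "$conf"
    return "$rc"
}
# Usage: verify_sysctl_conf /etc/sysctl.d/kubernetes.conf
```

The function prints one OK, DIFF or MISSING line per key and returns non-zero if anything differs. A MISSING net.bridge.* key usually means br_netfilter is not loaded.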

(7) Set the time zone

# Set the system time zone to China/Shanghai

timedatectl set-timezone Asia/Shanghai

# Keep the hardware clock (RTC) in UTC

timedatectl set-local-rtc 0

# Restart services that depend on the system time

systemctl restart rsyslog

systemctl restart crond

(8) Configure rsyslogd and systemd-journald

mkdir -p /var/log/journal # directory for persistent logs

mkdir -p /etc/systemd/journald.conf.d

cat > /etc/systemd/journald.conf.d/99-prophet.conf <<EOF

[Journal]

# Persist logs to disk

Storage=persistent

# Compress archived logs

Compress=yes

SyncIntervalSec=5m

RateLimitInterval=30s

RateLimitBurst=1000

# Use at most 10G of disk space

SystemMaxUse=10G

# Limit a single journal file to 200M

SystemMaxFileSize=200M

# Keep logs for 2 weeks

MaxRetentionSec=2week

# Don't forward logs to syslog

ForwardToSyslog=no

ForwardToSyslog=no

EOF

systemctl restart systemd-journald

(9) Upgrade the kernel to 4.4

The stock 3.10.x kernel in CentOS 7.x has bugs that make Docker and Kubernetes unstable, so install the long-term kernel (kernel-lt) from ELRepo:

rpm -Uvh http://www.elrepo.org/elrepo-release-7.0-3.el7.elrepo.noarch.rpm

yum --enablerepo=elrepo-kernel install -y kernel-lt

# After installing, check that the new kernel's menuentry in /boot/grub2/grub.cfg contains an initrd16 line; if it doesn't, install again!

# Boot the new kernel by default (the version in the title must match the kernel-lt that was actually installed)

grub2-set-default "CentOS Linux (4.4.182-1.el7.elrepo.x86_64) 7 (Core)"

reboot # reboot the server
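The menu entry title carries the version of whatever kernel-lt ELRepo currently ships, so you can read it from grub.cfg instead of typing it by hand. Below is a bash sketch. It assumes the CentOS 7 grub2 layout, where each top-level entry starts with `menuentry '<title>'`. The function names are hypothetical:

```shell
#!/bin/bash
# Print the titles of the top-level grub2 menu entries, in boot-menu order.
# Each such line looks like: menuentry 'CentOS Linux (...) 7 (Core)' --class ...
list_grub_entries() {
    local cfg="${1:-/boot/grub2/grub.cfg}"
    awk -F\' '$1 == "menuentry " { print $2 }' "$cfg"
}

# Hypothetical helper: print the first entry whose title mentions "elrepo",
# i.e. the kernel-lt kernel installed above; fails if there is none.
find_elrepo_entry() {
    list_grub_entries "$1" | grep -m1 'elrepo'
}
# Usage:
#   grub2-set-default "$(find_elrepo_entry)"
#   grub2-editenv list        # saved_entry should now name the elrepo kernel
```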

(10) Disable NUMA

cp /etc/default/grub{,.bak}

vim /etc/default/grub # append `numa=off` to the GRUB_CMDLINE_LINUX line, as the diff below shows:

diff /etc/default/grub.bak /etc/default/grub

6c6

< GRUB_CMDLINE_LINUX="crashkernel=auto rd.lvm.lv=centos/root rhgb quiet"

---

> GRUB_CMDLINE_LINUX="crashkernel=auto rd.lvm.lv=centos/root rhgb quiet numa=off"

cp /boot/grub2/grub.cfg{,.bak}

grub2-mkconfig -o /boot/grub2/grub.cfg

Reboot once the change is made.
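You can also script the same edit so that it is safe to run more than once. Below is a bash sketch that uses GNU sed. `add_kernel_param` is a hypothetical helper that appends a parameter to GRUB_CMDLINE_LINUX only if it is missing:

```shell
#!/bin/bash
# Hypothetical helper: append a kernel parameter to GRUB_CMDLINE_LINUX in
# /etc/default/grub (or the file given as $2) unless it is already present.
add_kernel_param() {
    local param="$1" file="${2:-/etc/default/grub}"
    if grep -q "^GRUB_CMDLINE_LINUX=.*[\" ]${param}[\" ]" "$file"; then
        return 0    # already there, nothing to do
    fi
    # Insert " <param>" just before the closing double quote (GNU sed -i).
    sed -i "s/^\(GRUB_CMDLINE_LINUX=\".*\)\"\$/\1 ${param}\"/" "$file"
}
# Usage:
#   add_kernel_param numa=off && grub2-mkconfig -o /boot/grub2/grub.cfg
#   # after the reboot, numa=off should appear in /proc/cmdline
```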

2. Prerequisites for running kube-proxy in IPVS mode

modprobe br_netfilter

cat > /etc/sysconfig/modules/ipvs.modules <<EOF

#!/bin/bash

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack_ipv4

EOF

chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack_ipv4
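nf_conntrack_ipv4 exists on the 3.10 and 4.4 kernels used here. On kernels 4.19 and later it was merged into nf_conntrack, so the modprobe and lsmod lines above fail there. Below is a bash sketch that picks the module name from the kernel release string. `conntrack_module` is a hypothetical helper:

```shell
#!/bin/bash
# Hypothetical helper: print the conntrack module to load for a kernel release
# (default: the running kernel). From 4.19 on, nf_conntrack_ipv4 was merged
# into nf_conntrack.
conntrack_module() {
    local release="${1:-$(uname -r)}"
    local version="${release%%-*}"    # e.g. 4.4.182-1.el7.elrepo.x86_64 -> 4.4.182
    # sort -V orders version strings; if 4.19 sorts first, version >= 4.19
    if [[ "$(printf '%s\n' "$version" 4.19 | sort -V | head -n1)" == "4.19" ]]; then
        echo nf_conntrack
    else
        echo nf_conntrack_ipv4
    fi
}
# Usage: modprobe -- "$(conntrack_module)" && lsmod | grep -e ip_vs -e nf_conntrack
```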

3. Install Docker

yum install -y yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

yum update -y && yum install -y docker-ce

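The update makes a newer 3.10 kernel the default boot entry again, so set the 4.4 kernel as the default once more and reboot:

grub2-set-default "CentOS Linux (4.4.182-1.el7.elrepo.x86_64) 7 (Core)"

reboot

systemctl start docker

Next, configure the Docker daemon. exec-opts makes Docker use the systemd cgroup driver, log-driver stores container logs as JSON files, and log-opts max-size rotates each log at 100 MB. JSON has no comment syntax, so keep comments out of daemon.json:

mkdir -p /etc/docker

cat > /etc/docker/daemon.json <<EOF
{
  "registry-mirrors": ["https://h5d0mk6d.mirror.aliyuncs.com"],
  "exec-opts": ["native.cgroupdriver=systemd"],
  "log-driver": "json-file",
  "log-opts": {
    "max-size": "100m"
  }
}
EOF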

systemctl daemon-reload

systemctl restart docker && systemctl enable docker
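dockerd refuses to start when daemon.json is not valid JSON, for example when it contains `#` comments. It is worth validating the file before restarting Docker. Below is a sketch that assumes python3 is available and uses its standard json.tool module as the validator. `check_daemon_json` is a hypothetical name:

```shell
#!/bin/bash
# Hypothetical helper: check that a daemon.json file is valid JSON (JSON has no
# comment syntax), using python3's standard json.tool module as the validator.
check_daemon_json() {
    local file="${1:-/etc/docker/daemon.json}"
    if python3 -m json.tool "$file" > /dev/null 2>&1; then
        echo "valid JSON: $file"
    else
        echo "invalid JSON: $file" >&2
        return 1
    fi
}
# Usage:
#   check_daemon_json && systemctl restart docker
#   docker info --format '{{.CgroupDriver}}'   # should print: systemd
```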

4. Import the images

docker image load < keepalived.tar

docker image load < haproxy.tar

tar xf kubeadm-basic.images.tar.gz

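Then run a script like the following to load every image in /root/data/kubeadm-basic.images, skipping the load-images.sh script itself:

#!/bin/bash

cd /root/data/kubeadm-basic.images || exit 1

ls /root/data/kubeadm-basic.images | grep -v load-images.sh > /tmp/k8s-images.txt

for i in $( cat /tmp/k8s-images.txt )

do

docker load -i "$i"

done

rm -rf /tmp/k8s-images.txt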
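After kubeadm is installed (step 5), you can check the loaded images against the list kubeadm expects. Below is a bash sketch. `missing_images` is a hypothetical helper that prints every `repo:tag` from the first list that is absent from the second. The /tmp file names in the usage comments are placeholders:

```shell
#!/bin/bash
# Hypothetical helper: print each "repo:tag" line of the first file that does
# not appear (as a whole line) in the second file. No output: nothing missing.
missing_images() {
    local required="$1" present="$2"
    grep -vxF -f "$present" "$required" || true
}
# Usage, once kubeadm is installed:
#   kubeadm config images list --kubernetes-version v1.15.1 > /tmp/required.txt
#   docker images --format '{{.Repository}}:{{.Tag}}' > /tmp/present.txt
#   missing_images /tmp/required.txt /tmp/present.txt
```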

Start HAProxy

tar xf start.keep.tar.gz

cd data/lb/

vim haproxy.cfg

global

log 127.0.0.1 local0

log 127.0.0.1 local1 notice

maxconn 4096

#chroot /usr/share/haproxy

#user haproxy

#group haproxy

daemon

defaults

log global

mode http

option httplog

option dontlognull

retries 3

option redispatch

timeout connect 5000

timeout client 50000

timeout server 50000

frontend stats-front

bind *:8081

mode http

default_backend stats-back

frontend fe_k8s_6444

bind *:6444

mode tcp

timeout client 1h

log global

option tcplog

default_backend be_k8s_6443

acl is_websocket hdr(Upgrade) -i WebSocket

acl is_websocket hdr_beg(Host) -i ws

backend stats-back

mode http

balance roundrobin

stats uri /haproxy/stats

stats auth pxcstats:secret

backend be_k8s_6443

mode tcp

timeout queue 1h

timeout server 1h

timeout connect 1h

log global

balance roundrobin

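# server k8s-master1 192.168.74.238:6443

# server k8s-master2 192.168.74.248:6443

server k8s-master3 192.168.74.229:6443

For now, only add this node's own API server; the other two are added later. Each server line needs a unique name.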
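When the other two masters are added later, the backend needs one `server` line per master. Below is a bash sketch that generates these lines. `haproxy_server_lines` is a hypothetical helper. Unlike the lines above, it appends HAProxy's `check` option, which health-checks each API server so that traffic skips a master that is down. Drop `check` if you want to match the original config exactly:

```shell
#!/bin/bash
# Hypothetical helper: print one HAProxy "server" line per master IP for the
# be_k8s_6443 backend, named k8s-master1, k8s-master2, ... in argument order.
# "check" enables HAProxy health checks so a down API server is skipped.
haproxy_server_lines() {
    local port="$1"; shift
    local n=1 ip
    for ip in "$@"; do
        printf '    server k8s-master%d %s:%s check\n' "$n" "$ip" "$port"
        n=$(( n + 1 ))
    done
}
# Usage: haproxy_server_lines 6443 192.168.74.238 192.168.74.248 192.168.74.229
```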

vim start-haproxy.sh

#!/bin/bash

MasterIP1=192.168.74.238

MasterIP2=192.168.74.248

MasterIP3=192.168.74.229

MasterPort=6443

docker run -d --restart=always --name HAProxy-K8S -p 6444:6444 \

-e MasterIP1=$MasterIP1 \

-e MasterIP2=$MasterIP2 \

-e MasterIP3=$MasterIP3 \

-e MasterPort=$MasterPort \

-v /data/lb/etc/haproxy.cfg:/usr/local/etc/haproxy/haproxy.cfg \

wise2c/haproxy-k8s

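If your haproxy.cfg is in a different location, change the host path of the -v mount in the script accordingly.

chmod +x start-haproxy.sh && ./start-haproxy.sh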

netstat -aunpt | grep 6444

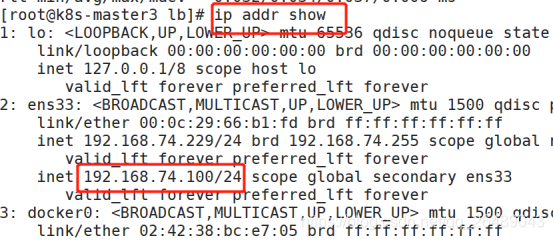

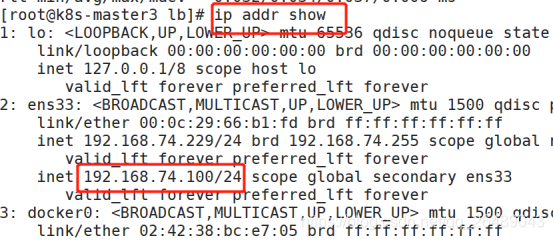

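Start keepalived

vim start-keepalived.sh

#!/bin/bash

VIRTUAL_IP=192.168.74.100 # VIP address

INTERFACE=ens33 # network interface name

NETMASK_BIT=24 # netmask as a prefix length (255.255.255.0)

CHECK_PORT=6444 # port to check (the HAProxy frontend port)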

RID=10

VRID=160

MCAST_GROUP=224.0.0.18

docker run -itd --restart=always --name=Keepalived-K8S \

--net=host --cap-add=NET_ADMIN \

-e VIRTUAL_IP=$VIRTUAL_IP \

-e INTERFACE=$INTERFACE \

-e CHECK_PORT=$CHECK_PORT \

-e RID=$RID \

-e VRID=$VRID \

-e NETMASK_BIT=$NETMASK_BIT \

-e MCAST_GROUP=$MCAST_GROUP \

wise2c/keepalived-k8s

chmod +x start-keepalived.sh && ./start-keepalived.sh
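NETMASK_BIT is the prefix-length form of the NETMASK configured in ifcfg-ens33 (255.255.255.0 corresponds to 24). If your network uses a different mask, a small bash sketch can do the conversion. `netmask_to_prefix` is a hypothetical helper that counts the 1 bits and rejects non-contiguous masks:

```shell
#!/bin/bash
# Hypothetical helper: convert a dotted-quad netmask (e.g. 255.255.255.0) to a
# prefix length (e.g. 24) by counting its 1 bits; non-contiguous masks fail.
netmask_to_prefix() {
    local mask="$1" octet bits=0 seen_zero=0 i
    local IFS=.
    for octet in $mask; do
        for (( i = 7; i >= 0; i-- )); do
            if (( (octet >> i) & 1 )); then
                if (( seen_zero )); then
                    return 1            # a 1 bit after a 0 bit: not a valid netmask
                fi
                bits=$(( bits + 1 ))
            else
                seen_zero=1
            fi
        done
    done
    echo "$bits"
}
# Usage: NETMASK_BIT=$(netmask_to_prefix 255.255.255.0)
```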

5. Install the cluster software: kubeadm, kubelet and kubectl

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg
       http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

yum -y install kubeadm-1.15.1 kubectl-1.15.1 kubelet-1.15.1

systemctl enable kubelet.service

kubeadm config print init-defaults > kubeadm-config.yaml

Edit kubeadm-config.yaml so that it reads as follows (YAML indentation matters):

apiVersion: kubeadm.k8s.io/v1beta2
bootstrapTokens:
- groups:
  - system:bootstrappers:kubeadm:default-node-token
  token: abcdef.0123456789abcdef
  ttl: 24h0m0s
  usages:
  - signing
  - authentication
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 192.168.74.229 # this node's own IP (the node running HAProxy)
  bindPort: 6443
nodeRegistration:
  criSocket: /var/run/dockershim.sock
  name: k8s-master3 # keepalived currently holds the VIP on this node, hence master3
  taints:
  - effect: NoSchedule
    key: node-role.kubernetes.io/master
---
apiServer:
  timeoutForControlPlane: 4m0s
apiVersion: kubeadm.k8s.io/v1beta2
certificatesDir: /etc/kubernetes/pki
clusterName: kubernetes
controlPlaneEndpoint: "192.168.74.100:6444" # not in the defaults, add it yourself: VIP and HAProxy port
controllerManager: {}
dns:
  type: CoreDNS
etcd:
  local:
    dataDir: /var/lib/etcd
imageRepository: k8s.gcr.io
kind: ClusterConfiguration
kubernetesVersion: v1.15.1 # must match the installed kubelet version
networking:
  dnsDomain: cluster.local
  podSubnet: 10.244.0.0/16 # --pod-network-cidr can't be combined with --config, so set it here
  serviceSubnet: 10.1.0.0/16
  # serviceSubnet: 10.96.0.0/12 (kubeadm default)
scheduler: {}
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
featureGates:
  SupportIPVSProxyMode: true
mode: ipvs # switch kube-proxy to IPVS

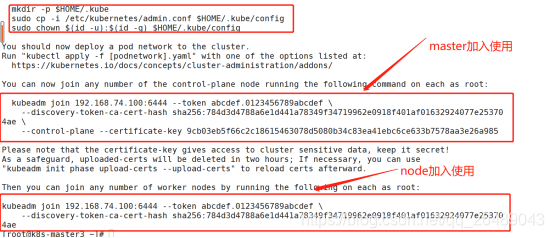

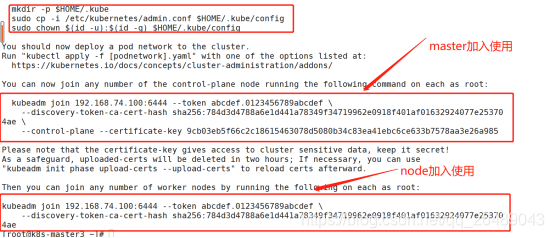
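As the comment in the config notes, kubernetesVersion should match the installed kubelet. Below is a bash sketch that compares the two. `kubelet_version_matches` is hypothetical, and the sketch assumes that `kubelet --version` prints `Kubernetes vX.Y.Z`:

```shell
#!/bin/bash
# Hypothetical helper: succeed if kubernetesVersion in the kubeadm config equals
# the version reported by kubelet (`kubelet --version` prints "Kubernetes vX.Y.Z").
kubelet_version_matches() {
    local cfg="$1" kubelet_out="$2" want have
    want="$(awk '$1 == "kubernetesVersion:" { print $2; exit }' "$cfg")"
    have="$(awk '{ print $2 }' <<< "$kubelet_out")"
    [[ -n "$want" && "$want" == "$have" ]]
}
# Usage: kubelet_version_matches kubeadm-config.yaml "$(kubelet --version)" || echo "version mismatch"
```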

6. Initialize the cluster

kubeadm init --config=kubeadm-config.yaml --experimental-upload-certs | tee kubeadm-init.log

(On 1.15 this flag still works but is deprecated in favor of --upload-certs.)

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

scp start-* k8s-master1:/root/

scp start-* k8s-master2:/root/

scp haproxy.cfg k8s-master2:/root/

scp haproxy.cfg k8s-master1:/root/

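For now, keep haproxy.cfg pointing only at the 229 node. Adding backends that are not up yet tends to cause errors, so we adjust the file once all masters are running.

On k8s-master1 and k8s-master2, start HAProxy and keepalived with the copied scripts, then run the control-plane join command. Make sure you copy the first join command printed by kubeadm init (the one with --control-plane), and take the values from your own kubeadm-init.log. The values below come from my cluster: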

kubeadm join 192.168.74.100:6444 --token abcdef.0123456789abcdef \

--discovery-token-ca-cert-hash sha256:784d3d4788a6e1d441a78349f34719962e0918f401af01632924077e253704ae \

--control-plane --certificate-key 9cb03eb5f66c2c18615463078d5080b34c83ea41ebc6ce633b7578aa3e26a985
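Because kubeadm init's output was saved with tee, you can pull the control-plane join command out of kubeadm-init.log instead of copying it by hand. Below is a bash and awk sketch. `extract_join_cmd` is hypothetical. It assumes the log prints each join command as backslash-continued lines, as in the command above:

```shell
#!/bin/bash
# Hypothetical helper: read kubeadm init output on stdin and print the first
# "kubeadm join" command (with its backslash-continued lines) that contains
# --control-plane. Leading indentation is stripped from each line.
extract_join_cmd() {
    awk '
        /kubeadm join/ { buf = ""; collecting = 1 }
        collecting {
            line = $0
            sub(/^[ \t]+/, "", line)
            buf = (buf == "" ? line : buf "\n" line)
            if (line !~ /\\$/) {                 # no trailing backslash: command ends
                collecting = 0
                if (buf ~ /--control-plane/) { print buf; exit }
            }
        }'
}
# Usage: extract_join_cmd < kubeadm-init.log
```

The output contains the bootstrap token and the certificate key, so treat it as a secret.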

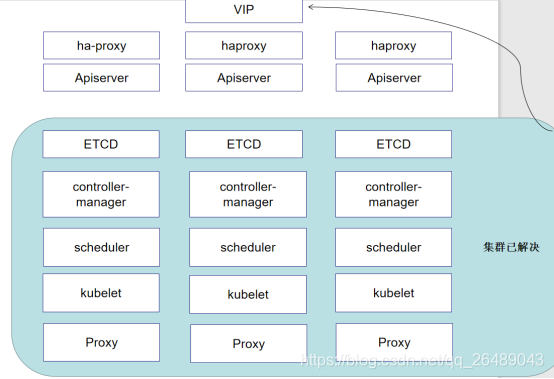

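7. Restart HAProxy and deploy the flannel network plugin

The HAProxy we started earlier forwards traffic to a single node only. At that point only one master was installed, and listing the others could have made requests fail. Now that all three masters are up, uncomment the other two server lines in haproxy.cfg and restart HAProxy so that API requests are spread across all three nodes. kubectl does all its work through the API server.

docker rm -f HAProxy-K8S && bash /root/aa/start-haproxy.sh # do this on all 3 nodes

kubectl apply -f kube-flannel.yml # if the flannel pods keep restarting, the pod network CIDR was not set correctly; it must be given as podSubnet in the kubeadm config file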
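A pod CIDR mismatch is the usual cause of restarting flannel pods, so it helps to compare the two files before applying. Below is a bash sketch with hypothetical function names. It assumes that podSubnet appears on its own line in kubeadm-config.yaml, and that kube-flannel.yml carries the CIDR as `"Network": "..."` in its net-conf.json (10.244.0.0/16 by default):

```shell
#!/bin/bash
# Hypothetical helpers: read the pod CIDR from kubeadm-config.yaml (podSubnet)
# and from kube-flannel.yml (the "Network" field of net-conf.json).
pod_cidr_from_kubeadm() {
    awk '$1 == "podSubnet:" { print $2; exit }' "$1"
}

pod_cidr_from_flannel() {
    grep -o '"Network": *"[^"]*"' "$1" | head -n1 | cut -d'"' -f4
}

# Succeed only if both files name the same, non-empty pod CIDR.
check_pod_cidr() {
    local kubeadm_cidr flannel_cidr
    kubeadm_cidr="$(pod_cidr_from_kubeadm "$1")"
    flannel_cidr="$(pod_cidr_from_flannel "$2")"
    if [[ -n "$kubeadm_cidr" && "$kubeadm_cidr" == "$flannel_cidr" ]]; then
        echo "pod CIDR matches: $kubeadm_cidr"
    else
        echo "mismatch: podSubnet='$kubeadm_cidr', flannel Network='$flannel_cidr'" >&2
        return 1
    fi
}
# Usage: check_pod_cidr kubeadm-config.yaml kube-flannel.yml && kubectl apply -f kube-flannel.yml
```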

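8. Change the cluster address that kubectl connects to

kubectl normally reaches the cluster through the VIP. A master node, however, can simply talk to the HAProxy on its own IP, and skipping the VIP can make access faster. So on the node that holds the VIP, change the address to that node's own IP. If that node goes down, the VIP floats to another master, and you can likewise point that master at its own IP.

vim .kube/config

server: https://192.168.74.229:6444

Note: after the change, some commands may stop working. On every node, use that node's own local IP.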
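Below are two small helpers for this step, as a bash sketch with hypothetical names. `holds_vip` reads `ip -o -4 addr show` output and reports whether this node currently holds the VIP. `set_kubeconfig_server` rewrites the `server:` line with GNU sed and assumes the kubeconfig has a single cluster, as admin.conf does. `kubectl config set-cluster kubernetes --server=https://192.168.74.229:6444` does the same through kubectl itself, since kubeadm names the cluster kubernetes:

```shell
#!/bin/bash
# Hypothetical helper: succeed if the given IP is one of the IPv4 addresses in
# `ip -o -4 addr show` output read from stdin (field 4 is "address/prefix").
holds_vip() {
    local vip="$1"
    awk -v vip="$vip" '
        $3 == "inet" { split($4, a, "/"); if (a[1] == vip) found = 1 }
        END { exit !found }'
}

# Hypothetical helper: point every "server:" line of a kubeconfig at a new URL
# (GNU sed; admin.conf contains a single cluster, so one line is changed).
set_kubeconfig_server() {
    local server="$1" cfg="${2:-$HOME/.kube/config}"
    sed -i "s#^\([[:space:]]*server:\).*#\1 ${server}#" "$cfg"
}
# Usage on each master:
#   ip -o -4 addr show | holds_vip 192.168.74.100 && echo "this node holds the VIP"
#   set_kubeconfig_server https://192.168.74.229:6444   # use this node's own IP
```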

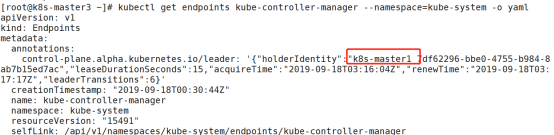

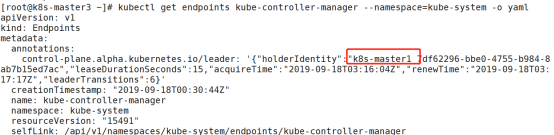

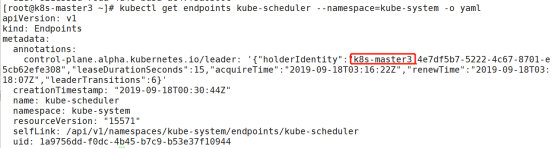

9. Check the cluster status

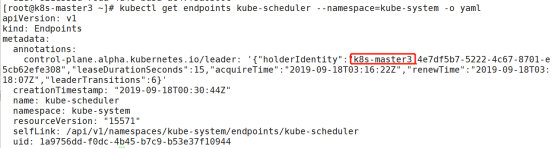

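kubectl get endpoints kube-controller-manager --namespace=kube-system -o yaml

This shows the controller-manager leader. The holderIdentity in the control-plane.alpha.kubernetes.io/leader annotation names the master that currently holds the lock.

kubectl get endpoints kube-scheduler --namespace=kube-system -o yaml

This shows the scheduler leader in the same way.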

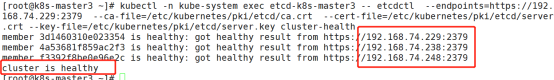

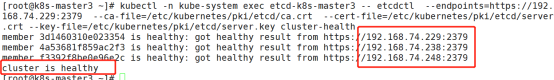
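You can also print the leader directly instead of reading the whole YAML. Below is a bash sketch in which `leader_of` and `holder_identity` are hypothetical helpers. The annotation name is the one shown in the endpoints above. kubectl's JSONPath needs the dots inside it escaped with backslashes:

```shell
#!/bin/bash
# Extract holderIdentity from the leader-election annotation JSON on stdin,
# e.g. {"holderIdentity":"k8s-master3_...","leaseDurationSeconds":15,...}
holder_identity() {
    grep -o '"holderIdentity":"[^"]*"' | cut -d'"' -f4
}

# Hypothetical helper: print the current leader of a control-plane component
# (kube-controller-manager or kube-scheduler). Dots inside the annotation key
# are escaped with backslashes for kubectl's JSONPath.
leader_of() {
    kubectl -n kube-system get endpoints "$1" \
        -o jsonpath='{.metadata.annotations.control-plane\.alpha\.kubernetes\.io/leader}' |
        holder_identity
}
# Usage: leader_of kube-controller-manager; leader_of kube-scheduler
```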

kubectl -n kube-system exec etcd-k8s-master3 -- etcdctl --endpoints=https://192.168.74.229:2379 --ca-file=/etc/kubernetes/pki/etcd/ca.crt --cert-file=/etc/kubernetes/pki/etcd/server.crt --key-file=/etc/kubernetes/pki/etcd/server.key cluster-health

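This runs etcdctl inside k8s-master3's etcd pod, against that node's etcd endpoint.

The output lists 3 members and reports that the cluster is healthy.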
