Docker version: 24.0.7

Nacos version: 2.3.1-SNAPSHOT

CentOS version: CentOS Stream 9 (64-bit)

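- I. Pull Nacos

- II. Modify the source code

- Open the cloned project and modify the marked locations in the files below

- Modify the parent project's pom.xml and specify the version

- Modify the pom.xml under config

- Modify the pom.xml under naming

- Modify application.properties under nacos-console

- Modify the DataSourceConstant file under nacos-datasource-plugin

- Modify the ExternalDataSourceProperties class under persistence

- Create a dm package under impl in nacos.plugin.datasource and add the following classes

- Modify the addConfigInfoAtomic method of the ExternalConfigInfoPersistServiceImpl class in the external package of the nacos-config subproject

- Modify the addConfigInfoAtomic method of the ExternalStoragePersistServiceImpl class in the external package of the nacos-config subproject

- III. Package Nacos as a tar.gz

- IV. Pull nacos-docker and open it

- V. Modify the code

- VI. Build the image

- VII. Import the image and run it

- VIII. Pitfalls

- IX. References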

I. Pull Nacos

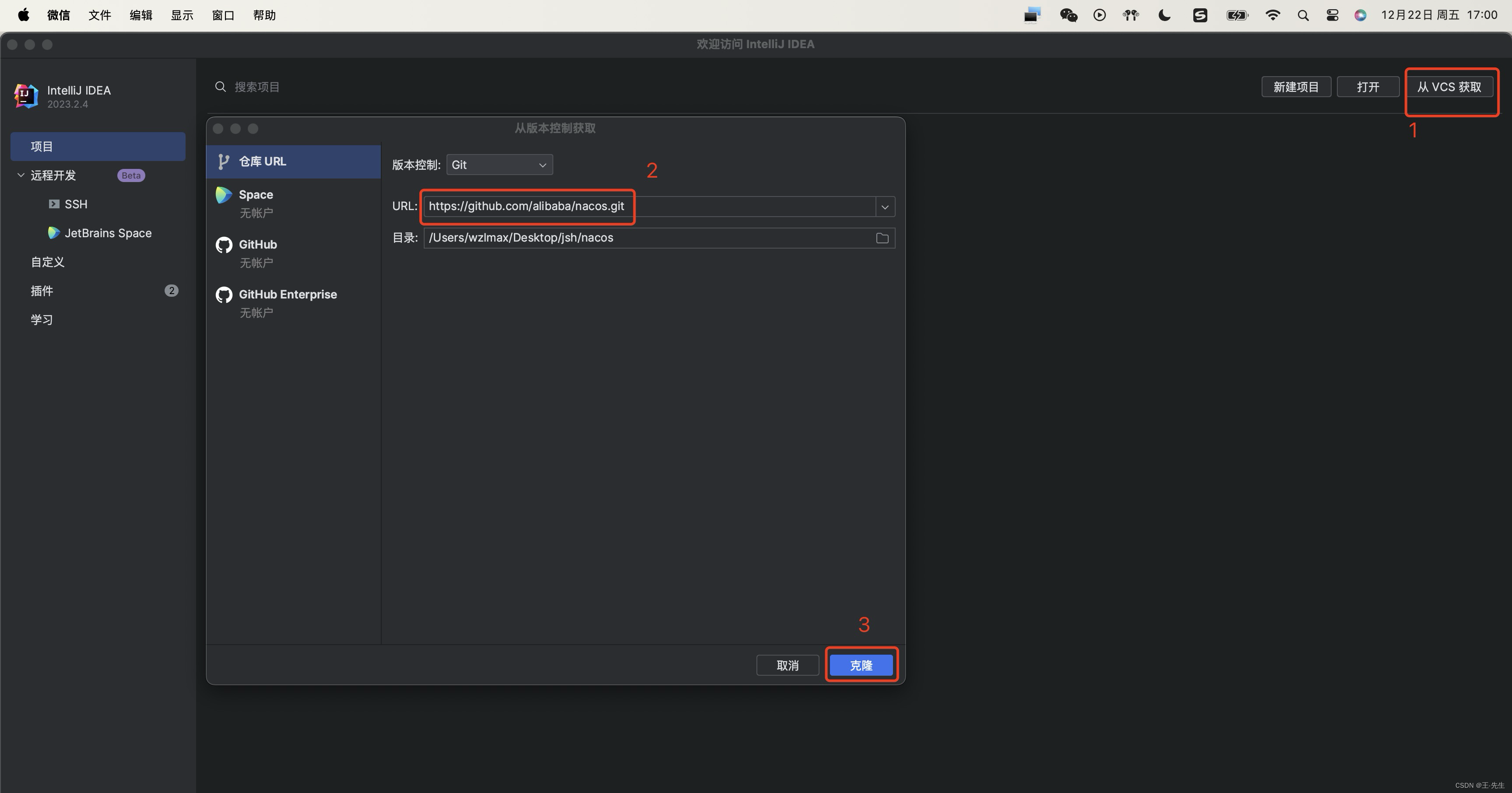

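1. Pull the Nacos source code to your local machine

GitHub repository: nacos

Open IDEA, click "Get from VCS" on the welcome page, paste the repository address into the URL field, and click Clone to clone the source.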

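II. Modify the source code

Open the cloned project and modify the marked locations in the files below

Modify the parent project's pom.xml and specify the version

<!-- Add the Dameng JDBC driver dependency -->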

<dependency>

<groupId>com.dameng</groupId>

<artifactId>Dm8JdbcDriver18</artifactId>

<version>${dm8.version}</version>

</dependency>

<!-- Define the Dameng driver version -->

<properties>

<dm8.version>8.1.0.157</dm8.version>

</properties>
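Once the dependency resolves, one quick sanity check is to try loading the driver class from the classpath. The following is only a minimal sketch: `DriverCheck` is a hypothetical helper (not part of Nacos), and the class name `dm.jdbc.driver.DmDriver` is the one this article sets for `db.jdbcDriverName`.

```java
public class DriverCheck {

    /** Returns true if the named class can be located on the current classpath. */
    public static boolean isOnClasspath(String className) {
        try {
            // initialize=false: only locate the class, do not run its static initializers
            Class.forName(className, false, DriverCheck.class.getClassLoader());
            return true;
        } catch (ClassNotFoundException | LinkageError e) {
            return false;
        }
    }

    public static void main(String[] args) {
        // Driver class name as configured in this article (db.jdbcDriverName)
        String driver = "dm.jdbc.driver.DmDriver";
        System.out.println(driver + " on classpath: " + isOnClasspath(driver));
    }
}
```

Running it from a module that declares the dependency should report whether the jar was actually pulled in; if it prints `false`, the Maven coordinates or the local repository are the first things to check.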

Modify the pom.xml under config

<!-- Add the Dameng JDBC driver dependency -->

<dependency>

<groupId>com.dameng</groupId>

<artifactId>Dm8JdbcDriver18</artifactId>

</dependency>

Modify the pom.xml under naming

<!-- Add the Dameng JDBC driver dependency -->

<dependency>

<groupId>com.dameng</groupId>

<artifactId>Dm8JdbcDriver18</artifactId>

</dependency>

Modify application.properties under nacos-console

#*************** Spring Boot Related Configurations ***************#

### Default web context path:

server.servlet.contextPath=/nacos

### Include message field

server.error.include-message=ALWAYS

### Default web server port:

server.port=8848

#*************** Network Related Configurations ***************#

### If prefer hostname over ip for Nacos server addresses in cluster.conf:

# nacos.inetutils.prefer-hostname-over-ip=false

### Specify local server's IP:

# nacos.inetutils.ip-address=

#*************** Config Module Related Configurations ***************#

### Deprecated configuration property, it is recommended to use `spring.sql.init.platform` replaced.

# Modified

spring.sql.init.platform=dm

db.num=1

db.jdbcDriverName=dm.jdbc.driver.DmDriver

db.jdbcScheme=NACOS

db.url.0=jdbc:dm://<your-ip>:<port>?schema=NACOS&characterEncoding=UTF-8&useUnicode=true&useSSL=false&tinyInt1isBit=false&allowPublicKeyRetrieval=true&serverTimezone=Asia/Shanghai&clobAsString=1

db.user=<database username>

db.password=<database password>

# nacos.plugin.datasource.log.enabled=true

#spring.sql.init.platform=mysql

### Count of DB:

# db.num=1

### Connect URL of DB:

# db.url.0=jdbc:mysql://127.0.0.1:3306/nacos?characterEncoding=utf8&connectTimeout=1000&socketTimeout=3000&autoReconnect=true&useUnicode=true&useSSL=false&serverTimezone=UTC

# db.user=nacos

# db.password=nacos

### Count of DB:

### the maximum retry times for push

nacos.config.push.maxRetryTime=50

#*************** Naming Module Related Configurations ***************#

### Data dispatch task execution period in milliseconds:

### If enable data warmup. If set to false, the server would accept request without local data preparation:

# nacos.naming.data.warmup=true

### If enable the instance auto expiration, kind like of health check of instance:

# nacos.naming.expireInstance=true

nacos.naming.empty-service.auto-clean=true

nacos.naming.empty-service.clean.initial-delay-ms=50000

nacos.naming.empty-service.clean.period-time-ms=30000

#*************** CMDB Module Related Configurations ***************#

### The interval to dump external CMDB in seconds:

# nacos.cmdb.dumpTaskInterval=3600

### The interval of polling data change event in seconds:

# nacos.cmdb.eventTaskInterval=10

### The interval of loading labels in seconds:

# nacos.cmdb.labelTaskInterval=300

### If turn on data loading task:

# nacos.cmdb.loadDataAtStart=false

#*************** Metrics Related Configurations ***************#

### Metrics for prometheus

#management.endpoints.web.exposure.include=*

### Metrics for elastic search

management.metrics.export.elastic.enabled=false

#management.metrics.export.elastic.host=http://localhost:9200

### Metrics for influx

management.metrics.export.influx.enabled=false

#management.metrics.export.influx.db=springboot

#management.metrics.export.influx.uri=http://localhost:8086

#management.metrics.export.influx.auto-create-db=true

#management.metrics.export.influx.consistency=one

#management.metrics.export.influx.compressed=true

#*************** Access Log Related Configurations ***************#

### If turn on the access log:

server.tomcat.accesslog.enabled=true

### accesslog automatic cleaning time

server.tomcat.accesslog.max-days=30

### The access log pattern:

server.tomcat.accesslog.pattern=%h %l %u %t "%r" %s %b %D %{User-Agent}i %{Request-Source}i

### The directory of access log:

server.tomcat.basedir=file:.

#*************** Access Control Related Configurations ***************#

### If enable spring security, this option is deprecated in 1.2.0:

#spring.security.enabled=false

### The ignore urls of auth, is deprecated in 1.2.0:

nacos.security.ignore.urls=/,/error,/**/*.css,/**/*.js,/**/*.html,/**/*.map,/**/*.svg,/**/*.png,/**/*.ico,/console-ui/public/**,/v1/auth/**,/v1/console/health/**,/actuator/**,/v1/console/server/**

### The auth system to use, currently only 'nacos' and 'ldap' is supported:

nacos.core.auth.system.type=nacos

### If turn on auth system:

# Modified

nacos.core.auth.enabled=true

### Turn on/off caching of auth information. By turning on this switch, the update of auth information would have a 15 seconds delay.

nacos.core.auth.caching.enabled=true

### Since 1.4.1, Turn on/off white auth for user-agent: nacos-server, only for upgrade from old version.

nacos.core.auth.enable.userAgentAuthWhite=false

### Since 1.4.1, worked when nacos.core.auth.enabled=true and nacos.core.auth.enable.userAgentAuthWhite=false.

### The two properties is the white list for auth and used by identity the request from other server.

# Change the key and value

nacos.core.auth.server.identity.key=example

nacos.core.auth.server.identity.value=example

### worked when nacos.core.auth.system.type=nacos

### The token expiration in seconds:

nacos.core.auth.plugin.nacos.token.cache.enable=false

nacos.core.auth.plugin.nacos.token.expire.seconds=18000

### The default token (Base64 string):

# Replace with your own SecretKey value

nacos.core.auth.plugin.nacos.token.secret.key=SecretKey012345678901234567890123456789012345678901234567890123456789

### worked when nacos.core.auth.system.type=ldap,{0} is Placeholder,replace login username

#nacos.core.auth.ldap.url=ldap://localhost:389

#nacos.core.auth.ldap.basedc=dc=example,dc=org

#nacos.core.auth.ldap.userDn=cn=admin,${nacos.core.auth.ldap.basedc}

#nacos.core.auth.ldap.password=admin

#nacos.core.auth.ldap.userdn=cn={0},dc=example,dc=org

#nacos.core.auth.ldap.filter.prefix=uid

#nacos.core.auth.ldap.case.sensitive=true

#nacos.core.auth.ldap.ignore.partial.result.exception=false

#*************** Control Plugin Related Configurations ***************#

# plugin type

#nacos.plugin.control.manager.type=nacos

# local control rule storage dir, default ${nacos.home}/data/connection and ${nacos.home}/data/tps

#nacos.plugin.control.rule.local.basedir=${nacos.home}

# external control rule storage type, if exist

#nacos.plugin.control.rule.external.storage=

#*************** Config Change Plugin Related Configurations ***************#

# webhook

#nacos.core.config.plugin.webhook.enabled=false

# It is recommended to use EB https://help.aliyun.com/document_detail/413974.html

#nacos.core.config.plugin.webhook.url=http://localhost:8080/webhook/send?token=***

# The content push max capacity ,byte

#nacos.core.config.plugin.webhook.contentMaxCapacity=102400

# whitelist

#nacos.core.config.plugin.whitelist.enabled=false

# The import file suffixs

#nacos.core.config.plugin.whitelist.suffixs=xml,text,properties,yaml,html

# fileformatcheck,which validate the import file of type and content

#nacos.core.config.plugin.fileformatcheck.enabled=false

#*************** Istio Related Configurations ***************#

### If turn on the MCP server:

nacos.istio.mcp.server.enabled=false

nacos.console.ui.enabled=true
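The comment on `nacos.core.auth.plugin.nacos.token.secret.key` above says the value is a Base64 string, and the sample key should be replaced with your own. One way to produce such a value is sketched below. `SecretKeyGenerator` is a hypothetical helper, and the 32-byte length is an assumption; check the auth documentation for your Nacos version for the actual minimum.

```java
import java.security.SecureRandom;
import java.util.Base64;

public class SecretKeyGenerator {

    /** Generates numBytes cryptographically random bytes and returns them Base64-encoded. */
    public static String generate(int numBytes) {
        byte[] raw = new byte[numBytes];
        new SecureRandom().nextBytes(raw);
        return Base64.getEncoder().encodeToString(raw);
    }

    public static void main(String[] args) {
        // 32 bytes is an assumed key length, not a value taken from the article
        System.out.println("nacos.core.auth.plugin.nacos.token.secret.key=" + generate(32));
    }
}
```

The printed line can be pasted into application.properties in place of the sample key.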

Modify the DataSourceConstant file under nacos-datasource-plugin

/*

* Copyright 1999-2022 Alibaba Group Holding Ltd.

*

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in writing, software

* distributed under the License is distributed on an "AS IS" BASIS,

* WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

* See the License for the specific language governing permissions and

* limitations under the License.

*/

package com.alibaba.nacos.plugin.datasource.constants;

/**

* The data source name.

*

* @author hyx

**/

public class DataSourceConstant {

public static final String MYSQL = "mysql";

public static final String DERBY = "derby";

// Add DM (Dameng)

public static final String DM = "dm";

}

Modify the ExternalDataSourceProperties class under persistence

/*

* Copyright 1999-2023 Alibaba Group Holding Ltd.

*

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in writing, software

* distributed under the License is distributed on an "AS IS" BASIS,

* WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

* See the License for the specific language governing permissions and

* limitations under the License.

*/

package com.alibaba.nacos.persistence.datasource;

import com.alibaba.nacos.common.utils.CollectionUtils;

import com.alibaba.nacos.common.utils.Preconditions;

import com.alibaba.nacos.common.utils.StringUtils;

import com.zaxxer.hikari.HikariDataSource;

import org.springframework.boot.context.properties.bind.Bindable;

import org.springframework.boot.context.properties.bind.Binder;

import org.springframework.core.env.Environment;

import java.util.ArrayList;

import java.util.List;

import java.util.Objects;

import static com.alibaba.nacos.common.utils.CollectionUtils.getOrDefault;

/**

* Properties of external DataSource.

*

* @author Nacos

*/

public class ExternalDataSourceProperties {

private static final String JDBC_DRIVER_NAME = "com.mysql.cj.jdbc.Driver";

private static final String TEST_QUERY = "SELECT 1";

// Added: Dameng driver class name

private String jdbcDriverName = "dm.jdbc.driver.DmDriver";

// Added: Dameng schema name

private String jdbcScheme = "NACOS";

private Integer num;

private List<String> url = new ArrayList<>();

private List<String> user = new ArrayList<>();

private List<String> password = new ArrayList<>();

public void setNum(Integer num) {

this.num = num;

}

public void setUrl(List<String> url) {

this.url = url;

}

public void setUser(List<String> user) {

this.user = user;

}

public void setPassword(List<String> password) {

this.password = password;

}

// Getters and setters for the driver name and schema

public String getJdbcDriverName() {

return jdbcDriverName;

}

public void setJdbcDriverName(String jdbcDriverName) {

this.jdbcDriverName = jdbcDriverName;

}

public String getJdbcScheme() {

return jdbcScheme;

}

public void setJdbcScheme(String jdbcScheme) {

this.jdbcScheme = jdbcScheme;

}

/**

* Build several HikariDataSource instances.

*

* @param environment {@link Environment}

* @param callback Callback function when constructing data source

* @return List of {@link HikariDataSource}

*/

List<HikariDataSource> build(Environment environment, Callback<HikariDataSource> callback) {

List<HikariDataSource> dataSources = new ArrayList<>();

Binder.get(environment).bind("db", Bindable.ofInstance(this));

Preconditions.checkArgument(Objects.nonNull(num), "db.num is null");

Preconditions.checkArgument(CollectionUtils.isNotEmpty(user), "db.user or db.user.[index] is null");

Preconditions.checkArgument(CollectionUtils.isNotEmpty(password), "db.password or db.password.[index] is null");

for (int index = 0; index < num; index++) {

int currentSize = index + 1;

Preconditions.checkArgument(url.size() >= currentSize, "db.url.%s is null", index);

DataSourcePoolProperties poolProperties = DataSourcePoolProperties.build(environment);

if (StringUtils.isEmpty(poolProperties.getDataSource().getDriverClassName())) {

poolProperties.setDriverClassName(JDBC_DRIVER_NAME);

}

poolProperties.setJdbcUrl(url.get(index).trim());

poolProperties.setUsername(getOrDefault(user, index, user.get(0)).trim());

poolProperties.setPassword(getOrDefault(password, index, password.get(0)).trim());

HikariDataSource ds = poolProperties.getDataSource();

if (StringUtils.isEmpty(ds.getConnectionTestQuery())) {

ds.setConnectionTestQuery(TEST_QUERY);

}

// Dameng database support

//ds.setDriverClassName(jdbcDriverName);

//ds.setSchema(jdbcScheme);

if (StringUtils.isNotEmpty(jdbcDriverName)) {

// Set the Dameng driver name and schema

ds.setDriverClassName(jdbcDriverName);

ds.setSchema(jdbcScheme);

} else {

ds.setDriverClassName(JDBC_DRIVER_NAME);

}

dataSources.add(ds);

callback.accept(ds);

}

Preconditions.checkArgument(CollectionUtils.isNotEmpty(dataSources), "no datasource available");

return dataSources;

}

interface Callback<D> {

/**

* Perform custom logic.

*

* @param datasource dataSource.

*/

void accept(D datasource);

}

}
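Two details of `build()` above are easy to miss: a single `db.user` / `db.password` entry is reused for every data source index, and the MySQL driver is only used when `jdbcDriverName` is empty. The sketch below isolates both. `DataSourceFallbackSketch` and its methods are hypothetical stand-ins written for illustration; in particular, `getOrDefault` here only assumes the behavior of `CollectionUtils.getOrDefault` (element at the index, else the default), and `SYSDBA` is just an example value.

```java
import java.util.Arrays;
import java.util.List;

public class DataSourceFallbackSketch {

    /** Assumed behavior of CollectionUtils.getOrDefault: element at index, or defaultValue if out of range. */
    public static <T> T getOrDefault(List<T> list, int index, T defaultValue) {
        return (index >= 0 && index < list.size()) ? list.get(index) : defaultValue;
    }

    /** Mirrors the if/else in build(): use the configured driver unless it is empty. */
    public static String resolveDriver(String configured, String fallback) {
        return (configured == null || configured.isEmpty()) ? fallback : configured;
    }

    public static void main(String[] args) {
        List<String> users = Arrays.asList("SYSDBA"); // one db.user entry (example value)
        // With db.num=2, index 1 has no user of its own and falls back to the first entry
        System.out.println(getOrDefault(users, 1, users.get(0)));
        // An empty db.jdbcDriverName falls back to the MySQL driver constant
        System.out.println(resolveDriver("", "com.mysql.cj.jdbc.Driver"));
        // The default field value selects the Dameng driver
        System.out.println(resolveDriver("dm.jdbc.driver.DmDriver", "com.mysql.cj.jdbc.Driver"));
    }
}
```

Because the field defaults to `dm.jdbc.driver.DmDriver`, the MySQL branch is only reached if `db.jdbcDriverName` is explicitly set to an empty value.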

Create a dm package under impl in nacos.plugin.datasource and add the following classes

ConfigInfoAggrMapperByDM.class

/*

* Copyright 1999-2022 Alibaba Group Holding Ltd.

*

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in writing, software

* distributed under the License is distributed on an "AS IS" BASIS,

* WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

* See the License for the specific language governing permissions and

* limitations under the License.

*/

package com.alibaba.nacos.plugin.datasource.impl.dm;

import com.alibaba.nacos.common.utils.CollectionUtils;

import com.alibaba.nacos.plugin.datasource.constants.DataSourceConstant;

import com.alibaba.nacos.plugin.datasource.constants.FieldConstant;

import com.alibaba.nacos.plugin.datasource.mapper.AbstractMapper;

import com.alibaba.nacos.plugin.datasource.mapper.ConfigInfoAggrMapper;

import com.alibaba.nacos.plugin.datasource.model.MapperContext;

import com.alibaba.nacos.plugin.datasource.model.MapperResult;

import java.util.List;

/**

* The DM (Dameng) implementation of ConfigInfoAggrMapper.

*

* @author hyx

**/

public class ConfigInfoAggrMapperByDM extends AbstractMapper implements ConfigInfoAggrMapper {

@Override

public MapperResult findConfigInfoAggrByPageFetchRows(MapperContext context) {

int startRow = context.getStartRow();

int pageSize = context.getPageSize();

String dataId = (String) context.getWhereParameter(FieldConstant.DATA_ID);

String groupId = (String) context.getWhereParameter(FieldConstant.GROUP_ID);

String tenantId = (String) context.getWhereParameter(FieldConstant.TENANT_ID);

String sql =

"SELECT data_id,group_id,tenant_id,datum_id,app_name,content FROM config_info_aggr WHERE data_id= ? AND "

+ "group_id= ? AND tenant_id= ? ORDER BY datum_id LIMIT " + startRow + "," + pageSize;

List<Object> paramList = CollectionUtils.list(dataId, groupId, tenantId);

return new MapperResult(sql, paramList);

}

@Override

public String getDataSource() {

return DataSourceConstant.DM;

}

}
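The mapper above appends `LIMIT startRow,pageSize` directly to the SQL, so the offset has to be computed before the query is built. A minimal sketch of that arithmetic follows, assuming the caller derives `startRow` from a 1-based page number as `(pageNo - 1) * pageSize`; `LimitClauseSketch` is a hypothetical helper, not a Nacos class.

```java
public class LimitClauseSketch {

    /** Converts a 1-based page number into a zero-based row offset. */
    public static int startRow(int pageNo, int pageSize) {
        return (pageNo - 1) * pageSize;
    }

    /** Builds the "LIMIT offset,count" suffix in the same shape the mapper SQL uses. */
    public static String limitClause(int pageNo, int pageSize) {
        return " LIMIT " + startRow(pageNo, pageSize) + "," + pageSize;
    }

    public static void main(String[] args) {
        // Page 3 with 10 rows per page skips the first 20 rows
        System.out.println(limitClause(3, 10));
    }
}
```

Both values are ints here, so concatenating them into the SQL string cannot inject anything; string inputs should still go through `?` placeholders.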

ConfigInfoBetaMapperByDM.class

/*

* Copyright 1999-2022 Alibaba Group Holding Ltd.

*

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in writing, software

* distributed under the License is distributed on an "AS IS" BASIS,

* WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

* See the License for the specific language governing permissions and

* limitations under the License.

*/

package com.alibaba.nacos.plugin.datasource.impl.dm;

import com.alibaba.nacos.plugin.datasource.constants.DataSourceConstant;

import com.alibaba.nacos.plugin.datasource.mapper.AbstractMapper;

import com.alibaba.nacos.plugin.datasource.mapper.ConfigInfoBetaMapper;

import com.alibaba.nacos.plugin.datasource.model.MapperContext;

import com.alibaba.nacos.plugin.datasource.model.MapperResult;

import java.util.ArrayList;

import java.util.List;

/**

* The DM (Dameng) implementation of ConfigInfoBetaMapper.

*

* @author hyx

**/

public class ConfigInfoBetaMapperByDM extends AbstractMapper implements ConfigInfoBetaMapper {

@Override

public MapperResult findAllConfigInfoBetaForDumpAllFetchRows(MapperContext context) {

int startRow = context.getStartRow();

int pageSize = context.getPageSize();

String sql = " SELECT t.id,data_id,group_id,tenant_id,app_name,content,md5,gmt_modified,beta_ips,encrypted_data_key "

+ " FROM ( SELECT id FROM config_info_beta ORDER BY id LIMIT " + startRow + "," + pageSize + " )"

+ " g, config_info_beta t WHERE g.id = t.id ";

List<Object> paramList = new ArrayList<>();

paramList.add(startRow);

paramList.add(pageSize);

return new MapperResult(sql, paramList);

}

@Override

public String getDataSource() {

return DataSourceConstant.DM;

}

}

ConfigInfoMapperByDM.class

/*

* Copyright 1999-2022 Alibaba Group Holding Ltd.

*

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in writing, software

* distributed under the License is distributed on an "AS IS" BASIS,

* WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

* See the License for the specific language governing permissions and

* limitations under the License.

*/

package com.alibaba.nacos.plugin.datasource.impl.dm;

import com.alibaba.nacos.common.utils.CollectionUtils;

import com.alibaba.nacos.common.utils.NamespaceUtil;

import com.alibaba.nacos.common.utils.StringUtils;

import com.alibaba.nacos.plugin.datasource.constants.DataSourceConstant;

import com.alibaba.nacos.plugin.datasource.constants.FieldConstant;

import com.alibaba.nacos.plugin.datasource.mapper.AbstractMapper;

import com.alibaba.nacos.plugin.datasource.mapper.ConfigInfoMapper;

import com.alibaba.nacos.plugin.datasource.model.MapperContext;

import com.alibaba.nacos.plugin.datasource.model.MapperResult;

import java.sql.Timestamp;

import java.util.ArrayList;

import java.util.Collections;

import java.util.List;

/**

* The DM (Dameng) implementation of ConfigInfoMapper.

*

* @author hyx

**/

public class ConfigInfoMapperByDM extends AbstractMapper implements ConfigInfoMapper {

private static final String DATA_ID = "dataId";

private static final String GROUP = "group";

private static final String APP_NAME = "appName";

private static final String CONTENT = "content";

private static final String TENANT = "tenant";

@Override

public MapperResult findConfigInfoByAppFetchRows(MapperContext context) {

final String appName = (String) context.getWhereParameter(FieldConstant.APP_NAME);

final String tenantId = (String) context.getWhereParameter(FieldConstant.TENANT_ID);

String sql = "SELECT id,data_id,group_id,tenant_id,app_name,content FROM config_info"

+ " WHERE tenant_id LIKE ? AND app_name= ?" + " LIMIT " + context.getStartRow() + ","

+ context.getPageSize();

return new MapperResult(sql, CollectionUtils.list(tenantId, appName));

}

@Override

public MapperResult getTenantIdList(MapperContext context) {

String sql = "SELECT tenant_id FROM config_info WHERE tenant_id != '" + NamespaceUtil.getNamespaceDefaultId()

+ "' GROUP BY tenant_id LIMIT " + context.getStartRow() + "," + context.getPageSize();

return new MapperResult(sql, Collections.emptyList());

}

@Override

public MapperResult getGroupIdList(MapperContext context) {

String sql = "SELECT group_id FROM config_info WHERE tenant_id ='" + NamespaceUtil.getNamespaceDefaultId()

+ "' GROUP BY group_id LIMIT " + context.getStartRow() + "," + context.getPageSize();

return new MapperResult(sql, Collections.emptyList());

}

@Override

public MapperResult findAllConfigKey(MapperContext context) {

String sql = " SELECT data_id,group_id,app_name FROM ( "

+ " SELECT id FROM config_info WHERE tenant_id LIKE ? ORDER BY id LIMIT " + context.getStartRow() + ","

+ context.getPageSize() + " )" + " g, config_info t WHERE g.id = t.id ";

return new MapperResult(sql, CollectionUtils.list(context.getWhereParameter(FieldConstant.TENANT_ID)));

}

@Override

public MapperResult findAllConfigInfoBaseFetchRows(MapperContext context) {

String appName = (String) context.getWhereParameter(FieldConstant.APP_NAME);

final String tenantId = (String) context.getWhereParameter(FieldConstant.TENANT_ID);

String sql = "SELECT t.id,data_id,group_id,content,md5"

+ " FROM ( SELECT id FROM config_info ORDER BY id LIMIT ?,? ) "

+ " g, config_info t WHERE g.id = t.id ";

return new MapperResult(sql, Collections.emptyList());

}

@Override

public MapperResult findAllConfigInfoFragment(MapperContext context) {

String sql = "SELECT id,data_id,group_id,tenant_id,app_name,content,md5,gmt_modified,type,encrypted_data_key "

+ "FROM config_info WHERE id > ? ORDER BY id ASC LIMIT " + context.getStartRow() + ","

+ context.getPageSize();

return new MapperResult(sql, CollectionUtils.list(context.getWhereParameter(FieldConstant.ID)));

}

@Override

public MapperResult findChangeConfigFetchRows(MapperContext context) {

final String tenant = (String) context.getWhereParameter(FieldConstant.TENANT_ID);

final String dataId = (String) context.getWhereParameter(FieldConstant.DATA_ID);

final String group = (String) context.getWhereParameter(FieldConstant.GROUP_ID);

final String appName = (String) context.getWhereParameter(FieldConstant.APP_NAME);

final String tenantTmp = StringUtils.isBlank(tenant) ? StringUtils.EMPTY : tenant;

final Timestamp startTime = (Timestamp) context.getWhereParameter(FieldConstant.START_TIME);

final Timestamp endTime = (Timestamp) context.getWhereParameter(FieldConstant.END_TIME);

List<Object> paramList = new ArrayList<>();

final String sqlFetchRows = "SELECT id,data_id,group_id,tenant_id,app_name,content,type,md5,gmt_modified FROM config_info WHERE ";

String where = " 1=1 ";

if (!StringUtils.isBlank(dataId)) {

where += " AND data_id LIKE ? ";

paramList.add(dataId);

}

if (!StringUtils.isBlank(group)) {

where += " AND group_id LIKE ? ";

paramList.add(group);

}

if (!StringUtils.isBlank(tenantTmp)) {

where += " AND tenant_id = ? ";

paramList.add(tenantTmp);

}

if (!StringUtils.isBlank(appName)) {

where += " AND app_name = ? ";

paramList.add(appName);

}

if (startTime != null) {

where += " AND gmt_modified >=? ";

paramList.add(startTime);

}

if (endTime != null) {

where += " AND gmt_modified <=? ";

paramList.add(endTime);

}

return new MapperResult(

sqlFetchRows + where + " AND id > " + context.getWhereParameter(FieldConstant.LAST_MAX_ID)

+ " ORDER BY id ASC" + " LIMIT " + 0 + "," + context.getPageSize(), paramList);

}

@Override

public MapperResult listGroupKeyMd5ByPageFetchRows(MapperContext context) {

String sql = "SELECT t.id,data_id,group_id,tenant_id,app_name,md5,type,gmt_modified,encrypted_data_key FROM "

+ "( SELECT id FROM config_info ORDER BY id LIMIT " + context.getStartRow() + ","

+ context.getPageSize() + " ) g, config_info t WHERE g.id = t.id";

return new MapperResult(sql, Collections.emptyList());

}

@Override

public MapperResult findConfigInfoBaseLikeFetchRows(MapperContext context) {

final String dataId = (String) context.getWhereParameter(FieldConstant.DATA_ID);

final String group = (String) context.getWhereParameter(FieldConstant.GROUP_ID);

final String content = (String) context.getWhereParameter(FieldConstant.CONTENT);

final String sqlFetchRows = "SELECT id,data_id,group_id,tenant_id,content FROM config_info WHERE ";

String where = " 1=1 AND tenant_id='" + NamespaceUtil.getNamespaceDefaultId() + "' ";

List<Object> paramList = new ArrayList<>();

if (!StringUtils.isBlank(dataId)) {

where += " AND data_id LIKE ? ";

paramList.add(dataId);

}

if (!StringUtils.isBlank(group)) {

where += " AND group_id LIKE ? ";

paramList.add(group);

}

if (!StringUtils.isBlank(content)) {

where += " AND content LIKE ? ";

paramList.add(content);

}

return new MapperResult(sqlFetchRows + where + " LIMIT " + context.getStartRow() + "," + context.getPageSize(),

paramList);

}

@Override

public MapperResult findConfigInfo4PageFetchRows(MapperContext context) {

final String tenant = (String) context.getWhereParameter(FieldConstant.TENANT_ID);

final String dataId = (String) context.getWhereParameter(FieldConstant.DATA_ID);

final String group = (String) context.getWhereParameter(FieldConstant.GROUP_ID);

final String appName = (String) context.getWhereParameter(FieldConstant.APP_NAME);

final String content = (String) context.getWhereParameter(FieldConstant.CONTENT);

List<Object> paramList = new ArrayList<>();

final String sql = "SELECT id,data_id,group_id,tenant_id,app_name,content,type,encrypted_data_key FROM config_info";

StringBuilder where = new StringBuilder(" WHERE ");

where.append(" tenant_id=? ");

paramList.add(tenant);

if (StringUtils.isNotBlank(dataId)) {

where.append(" AND data_id=? ");

paramList.add(dataId);

}

if (StringUtils.isNotBlank(group)) {

where.append(" AND group_id=? ");

paramList.add(group);

}

if (StringUtils.isNotBlank(appName)) {

where.append(" AND app_name=? ");

paramList.add(appName);

}

if (!StringUtils.isBlank(content)) {

where.append(" AND content LIKE ? ");

paramList.add(content);

}

return new MapperResult(sql + where + " LIMIT " + context.getStartRow() + "," + context.getPageSize(),

paramList);

}

@Override

public MapperResult findConfigInfoBaseByGroupFetchRows(MapperContext context) {

String sql = "SELECT id,data_id,group_id,content FROM config_info WHERE group_id=? AND tenant_id=?" + " LIMIT "

+ context.getStartRow() + "," + context.getPageSize();

return new MapperResult(sql, CollectionUtils.list(context.getWhereParameter(FieldConstant.GROUP_ID),

context.getWhereParameter(FieldConstant.TENANT_ID)));

}

@Override

public MapperResult findConfigInfoLike4PageFetchRows(MapperContext context) {

final String tenant = (String) context.getWhereParameter(FieldConstant.TENANT_ID);

final String dataId = (String) context.getWhereParameter(FieldConstant.DATA_ID);

final String group = (String) context.getWhereParameter(FieldConstant.GROUP_ID);

final String appName = (String) context.getWhereParameter(FieldConstant.APP_NAME);

final String content = (String) context.getWhereParameter(FieldConstant.CONTENT);

List<Object> paramList = new ArrayList<>();

final String sqlFetchRows = "SELECT id,data_id,group_id,tenant_id,app_name,content,encrypted_data_key FROM config_info";

StringBuilder where = new StringBuilder(" WHERE ");

where.append(" tenant_id LIKE ? ");

paramList.add(tenant);

if (!StringUtils.isBlank(dataId)) {

where.append(" AND data_id LIKE ? ");

paramList.add(dataId);

}

if (!StringUtils.isBlank(group)) {

where.append(" AND group_id LIKE ? ");

paramList.add(group);

}

if (!StringUtils.isBlank(appName)) {

where.append(" AND app_name = ? ");

paramList.add(appName);

}

if (!StringUtils.isBlank(content)) {

where.append(" AND content LIKE ? ");

paramList.add(content);

}

return new MapperResult(sqlFetchRows + where + " LIMIT " + context.getStartRow() + "," + context.getPageSize(),

paramList);

}

@Override

public MapperResult findAllConfigInfoFetchRows(MapperContext context) {

String sql = "SELECT t.id,data_id,group_id,tenant_id,app_name,content,md5 "

+ " FROM ( SELECT id FROM config_info WHERE tenant_id LIKE ? ORDER BY id LIMIT ?,? )"

+ " g, config_info t WHERE g.id = t.id ";

return new MapperResult(sql,

CollectionUtils.list(context.getWhereParameter(FieldConstant.TENANT_ID), context.getStartRow(),

context.getPageSize()));

}

@Override

public String getDataSource() {

return DataSourceConstant.DM;

}

}
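Several methods in this mapper build the WHERE clause dynamically, appending a condition and adding its parameter in two separate statements. If one of the two is forgotten, the number of `?` placeholders no longer matches the parameter list. A minimal sketch of one way to keep them in step is shown below; `WhereBuilderSketch` is a hypothetical helper for illustration and is not part of the Nacos plugin API.

```java
import java.util.ArrayList;
import java.util.List;

public class WhereBuilderSketch {

    private final StringBuilder where = new StringBuilder(" WHERE 1=1 ");
    private final List<Object> params = new ArrayList<>();

    /**
     * Appends "AND column op ?" and records the value in one step; blank values are skipped.
     * column and op must be trusted constants, since they are concatenated into the SQL.
     */
    public WhereBuilderSketch and(String column, String op, String value) {
        if (value != null && !value.trim().isEmpty()) {
            where.append(" AND ").append(column).append(' ').append(op).append(" ? ");
            params.add(value);
        }
        return this;
    }

    public String sql() {
        return where.toString();
    }

    public List<Object> params() {
        return params;
    }

    /** Counts '?' characters in a SQL string (naive: does not skip quoted literals). */
    public static int placeholders(String sql) {
        int count = 0;
        for (char c : sql.toCharArray()) {
            if (c == '?') {
                count++;
            }
        }
        return count;
    }

    public static void main(String[] args) {
        WhereBuilderSketch b = new WhereBuilderSketch()
                .and("data_id", "LIKE", "app%")
                .and("group_id", "LIKE", "DEFAULT_GROUP")
                .and("content", "LIKE", ""); // blank value: condition is skipped
        System.out.println(b.sql() + " -> " + b.params());
        System.out.println(placeholders(b.sql()) == b.params().size());
    }
}
```

A check like `placeholders(sql) == params.size()` is also a cheap assertion to add in a unit test for each mapper method.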

ConfigInfoTagMapperByDM.class

/*

* Copyright 1999-2022 Alibaba Group Holding Ltd.

*

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in writing, software

* distributed under the License is distributed on an "AS IS" BASIS,

* WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

* See the License for the specific language governing permissions and

* limitations under the License.

*/

package com.alibaba.nacos.plugin.datasource.impl.dm;

import com.alibaba.nacos.plugin.datasource.constants.DataSourceConstant;

import com.alibaba.nacos.plugin.datasource.mapper.AbstractMapper;

import com.alibaba.nacos.plugin.datasource.mapper.ConfigInfoTagMapper;

import com.alibaba.nacos.plugin.datasource.model.MapperContext;

import com.alibaba.nacos.plugin.datasource.model.MapperResult;

import java.util.Collections;

/**

* The DM (Dameng) implementation of ConfigInfoTagMapper.

*

* @author hyx

**/

public class ConfigInfoTagMapperByDM extends AbstractMapper implements ConfigInfoTagMapper {

@Override

public MapperResult findAllConfigInfoTagForDumpAllFetchRows(MapperContext context) {

String sql = " SELECT t.id,data_id,group_id,tenant_id,tag_id,app_name,content,md5,gmt_modified "

+ " FROM ( SELECT id FROM config_info_tag ORDER BY id LIMIT " + context.getStartRow() + ","

+ context.getPageSize() + " ) " + "g, config_info_tag t WHERE g.id = t.id ";

return new MapperResult(sql, Collections.emptyList());

}

@Override

public String getDataSource() {

return DataSourceConstant.DM;

}

}

ConfigTagsRelationMapperByDM.class

/*

* Copyright 1999-2022 Alibaba Group Holding Ltd.

*

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in writing, software

* distributed under the License is distributed on an "AS IS" BASIS,

* WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

* See the License for the specific language governing permissions and

* limitations under the License.

*/

package com.alibaba.nacos.plugin.datasource.impl.dm;

import com.alibaba.nacos.common.utils.StringUtils;

import com.alibaba.nacos.plugin.datasource.constants.DataSourceConstant;

import com.alibaba.nacos.plugin.datasource.constants.FieldConstant;

import com.alibaba.nacos.plugin.datasource.mapper.AbstractMapper;

import com.alibaba.nacos.plugin.datasource.mapper.ConfigTagsRelationMapper;

import com.alibaba.nacos.plugin.datasource.model.MapperContext;

import com.alibaba.nacos.plugin.datasource.model.MapperResult;

import java.util.ArrayList;

import java.util.List;

/**

* The DM (Dameng) implementation of ConfigTagsRelationMapper.

*

* @author hyx

**/

public class ConfigTagsRelationMapperByDM extends AbstractMapper implements ConfigTagsRelationMapper {

@Override

public MapperResult findConfigInfo4PageFetchRows(MapperContext context) {

final String tenant = (String) context.getWhereParameter(FieldConstant.TENANT_ID);

final String dataId = (String) context.getWhereParameter(FieldConstant.DATA_ID);

final String group = (String) context.getWhereParameter(FieldConstant.GROUP_ID);

final String appName = (String) context.getWhereParameter(FieldConstant.APP_NAME);

final String content = (String) context.getWhereParameter(FieldConstant.CONTENT);

final String[] tagArr = (String[]) context.getWhereParameter(FieldConstant.TAG_ARR);

List<Object> paramList = new ArrayList<>();

StringBuilder where = new StringBuilder(" WHERE ");

final String sql =

"SELECT a.id,a.data_id,a.group_id,a.tenant_id,a.app_name,a.content FROM config_info a LEFT JOIN "

+ "config_tags_relation b ON a.id=b.id";

where.append(" a.tenant_id=? ");

paramList.add(tenant);

if (StringUtils.isNotBlank(dataId)) {

where.append(" AND a.data_id=? ");

paramList.add(dataId);

}

if (StringUtils.isNotBlank(group)) {

where.append(" AND a.group_id=? ");

paramList.add(group);

}

if (StringUtils.isNotBlank(appName)) {

where.append(" AND a.app_name=? ");

paramList.add(appName);

}

if (!StringUtils.isBlank(content)) {

where.append(" AND a.content LIKE ? ");

paramList.add(content);

}

where.append(" AND b.tag_name IN (");

for (int i = 0; i < tagArr.length; i++) {

if (i != 0) {

where.append(", ");

}

where.append('?');

paramList.add(tagArr[i]);

}

where.append(") ");

return new MapperResult(sql + where + " LIMIT " + context.getStartRow() + "," + context.getPageSize(),

paramList);

}

@Override

public MapperResult findConfigInfoLike4PageFetchRows(MapperContext context) {

final String tenant = (String) context.getWhereParameter(FieldConstant.TENANT_ID);

final String dataId = (String) context.getWhereParameter(FieldConstant.DATA_ID);

final String group = (String) context.getWhereParameter(FieldConstant.GROUP_ID);

final String appName = (String) context.getWhereParameter(FieldConstant.APP_NAME);

final String content = (String) context.getWhereParameter(FieldConstant.CONTENT);

final String[] tagArr = (String[]) context.getWhereParameter(FieldConstant.TAG_ARR);

List<Object> paramList = new ArrayList<>();

StringBuilder where = new StringBuilder(" WHERE ");

final String sqlFetchRows = "SELECT a.id,a.data_id,a.group_id,a.tenant_id,a.app_name,a.content "

+ "FROM config_info a LEFT JOIN config_tags_relation b ON a.id=b.id ";

where.append(" a.tenant_id LIKE ? ");

paramList.add(tenant);

if (!StringUtils.isBlank(dataId)) {

where.append(" AND a.data_id LIKE ? ");

paramList.add(dataId);

}

if (!StringUtils.isBlank(group)) {

where.append(" AND a.group_id LIKE ? ");

paramList.add(group);

}

if (!StringUtils.isBlank(appName)) {

where.append(" AND a.app_name = ? ");

paramList.add(appName);

}

if (!StringUtils.isBlank(content)) {

where.append(" AND a.content LIKE ? ");

paramList.add(content);

}

where.append(" AND b.tag_name IN (");

for (int i = 0; i < tagArr.length; i++) {

if (i != 0) {

where.append(", ");

}

where.append('?');

paramList.add(tagArr[i]);

}

where.append(") ");

return new MapperResult(sqlFetchRows + where + " LIMIT " + context.getStartRow() + "," + context.getPageSize(),

paramList);

}

@Override

public String getDataSource() {

return DataSourceConstant.DM;

}

}

ConfigTagsRelationMapperByDM.class

/*

* Copyright 1999-2022 Alibaba Group Holding Ltd.

*

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in writing, software

* distributed under the License is distributed on an "AS IS" BASIS,

* WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

* See the License for the specific language governing permissions and

* limitations under the License.

*/

package com.alibaba.nacos.plugin.datasource.impl.dm;

import com.alibaba.nacos.common.utils.StringUtils;

import com.alibaba.nacos.plugin.datasource.constants.DataSourceConstant;

import com.alibaba.nacos.plugin.datasource.constants.FieldConstant;

import com.alibaba.nacos.plugin.datasource.mapper.AbstractMapper;

import com.alibaba.nacos.plugin.datasource.mapper.ConfigTagsRelationMapper;

import com.alibaba.nacos.plugin.datasource.model.MapperContext;

import com.alibaba.nacos.plugin.datasource.model.MapperResult;

import java.util.ArrayList;

import java.util.List;

/**

* The DM (Dameng) implementation of ConfigTagsRelationMapper.

*

* @author hyx

**/

public class ConfigTagsRelationMapperByDM extends AbstractMapper implements ConfigTagsRelationMapper {

@Override

public MapperResult findConfigInfo4PageFetchRows(MapperContext context) {

final String tenant = (String) context.getWhereParameter(FieldConstant.TENANT_ID);

final String dataId = (String) context.getWhereParameter(FieldConstant.DATA_ID);

final String group = (String) context.getWhereParameter(FieldConstant.GROUP_ID);

final String appName = (String) context.getWhereParameter(FieldConstant.APP_NAME);

final String content = (String) context.getWhereParameter(FieldConstant.CONTENT);

final String[] tagArr = (String[]) context.getWhereParameter(FieldConstant.TAG_ARR);

List<Object> paramList = new ArrayList<>();

StringBuilder where = new StringBuilder(" WHERE ");

final String sql =

"SELECT a.id,a.data_id,a.group_id,a.tenant_id,a.app_name,a.content FROM config_info a LEFT JOIN "

+ "config_tags_relation b ON a.id=b.id";

where.append(" a.tenant_id=? ");

paramList.add(tenant);

if (StringUtils.isNotBlank(dataId)) {

where.append(" AND a.data_id=? ");

paramList.add(dataId);

}

if (StringUtils.isNotBlank(group)) {

where.append(" AND a.group_id=? ");

paramList.add(group);

}

if (StringUtils.isNotBlank(appName)) {

where.append(" AND a.app_name=? ");

paramList.add(appName);

}

if (!StringUtils.isBlank(content)) {

where.append(" AND a.content LIKE ? ");

paramList.add(content);

}

where.append(" AND b.tag_name IN (");

for (int i = 0; i < tagArr.length; i++) {

if (i != 0) {

where.append(", ");

}

where.append('?');

paramList.add(tagArr[i]);

}

where.append(") ");

return new MapperResult(sql + where + " LIMIT " + context.getStartRow() + "," + context.getPageSize(),

paramList);

}

@Override

public MapperResult findConfigInfoLike4PageFetchRows(MapperContext context) {

final String tenant = (String) context.getWhereParameter(FieldConstant.TENANT_ID);

final String dataId = (String) context.getWhereParameter(FieldConstant.DATA_ID);

final String group = (String) context.getWhereParameter(FieldConstant.GROUP_ID);

final String appName = (String) context.getWhereParameter(FieldConstant.APP_NAME);

final String content = (String) context.getWhereParameter(FieldConstant.CONTENT);

final String[] tagArr = (String[]) context.getWhereParameter(FieldConstant.TAG_ARR);

List<Object> paramList = new ArrayList<>();

StringBuilder where = new StringBuilder(" WHERE ");

final String sqlFetchRows = "SELECT a.id,a.data_id,a.group_id,a.tenant_id,a.app_name,a.content "

+ "FROM config_info a LEFT JOIN config_tags_relation b ON a.id=b.id ";

where.append(" a.tenant_id LIKE ? ");

paramList.add(tenant);

if (!StringUtils.isBlank(dataId)) {

where.append(" AND a.data_id LIKE ? ");

paramList.add(dataId);

}

if (!StringUtils.isBlank(group)) {

where.append(" AND a.group_id LIKE ? ");

paramList.add(group);

}

if (!StringUtils.isBlank(appName)) {

where.append(" AND a.app_name = ? ");

paramList.add(appName);

}

if (!StringUtils.isBlank(content)) {

where.append(" AND a.content LIKE ? ");

paramList.add(content);

}

where.append(" AND b.tag_name IN (");

for (int i = 0; i < tagArr.length; i++) {

if (i != 0) {

where.append(", ");

}

where.append('?');

paramList.add(tagArr[i]);

}

where.append(") ");

return new MapperResult(sqlFetchRows + where + " LIMIT " + context.getStartRow() + "," + context.getPageSize(),

paramList);

}

@Override

public String getDataSource() {

return DataSourceConstant.DM;

}

}
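Both methods in ConfigTagsRelationMapperByDM build the tag filter the same way: one `?` per tag inside `IN (...)`, with the tag values appended to the parameter list in the same order. The sketch below isolates that step so it can be checked on its own. `InClauseSketch` and `appendIn` are hypothetical names used only for illustration; they are not part of Nacos.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical helper (not part of Nacos) that isolates the tag_name IN (...) logic. */
final class InClauseSketch {

    /** Appends " AND <column> IN (?, ?, ...) " and adds one bind value per placeholder. */
    static void appendIn(StringBuilder where, List<Object> params, String column, String[] values) {
        where.append(" AND ").append(column).append(" IN (");
        for (int i = 0; i < values.length; i++) {
            if (i != 0) {
                where.append(", ");
            }
            where.append('?');
            // Keep bind values in the same order as the placeholders.
            params.add(values[i]);
        }
        where.append(") ");
    }

    public static void main(String[] args) {
        StringBuilder where = new StringBuilder(" WHERE a.tenant_id=? ");
        List<Object> params = new ArrayList<>();
        params.add("public");
        appendIn(where, params, "b.tag_name", new String[] {"dev", "test"});
        System.out.println(where);
        System.out.println(params);
    }
}
```

Because the placeholders and the values are produced in the same loop, the parameter count always matches the number of `?` marks, which is what the JDBC driver requires.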

GroupCapacityMapperByDM.class

/*

* Copyright 1999-2022 Alibaba Group Holding Ltd.

*

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in writing, software

* distributed under the License is distributed on an "AS IS" BASIS,

* WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

* See the License for the specific language governing permissions and

* limitations under the License.

*/

package com.alibaba.nacos.plugin.datasource.impl.dm;

import com.alibaba.nacos.common.utils.CollectionUtils;

import com.alibaba.nacos.plugin.datasource.constants.DataSourceConstant;

import com.alibaba.nacos.plugin.datasource.constants.FieldConstant;

import com.alibaba.nacos.plugin.datasource.mapper.AbstractMapper;

import com.alibaba.nacos.plugin.datasource.mapper.GroupCapacityMapper;

import com.alibaba.nacos.plugin.datasource.model.MapperContext;

import com.alibaba.nacos.plugin.datasource.model.MapperResult;

/**

* The DM (Dameng) implementation of {@link GroupCapacityMapper}.

*

* @author lixiaoshuang

*/

public class GroupCapacityMapperByDM extends AbstractMapper implements GroupCapacityMapper {

@Override

public String getDataSource() {

return DataSourceConstant.DM;

}

@Override

public MapperResult selectGroupInfoBySize(MapperContext context) {

String sql = "SELECT id, group_id FROM group_capacity WHERE id > ? LIMIT ?";

return new MapperResult(sql, CollectionUtils.list(context.getWhereParameter(FieldConstant.ID), context.getPageSize()));

}

}

HistoryConfigInfoMapperByDM.class

/*

* Copyright 1999-2022 Alibaba Group Holding Ltd.

*

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in writing, software

* distributed under the License is distributed on an "AS IS" BASIS,

* WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

* See the License for the specific language governing permissions and

* limitations under the License.

*/

package com.alibaba.nacos.plugin.datasource.impl.dm;

import com.alibaba.nacos.common.utils.CollectionUtils;

import com.alibaba.nacos.plugin.datasource.constants.DataSourceConstant;

import com.alibaba.nacos.plugin.datasource.constants.FieldConstant;

import com.alibaba.nacos.plugin.datasource.mapper.AbstractMapper;

import com.alibaba.nacos.plugin.datasource.mapper.HistoryConfigInfoMapper;

import com.alibaba.nacos.plugin.datasource.model.MapperContext;

import com.alibaba.nacos.plugin.datasource.model.MapperResult;

/**

* The DM (Dameng) implementation of HistoryConfigInfoMapper.

*

* @author hyx

**/

public class HistoryConfigInfoMapperByDM extends AbstractMapper implements HistoryConfigInfoMapper {

@Override

public MapperResult removeConfigHistory(MapperContext context) {

String sql = "DELETE FROM his_config_info WHERE gmt_modified < ? LIMIT ?";

return new MapperResult(sql, CollectionUtils.list(context.getWhereParameter(FieldConstant.START_TIME),

context.getWhereParameter(FieldConstant.LIMIT_SIZE)));

}

@Override

public MapperResult pageFindConfigHistoryFetchRows(MapperContext context) {

String sql =

"SELECT nid,data_id,group_id,tenant_id,app_name,src_ip,src_user,op_type,gmt_create,gmt_modified FROM his_config_info "

+ "WHERE data_id = ? AND group_id = ? AND tenant_id = ? ORDER BY nid DESC LIMIT "

+ context.getStartRow() + "," + context.getPageSize();

return new MapperResult(sql, CollectionUtils.list(context.getWhereParameter(FieldConstant.DATA_ID),

context.getWhereParameter(FieldConstant.GROUP_ID), context.getWhereParameter(FieldConstant.TENANT_ID)));

}

@Override

public String getDataSource() {

return DataSourceConstant.DM;

}

}
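The paged queries in these mappers concatenate `context.getStartRow()` and `context.getPageSize()` into a MySQL-style `LIMIT startRow,pageSize` clause. A minimal sketch of how such a clause relates to a page number follows. It assumes the caller converts a 1-based page number to a start row as `(pageNo - 1) * pageSize`; `LimitClauseSketch` and its methods are hypothetical names, not Nacos APIs.

```java
/** Hypothetical helper (not part of Nacos) showing how a LIMIT clause maps to a page. */
final class LimitClauseSketch {

    /** Converts a 1-based page number into a zero-based start row (assumed convention). */
    static int startRow(int pageNo, int pageSize) {
        if (pageNo < 1 || pageSize < 1) {
            throw new IllegalArgumentException("pageNo and pageSize must be >= 1");
        }
        return (pageNo - 1) * pageSize;
    }

    /** Builds the same " LIMIT startRow,pageSize" suffix the mappers concatenate. */
    static String limit(int startRow, int pageSize) {
        return " LIMIT " + startRow + "," + pageSize;
    }

    public static void main(String[] args) {
        // Page 3 with 10 rows per page skips the first 20 rows.
        System.out.println(limit(startRow(3, 10), 10));
    }
}
```

Both values are integers produced by the server, not user-supplied strings, which is why concatenating them into the SQL text does not open an injection path in this sketch.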

TenantCapacityMapperByDM.class

/*

* Copyright 1999-2022 Alibaba Group Holding Ltd.

*

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in writing, software

* distributed under the License is distributed on an "AS IS" BASIS,

* WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

* See the License for the specific language governing permissions and

* limitations under the License.

*/

package com.alibaba.nacos.plugin.datasource.impl.dm;

import com.alibaba.nacos.common.utils.CollectionUtils;

import com.alibaba.nacos.plugin.datasource.constants.DataSourceConstant;

import com.alibaba.nacos.plugin.datasource.constants.FieldConstant;

import com.alibaba.nacos.plugin.datasource.mapper.AbstractMapper;

import com.alibaba.nacos.plugin.datasource.mapper.TenantCapacityMapper;

import com.alibaba.nacos.plugin.datasource.model.MapperContext;

import com.alibaba.nacos.plugin.datasource.model.MapperResult;

/**

* The DM (Dameng) implementation of TenantCapacityMapper.

*

* @author hyx

**/

public class TenantCapacityMapperByDM extends AbstractMapper implements TenantCapacityMapper {

@Override

public String getDataSource() {

return DataSourceConstant.DM;

}

@Override

public MapperResult getCapacityList4CorrectUsage(MapperContext context) {

String sql = "SELECT id, tenant_id FROM tenant_capacity WHERE id>? LIMIT ?";

return new MapperResult(sql, CollectionUtils.list(context.getWhereParameter(FieldConstant.ID),

context.getWhereParameter(FieldConstant.LIMIT_SIZE)));

}

}

TenantInfoMapperByDM.class

/*

* Copyright 1999-2022 Alibaba Group Holding Ltd.

*

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in writing, software

* distributed under the License is distributed on an "AS IS" BASIS,

* WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

* See the License for the specific language governing permissions and

* limitations under the License.

*/

package com.alibaba.nacos.plugin.datasource.impl.dm;

import com.alibaba.nacos.plugin.datasource.constants.DataSourceConstant;

import com.alibaba.nacos.plugin.datasource.mapper.AbstractMapper;

import com.alibaba.nacos.plugin.datasource.mapper.TenantInfoMapper;

/**

* The DM (Dameng) implementation of TenantInfoMapper.

*

* @author hyx

**/

public class TenantInfoMapperByDM extends AbstractMapper implements TenantInfoMapper {

@Override

public String getDataSource() {

return DataSourceConstant.DM;

}

}
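Every class in the dm package overrides `getDataSource()` to return `DataSourceConstant.DM`, and the persistence code later looks mappers up by data source type plus table name (`mapperManager.findMapper(...)`). The sketch below is a simplified, hypothetical registry that illustrates why that return value matters. It is not how Nacos's MapperManager is actually implemented, and the value "dm" is assumed from the `spring.sql.init.platform=dm` setting used later in this article.

```java
import java.util.Collections;
import java.util.HashMap;
import java.util.Map;
import java.util.Optional;

/** Hypothetical, simplified registry; the real Nacos MapperManager differs. */
final class MapperRegistrySketch {

    /** Minimal stand-in for the two lookup keys a mapper exposes. */
    interface Mapper {
        String getDataSource();

        String getTableName();
    }

    private final Map<String, Map<String, Mapper>> byDataSource = new HashMap<>();

    /** Stores the mapper under its data source first, then its table name. */
    void register(Mapper mapper) {
        byDataSource.computeIfAbsent(mapper.getDataSource(), k -> new HashMap<>())
                .put(mapper.getTableName(), mapper);
    }

    /** Returns the mapper for the given data source and table, if one was registered. */
    Optional<Mapper> find(String dataSource, String tableName) {
        Map<String, Mapper> tables =
                byDataSource.getOrDefault(dataSource, Collections.<String, Mapper>emptyMap());
        return Optional.ofNullable(tables.get(tableName));
    }
}
```

In this model, a mapper that still reported "mysql" from `getDataSource()` would never be found when the server is configured for "dm", so each copied class needs the override shown above.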

Modify the addConfigInfoAtomic method of the ExternalConfigInfoPersistServiceImpl class in the extrnal package of the nacos-config subproject

@Override

public long addConfigInfoAtomic(final long configId, final String srcIp, final String srcUser,

final ConfigInfo configInfo, Map<String, Object> configAdvanceInfo) {

final String appNameTmp = StringUtils.defaultEmptyIfBlank(configInfo.getAppName());

final String tenantTmp = StringUtils.defaultEmptyIfBlank(configInfo.getTenant());

final String desc = configAdvanceInfo == null ? null : (String) configAdvanceInfo.get("desc");

final String use = configAdvanceInfo == null ? null : (String) configAdvanceInfo.get("use");

final String effect = configAdvanceInfo == null ? null : (String) configAdvanceInfo.get("effect");

final String type = configAdvanceInfo == null ? null : (String) configAdvanceInfo.get("type");

final String schema = configAdvanceInfo == null ? null : (String) configAdvanceInfo.get("schema");

final String encryptedDataKey =

configInfo.getEncryptedDataKey() == null ? StringUtils.EMPTY : configInfo.getEncryptedDataKey();

final String md5Tmp = MD5Utils.md5Hex(configInfo.getContent(), Constants.ENCODE);

KeyHolder keyHolder = new GeneratedKeyHolder();

ConfigInfoMapper configInfoMapper = mapperManager

.findMapper(dataSourceService.getDataSourceType(), TableConstant.CONFIG_INFO);

final String sql = configInfoMapper.insert(Arrays

.asList("data_id", "group_id", "tenant_id", "app_name", "content", "md5", "src_ip", "src_user",

"gmt_create", "gmt_modified", "c_desc", "c_use", "effect", "type", "c_schema",

"encrypted_data_key"));

String[] returnGeneratedKeys = configInfoMapper.getPrimaryKeyGeneratedKeys();

try {

jt.update(new PreparedStatementCreator() {

@Override

public PreparedStatement createPreparedStatement(Connection connection) throws SQLException {

Timestamp now = new Timestamp(System.currentTimeMillis());

// Using this overload triggers a jmenv.tbsite.net exception

//PreparedStatement ps = connection.prepareStatement(sql, returnGeneratedKeys);

// Using this overload causes the "insert config_info fail" exception

//PreparedStatement ps = connection.prepareStatement(sql);

PreparedStatement ps = connection.prepareStatement(sql, Statement.RETURN_GENERATED_KEYS);

ps.setString(1, configInfo.getDataId());

ps.setString(2, configInfo.getGroup());

ps.setString(3, tenantTmp);

ps.setString(4, appNameTmp);

ps.setString(5, configInfo.getContent());

ps.setString(6, md5Tmp);

ps.setString(7, srcIp);

ps.setString(8, srcUser);

ps.setTimestamp(9, now);

ps.setTimestamp(10, now);

ps.setString(11, desc);

ps.setString(12, use);

ps.setString(13, effect);

ps.setString(14, type);

ps.setString(15, schema);

ps.setString(16, encryptedDataKey);

return ps;

}

}, keyHolder);

Number nu = keyHolder.getKey();

if (nu == null) {

throw new IllegalArgumentException("insert config_info fail");

}

return nu.longValue();

} catch (CannotGetJdbcConnectionException e) {

LogUtil.FATAL_LOG.error("[db-error] " + e, e);

throw e;

}

}

Modify the addConfigInfoAtomic method of the ExternalStoragePersistServiceImpl class in the extrnal package of the nacos-config subproject

@Override

public long addConfigInfoAtomic(final long configId, final String srcIp, final String srcUser,

final ConfigInfo configInfo, final Timestamp time, Map<String, Object> configAdvanceInfo) {

final String appNameTmp = StringUtils.defaultEmptyIfBlank(configInfo.getAppName());

final String tenantTmp = StringUtils.defaultEmptyIfBlank(configInfo.getTenant());

final String desc = configAdvanceInfo == null ? null : (String) configAdvanceInfo.get("desc");

final String use = configAdvanceInfo == null ? null : (String) configAdvanceInfo.get("use");

final String effect = configAdvanceInfo == null ? null : (String) configAdvanceInfo.get("effect");

final String type = configAdvanceInfo == null ? null : (String) configAdvanceInfo.get("type");

final String schema = configAdvanceInfo == null ? null : (String) configAdvanceInfo.get("schema");

final String encryptedDataKey =

configInfo.getEncryptedDataKey() == null ? StringUtils.EMPTY : configInfo.getEncryptedDataKey();

final String md5Tmp = MD5Utils.md5Hex(configInfo.getContent(), Constants.ENCODE);

KeyHolder keyHolder = new GeneratedKeyHolder();

ConfigInfoMapper configInfoMapper = mapperManager.findMapper(dataSourceService.getDataSourceType(),

TableConstant.CONFIG_INFO);

final String sql = configInfoMapper.insert(

Arrays.asList("data_id", "group_id", "tenant_id", "app_name", "content", "md5", "src_ip", "src_user",

"gmt_create", "gmt_modified", "c_desc", "c_use", "effect", "type", "c_schema",

"encrypted_data_key"));

String[] returnGeneratedKeys = configInfoMapper.getPrimaryKeyGeneratedKeys();

try {

jt.update(new PreparedStatementCreator() {

@Override

public PreparedStatement createPreparedStatement(Connection connection) throws SQLException {

// Using this overload triggers a jmenv.tbsite.net exception

//PreparedStatement ps = connection.prepareStatement(sql, returnGeneratedKeys);

// Using this overload causes the "insert config_info fail" exception

//PreparedStatement ps = connection.prepareStatement(sql);

PreparedStatement ps = connection.prepareStatement(sql, Statement.RETURN_GENERATED_KEYS);

ps.setString(1, configInfo.getDataId());

ps.setString(2, configInfo.getGroup());

ps.setString(3, tenantTmp);

ps.setString(4, appNameTmp);

ps.setString(5, configInfo.getContent());

ps.setString(6, md5Tmp);

ps.setString(7, srcIp);

ps.setString(8, srcUser);

ps.setTimestamp(9, time);

ps.setTimestamp(10, time);

ps.setString(11, desc);

ps.setString(12, use);

ps.setString(13, effect);

ps.setString(14, type);

ps.setString(15, schema);

ps.setString(16, encryptedDataKey);

return ps;

}

}, keyHolder);

Number nu = keyHolder.getKey();

if (nu == null) {

throw new IllegalArgumentException("insert config_info fail");

}

return nu.longValue();

} catch (CannotGetJdbcConnectionException e) {

LogUtil.FATAL_LOG.error("[db-error] " + e.toString(), e);

throw e;

}

}

III. Package Nacos as a tar.gz

After the build finishes, the tar.gz archive is in the target directory of the distribution module under the project root.

mvn -Prelease-nacos -Dmaven.test.skip=true -Dpmd.skip=true -Dcheckstyle.skip=true clean install -U
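Before moving on to the Docker build, it can help to confirm the archive was actually produced. The sketch below resolves the expected location and reports whether the file exists. It assumes the archive is named `nacos-server-<version>.tar.gz`, as in the Dockerfile's COPY line later in this article; `ArchiveLocationSketch` is a hypothetical helper, not part of Nacos.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;

/** Hypothetical helper that locates the packaged Nacos archive after the Maven build. */
final class ArchiveLocationSketch {

    /** Resolves <projectRoot>/distribution/target/nacos-server-<version>.tar.gz. */
    static Path archive(Path projectRoot, String version) {
        return projectRoot.resolve("distribution")
                .resolve("target")
                .resolve("nacos-server-" + version + ".tar.gz");
    }

    public static void main(String[] args) {
        // First argument: path to the cloned Nacos project (defaults to the current directory).
        Path root = Paths.get(args.length > 0 ? args[0] : ".");
        Path tarGz = archive(root, "2.3.1-SNAPSHOT");
        System.out.println(tarGz + (Files.exists(tarGz) ? " exists" : " is missing"));
    }
}
```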

IV. Clone nacos-docker and open it

GitHub repository: nacos-docker

V. Modify the code

Modify the Dockerfile under the build directory

FROM centos:7.9.2009

LABEL maintainer="pader <huangmnlove@163.com>"

# set environment

ENV MODE="cluster" \

PREFER_HOST_MODE="ip"\

BASE_DIR="/home/nacos" \

CLASSPATH=".:/home/nacos/conf:$CLASSPATH" \

CLUSTER_CONF="/home/nacos/conf/cluster.conf" \

FUNCTION_MODE="all" \

JAVA_HOME="/usr/lib/jvm/java-1.8.0-openjdk" \

NACOS_USER="nacos" \

JAVA="/usr/lib/jvm/java-1.8.0-openjdk/bin/java" \

JVM_XMS="1g" \

JVM_XMX="1g" \

JVM_XMN="512m" \

JVM_MS="128m" \

JVM_MMS="320m" \

NACOS_DEBUG="n" \

TOMCAT_ACCESSLOG_ENABLED="false" \

TIME_ZONE="Asia/Shanghai"

ARG NACOS_VERSION=2.3.1-SNAPSHOT

ARG HOT_FIX_FLAG=""

WORKDIR $BASE_DIR

COPY nacos-server-2.3.1-SNAPSHOT.tar.gz /home

RUN set -x \

&& yum update -y \

&& yum install -y java-1.8.0-openjdk java-1.8.0-openjdk-devel iputils nc vim libcurl \

&& yum clean all

RUN tar -xzvf /home/nacos-server-${NACOS_VERSION}.tar.gz -C /home \

&& rm -rf /home/nacos-server-${NACOS_VERSION}.tar.gz /home/nacos/bin/* /home/nacos/conf/*.properties /home/nacos/conf/*.example /home/nacos/conf/nacos-mysql.sql

RUN yum autoremove -y wget \

&& ln -snf /usr/share/zoneinfo/$TIME_ZONE /etc/localtime && echo $TIME_ZONE > /etc/timezone \

&& yum clean all

ADD bin/docker-startup.sh bin/docker-startup.sh

ADD conf/application.properties conf/application.properties

# set startup log dir

RUN mkdir -p logs \

&& touch logs/start.out \

&& ln -sf /dev/stdout start.out \

&& ln -sf /dev/stderr start.out

RUN chmod +x bin/docker-startup.sh

EXPOSE 8848

ENTRYPOINT ["bin/docker-startup.sh"]

Modify the application.properties file under the conf directory

server.servlet.contextPath=${SERVER_SERVLET_CONTEXTPATH:/nacos}

server.contextPath=/nacos

server.port=${NACOS_APPLICATION_PORT:8848}

server.tomcat.accesslog.max-days=30

server.tomcat.accesslog.pattern=%h %l %u %t "%r" %s %b %D %{User-Agent}i %{Request-Source}i

server.tomcat.accesslog.enabled=${TOMCAT_ACCESSLOG_ENABLED:false}

server.error.include-message=ALWAYS

server.tomcat.basedir=file:.

spring.sql.init.platform=${SPRING_DATASOURCE_PLATFORM:}

nacos.cmdb.dumpTaskInterval=3600

nacos.cmdb.eventTaskInterval=10

nacos.cmdb.labelTaskInterval=300

nacos.cmdb.loadDataAtStart=false

db.num=${DM_DATABASE_NUM:1}

db.url.0=jdbc:dm://${DM_SERVICE_HOST:123.60.223.204}:${DM_SERVICE_PORT:5237}?schema=${DM_SCHEMA:NACOS}&characterEncoding=UTF-8&useUnicode=true&serverTimezone=Asia/Shanghai

db.user.0=${DM_SERVICE_USER:IE}

db.password.0=${DM_SERVICE_PASSWORD:6IEASDCMHIENMAS..}

nacos.core.auth.system.type=${NACOS_AUTH_SYSTEM_TYPE:nacos}

nacos.core.auth.plugin.nacos.token.expire.seconds=${NACOS_AUTH_TOKEN_EXPIRE_SECONDS:18000}

nacos.core.auth.plugin.nacos.token.secret.key=${NACOS_AUTH_TOKEN:SecretKey3hiyggynui43ni244yu2nu53i565oij6io45yg35y2vjkahsbiauw8ko2u3h3}

### Turn on/off caching of auth information. By turning on this switch, the update of auth information would have a 15 seconds delay.

nacos.core.auth.enabled=${NACOS_AUTH_ENABLE:true}

nacos.core.auth.caching.enabled=${NACOS_AUTH_CACHE_ENABLE:false}

nacos.core.auth.enable.userAgentAuthWhite=${NACOS_AUTH_USER_AGENT_AUTH_WHITE_ENABLE:false}

nacos.core.auth.server.identity.key=${NACOS_AUTH_IDENTITY_KEY:example}

nacos.core.auth.server.identity.value=${NACOS_AUTH_IDENTITY_VALUE:example}

nacos.security.ignore.urls=${NACOS_SECURITY_IGNORE_URLS:/,/error,/**/*.css,/**/*.js,/**/*.html,/**/*.map,/**/*.svg,/**/*.png,/**/*.ico,/console-fe/public/**,/v1/auth/**,/v1/console/health/**,/actuator/**,/v1/console/server/**}

# metrics for elastic search

management.metrics.export.elastic.enabled=false

management.metrics.export.influx.enabled=false

nacos.naming.distro.taskDispatchThreadCount=10

nacos.naming.distro.taskDispatchPeriod=200

nacos.naming.distro.batchSyncKeyCount=1000

nacos.naming.distro.initDataRatio=0.9

nacos.naming.distro.syncRetryDelay=5000

nacos.naming.data.warmup=true

nacos.console.ui.enabled=true

nacos.core.param.check.enabled=true
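Most values in this file use the `${NAME:default}` form: the container's environment variable wins if it is set, otherwise the text after the colon is used. The sketch below imitates that rule with a small regex so the effect of `-e` flags on `docker run` is easier to reason about. It is only an illustration and not Spring's actual placeholder resolver; `PlaceholderSketch` is a hypothetical name, and unlike Spring it leaves a placeholder untouched when there is neither a variable nor a default.

```java
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Hypothetical illustration of ${NAME:default} resolution; not Spring's implementation. */
final class PlaceholderSketch {

    // Group 1 is the variable name, group 2 the optional default after the first colon.
    private static final Pattern PLACEHOLDER = Pattern.compile("\\$\\{([^:}]+)(?::([^}]*))?\\}");

    static String resolve(String value, Map<String, String> env) {
        Matcher m = PLACEHOLDER.matcher(value);
        StringBuffer out = new StringBuffer();
        while (m.find()) {
            String fromEnv = env.get(m.group(1));
            String replacement;
            if (fromEnv != null) {
                replacement = fromEnv;      // environment variable takes precedence
            } else if (m.group(2) != null) {
                replacement = m.group(2);   // fall back to the inline default
            } else {
                replacement = m.group(0);   // nothing to substitute, keep as written
            }
            m.appendReplacement(out, Matcher.quoteReplacement(replacement));
        }
        m.appendTail(out);
        return out.toString();
    }

    public static void main(String[] args) {
        // Resolve against the real process environment.
        System.out.println(resolve("server.port=${NACOS_APPLICATION_PORT:8848}", System.getenv()));
    }
}
```

Under this rule, the defaults baked into the image (database host, user, password) apply whenever the matching `DM_*` variables are not passed to the container, so it is worth overriding them explicitly at run time.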

VI. Build the image

docker build --platform linux/amd64 -t nacos-dm:1.0.0 .

docker save -o nacos-dm.tar nacos-dm:1.0.0

VII. Load the image and run it

# Load the image

docker load -i nacos-dm.tar

# Run the image

docker run --name nacos-dm -d -p 8848:8848 --privileged=true --restart=always -e JVM_XMS=256m -e JVM_XMX=256m -e MODE=standalone -e PREFER_HOST_MODE=hostname -v /tools/configs/nacos-dm/logs:/home/nacos/logs -v /tools/configs/nacos-dm/init.d/custom.properties:/home/nacos/init.d/custom.properties nacos-dm:1.0.0

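VIII. Pitfalls

If the addConfigInfoAtomic method is left unmodified, it throws exceptions

// Using this overload triggers a jmenv.tbsite.net exception

//PreparedStatement ps = connection.prepareStatement(sql, returnGeneratedKeys);

// Using this overload causes the "insert config_info fail" exception

//PreparedStatement ps = connection.prepareStatement(sql);

// Correct approach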

PreparedStatement ps = connection.prepareStatement(sql, Statement.RETURN_GENERATED_KEYS);
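The three variants differ only in how the statement asks the driver for the generated primary key. In addConfigInfoAtomic, a missing key leaves `keyHolder.getKey()` null, which most likely explains the "insert config_info fail" error seen with the plain `prepareStatement(sql)` call. The sketch below shows the working variant in plain JDBC, outside Spring. `GeneratedKeySketch` and its methods are hypothetical names; running `insertAndReturnKey` needs a real connection and a table with an auto-generated key.

```java
import java.sql.Connection;
import java.sql.PreparedStatement;
import java.sql.ResultSet;
import java.sql.SQLException;
import java.sql.Statement;

/** Hypothetical plain-JDBC sketch of the "return generated keys" variant. */
final class GeneratedKeySketch {

    /** Prepares the insert so the driver is asked to hand back the generated key. */
    static PreparedStatement prepareInsert(Connection connection, String sql) throws SQLException {
        return connection.prepareStatement(sql, Statement.RETURN_GENERATED_KEYS);
    }

    /** Runs the insert and returns the first generated key, failing loudly if none comes back. */
    static long insertAndReturnKey(Connection connection, String sql, Object... params)
            throws SQLException {
        try (PreparedStatement ps = prepareInsert(connection, sql)) {
            for (int i = 0; i < params.length; i++) {
                ps.setObject(i + 1, params[i]); // JDBC parameters are 1-based
            }
            ps.executeUpdate();
            try (ResultSet keys = ps.getGeneratedKeys()) {
                if (!keys.next()) {
                    throw new IllegalStateException("driver returned no generated key");
                }
                return keys.getLong(1);
            }
        }
    }
}
```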

After packaging, the application.properties file inside the tar.gz must be reconfigured with the following settings

# Database configuration

spring.sql.init.platform=dm

db.num=1

db.jdbcDriverName=dm.jdbc.driver.DmDriver

db.jdbcScheme=NACOS

db.url.0=jdbc:dm://<your-database-ip>:<port>?schema=NACOS&characterEncoding=UTF-8&useUnicode=true&useSSL=false&tinyInt1isBit=false&allowPublicKeyRetrieval=true&serverTimezone=Asia/Shanghai&clobAsString=1

db.user=<your database username>

db.password=<your database password>

# Enable authentication

nacos.core.auth.enabled=true

# Set the identity key and value

nacos.core.auth.server.identity.key=example

nacos.core.auth.server.identity.value=example

# Set the SecretKey

nacos.core.auth.plugin.nacos.token.secret.key=SecretKey012345678901234567890123456789012345678901234567890123456789
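The SecretKey shown above is a placeholder and should be replaced before the server is exposed to anyone else. A minimal sketch for generating a random key follows. It assumes the key should be a Base64 string that decodes to at least 32 bytes, which matches my understanding of the Nacos auth plugin's recommendation but is worth checking against the documentation for your version. `TokenSecretSketch` is a hypothetical name.

```java
import java.security.SecureRandom;
import java.util.Base64;

/** Hypothetical generator for a random, Base64-encoded token secret. */
final class TokenSecretSketch {

    /** Returns rawBytes of cryptographically strong random data, Base64-encoded. */
    static String newSecretKey(int rawBytes) {
        byte[] raw = new byte[rawBytes];
        new SecureRandom().nextBytes(raw);
        return Base64.getEncoder().encodeToString(raw);
    }

    public static void main(String[] args) {
        // Assumption: 32 raw bytes is the minimum length the auth plugin accepts.
        System.out.println("nacos.core.auth.plugin.nacos.token.secret.key=" + newSecretKey(32));
    }
}
```

The same advice applies to the identity key and value: "example" is fine for a first start, but every node in a real deployment should share a value that is not published in a tutorial.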
