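Master-replica configuration

An architecture with one master and one or more replicas, providing read/write splitting and data synchronization.

Setting up master-replica replication:

- A Redis instance runs as a master by default;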

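- On each replica, change the relevant settings in redis.conf:

  - replicaof <masterip> <masterport>: the address of the master;

  - masterauth <master-password>: required only if the master has a password set;

- After startup, connect with redis-cli and run info replication to view the replication status;

- For local testing, a Docker-based setup is recommended. The docker-compose.yml file used for testing follows.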

# docker-compose file format version
version: '2'
# Services
services:
  redis-master:
    # restart: always
    image: redis
    container_name: redis-master
    hostname: redis-master
    ports:
      - "6379:6379"
    command: redis-server /usr/local/etc/redis/redis.conf
    volumes:
      - /home/lyq/soft/redis/redis-master/data:/data
      - /home/lyq/soft/redis/redis-master/redis.conf:/usr/local/etc/redis/redis.conf
  redis-slave1:
    # restart: always
    image: redis
    container_name: redis-slave-16379
    ports:
      - "16379:6379"
    command: redis-server /usr/local/etc/redis/redis.conf
    volumes:
      - /home/lyq/soft/redis/redis-slave1/data:/data
      - /home/lyq/soft/redis/redis-slave1/redis.conf:/usr/local/etc/redis/redis.conf
    links:
      - redis-master
  redis-slave2:
    # restart: always
    image: redis
    container_name: redis-slave-26379
    ports:
      - "26379:6379"
    command: redis-server /usr/local/etc/redis/redis.conf
    volumes:
      - /home/lyq/soft/redis/redis-slave2/data:/data
      - /home/lyq/soft/redis/redis-slave2/redis.conf:/usr/local/etc/redis/redis.conf
    links:
      - redis-master

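- In the replica configuration files used above, replicaof redis-master 6379 specifies the master address. To allow remote access, change bind 127.0.0.1 in redis.conf to bind 0.0.0.0 (or comment out the bind line and set protected-mode to no);

- Once replication is set up, writes are accepted only on the master, and the replicas automatically synchronize the master's data.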
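As a rough illustration of reading the info replication output mentioned above, the sketch below parses its key:value lines into a dictionary. The function name parse_info_replication and the sample text (addresses, offsets) are made up for this example; only the field names follow what Redis normally reports.

```python
def parse_info_replication(raw: str) -> dict:
    """Parse the text returned by INFO replication into a flat dict of strings."""
    info = {}
    for line in raw.splitlines():
        line = line.strip()
        # Skip blank lines and section headers such as "# Replication".
        if not line or line.startswith("#"):
            continue
        # Split on the first colon only; values may contain further separators.
        key, _, value = line.partition(":")
        info[key] = value
    return info


# Hypothetical sample in the shape a master with two replicas reports.
SAMPLE = """# Replication
role:master
connected_slaves:2
slave0:ip=172.18.0.3,port=6379,state=online,offset=84,lag=0
slave1:ip=172.18.0.4,port=6379,state=online,offset=84,lag=1
"""

info = parse_info_replication(SAMPLE)
print(info["role"], info["connected_slaves"])
```

On a replica, the same output carries role:slave together with fields such as master_host and master_link_status, which is a quick way to confirm that the replicaof setting took effect.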

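How a replica synchronizes data from the master:

- After a replica starts, it sends a SYNC command to the master (PSYNC in Redis 2.8 and later);

- On receiving the command, the master saves a snapshot in the background (RDB persistence) and buffers the write commands that arrive while the snapshot is being taken;

- The master sends the snapshot file and the buffered commands to the replica, which loads the snapshot and then replays the buffered commands to initialize its data;

- From then on, the master propagates every write command to the replicas, keeping their data consistent with its own. Replication is asynchronous, so a replica may briefly lag behind.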
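The synchronization steps can be sketched as a toy in-memory model. This is not how Redis is implemented: the Master and Replica classes, their methods, and the writes_during_snapshot parameter are all hypothetical names chosen for illustration, and only SET-style writes are modelled.

```python
class Replica:
    def __init__(self):
        self.data = {}

    def apply(self, command):
        """Apply one propagated write command."""
        op, key, value = command
        if op == "SET":
            self.data[key] = value

    def load(self, snapshot, buffered):
        """Initial sync: load the snapshot, then replay the buffered commands."""
        self.data = dict(snapshot)
        for command in buffered:
            self.apply(command)


class Master:
    def __init__(self):
        self.data = {}
        self.replicas = []

    def write(self, key, value):
        """Apply a write locally and propagate it to attached replicas."""
        self.data[key] = value
        for replica in self.replicas:
            replica.apply(("SET", key, value))

    def full_sync(self, replica, writes_during_snapshot=()):
        snapshot = dict(self.data)            # snapshot taken in the background
        buffered = []
        for key, value in writes_during_snapshot:
            self.write(key, value)            # the master keeps serving writes
            buffered.append(("SET", key, value))
        replica.load(snapshot, buffered)      # ship snapshot plus buffered commands
        self.replicas.append(replica)         # later writes are propagated live


master = Master()
master.write("a", "1")
replica = Replica()
master.full_sync(replica, writes_during_snapshot=[("b", "2")])
master.write("c", "3")
print(replica.data == master.data)
```

The point of the buffer is visible in the model: the write to b happens after the snapshot was taken, so without replaying the buffered commands the replica would miss it.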

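The drawback of the master-replica scheme is that it cannot recover automatically when the master goes down; manual intervention is required.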

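Sentinel mode

Because the master-replica scheme cannot fail over automatically when the master goes down, the Sentinel scheme was developed: several sentinel processes run alongside the master-replica group and monitor its state by periodically communicating with the Redis servers. When the sentinels detect that the master is down, they automatically promote a replica to master, then use publish/subscribe to notify the other replicas and rewrite their configuration so that they follow the new master.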

version: '2'
# Services
services:
  redis-master:
    # restart: always
    image: redis
    container_name: redis-master
    hostname: redis-master
    ports:
      - "6379:6379"
    command: redis-server /usr/local/etc/redis/redis.conf
    # network_mode: "bridge"
    volumes:
      - /home/lyq/soft/redis/redis-master/data:/data
      - /home/lyq/soft/redis/redis-master/redis.conf:/usr/local/etc/redis/redis.conf
  redis-slave1:
    # restart: always
    image: redis
    container_name: redis-slave-6380
    hostname: redis-slave1
    ports:
      - "6380:6379"
    command: redis-server /usr/local/etc/redis/redis.conf
    volumes:
      - /home/lyq/soft/redis/redis-slave1/data:/data
      - /home/lyq/soft/redis/redis-slave1/redis.conf:/usr/local/etc/redis/redis.conf
    # network_mode: "bridge"
    links:
      - redis-master
  redis-slave2:
    # restart: always
    image: redis
    container_name: redis-slave-6381
    hostname: redis-slave2
    ports:
      - "6381:6379"
    command: redis-server /usr/local/etc/redis/redis.conf
    volumes:
      - /home/lyq/soft/redis/redis-slave2/data:/data
      - /home/lyq/soft/redis/redis-slave2/redis.conf:/usr/local/etc/redis/redis.conf
    # network_mode: "bridge"
    links:
      - redis-master
  sentinel1:
    image: redis
    container_name: sentinel-26379
    ports:
      - "26379:26379"
    command: redis-sentinel /usr/local/etc/redis/sentinel.conf
    volumes:
      - ./sentinels/sentinel-26379.conf:/usr/local/etc/redis/sentinel.conf
    links:
      - redis-master
  sentinel2:
    image: redis
    container_name: sentinel-26380
    ports:
      - "26380:26379"
    command: redis-sentinel /usr/local/etc/redis/sentinel.conf
    volumes:
      - ./sentinels/sentinel-26380.conf:/usr/local/etc/redis/sentinel.conf
    links:
      - redis-master
  sentinel3:
    image: redis
    container_name: sentinel-26381
    ports:
      - "26381:26379"
    command: redis-sentinel /usr/local/etc/redis/sentinel.conf
    volumes:
      - ./sentinels/sentinel-26381.conf:/usr/local/etc/redis/sentinel.conf
    links:
      - redis-master

# sentinel.conf settings
protected-mode no
port 26379
pidfile "/var/run/redis-sentinel.pid"
dir "/tmp"
sentinel deny-scripts-reconfig yes
sentinel monitor redis-sentinel-cluster redis-master 6379 2

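With the setup above, the redis-master container is the initial master. When it is stopped, Sentinel automatically selects one of the replicas as the new master and service continues.

How Sentinel performs failover:

- The master goes down and sentinel 1 detects it first. No failover starts yet: only sentinel 1 considers the master unavailable, which is called subjective down (SDOWN);

- When other sentinels also find the master unavailable and their number reaches the configured quorum (the 2 at the end of the sentinel monitor line), the master is considered objectively down (ODOWN). The sentinels then vote, and the sentinel that wins the vote carries out the failover;

- After the switch succeeds, publish/subscribe is used so that each sentinel points the replicas it monitors at the new master. For clients, the whole process is transparent.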
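The difference between subjective and objective down comes down to counting reports against the quorum. The sketch below shows only that counting step; master_state and the reports mapping are hypothetical names, and real Sentinel additionally needs a majority of sentinels to elect the leader that runs the failover.

```python
def master_state(reports: dict, quorum: int) -> str:
    """Classify the master from per-sentinel reports.

    reports maps a sentinel name to True when that sentinel currently
    sees the master as down.
    """
    down_votes = sum(1 for is_down in reports.values() if is_down)
    if down_votes >= quorum:
        return "ODOWN"   # enough sentinels agree: objectively down
    if down_votes > 0:
        return "SDOWN"   # only some sentinels think so: subjectively down
    return "UP"


# Three sentinels with quorum 2, as in the sentinel monitor line.
print(master_state({"s1": True, "s2": False, "s3": False}, quorum=2))
print(master_state({"s1": True, "s2": True, "s3": False}, quorum=2))
```

With three sentinels and a quorum of 2, a single sentinel losing its connection to the master is not enough to trigger a failover, which is the reason for running several sentinels.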

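Redis Cluster

Sentinel mode handles master failure, but every node still stores the full data set. Once the data volume grows too large, memory becomes the bottleneck; and if QPS is too high, the single master in a master-replica architecture may not be able to handle it. To summarize the shortcomings of the master-replica model: data is too concentrated, and heavy network traffic cannot be spread across nodes. Redis Cluster, the officially recommended clustering solution, provides data sharding and, combined with replication, high availability as well.

Data sharding methods:

- Take the hash of the key modulo the number of server nodes to pick the node. This is simple to implement, but adding or removing nodes forces data to move;

- Consistent hashing;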
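To make the cost of modulo sharding concrete, the sketch below counts how many keys land on a different node when the node count changes. It uses zlib.crc32 as a stand-in hash, and node_for, moved_fraction and the user:N key names are invented for this example.

```python
import zlib


def node_for(key: str, node_count: int) -> int:
    """Pick a node index as hash(key) modulo the number of nodes."""
    return zlib.crc32(key.encode()) % node_count


def moved_fraction(keys, old_count: int, new_count: int) -> float:
    """Fraction of keys whose node changes when the cluster is resized."""
    moved = sum(
        1 for key in keys
        if node_for(key, old_count) != node_for(key, new_count)
    )
    return moved / len(keys)


keys = [f"user:{i}" for i in range(10_000)]
fraction = moved_fraction(keys, 3, 4)
print(f"{fraction:.0%} of keys change node when growing from 3 to 4 nodes")
```

For a uniform hash, a key keeps its node only when the hash gives the same remainder for both 3 and 4, which happens for about a quarter of the keys, so most of the data has to move after adding a single node.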

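Of the two methods above, consistent hashing greatly reduces the data movement caused by changes in node count but cannot eliminate it. Redis Cluster instead partitions data with virtual hash slots, so that resizing the cluster moves only the slots being reassigned rather than rehashing every key. The cluster has a fixed set of 16384 slots, each assigned to a server node; computing CRC16(key) % 16384 gives the slot for a key, and reads and writes are routed to the node that owns that slot. When a node is added or removed, the affected slots and their data are migrated. This design makes scaling out or in straightforward.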
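The slot calculation CRC16(key) % 16384 can be reproduced in a few lines. This is a minimal sketch assuming the CRC16 variant Redis Cluster uses (XMODEM: polynomial 0x1021, initial value 0); the function names are illustrative, and the sketch hashes the whole key, whereas Redis hashes only the part inside {...} when a key contains a hash tag.

```python
def crc16_xmodem(data: bytes) -> int:
    """Bitwise CRC16 with polynomial 0x1021 and initial value 0."""
    crc = 0
    for byte in data:
        crc ^= byte << 8
        for _ in range(8):
            if crc & 0x8000:
                crc = ((crc << 1) ^ 0x1021) & 0xFFFF
            else:
                crc = (crc << 1) & 0xFFFF
    return crc


def key_slot(key: str) -> int:
    """Map a key to one of the 16384 hash slots (hash tags not handled)."""
    return crc16_xmodem(key.encode()) % 16384


for key in ("foo", "hello", "user:1000"):
    print(key, key_slot(key))
```

Against a running cluster, the result can be cross-checked with the CLUSTER KEYSLOT command, which returns the slot the server computes for a given key.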

# docker-compose file format version
version: '2'
# Services
services:
  redis-node1:
    # restart: always
    image: redis
    container_name: redis-node-6379
    ports:
      - "6379:6379"
    command: redis-server /usr/local/etc/redis/redis.conf
    # network_mode: "bridge"
    volumes:
      - /home/lyq/soft/redis-cluster/redis-6379/data:/data
      - /home/lyq/soft/redis-cluster/redis-6379/redis.conf:/usr/local/etc/redis/redis.conf
  redis-node2:
    # restart: always
    image: redis
    container_name: redis-node-6380
    ports:
      - "6380:6379"
    command: redis-server /usr/local/etc/redis/redis.conf
    volumes:
      - /home/lyq/soft/redis-cluster/redis-6380/data:/data
      - /home/lyq/soft/redis-cluster/redis-6380/redis.conf:/usr/local/etc/redis/redis.conf
  redis-node3:
    # restart: always
    image: redis
    container_name: redis-node-6381
    hostname: redis-slave2
    ports:
      - "6381:6379"
    command: redis-server /usr/local/etc/redis/redis.conf
    volumes:
      - /home/lyq/soft/redis-cluster/redis-6381/data:/data
      - /home/lyq/soft/redis-cluster/redis-6381/redis.conf:/usr/local/etc/redis/redis.conf
  redis-node4:
    # restart: always
    image: redis
    container_name: redis-node-6382
    ports:
      - "6382:6379"
    command: redis-server /usr/local/etc/redis/redis.conf
    volumes:
      - /home/lyq/soft/redis-cluster/redis-6382/data:/data
      - /home/lyq/soft/redis-cluster/redis-6382/redis.conf:/usr/local/etc/redis/redis.conf
  redis-node5:
    # restart: always
    image: redis
    container_name: redis-node-6383
    ports:
      - "6383:6379"
    command: redis-server /usr/local/etc/redis/redis.conf
    volumes:
      - /home/lyq/soft/redis-cluster/redis-6383/data:/data
      - /home/lyq/soft/redis-cluster/redis-6383/redis.conf:/usr/local/etc/redis/redis.conf
  redis-node6:
    # restart: always
    image: redis
    container_name: redis-node-6384
    ports:
      - "6384:6379"
    command: redis-server /usr/local/etc/redis/redis.conf
    volumes:
      - /home/lyq/soft/redis-cluster/redis-6384/data:/data
      - /home/lyq/soft/redis-cluster/redis-6384/redis.conf:/usr/local/etc/redis/redis.conf

# redis.conf
port 6379
bind 0.0.0.0
dir ./
cluster-enabled yes
cluster-config-file nodes-8001.conf
cluster-node-timeout 5000
appendonly yes
pidfile /var/run/redis_8001.pid

Redis Cluster至少需要六个节点,三主三从。启动所有redis服务后执行指令:

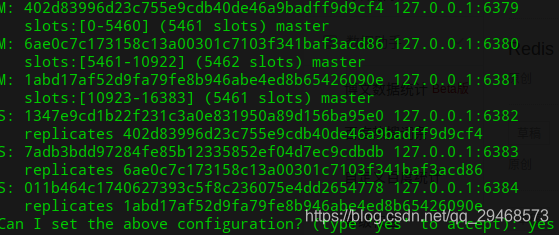

redis-cli --cluster create 127.0.0.1:6379 127.0.0.1:6380 127.0.0.1:6381 127.0.0.1:6382 127.0.0.1:6383 127.0.0.1:6384 --cluster-replicas 1,指令过程中需要输入一次yes,执行完毕可以看到如下输出(redis-cli版本必须为5.x,否则一直提示unrecognized option 或 --cluster参数不正确):

M 表示主节点,S 表示从节点,可以看到每个节点分配的哈希槽范围以及从节点隶属主节点的信息。
