1. The Maven configuration is as follows:

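For Hadoop, declaring only hadoop-client also works: the Hadoop artifacts listed in this section (hadoop-common, hadoop-hdfs, hadoop-mapreduce-client-core) are then downloaded as its transitive dependencies.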
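As a sketch of that alternative, the three Hadoop entries could be replaced by a single hadoop-client declaration. The version 2.6.4 is an assumption chosen to match the artifacts used in this post; junit and poi are not Hadoop artifacts and would still need their own entries.

```xml
<!-- Assumed alternative: one hadoop-client entry instead of the three Hadoop artifacts -->
<dependency>
    <groupId>org.apache.hadoop</groupId>
    <artifactId>hadoop-client</artifactId>
    <!-- Assumption: same version as the individual artifacts in this post -->
    <version>2.6.4</version>
</dependency>
```

The configuration actually used in this post lists the artifacts individually: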

<dependency>
    <groupId>junit</groupId>
    <artifactId>junit</artifactId>
    <version>4.12</version>
    <scope>test</scope>
</dependency>

<!-- https://mvnrepository.com/artifact/org.apache.hadoop/hadoop-common -->
<dependency>
    <groupId>org.apache.hadoop</groupId>
    <artifactId>hadoop-common</artifactId>
    <version>2.6.4</version>
</dependency>

<!-- https://mvnrepository.com/artifact/org.apache.hadoop/hadoop-hdfs -->
<dependency>
    <groupId>org.apache.hadoop</groupId>
    <artifactId>hadoop-hdfs</artifactId>
    <version>2.6.4</version>
</dependency>

<!-- https://mvnrepository.com/artifact/org.apache.hadoop/hadoop-mapreduce-client-core -->
<dependency>
    <groupId>org.apache.hadoop</groupId>
    <artifactId>hadoop-mapreduce-client-core</artifactId>
    <version>2.6.4</version>
</dependency>

<!-- https://mvnrepository.com/artifact/org.apache.poi/poi -->
<dependency>
    <groupId>org.apache.poi</groupId>
    <artifactId>poi</artifactId>
    <version>4.0.1</version>
</dependency>

2. Create a main class and test the various methods

Connect to the cluster (create a directory to check whether the connection succeeds):

package dfsHadoop;

import java.io.IOException;
import java.net.URI;
import java.net.URISyntaxException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;

public class MyHadoop {
    public static void main(String[] args) throws URISyntaxException, IOException, InterruptedException {
        // Configuration
        Configuration conf = new Configuration();
        // Address of the cluster; note that URI is the class from the java.net package
        URI uri = new URI("hdfs://hadoop1:8020");
        // Connect to HDFS as the user "root"
        FileSystem fs = FileSystem.get(uri, conf, "root");
        // Create the directory /Demo
        fs.mkdirs(new Path("/Demo"));
        fs.close();
    }
}

Check in the NameNode web UI on port 50070 whether the directory was created:

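3. The role of the Configuration class: overriding configuration settings

My cluster is configured with 3 replicas; here the value is changed to 2 as a test.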

package dfsHadoop;

import java.io.IOException;
import java.net.URI;
import java.net.URISyntaxException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;

public class MyHadoop {
    public static void main(String[] args) throws URISyntaxException, IOException, InterruptedException {
        Configuration conf = new Configuration();
        // Configuration can override settings; here the replica count is set to 2 as a test
        conf.set("dfs.replication", "2");
        // Address of the cluster; note that URI is the class from the java.net package
        URI uri = new URI("hdfs://hadoop1:8020");
        FileSystem fs = FileSystem.get(uri, conf, "root");
        // Upload a local file into the /Demo directory
        fs.copyFromLocalFile(new Path("C:\\Users\\12042\\Desktop\\a.xlsx"), new Path("/Demo/"));
        fs.close();
    }
}
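Both programs so far pass the cluster address as a java.net.URI. As a small aside, the sketch below shows which parts that class extracts from such an address, using only the JDK. The class name HdfsUriParts and the describe helper are hypothetical names introduced for illustration, and URI.create is used instead of new URI(...) only to avoid the checked exception.

```java
import java.net.URI;

public class HdfsUriParts {

    // Hypothetical helper: returns "scheme|host|port" for an address string.
    // URI.create throws an unchecked IllegalArgumentException on malformed input.
    public static String describe(String address) {
        URI uri = URI.create(address);
        return uri.getScheme() + "|" + uri.getHost() + "|" + uri.getPort();
    }

    public static void main(String[] args) {
        // Prints hdfs|hadoop1|8020
        System.out.println(describe("hdfs://hadoop1:8020"));
    }
}
```

The scheme selects the file system implementation, while the host and port identify the service the client connects to.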

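Check the replica count in the NameNode web UI on port 50070:

Traversing files and viewing their information: the information about each file is stored in a LocatedFileStatus object, and the methods of that object return the details of the file.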

package dfsHadoop;

import java.io.IOException;
import java.net.URI;
import java.net.URISyntaxException;
import java.util.Arrays;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.BlockLocation;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.LocatedFileStatus;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.fs.RemoteIterator;
import org.apache.hadoop.fs.permission.FsPermission;

public class MyHadoop {
    public static void main(String[] args) throws URISyntaxException, IOException, InterruptedException {
        // Configuration
        Configuration conf = new Configuration();
        URI uri = new URI("hdfs://hadoop1:8020");
        FileSystem fs = FileSystem.get(uri, conf, "root");
        // Recursively list every file under the root directory
        RemoteIterator<LocatedFileStatus> ls = fs.listFiles(new Path("/"), true);
        while (ls.hasNext()) {
            LocatedFileStatus ll = ls.next();
            // File name
            String name = ll.getPath().getName();
            System.out.print("File name: " + name + "\t");
            // Permissions
            FsPermission permission = ll.getPermission();
            System.out.print("Permissions: " + permission + "\t");
            // Block size
            long size = ll.getBlockSize();
            System.out.print("Block size: " + size + "\t");
            // Replica count
            short num = ll.getReplication();
            System.out.print("Replicas: " + num + "\t");
            // Owning group
            String group = ll.getGroup();
            System.out.println("Group: " + group + "\t");
            // Last modification time, in milliseconds since the epoch
            long time = ll.getModificationTime();
            System.out.println("Modification time: " + time + "\t");
            System.out.println("------------ Block information ------");
            BlockLocation[] blocks = ll.getBlockLocations();
            for (BlockLocation block : blocks) {
                // Start offset of the block within the file, and the block's length
                System.out.println("Block offset: " + block.getOffset() + ", length: " + block.getLength());
                System.out.println("Hosts: " + Arrays.toString(block.getHosts()));
                System.out.println("Names (IP:port): " + Arrays.toString(block.getNames()));
                System.out.println("-----------------------------------");
            }
        }
        fs.close();
    }
}
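FileStatus reports times such as getModificationTime() and getAccessTime() as milliseconds since the epoch, and BlockLocation gives each block's start offset and length, so the raw numbers printed by a program like the one above are hard to read. The following is a minimal JDK-only sketch of how they could be formatted. The class and method names are hypothetical, and displaying times in UTC is an assumption.

```java
import java.time.Instant;
import java.time.ZoneOffset;
import java.time.format.DateTimeFormatter;

public class HdfsStatusFormat {

    // Assumption: times are displayed in UTC; swap in another ZoneId if needed.
    private static final DateTimeFormatter FORMAT =
            DateTimeFormatter.ofPattern("yyyy-MM-dd HH:mm:ss").withZone(ZoneOffset.UTC);

    // Converts a millisecond epoch timestamp into a readable date string.
    public static String formatMillis(long epochMillis) {
        return FORMAT.format(Instant.ofEpochMilli(epochMillis));
    }

    // Renders a block as the half-open byte range [offset, offset + length).
    public static String blockRange(long offset, long length) {
        return "[" + offset + ", " + (offset + length) + ")";
    }

    public static void main(String[] args) {
        // Prints 1970-01-01 00:00:00
        System.out.println(formatMillis(0L));
        // A first block of 128 MB: prints [0, 134217728)
        System.out.println(blockRange(0L, 134217728L));
    }
}
```

Inside the loop, these helpers would be called with the values returned by the status and block objects, for example formatMillis(ll.getModificationTime()).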

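Output:

Additional notes:

① public RemoteIterator<LocatedFileStatus> listFiles(final Path f, final boolean recursive);

The listFiles(path, recursive) method queries files only. If recursive is true, it recursively returns all files under the path; if it is false, it returns only the files directly under the specified path.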
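To make the effect of the recursive flag concrete without a cluster, here is a local-filesystem analogue built on java.nio.file. It is not the Hadoop API: Files.walk stands in for the HDFS listing, and the class name, the helper methods and the sample tree (a.txt and sub/b.txt) are all assumptions made for illustration.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.Stream;

public class LocalListFilesSketch {

    // Local analogue of listFiles(path, recursive): returns regular files only.
    // Depth 1 means "direct children only"; unlimited depth means "recursive".
    public static List<String> listFiles(Path dir, boolean recursive) {
        int depth = recursive ? Integer.MAX_VALUE : 1;
        try (Stream<Path> paths = Files.walk(dir, depth)) {
            return paths.filter(Files::isRegularFile)
                        .map(p -> p.getFileName().toString())
                        .sorted()
                        .collect(Collectors.toList());
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    // Builds a throwaway tree: root/a.txt and root/sub/b.txt
    public static Path createSampleTree() {
        try {
            Path root = Files.createTempDirectory("listFilesDemo");
            Files.createFile(root.resolve("a.txt"));
            Path sub = Files.createDirectory(root.resolve("sub"));
            Files.createFile(sub.resolve("b.txt"));
            return root;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        Path root = createSampleTree();
        System.out.println(listFiles(root, true));   // prints [a.txt, b.txt]
        System.out.println(listFiles(root, false));  // prints [a.txt]
    }
}
```

In both modes the directory sub itself never appears in the result, which mirrors the point that listFiles returns files rather than directories.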

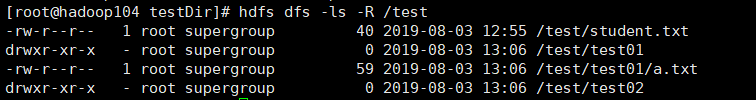

Suppose a directory on the cluster has the following contents:

package hadoop002;

import java.io.IOException;
import java.net.URI;
import java.net.URISyntaxException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.LocatedFileStatus;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.fs.RemoteIterator;
import org.apache.hadoop.io.IOUtils;

public class day20190803 {
    public static void main(String[] args) throws Exception {
        seeDir();
    }

    // Connect to the cluster and list all files under a path
    public static void seeDir() throws URISyntaxException, IOException, InterruptedException {
        // Configuration
        Configuration conf = new Configuration();
        // Cluster to access
        URI uri = new URI("hdfs://hadoop104:9000");
        // Get the file system instance
        FileSystem fs = FileSystem.get(uri, conf, "root");
        // The second argument is the recursive flag
        RemoteIterator<LocatedFileStatus> it = fs.listFiles(new Path("/test/"), true);
        while (it.hasNext()) {
            LocatedFileStatus locatedFileStatus = it.next();
            String name = locatedFileStatus.getPath().getName();
            System.out.println("File name: " + name);
        }
        IOUtils.closeStream(fs);
        System.out.println("ok");
    }
}

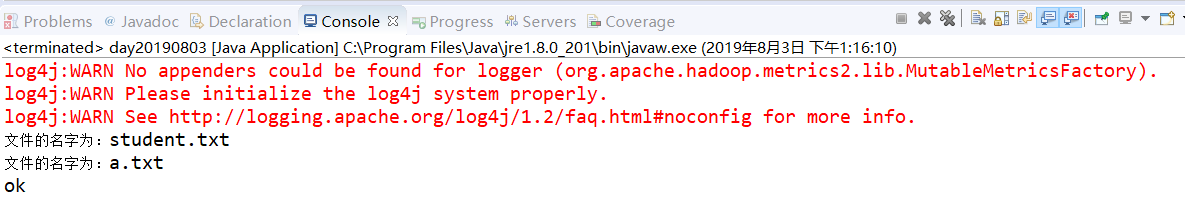

If recursive is true, the result is as follows:

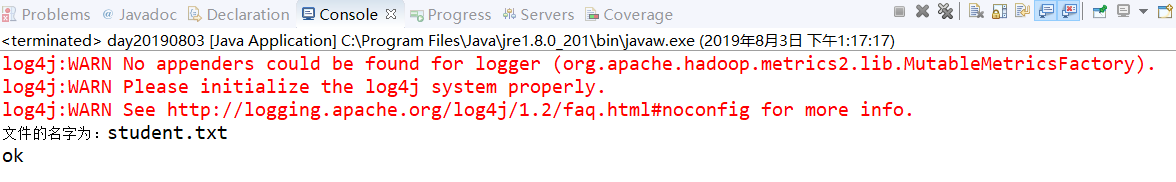

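If recursive is false, the result is as follows:

② public RemoteIterator<LocatedFileStatus> listLocatedStatus(final Path f);

This method lists both files and directories.

For example, list the entries under a path and determine whether each one is a directory: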

public static void listDir01() throws Exception {
    // Configuration
    Configuration conf = new Configuration();
    // Cluster to access
    URI uri = new URI("hdfs://hadoop104:9000");
    // Get the file system instance
    FileSystem fs = FileSystem.get(uri, conf, "root");
    RemoteIterator<LocatedFileStatus> it = fs.listLocatedStatus(new Path("/test"));
    while (it.hasNext()) {
        LocatedFileStatus locatedFileStatus = it.next();
        // Check whether the entry is a directory
        boolean b = locatedFileStatus.isDirectory();
        String name = locatedFileStatus.getPath().getName();
        if (b) {
            System.out.println("Type: directory, name: " + name);
        } else {
            System.out.println("Type: file, name: " + name);
        }
    }
    fs.close();
}

The output is:

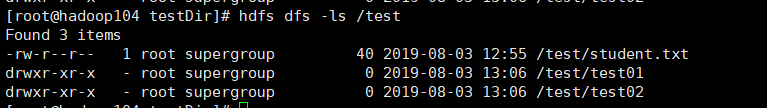

As seen on the cluster:
