Model Containers and Building AlexNet

1. Model Containers

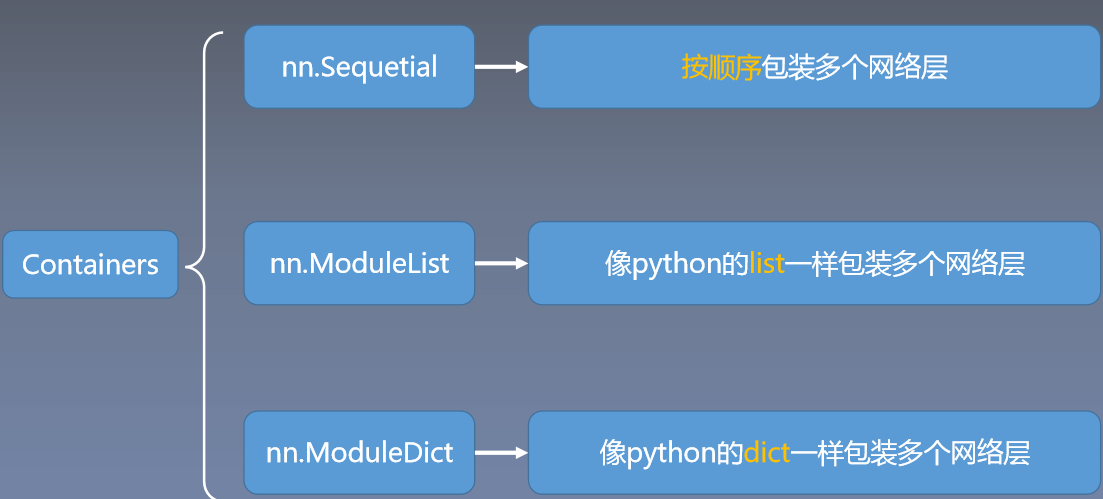

The model containers are:

nn.Sequential

nn.ModuleList

nn.ModuleDict

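1.1 nn.Sequential

nn.Sequential is an nn.Module container that wraps a group of layers in a fixed order.

• Sequential: the layers are built and executed strictly in the order given

• Built-in forward(): the built-in forward() runs the forward pass through each layer in turn with a for loop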
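To make the built-in forward() concrete, here is a minimal sketch showing that calling an nn.Sequential gives the same result as passing the input through its layers one by one in an explicit loop. The layer sizes and the input shape are arbitrary values chosen for illustration, not taken from the original post.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# A small Sequential; the layer sizes are arbitrary illustration values.
block = nn.Sequential(
    nn.Linear(4, 8),
    nn.ReLU(),
    nn.Linear(8, 2),
)

x = torch.randn(3, 4)

# Calling the container runs its built-in forward().
out_container = block(x)

# The same computation written as an explicit loop over the layers.
out_loop = x
for layer in block:
    out_loop = layer(out_loop)

print(torch.equal(out_container, out_loop))
```

Because the container already implements this loop, a model built only from nn.Sequential blocks does not need a hand-written loop in its own forward().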

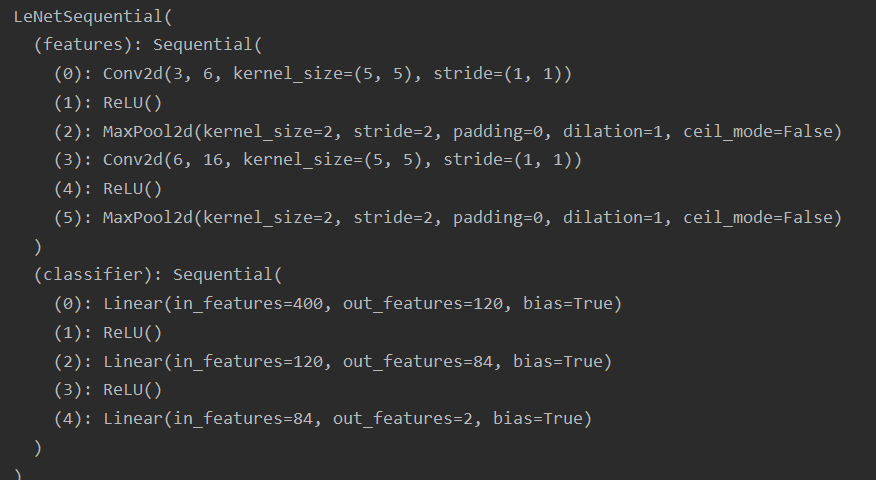

The example below uses LeNet.

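```python
import torch.nn as nn


class LeNetSequential(nn.Module):
    def __init__(self, classes):
        super(LeNetSequential, self).__init__()
        # Convolution and pooling layers, addressed by index 0, 1, 2, ...
        self.features = nn.Sequential(
            nn.Conv2d(3, 6, 5),
            nn.ReLU(),
            nn.MaxPool2d(kernel_size=2, stride=2),
            nn.Conv2d(6, 16, 5),
            nn.ReLU(),
            nn.MaxPool2d(kernel_size=2, stride=2),
        )
        # Fully connected layers; 16*5*5 corresponds to a 32x32 input image
        self.classifier = nn.Sequential(
            nn.Linear(16*5*5, 120),
            nn.ReLU(),
            nn.Linear(120, 84),
            nn.ReLU(),
            nn.Linear(84, classes),
        )

    def forward(self, x):
        x = self.features(x)
        x = x.view(x.size(0), -1)  # flatten to (batch, 16*5*5)
        x = self.classifier(x)
        return x
```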

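With the approach above, the layers have no names and can only be referred to by their integer index.

To give each layer a name, pass an OrderedDict to nn.Sequential():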

```python
from collections import OrderedDict

import torch.nn as nn


class LeNetSequentialOrderDict(nn.Module):
    def __init__(self, classes):
        super(LeNetSequentialOrderDict, self).__init__()
        # Each layer is registered under the given key instead of an index
        self.features = nn.Sequential(OrderedDict({
            'conv1': nn.Conv2d(3, 6, 5),
            'relu1': nn.ReLU(inplace=True),
            'pool1': nn.MaxPool2d(kernel_size=2, stride=2),
            'conv2': nn.Conv2d(6, 16, 5),
            'relu2': nn.ReLU(inplace=True),
            'pool2': nn.MaxPool2d(kernel_size=2, stride=2),
        }))
        self.classifier = nn.Sequential(OrderedDict({
            'fc1': nn.Linear(16*5*5, 120),
            'relu3': nn.ReLU(),
            'fc2': nn.Linear(120, 84),
            'relu4': nn.ReLU(inplace=True),
            'fc3': nn.Linear(84, classes),
        }))

    def forward(self, x):
        x = self.features(x)
        x = x.view(x.size()[0], -1)  # flatten to (batch, 16*5*5)
        x = self.classifier(x)
        return x
```
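As a small illustration of what the names buy you, the sketch below builds only the first three named layers and shows that a named layer can be reached both as an attribute and by index, and that the names appear in the parameter names. The variable names `named` and `unnamed` are introduced here for illustration.

```python
from collections import OrderedDict

import torch.nn as nn

named = nn.Sequential(OrderedDict([
    ("conv1", nn.Conv2d(3, 6, 5)),
    ("relu1", nn.ReLU(inplace=True)),
    ("pool1", nn.MaxPool2d(kernel_size=2, stride=2)),
]))
unnamed = nn.Sequential(nn.Conv2d(3, 6, 5), nn.ReLU(inplace=True))

# A named layer is available as an attribute and still by integer index.
same_layer = named.conv1 is named[0]
print(same_layer)

# Parameter names are prefixed with the layer name (or the index if unnamed).
named_params = [name for name, _ in named.named_parameters()]
unnamed_params = [name for name, _ in unnamed.named_parameters()]
print(named_params)
print(unnamed_params)
```

Readable parameter names are mainly a convenience when inspecting a state_dict or selecting specific layers, for example to freeze them.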

1.2 nn.ModuleList

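nn.ModuleList is an nn.Module container that wraps a group of layers and lets you call them by iterating over the list.

Main methods:

• append(): adds a layer to the end of the ModuleList

• extend(): concatenates two ModuleLists

• insert(): inserts a layer at a given position in the ModuleList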
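The three methods can be tried out in a few lines. This is a minimal sketch; the particular layers are arbitrary and chosen only so that the resulting order is easy to read.

```python
import torch.nn as nn

layers = nn.ModuleList([nn.Linear(10, 10)])

layers.append(nn.ReLU())                      # add one layer at the end
layers.extend([nn.Linear(10, 5), nn.ReLU()])  # add several layers at once
layers.insert(0, nn.Flatten())                # insert at position 0

layer_names = [type(m).__name__ for m in layers]
print(layer_names)
```

Note that extend() accepts any iterable of modules, so a plain Python list works as well as another ModuleList.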

For example, to build 20 fully connected layers:

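```python
import torch.nn as nn


class ModuleList(nn.Module):
    def __init__(self):
        super(ModuleList, self).__init__()
        # A list comprehension builds the 20 identical layers
        self.linears = nn.ModuleList([nn.Linear(10, 10) for i in range(20)])

    def forward(self, x):
        # Iterate over the container and apply each layer in turn
        for linear in self.linears:
            x = linear(x)
        return x
```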
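One reason to use nn.ModuleList rather than a plain Python list is that the layers get registered as submodules, so their parameters are visible to the optimizer. The sketch below compares the two; the class names `WithPythonList` and `WithModuleList` are hypothetical and exist only for this comparison.

```python
import torch.nn as nn


class WithPythonList(nn.Module):
    def __init__(self):
        super().__init__()
        # A plain list does NOT register the layers as submodules.
        self.linears = [nn.Linear(10, 10) for _ in range(20)]


class WithModuleList(nn.Module):
    def __init__(self):
        super().__init__()
        # nn.ModuleList registers every layer it holds.
        self.linears = nn.ModuleList([nn.Linear(10, 10) for _ in range(20)])


n_plain = len(list(WithPythonList().parameters()))
n_registered = len(list(WithModuleList().parameters()))
print(n_plain, n_registered)
```

With the plain list, parameters() is empty, so an optimizer built from it would have nothing to update; with nn.ModuleList, all 20 weights and 20 biases are found.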

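1.3 nn.ModuleDict

nn.ModuleDict is an nn.Module container that wraps a group of layers and lets you call them by key.

Main methods:

• clear(): removes all items from the ModuleDict

• items(): returns an iterable of key-value pairs

• keys(): returns the keys of the dictionary

• values(): returns the values of the dictionary

• pop(): removes the given key from the dictionary and returns its module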
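A short sketch of these methods on a small ModuleDict follows. The keys and layers are arbitrary illustration values. Key order is deliberately not relied on, since how a plain dict is ordered on construction may differ between PyTorch versions.

```python
import torch.nn as nn

activations = nn.ModuleDict({
    "relu": nn.ReLU(),
    "prelu": nn.PReLU(),
})

keys_before = sorted(activations.keys())
print(keys_before)

# items() yields (key, module) pairs; values() yields only the modules.
for name, module in activations.items():
    print(name, type(module).__name__)

# pop() removes the key and hands back the module stored under it.
removed = activations.pop("relu")
keys_after = list(activations.keys())
print(type(removed).__name__, keys_after)

# clear() empties the container.
activations.clear()
print(len(activations))
```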

```python
import torch.nn as nn


class ModuleDict(nn.Module):
    def __init__(self):
        super(ModuleDict, self).__init__()
        # Candidate layers, selected by key at call time
        self.choices = nn.ModuleDict({
            'conv': nn.Conv2d(10, 10, 3),
            'pool': nn.MaxPool2d(3)
        })
        # Candidate activation functions, selected by key at call time
        self.activations = nn.ModuleDict({
            'relu': nn.ReLU(),
            'prelu': nn.PReLU()
        })

    def forward(self, x, choice, act):
        x = self.choices[choice](x)
        x = self.activations[act](x)
        return x
```
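To show how the keys are used at call time, here is a self-contained sketch that repeats the same layout under the hypothetical name `SwitchableBlock` and runs it with two different key combinations. The input shape (batch of 4, 10 channels, 32x32) is an assumption made for this example.

```python
import torch
import torch.nn as nn


class SwitchableBlock(nn.Module):  # hypothetical name; same layout as the class above
    def __init__(self):
        super().__init__()
        self.choices = nn.ModuleDict({
            "conv": nn.Conv2d(10, 10, 3),
            "pool": nn.MaxPool2d(3),
        })
        self.activations = nn.ModuleDict({
            "relu": nn.ReLU(),
            "prelu": nn.PReLU(),
        })

    def forward(self, x, choice, act):
        x = self.choices[choice](x)   # pick the layer by key
        x = self.activations[act](x)  # pick the activation by key
        return x


net = SwitchableBlock()
x = torch.randn(4, 10, 32, 32)  # assumed input shape

out_conv = net(x, "conv", "relu")
out_pool = net(x, "pool", "prelu")
print(out_conv.shape, out_pool.shape)
```

The 3x3 convolution without padding shrinks 32x32 to 30x30, while MaxPool2d(3) uses a stride of 3 by default and reduces it to 10x10.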

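Container summary:

• nn.Sequential: ordered; the layers are executed strictly in sequence, commonly used to build blocks

• nn.ModuleList: iterable; commonly used to build many repeated layers with a for loop

• nn.ModuleDict: indexed by key; commonly used when the layers are selectable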

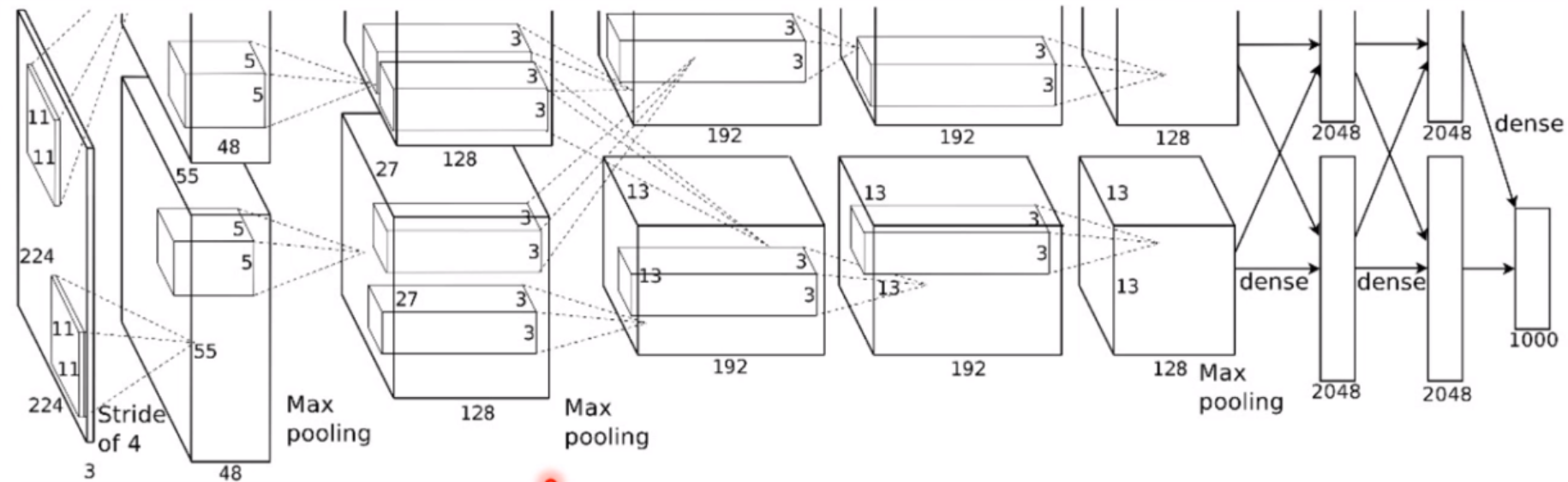

2. Building AlexNet

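AlexNet: in 2012 it won the ImageNet classification task with an accuracy more than 10 percentage points higher than the runner-up, opening a new era for convolutional neural networks.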

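The main features of AlexNet are:

- Uses ReLU: replaces saturating activation functions and reduces vanishing gradients

- Uses LRN (Local Response Normalization): normalizes activations across adjacent channels; the original paper reports that this aids generalization

- Dropout: makes the fully connected layers more robust and improves the network's ability to generalize

- Data augmentation: TenCrop and color modification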
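Two of these features map directly onto PyTorch layers. The following is a rough sketch, not the original AlexNet training setup: it applies nn.LocalResponseNorm and nn.Dropout to dummy tensors. The LRN hyperparameters are the values reported in the AlexNet paper and are used here only for illustration, and the tensor shapes are assumptions.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# LRN normalizes each activation using its neighboring channels.
# size=5, alpha=1e-4, beta=0.75, k=2.0 are illustration values.
x = torch.randn(2, 64, 27, 27)  # assumed feature-map shape
lrn = nn.LocalResponseNorm(size=5, alpha=1e-4, beta=0.75, k=2.0)
lrn_out = lrn(x)
print(lrn_out.shape)  # LRN does not change the shape

# Dropout zeroes activations at random during training and rescales the rest;
# in eval mode it passes the input through unchanged.
drop = nn.Dropout(p=0.5)
ones = torch.ones(1000)

drop.train()
y_train = drop(ones)

drop.eval()
y_eval = drop(ones)

print(sorted(y_train.unique().tolist()))
print(torch.equal(y_eval, ones))
```

In training mode with p=0.5, surviving activations are scaled by 1 / (1 - p) = 2 so that the expected value stays the same, which is why no extra scaling is needed at inference time.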

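The implementation below groups the convolution and pooling layers at the front into features, and the fully connected layers at the back into classifier. It does not include LRN layers.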

```python
import torch
import torch.nn as nn


class AlexNet(nn.Module):
    def __init__(self, num_classes=1000):
        super(AlexNet, self).__init__()
        # Convolution and pooling layers
        self.features = nn.Sequential(
            nn.Conv2d(3, 64, kernel_size=11, stride=4, padding=2),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=3, stride=2),
            nn.Conv2d(64, 192, kernel_size=5, padding=2),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=3, stride=2),
            nn.Conv2d(192, 384, kernel_size=3, padding=1),
            nn.ReLU(inplace=True),
            nn.Conv2d(384, 256, kernel_size=3, padding=1),
            nn.ReLU(inplace=True),
            nn.Conv2d(256, 256, kernel_size=3, padding=1),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=3, stride=2),
        )
        # Forces a 6x6 feature map regardless of the input resolution
        self.avgpool = nn.AdaptiveAvgPool2d((6, 6))
        # Fully connected layers with dropout
        self.classifier = nn.Sequential(
            nn.Dropout(),
            nn.Linear(256 * 6 * 6, 4096),
            nn.ReLU(inplace=True),
            nn.Dropout(),
            nn.Linear(4096, 4096),
            nn.ReLU(inplace=True),
            nn.Linear(4096, num_classes),
        )

    def forward(self, x):
        x = self.features(x)
        x = self.avgpool(x)
        x = torch.flatten(x, 1)  # flatten to (batch, 256*6*6)
        x = self.classifier(x)
        return x
```
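The 256 * 6 * 6 input size of the first fully connected layer can be checked with the standard output-size formula for convolution and pooling, out = floor((in + 2 * padding - kernel) / stride) + 1. The sketch below traces the spatial size through features in plain Python, assuming a 224x224 input; the helper `out_size` and the layer labels are introduced here for illustration.

```python
def out_size(size, kernel, stride=1, padding=0):
    """Output width/height of a conv or pooling layer (floor rounding)."""
    return (size + 2 * padding - kernel) // stride + 1


size = 224  # assumed input resolution
trace = []
# (label, kernel, stride, padding) for each conv/pool layer in features
for name, kernel, stride, padding in [
    ("conv1", 11, 4, 2),
    ("pool1", 3, 2, 0),
    ("conv2", 5, 1, 2),
    ("pool2", 3, 2, 0),
    ("conv3", 3, 1, 1),
    ("conv4", 3, 1, 1),
    ("conv5", 3, 1, 1),
    ("pool3", 3, 2, 0),
]:
    size = out_size(size, kernel, stride, padding)
    trace.append((name, size))
    print(name, size)

flat_features = 256 * size * size
print(flat_features)
```

The trace goes 224 → 55 → 27 → 27 → 13 → 13 → 13 → 13 → 6, so for this input size the feature map is already 6x6 before the adaptive average pooling, and flattening gives 256 * 6 * 6 = 9216 values.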
