Abstract

Sentiment classification of Spanish tweets using BERT, a bidirectional model.

Introduction

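The model is BERT: a pre-trained BERT model adapted to Spanish tweets and then fine-tuned. The paper is structured as follows: Section 2 describes the tasks addressed; Section 3 presents the proposals and the baseline model; Section 4 evaluates the experiments and analyzes the results; Section 5 gives conclusions and future work.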

Dataset analysis

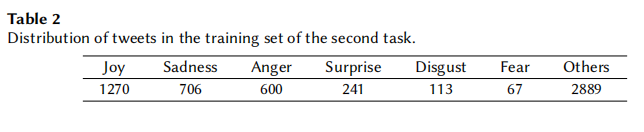

Table 2 shows the tweet distribution for each emotion in the training set of Task 2. There is a large bias towards the class Others, which acts as a sink for unconsidered emotions or combinations of emotions. The least frequent class, by far, is Fear.
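A distribution like this can be tabulated straight from the label column. The snippet below is a minimal sketch, not the authors' code: `label_distribution` is a hypothetical helper, and `toy_labels` is made-up data chosen only to mimic the shape of the bias (many `others`, very few `fear`), not the real counts from the table.

```python
from collections import Counter


def label_distribution(labels):
    """Return {label: (count, share)} ordered from most to least frequent."""
    counts = Counter(labels)
    total = len(labels)
    return {label: (n, n / total) for label, n in counts.most_common()}


# Toy labels (an assumption for illustration, NOT the real dataset).
toy_labels = ["others"] * 5 + ["joy"] * 3 + ["sadness"] * 2 + ["fear"]

dist = label_distribution(toy_labels)
for label, (n, share) in dist.items():
    print(f"{label:8s} {n:3d} {share:.1%}")
```

Looking at the shares rather than the raw counts makes it easier to see how much a classifier could gain just by always predicting the majority class.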

Task description

Task1:

Task2: The second task is also a single-label classification task, but with 7 different emotions (joy, sadness, anger, surprise, disgust, fear and others).

Systems

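- Deep Averaging Networks: a previously used system serves as the baseline. The model applies feed-forward networks on top of averaged word embeddings.

- TWilBERT: a BERT model trained, evaluated, and fine-tuned on the tweet domain. This part introduces TWilBERT and compares it with multi-lingual BERT to show the advantages of TWilBERT.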
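The Deep Averaging Network idea (average the word embeddings, then pass the result through feed-forward layers) can be sketched in a few lines. This is a minimal illustration with randomly initialized weights, not the system from the paper: the layer sizes, the single hidden ReLU layer, and all names (`dan_forward`, `rand_matrix`, and so on) are assumptions made for the example.

```python
import math
import random


def average_embeddings(token_ids, embeddings):
    """Average the embedding vectors of the given tokens."""
    dim = len(embeddings[0])
    total = [0.0] * dim
    for t in token_ids:
        for i, v in enumerate(embeddings[t]):
            total[i] += v
    return [v / len(token_ids) for v in total]


def dense(x, weights, bias):
    """Fully connected layer; `weights` holds one row per output unit."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, bias)]


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    s = sum(exps)
    return [e / s for e in exps]


def dan_forward(token_ids, embeddings, w1, b1, w2, b2):
    """Average embeddings -> hidden ReLU layer -> softmax over classes."""
    avg = average_embeddings(token_ids, embeddings)
    hidden = [max(0.0, h) for h in dense(avg, w1, b1)]
    return softmax(dense(hidden, w2, b2))


def rand_matrix(rows, cols):
    return [[random.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]


random.seed(0)
# Hypothetical sizes; 7 classes matches the number of emotions in Task 2.
vocab_size, emb_dim, hidden_dim, n_classes = 50, 8, 16, 7
embeddings = rand_matrix(vocab_size, emb_dim)
w1, b1 = rand_matrix(hidden_dim, emb_dim), [0.0] * hidden_dim
w2, b2 = rand_matrix(n_classes, hidden_dim), [0.0] * n_classes

probs = dan_forward([3, 17, 42], embeddings, w1, b1, w2, b2)
print(probs)
```

Because the embeddings are averaged, the output does not depend on word order: `dan_forward([42, 3, 17], ...)` gives the same probabilities up to floating-point rounding. That order-insensitivity is one reason a contextual model such as BERT is an interesting point of comparison.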

Experimental Work

TWilBERT and the baseline (DAN) are compared under different settings, as fairly as possible.

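This post looks at how a pre-trained BERT model can be used for sentiment classification of Spanish tweets, with particular attention to the use of bidirectional BERT in Task 2. It compares TWilBERT, a BERT model optimized for the tweet domain, with the traditional Deep Averaging Networks (DAN) model, examines the skewed emotion distribution in the dataset, and outlines the experimental design and the analysis of results.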
