pom.xml configuration file

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<parent>

<artifactId>atguigu-class</artifactId>

<groupId>com.atguigu.bigdata</groupId>

<version>1.0.0</version>

</parent>

<modelVersion>4.0.0</modelVersion>

<artifactId>spark-core</artifactId>

<dependencies>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-scala_2.11</artifactId>

<version>1.7.2</version>

</dependency>

<!-- https://mvnrepository.com/artifact/org.apache.flink/flink-streaming-scala -->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-streaming-scala_2.11</artifactId>

<version>1.7.2</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-kafka-0.11_2.11</artifactId>

<version>1.7.2</version>

</dependency>

<dependency>

<groupId>org.apache.bahir</groupId>

<artifactId>flink-connector-redis_2.11</artifactId>

<version>1.0</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-elasticsearch6_2.11</artifactId>

<version>1.7.2</version>

</dependency>

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<version>5.1.44</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-statebackend-rocksdb_2.11</artifactId>

<version>1.7.2</version>

</dependency>

</dependencies>

<build>

<plugins>

<!-- This plugin compiles Scala source code into class files -->

<plugin>

<groupId>net.alchim31.maven</groupId>

<artifactId>scala-maven-plugin</artifactId>

<version>3.4.6</version>

<executions>

<execution>

<!-- Bind the Scala compiler to Maven's compile and test-compile phases -->

<goals>

<goal>compile</goal>

<goal>testCompile</goal>

</goals>

</execution>

</executions>

</plugin>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-assembly-plugin</artifactId>

<version>3.0.0</version>

<configuration>

<descriptorRefs>

<descriptorRef>jar-with-dependencies</descriptorRef>

</descriptorRefs>

</configuration>

<executions>

<execution>

<id>make-assembly</id>

<phase>package</phase>

<goals>

<goal>single</goal>

</goals>

</execution>

</executions>

</plugin>

</plugins>

</build>

</project>

package com.atguigu.bigdata.spark.core.wc

import org.apache.flink.api.scala._

object WordCount {

def main(args: Array[String]): Unit = {

// Create a batch execution environment

val env = ExecutionEnvironment.getExecutionEnvironment

// Read the input data from a file

val inputPath = "D:\\hello.txt"

val inputDataSet = env.readTextFile(inputPath)

// Split each line into words, then group by word and sum the counts

val wordCountDataSet = inputDataSet.flatMap(_.split(" "))

.map((_, 1))

.groupBy(0)

.sum(1)

wordCountDataSet.print()

}

}
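To make the `flatMap` / `map` / `groupBy(0)` / `sum(1)` chain above easier to follow, here is a minimal sketch of the same steps on plain Scala collections, with no Flink involved. The object name `WordCountSketch`, the helper `countWords`, and the sample lines are hypothetical and only serve as illustration. The `filter(_.nonEmpty)` step is an addition that the batch job above does not have; it mirrors the streaming version and drops empty tokens caused by repeated spaces.

```scala
object WordCountSketch {

  // Same logical steps as the Flink batch job, applied to an in-memory Seq.
  def countWords(lines: Seq[String]): Map[String, Int] =
    lines
      .flatMap(_.split(" "))   // split each line into words
      .filter(_.nonEmpty)      // assumption: skip empty tokens (not in the batch job above)
      .map((_, 1))             // pair each word with an initial count of 1
      .groupBy(_._1)           // group the pairs by word, like groupBy(0)
      .map { case (word, pairs) => (word, pairs.map(_._2).sum) } // like sum(1)

  def main(args: Array[String]): Unit = {
    // Made-up sample input standing in for the contents of hello.txt
    val sample = Seq("hello world", "hello flink")
    countWords(sample).toSeq.sortBy(_._1).foreach(println)
  }
}
```

The result is sorted before printing only because a Scala `Map` has no guaranteed iteration order; Flink's `print()` does not guarantee an order either.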

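IntelliJ IDEA shortcuts: Ctrl+Alt+L reformats (aligns) the code; Ctrl+Alt+V introduces a variable for the selected expression, completing the `val` declaration for you.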

package com.atguigu.bigdata.spark.core.wc

import org.apache.flink.streaming.api.scala._

// Streaming word count

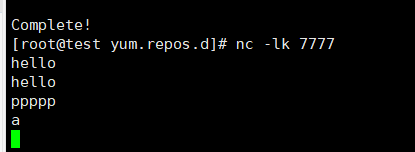

object StreamWordCount {

def main(args: Array[String]): Unit = {

// Create the stream execution environment

val env = StreamExecutionEnvironment.getExecutionEnvironment

// Receive a text stream from a socket

val dataStream = env.socketTextStream("192.168.186.129",7777)

// Process each record: split into words, drop empty tokens, key by word, sum the counts

val wordCountDataStream = dataStream.flatMap(_.split(" "))

.filter(_.nonEmpty)

.map((_,1))

.keyBy(0)

.sum(1)

wordCountDataStream.print()

// Start the executor; nothing runs until execute() is called

env.execute("stream word count job")

}

}
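Unlike the batch job, `keyBy(0).sum(1)` on a stream emits an updated count for every incoming record instead of one final total per word. The following is a rough sketch of that behavior in plain Scala, without Flink; the names `RunningCountSketch` and `runningCounts` and the sample words are hypothetical. It keeps one counter per word as state and emits the new value each time a word arrives.

```scala
object RunningCountSketch {

  // For every incoming word, update that word's counter and emit (word, newCount).
  def runningCounts(words: Seq[String]): Seq[(String, Int)] = {
    val initial = (Map.empty[String, Int], Vector.empty[(String, Int)])
    val (_, emitted) = words.foldLeft(initial) {
      case ((state, out), word) =>
        val updated = state.getOrElse(word, 0) + 1              // per-key state, as after keyBy
        (state.updated(word, updated), out :+ ((word, updated))) // emit the running sum
    }
    emitted
  }

  def main(args: Array[String]): Unit = {
    // Made-up sample: the words as they might arrive over the socket
    runningCounts(Seq("hello", "world", "hello")).foreach(println)
  }
}
```

In a real Flink job with parallelism greater than 1, `print()` prefixes each line with the index of the subtask that produced it, and the relative order of records for different keys is not guaranteed.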

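Install the nc command with yum install -y nc, then start a listener on the socket host with nc -lk 7777 before launching the job, and type lines of text into it.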
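The streaming job above hardcodes the host and port of the nc listener. One possible variation, sketched below, reads them from the command line with Flink's `ParameterTool` so the same jar can be pointed at a different machine. The object name `StreamWordCountWithArgs`, the argument names `--host` and `--port`, and the default values are assumptions made for this example, not part of the original code.

```scala
package com.atguigu.bigdata.spark.core.wc

import org.apache.flink.api.java.utils.ParameterTool
import org.apache.flink.streaming.api.scala._

object StreamWordCountWithArgs {

  def main(args: Array[String]): Unit = {
    // Parse arguments of the form: --host <hostname> --port <port>
    // The fallback values are assumptions intended for local testing only.
    val params = ParameterTool.fromArgs(args)
    val host = params.get("host", "localhost")
    val port = params.getInt("port", 7777)

    val env = StreamExecutionEnvironment.getExecutionEnvironment

    env.socketTextStream(host, port)
      .flatMap(_.split(" "))   // split each line into words
      .filter(_.nonEmpty)      // drop empty tokens
      .map((_, 1))             // pair each word with a count of 1
      .keyBy(0)                // partition the stream by word
      .sum(1)                  // keep a running sum per word
      .print()

    env.execute("stream word count job")
  }
}
```

With this variant the job could be started with program arguments such as `--host 192.168.186.129 --port 7777`, matching the address used in the original listing.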
