Storing data in HBase

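FluxInfo code:

This is a JavaBean that holds the fields of a tuple so they can be written to HBase. Each access record becomes one instance.

One point to note: inserting into an HBase table requires a row key. The row-key rule we use is sstime_uvid_ssid_<random number>.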
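The row-key rule can be sketched in plain Java as below. `RowKeyDemo`, `buildRowKey` and `parseRowKey` are hypothetical names used only for illustration, and parsing assumes uvid and ssid never contain an underscore. Because sstime is a 13-digit millisecond timestamp, keys sort lexicographically in time order, which is what makes time-range scans over this table practical. The random suffix only reduces, and does not eliminate, the chance that two records end up with the same key.

```java
import java.util.concurrent.ThreadLocalRandom;

// Hypothetical helper illustrating the row-key rule sstime_uvid_ssid_random.
class RowKeyDemo {

    // Build a key; the random suffix (0-99, like FluxInfo.getRK) lowers the chance
    // that two records with the same sstime/uvid/ssid land on the same row.
    static String buildRowKey(String sstime, String uvid, String ssid) {
        int suffix = ThreadLocalRandom.current().nextInt(100);
        return sstime + "_" + uvid + "_" + ssid + "_" + suffix;
    }

    // Split a key back into its four parts; assumes uvid and ssid contain no '_'.
    static String[] parseRowKey(String rowKey) {
        String[] parts = rowKey.split("_");
        if (parts.length != 4) {
            throw new IllegalArgumentException("unexpected row key: " + rowKey);
        }
        return parts;
    }

    public static void main(String[] args) {
        String rk = buildRowKey("1500000000000", "uv123", "ss456");
        String[] parts = parseRowKey(rk);
        System.out.println(rk);
        System.out.println("sstime=" + parts[0] + " uvid=" + parts[1] + " ssid=" + parts[2]);
        // Equal-length 13-digit timestamps compare as strings in chronological order
        System.out.println("1499999999999_a".compareTo("1500000000000_a") < 0);
    }
}
```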

```java
package cn.tarena.domain;

public class FluxInfo {

    private String url;
    private String urlname;
    private String uvid;
    private String ssid;
    private String sscount;
    private String sstime;
    private String cip;

    // Row key rule: sstime_uvid_ssid_<random number 0-99>
    public String getRK() {
        return sstime + "_" + uvid + "_" + ssid + "_" + (int) (Math.random() * 100);
    }

    public String getUrl() {
        return url;
    }

    public void setUrl(String url) {
        this.url = url;
    }

    public String getUrlname() {
        return urlname;
    }

    public void setUrlname(String urlname) {
        this.urlname = urlname;
    }

    public String getUvid() {
        return uvid;
    }

    public void setUvid(String uvid) {
        this.uvid = uvid;
    }

    public String getSsid() {
        return ssid;
    }

    public void setSsid(String ssid) {
        this.ssid = ssid;
    }

    public String getSscount() {
        return sscount;
    }

    public void setSscount(String sscount) {
        this.sscount = sscount;
    }

    public String getSstime() {
        return sstime;
    }

    public void setSstime(String sstime) {
        this.sstime = sstime;
    }

    public String getCip() {
        return cip;
    }

    public void setCip(String cip) {
        this.cip = cip;
    }
}
```

HBaseBolt code example:

This class is a Bolt, the last (most downstream) component of the topology. It stores the data in HBase.

```java
package cn.tarena.weblog;

import java.util.Map;

import backtype.storm.task.OutputCollector;
import backtype.storm.task.TopologyContext;
import backtype.storm.topology.OutputFieldsDeclarer;
import backtype.storm.topology.base.BaseRichBolt;
import backtype.storm.tuple.Tuple;

import cn.tarena.dao.HBaseDao;
import cn.tarena.domain.FluxInfo;

public class HBaseBolt extends BaseRichBolt {

    private OutputCollector collector = null;

    @Override
    public void prepare(Map stormConf, TopologyContext context, OutputCollector collector) {
        this.collector = collector;
    }

    @Override
    public void execute(Tuple input) {
        try {
            FluxInfo fi = new FluxInfo();
            fi.setUrl(input.getStringByField("url"));
            fi.setUrlname(input.getStringByField("urlname"));
            fi.setUvid(input.getStringByField("uvid"));
            fi.setSsid(input.getStringByField("ssid"));
            fi.setSstime(input.getStringByField("sstime"));
            fi.setSscount(input.getStringByField("sscount"));
            fi.setCip(input.getStringByField("cip"));
            // Custom DAO utility class that handles storage and other data-access operations
            HBaseDao.getHbaseDao().saveToHbase(fi);
            collector.ack(input);
        } catch (Exception e) {
            e.printStackTrace();
            collector.fail(input);
        }
    }

    @Override
    public void declareOutputFields(OutputFieldsDeclarer declarer) {
        // Last bolt in the topology: nothing is emitted, so no fields are declared
    }
}
```

HBaseDao code example:

```java
package cn.tarena.dao;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.hbase.HBaseConfiguration;
import org.apache.hadoop.hbase.client.HTable;
import org.apache.hadoop.hbase.client.Put;

import cn.tarena.domain.FluxInfo;

public class HBaseDao {

    private static HBaseDao hbaseDao = new HBaseDao();

    private HBaseDao() {
    }

    public static HBaseDao getHbaseDao() {
        return hbaseDao;
    }

    /**
     * Writes one log record to HBase.
     * @param fi bean holding the fields of the log record
     */
    public void saveToHbase(FluxInfo fi) {
        HTable tab = null;
        try {
            // 1. Create the HBase configuration (HBaseConfiguration.create() also loads the HBase defaults)
            Configuration conf = HBaseConfiguration.create();
            // Everything else keeps its default; at minimum the ZooKeeper quorum must be set,
            // because the client connects to ZooKeeper to obtain cluster metadata
            conf.set("hbase.zookeeper.quorum",
                    "192.168.150.137:2181,192.168.150.138:2181,192.168.150.139:2181");
            // 2. Open the "flux" table
            tab = new HTable(conf, "flux".getBytes());
            // 3. Write the record: one row, all columns in family cf1
            Put put = new Put(fi.getRK().getBytes());
            put.add("cf1".getBytes(), "url".getBytes(), fi.getUrl().getBytes());
            put.add("cf1".getBytes(), "urlname".getBytes(), fi.getUrlname().getBytes());
            put.add("cf1".getBytes(), "uvid".getBytes(), fi.getUvid().getBytes());
            put.add("cf1".getBytes(), "ssid".getBytes(), fi.getSsid().getBytes());
            put.add("cf1".getBytes(), "sscount".getBytes(), fi.getSscount().getBytes());
            put.add("cf1".getBytes(), "sstime".getBytes(), fi.getSstime().getBytes());
            put.add("cf1".getBytes(), "cip".getBytes(), fi.getCip().getBytes());
            tab.put(put);
        } catch (IOException e) {
            e.printStackTrace();
        } finally {
            // 4. Close the table; tab is still null if opening it failed
            if (tab != null) {
                try {
                    tab.close();
                } catch (IOException e) {
                    e.printStackTrace();
                }
            }
        }
    }
}
```
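HBaseDao turns each FluxInfo into one row: the row key from getRK() plus seven columns in family cf1. The plain-Java model below (the class `FluxRowLayout` and its methods are hypothetical, with no HBase dependency) mirrors that layout and encodes values explicitly as UTF-8. String.getBytes() with no argument uses the platform default charset, so non-ASCII text (urlname could, for example, hold a Chinese page title) may decode incorrectly on a machine with a different default; naming the charset avoids that. Storing null fields as empty strings is an assumption of this sketch.

```java
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical in-memory model of one row in the "flux" table (no HBase dependency).
class FluxRowLayout {

    static final String FAMILY = "cf1";

    // Map each field to "cf1:<qualifier>" -> UTF-8 bytes, like the put.add(...) calls in HBaseDao.
    static Map<String, byte[]> toCells(Map<String, String> fields) {
        Map<String, byte[]> cells = new LinkedHashMap<>();
        for (Map.Entry<String, String> e : fields.entrySet()) {
            // Sketch assumption: a null field is stored as an empty value instead of throwing
            String value = e.getValue() == null ? "" : e.getValue();
            cells.put(FAMILY + ":" + e.getKey(), value.getBytes(StandardCharsets.UTF_8));
        }
        return cells;
    }

    // Decode one cell back to a String; returns null if the column is absent.
    static String readCell(Map<String, byte[]> cells, String qualifier) {
        byte[] raw = cells.get(FAMILY + ":" + qualifier);
        return raw == null ? null : new String(raw, StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        Map<String, String> fields = new LinkedHashMap<>();
        fields.put("url", "http://localhost/a.jsp");
        fields.put("urlname", "首页");
        fields.put("uvid", "uv123");
        Map<String, byte[]> cells = toCells(fields);
        System.out.println(cells.keySet());
        System.out.println(readCell(cells, "urlname"));
    }
}
```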

Note: the HBase shell command for emptying a table is truncate 'table_name'

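Reworking PvBolt, UvBolt and VvBolt

Business logic

For the pv, uv and vv statistics, the behavior we want is:

1) Each time a user visits a page, Storm receives one record.

2) pv: set pv=1 on every record; the counts are not summed right away.

3) uv: look up the record's uvid in HBase. If no matching record is found, set uv=1 on this record; if one is found, set uv=0.

4) vv: based on the record's sscount. If sscount=0, set vv=1 on this record; otherwise set vv=0.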

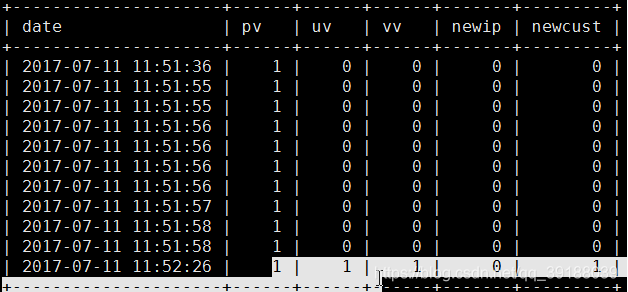

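5) Steps 1 to 4 do no summing, so in the end every record is inserted into the database, as shown in the figure.

The point of this is that we can then query the database for the total pv, uv and other metrics over any period of time.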
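Rules 1 to 5 can be simulated without Storm or HBase, as in the sketch below. `FlowMetrics`, `Visit`, `tag` and `totals` are hypothetical names, and an in-memory set of uvids stands in for the HBase lookup; in UvBolt that lookup is limited to records from the same day, which this sketch does not model. Each record only carries 0/1 flags, and the totals for a period come from summing those flags over the stored records.

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

// Hypothetical simulation of the per-record pv/uv/vv flags (rules 2-4) and the summing step (rule 5).
class FlowMetrics {

    // One access record, reduced to the fields the rules need plus the three flags.
    static final class Visit {
        final String uvid;
        final int sscount;
        int pv, uv, vv;

        Visit(String uvid, int sscount) {
            this.uvid = uvid;
            this.sscount = sscount;
        }
    }

    // pv is always 1; uv is 1 only for a uvid not seen before (the set stands in
    // for the HBase query); vv is 1 only when sscount == 0.
    static void tag(Visit v, Set<String> seenUvids) {
        v.pv = 1;
        v.uv = seenUvids.add(v.uvid) ? 1 : 0;
        v.vv = v.sscount == 0 ? 1 : 0;
    }

    // Totals for a period are plain sums over the stored records: {pv, uv, vv}.
    static int[] totals(List<Visit> stored) {
        int pv = 0, uv = 0, vv = 0;
        for (Visit v : stored) {
            pv += v.pv;
            uv += v.uv;
            vv += v.vv;
        }
        return new int[] {pv, uv, vv};
    }

    public static void main(String[] args) {
        Set<String> seen = new HashSet<>();
        List<Visit> stored = new ArrayList<>();
        String[][] input = {{"u1", "0"}, {"u1", "1"}, {"u2", "0"}, {"u1", "0"}};
        for (String[] row : input) {
            Visit v = new Visit(row[0], Integer.parseInt(row[1]));
            tag(v, seen);
            stored.add(v);
        }
        int[] t = totals(stored);
        System.out.println("pv=" + t[0] + " uv=" + t[1] + " vv=" + t[2]);
    }
}
```

For the four sample records in main (u1 with sscount 0, u1 with 1, u2 with 0, u1 with 0) this gives pv=4, uv=2, vv=3.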

PvBolt code example:

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

import backtype.storm.task.OutputCollector;
import backtype.storm.task.TopologyContext;
import backtype.storm.topology.OutputFieldsDeclarer;
import backtype.storm.topology.base.BaseRichBolt;
import backtype.storm.tuple.Fields;
import backtype.storm.tuple.Tuple;

public class PvBolt extends BaseRichBolt {

    private OutputCollector collector;

    @Override
    public void prepare(Map stormConf, TopologyContext context, OutputCollector collector) {
        this.collector = collector;
    }

    @Override
    public void execute(Tuple input) {
        try {
            // Copy the incoming values instead of modifying the input tuple's own list
            List<Object> values = new ArrayList<Object>(input.getValues());
            // Set pv = 1 on every record
            values.add(1);
            // Anchor the new tuple to the input tuple
            collector.emit(input, values);
            collector.ack(input);
        } catch (Exception e) {
            collector.fail(input);
            e.printStackTrace();
        }
    }

    @Override
    public void declareOutputFields(OutputFieldsDeclarer declarer) {
        declarer.declare(new Fields("url", "urlname", "uvid", "ssid", "sscount", "sstime", "cip", "pv"));
    }
}
```
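PvBolt emits the upstream values plus one extra field, pv, so the emitted list must line up with the eight names in declareOutputFields. The helper below (`AppendField.withExtra` is a hypothetical name) shows a copy-then-append pattern: the incoming list is copied before adding, so the caller's list is never changed, and the approach still works when the source list is fixed-size, such as one returned by Arrays.asList, whose add method throws UnsupportedOperationException.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical helper: append one field to a copy of the upstream values.
class AppendField {

    static List<Object> withExtra(List<Object> upstream, Object extra) {
        List<Object> out = new ArrayList<>(upstream); // defensive copy, upstream stays untouched
        out.add(extra);                               // new last field, e.g. pv = 1
        return out;
    }

    public static void main(String[] args) {
        List<Object> upstream = Arrays.asList(
                "url", "urlname", "uvid", "ssid", "sscount", "sstime", "cip");
        List<Object> emitted = withExtra(upstream, 1);
        System.out.println(emitted.size() + " fields, last = " + emitted.get(emitted.size() - 1));
    }
}
```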

UvBolt code example:

```java
package cn.tarena.store.weblog;

import java.util.Calendar;
import java.util.List;
import java.util.Map;

import backtype.storm.task.OutputCollector;
import backtype.storm.task.TopologyContext;
import backtype.storm.topology.OutputFieldsDeclarer;
import backtype.storm.topology.base.BaseRichBolt;
import backtype.storm.tuple.Fields;
import backtype.storm.tuple.Tuple;

import cn.tarena.dao.HBaseDao;
import cn.tarena.domain.FluxInfo;

public class UvBolt extends BaseRichBolt {

    private OutputCollector collector;

    @Override
    public void prepare(Map stormConf, TopologyContext context, OutputCollector collector) {
        this.collector = collector;
    }

    @Override
    public void execute(Tuple input) {
        try {
            // Get the uvid
            String uvid = input.getStringByField("uvid");
            // Get sstime (a millisecond timestamp)
            long endTime = Long.parseLong(input.getStringByField("sstime"));
            // Go back from the record's time to 00:00 of the same day
            Calendar calendar = Calendar.getInstance();
            calendar.setTimeInMillis(endTime);
            // HOUR_OF_DAY (0-23), not HOUR (12-hour clock), so afternoon records also resolve to midnight
            calendar.set(Calendar.HOUR_OF_DAY, 0);
            calendar.set(Calendar.MINUTE, 0);
            calendar.set(Calendar.SECOND, 0);
            calendar.set(Calendar.MILLISECOND, 0);
            long beginTime = calendar.getTimeInMillis();
            // Query HBase for records between today's 00:00 and this record's time whose uvid equals the current uvid
            String regex="^\\d{13}_"
```
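The day-start calculation in UvBolt can also be written with java.time; the sketch below shows it next to a Calendar version for comparison. `StartOfDay` and its methods are hypothetical names, and passing the zone explicitly is an assumption of this sketch (Calendar.getInstance() in UvBolt uses the JVM's default time zone). With Calendar, the field to reset is HOUR_OF_DAY (0-23): HOUR is the 12-hour field, so setting it to 0 on an afternoon timestamp yields 12:00 noon rather than midnight.

```java
import java.time.Instant;
import java.time.LocalDate;
import java.time.ZoneId;
import java.util.Calendar;
import java.util.TimeZone;

// Sketch: midnight (start of day) for the day containing a millisecond timestamp.
class StartOfDay {

    // java.time version; the zone is an explicit parameter.
    static long startOfDay(long epochMillis, ZoneId zone) {
        LocalDate day = Instant.ofEpochMilli(epochMillis).atZone(zone).toLocalDate();
        return day.atStartOfDay(zone).toInstant().toEpochMilli();
    }

    // Calendar version; HOUR_OF_DAY must be reset, because HOUR is the 12-hour field
    // and setting it to 0 on a PM time resolves to noon.
    static long startOfDayCalendar(long epochMillis, TimeZone tz) {
        Calendar c = Calendar.getInstance(tz);
        c.setTimeInMillis(epochMillis);
        c.set(Calendar.HOUR_OF_DAY, 0);
        c.set(Calendar.MINUTE, 0);
        c.set(Calendar.SECOND, 0);
        c.set(Calendar.MILLISECOND, 0);
        return c.getTimeInMillis();
    }

    public static void main(String[] args) {
        long t = 1500043200000L; // an afternoon timestamp in UTC
        ZoneId zone = ZoneId.of("UTC");
        System.out.println(startOfDay(t, zone));
        System.out.println(startOfDayCalendar(t, TimeZone.getTimeZone(zone)));
    }
}
```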
