Flink Advanced Features - New Features - Integrating Flink SQL with Hive

1. Introduction

Version

https://ci.apache.org/projects/flink/flink-docs-release-1.12/dev/table/connectors/hive/

Add the dependencies, JARs, and configuration

    <dependency>
        <groupId>org.apache.flink</groupId>
        <artifactId>flink-connector-hive_2.12</artifactId>
        <version>${flink.version}</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hive</groupId>
        <artifactId>hive-metastore</artifactId>
        <version>2.1.0</version>
        <exclusions>
            <exclusion>
                <artifactId>hadoop-hdfs</artifactId>
                <groupId>org.apache.hadoop</groupId>
            </exclusion>
        </exclusions>
    </dependency>
    <dependency>
        <groupId>org.apache.hive</groupId>
        <artifactId>hive-exec</artifactId>
        <version>2.1.0</version>
    </dependency>

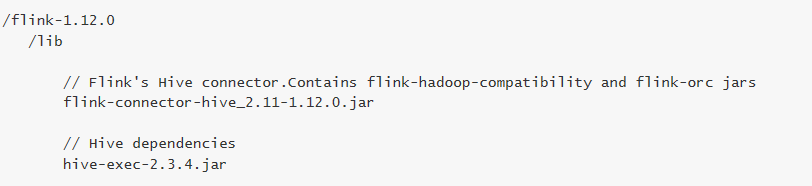

Upload the Hive JARs from the course materials to flink/lib.

Flink SQL and Hive Integration: CLI

1. Edit hive-site.xml

    <property>
        <name>hive.metastore.uris</name>
        <value>thrift://node3:9083</value>
    </property>

    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <configuration>
        <property>
            <name>javax.jdo.option.ConnectionUserName</name>
            <value>root</value>
        </property>
        <property>
            <name>javax.jdo.option.ConnectionPassword</name>
            <value>123456</value>
        </property>
        <property>
            <name>javax.jdo.option.ConnectionURL</name>
            <value>jdbc:mysql://node3:3306/hive?createDatabaseIfNotExist=true&amp;useSSL=false</value>
        </property>
        <property>
            <name>javax.jdo.option.ConnectionDriverName</name>
            <value>com.mysql.jdbc.Driver</value>
        </property>
        <property>
            <name>hive.metastore.schema.verification</name>
            <value>false</value>
        </property>
        <property>
            <name>datanucleus.schema.autoCreateAll</name>
            <value>true</value>
        </property>
        <property>
            <name>hive.server2.thrift.bind.host</name>
            <value>node3</value>
        </property>
        <property>
            <name>hive.metastore.uris</name>
            <value>thrift://node3:9083</value>
        </property>
    </configuration>
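Before starting any services, it can help to confirm that hive-site.xml is well-formed and carries the metastore URI. The sketch below is one way to do that with the JDK's built-in XML parser; `HiveSiteReader` and `readProperties` are hypothetical names introduced for this example and are not part of Hive or Flink. Note that a literal `&` inside a value, as in the JDBC URL, has to be written as `&amp;` for the file to parse.

```java
import java.io.InputStream;
import java.nio.file.Files;
import java.nio.file.Paths;
import java.util.LinkedHashMap;
import java.util.Map;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

/** Hypothetical helper (not a Hive or Flink class): lists the properties in a hive-site.xml. */
class HiveSiteReader {

    /**
     * Reads every <property><name/><value/></property> entry.
     * If a name appears more than once, this helper keeps the last value.
     */
    static Map<String, String> readProperties(InputStream in) {
        try {
            Document doc = DocumentBuilderFactory.newInstance().newDocumentBuilder().parse(in);
            NodeList props = doc.getElementsByTagName("property");
            Map<String, String> result = new LinkedHashMap<>();
            for (int i = 0; i < props.getLength(); i++) {
                Element property = (Element) props.item(i);
                String name = childText(property, "name");
                if (name != null) {
                    result.put(name, childText(property, "value"));
                }
            }
            return result;
        } catch (Exception e) {
            // Malformed XML (for example an unescaped '&') ends up here.
            throw new IllegalArgumentException("hive-site.xml could not be parsed", e);
        }
    }

    /** Returns the trimmed text of the first child element with the given tag, or null. */
    private static String childText(Element parent, String tag) {
        NodeList nodes = parent.getElementsByTagName(tag);
        return nodes.getLength() == 0 ? null : nodes.item(0).getTextContent().trim();
    }

    public static void main(String[] args) throws Exception {
        // Assumed location, taken from the paths used in this article.
        String path = args.length > 0 ? args[0] : "/export/server/hive/conf/hive-site.xml";
        try (InputStream in = Files.newInputStream(Paths.get(path))) {
            System.out.println("hive.metastore.uris = " + readProperties(in).get("hive.metastore.uris"));
        }
    }
}
```

Running it against the file above should report the `thrift://node3:9083` URI; a parse failure usually points at an unescaped character or an unclosed tag.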

2. Start the metastore service

nohup /export/server/hive/bin/hive --service metastore &
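Because the metastore is started in the background with nohup, a startup failure is easy to miss. A minimal sketch of a reachability check follows, assuming the `thrift://node3:9083` URI configured above; `MetastoreProbe` is a hypothetical helper name. It only tests that the TCP port accepts connections and does not speak the Thrift protocol.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;
import java.net.URI;

/** Hypothetical helper: checks whether the host and port of a thrift:// URI accept TCP connections. */
class MetastoreProbe {

    /** Returns true if a TCP connection succeeds within timeoutMillis, false otherwise. */
    static boolean isReachable(String thriftUri, int timeoutMillis) {
        URI uri = URI.create(thriftUri);
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(uri.getHost(), uri.getPort()), timeoutMillis);
            return true;
        } catch (IOException e) {
            // Connection refused, timeout, or unknown host.
            return false;
        }
    }

    public static void main(String[] args) {
        String uri = args.length > 0 ? args[0] : "thrift://node3:9083";
        System.out.println(uri + " reachable: " + isReachable(uri, 3000));
    }
}
```

If the check reports false, the nohup.out file in the directory where the command was run is the first place to look.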

3. Edit flink/conf/sql-client-defaults.yaml

    catalogs:
      - name: myhive
        type: hive
        hive-conf-dir: /export/server/hive/conf
        default-database: default

4. Distribute the changes to the other nodes

5. Start the Flink cluster

/export/server/flink/bin/start-cluster.sh

6. Start the Flink SQL client on the node where Hive is installed

/export/server/flink/bin/sql-client.sh embedded

7. Run SQL:

    show catalogs;
    use catalog myhive;
    show tables;
    select * from person;

Flink SQL and Hive Integration: Code

https://ci.apache.org/projects/flink/flink-docs-release-1.12/dev/table/connectors/hive/

    package cn.itcast.feature;

    import org.apache.flink.table.api.EnvironmentSettings;
    import org.apache.flink.table.api.TableEnvironment;
    import org.apache.flink.table.api.TableResult;
    import org.apache.flink.table.catalog.hive.HiveCatalog;

    /**
     * Author itcast
     * Desc Registers a HiveCatalog and writes to a Hive table with Flink SQL
     */
    public class HiveDemo {
        public static void main(String[] args) throws Exception {
            // 0. env
            EnvironmentSettings settings = EnvironmentSettings.newInstance().useBlinkPlanner().build();
            TableEnvironment tableEnv = TableEnvironment.create(settings);

            // Hive settings; hiveConfDir must contain hive-site.xml
            String name = "myhive";
            String defaultDatabase = "default";
            String hiveConfDir = "./conf";

            // Create the HiveCatalog from these settings
            HiveCatalog hive = new HiveCatalog(name, defaultDatabase, hiveConfDir);

            // Register the catalog
            tableEnv.registerCatalog("myhive", hive);

            // Use the registered catalog
            tableEnv.useCatalog("myhive");

            // Write data into the Hive table
            String insertSQL = "insert into person select * from person";
            TableResult result = tableEnv.executeSql(insertSQL);

            // getJobStatus() returns a CompletableFuture, so wait for its value before printing
            System.out.println(result.getJobClient().get().getJobStatus().get());
        }
    }
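The demo passes the relative path `./conf` as the Hive configuration directory, so it is resolved against whatever directory the program is launched from. The sketch below is one way to fail early with a clear message when hive-site.xml is not where the program expects it; `HiveConfDirCheck` and `requireHiveSite` are hypothetical names for this example and use only the JDK.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;

/** Hypothetical helper: verifies that a Hive conf directory contains hive-site.xml. */
class HiveConfDirCheck {

    /**
     * Resolves hiveConfDir against the current working directory and returns the
     * absolute path of the hive-site.xml inside it, or throws if the file is missing.
     */
    static Path requireHiveSite(String hiveConfDir) {
        Path site = Paths.get(hiveConfDir).toAbsolutePath().normalize().resolve("hive-site.xml");
        if (!Files.isRegularFile(site)) {
            throw new IllegalStateException("hive-site.xml not found at " + site);
        }
        return site;
    }

    public static void main(String[] args) {
        // "./conf" mirrors the value used in HiveDemo.
        String dir = args.length > 0 ? args[0] : "./conf";
        System.out.println("Using " + requireHiveSite(dir));
    }
}
```

Calling `requireHiveSite(hiveConfDir)` just before constructing the catalog would turn a missing file into an error that names the resolved absolute path.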
