Scraping novel chapters with Python multithreading


Goal

Scrape the text of a novel and save it locally as .txt files that can be opened and read.

URL

Website

Python tools used

requests

requests is a third-party Python library. The script uses it to send HTTP requests and retrieve the content returned by the server.

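```python
import requests

# headers must be passed by keyword: the second positional argument of
# requests.get() is params (query-string parameters), not headers
res = requests.get(url, headers=headers)
print(res.text)
```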
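The scraping functions in this article set `response.encoding = 'utf-8'` before reading `response.text`. When a server sends `text/html` without a `charset`, requests typically falls back to ISO-8859-1, and Chinese text comes out garbled. A minimal offline sketch of that effect using plain `bytes.decode`; `decode_page` and the sample chapter title are hypothetical:

```python
def decode_page(raw: bytes, encoding: str) -> str:
    """Decode raw response bytes the way response.text does once an encoding is chosen."""
    return raw.decode(encoding, errors="replace")


# Hypothetical chapter title, as the UTF-8 bytes a server would send
raw = "第一章 开端".encode("utf-8")

garbled = decode_page(raw, "iso-8859-1")  # fallback when no charset is declared
correct = decode_page(raw, "utf-8")       # what response.encoding = 'utf-8' gives

print(garbled)
print(correct)
```

Because ISO-8859-1 maps every byte to a character, the garbled string can still be turned back into the original bytes, but setting the right encoding up front avoids that detour.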

re

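Regular expressions. re is a module in Python's standard library (not a third-party library, so nothing needs to be installed); here it pulls specific strings, such as chapter links and titles, out of the page HTML.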

```python
import re

# Non-greedy (.*?) so each group stops at the first closing quote / tag
rres = re.findall("<dd><a href='/1_1509/(.*?)'>(.*?)</a></dd>", response.text)
```
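`.*` is greedy: if several `<dd>` entries sit on the same line of HTML, the first group swallows everything up to the last `'>` and two chapters collapse into one bogus match. The non-greedy `.*?` stops at the earliest point where the rest of the pattern matches. With two groups, `re.findall` returns a list of tuples. A minimal sketch on a made-up one-line snippet:

```python
import re

# Hypothetical table-of-contents HTML with two chapter links on one line
html = (
    "<dd><a href='/1_1509/1.html'>第一章</a></dd>"
    "<dd><a href='/1_1509/2.html'>第二章</a></dd>"
)

greedy = re.findall("<dd><a href='/1_1509/(.*)'>(.*)</a></dd>", html)
non_greedy = re.findall("<dd><a href='/1_1509/(.*?)'>(.*?)</a></dd>", html)

print(len(greedy))  # 1: both links merged into a single match
print(non_greedy)   # [('1.html', '第一章'), ('2.html', '第二章')]
```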

ThreadPoolExecutor

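Downloads the chapters concurrently using multiple threads (ThreadPoolExecutor is part of the standard library's concurrent.futures module).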
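Scraping spends most of its time waiting on the network, and a thread blocked on I/O releases the GIL, so other threads can run in the meantime. A minimal offline sketch of the idea; `fake_fetch` is a hypothetical stand-in for a request, and the pool size of 8 is an arbitrary choice:

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_fetch(chapter_no: int) -> str:
    """Hypothetical stand-in for an HTTP request: wait, then return text."""
    time.sleep(0.1)  # simulate network latency
    return f"content of chapter {chapter_no}"


chapters = list(range(1, 21))

start = time.perf_counter()
with ThreadPoolExecutor(max_workers=8) as pool:
    # map() yields results in input order, not in completion order
    results = list(pool.map(fake_fetch, chapters))
elapsed = time.perf_counter() - start

print(results[0])  # content of chapter 1
print(f"{len(results)} chapters in {elapsed:.2f}s")
```

Twenty sequential calls would take about 2 seconds; with 8 workers they finish in roughly three rounds of 0.1 seconds. Because `map` preserves input order, the results can also be written out in chapter order.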

Steps for scraping the novel

1. Open the novel's table-of-contents page and collect the address and title of every chapter.

```python
import re

import requests

headers = {
    'user-agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/81.0.4044.138 Safari/537.36 Edg/81.0.416.72',
}


def get_pageUrlName(url):
    """
    :param url: address of the novel's table-of-contents page, ending in '/'
    :return: list of dicts holding each chapter's URL ('pageUrl') and title ('pageName')
    """
    response = requests.get(url, headers=headers)
    response.encoding = 'utf-8'
    # Chapter links look like <dd><a href='/1_1509/123.html'>Title</a></dd>;
    # [^/']+ matches whatever book id appears in place of 1_1509
    dit = re.findall("<dd><a href='/[^/']+/(.*?)'>(.*?)</a></dd>", response.text)
    pageUrlName_list = []
    for urlName, urlTitle in dit:
        pageUrlName_list.append({'pageUrl': url + urlName, 'pageName': urlTitle})
    return pageUrlName_list
```
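`get_pageUrlName` builds each chapter URL by appending the captured file name to the book URL, which only works when the book URL ends in exactly the id that appears in the href. Since the href is root-relative (it starts with `/`), `urllib.parse.urljoin` can resolve it against the page URL instead. A minimal offline sketch; `parse_chapter_list`, the sample HTML, and the example.com domain are hypothetical, and the page is passed in as a string so no request is made:

```python
import re
from urllib.parse import urljoin

# Captures the root-relative href and the title; [^/']+ stands for any book id
CHAPTER_RE = re.compile(r"<dd><a href='(/[^/']+/[^']*)'>(.*?)</a></dd>")


def parse_chapter_list(html, base_url):
    """Hypothetical offline helper: turn table-of-contents HTML into chapter dicts."""
    return [
        {"pageUrl": urljoin(base_url, href), "pageName": title}
        for href, title in CHAPTER_RE.findall(html)
    ]


# Made-up HTML and domain, for illustration only
toc_html = """
<dd><a href='/1_1509/1.html'>第一章</a></dd>
<dd><a href='/1_1509/2.html'>第二章</a></dd>
"""
chapters = parse_chapter_list(toc_html, "http://example.com/1_1509/")
print(chapters[0])  # {'pageUrl': 'http://example.com/1_1509/1.html', 'pageName': '第一章'}
```

Separating parsing from fetching this way also makes the regex easy to check against a saved copy of the page.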

2. For each chapter URL and title, request the chapter page.

3. Extract the text of the current chapter.

4. Write it to a file under the specified path.

def get_content(pageUrl, pageName):

'获取指定页面下的内容并进行保存到文件中'

content_regx = ' (.*)<br />'

#

response = requests.get(pageUrl, headers=headers)

response.encoding = 'utf-8'

contentlist = re.findall(content_regx, response.text)

content = '\n'.join(contentlist)

with open(f"D:\File\小说爬取器\小说名/{pageName}" + '.txt', mode='w', encoding='utf-8') as f:

f.write(pageName)

f.write('\n')

f.write(content)

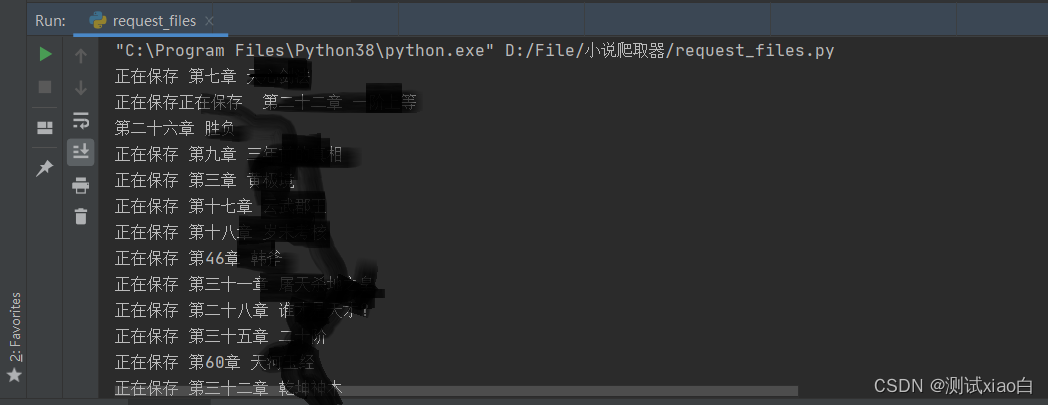

print('正在保存', pageName)
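`get_content` names each file after the chapter title and writes it into a fixed folder. Two things can make `open()` fail there: the folder must already exist, and chapter titles may contain characters that Windows forbids in file names (`\ / : * ? " < > |`). A minimal sketch of hypothetical `safe_filename` and `save_chapter` helpers that handle both with pathlib; the helper names and the underscore replacement are assumptions, not part of the original script:

```python
import re
import tempfile
from pathlib import Path

# Characters that Windows does not allow in file names
INVALID_CHARS = re.compile(r'[\\/:*?"<>|]')


def safe_filename(title):
    """Replace characters that are illegal in Windows file names with '_'."""
    return INVALID_CHARS.sub("_", title).strip() or "untitled"


def save_chapter(title, paragraphs, out_dir):
    """Hypothetical helper: write title + paragraphs to <out_dir>/<safe title>.txt."""
    out_dir.mkdir(parents=True, exist_ok=True)  # create the folder if it is missing
    path = out_dir / (safe_filename(title) + ".txt")
    path.write_text(title + "\n" + "\n".join(paragraphs), encoding="utf-8")
    return path


with tempfile.TemporaryDirectory() as tmp:
    # A made-up title containing characters Windows rejects
    saved = save_chapter('第1章 "开端"?', ["第一段。", "第二段。"], Path(tmp) / "novel")
    name = saved.name
    text = saved.read_text(encoding="utf-8")

print(name)  # 第1章 _开端__.txt
```

The original title is still written as the first line of the file, so only the file name is altered.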

Full source code

```python
import os
import re
from concurrent.futures import ThreadPoolExecutor

import requests

headers = {
    'user-agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/81.0.4044.138 Safari/537.36 Edg/81.0.416.72',
}

# Folder for the chapter files (raw string: backslashes are not escapes)
SAVE_DIR = r"D:\File\小说爬取器\小说名"


def get_pageUrlName(url):
    """
    :param url: address of the novel's table-of-contents page, ending in '/'
    :return: list of dicts holding each chapter's URL ('pageUrl') and title ('pageName')
    """
    # timeout so a stalled connection does not hang the script forever
    response = requests.get(url, headers=headers, timeout=10)
    response.encoding = 'utf-8'
    # [^/']+ matches the book id (e.g. 1_1509), so any book on the site works
    dit = re.findall("<dd><a href='/[^/']+/(.*?)'>(.*?)</a></dd>", response.text)
    return [{'pageUrl': url + urlName, 'pageName': urlTitle} for urlName, urlTitle in dit]


def get_content(pageUrl, pageName):
    """Download one chapter and save it as its own text file."""
    content_regx = ' (.*?)<br />'
    response = requests.get(pageUrl, headers=headers, timeout=10)
    # Decode the response body as UTF-8
    response.encoding = 'utf-8'
    contentlist = re.findall(content_regx, response.text)
    content = '\n'.join(contentlist)
    with open(os.path.join(SAVE_DIR, pageName + '.txt'), mode='w', encoding='utf-8') as f:
        f.write(pageName)
        f.write('\n')
        f.write(content)
    print('Saving', pageName)


if __name__ == '__main__':
    book_url = 'https://{novel-site}/{novel-id}/'
    # Example: http://wujixsw.org/1_1762/ (the site's address followed by the novel id)
    chapters = get_pageUrlName(book_url)
    os.makedirs(SAVE_DIR, exist_ok=True)  # create the output folder if missing

    # Download the chapters concurrently with a bounded pool (16 threads, an
    # arbitrary cap) instead of one thread per chapter; max_workers=len(...)
    # would start thousands of threads for a long novel and fails when it is 0
    with ThreadPoolExecutor(max_workers=16) as exe:
        futures = [exe.submit(get_content, item['pageUrl'], item['pageName'])
                   for item in chapters]
        for future in futures:
            future.result()  # re-raise any error from a worker thread
```
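If the `Future` objects returned by `exe.submit(...)` are never inspected, an exception raised inside the task (a timeout, a 404, a bad file name) is stored in the future and never shown, so missing chapters go unnoticed. One way to report failures while letting the other downloads finish is to keep the futures and read them with `as_completed`. A minimal sketch with a hypothetical `fake_download` task in place of real requests:

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def fake_download(chapter_no: int) -> str:
    """Hypothetical task: chapter 3 fails, as a timed-out request might."""
    if chapter_no == 3:
        raise ConnectionError(f"chapter {chapter_no}: request failed")
    return f"chapter {chapter_no} saved"


saved, failed = [], []
with ThreadPoolExecutor(max_workers=4) as pool:
    # Map each future back to its chapter number for error reporting
    futures = {pool.submit(fake_download, n): n for n in range(1, 6)}
    for future in as_completed(futures):
        try:
            saved.append(future.result())  # re-raises the task's exception, if any
        except ConnectionError as exc:
            failed.append((futures[future], str(exc)))

print(sorted(saved))
print(failed)  # [(3, 'chapter 3: request failed')]
```

The list of failed chapter numbers can then be retried in a second pass.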

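This article showed how to use Python's requests library and re module together with ThreadPoolExecutor to scrape a novel's chapters concurrently from a website and save them locally as text files.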

