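1. Connecting to external systems

- Streaming: message queues

- Non-streaming: Elasticsearch, JDBC

- If no ready-made connector exists, implement a custom sink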

2. Writing to files

StreamingFileSink<String> streamingFileSink = StreamingFileSink.<String>forRowFormat(new Path(".\\output"),

new SimpleStringEncoder<>("UTF-8"))

.withRollingPolicy(DefaultRollingPolicy.builder()

.withMaxPartSize(1024 * 1024 * 1024).withRolloverInterval(TimeUnit.MINUTES.toMillis(15))

.withInactivityInterval(TimeUnit.MINUTES.toMillis(15)).build()

) // Rolling policy: start a new part file once the size or time limit is exceeded

.build();

stream.map(data -> data.toString()).addSink(streamingFileSink);
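
To make the rolling policy more concrete, the decision can be modeled in plain Java. This is a simplified sketch, not Flink's `DefaultRollingPolicy` implementation: the class `RollingDecision` and its parameter names are hypothetical, and the exact comparisons Flink performs may differ.

```java
import java.util.concurrent.TimeUnit;

// Hypothetical, simplified model of a size/time-based rolling decision.
// It is NOT Flink's DefaultRollingPolicy; it only mirrors the three thresholds configured above.
final class RollingDecision {
    private final long maxPartSizeBytes;
    private final long rolloverIntervalMs;
    private final long inactivityIntervalMs;

    RollingDecision(long maxPartSizeBytes, long rolloverIntervalMs, long inactivityIntervalMs) {
        this.maxPartSizeBytes = maxPartSizeBytes;
        this.rolloverIntervalMs = rolloverIntervalMs;
        this.inactivityIntervalMs = inactivityIntervalMs;
    }

    // Returns true when the in-progress part file should be closed and a new one started.
    boolean shouldRoll(long partSizeBytes, long createdAtMs, long lastWriteMs, long nowMs) {
        boolean tooLarge = partSizeBytes >= maxPartSizeBytes;
        boolean tooOld = nowMs - createdAtMs >= rolloverIntervalMs;
        boolean idle = nowMs - lastWriteMs >= inactivityIntervalMs;
        return tooLarge || tooOld || idle;
    }
}

final class RollingDecisionDemo {
    public static void main(String[] args) {
        long quarterHour = TimeUnit.MINUTES.toMillis(15);
        RollingDecision policy = new RollingDecision(1024L * 1024 * 1024, quarterHour, quarterHour);
        // A 10 MB file created and last written one minute ago: keep writing to it.
        System.out.println(policy.shouldRoll(10L * 1024 * 1024, 0, 0, TimeUnit.MINUTES.toMillis(1)));
        // The same file 15 minutes after creation: the age threshold is reached, so roll over.
        System.out.println(policy.shouldRoll(10L * 1024 * 1024, 0, quarterHour - 1, quarterHour));
    }
}
```

Any one of the three conditions is enough to trigger a new part file, which is why a busy stream tends to roll on size while a quiet one rolls on time.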

3. Writing to Kafka

Both the input and the output are Kafka topics; Flink serves as the ETL tool in between.

# Producer

kafka-console-producer.sh --broker-list node00:9092 --topic clicks

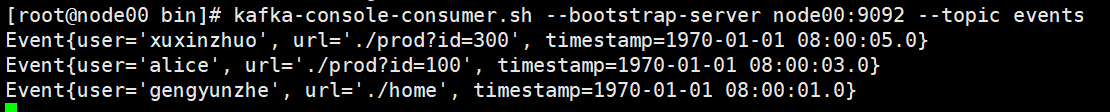

# Consumer

kafka-console-consumer.sh --bootstrap-server node00:9092 --topic events

public class SinkToKafka {

public static void main(String[] args) throws Exception {

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

env.setParallelism(1);

// 1. Read from Kafka

Properties properties = new Properties();

properties.setProperty("bootstrap.servers","node00:9092");

DataStreamSource<String> kafkaStream = env.addSource(new FlinkKafkaConsumer<String>("clicks", new SimpleStringSchema(), properties));

// 2. Transform the records with Flink

SingleOutputStreamOperator<String> result = kafkaStream.map(new MapFunction<String, String>() {

@Override

public String map(String s) throws Exception {

String[] fields = s.split(",");

return new Event(fields[0].trim(), fields[1].trim(), Long.valueOf(fields[2].trim())).toString();

}

});

// 3. Sink to Kafka

result.addSink(new FlinkKafkaProducer<String>("192.168.10.132:9092","events",new SimpleStringSchema()));

env.execute();

}

}
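
The `map` step above throws on any malformed line (too few fields, or a non-numeric timestamp), and an uncaught exception there fails the job. A more defensive parse could look like the sketch below. `ClickEvent` is a hypothetical stand-in for the `Event` class, whose source is not shown in this post, and `ClickParser` is likewise an illustrative name.

```java
import java.util.Optional;

// Hypothetical stand-in for the Event class used above (its source is not shown in this post).
final class ClickEvent {
    final String user;
    final String url;
    final long timestamp;

    ClickEvent(String user, String url, long timestamp) {
        this.user = user;
        this.url = url;
        this.timestamp = timestamp;
    }

    @Override
    public String toString() {
        return "ClickEvent{user='" + user + "', url='" + url + "', timestamp=" + timestamp + "}";
    }
}

final class ClickParser {
    // Parses "user, url, timestamp"; returns Optional.empty() for malformed lines instead of throwing.
    // Assumes that neither the user nor the URL contains a comma.
    static Optional<ClickEvent> parse(String line) {
        if (line == null) {
            return Optional.empty();
        }
        String[] fields = line.split(",");
        if (fields.length != 3) {
            return Optional.empty();
        }
        try {
            long timestamp = Long.parseLong(fields[2].trim());
            return Optional.of(new ClickEvent(fields[0].trim(), fields[1].trim(), timestamp));
        } catch (NumberFormatException e) {
            return Optional.empty();
        }
    }
}
```

Inside the job, one option is to call such a parser from a `flatMap` and emit only the events that parsed successfully, so a single bad record typed into the console producer does not stop the pipeline.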

4. Writing to Redis

5. Writing to Elasticsearch

6. Writing to MySQL (JDBC)

result.addSink(JdbcSink.sink("INSERT INTO clicks (user, url) values (?, ?)",

((statement, event) -> {

// Only bind the parameters here; JdbcSink batches and executes the statement itself

statement.setString(1, event.user);

statement.setString(2, event.url);

}),

new JdbcConnectionOptions.JdbcConnectionOptionsBuilder()

.withUrl("jdbc:mysql://192.168.10.134:3306/flink_study")

.withDriverName("com.mysql.jdbc.Driver")

.withUsername("root")

.withPassword("123456")

.build()

));
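
The lambda passed to `JdbcSink.sink` is only responsible for binding parameters; the sink decides when rows are actually sent to the database and can group them into batches. The plain-Java sketch below illustrates count-based batching in isolation. `BatchBuffer` and its flusher callback are hypothetical names used for illustration and are not part of the Flink JDBC connector.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;

// Hypothetical illustration of count-based batching; not the Flink JDBC connector's code.
final class BatchBuffer<T> {
    private final int batchSize;
    private final Consumer<List<T>> flusher;
    private final List<T> pending = new ArrayList<>();

    BatchBuffer(int batchSize, Consumer<List<T>> flusher) {
        if (batchSize <= 0) {
            throw new IllegalArgumentException("batchSize must be positive");
        }
        this.batchSize = batchSize;
        this.flusher = flusher;
    }

    // Buffers one record and flushes automatically once the batch is full.
    void add(T record) {
        pending.add(record);
        if (pending.size() >= batchSize) {
            flush();
        }
    }

    // Hands a copy of the buffered records to the flusher and clears the buffer.
    void flush() {
        if (pending.isEmpty()) {
            return;
        }
        flusher.accept(new ArrayList<>(pending));
        pending.clear();
    }
}

final class BatchBufferDemo {
    public static void main(String[] args) {
        BatchBuffer<String> buffer =
                new BatchBuffer<>(2, batch -> System.out.println("writing batch " + batch));
        for (String user : new String[] {"Mary", "Bob", "Alice"}) {
            buffer.add(user);
        }
        buffer.flush(); // write whatever is left when the stream ends
    }
}
```

Batching trades a little latency for far fewer round trips to the database, which is usually the right trade for an insert-heavy sink.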

7. Custom sink function

Read JSON from Kafka and write it to MySQL through a custom sink.

// 1. Read from Kafka

Properties properties = new Properties();

properties.setProperty("bootstrap.servers","node00:9092");

SingleOutputStreamOperator<com.shinho.chapter05_sink.Event> result = env.addSource(

new FlinkKafkaConsumer<String>("clicks", new SimpleStringSchema(), properties))

.map(new MapFunction<String, com.shinho.chapter05_sink.Event>() {

@Override

public com.shinho.chapter05_sink.Event map(String s) throws Exception {

Gson gson = new Gson();

System.out.println(s);

return gson.fromJson(s, com.shinho.chapter05_sink.Event.class);

}

});

// 2. Write to MySQL through the custom sink (MyMysqlSink, defined below)

result.addSink(new MyMysqlSink());

public class MyMysqlSink extends RichSinkFunction<Event> {

private PreparedStatement ps = null;

Connection connection = null;

String driver = "com.mysql.jdbc.Driver";

String url = "jdbc:mysql://127.0.0.1:3306/shinho";

String username = "root";

String password = "383240gyz";

@Override

public void open(Configuration parameters) throws Exception {

super.open(parameters);

// Open the connection

connection = getConn();

// Prepare the insert statement once so it can be reused for every record

ps = connection.prepareStatement("insert into clicks (user, url) values (?,?);");

}

private Connection getConn() {

try {

Class.forName(driver);

connection = DriverManager.getConnection(url, username, password);

} catch (Exception e) {

e.printStackTrace();

}

return connection;

}

@Override

public void close() throws Exception {

super.close();

// Close the statement before the connection that created it

if (ps != null) {

ps.close();

}

if (connection != null) {

connection.close();

}

}

@Override

public void invoke(Event value, Context context) throws Exception {

ps.setString(1,value.user);

ps.setString(2,value.url);

System.out.println("insert into clicks (user, url) values (" + value.user + ", " + value.url + ")");

ps.executeUpdate();

}

}
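
A `RichSinkFunction` follows an open, invoke-per-record, close lifecycle, and JDBC resources are conventionally released in the reverse order of acquisition (statement first, then connection). The sketch below shows that ordering with try-with-resources in plain Java. `TrackedResource` and `LifecycleSketch` are hypothetical names, and no database is involved.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical resource that records when it is opened and closed.
final class TrackedResource implements AutoCloseable {
    private final String name;
    private final List<String> log;

    TrackedResource(String name, List<String> log) {
        this.name = name;
        this.log = log;
        log.add("open " + name);
    }

    @Override
    public void close() {
        log.add("close " + name);
    }
}

final class LifecycleSketch {
    // Mimics open -> invoke per record -> close and returns the order of events.
    static List<String> run(List<String> records) {
        List<String> log = new ArrayList<>();
        // try-with-resources closes resources in reverse declaration order:
        // the statement is closed first, then the connection.
        try (TrackedResource connection = new TrackedResource("connection", log);
             TrackedResource statement = new TrackedResource("statement", log)) {
            for (String record : records) {
                log.add("invoke " + record);
            }
        }
        return log;
    }

    public static void main(String[] args) {
        run(List.of("Mary", "Bob")).forEach(System.out::println);
    }
}
```

In a real sink the resources live across many `invoke` calls, so they are opened in `open()` and released in `close()` rather than in a single try block; the ordering is the part that carries over.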
