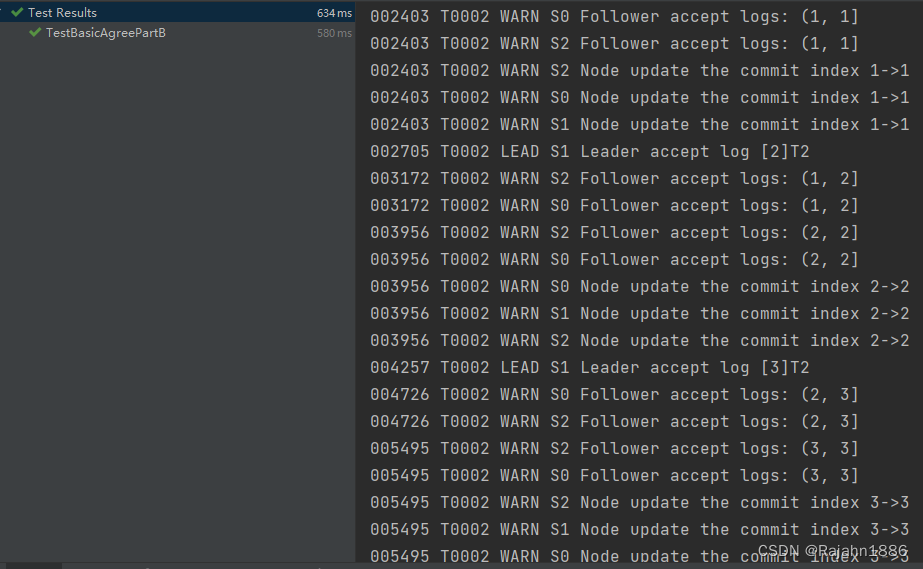

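In Log Distribution 1 we built the basic logic of AppendEntries and AppendEntriesCommit; a few details still need adjusting.

In AppendEntries, remove the original logic in which the Follower updates its commit record from the Leader's CommitIndex. In AppendEntriesCommit, use the per-entry receipt count recorded in the map to decide whether the entry has reached consensus in the cluster.

Add a call to applyCond.Signal to notify the log application state machine.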

    // handle LeaderCommit (removed: the Follower no longer copies the Leader's commit index)
    //if args.LeaderCommit > rf.commitIndex {
    //    LOG(rf.me, rf.currentTerm, DWarn, "Follower update the commit index %d->%d", rf.commitIndex, args.LeaderCommit)
    //    rf.commitIndex = args.LeaderCommit
    //    rf.applyCond.Signal()
    //}

    // commit once enough peers have received the entry identified by key
    if rf.m[key] >= len(rf.peers)/2 && key.Index > rf.commitIndex {
        // log before assigning, otherwise both values printed are the new index
        LOG(rf.me, rf.currentTerm, DWarn, "Node update the commit index %d->%d", rf.commitIndex, key.Index)
        rf.commitIndex = key.Index
        rf.applyCond.Signal()
    }

Log application state machine

    // raft_application.go
    package raft

    func (rf *Raft) applicationTicker() {
        for !rf.killed() {
            rf.mu.Lock()
            rf.applyCond.Wait()

            // starting from lastApplied+1, collect every entry up to the
            // currently known commitIndex that still needs to be applied
            entries := make([]LogEntry, 0)
            for i := rf.lastApplied + 1; i <= rf.commitIndex; i++ {
                entries = append(entries, rf.log[i])
            }
            rf.mu.Unlock()

            // with the lock released, build one ApplyMsg per entry and send it to applyCh
            for i, entry := range entries {
                rf.applyCh <- ApplyMsg{
                    CommandValid: entry.CommandValid,
                    Command:      entry.Command,
                    // lastApplied is only advanced after this loop, so the offset stays valid
                    CommandIndex: rf.lastApplied + 1 + i,
                }
            }

            // once everything has been applied, advance lastApplied
            rf.mu.Lock()
            LOG(rf.me, rf.currentTerm, DApply, "Apply log for [%d, %d]", rf.lastApplied+1, rf.lastApplied+len(entries))
            rf.lastApplied += len(entries)
            rf.mu.Unlock()
        }
    }

Remove the code with which the Leader updates its CommitIndex; the Leader now uses the same update path as the Follower.

    // update the commitIndex
    //majorityMatched := rf.getMajorityIndexLocked()
    //if majorityMatched > rf.commitIndex && rf.log[majorityMatched].Term == rf.currentTerm {
    //    LOG(rf.me, rf.currentTerm, DApply, "Leader update the commit index %d->%d", rf.commitIndex, majorityMatched)
    //    rf.commitIndex = majorityMatched
    //    rf.applyCond.Signal()
    //}

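This article describes, for a Raft implementation, removing the mechanism by which the Leader's CommitIndex drives the Follower's CommitIndex and instead letting each node decide and update it from the number of log receipts recorded; it also covers how the log application state machine works.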
