Reposted from: https://blog.csdn.net/qq547276542/article/details/78251779

Reposted from: https://blog.csdn.net/lanyanchenxi/article/details/50448640

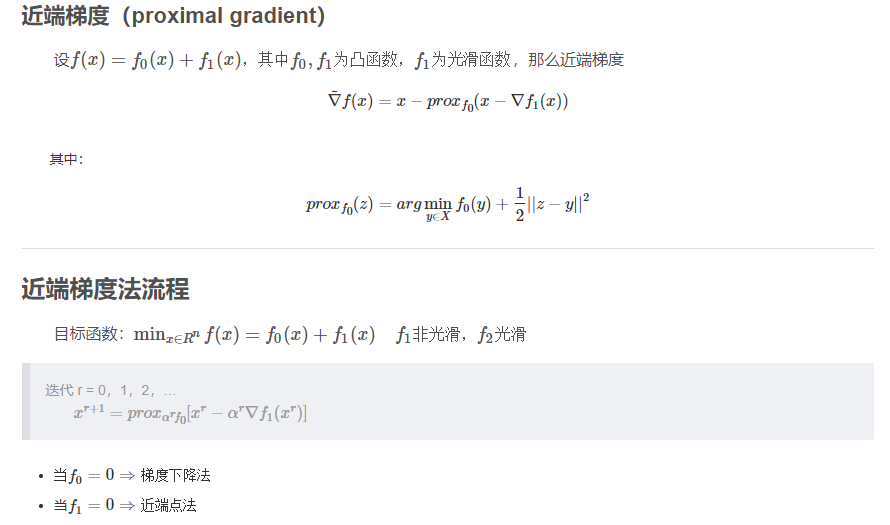

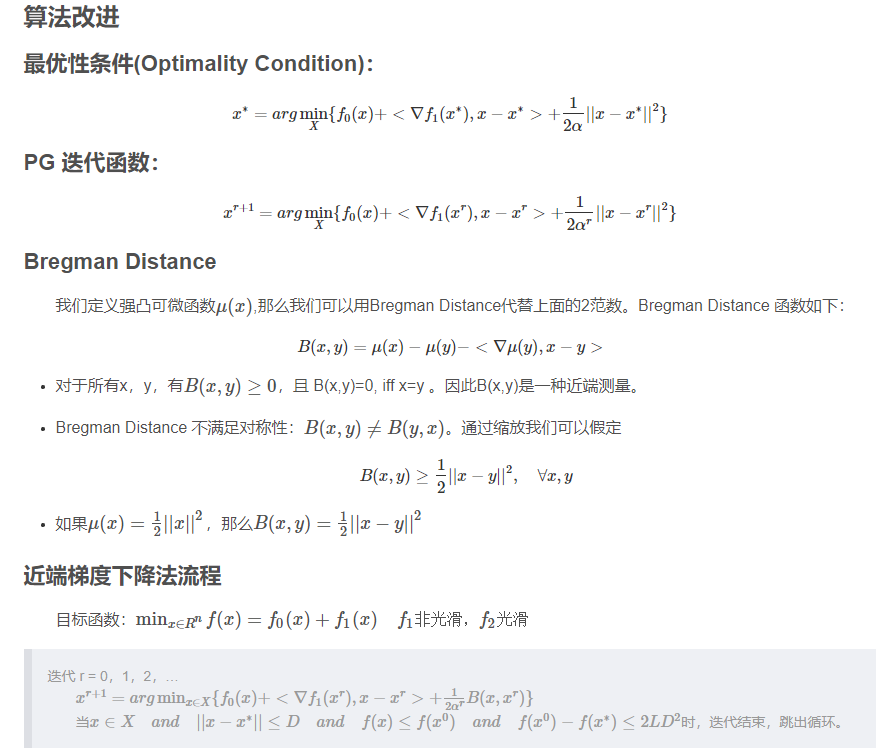

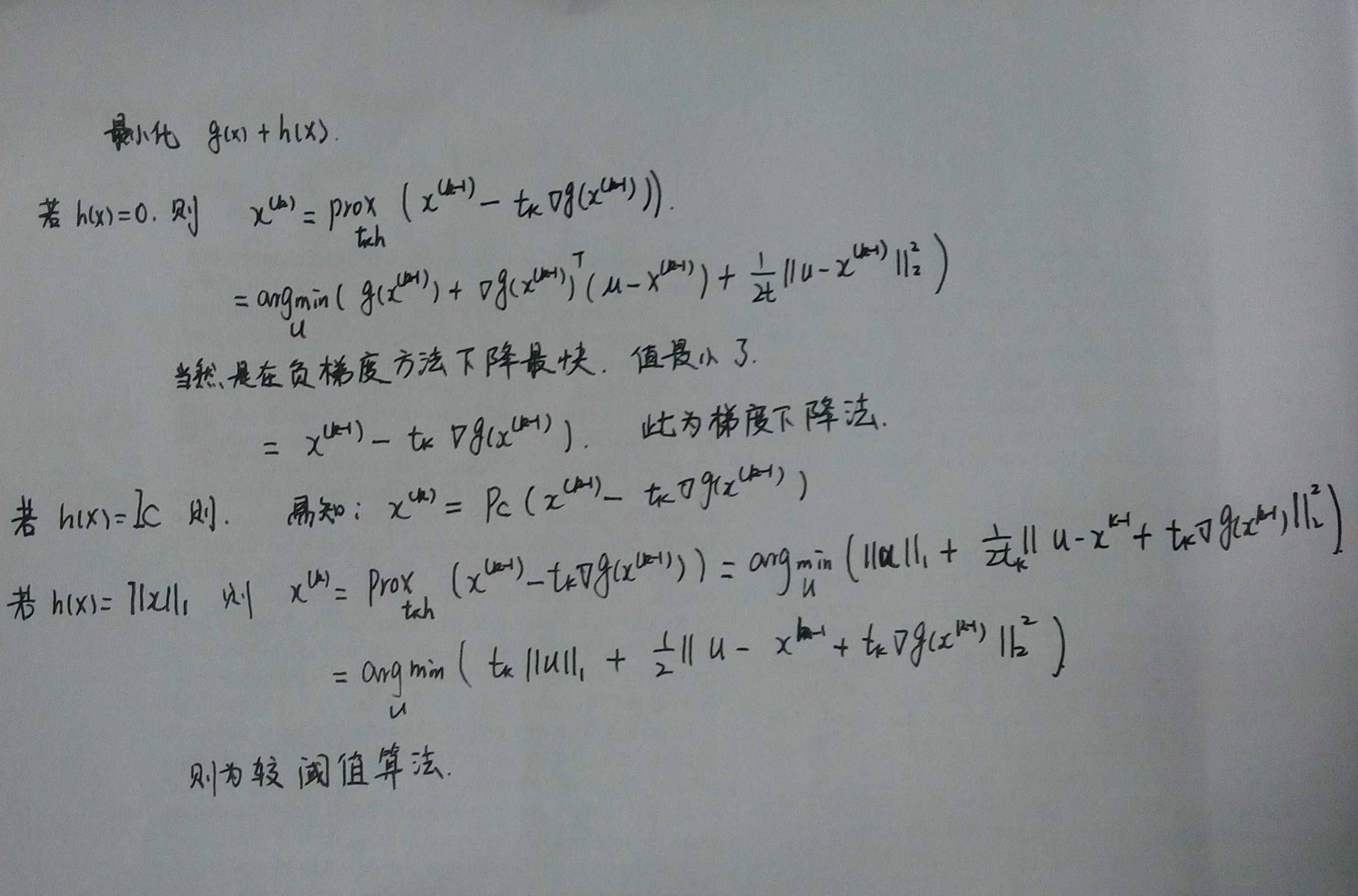

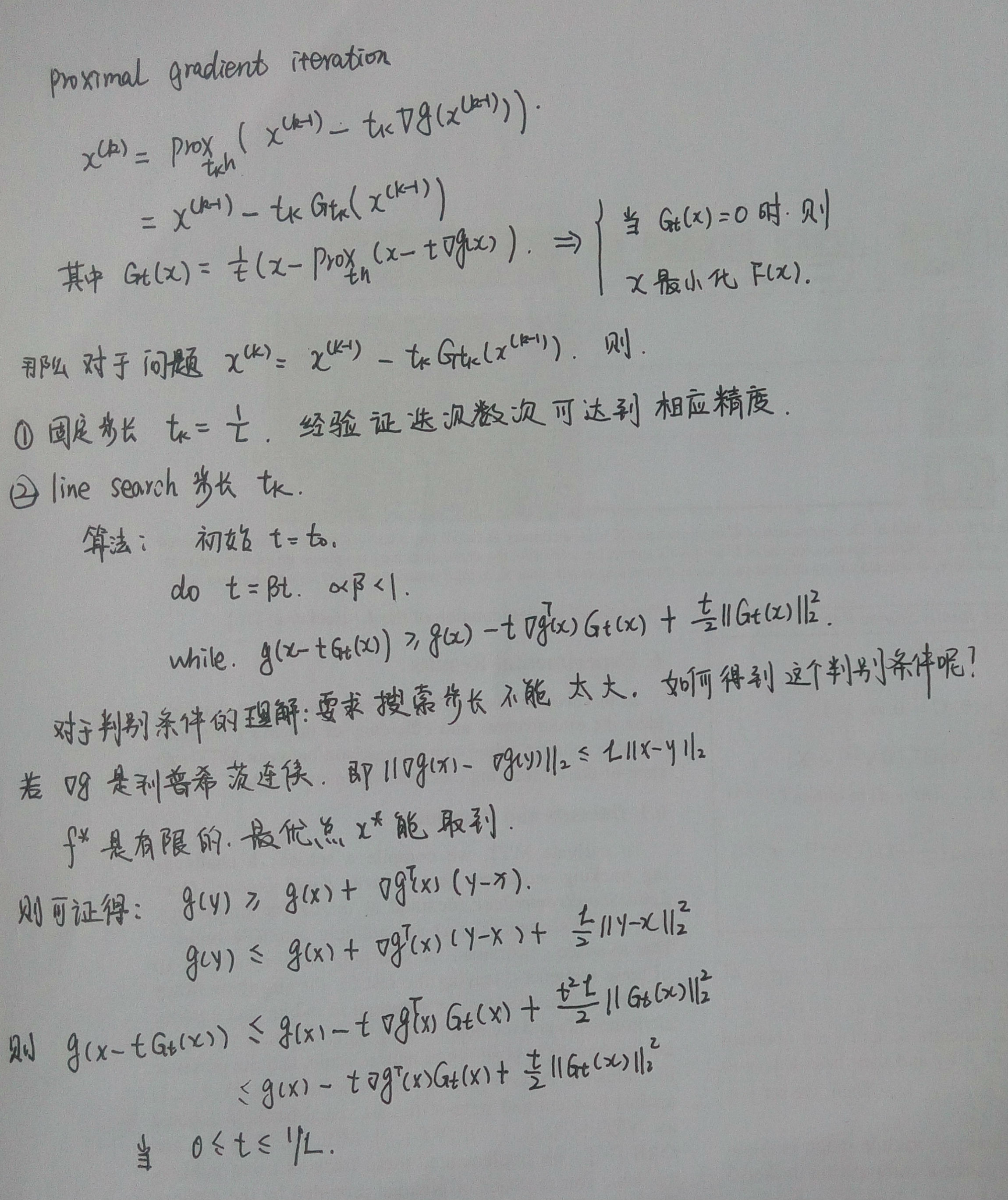

3) The proximal gradient algorithm:

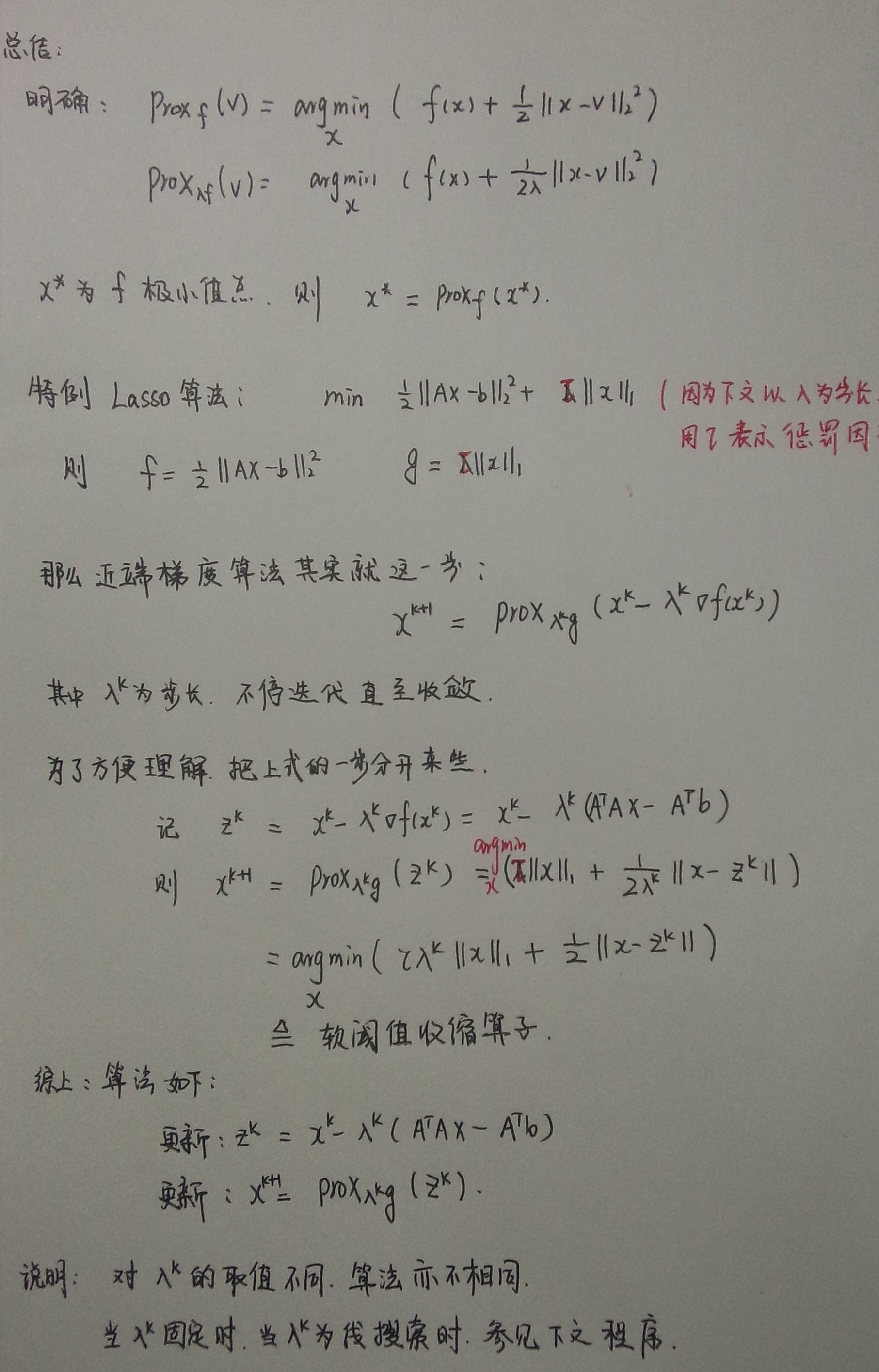

3: Summary

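The code below is adapted from the code provided in the reference listed at the end. As written, it is the proximal gradient algorithm with a fixed step size. A simple change, uncommenting the `while` block, turns it into a version that determines the step size by line search.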
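For reference, the update and the step-size test used by the MATLAB code in this post can be written out as follows. This is only a transcription of the code's expressions, with $f(x)=\tfrac12\|Ax-b\|_2^2$, step size $\lambda$, regularization weight $\gamma$ and shrink factor $\beta$ named as in the code. The symbol $S_\tau$ for soft-thresholding is notation assumed here.

$$
\begin{aligned}
\nabla f(x^k) &= A^{\top}(Ax^k - b),\\
z &= S_{\lambda\gamma}\bigl(x^k - \lambda\,\nabla f(x^k)\bigr),
\qquad [S_\tau(v)]_i = \operatorname{sign}(v_i)\,\max(|v_i| - \tau,\ 0),\\
\text{accept } z \text{ if}\quad f(z) &\le f(x^k) + \nabla f(x^k)^{\top}(z - x^k) + \frac{1}{2\lambda}\,\|z - x^k\|_2^2,
\quad\text{otherwise set } \lambda \leftarrow \beta\lambda \text{ and recompute } z.
\end{aligned}
$$

In the fixed-step version the acceptance test is skipped and $x^{k+1}=z$ is taken directly.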

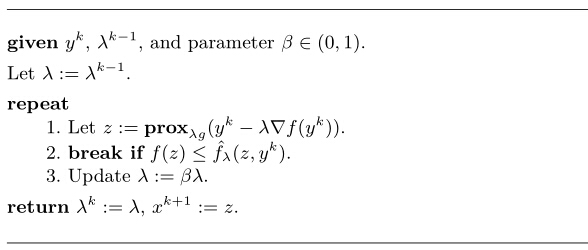

The algorithm, including the line search that determines the step size, is as follows:

```matlab
function [x] = proximalGradient(A, b, gamma)
% Solves  min_x  1/2*||A*x - b||_2^2 + gamma*||x||_1
% A: m-by-n, x: n-by-1

MAX_ITER = 400;
ABSTOL   = 1e-4;
RELTOL   = 1e-2;   % not used below

f = @(u) 0.5*norm(A*u - b)^2;   % smooth part, needed for the line search
lambda = 1;                     % step size
beta   = 0.5;                   % shrink factor for the line search
[~, n] = size(A);
x = zeros(n, 1);
xprev = x;
AtA = A'*A;
Atb = A'*b;

% A fixed step lambda <= 1/norm(A)^2 is sufficient for convergence;
% for larger norm(A), uncomment the while block to enable the line search.
for k = 1:MAX_ITER
    % while 1
        grad_x = AtA*x - Atb;
        z = soft_threshold(x - lambda*grad_x, lambda*gamma);   % update the iterate
    %     if f(z) <= f(x) + grad_x'*(z - x) + (1/(2*lambda))*(norm(z - x))^2
    %         break;
    %     end
    %     lambda = beta*lambda;
    % end
    xprev = x;
    x = z;

    % Stop when the objective value changes by less than ABSTOL.
    h.prox_optval(k) = objective(A, b, gamma, x, x);
    if k > 1 && abs(h.prox_optval(k) - h.prox_optval(k-1)) < ABSTOL
        break;
    end
end

h.x_prox = x;
h.p_prox = h.prox_optval(end);

% h.prox_grad_toc = toc;
%
% fprintf('Proximal gradient time elapsed: %.2f seconds.\n', h.prox_grad_toc);
% h.prox_iter = length(h.prox_optval);
% K = h.prox_iter;
% h.prox_optval = padarray(h.prox_optval', K-h.prox_iter, h.p_prox, 'post');
%
% plot( 1:K, h.prox_optval, 'r-');
% xlim([0 75]);

end

function p = objective(A, b, gamma, x, z)
    % Objective value: least-squares term plus l1 penalty.
    p = 0.5*(norm(A*x - b))^2 + gamma*norm(z, 1);
end

function [X] = soft_threshold(b, lambda)
    % Elementwise soft-thresholding (proximal operator of lambda*||.||_1).
    X = sign(b).*max(abs(b) - lambda, 0);
end
```
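A quick way to try the function is on a small synthetic sparse-recovery problem. The script below is only a sketch under stated assumptions: it assumes `proximalGradient` is saved as `proximalGradient.m` on the MATLAB path and runs as intended. The problem sizes, the noise level and the choice `gamma = 0.01*norm(A'*b, inf)` are illustrative values that do not come from the original post. `A` is rescaled so that `norm(A) = 1`, which keeps the fixed step `lambda = 1` within the range where the iteration converges.

```matlab
% Illustrative test problem (all sizes and constants are assumptions).
rng(0);                               % reproducible random numbers
m = 50;  n = 200;  k = 10;            % measurements, unknowns, nonzeros

x_true = zeros(n, 1);
x_true(randperm(n, k)) = randn(k, 1); % sparse ground truth

A = randn(m, n);
A = A / norm(A);                      % largest singular value becomes 1
b = A*x_true + 0.01*randn(m, 1);      % noisy measurements

% For gamma >= norm(A'*b, inf) the minimizer is x = 0, so pick a small
% fraction of that value to get a nontrivial sparse solution.
gamma = 0.01 * norm(A'*b, inf);

x = proximalGradient(A, b, gamma);

fprintf('nonzeros in x:      %d of %d\n', nnz(x), n);
fprintf('objective value:    %.6f\n', 0.5*norm(A*x - b)^2 + gamma*norm(x, 1));
fprintf('relative error:     %.3f\n', norm(x - x_true) / norm(x_true));
```

If `A` is not rescaled, the fixed step may be too large, and the line-search variant is the safer choice.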

Reference:

Proximal gradient method近端梯度算法
