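Anyone who needs it can copy the code straight into an editor to study, discuss, or improve it.

But do not use it for commercial purposes; otherwise you bear the consequences yourself!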

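Project overview

URL: https://3g.163.com/touch/news/?ver=c&clickfrom=index2018_header (NetEase News mobile site)

Required modules:

Standard library: time; json; multiprocessing

Third-party libraries: requests; lxml; pymysql

Data scraped: the title, source, publication date, body text, image URL, and validity flag of every news item within the requested page range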

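How it works:

- Download the NetEase News list data with the requests module

- Parse, with the json module, the JSON object that holds each news item's link to its body page; the title, source, publication date, and image URL can also be extracted directly at this stage

- Use requests again to download each item's body page, then parse and clean up the body text with lxml

- Insert the processed records into the database one by one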
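To make step 2 concrete: the list endpoint used in the script answers with JSONP, i.e. JSON wrapped in a call such as `artiList(...)`. The sketch below unwraps that response and pulls out the fields listed above. It assumes the `artiList` callback name, the `BBM54PGAwangning` key, and the `url`/`title`/`source`/`ptime`/`imgsrc` field names that the script itself uses; the helper names `unwrap_jsonp` and `extract_items` are hypothetical. Unlike `replace("artiList(", "")`, the regular expression only strips the wrapper at the two ends of the response.

```python
import json
import re


def unwrap_jsonp(text, callback="artiList"):
    """Remove a JSONP wrapper such as artiList({...}) and parse the JSON inside."""
    pattern = r"\s*" + re.escape(callback) + r"\((.*)\)\s*;?\s*"
    match = re.fullmatch(pattern, text, re.DOTALL)
    if match is None:
        raise ValueError("response is not wrapped in " + callback + "(...)")
    return json.loads(match.group(1))


def extract_items(payload, channel="BBM54PGAwangning"):
    """Map each entry of the news list to the fields the scraper stores."""
    items = []
    for entry in payload.get(channel, []):
        url = entry.get("url", "")
        items.append({
            "title": entry.get("title", ""),
            "source": entry.get("source", ""),
            "created_at": entry.get("ptime", ""),
            "image": entry.get("imgsrc", ""),
            "url": url,                   # link to the body page (downloaded in step 3)
            "is_valid": 1 if url else 0,  # an empty URL marks the item as invalid
        })
    return items
```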

Without further ado, here's the code ↓ ↓ ↓

```python
# -*- coding: utf-8 -*-
"""
@ Time: 2019/2/18
@ Author: 黑猫省长
"""

# These are modules
import json
import multiprocessing
import time

import pymysql
import requests
from lxml import etree

# List API of the BBM54PGAwangning channel; page num lives at str(num) + "0-10.html"
LIST_URL = "https://3g.163.com/touch/reconstruct/article/list/BBM54PGAwangning/"

HEADERS = {
    'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit/537.36 '
                  '(KHTML, like Gecko) Chrome/71.0.3578.98 Safari/537.36'
}


def get_conn():
    """Connect to the MySQL server and return the connection."""
    try:
        return pymysql.connect(
            host="localhost",
            user="",                  # You need to input by yourself !!!
            password="",              # You need to input by yourself !!!
            database="news_project",  # You need to input by yourself !!!
            port=3306,
            charset="utf8",
        )
    except pymysql.Error as e:
        print("Error: {}".format(e))
        raise  # without a connection there is nothing to write to


def download(url):
    """Request a page and return its text."""
    response = requests.get(url, headers=HEADERS, timeout=30)
    response.encoding = 'utf-8'
    return response.text


def parse(url):
    """Download one list page and return its news records as tuples.

    Runs in a worker process, so it returns data instead of writing to the
    database or to global variables (workers do not share them with the parent).
    """
    try:
        # The list endpoint answers with JSONP: artiList({...})
        htmls = download(url).strip().replace("artiList(", "", 1).strip(")")
        news_dict = json.loads(htmls)
    except (requests.RequestException, ValueError) as e:
        print("Could not load list page {}: {}".format(url, e))
        return []

    records = []
    for i in news_dict.get("BBM54PGAwangning", []):
        news_url = i.get("url", "")
        news_validity = 1 if news_url else 0  # `is ''` compared identity, not value
        news_title = i.get("title", "")
        news_text = ""
        if news_url:
            try:
                news_html = download(news_url)
            except requests.RequestException:
                print("Sorry, this news URL is invalid! Moving on to the next item")
                continue
            selector = etree.HTML(news_html)
            if selector is not None:
                news_text = (selector.xpath('string(//*[@class="content"])')
                             .strip().replace('\n', '').replace('\t', ''))
            time.sleep(1)  # pause after every article to go easy on the server
        records.append((news_title, i.get("imgsrc", ""), i.get("source", ""),
                        i.get("ptime", ""), news_text, news_validity))
        print("Scraping >>> ", news_title)
    return records


def insert_data(cur, record):
    """Insert one record: (title, image, source, created_at, content, is_valid)."""
    sql = ("INSERT INTO `news` (`title`, `image`, `resource`, `created_at`, `content`, `is_valid`) "
           "VALUES (%s, %s, %s, %s, %s, %s)")
    cur.execute(sql, record)


def main():
    start = time.time()  # starting time point

    page_num = input("Enter the number of news pages to scrape (about 10 valid items per page) >>> ")
    while not page_num.isdigit():
        page_num = input("Please enter a natural number >>> ")
    urls = [LIST_URL + str(num) + "0-10.html" for num in range(int(page_num))]

    # 2 worker processes download and parse; each URL is submitted exactly once
    # (the original also called parse(url) directly, so every page ran twice)
    with multiprocessing.Pool(2) as pl:
        pages = pl.map(parse, urls)

    # All database writes happen here, in the parent, over a single connection
    conn = get_conn()
    counter = 0  # number of news items written
    try:
        with conn.cursor() as cur:
            for records in pages:
                for record in records:
                    try:
                        insert_data(cur, record)
                        counter += 1
                    except pymysql.Error:
                        print(record[0] + " was not written to the database\tmoving on to the next item")
        conn.commit()  # commit all data to MySQL
    finally:
        conn.close()

    end = time.time()  # stopping time point
    print("\n\nSuccessfully scraped " + str(counter) + " news items!\n\n"
          "This run took " + str(round((end - start) / 60, 2)) + " minutes")


if __name__ == '__main__':
    main()
```
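The script writes into a `news` table that it assumes already exists. One possible schema is sketched below; the six column names come from the script's `INSERT` statement, while the `id` column, the types, and the lengths are assumptions. In particular, `DATETIME` assumes `ptime` arrives as `YYYY-MM-DD HH:MM:SS`; use a `VARCHAR` column if it does not.

```python
# Hypothetical schema: column names match the script's INSERT statement;
# the id column, types, and lengths are assumptions to adjust to your data.
NEWS_DDL = """
CREATE TABLE IF NOT EXISTS `news` (
    `id`         INT UNSIGNED NOT NULL AUTO_INCREMENT PRIMARY KEY,
    `title`      VARCHAR(255) NOT NULL,
    `image`      VARCHAR(512) NOT NULL DEFAULT '',
    `resource`   VARCHAR(100) NOT NULL DEFAULT '',
    `created_at` DATETIME NULL,
    `content`    MEDIUMTEXT,
    `is_valid`   TINYINT(1) NOT NULL DEFAULT 1
) DEFAULT CHARSET = utf8
"""

INSERT_COLUMNS = ("title", "image", "resource", "created_at", "content", "is_valid")


def create_news_table(cursor):
    """Create the `news` table if it does not exist yet (e.g. with a PyMySQL cursor)."""
    cursor.execute(NEWS_DDL)
```

Call `create_news_table(cur)` once with a PyMySQL cursor, and commit, before the first run.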

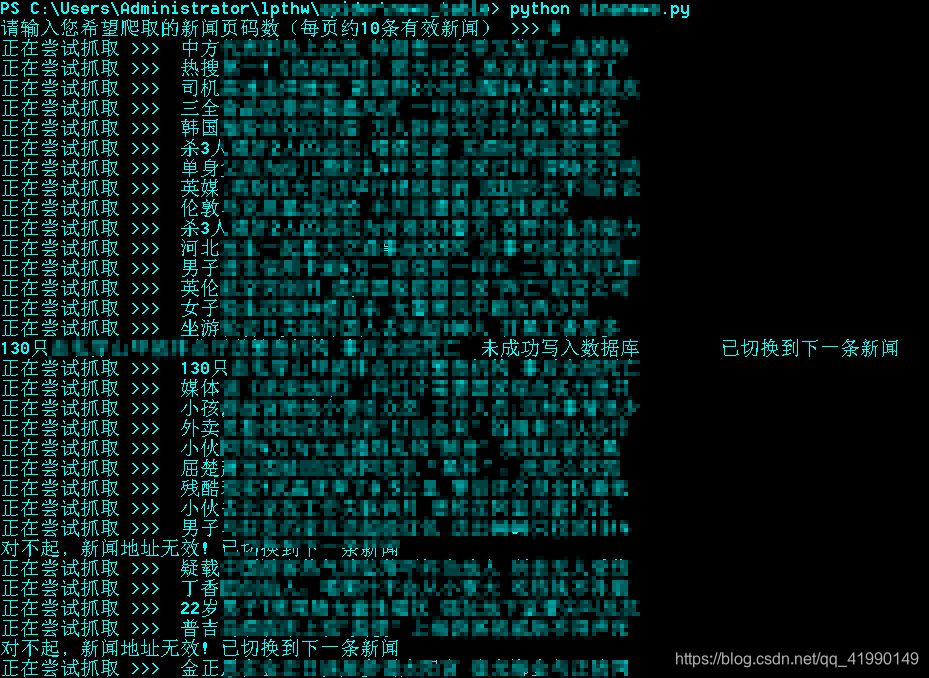

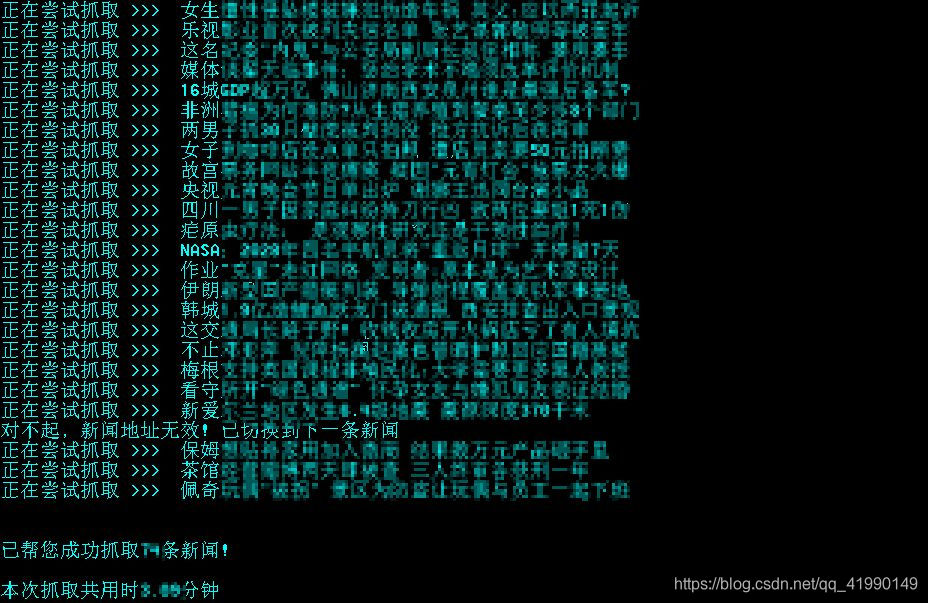

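Output of a run

Some friends teased me that even my screenshots look like something out of *Pixel World*, hahaha; honestly, that's exactly what I was going for

What to watch out for when running the code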

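1. To make the code reusable, the number of news pages to scrape is entered by the user each time the program runs:

```python
page_num = input("Enter the number of news pages to scrape (about 10 valid items per page) >>> ")
while not page_num.isdigit():
    page_num = input("Please enter a natural number >>> ")
```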
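One caveat about the `isdigit()` check: it accepts `"0"` (zero pages, so nothing is scraped) and also characters such as `"²"`, for which `isdigit()` is true but `int()` raises `ValueError`. A minimal sketch of a stricter prompt, together with the page-URL scheme the script uses (`str(num) + "0-10.html"`), might look like this. `parse_page_count`, `build_page_urls`, and `ask_page_count` are hypothetical names, and `input_fn` is a parameter only so the loop can be exercised without a keyboard.

```python
LIST_URL = "https://3g.163.com/touch/reconstruct/article/list/BBM54PGAwangning/"


def parse_page_count(text):
    """Return a positive page count parsed from text, or None if it is not one."""
    text = text.strip()
    # isascii() rules out characters like "²" that isdigit() accepts but int() rejects
    if not (text.isascii() and text.isdigit()):
        return None
    count = int(text)
    return count if count > 0 else None


def build_page_urls(page_count):
    """Reproduce the script's URL scheme: page num -> str(num) + "0-10.html"."""
    return [LIST_URL + str(num) + "0-10.html" for num in range(page_count)]


def ask_page_count(input_fn=input):
    """Keep prompting until the user enters a positive whole number."""
    count = parse_page_count(input_fn("Number of news pages to scrape >>> "))
    while count is None:
        count = parse_page_count(input_fn("Please enter a positive whole number >>> "))
    return count
```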

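2. In the part that connects to MySQL, fill in your own user name, password, and database (project) name:

```python
try:
    conn = pymysql.connect(
        host="localhost",
        user="******",      # your MySQL user name
        password="******",  # your MySQL password
        database="******",  # your database (project) name
        port=3306,
        charset="utf8",
    )
except pymysql.Error as e:
    print("Error: {}".format(e))
    raise  # stop here; otherwise `conn` is undefined afterwards
```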
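Rather than editing the credentials into the source file, one option is to read them from environment variables. The sketch below only builds the keyword arguments for `pymysql.connect()`; the `NEWS_DB_*` variable names and the defaults are assumptions, not something the original script defines.

```python
import os


def db_config_from_env(environ=None):
    """Build keyword arguments for pymysql.connect() from environment variables.

    NEWS_DB_USER is required; the other NEWS_DB_* variables are optional.
    The variable names are hypothetical: choose your own.
    """
    env = os.environ if environ is None else environ
    return {
        "host": env.get("NEWS_DB_HOST", "localhost"),
        "port": int(env.get("NEWS_DB_PORT", "3306")),
        "user": env["NEWS_DB_USER"],  # raises KeyError if it is not set
        "password": env.get("NEWS_DB_PASSWORD", ""),
        "database": env.get("NEWS_DB_NAME", "news_project"),
        "charset": "utf8",
    }


# Usage (needs PyMySQL and a reachable MySQL server):
#     import pymysql
#     conn = pymysql.connect(**db_config_from_env())
```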

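3. To improve efficiency, the script also uses a process pool from the multiprocessing library, so several processes scrape at the same time:

```python
# The author's computer has a dual-core processor, so the pool gets 2 processes
pl = multiprocessing.Pool(2)
results = [pl.apply_async(parse, (url, )) for url in urls]  # submit each page once
pl.close()  # no more tasks will be submitted
pl.join()   # wait for every worker to finish
pages = [r.get() for r in results]  # .get() returns each result and re-raises worker errors
```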
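The pool above runs `parse` inside worker processes, and module-level objects that the parent sets up, such as a `counter` list or a `cur` cursor, are either private copies in each worker or, with the `spawn` start method used on Windows and macOS, not defined there at all. Appending to a counter in a worker therefore never reaches the parent. A safer pattern is to let workers return their records and do the counting (and the database writes) in the parent. Here is a minimal sketch under that assumption; `scrape_all`, `demo_worker`, and the `use_threads` switch are hypothetical, and `multiprocessing.pool.ThreadPool` is used only as a thread-backed alternative with the same `map()` interface.

```python
import multiprocessing
from multiprocessing.pool import ThreadPool


def scrape_all(urls, worker, processes=2, use_threads=False):
    """Run worker(url) for every URL in a pool and collect the results in the caller.

    `worker` should return a list of records rather than writing to globals or a
    database cursor, because each process has its own copy of module-level state.
    With use_threads=True the same code runs on a thread pool instead.
    """
    pool_cls = ThreadPool if use_threads else multiprocessing.Pool
    with pool_cls(processes) as pool:
        # map() submits each URL exactly once, waits for all of them, keeps the
        # input order, and re-raises an exception if a worker raised one.
        pages = pool.map(worker, urls)
    # Flatten the per-page lists; counting happens here, in the calling process.
    records = [record for page in pages for record in page]
    return records, len(records)


def demo_worker(url):
    # Hypothetical stand-in for the script's parse(url): two dummy records per URL.
    return [(url, 0), (url, 1)]
```

In the full script the call would be `scrape_all(urls, parse)`, made inside the `if __name__ == '__main__':` guard, with `parse` defined at module level so that it can be pickled and sent to the workers.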

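The reason for choosing multiprocessing over threading: multiprocessing makes better use of multi-core CPUs, because CPython's global interpreter lock (GIL) keeps threads from running Python code in parallel (threads can still overlap time spent waiting on the network). Readers who want to learn more about this module can see: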

https://www.cnblogs.com/gengyi/p/8620853.html

https://morvanzhou.github.io/tutorials/python-basic/multiprocessing/

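Note: the number of processes in the Pool should preferably not exceed the number of CPU cores, otherwise you spend more resources for less gain. This applies mainly to CPU-bound work; `os.cpu_count()` reports the core count.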
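Following this note, a tiny helper can cap the requested pool size at the machine's core count instead of hard-coding 2. This is a sketch; `pool_size` is a hypothetical name, and the only library call is `os.cpu_count()`, which may return `None` when the count cannot be determined.

```python
import os


def pool_size(requested=None):
    """Return a worker count no larger than the number of CPU cores."""
    cores = os.cpu_count() or 1  # os.cpu_count() can return None
    if requested is None:
        return cores
    return max(1, min(requested, cores))
```

For example, `multiprocessing.Pool(pool_size(2))` keeps the author's two processes on a dual-core machine and drops to one on a single-core machine.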

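4. To keep an excessive crawl rate from disrupting the server's normal operation, the script pauses after every article it fetches (`time.sleep(1)` in each worker; with two worker processes this works out to about one request every 0.5 seconds on average). If you scrape more than 5 pages, do not shorten this interval.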
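A fixed `time.sleep(1)` works, but it also sleeps after slow requests that already took longer than the interval. A minimal alternative, sketched below under the assumption that each worker process creates its own instance, sleeps only for whatever part of the interval is left since the previous call. `Throttle` is a hypothetical helper, not part of any library.

```python
import time


class Throttle:
    """Keep at least `interval` seconds between the returns of consecutive wait() calls."""

    def __init__(self, interval):
        self.interval = interval
        self._last = None  # time.monotonic() value when the previous wait() returned

    def wait(self):
        if self._last is not None:
            remaining = self.interval - (time.monotonic() - self._last)
            if remaining > 0:
                time.sleep(remaining)  # sleep only for what is left of the interval
        self._last = time.monotonic()
```

Calling `throttle.wait()` right before each `download(news_url)` would replace the fixed pause. Because every worker has its own `Throttle`, the overall rate is about `processes / interval` requests per second: with 2 processes and `Throttle(1.0)`, roughly one request every 0.5 seconds on average.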

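If you spot any other problems in the code, please point them out in the comments. If the issue checks out, I'll update the code right away, so that this stays as useful a learning and discussion resource as possible.

But!

Once again, a reminder: although NetEase News data is public, do not use the scraped news data for commercial purposes. You bear full responsibility for any disputes arising from uses other than data analysis.

@黑猫省长: Reflections

As a liberal-arts guy majoring in journalism at a university, I've long felt I wasn't living up to my innate love of computers. Since picking up Python four months ago, I've gone from zero to gradually reaching a few "small goals" (for example, drawing statistical charts with Python libraries and writing data-journalism scrapers for my own use). Over these months of self-study I've gained a lot of experience but also taken plenty of detours; fortunately my love of computers hasn't diminished one bit. This project, which I finally worked up the courage to write up, is a kind of public commitment, and I'll gradually share more of what I learn. Feel free to reach out to discuss (chatting about life is fine too, hehe).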
