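Deploying on Linux (with Docker Compose)

The simplest way to deploy mall on Linux needs only two Docker Compose scripts. The first deploys the services mall depends on (MySQL, Redis, Nginx, RabbitMQ, MongoDB, Elasticsearch, Logstash, Kibana); the second deploys the mall applications themselves (mall-admin, mall-search, mall-portal).

Deploying Spring Boot applications with Docker Compose

Docker Compose is a tool for defining and running multi-container Docker applications. With Compose you describe your application's services in a YAML file and then deploy all of them with a single command.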

Installing Docker

- Install

# Install `yum-utils`:

yum install -y yum-utils device-mapper-persistent-data lvm2

# Add the Docker repository to the yum sources:

yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo

# Install Docker:

yum install docker-ce

# Start Docker:

systemctl start docker

# Enable Docker at boot:

systemctl enable docker.service

- Check whether start-at-boot is enabled

systemctl list-unit-files | grep enable

- Configure a registry mirror for pulling Docker images

vi /etc/docker/daemon.json

{

"registry-mirrors":["https://f3lu6ju1.mirror.aliyuncs.com"]

}

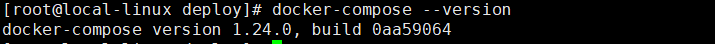

Installing Docker Compose

Download Docker Compose

curl -L https://get.daocloud.io/docker/compose/releases/download/1.24.0/docker-compose-`uname -s`-`uname -m` > /usr/local/bin/docker-compose

Make the file executable

chmod +x /usr/local/bin/docker-compose

Check that the installation succeeded

docker-compose --version

Docker Registry

Setting up Docker Registry 2.0

docker run -d -p 5000:5000 --restart=always --name registry2 registry:2

If an image cannot be downloaded, add a registry-mirrors entry to /etc/docker/daemon.json and then restart the Docker service:

{

"registry-mirrors": ["https://registry.docker-cn.com"]

}

Enabling the Docker remote API

Edit the docker.service file in a text editor such as vi

vi /usr/lib/systemd/system/docker.service

The line to change:

ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock

After the change:

ExecStart=/usr/bin/dockerd -H tcp://0.0.0.0:2375 -H unix:///var/run/docker.sock

Allow Docker to push images over HTTP

echo '{ "insecure-registries":["106.13.194.132:5000"] }' > /etc/docker/daemon.json
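
The redirection in the command above replaces the entire /etc/docker/daemon.json, so a registry-mirrors entry configured earlier would be lost. A minimal sketch that writes both keys into one file, assuming the mirror and registry addresses used in this guide; `write_daemon_json` and the scratch path are hypothetical, and on a real host the target would be /etc/docker/daemon.json.

```shell
# Hypothetical helper: write a daemon.json that contains both keys used in this guide.
# $1 = target file, $2 = registry mirror URL, $3 = insecure registry host:port
write_daemon_json() {
    cat > "$1" <<EOF
{
  "registry-mirrors": ["$2"],
  "insecure-registries": ["$3"]
}
EOF
}

# Demonstration against a scratch file, so nothing under /etc is touched.
demo_file="${TMPDIR:-/tmp}/daemon.json.demo"
write_daemon_json "$demo_file" "https://f3lu6ju1.mirror.aliyuncs.com" "106.13.194.132:5000"
cat "$demo_file"
```

Keeping both keys in one JSON object means the mirror and the HTTP registry survive the same daemon restart.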

After changing the configuration, run the following commands to apply it

systemctl daemon-reload

# Restart the Docker service

systemctl stop docker

systemctl start docker

# Open the Docker build port in the firewall

firewall-cmd --zone=public --add-port=2375/tcp --permanent

firewall-cmd --reload
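
Once the daemon has been restarted and the port opened, the remote API can be checked from another machine. A minimal sketch, assuming curl is installed there; `check_remote_api` is a hypothetical helper name, while `/version` is an endpoint of the Docker Engine API.

```shell
# Hypothetical helper: query the Engine API version endpoint over plain HTTP.
# $1 = Docker host, $2 = port (defaults to 2375, the port configured above)
check_remote_api() {
    # -f: fail on HTTP errors, -sS: quiet but still print errors, 5 s timeout
    curl -fsS --max-time 5 "http://$1:${2:-2375}/version"
}
```

Calling `check_remote_api` with the server's address should print a JSON description of the daemon when the port is reachable, and return a non-zero status otherwise.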

Pull all the Docker images that will be needed

docker pull mysql:5.7

docker pull redis:5

docker pull java:8

docker pull nginx:1.10

docker pull logstash:7.6.2

docker pull elasticsearch:7.6.2

docker pull kibana:7.6.2

docker pull rabbitmq:3.7.15-management

docker pull mongo:4.2.5
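
The pulls above can also be run as one loop that reports every image that failed to download. A minimal sketch; the `pull_all` function and the `DOCKER` override variable are hypothetical conveniences, and the image tags are the ones listed above.

```shell
# Image tags exactly as listed above.
IMAGES="mysql:5.7 redis:5 java:8 nginx:1.10 logstash:7.6.2 elasticsearch:7.6.2 kibana:7.6.2 rabbitmq:3.7.15-management mongo:4.2.5"

# DOCKER is a hypothetical override so the loop can be dry-run, e.g. DOCKER=echo.
pull_all() {
    failed=""
    for image in $IMAGES; do
        # Keep going after a failure and remember which image it was.
        "${DOCKER:-docker}" pull "$image" || failed="$failed $image"
    done
    if [ -n "$failed" ]; then
        echo "failed to pull:$failed" >&2
        return 1
    fi
}
```

Running `DOCKER=echo pull_all` prints the pull commands without contacting a registry; plain `pull_all` performs the downloads.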

Elasticsearch

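- The kernel parameter vm.max_map_count must be raised, otherwise Elasticsearch refuses to start because the limit on virtual memory areas is too low;

# Change the setting (takes effect immediately)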

sysctl -w vm.max_map_count=262144

# Reload the values stored in /etc/sysctl.conf

sysctl -p

- The /mydata/elasticsearch/data directory must be created and given suitable permissions, otherwise startup fails with a permission error.

# Create the directory

mkdir -p /mydata/elasticsearch/data/

# Change the directory's permissions

chmod 777 /mydata/elasticsearch/data
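
The `sysctl -w` call above changes the running kernel only, so the value is lost after a reboot unless it is also written to a sysctl configuration file. A minimal sketch of an idempotent way to record it, assuming /etc/sysctl.conf is the file in use on the host; `persist_sysctl` and the scratch path are hypothetical.

```shell
# Hypothetical helper: make sure "key=value" is present in a sysctl config file.
# $1 = config file (/etc/sysctl.conf on a real host), $2 = key, $3 = value
persist_sysctl() {
    file=$1
    key=$2
    value=$3
    touch "$file"
    # Note: dots in the key are treated as regex wildcards, which is harmless here.
    if grep -q "^[[:space:]]*$key[[:space:]]*=" "$file"; then
        # Key already present: rewrite that line through a temporary file.
        sed "s|^[[:space:]]*$key[[:space:]]*=.*|$key=$value|" "$file" > "$file.tmp" && mv "$file.tmp" "$file"
    else
        echo "$key=$value" >> "$file"
    fi
}

# Demonstration against a scratch file: the second call updates, not duplicates.
demo_conf="${TMPDIR:-/tmp}/sysctl.conf.demo"
: > "$demo_conf"
persist_sysctl "$demo_conf" vm.max_map_count 65530
persist_sysctl "$demo_conf" vm.max_map_count 262144
cat "$demo_conf"
```

On a real host this would be run as root with /etc/sysctl.conf as the first argument, followed by `sysctl -p` to load the file.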

Nginx

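The nginx configuration file must be copied into place first, otherwise the container fails to start because the mounted configuration file is missing.

# Create the directory, then upload nginx.conf into it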

mkdir -p /mydata/nginx/

#user nobody;

worker_processes 1;

#error_log logs/error.log;

#error_log logs/error.log notice;

#error_log logs/error.log info;

#pid logs/nginx.pid;

events {

worker_connections 1024;

}

http {

include mime.types;

default_type application/octet-stream;

#log_format main '$remote_addr - $remote_user [$time_local] "$request" '

# '$status $body_bytes_sent "$http_referer" '

# '"$http_user_agent" "$http_x_forwarded_for"';

#access_log logs/access.log main;

sendfile on;

#tcp_nopush on;

#keepalive_timeout 0;

keepalive_timeout 65;

#gzip on;

server {

listen 80;

server_name www.usount.top;

#charset koi8-r;

#access_log logs/host.access.log main;

#rewrite ^/(.*) https://$server_name$request_uri? permanent;

# Add header information

#proxy_set_header Cookie $http_cookie;

#proxy_set_header X-Forwarded-Host $host;

#proxy_set_header X-Forwarded-Server $host;

#proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

location / {

#root html;

#index index.html index.htm;

rewrite ^(.*)$ https://$host$1;

}

location /api/ {

proxy_pass http://127.0.0.1:8887/;

}

location /prod-admin/ {

proxy_pass http://127.0.0.1:8888/;

}

#error_page 404 /404.html;

# redirect server error pages to the static page /50x.html

#

error_page 500 502 503 504 /50x.html;

location = /50x.html {

root html;

}

# proxy the PHP scripts to Apache listening on 127.0.0.1:80

#

#location ~ \.php$ {

# proxy_pass http://127.0.0.1;

#}

# pass the PHP scripts to FastCGI server listening on 127.0.0.1:9000

#

#location ~ \.php$ {

# root html;

# fastcgi_pass 127.0.0.1:9000;

# fastcgi_index index.php;

# fastcgi_param SCRIPT_FILENAME /scripts$fastcgi_script_name;

# include fastcgi_params;

#}

# deny access to .htaccess files, if Apache's document root

# concurs with nginx's one

#

#location ~ /\.ht {

# deny all;

#}

}

# another virtual host using mix of IP-, name-, and port-based configuration

#

#server {

# listen 8000;

# listen somename:8080;

# server_name somename alias another.alias;

# location / {

# root html;

# index index.html index.htm;

# }

#}

# HTTPS server

#

server {

listen 443 ssl;

server_name usount.top;

ssl_certificate /home/ssl/usount.top_nginx/server.crt;

ssl_certificate_key /home/ssl/usount.top_nginx/server.key;

ssl_session_cache shared:SSL:1m;

ssl_session_timeout 5m;

ssl_ciphers HIGH:!aNULL:!MD5;

ssl_prefer_server_ciphers on;

location / {

root /usr/share/nginx/html/api;

index index.html index.htm;

}

location /api/ {

proxy_pass http://127.0.0.1:8887/;

}

}

server {

listen 443 ssl;

server_name admin.usount.top;

ssl_certificate /home/ssl/admin.usount.top_nginx/admin.usount.top_bundle.crt;

ssl_certificate_key /home/ssl/admin.usount.top_nginx/admin.usount.top.key;

ssl_session_cache shared:SSL:1m;

ssl_session_timeout 5m;

ssl_ciphers HIGH:!aNULL:!MD5;

ssl_prefer_server_ciphers on;

location / {

root /usr/share/nginx/html/admin;

index index.html index.htm;

}

location /prod-admin/ {

proxy_pass http://127.0.0.1:8888/;

}

}

}

Logstash

In the Logstash configuration file logstash.conf, set the Elasticsearch address under the output node to es:9200.

Create the /mydata/logstash directory and copy logstash.conf into it.

mkdir -p /mydata/logstash

input {
  tcp {
    mode => "server"
    host => "0.0.0.0"
    port => 4560
    codec => json_lines
    type => "debug"
  }
  tcp {
    mode => "server"
    host => "0.0.0.0"
    port => 4561
    codec => json_lines
    type => "error"
  }
  tcp {
    mode => "server"
    host => "0.0.0.0"
    port => 4562
    codec => json_lines
    type => "business"
  }
  tcp {
    mode => "server"
    host => "0.0.0.0"
    port => 4563
    codec => json_lines
    type => "record"
  }
}

filter {
  if [type] == "record" {
    mutate {
      remove_field => "port"
      remove_field => "host"
      remove_field => "@version"
    }
    json {
      source => "message"
      remove_field => ["message"]
    }
  }
}

output {
  elasticsearch {
    hosts => "es:9200"
    index => "mall-%{type}-%{+YYYY.MM.dd}"
  }
}

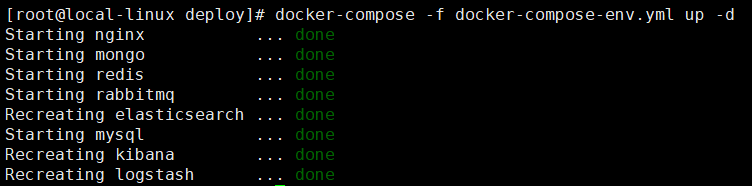

Running the docker-compose-env.yml script

Upload the file to the Linux server and run docker-compose up to start every service that mall depends on.

version: '3'
services:
  mysql:
    image: mysql:5.7
    container_name: mysql
    command: mysqld --character-set-server=utf8mb4 --collation-server=utf8mb4_unicode_ci
    restart: always
    environment:
      MYSQL_ROOT_PASSWORD: root # root account password
    ports:
      - 3306:3306
    volumes:
      - /mydata/mysql/data/db:/var/lib/mysql # data files
      - /mydata/mysql/data/conf:/etc/mysql/conf.d # configuration files
      - /mydata/mysql/log:/var/log/mysql # log files
  redis:
    image: redis:5
    container_name: redis
    command: redis-server --appendonly yes --requirepass 1228ai9971.
    volumes:
      - /mydata/redis/data:/data # data files
    ports:
      - 6379:6379
  nginx:
    image: nginx:1.10
    container_name: nginx
    volumes:
      - /mydata/nginx/nginx.conf:/etc/nginx/nginx.conf # configuration file
      - /mydata/nginx/html:/usr/share/nginx/html # static resource root
      - /mydata/nginx/log:/var/log/nginx # log files
    ports:
      - 80:80
      - 443:443
  rabbitmq:
    image: rabbitmq:3.7.15-management
    container_name: rabbitmq
    volumes:
      - /mydata/rabbitmq/data:/var/lib/rabbitmq # data files
      - /mydata/rabbitmq/log:/var/log/rabbitmq # log files
    ports:
      - 5672:5672
      - 15672:15672
  elasticsearch:
    image: elasticsearch:7.6.2
    container_name: elasticsearch
    environment:
      - "cluster.name=elasticsearch" # cluster name
      - "discovery.type=single-node" # start in single-node mode
      - "ES_JAVA_OPTS=-Xms64m -Xmx512m" # JVM heap size
    volumes:
      - /mydata/elasticsearch/plugins:/usr/share/elasticsearch/plugins # plugins
      - /mydata/elasticsearch/data:/usr/share/elasticsearch/data # data files
    ports:
      - 9200:9200
      - 9300:9300
  logstash:
    image: logstash:7.6.2
    container_name: logstash
    environment:
      - TZ=Asia/Shanghai
    volumes:
      - /mydata/logstash/logstash.conf:/usr/share/logstash/pipeline/logstash.conf # logstash configuration file
    depends_on:
      - elasticsearch # start logstash after elasticsearch
    links:
      - elasticsearch:es # elasticsearch is reachable under the hostname es
    ports:
      - 4560:4560
      - 4561:4561
      - 4562:4562
      - 4563:4563
  kibana:
    image: kibana:7.6.2
    container_name: kibana
    links:
      - elasticsearch:es # elasticsearch is reachable under the hostname es
    depends_on:
      - elasticsearch # start kibana after elasticsearch
    environment:
      - "elasticsearch.hosts=http://es:9200" # address used to reach elasticsearch
    ports:
      - 5601:5601
  mongo:
    image: mongo:4.2.5
    container_name: mongo
    volumes:
      - /mydata/mongo/db:/data/db # data files
    ports:
      - 27017:27017

After uploading, run the following command in the directory that contains the file:

docker-compose -f docker-compose-env.yml up -d

Configuring the dependent services

Once all the dependent services have started, the following services need some additional setup.

elasticsearch

The Chinese analyzer IK Analyzer must be installed, after which the container is restarted.

docker exec -it elasticsearch /bin/bash

# This command must be run inside the container

elasticsearch-plugin install https://github.com/medcl/elasticsearch-analysis-ik/releases/download/v7.6.2/elasticsearch-analysis-ik-7.6.2.zip

docker restart elasticsearch

logstash

The json_lines plugin must be installed, after which the container is restarted.

docker exec -it logstash /bin/bash

logstash-plugin install logstash-codec-json_lines

docker restart logstash

docker-compose.yml configuration explained

# Run the mysql 5.7 image

image: mysql:5.7

# Name the container mysql

container_name: mysql

# Map port 3306 on the host to port 3306 in the container

ports:

- 3306:3306

# Mount host directories into the mysql container

volumes:

- /mydata/mysql/log:/var/log/mysql

- /mydata/mysql/data:/var/lib/mysql

- /mydata/mysql/conf:/etc/mysql

# Environment variable that sets the MySQL root account password

environment:

- MYSQL_ROOT_PASSWORD=root

# The container of the service named db can be reached under the hostname database

links:

- db:database
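
The fragments above are shown without their surrounding structure. A minimal sketch of where they sit in a complete file, written to a scratch path so it can be inspected safely; the `app` service, its image name, and the scratch path are hypothetical, and `db` simply reuses the MySQL settings explained above.

```shell
# Write a minimal two-service Compose file to a scratch path.
compose_demo="${TMPDIR:-/tmp}/docker-compose.demo.yml"
cat > "$compose_demo" <<'EOF'
version: '3'
services:
  db:
    image: mysql:5.7
    container_name: mysql
    ports:
      - 3306:3306
    volumes:
      - /mydata/mysql/log:/var/log/mysql
      - /mydata/mysql/data:/var/lib/mysql
      - /mydata/mysql/conf:/etc/mysql
    environment:
      - MYSQL_ROOT_PASSWORD=root
  app:
    image: example/app:latest   # hypothetical application image
    links:
      - db:database             # app reaches MySQL at database:3306
    depends_on:
      - db
EOF
cat "$compose_demo"
```

Every key is nested under a service name, and `links` belongs to the service that consumes the alias rather than to the database itself.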

Common Docker Compose commands

Build, create, and start the containers

# -d runs them in the background

docker-compose up -d

Start from a specific file

docker-compose -f docker-compose.yml up -d

Stop all related containers

docker-compose stop

List information about all containers

docker-compose ps
