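Create a Java project.

Then create a folder named `lib` in it.

Download Hadoop from the official Hadoop site and extract it on Windows.

First copy the jars from the `common` directory into `lib`.

Then copy all the jars from the `lib` folder under `common` into `lib`.

Then copy the client dependency jars into `lib`.

Then copy in everything found there as well. Duplicate file names do not matter.

Just overwrite them.

After that the project compiles.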
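The manual copying described above can also be scripted. The following is a minimal sketch using only the JDK: it copies every `.jar` found directly inside a list of source directories into a `lib` folder and overwrites files with the same name. The class name `JarCollector`, the method `collectJars`, and the command-line layout are assumptions made for illustration, not part of Hadoop.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.nio.file.StandardCopyOption;
import java.util.ArrayList;
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.Stream;

// Hypothetical helper: gathers jars from several directories into one lib folder.
class JarCollector {

    /**
     * Copies every *.jar located directly in each source directory into libDir.
     * A jar whose name already exists in libDir is overwritten.
     * Returns the number of copy operations performed.
     */
    static int collectJars(List<Path> sourceDirs, Path libDir) throws IOException {
        Files.createDirectories(libDir);
        int copied = 0;
        for (Path dir : sourceDirs) {
            List<Path> jars;
            // Files.list is not recursive; close the stream before copying
            try (Stream<Path> entries = Files.list(dir)) {
                jars = entries
                        .filter(p -> Files.isRegularFile(p))
                        .filter(p -> p.getFileName().toString().endsWith(".jar"))
                        .collect(Collectors.toList());
            }
            for (Path jar : jars) {
                Files.copy(jar, libDir.resolve(jar.getFileName()),
                        StandardCopyOption.REPLACE_EXISTING);
                copied++;
            }
        }
        return copied;
    }

    public static void main(String[] args) throws IOException {
        // Assumed usage: first argument is the lib folder, the rest are jar directories
        if (args.length < 2) {
            System.err.println("usage: JarCollector <libDir> <jarDir>...");
            return;
        }
        List<Path> dirs = new ArrayList<>();
        for (int i = 1; i < args.length; i++) {
            dirs.add(Paths.get(args[i]));
        }
        int copied = collectJars(dirs, Paths.get(args[0]));
        System.out.println("Copied " + copied + " jar(s) into " + args[0]);
    }
}
```

Pass the directories of the extracted Hadoop distribution in the order you would copy them by hand; later directories win when names collide.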

Check the documentation.

You can define your own configuration, but there is usually no need.

Here I use Maven to build an HDFS utility class.

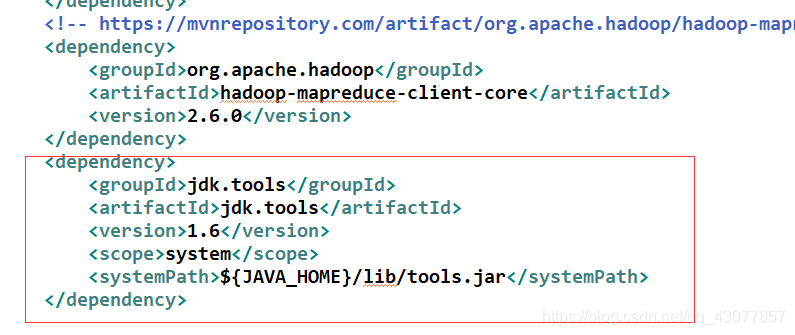

These are the pom dependencies. The version here must match the Hadoop version installed on your Linux machine.

```xml
<!-- hadoop hdfs 2.6.0 -->
<dependency>
    <groupId>org.apache.hadoop</groupId>
    <artifactId>hadoop-common</artifactId>
    <version>2.6.0</version>
</dependency>

<!-- https://mvnrepository.com/artifact/org.apache.hadoop/hadoop-client -->
<dependency>
    <groupId>org.apache.hadoop</groupId>
    <artifactId>hadoop-client</artifactId>
    <version>2.6.0</version>
</dependency>

<!-- https://mvnrepository.com/artifact/org.apache.hadoop/hadoop-hdfs -->
<dependency>
    <groupId>org.apache.hadoop</groupId>
    <artifactId>hadoop-hdfs</artifactId>
    <version>2.6.0</version>
</dependency>

<!-- https://mvnrepository.com/artifact/org.apache.hadoop/hadoop-mapreduce-client-core -->
<dependency>
    <groupId>org.apache.hadoop</groupId>
    <artifactId>hadoop-mapreduce-client-core</artifactId>
    <version>2.6.0</version>
</dependency>

<dependency>
    <groupId>jdk.tools</groupId>
    <artifactId>jdk.tools</artifactId>
    <version>1.6</version>
    <scope>system</scope>
    <systemPath>${JAVA_HOME}/lib/tools.jar</systemPath>
</dependency>
```

The last dependency (`jdk.tools`) is required; without it the build reports an error.

Below is the utility class. It contains methods for uploading, deleting, and moving and copying files within HDFS.

```java
package com.crsri.util;

import java.io.IOException;
import java.net.URI;
import java.net.URISyntaxException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FSDataInputStream;
import org.apache.hadoop.fs.FSDataOutputStream;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.springframework.beans.factory.annotation.Value;

/**
 * HDFS utility class.
 *
 * @author CRSRI
 */
public class HDFSApiUtil {

    // Fields. Note: @Value is only resolved when Spring manages this class as a bean.
    FileSystem fileSystem = null; // HDFS file system handle

    @Value("${spring.hdfs.url}")
    private String hdfsurl; // HDFS connection URL

    @Value("${spring.hdfs.user}")
    private String hdfsuser; // HDFS connection user

    /**
     * Creates the FileSystem.
     */
    public FileSystem createFileSystem() throws IOException, InterruptedException, URISyntaxException {
        fileSystem = FileSystem.get(new URI(hdfsurl), new Configuration(), hdfsuser);
        return fileSystem;
    }

    /**
     * Uploads a file.
     *
     * @param path       local file path
     * @param foldername target folder name on HDFS
     */
    public void fileToHDFS(String path, String foldername)
            throws IllegalArgumentException, IOException, InterruptedException, URISyntaxException {
        fileSystem = createFileSystem();
        fileSystem.copyFromLocalFile(new Path(path), new Path(foldername));
        fileSystem.close();
    }

    /**
     * Deletes a folder or a file.
     *
     * @param foldname folder name or file name
     */
    public void delFold(String foldname) throws IOException, InterruptedException, URISyntaxException {
        fileSystem = createFileSystem();
        fileSystem.delete(new Path(foldname), true); // true = delete recursively
        fileSystem.close();
    }

    /**
     * Copies a file within HDFS.
     *
     * @param srcpath source path
     * @param drcpath destination path
     */
    public void copeFile(String srcpath, String drcpath) throws IOException, InterruptedException, URISyntaxException {
        fileSystem = createFileSystem();
        FSDataInputStream input = fileSystem.open(new Path(srcpath));     // open the input stream
        FSDataOutputStream output = fileSystem.create(new Path(drcpath)); // open the output stream
        // Pipe the input stream into the output stream
        byte[] b = new byte[1024];
        int hasRead = 0;
        while ((hasRead = input.read(b)) > 0) {
            output.write(b, 0, hasRead);
        }
        input.close();
        output.close();
        fileSystem.close();
    }

    /**
     * Moves a file within HDFS.
     *
     * @param oldpath HDFS path of the file before the move
     * @param newpath HDFS path of the file after the move
     */
    public void moveFile(String oldpath, String newpath) throws IOException, InterruptedException, URISyntaxException {
        fileSystem = createFileSystem();
        fileSystem.rename(new Path(oldpath), new Path(newpath));
        fileSystem.close();
    }
}
```
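The copy method above pipes one stream into another through a fixed 1024-byte buffer. The same loop can be tried without a cluster by using in-memory streams, as in this minimal sketch. `StreamCopySketch` and `copy` are names chosen here for illustration. Because `FSDataInputStream` and `FSDataOutputStream` are subclasses of the standard `InputStream` and `OutputStream`, a method with this signature should accept them as well.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;

// Hypothetical helper illustrating the buffered read/write loop.
class StreamCopySketch {

    /** Copies all bytes from in to out through a 1024-byte buffer; returns the byte count. */
    static long copy(InputStream in, OutputStream out) throws IOException {
        byte[] buffer = new byte[1024];
        long total = 0;
        int read;
        // read() returns -1 once the end of the stream is reached
        while ((read = in.read(buffer)) != -1) {
            out.write(buffer, 0, read);
            total += read;
        }
        return total;
    }

    public static void main(String[] args) throws IOException {
        byte[] data = new byte[3000]; // stand-in for file content
        try (InputStream in = new ByteArrayInputStream(data);
             ByteArrayOutputStream out = new ByteArrayOutputStream()) {
            System.out.println(copy(in, out) + " bytes copied");
        }
    }
}
```

The try-with-resources block closes both streams even if the copy throws, which the explicit `close()` calls in a straight-line method do not guarantee.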

These values are defined in application.properties:

```properties
#HDFS
spring.hdfs.url=hdfs://192.168.1.11:9000
spring.hdfs.user=root
```
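To see what the URL property breaks down into, here is a small sketch that loads the same property text with `java.util.Properties` and parses the value with `java.net.URI`. The class `HdfsSettingsSketch` and its method `hdfsUri` are hypothetical names used only for this illustration; in the real project Spring reads the file for you.

```java
import java.io.IOException;
import java.io.StringReader;
import java.net.URI;
import java.net.URISyntaxException;
import java.util.Properties;

// Hypothetical helper: reads spring.hdfs.url from properties text and validates it.
class HdfsSettingsSketch {

    static URI hdfsUri(String propertiesText) throws IOException, URISyntaxException {
        Properties props = new Properties();
        props.load(new StringReader(propertiesText));
        String url = props.getProperty("spring.hdfs.url");
        if (url == null) {
            throw new IllegalArgumentException("spring.hdfs.url is not set");
        }
        URI uri = new URI(url);
        if (!"hdfs".equals(uri.getScheme())) {
            throw new IllegalArgumentException("expected an hdfs:// URL but got: " + url);
        }
        return uri;
    }

    public static void main(String[] args) throws Exception {
        String text = "#HDFS\n"
                + "spring.hdfs.url=hdfs://192.168.1.11:9000\n"
                + "spring.hdfs.user=root\n";
        URI uri = hdfsUri(text);
        // Host and port of the NameNode address taken from the property
        System.out.println(uri.getHost() + " " + uri.getPort());
    }
}
```

A check like this can catch a missing or mistyped property before the first connection attempt.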

Below is the test class. The test methods have been run and work.

```java
package com.crsri.hdfs.test;

import java.io.IOException;
import java.net.URI;
import java.net.URISyntaxException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FSDataInputStream;
import org.apache.hadoop.fs.FSDataOutputStream;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.LocatedFileStatus;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.fs.RemoteIterator;
import org.junit.Test;

import com.crsri.TgdsmApplicationTests;

/**
 * HDFS tests.
 *
 * @author CRSRI
 */
public class Hdfsapp extends TgdsmApplicationTests {

    // Create a file on HDFS by uploading a local one
    @Test
    public void createHDFSTest() throws IOException, InterruptedException, URISyntaxException {
        Configuration conf = new Configuration();
        conf.set("dfs.replication", "2");
        conf.set("dfs.blocksize", "64m");
        FileSystem fileSystem = FileSystem.get(new URI("hdfs://192.168.1.11:9000/"), conf, "root");
        fileSystem.copyFromLocalFile(new Path("D:\\soft\\kibana-6.4.1-windows-x86_64.zip"), new Path("/"));
        fileSystem.close();
    }

    // Move a file
    @Test
    public void moveHDFSTest() throws IOException, InterruptedException, URISyntaxException {
        Configuration conf = new Configuration();
        conf.set("dfs.replication", "2");
        conf.set("dfs.blocksize", "64m");
        FileSystem fileSystem = FileSystem.get(new URI("hdfs://192.168.1.11:9000/"), conf, "root");
        fileSystem.rename(new Path("/kibana-6.4.1-windows-x86_64.zip"), new Path("/aaa/kiba"));
        fileSystem.close();
    }

    // Create a folder
    @Test
    public void creatHDFSFoldTest() throws IOException, InterruptedException, URISyntaxException {
        Configuration conf = new Configuration();
        conf.set("dfs.replication", "2");
        conf.set("dfs.blocksize", "64m");
        FileSystem fileSystem = FileSystem.get(new URI("hdfs://192.168.1.11:9000/"), conf, "root");
        fileSystem.mkdirs(new Path("/bb/kk/cc"));
        fileSystem.close();
    }

    // Delete a file
    @Test
    public void delHDFSFoldTest() throws IOException, InterruptedException, URISyntaxException {
        Configuration conf = new Configuration();
        conf.set("dfs.replication", "2");
        conf.set("dfs.blocksize", "64m");
        FileSystem fileSystem = FileSystem.get(new URI("hdfs://192.168.1.11:9000/"), conf, "root");
        fileSystem.delete(new Path("/aaa/kiba"), true);
        fileSystem.close();
    }

    // Copy a file
    @Test
    public void copyHDFSFileTest() throws IOException, InterruptedException, URISyntaxException {
        Configuration conf = new Configuration();
        conf.set("dfs.replication", "2");
        conf.set("dfs.blocksize", "64m");
        FileSystem fileSystem = FileSystem.get(new URI("hdfs://192.168.1.11:9000/"), conf, "root");
        FSDataInputStream input = fileSystem.open(new Path("/aaa/1.pdf")); // open the input stream
        FSDataOutputStream output = fileSystem.create(new Path("/1.pdf")); // open the output stream
        // Pipe the input stream into the output stream
        byte[] b = new byte[1024];
        int hasRead = 0;
        while ((hasRead = input.read(b)) > 0) {
            output.write(b, 0, hasRead);
        }
        input.close();
        output.close();
        fileSystem.close();
    }

    // Iterate over file information
    @Test
    public void getHDFSFoldTest() throws IOException, InterruptedException, URISyntaxException {
        Configuration conf = new Configuration();
        conf.set("dfs.replication", "2");
        conf.set("dfs.blocksize", "64m");
        FileSystem fileSystem = FileSystem.get(new URI("hdfs://192.168.1.11:9000/"), conf, "root");
        // true = list files recursively
        RemoteIterator<LocatedFileStatus> listFiles = fileSystem.listFiles(new Path("/"), true);
        while (listFiles.hasNext()) {
            LocatedFileStatus status = listFiles.next();
            System.out.println(status.getBlockSize()); // block size of each file, in bytes
        }
        fileSystem.close();
    }
}
```
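The tests above set `dfs.blocksize` to `"64m"`. Hadoop's documentation for this setting allows case-insensitive binary size suffixes (k, m, g, t, p, e), so `64m` means 64 × 1024 × 1024 bytes. The sketch below only illustrates that arithmetic. `BlockSizeSketch` and `toBytes` are hypothetical names, and this is not Hadoop's own parser (it does no overflow checking, for example).

```java
import java.util.Locale;

// Hypothetical helper: converts a size string such as "64m" into a byte count.
class BlockSizeSketch {

    static long toBytes(String value) {
        String v = value.trim().toLowerCase(Locale.ROOT);
        if (v.isEmpty()) {
            throw new IllegalArgumentException("empty size value");
        }
        // Position in this string gives the power of 1024: k=1, m=2, g=3, ...
        String suffixes = "kmgtpe";
        int idx = suffixes.indexOf(v.charAt(v.length() - 1));
        if (idx < 0) {
            return Long.parseLong(v); // plain number of bytes, no suffix
        }
        long number = Long.parseLong(v.substring(0, v.length() - 1));
        return number << (10 * (idx + 1)); // multiply by 1024^(idx+1)
    }

    public static void main(String[] args) {
        System.out.println(toBytes("64m"));
    }
}
```

The value printed for each file by `getBlockSize()` in the listing test is expressed in the same unit, bytes.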
