1. Software configuration

| Software | Version | Location |
|---|---|---|
| Java | jdk1.8.0_301 | D:\Java\jdk1.8.0_301 |
| Ant | apache-ant-1.10.5 | D:\apache-ant-1.10.5 |
| Eclipse | eclipse-jee-2018-09-win32-x86_64 | D:\eclipse |
| Hadoop | hadoop-3.3.1 | D:\hadoop-3.3.1 |

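2. Download eclipse-hadoop3x-master

Download: eclipse-hadoop3x-master

After downloading, extract the archive to the D: drive, so that the project ends up in D:\eclipse-hadoop3x-master.

3. Build the jar with Ant

Open libraries.properties under D:\eclipse-hadoop3x-master\ivy and edit it as follows: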

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

#This properties file lists the versions of the various artifacts used by hadoop and components.

#It drives ivy and the generation of a maven POM

# This is the version of hadoop we are generating

# Change this to your own Hadoop version

hadoop.version=3.3.1

hadoop-gpl-compression.version=0.1.0

#These are the versions of our dependencies (in alphabetical order)

apacheant.version=1.7.0

ant-task.version=2.0.10

asm.version=3.2

aspectj.version=1.6.5

aspectj.version=1.6.11

checkstyle.version=4.2

commons-cli.version=1.2

commons-codec.version=1.4

commons-collections.version=3.2.2

commons-configuration.version=2.1.1

commons-daemon.version=1.0.13

commons-httpclient.version=3.0.1

commons-lang.version=2.6

commons-logging.version=1.0.4

commons-logging-api.version=1.0.4

commons-math.version=3.11

commons-el.version=1.0

commons-fileupload.version=1.2

commons-io.version=2.1

commons-net.version=3.1

core.version=3.1.1

coreplugin.version=1.3.2

hsqldb.version=1.8.0.10

ivy.version=2.1.0

jasper.version=5.5.12

jackson.version=1.9.13

#not able to figureout the version of jsp & jsp-api version to get it resolved throught ivy

# but still declared here as we are going to have a local copy from the lib folder

jsp.version=2.1

jsp-api.version=5.5.12

jsp-api-2.1.version=6.1.14

jsp-2.1.version=6.1.14

jets3t.version=0.6.1

jetty.version=6.1.26

jetty-util.version=6.1.26

jersey-core.version=1.8

jersey-json.version=1.8

jersey-server.version=1.8

junit.version=4.5

jdeb.version=0.8

jdiff.version=1.0.9

json.version=1.0

protobuf.version=2.5.0

kfs.version=0.1

netty.version=3.10.6.Final

log4j.version=1.2.17

lucene-core.version=2.3.1

htrace.version=4.1.0-incubating

mockito-all.version=1.8.5

jsch.version=0.1.42

oro.version=2.0.8

rats-lib.version=0.5.1

servlet.version=4.0.6

servlet-api.version=2.5

slf4j-api.version=1.7.30

slf4j-log4j12.version=1.7.30

guava.version=27.0-jre

wagon-http.version=1.0-beta-2

xmlenc.version=0.52

xerces.version=1.4.4
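A note on annotating this file: in the .properties format, a `#` only starts a comment at the beginning of a line. Text written after a value on the same line becomes part of the value. The sketch below demonstrates this with `java.util.Properties`, on the assumption that the build loads libraries.properties with those standard semantics. The class and method names (`InlineCommentCheck`, `parse`) are made up for the illustration.

```java
import java.io.IOException;
import java.io.StringReader;
import java.util.Properties;

// Shows how java.util.Properties treats a "#" that follows a value.
final class InlineCommentCheck {

    // Parses .properties text the same way Properties.load reads a file.
    static Properties parse(String text) throws IOException {
        Properties props = new Properties();
        props.load(new StringReader(text));
        return props;
    }

    public static void main(String[] args) throws IOException {
        // A note on the same line is kept as part of the value.
        Properties inline = parse("hadoop.version=3.3.1 # my version\n");
        System.out.println("[" + inline.getProperty("hadoop.version") + "]");
        // prints [3.3.1 # my version]

        // A note on its own line is skipped as a comment.
        Properties separate = parse("# my version\nhadoop.version=3.3.1\n");
        System.out.println("[" + separate.getProperty("hadoop.version") + "]");
        // prints [3.3.1]
    }
}
```

In this walkthrough the ant command also passes -Dhadoop.version=3.3.1, and properties given on the Ant command line take precedence over values loaded from files, so the file value for that key is not the one the build ends up using. Keeping notes on their own line is still the safer habit for the other entries.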

Open build.xml under D:\eclipse-hadoop3x-master\src\contrib\eclipse-plugin and edit it as follows:

<?xml version="1.0" encoding="UTF-8" standalone="no"?>

<!--

Licensed to the Apache Software Foundation (ASF) under one or more

contributor license agreements. See the NOTICE file distributed with

this work for additional information regarding copyright ownership.

The ASF licenses this file to You under the Apache License, Version 2.0

(the "License"); you may not use this file except in compliance with

the License. You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

-->

<project default="jar" name="eclipse-plugin">

<import file="../build-contrib.xml"/>

<path id="eclipse-sdk-jars">

<fileset dir="${eclipse.home}/plugins/">

<include name="org.eclipse.ui*.jar"/>

<include name="org.eclipse.jdt*.jar"/>

<include name="org.eclipse.core*.jar"/>

<include name="org.eclipse.equinox*.jar"/>

<include name="org.eclipse.debug*.jar"/>

<include name="org.eclipse.osgi*.jar"/>

<include name="org.eclipse.swt*.jar"/>

<include name="org.eclipse.jface*.jar"/>

<include name="org.eclipse.team.cvs.ssh2*.jar"/>

<include name="com.jcraft.jsch*.jar"/>

</fileset>

</path>

<path id="hadoop-sdk-jars">

<fileset dir="${hadoop.home}/share/hadoop/mapreduce">

<include name="hadoop*.jar"/>

</fileset>

<fileset dir="${hadoop.home}/share/hadoop/hdfs">

<include name="hadoop*.jar"/>

</fileset>

<fileset dir="${hadoop.home}/share/hadoop/common">

<include name="hadoop*.jar"/>

</fileset>

</path>

<!-- Override classpath to include Eclipse SDK jars -->

<path id="classpath">

<pathelement location="${build.classes}"/>

<!--pathelement location="${hadoop.root}/build/classes"/-->

<path refid="eclipse-sdk-jars"/>

<path refid="hadoop-sdk-jars"/>

<!-- new add -->

<fileset dir="${hadoop.root}">

<include name="**/*.jar" />

</fileset>

</path>

<!-- Skip building if eclipse.home is unset. -->

<target name="check-contrib" unless="eclipse.home">

<property name="skip.contrib" value="yes"/>

<echo message="eclipse.home unset: skipping eclipse plugin"/>

</target>

<target name="compile" unless="skip.contrib">

<!--<target name="compile" depends="init, ivy-retrieve-common" unless="skip.contrib">-->

<echo message="contrib: ${name}"/>

<javac

encoding="${build.encoding}"

srcdir="${src.dir}"

includes="**/*.java"

destdir="${build.classes}"

debug="${javac.debug}"

deprecation="${javac.deprecation}"

includeantruntime="on">

<classpath refid="classpath"/>

</javac>

</target>

<!-- Override jar target to specify manifest -->

<target name="jar" depends="compile" unless="skip.contrib">

<mkdir dir="${build.dir}/lib"/>

<copy todir="${build.dir}/lib/" verbose="true">

<fileset dir="${hadoop.home}/share/hadoop/mapreduce">

<include name="hadoop*.jar"/>

</fileset>

</copy>

<copy todir="${build.dir}/lib/" verbose="true">

<fileset dir="${hadoop.home}/share/hadoop/common">

<include name="hadoop*.jar"/>

</fileset>

</copy>

<copy todir="${build.dir}/lib/" verbose="true">

<fileset dir="${hadoop.home}/share/hadoop/hdfs">

<include name="hadoop*.jar"/>

</fileset>

</copy>

<copy todir="${build.dir}/lib/" verbose="true">

<fileset dir="${hadoop.home}/share/hadoop/yarn">

<include name="hadoop*.jar"/>

</fileset>

</copy>

<copy todir="${build.dir}/classes" verbose="true">

<fileset dir="${root}/src/java">

<include name="*.xml"/>

</fileset>

</copy>

<copy file="${hadoop.home}/share/hadoop/common/lib/protobuf-java-${protobuf.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/log4j-${log4j.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/commons-cli-${commons-cli.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/commons-configuration2-2.1.1.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/commons-lang3-3.7.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/commons-collections-${commons-collections.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/jackson-core-asl-${jackson.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/jackson-mapper-asl-${jackson.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/slf4j-log4j12-${slf4j-log4j12.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/slf4j-api-${slf4j-api.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/guava-${guava.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/hadoop-auth-${hadoop.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/netty-${netty.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/javax.servlet-api-3.1.0.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/commons-io-2.8.0.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/htrace-core4-${htrace.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/woodstox-core-5.3.0.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/stax2-api-4.2.1.jar" todir="${build.dir}/lib" verbose="true"/>

<jar

jarfile="${build.dir}/hadoop-${name}-${hadoop.version}.jar"

manifest="${root}/META-INF/MANIFEST.MF">

<manifest>

<attribute name="Bundle-ClassPath"

value="classes/,

lib/hadoop-mapreduce-client-core-${hadoop.version}.jar,

lib/hadoop-mapreduce-client-common-${hadoop.version}.jar,

lib/hadoop-mapreduce-client-jobclient-${hadoop.version}.jar,

lib/hadoop-auth-${hadoop.version}.jar,

lib/hadoop-common-${hadoop.version}.jar,

lib/hadoop-hdfs-${hadoop.version}.jar,

lib/protobuf-java-${protobuf.version}.jar,

lib/log4j-${log4j.version}.jar,

lib/commons-cli-${commons-cli.version}.jar,

lib/commons-configuration2-2.1.1.jar,

lib/commons-httpclient-${commons-httpclient.version}.jar,

lib/commons-lang3-3.7.jar,

lib/commons-collections-${commons-collections.version}.jar,

lib/jackson-core-asl-${jackson.version}.jar,

lib/jackson-mapper-asl-${jackson.version}.jar,

lib/slf4j-log4j12-${slf4j-log4j12.version}.jar,

lib/slf4j-api-${slf4j-api.version}.jar,

lib/guava-${guava.version}.jar,

lib/hadoop-auth-${hadoop.version}.jar,

lib/netty-${netty.version}.jar,

lib/javax.servlet-api-3.1.0.jar,

lib/commons-io-2.8.0.jar,

lib/woodstox-core-5.3.0.jar,

lib/stax2-api-4.2.1.jar"/>

</manifest>

<fileset dir="${build.dir}" includes="classes/ lib/"/>

<!--fileset dir="${build.dir}" includes="*.xml"/-->

<fileset dir="${root}" includes="resources/ plugin.xml"/>

</jar>

</target>

</project>

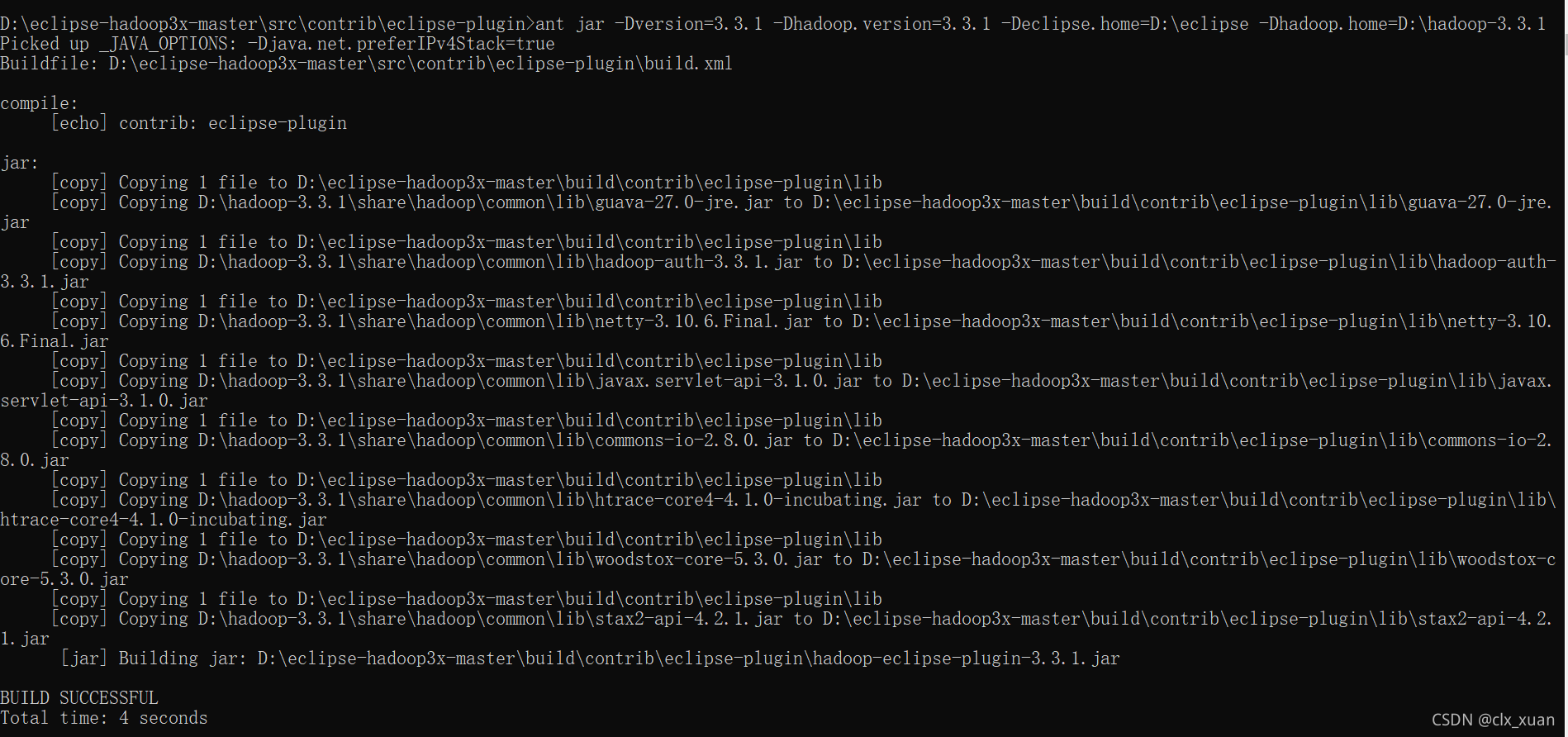

Open a Command Prompt and run the following commands:

cd /d D:\eclipse-hadoop3x-master\src\contrib\eclipse-plugin

ant jar -Dversion=3.3.1 -Dhadoop.version=3.3.1 -Declipse.home=D:\eclipse -Dhadoop.home=D:\hadoop-3.3.1

The build fails with the following error:

D:\eclipse-hadoop3x-master\src\contrib\eclipse-plugin>ant jar -Dversion=3.3.1 -Dhadoop.version=3.3.1 -Declipse.home=D:\eclipse -Dhadoop.home=D:\hadoop-3.3.1

Picked up _JAVA_OPTIONS: -Djava.net.preferIPv4Stack=true

Buildfile: D:\eclipse-hadoop3x-master\src\contrib\eclipse-plugin\build.xml

compile:

[echo] contrib: eclipse-plugin

BUILD FAILED

D:\eclipse-hadoop3x-master\src\contrib\eclipse-plugin\build.xml:80: destination directory "D:\eclipse-hadoop3x-master\build\contrib\eclipse-plugin\classes" does not exist or is not a directory

Total time: 0 seconds

The message says that D:\eclipse-hadoop3x-master\build\contrib\eclipse-plugin\classes does not exist. Since the build does not create it, create it by hand and rerun the build:

mkdir D:\eclipse-hadoop3x-master\build\contrib\eclipse-plugin\classes

ant jar -Dversion=3.3.1 -Dhadoop.version=3.3.1 -Declipse.home=D:\eclipse -Dhadoop.home=D:\hadoop-3.3.1

The build fails again, this time with:

BUILD FAILED

D:\eclipse-hadoop3x-master\src\contrib\eclipse-plugin\build.xml:123: Warning: Could not find file D:\hadoop-3.3.1\share\hadoop\common\lib\slf4j-log4j12-1.7.25.jar to copy.

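The error says the file to copy cannot be found. libraries.properties was already edited above, and the slf4j-log4j12 shipped with hadoop-3.3.1 is version 1.7.30, not 1.7.25. The build is therefore not reading the libraries.properties edited earlier under D:\eclipse-hadoop3x-master\ivy.

Apply the same changes shown above to the libraries.properties under D:\eclipse-hadoop3x-master\src\contrib\eclipse-plugin\ivy.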
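A mismatch like this can be caught before running ant. The following is a minimal sketch, not part of the original project: it loads a libraries.properties file and reports which of the version-parameterized jars named in build.xml are absent from Hadoop's common\lib folder. `LibVersionCheck` and `missingJars` are hypothetical names, and the default paths are simply the ones used in this post.

```java
import java.io.IOException;
import java.io.Reader;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.Properties;

final class LibVersionCheck {

    // Returns the jar names implied by the properties that do not exist in libDir.
    // artifacts maps a jar name prefix to the property key that holds its version.
    static List<String> missingJars(Properties props, Path libDir, Map<String, String> artifacts) {
        List<String> missing = new ArrayList<>();
        for (Map.Entry<String, String> e : artifacts.entrySet()) {
            String version = props.getProperty(e.getValue(), "").trim();
            String jarName = e.getKey() + "-" + version + ".jar";
            if (!Files.isRegularFile(libDir.resolve(jarName))) {
                missing.add(jarName);
            }
        }
        return missing;
    }

    public static void main(String[] args) throws IOException {
        // Defaults follow the layout in this post; pass other paths as arguments.
        Path propsFile = Paths.get(args.length > 0 ? args[0]
                : "D:\\eclipse-hadoop3x-master\\src\\contrib\\eclipse-plugin\\ivy\\libraries.properties");
        Path libDir = Paths.get(args.length > 1 ? args[1]
                : "D:\\hadoop-3.3.1\\share\\hadoop\\common\\lib");

        Properties props = new Properties();
        try (Reader in = Files.newBufferedReader(propsFile, StandardCharsets.UTF_8)) {
            props.load(in);
        }

        // Some of the jars that build.xml copies via ${...version} properties.
        Map<String, String> artifacts = new LinkedHashMap<>();
        artifacts.put("protobuf-java", "protobuf.version");
        artifacts.put("log4j", "log4j.version");
        artifacts.put("slf4j-api", "slf4j-api.version");
        artifacts.put("slf4j-log4j12", "slf4j-log4j12.version");
        artifacts.put("guava", "guava.version");
        artifacts.put("netty", "netty.version");

        for (String jar : missingJars(props, libDir, artifacts)) {
            System.out.println("missing: " + jar);
        }
    }
}
```

Running it against each of the two libraries.properties files in turn shows which one still carries stale versions.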

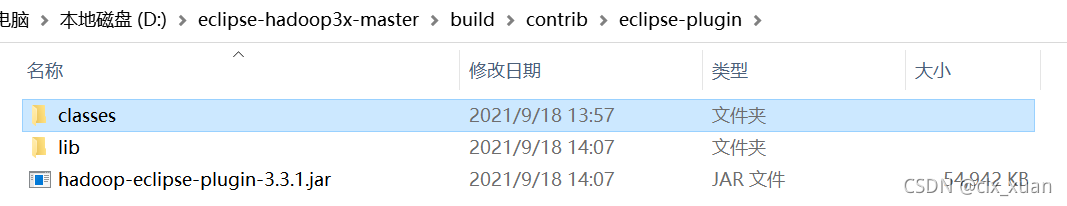

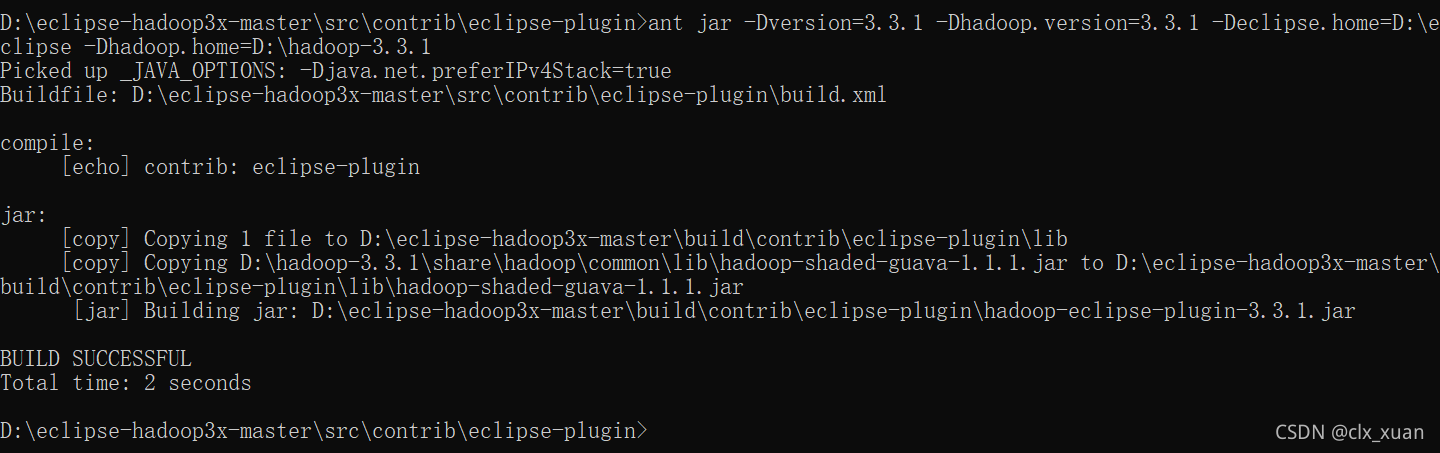

Run the ant build command again. This time the build succeeds and the jar is generated.

4. Configure the plugin

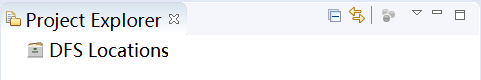

Copy the generated jar, hadoop-eclipse-plugin-3.3.1.jar, from D:\eclipse-hadoop3x-master\build\contrib\eclipse-plugin to D:\eclipse\plugins, then restart Eclipse. A DFS Locations icon now appears in the Project Explorer.

Next, open Window -> Preferences -> Hadoop Map/Reduce and set the Hadoop installation path, as shown below.

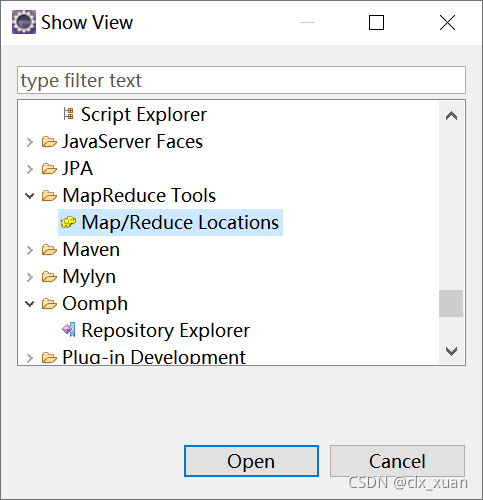

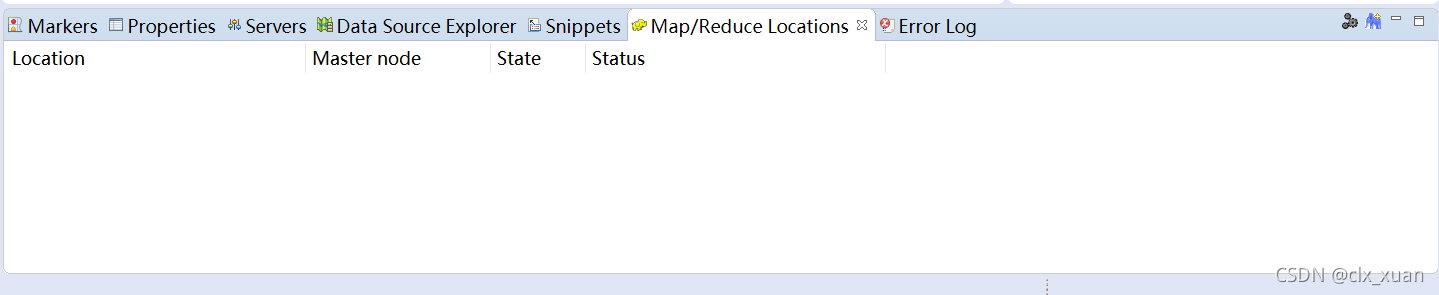

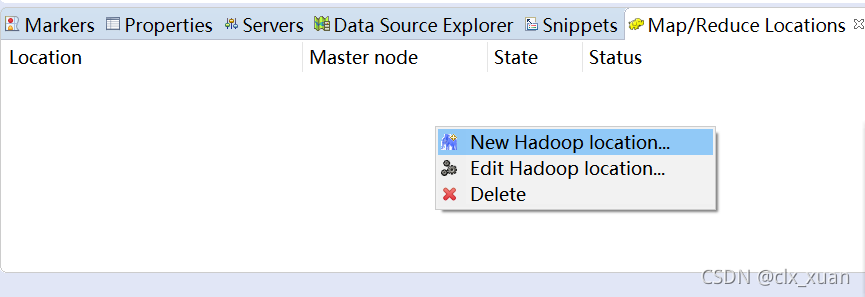

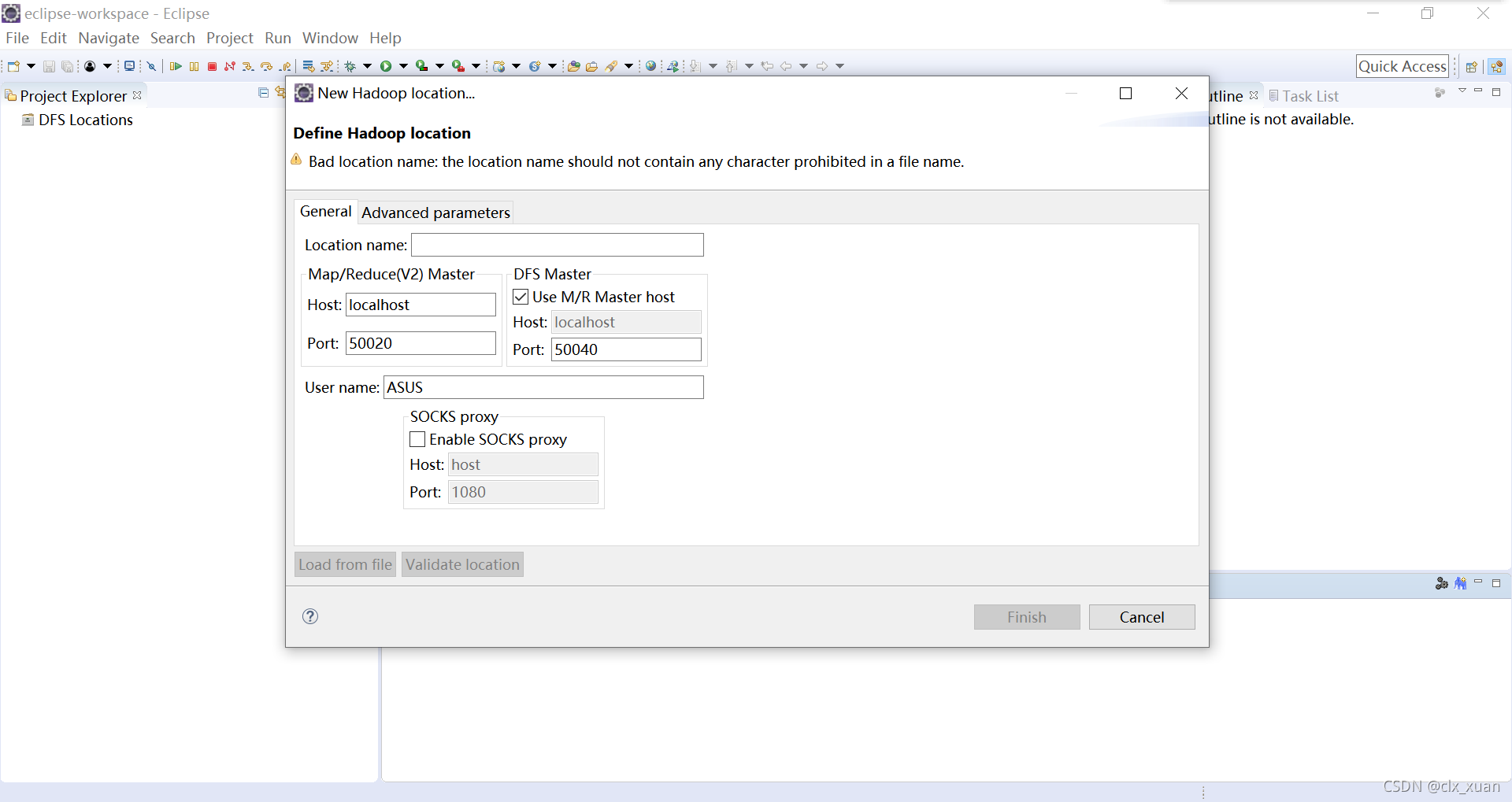

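Then choose Window -> Show View -> Other... and open the Map/Reduce Locations view.

Right-click an empty area of the Map/Reduce Locations view and choose New Hadoop Location. Here a new problem shows up: clicking New Hadoop Location does nothing.

5. Fix the unresponsive New Hadoop Location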

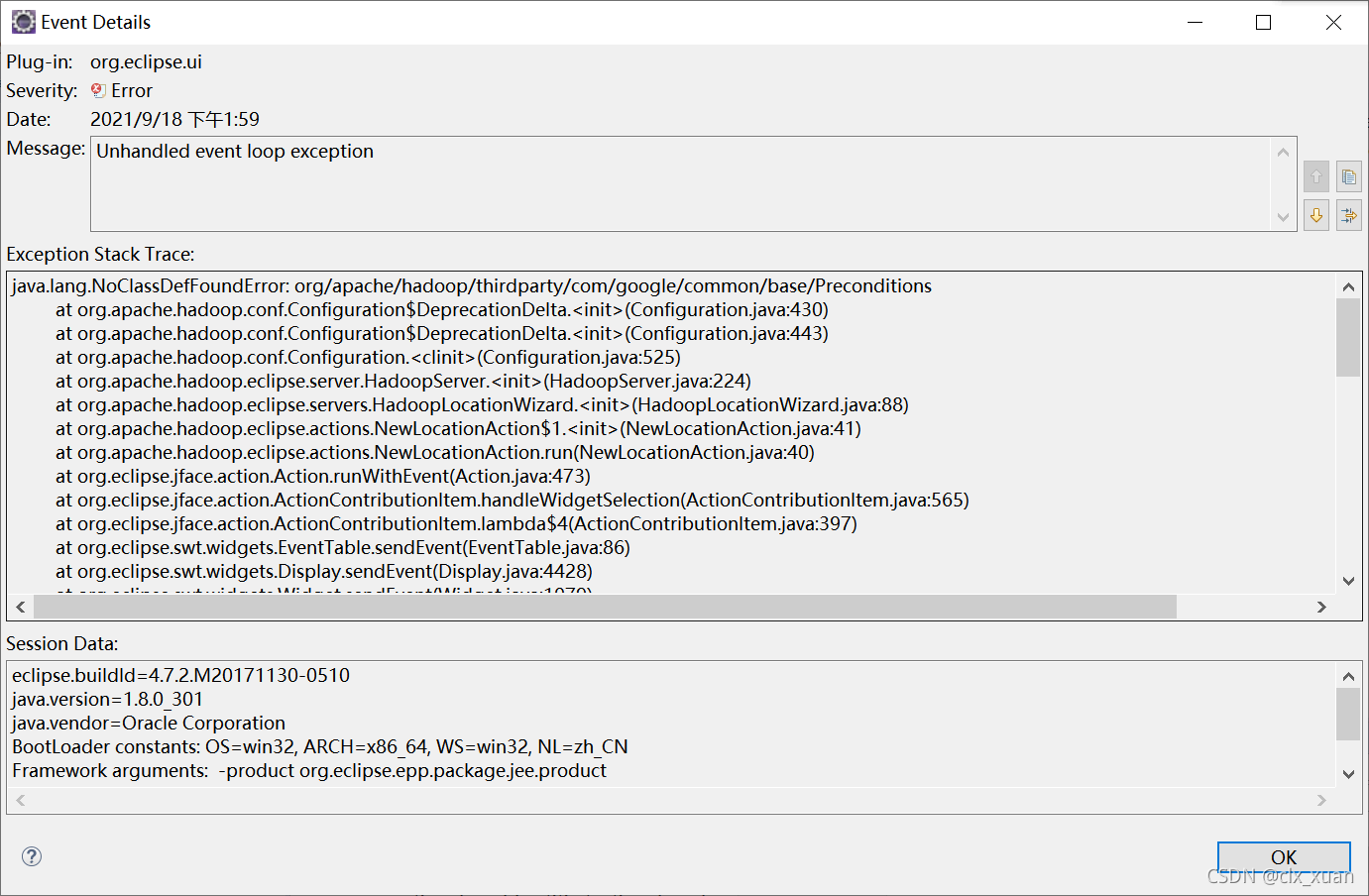

Open the Eclipse Error Log to find out why nothing happens.

The relevant error is:

Caused by: java.lang.ClassNotFoundException: org.apache.hadoop.thirdparty.com.google.common.base.Preconditions cannot be found by org.apache.hadoop.eclipse_0.18.0

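I first tried to locate the missing class with Open Type, but for some reason the search did not work. A Baidu search showed that this class normally lives in the guava jar. Since guava had already been copied into the plugin, the missing piece was probably a guava-related jar. This post pointed me in the right direction: https://blog.csdn.net/zimiao552147572/article/details/108230569

The org.apache.hadoop.thirdparty prefix in the class name points to a relocated (shaded) copy of Guava, so my guess was that the missing jar is hadoop-shaded-guava-1.1.1.jar. I therefore tried copying that jar to ${build.dir}/lib and adding it to Bundle-ClassPath.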
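Instead of guessing, the jar that provides a class can be found by scanning the Hadoop distribution. This is a minimal sketch assuming the directory layout used in this post. `FindClassInJars` and `jarsContaining` are illustrative names, not part of Hadoop or Eclipse.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.Stream;
import java.util.zip.ZipFile;

final class FindClassInJars {

    // Returns the sorted file names of all jars under root (searched recursively)
    // that contain the given fully qualified class.
    static List<String> jarsContaining(Path root, String className) throws IOException {
        String entry = className.replace('.', '/') + ".class";
        List<Path> jars;
        try (Stream<Path> walk = Files.walk(root)) {
            jars = walk
                    .filter(p -> Files.isRegularFile(p)
                            && p.getFileName().toString().endsWith(".jar"))
                    .collect(Collectors.toList());
        }
        List<String> hits = new ArrayList<>();
        for (Path jar : jars) {
            // A jar is a zip archive, so a class is just an entry with a known path.
            try (ZipFile zip = new ZipFile(jar.toFile())) {
                if (zip.getEntry(entry) != null) {
                    hits.add(jar.getFileName().toString());
                }
            }
        }
        Collections.sort(hits);
        return hits;
    }

    public static void main(String[] args) throws IOException {
        Path root = Paths.get(args.length > 0 ? args[0]
                : "D:\\hadoop-3.3.1\\share\\hadoop\\common");
        String className = args.length > 1 ? args[1]
                : "org.apache.hadoop.thirdparty.com.google.common.base.Preconditions";
        for (String jar : jarsContaining(root, className)) {
            System.out.println(jar);
        }
    }
}
```

Because the search is recursive, it also covers the lib subfolder, which is easy to overlook when browsing by hand.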

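Add the following two lines to build.xml. The copy task goes next to the other copy tasks in the jar target, and the lib/ entry goes into the Bundle-ClassPath value: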

<copy file="${hadoop.home}/share/hadoop/common/lib/hadoop-shaded-guava-1.1.1.jar" todir="${build.dir}/lib" verbose="true"/>

lib/hadoop-shaded-guava-1.1.1.jar,

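Run the build command again in cmd.

Copy the newly generated jar into Eclipse's plugins folder, overwriting the old jar, restart Eclipse, and try New Hadoop Location again. The problem is solved. ★,°:.☆( ̄▽ ̄)/$:.°★

While working on this I switched Eclipse versions several times and also swapped out the eclipse-hadoop-plugin package. When I first suspected that hadoop-shaded-guava-1.1.1.jar was missing, I assumed the jar had already been copied to ${build.dir}/lib and only lacked a classpath entry, so I only added it to Bundle-ClassPath. That still did not work.

After a good deal more time, I happened to notice that hadoop-shaded-guava-1.1.1.jar actually sits in the lib folder under D:\hadoop-3.3.1\share\hadoop\common. The hadoop*.jar filesets in build.xml only match jars directly inside share\hadoop\common, not in its lib subfolder, so the jar was never copied into the generated plugin and needs its own copy task. Once I added a copy task for the missing jar, the problem went away.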
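The takeaway is that a Bundle-ClassPath entry only helps if the jar it names is actually packaged inside the plugin. That can be checked mechanically. The sketch below reads the Bundle-ClassPath header from a built plugin jar and lists entries with no matching file inside it. `BundleClassPathCheck` and `unresolved` are hypothetical names, and the default path assumes the plugin location used in this post.

```java
import java.io.IOException;
import java.util.ArrayList;
import java.util.Enumeration;
import java.util.HashSet;
import java.util.List;
import java.util.Set;
import java.util.jar.JarEntry;
import java.util.jar.JarFile;
import java.util.jar.Manifest;

final class BundleClassPathCheck {

    // Returns the Bundle-ClassPath entries that match nothing in packagedNames.
    // A directory entry such as "classes/" matches if any packaged path starts with it.
    static List<String> unresolved(String bundleClassPath, Set<String> packagedNames) {
        List<String> missing = new ArrayList<>();
        for (String raw : bundleClassPath.split(",")) {
            String entry = raw.trim();
            if (entry.isEmpty()) {
                continue; // e.g. left behind by a trailing comma
            }
            boolean found = false;
            if (entry.endsWith("/")) {
                for (String name : packagedNames) {
                    if (name.startsWith(entry)) {
                        found = true;
                        break;
                    }
                }
            } else {
                found = packagedNames.contains(entry);
            }
            if (!found) {
                missing.add(entry);
            }
        }
        return missing;
    }

    public static void main(String[] args) throws IOException {
        String jarPath = args.length > 0 ? args[0]
                : "D:\\eclipse\\plugins\\hadoop-eclipse-plugin-3.3.1.jar";
        try (JarFile jar = new JarFile(jarPath)) {
            Manifest manifest = jar.getManifest();
            String classPath = manifest == null ? null
                    : manifest.getMainAttributes().getValue("Bundle-ClassPath");
            if (classPath == null) {
                System.out.println("no Bundle-ClassPath header found");
                return;
            }
            Set<String> names = new HashSet<>();
            for (Enumeration<JarEntry> e = jar.entries(); e.hasMoreElements();) {
                names.add(e.nextElement().getName());
            }
            for (String entry : unresolved(classPath, names)) {
                System.out.println("not packaged: " + entry);
            }
        }
    }
}
```

The same check also flags entries whose file name is misspelled in the manifest, since a misspelled name matches no packaged jar.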

Here is the modified build.xml:

<?xml version="1.0" encoding="UTF-8" standalone="no"?>

<!--

Licensed to the Apache Software Foundation (ASF) under one or more

contributor license agreements. See the NOTICE file distributed with

this work for additional information regarding copyright ownership.

The ASF licenses this file to You under the Apache License, Version 2.0

(the "License"); you may not use this file except in compliance with

the License. You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

-->

<project default="jar" name="eclipse-plugin">

<import file="../build-contrib.xml"/>

<path id="eclipse-sdk-jars">

<fileset dir="${eclipse.home}/plugins/">

<include name="org.eclipse.ui*.jar"/>

<include name="org.eclipse.jdt*.jar"/>

<include name="org.eclipse.core*.jar"/>

<include name="org.eclipse.equinox*.jar"/>

<include name="org.eclipse.debug*.jar"/>

<include name="org.eclipse.osgi*.jar"/>

<include name="org.eclipse.swt*.jar"/>

<include name="org.eclipse.jface*.jar"/>

<include name="org.eclipse.team.cvs.ssh2*.jar"/>

<include name="com.jcraft.jsch*.jar"/>

</fileset>

</path>

<path id="hadoop-sdk-jars">

<fileset dir="${hadoop.home}/share/hadoop/mapreduce">

<include name="hadoop*.jar"/>

</fileset>

<fileset dir="${hadoop.home}/share/hadoop/hdfs">

<include name="hadoop*.jar"/>

</fileset>

<fileset dir="${hadoop.home}/share/hadoop/common">

<include name="hadoop*.jar"/>

</fileset>

</path>

<!-- Override classpath to include Eclipse SDK jars -->

<path id="classpath">

<pathelement location="${build.classes}"/>

<!--pathelement location="${hadoop.root}/build/classes"/-->

<path refid="eclipse-sdk-jars"/>

<path refid="hadoop-sdk-jars"/>

<!-- new add -->

<fileset dir="${hadoop.root}">

<include name="**/*.jar" />

</fileset>

</path>

<!-- Skip building if eclipse.home is unset. -->

<target name="check-contrib" unless="eclipse.home">

<property name="skip.contrib" value="yes"/>

<echo message="eclipse.home unset: skipping eclipse plugin"/>

</target>

<target name="compile" unless="skip.contrib">

<!--<target name="compile" depends="init, ivy-retrieve-common" unless="skip.contrib">-->

<echo message="contrib: ${name}"/>

<javac

encoding="${build.encoding}"

srcdir="${src.dir}"

includes="**/*.java"

destdir="${build.classes}"

debug="${javac.debug}"

deprecation="${javac.deprecation}"

includeantruntime="on">

<classpath refid="classpath"/>

</javac>

</target>

<!-- Override jar target to specify manifest -->

<target name="jar" depends="compile" unless="skip.contrib">

<mkdir dir="${build.dir}/lib"/>

<copy todir="${build.dir}/lib/" verbose="true">

<fileset dir="${hadoop.home}/share/hadoop/mapreduce">

<include name="hadoop*.jar"/>

</fileset>

</copy>

<copy todir="${build.dir}/lib/" verbose="true">

<fileset dir="${hadoop.home}/share/hadoop/common">

<include name="hadoop*.jar"/>

</fileset>

</copy>

<copy todir="${build.dir}/lib/" verbose="true">

<fileset dir="${hadoop.home}/share/hadoop/hdfs">

<include name="hadoop*.jar"/>

</fileset>

</copy>

<copy todir="${build.dir}/lib/" verbose="true">

<fileset dir="${hadoop.home}/share/hadoop/yarn">

<include name="hadoop*.jar"/>

</fileset>

</copy>

<copy todir="${build.dir}/classes" verbose="true">

<fileset dir="${root}/src/java">

<include name="*.xml"/>

</fileset>

</copy>

<copy file="${hadoop.home}/share/hadoop/common/lib/protobuf-java-${protobuf.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/log4j-${log4j.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/commons-cli-${commons-cli.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/commons-configuration2-2.1.1.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/commons-lang3-3.7.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/commons-collections-${commons-collections.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/jackson-core-asl-${jackson.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/jackson-mapper-asl-${jackson.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/slf4j-log4j12-${slf4j-log4j12.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/slf4j-api-${slf4j-api.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/guava-${guava.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/hadoop-auth-${hadoop.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/netty-${netty.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/javax.servlet-api-3.1.0.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/commons-io-2.8.0.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/htrace-core4-${htrace.version}.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/woodstox-core-5.3.0.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/stax2-api-4.2.1.jar" todir="${build.dir}/lib" verbose="true"/>

<copy file="${hadoop.home}/share/hadoop/common/lib/hadoop-shaded-guava-1.1.1.jar" todir="${build.dir}/lib" verbose="true"/>

<jar

jarfile="${build.dir}/hadoop-${name}-${hadoop.version}.jar"

manifest="${root}/META-INF/MANIFEST.MF">

<manifest>

<attribute name="Bundle-ClassPath"

value="classes/,

lib/hadoop-mapreduce-client-core-${hadoop.version}.jar,

lib/hadoop-mapreduce-client-common-${hadoop.version}.jar,

lib/hadoop-mapreduce-client-jobclient-${hadoop.version}.jar,

lib/hadoop-auth-${hadoop.version}.jar,

lib/hadoop-common-${hadoop.version}.jar,

lib/hadoop-hdfs-${hadoop.version}.jar,

lib/protobuf-java-${protobuf.version}.jar,

lib/log4j-${log4j.version}.jar,

lib/commons-cli-${commons-cli.version}.jar,

lib/commons-configuration2-2.1.1.jar,

lib/commons-httpclient-${commons-httpclient.version}.jar,

lib/commons-lang3-3.7.jar,

lib/commons-collections-${commons-collections.version}.jar,

lib/jackson-core-asl-${jackson.version}.jar,

lib/jackson-mapper-asl-${jackson.version}.jar,

lib/slf4j-log4j12-${slf4j-log4j12.version}.jar,

lib/slf4j-api-${slf4j-api.version}.jar,

lib/guava-${guava.version}.jar,

lib/hadoop-auth-${hadoop.version}.jar,

lib/netty-${netty.version}.jar,

lib/javax.servlet-api-3.1.0.jar,

lib/commons-io-2.8.0.jar,

lib/woodstox-core-5.3.0.jar,

lib/stax2-api-4.2.1.jar,

lib/hadoop-shaded-guava-1.1.1.jar"/>

</manifest>

<fileset dir="${build.dir}" includes="classes/ lib/"/>

<!--fileset dir="${build.dir}" includes="*.xml"/-->

<fileset dir="${root}" includes="resources/ plugin.xml"/>

</jar>

</target>

</project>

I referred to the following links while working through this:

https://zhuanlan.zhihu.com/p/38630695

https://blog.csdn.net/zimiao552147572/article/details/108230569

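6. Other problems

In actual use I ran into further problems:

- DFS Locations reports Error: No FileSystem for Scheme:hdfs

- The plugin cannot connect to the virtual machine and reports a missing class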
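These were not resolved in this post. As a possible starting point for the first one: Hadoop discovers FileSystem implementations through Java's service-loader files, so it may help to see which jars in the plugin's lib folder declare any. The sketch below lists those declarations. `FileSystemServiceScan` and `declaredFileSystems` are hypothetical names, the default folder assumes the build layout used above, and whether this leads to the actual cause is untested.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.nio.charset.StandardCharsets;
import java.nio.file.DirectoryStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;
import java.util.zip.ZipEntry;
import java.util.zip.ZipFile;

final class FileSystemServiceScan {

    static final String SERVICE_FILE = "META-INF/services/org.apache.hadoop.fs.FileSystem";

    // Returns the implementation class names declared in the jar's service file,
    // or an empty list if the jar has no such file.
    static List<String> declaredFileSystems(Path jar) throws IOException {
        List<String> impls = new ArrayList<>();
        try (ZipFile zip = new ZipFile(jar.toFile())) {
            ZipEntry entry = zip.getEntry(SERVICE_FILE);
            if (entry == null) {
                return impls;
            }
            try (BufferedReader reader = new BufferedReader(
                    new InputStreamReader(zip.getInputStream(entry), StandardCharsets.UTF_8))) {
                String line;
                while ((line = reader.readLine()) != null) {
                    // In service files, "#" starts a comment anywhere on the line.
                    int hash = line.indexOf('#');
                    if (hash >= 0) {
                        line = line.substring(0, hash);
                    }
                    line = line.trim();
                    if (!line.isEmpty()) {
                        impls.add(line);
                    }
                }
            }
        }
        return impls;
    }

    public static void main(String[] args) throws IOException {
        Path libDir = Paths.get(args.length > 0 ? args[0]
                : "D:\\eclipse-hadoop3x-master\\build\\contrib\\eclipse-plugin\\lib");
        try (DirectoryStream<Path> jars = Files.newDirectoryStream(libDir, "*.jar")) {
            for (Path jar : jars) {
                List<String> impls = declaredFileSystems(jar);
                if (!impls.isEmpty()) {
                    System.out.println(jar.getFileName() + " -> " + impls);
                }
            }
        }
    }
}
```

If a jar that declares an hdfs implementation is present in lib but missing from Bundle-ClassPath, that would be one candidate explanation worth testing.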

The plugin still has problems for now, so I have gone back to the 2.x version and will look into this again when I have time.

