1. Edit spark-env.sh

| cd /export/server/spark/conf |
| cp spark-env.sh.template spark-env.sh |
| vim /export/server/spark/conf/spark-env.sh |
| ## Add the following: |
| HADOOP_CONF_DIR=/export/server/hadoop/etc/hadoop |
| YARN_CONF_DIR=/export/server/hadoop/etc/hadoop |
| SPARK_HISTORY_OPTS="-Dspark.history.fs.logDirectory=hdfs://node1:8020/sparklog/ -Dspark.history.fs.cleaner.enabled=true" |
| ## Sync to the other two nodes (skip this on a single-node setup) |
| cd /export/server/spark/conf |
| scp -r spark-env.sh node2:$PWD |
| scp -r spark-env.sh node3:$PWD |

2. Edit Hadoop's yarn-site.xml

| cd /export/server/hadoop-3.3.0/etc/hadoop/ |
| vim /export/server/hadoop-3.3.0/etc/hadoop/yarn-site.xml |
| ## Add the following: |
| <configuration> |
| <!-- Location of the YARN master node --> |
| <property><name>yarn.resourcemanager.hostname</name><value>node1</value></property> |
| <property><name>yarn.nodemanager.aux-services</name><value>mapreduce_shuffle</value></property> |
| <!-- Memory allocation settings for the YARN cluster --> |
| <property><name>yarn.nodemanager.resource.memory-mb</name><value>20480</value></property> |
| <property><name>yarn.scheduler.minimum-allocation-mb</name><value>2048</value></property> |
| <property><name>yarn.nodemanager.vmem-pmem-ratio</name><value>2.1</value></property> |
| <!-- Enable log aggregation --> |
| <property><name>yarn.log-aggregation-enable</name><value>true</value></property> |
| <!-- How long aggregated logs are kept on HDFS, in seconds (604800 = 7 days) --> |
| <property><name>yarn.log-aggregation.retain-seconds</name><value>604800</value></property> |
| <!-- Address of the YARN history server --> |
| <property><name>yarn.log.server.url</name><value>http://node1:19888/jobhistory/logs</value></property> |
| <!-- Disable YARN memory checks --> |
| <property><name>yarn.nodemanager.pmem-check-enabled</name><value>false</value></property> |
| <property><name>yarn.nodemanager.vmem-check-enabled</name><value>false</value></property> |
| </configuration> |

Sync it to the other two nodes:

| cd /export/server/hadoop/etc/hadoop |
| scp -r yarn-site.xml node2:$PWD |
| scp -r yarn-site.xml node3:$PWD |
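To make the memory settings more concrete, here is a minimal Python sketch that reads the three memory-related properties from a `yarn-site.xml` fragment and works out how many minimum-size containers fit on one NodeManager. The helper names (`read_properties`, `max_min_containers`) are hypothetical, and the XML is embedded inline purely for illustration; on a real cluster you would parse the actual file instead.

```python
import xml.etree.ElementTree as ET

# Inline copy of the memory-related properties (illustration only).
YARN_SITE = """
<configuration>
  <property>
    <name>yarn.nodemanager.resource.memory-mb</name>
    <value>20480</value>
  </property>
  <property>
    <name>yarn.scheduler.minimum-allocation-mb</name>
    <value>2048</value>
  </property>
  <property>
    <name>yarn.nodemanager.vmem-pmem-ratio</name>
    <value>2.1</value>
  </property>
</configuration>
"""


def read_properties(xml_text):
    """Return a {name: value} dict from Hadoop-style <property> elements."""
    root = ET.fromstring(xml_text)
    return {
        prop.findtext("name").strip(): prop.findtext("value").strip()
        for prop in root.iter("property")
    }


def max_min_containers(props):
    """How many minimum-size containers fit in one NodeManager's memory."""
    node_mb = int(props["yarn.nodemanager.resource.memory-mb"])
    min_mb = int(props["yarn.scheduler.minimum-allocation-mb"])
    return node_mb // min_mb


props = read_properties(YARN_SITE)
containers = max_min_containers(props)
# Virtual-memory ceiling for a minimum-size container, if the vmem check were on.
vmem_limit_mb = (
    int(props["yarn.scheduler.minimum-allocation-mb"])
    * float(props["yarn.nodemanager.vmem-pmem-ratio"])
)
print("minimum-size containers per node:", containers)
print("vmem limit per minimum-size container (MB):", vmem_limit_mb)
```

Note that the virtual-memory ratio only matters when `yarn.nodemanager.vmem-check-enabled` is `true`; with the check disabled as configured here, the ratio is not enforced.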

3. Set the Spark history server address

| cd /export/server/spark/conf |
| cp spark-defaults.conf.template spark-defaults.conf |
| vim spark-defaults.conf |
| ## Add the following: |
| spark.eventLog.enabled true |
| spark.eventLog.dir hdfs://node1:8020/sparklog/ |
| spark.eventLog.compress true |
| spark.yarn.historyServer.address node1:18080 |
| ## After configuring, create the sparklog directory on HDFS |
| hdfs dfs -mkdir -p /sparklog |
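The event log location is configured in two places: `spark.eventLog.dir` in `spark-defaults.conf` (where applications write their logs) and `-Dspark.history.fs.logDirectory` in `SPARK_HISTORY_OPTS` (where the History Server reads them). If the two differ, the History Server has nothing to show. Below is a minimal Python sketch of a consistency check, assuming both settings are pasted in as strings; the function names are hypothetical helpers, not part of Spark.

```python
import re

# Values copied from the configuration steps (inline for illustration).
SPARK_DEFAULTS = """
spark.eventLog.enabled            true
spark.eventLog.dir                hdfs://node1:8020/sparklog/
spark.eventLog.compress           true
spark.yarn.historyServer.address  node1:18080
"""

SPARK_HISTORY_OPTS = (
    "-Dspark.history.fs.logDirectory=hdfs://node1:8020/sparklog/ "
    "-Dspark.history.fs.cleaner.enabled=true"
)


def parse_spark_defaults(text):
    """Parse 'key <whitespace> value' lines, skipping blanks and # comments."""
    conf = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        parts = line.split(None, 1)
        if len(parts) == 2:
            conf[parts[0]] = parts[1].strip()
    return conf


def parse_java_opts(opts):
    """Extract -Dkey=value pairs from a JVM options string."""
    return dict(re.findall(r"-D([^=\s]+)=(\S+)", opts))


def same_dir(a, b):
    """Compare two directory URIs, ignoring a trailing slash."""
    return a.rstrip("/") == b.rstrip("/")


defaults = parse_spark_defaults(SPARK_DEFAULTS)
history = parse_java_opts(SPARK_HISTORY_OPTS)
consistent = same_dir(
    defaults["spark.eventLog.dir"],
    history["spark.history.fs.logDirectory"],
)
print("event log directories consistent:", consistent)
```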

4. Set the log level:

| cd /export/server/spark/conf |
| cp log4j.properties.template log4j.properties |
| vim log4j.properties |
| ## Change the following: |
| log4j.rootCategory=WARN, console |

Sync to the other nodes:

| cd /export/server/spark/conf |
| scp -r spark-defaults.conf log4j.properties node2:$PWD |
| scp -r spark-defaults.conf log4j.properties node3:$PWD |
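The same copy-to-every-node pattern recurs in several steps. As a convenience, here is a small Python sketch that builds the equivalent `scp` commands for a list of hosts and, by default, only prints them. The function names and the `dry_run` flag are hypothetical helpers introduced here; the host names and directory are the ones used in this walkthrough.

```python
import shlex
import subprocess


def build_scp_commands(files, hosts, conf_dir):
    """Return one scp argument list per host, copying files into conf_dir there."""
    return [["scp", "-r", *files, f"{host}:{conf_dir}"] for host in hosts]


def sync(files, hosts, conf_dir, dry_run=True):
    """Print each command; actually run it only when dry_run is False."""
    for cmd in build_scp_commands(files, hosts, conf_dir):
        print(shlex.join(cmd))
        if not dry_run:
            # Run from conf_dir so the relative file names resolve.
            subprocess.run(cmd, check=True, cwd=conf_dir)


FILES = ["spark-defaults.conf", "log4j.properties"]
HOSTS = ["node2", "node3"]
CONF_DIR = "/export/server/spark/conf"

commands = build_scp_commands(FILES, HOSTS, CONF_DIR)
sync(FILES, HOSTS, CONF_DIR)  # dry run: prints the commands without copying
```

Calling `sync(..., dry_run=False)` would execute the copies, which assumes passwordless SSH between the nodes is already set up.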

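5. Configure the Spark dependency jars

When a Spark application is submitted to run on YARN, by default the Spark jars it depends on are uploaded to the YARN cluster on every submission. To save submission time and storage space, upload the Spark jars to an HDFS directory once and set a property that tells Spark applications where to find them.

| hadoop fs -mkdir -p /spark/jars/ |
| hadoop fs -put /export/server/spark/jars/* /spark/jars/ |
| ## Edit spark-defaults.conf |
| cd /export/server/spark/conf |
| vim spark-defaults.conf |
| ## Add the following: |
| spark.yarn.jars hdfs://node1:8020/spark/jars/* |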

Sync to the other nodes (no need to distribute if Spark is installed on a single node only):

| cd /export/server/spark/conf |
| scp -r spark-defaults.conf root@node2:$PWD |
| scp -r spark-defaults.conf root@node3:$PWD |

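6. Start the services

This completes the configuration for running Spark applications on YARN.

Start the services (HDFS, YARN, MRHistoryServer, and Spark HistoryServer) with the following commands:

| ## Start HDFS and YARN; run on node1 |
| start-dfs.sh |
| start-yarn.sh |
| ## or |
| start-all.sh |
| ## Note: in on-YARN mode there is no need to run Spark's own start-all.sh (use jps to check for Worker and Master processes) |
| ## Start MRHistoryServer; run on node1 |
| mr-jobhistory-daemon.sh start historyserver |
| ## Start Spark HistoryServer; run on node1 |
| /export/server/spark/sbin/start-history-server.sh |

- Spark HistoryServer web UI: http://node1:18080/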

- Test code

1. Spark's bundled sample

| cd /export/server/spark/bin |
| ./spark-submit \ |
| --master yarn \ |
| --conf "spark.pyspark.driver.python=/root/anaconda3/bin/python3" \ |
| --conf "spark.pyspark.python=/root/anaconda3/bin/python3" \ |
| /export/server/spark/examples/src/main/python/pi.py \ |
| 10 |
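For orientation, the bundled `pi.py` estimates π by Monte Carlo sampling, and the trailing `10` is the number of partitions the samples are split across. The following is a plain-Python sketch of the same calculation without Spark, so it only approximates what the example does. `estimate_pi` and its parameters are names chosen here, and the sample count per partition is an assumption made to keep the sketch fast.

```python
import random


def inside_unit_circle(rng):
    """Draw one point in the square [-1, 1] x [-1, 1]; return 1 if it falls in the unit circle."""
    x = rng.random() * 2 - 1
    y = rng.random() * 2 - 1
    return 1 if x * x + y * y <= 1 else 0


def estimate_pi(partitions, samples_per_partition=10_000, seed=0):
    """Estimate pi as 4 * (points inside the circle) / (total points)."""
    rng = random.Random(seed)
    total = partitions * samples_per_partition
    hits = 0
    # On a cluster each partition would be counted by a separate task;
    # here the partitions are simply processed one after another.
    for _ in range(partitions):
        hits += sum(inside_unit_circle(rng) for _ in range(samples_per_partition))
    return 4.0 * hits / total


pi_estimate = estimate_pi(partitions=10)
print("Pi is roughly %f" % pi_estimate)
```

On YARN, the per-partition counting is distributed across executors and the partial counts are summed, which is why a successful run prints a value close to 3.14 in the driver output.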

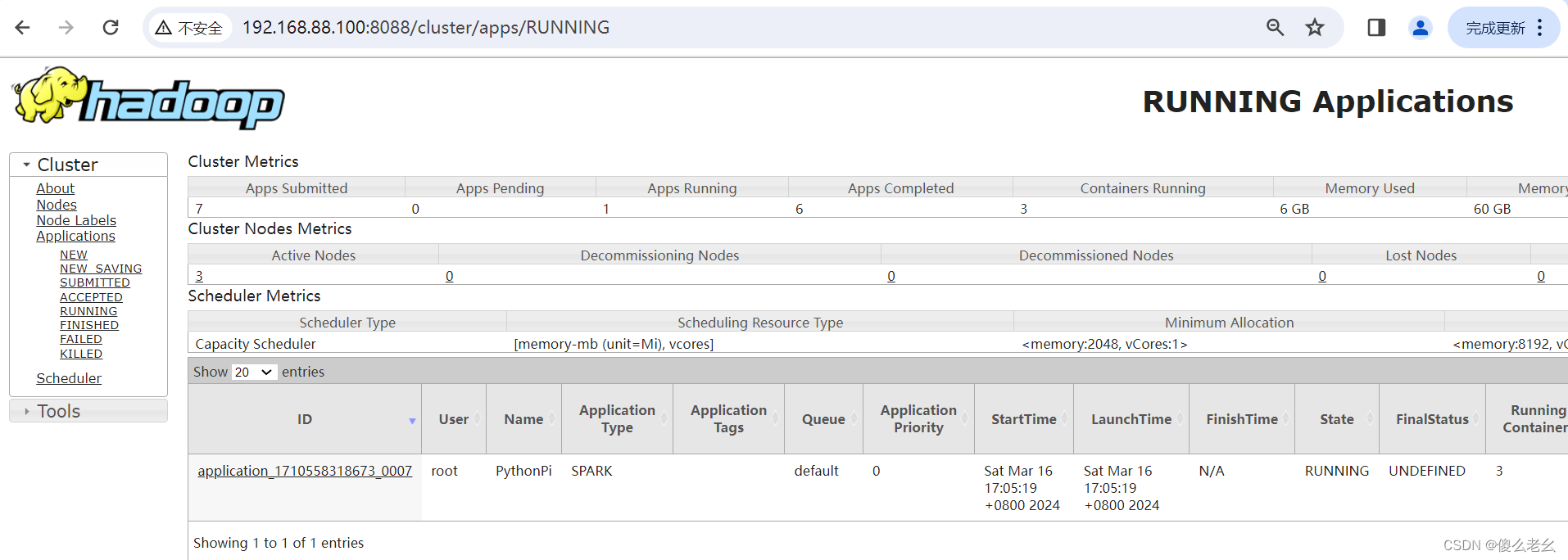

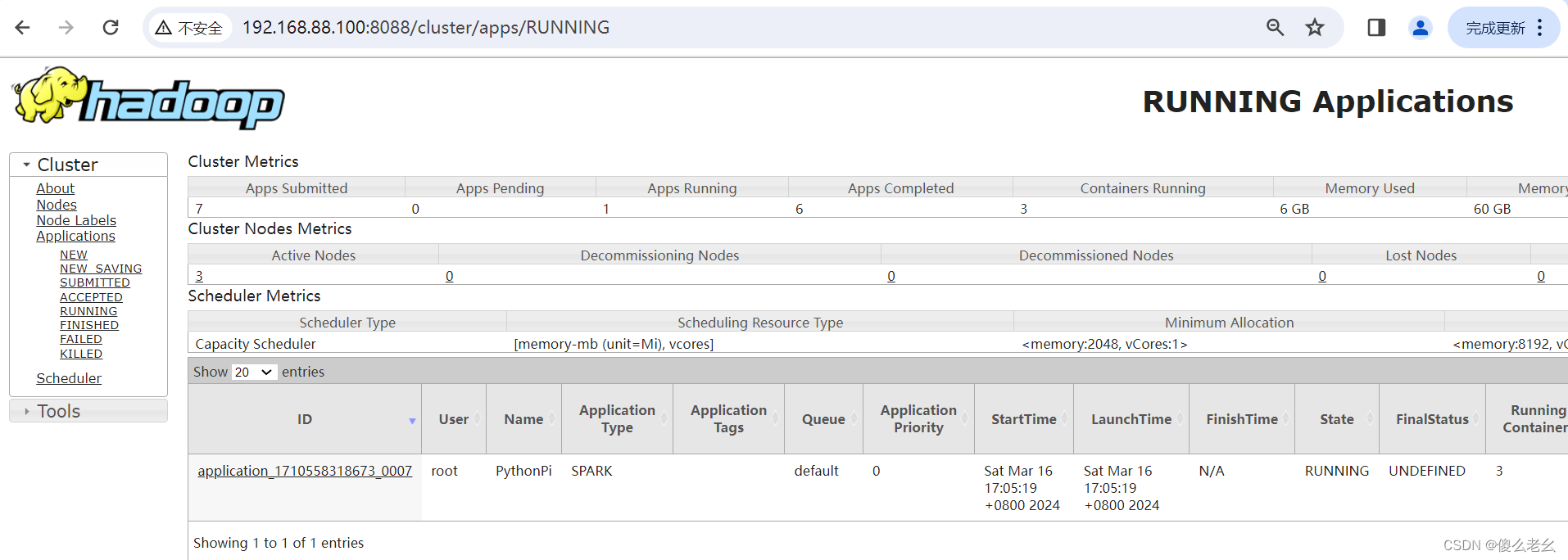

YARN web UI: http://node1:8088/
