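Preface

The paper Sequencer: Deep LSTM for Image Classification proposes a new bidirectional LSTM (BiLSTM) design for image classification with a relatively small parameter count. In my view, comparing the RNN family with CNNs and Transformers on parameter count alone is misleading; other criteria, such as computational cost, should be included as well. That said, this post focuses on reproducing the proposed Sequencer2D block.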
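
To make the point about parameter count versus computation concrete, here is a rough sketch. It counts the parameters of a bidirectional `nn.LSTM` and gives a back-of-the-envelope multiply-accumulate (MAC) estimate. The sizes (`input_size=8`, `hidden_size=16`) and the helper names `count_params` and `approx_lstm_macs` are illustrative assumptions, not values or functions from the paper, and the estimate counts only the gate matrix multiplications.

```python
import torch
from torch import nn


def count_params(module: nn.Module) -> int:
    """Total number of trainable parameters in a module."""
    return sum(p.numel() for p in module.parameters() if p.requires_grad)


def approx_lstm_macs(input_size: int, hidden_size: int, seq_len: int,
                     bidirectional: bool = True) -> int:
    """Rough MAC count for one single-layer LSTM pass over one sequence.

    Only the four gate matrix multiplications per time step are counted;
    element-wise gate operations are ignored.
    """
    per_step = 4 * hidden_size * (input_size + hidden_size)
    directions = 2 if bidirectional else 1
    return directions * seq_len * per_step


# Illustrative sizes (assumptions, not taken from the paper).
lstm = nn.LSTM(input_size=8, hidden_size=16, bidirectional=True, batch_first=True)
n_params = count_params(lstm)
macs_short = approx_lstm_macs(8, 16, seq_len=14)
macs_long = approx_lstm_macs(8, 16, seq_len=56)
print(n_params, macs_short, macs_long)
```

The parameter count does not depend on the sequence length, while the estimated computation grows linearly with it, so parameter count on its own says little about cost.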

Model structure

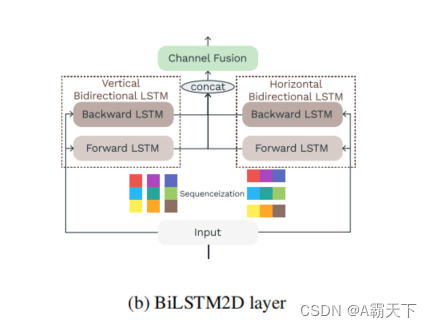

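The paper first introduces the BiLSTM2D layer. The layer reshapes the input from [B, W, H, C] into two sequences, [B, W, H*C] and [B, H, W*C], passes each through its own BiLSTM, concatenates the two outputs, and then feeds the result through a fully connected (FC) layer.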
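
The reshaping step can be sketched in isolation, following the reshapes used in the code further down. The sizes here (batch 2, an 8x8 feature map, 3 channels) and the names `seq_w` and `seq_h` are illustrative assumptions.

```python
import torch

# Illustrative sizes (assumptions): batch 2, an 8x8 feature map, 3 channels.
B, W, H, C = 2, 8, 8, 3
x = torch.randn(B, W, H, C)

# Sequence over W: each step sees one flattened [H * C] slice.
seq_w = x.reshape(B, W, H * C)

# Sequence over H: swap W and H first, then flatten [W * C] per step.
# permute returns a non-contiguous view, so reshape copies the data as needed.
seq_h = x.permute(0, 2, 1, 3).reshape(B, H, W * C)

print(seq_w.shape, seq_h.shape)
```

Each time step of `seq_w` holds everything at one position along W, and each time step of `seq_h` holds everything at one position along H, so the two BiLSTMs scan the image along different axes.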

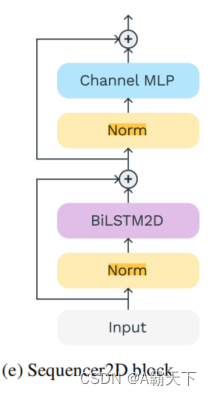

The Sequencer2D block contains two skip connections. The first branch applies LayerNorm followed by the BiLSTM2D layer, and the second applies LayerNorm followed by an MLP.
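
Written as equations, the two residual steps look roughly like this, where $\mathrm{LN}$ denotes LayerNorm. The symbols $X$ (block input), $\hat{X}$ (output of the first branch), and $Y$ (block output) are notation introduced here for illustration.

```latex
\hat{X} = X + \mathrm{BiLSTM2D}(\mathrm{LN}(X)), \qquad
Y = \hat{X} + \mathrm{MLP}(\mathrm{LN}(\hat{X}))
```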

Code implementation

BiLSTM2D layer code:

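    import torch
    from torch import nn


    class BiLSTM2D(nn.Module):
        # BiLSTM is assumed to be defined elsewhere: a bidirectional LSTM
        # wrapper that maps [B, L, input_size] to [B, L, 2 * hidden_size].
        def __init__(self, input_size, hidden_size, length):
            super().__init__()
            self.layer1 = BiLSTM(input_size, hidden_size)  # scans along W
            self.layer2 = BiLSTM(input_size, hidden_size)  # scans along H
            # Fuses the concatenated outputs back to W * H * C values.
            self.layer3 = nn.Linear(hidden_size * 4 * length, hidden_size * length)

        def forward(self, x):
            B, W, H, C = x.size()
            # The shapes only line up when W == H == length and
            # input_size == hidden_size == H * C.
            Hver = torch.reshape(x, (B, W, H * C))
            Hhor = torch.reshape(x.permute(0, 2, 1, 3), (B, H, W * C))
            Hver = self.layer1(Hver)  # [B, W, 2 * hidden_size]
            Hhor = self.layer2(Hhor)  # [B, H, 2 * hidden_size]
            # Concatenate along features, then flatten for the FC layer.
            Hall = torch.cat([Hver, Hhor], -1).permute(0, 2, 1)
            Hall = torch.reshape(Hall, (B, -1))
            Hall = self.layer3(Hall)
            Hall = torch.reshape(Hall, (B, W, H, C))
            return Hall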
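
The `BiLSTM` class used by `BiLSTM2D` is not shown in this post. A minimal sketch of what it might look like, assuming it is a thin wrapper around `nn.LSTM` with `batch_first=True` and `bidirectional=True` that returns only the per-step outputs, is given below. This is a hypothetical reconstruction, not the original helper.

```python
import torch
from torch import nn


class BiLSTM(nn.Module):
    """Hypothetical helper: a single-layer bidirectional LSTM that returns
    only the per-step outputs, shaped [B, L, 2 * hidden_size]."""

    def __init__(self, input_size: int, hidden_size: int):
        super().__init__()
        self.lstm = nn.LSTM(input_size, hidden_size,
                            batch_first=True, bidirectional=True)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        out, _ = self.lstm(x)  # discard the final (h_n, c_n) states
        return out


# Shape check with illustrative sizes: W = H = 8, C = 3, so H * C = 24.
layer = BiLSTM(input_size=24, hidden_size=24)
y = layer(torch.randn(2, 8, 24))
print(y.shape)
```

The last dimension doubles because the forward and backward hidden states are concatenated, which is why the FC layer in `BiLSTM2D` expects `hidden_size * 4 * length` inputs after the two outputs are joined.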

Sequencer2D_block code:

    class Sequencer2D_block(nn.Module):
        def __init__(self, input_size, hidden_size, length):
            super().__init__()
            # LayerNorm over the channel dimension; 3 assumes RGB input (C == 3).
            self.ln1 = torch.nn.LayerNorm(3, eps=1e-6)
            self.layer1 = BiLSTM2D(input_size, hidden_size, length)
            self.ln2 = torch.nn.LayerNorm(3, eps=1e-6)
            # Single linear layer over the flattened feature map
            # (hidden_size * length must equal W * H * C).
            self.MLP = nn.Sequential(
                nn.Linear(hidden_size * length, hidden_size * length),
                nn.ReLU())

        def forward(self, x):
            B, W, H, C = x.size()
            # First residual branch: LayerNorm + BiLSTM2D.
            x1 = self.ln1(x)
            x2 = x + self.layer1(x1)
            # Second residual branch: LayerNorm + MLP on the flattened input.
            x3 = self.MLP(torch.reshape(self.ln2(x2), (B, -1)))
            x3 = torch.reshape(x3, (B, W, H, C)) + x2
            return x3
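
The constructor arguments are tied to the input shape in a way the code does not state outright. The sketch below derives them from `W`, `H`, and `C`; `sequencer_block_args` is a hypothetical helper introduced for illustration, and the constraints it encodes are read off the reshapes in the code above. Note that `LayerNorm(3)` additionally requires `C == 3`.

```python
def sequencer_block_args(W: int, H: int, C: int) -> dict:
    """Hypothetical helper: derive constructor arguments from the input shape.

    The reshapes in the code above only line up when W == H, the LSTM
    feature size equals H * C, and hidden_size * length == W * H * C.
    """
    if W != H:
        raise ValueError("the implementation above assumes a square input (W == H)")
    return {"input_size": H * C, "hidden_size": H * C, "length": W}


args = sequencer_block_args(W=8, H=8, C=3)
# Feature sizes seen by the final nn.Linear inside BiLSTM2D.
fc_in = 4 * args["hidden_size"] * args["length"]
fc_out = args["hidden_size"] * args["length"]
print(args, fc_in, fc_out)
```

With these values, the block would be built as `Sequencer2D_block(**args)` and called on a tensor of shape `[B, 8, 8, 3]`.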
