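I have been working through Andrew Ng's Deep Learning course, and this is one of its assignments: implementing ResNet.

Coursera is very slow for me, so I had to find the quizzes and assignments on other people's blogs.

Reference: the programming assignments from Andrew Ng's Deep Learning course.

1. Introduction to ResNet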

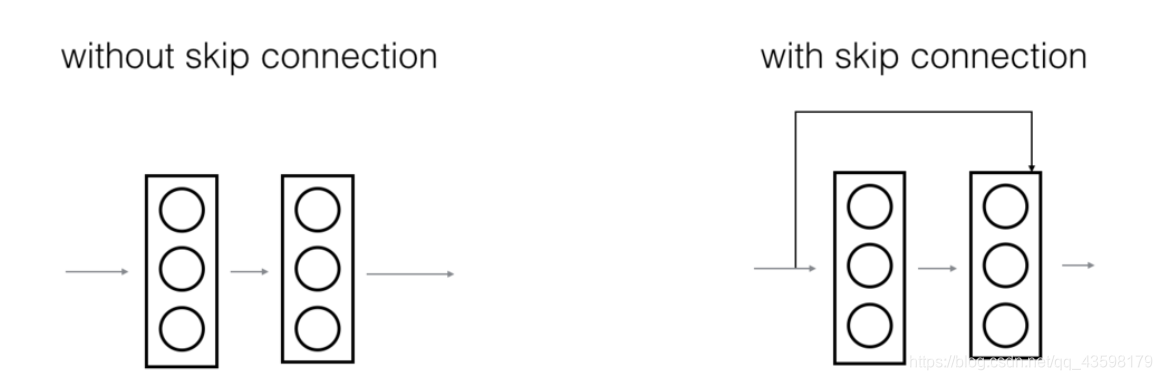

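As neural networks have developed they have become steadily deeper, which lets them represent very complex and varied features. In deeper networks, however, gradients can vanish as the number of layers grows, and learning slows down sharply. A residual network addresses this by adding a shortcut (skip connection) that lets the gradient propagate directly back to earlier layers: the block's input travels along the shortcut, is added to the output of the main path, and the ReLU activation is then applied to the sum.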
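To make the shortcut concrete, here is a minimal NumPy sketch of a residual block's forward pass. The two-layer dense main path and the names `residual_forward`, `W1`, and `W2` are illustrative assumptions, not part of the course assignment; the only point is that the input is added to the main-path output before the final ReLU.

```python
import numpy as np


def relu(z):
    return np.maximum(z, 0.0)


def residual_forward(x, W1, W2):
    """Toy residual block: ReLU(F(x) + x), with a hypothetical two-layer main path F."""
    main = W2 @ relu(W1 @ x)   # main path F(x)
    return relu(main + x)      # add the shortcut, then apply ReLU


x = np.array([1.0, -2.0, 3.0])
zeros = np.zeros((3, 3))
# With an all-zero main path, the block still passes relu(x) through the shortcut.
out = residual_forward(x, zeros, zeros)
print(out)  # [1. 0. 3.]
```

Even when the main path contributes nothing, the signal (and, in backpropagation, the gradient) still has a direct route through the addition.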

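2. Block types in ResNet

ResNet mainly uses two kinds of block; which one is used depends on whether the input and output dimensions are the same.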

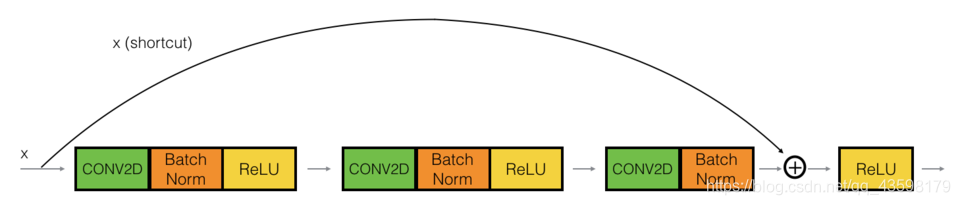

- Identity block (identity_block)

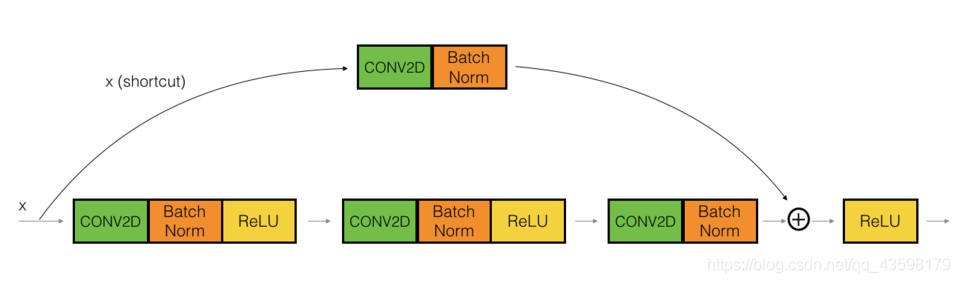

- Convolutional block (convolutional_block)

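The difference between the two is that convolutional_block adds a convolutional layer on the shortcut, because in that case the input and output dimensions differ and the raw input cannot be added to the main-path output directly.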

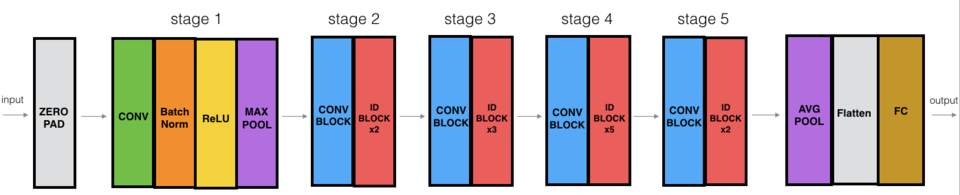
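Why the shortcut needs its own convolution can be checked with a little shape arithmetic. The helpers below are a hypothetical illustration (the names `conv_output_size` and `main_path_shape` are mine), using the standard output-size formulas for 'valid' and 'same' padding; the 56x56 input and the filter counts are example values only.

```python
import math


def conv_output_size(n, kernel, stride, padding):
    """Spatial output size of a convolution along one dimension."""
    if padding == 'valid':
        return (n - kernel) // stride + 1
    if padding == 'same':
        return math.ceil(n / stride)
    raise ValueError("padding must be 'valid' or 'same'")


def main_path_shape(n, f, filters, s):
    """Shape after 1x1 (stride s) -> fxf ('same') -> 1x1 convolutions."""
    n = conv_output_size(n, 1, s, 'valid')
    n = conv_output_size(n, f, 1, 'same')
    n = conv_output_size(n, 1, 1, 'valid')
    return (n, n, filters[2])


# Identity block: stride 1 and F3 equal to the input channels (256), so shapes match.
identity_out = main_path_shape(56, f=3, filters=[64, 64, 256], s=1)
# Convolutional block: stride 2 and more filters, so a 56x56x256 input no longer fits.
conv_out = main_path_shape(56, f=3, filters=[128, 128, 512], s=2)
# A 1x1 convolution with stride 2 and 512 filters on the shortcut restores the match.
shortcut_out = (conv_output_size(56, 1, 2, 'valid'),) * 2 + (512,)

print(identity_out, conv_out, shortcut_out)
```

Under these assumptions the identity block keeps a 56x56x256 tensor unchanged, while the convolutional block's main path and its convolved shortcut both come out at 28x28x512.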

3. Building ResNet-50

Implementing identity_block

    from keras import layers
    from keras.layers import Conv2D, BatchNormalization, Activation
    from keras.initializers import glorot_uniform


    def identity_block(X, f, filters, stage, block):
        """
        Residual block whose shortcut is the unchanged input.

        :param X: input tensor (channels last, since BatchNormalization uses axis=3)
        :param f: integer, kernel size of the middle CONV layer of the main path
        :param filters: list of integers, the number of filters in the CONV layers of the main path
        :param stage: integer, used to name the layers, depending on their position in the network
        :param block: string, used together with stage to name the layers
        :return: output tensor of the block
        """
        conv_name_base = 'res' + str(stage) + block + '_branch'
        bn_name_base = 'bn' + str(stage) + block + '_branch'

        # Retrieve the filter counts and save the input for the shortcut
        F1, F2, F3 = filters
        X_shortcut = X

        # first part: 1x1 convolution
        X = Conv2D(filters=F1, kernel_size=(1, 1), strides=(1, 1), padding='valid',
                   name=conv_name_base + '2a', kernel_initializer=glorot_uniform(seed=0))(X)
        X = BatchNormalization(axis=3, name=bn_name_base + '2a')(X)
        X = Activation('relu')(X)

        # second part: f x f convolution, 'same' padding keeps the spatial size
        X = Conv2D(filters=F2, kernel_size=(f, f), strides=(1, 1), padding='same',
                   name=conv_name_base + '2b', kernel_initializer=glorot_uniform(seed=0))(X)
        X = BatchNormalization(axis=3, name=bn_name_base + '2b')(X)
        X = Activation('relu')(X)

        # third part: 1x1 convolution, no activation before the addition
        X = Conv2D(filters=F3, kernel_size=(1, 1), strides=(1, 1), padding='valid',
                   name=conv_name_base + '2c', kernel_initializer=glorot_uniform(seed=0))(X)
        X = BatchNormalization(axis=3, name=bn_name_base + '2c')(X)

        # combine the shortcut with the main path (F3 must equal the input's channel count)
        X = layers.add([X, X_shortcut])
        X = Activation('relu')(X)

        return X
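As a rough check on this 1x1, f x f, 1x1 layout, the sketch below counts the parameters of one identity block. The example values (256 input channels, filters = [64, 64, 256], f = 3) are assumptions chosen for illustration, not taken from the listing; the formulas are the usual ones for a convolution with bias and for batch normalization (gamma, beta, moving mean, moving variance).

```python
def conv_params(kernel, c_in, c_out):
    """Weights plus biases of a kernel x kernel convolution."""
    return kernel * kernel * c_in * c_out + c_out


def bn_params(channels):
    """gamma, beta, moving mean and moving variance for each channel."""
    return 4 * channels


def identity_block_params(c_in, f, filters):
    F1, F2, F3 = filters
    total = conv_params(1, c_in, F1) + bn_params(F1)   # 2a: 1x1 conv reduces channels
    total += conv_params(f, F1, F2) + bn_params(F2)    # 2b: f x f conv on the reduced tensor
    total += conv_params(1, F2, F3) + bn_params(F3)    # 2c: 1x1 conv restores channels
    return total


bottleneck = identity_block_params(256, 3, [64, 64, 256])
single_3x3 = conv_params(3, 256, 256)  # one plain 3x3 conv at full width, for comparison
print(bottleneck, single_3x3)  # 71552 590080
```

With these example sizes the whole three-layer block is much smaller than a single full-width 3x3 convolution, which is one reason the 1x1 layers are placed around the f x f layer.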

Implementing convolutional_block

    def convolutional_block(X, f, filters, stage, block, s=2):
        """
        :param X: input tensor
        :param f: integer, kernel size of the second (middle) CONV layer of the main path
        :param filters: list of integers, the number of filters in the CONV layers of the main path
        :param stage: integer, used to name the layers, depending on their position in the network
        :param block: string, used together with stage to name the layers
        :param s: integer, stride
        :return:
        """
        conv_name_base = 'res' + str(stage) + block + '_branch'
        bn_name_base = 'bn' + str(stage) + block + '_branch'

        # Retrieve Filters
        F1, F2, F3 = filters

        # Save the input value
        X_shortcut = X

        # first part: 1x1 convolution with stride s
        X = Conv2D(filters=F1, kernel_size=(1, 1), strides=(s, s), padding='valid',
                   name=conv_name_base + '2a', kernel_initializer=glorot_uniform(seed=0))(X)
        X = BatchNormalization(axis=3, name=bn_name_base + '2a')(X)
        X = Activation('relu')(X)
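The listing above stops after the first component of the main path. Below is one way the full block might look. It is a sketch, assuming the remaining main path mirrors identity_block and that the shortcut gets a 1x1 convolution with stride s and F3 filters followed by batch normalization, as section 2 describes. The shortcut layer suffix '1' and the small test shapes are my own choices, not confirmed by the source.

```python
from keras import layers
from keras.layers import Input, Conv2D, BatchNormalization, Activation
from keras.initializers import glorot_uniform


def convolutional_block(X, f, filters, stage, block, s=2):
    conv_name_base = 'res' + str(stage) + block + '_branch'
    bn_name_base = 'bn' + str(stage) + block + '_branch'
    F1, F2, F3 = filters
    X_shortcut = X

    # first part (as in the listing above): 1x1 conv with stride s
    X = Conv2D(filters=F1, kernel_size=(1, 1), strides=(s, s), padding='valid',
               name=conv_name_base + '2a', kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name=bn_name_base + '2a')(X)
    X = Activation('relu')(X)

    # ASSUMPTION: second and third parts mirror identity_block
    X = Conv2D(filters=F2, kernel_size=(f, f), strides=(1, 1), padding='same',
               name=conv_name_base + '2b', kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name=bn_name_base + '2b')(X)
    X = Activation('relu')(X)

    X = Conv2D(filters=F3, kernel_size=(1, 1), strides=(1, 1), padding='valid',
               name=conv_name_base + '2c', kernel_initializer=glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis=3, name=bn_name_base + '2c')(X)

    # ASSUMPTION: the shortcut is resized with a 1x1 conv (stride s, F3 filters) plus BN
    X_shortcut = Conv2D(filters=F3, kernel_size=(1, 1), strides=(s, s), padding='valid',
                        name=conv_name_base + '1',
                        kernel_initializer=glorot_uniform(seed=0))(X_shortcut)
    X_shortcut = BatchNormalization(axis=3, name=bn_name_base + '1')(X_shortcut)

    # combine the resized shortcut with the main path
    X = layers.add([X, X_shortcut])
    X = Activation('relu')(X)
    return X


# Small symbolic check: an 8x8x3 input with s=2 should come out as 4x4 with F3 channels.
inputs = Input(shape=(8, 8, 3))
outputs = convolutional_block(inputs, f=3, filters=[4, 4, 8], stage=2, block='a', s=2)
print(outputs.shape)
```

Because both branches use stride s and end with F3 filters, their outputs have the same shape and can be added.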

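This article introduces the basic principles of ResNet and how it addresses the vanishing-gradient problem in deep networks, and walks through implementing the ResNet-50 model in Keras, including building identity_block and convolutional_block as well as training and saving the model.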
