What high availability does

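High availability (HA) means that when some physical hosts in a resource pool fail, the virtual machines that were running on them are started again on other healthy hosts in the pool, so the pool keeps running safely and reliably. It is a common feature of server virtualization software. This article applies the same idea to an LVS load balancer: keepalived moves the virtual IP (VIP) from a failed director to a standby director.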

Setup steps

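This lab uses four virtual machines, server1, server2, server3 and server4. server1 is the master director (load balancer) and server4 is the backup director, which takes over when the master fails. server2 and server3 are the real servers running Apache.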

1. server1: configure the yum repositories

vim /etc/yum.repos.d/rhel-source.repo

[rhel-source]
name=Red Hat Enterprise Linux $releasever - $basearch - Source
baseurl=http://172.25.61.250/rhel6.5
enabled=1
gpgcheck=1
gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-redhat-release

[LoadBalancer]
name=LoadBalancer
baseurl=http://172.25.61.250/rhel6.5/LoadBalancer
gpgcheck=0

[HighAvailability]
name=HighAvailability
baseurl=http://172.25.61.250/rhel6.5/HighAvailability
gpgcheck=0
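If the same repositories are needed on another machine (for example server4, which is an assumption since the post only configures server1), the file can be written without opening vim. The helper name write_repo is hypothetical; it simply writes the three sections shown above.

```shell
# write_repo FILE: write the three yum repositories shown above into FILE.
# The quoted 'EOF' keeps $releasever and $basearch literal so that yum,
# not the shell, expands them.
write_repo() {
    cat > "$1" <<'EOF'
[rhel-source]
name=Red Hat Enterprise Linux $releasever - $basearch - Source
baseurl=http://172.25.61.250/rhel6.5
enabled=1
gpgcheck=1
gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-redhat-release

[LoadBalancer]
name=LoadBalancer
baseurl=http://172.25.61.250/rhel6.5/LoadBalancer
gpgcheck=0

[HighAvailability]
name=HighAvailability
baseurl=http://172.25.61.250/rhel6.5/HighAvailability
gpgcheck=0
EOF
}
```

Usage: `write_repo /etc/yum.repos.d/rhel-source.repo`, then `yum clean all` and `yum repolist` to confirm that the LoadBalancer and HighAvailability repositories are listed.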

2. On the master director, build keepalived 2.0.6 from the source tarball

Unpack it: tar zxf keepalived-2.0.6.tar.gz

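3. Install the packages needed for the build

yum install -y gcc libnl libnl-devel

yum install -y openssl-devel

yum install -y libnfnetlink-devel-1.0.0-1.el6.x86_64.rpm

(The last command installs libnfnetlink-devel from a local .rpm file in the current directory.)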

4. Enter the unpacked directory, then configure, build and install

cd keepalived-2.0.6

./configure --prefix=/usr/local/keepalived --with-init=SYSV

make && make install

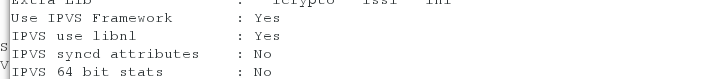

If the configure summary shows Use IPVS Framework : Yes, IPVS (LVS) support has been compiled in and the build is usable.
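Instead of reading the screenshot, the configure summary can be saved and checked by a script. The helper ipvs_enabled below is hypothetical, and it assumes the summary line has the shape `Use IPVS Framework : Yes` (the amount of padding before the colon may vary between versions, so the pattern allows any whitespace).

```shell
# ipvs_enabled LOG: succeed if the keepalived ./configure summary saved in
# LOG reports IPVS support, i.e. contains a "Use IPVS Framework : Yes" line.
ipvs_enabled() {
    grep -Eiq '^[[:space:]]*Use IPVS Framework[[:space:]]*:[[:space:]]*Yes' "$1"
}
```

Usage: run `./configure --prefix=/usr/local/keepalived --with-init=SYSV | tee configure.log`, then `ipvs_enabled configure.log || echo "IPVS support missing"` before running make.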

5. Copy the keepalived build from the master director to the backup director (server4)

scp -r /usr/local/keepalived server4:/usr/local/

6. Make the init script executable and create the symlinks

chmod +x /usr/local/keepalived/etc/rc.d/init.d/keepalived

ln -s /usr/local/keepalived/etc/rc.d/init.d/keepalived /etc/init.d/

ln -s /usr/local/keepalived/etc/sysconfig/keepalived /etc/sysconfig/

ln -s /usr/local/keepalived/etc/keepalived/ /etc/

ln -s /usr/local/keepalived/sbin/keepalived /sbin/

Repeat step 6 on the backup director (server4).
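Because step 6 has to be run on both directors, it can be wrapped in a small function. The name link_keepalived and the optional ROOT argument are hypothetical; ROOT exists only so the links can be tried in a scratch directory, and leaving it empty gives exactly the paths used above. `ln -sfn` replaces an existing link instead of failing, so running it twice is harmless.

```shell
# link_keepalived PREFIX [ROOT]: make the init script under PREFIX
# executable and link keepalived's files into ROOT/etc and ROOT/sbin
# (ROOT defaults to empty, i.e. the real /etc and /sbin).
link_keepalived() {
    prefix=$1
    root=${2:-}
    chmod +x "$prefix/etc/rc.d/init.d/keepalived" || return 1
    mkdir -p "$root/etc/init.d" "$root/etc/sysconfig" "$root/sbin" || return 1
    ln -sfn "$prefix/etc/rc.d/init.d/keepalived" "$root/etc/init.d/keepalived"
    ln -sfn "$prefix/etc/sysconfig/keepalived" "$root/etc/sysconfig/keepalived"
    ln -sfn "$prefix/etc/keepalived" "$root/etc/keepalived"
    ln -sfn "$prefix/sbin/keepalived" "$root/sbin/keepalived"
}
```

Usage on each director: `link_keepalived /usr/local/keepalived`.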

7. Install ipvsadm, the userspace management tool for the LVS (IPVS) scheduler

yum install ipvsadm -y

Write the keepalived configuration file on the master director (server1)

vim /etc/keepalived/keepalived.conf

global_defs {
    notification_email {
        root@localhost
    }
    notification_email_from Alexandre.Cassen@firewall.loc
    smtp_server 127.0.0.1
    smtp_connect_timeout 30
    router_id LVS_DEVEL
    vrrp_skip_check_adv_addr
    #vrrp_strict
    vrrp_garp_interval 0
    vrrp_gna_interval 0
}

vrrp_instance VI_1 {
    state MASTER
    interface eth0
    virtual_router_id 51
    priority 100
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        172.25.61.100
    }
}

virtual_server 172.25.61.100 80 {
    delay_loop 3
    lb_algo rr
    lb_kind DR
    #persistence_timeout 50
    protocol TCP

    real_server 172.25.61.2 80 {
        weight 1
        TCP_CHECK {
            connect_timeout 3
            retry 3
            delay_before_retry 3
        }
    }

    real_server 172.25.61.3 80 {
        weight 1
        TCP_CHECK {
            connect_timeout 3
            retry 3
            delay_before_retry 3
        }
    }
}

On the backup director (server4), use the same file but change state MASTER to BACKUP and lower priority from 100 to 50.
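The backup configuration differs from the master's only in those two values, so it can be derived mechanically rather than edited by hand. make_backup_conf is a hypothetical helper; it only rewrites a `state MASTER` line and a `priority 100` line and leaves everything else untouched.

```shell
# make_backup_conf: read the master's keepalived.conf on stdin and print
# the backup version on stdout (state MASTER -> BACKUP, priority 100 -> 50).
# In the second expression, \1 is the captured prefix and "50" follows it
# (sed back-references are single digits).
make_backup_conf() {
    sed -e 's/^\([[:space:]]*state[[:space:]]\{1,\}\)MASTER/\1BACKUP/' \
        -e 's/^\([[:space:]]*priority[[:space:]]\{1,\}\)100[[:space:]]*$/\150/'
}
```

Usage on server1, assuming root SSH access to server4: `make_backup_conf < /etc/keepalived/keepalived.conf | ssh server4 'cat > /etc/keepalived/keepalived.conf'`.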

8. Start the Apache service on server2 and server3
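The post does not show how the real servers are prepared. With lb_kind DR the director forwards packets to the real servers with the destination still set to the VIP, so each real server must accept traffic for 172.25.61.100 without answering ARP for it. The sketch below assumes the common approach (VIP on lo plus the arp_ignore/arp_announce sysctls); the helper name print_rs_setup and the page text are illustrative. It only prints the commands, so they can be reviewed before being run.

```shell
# print_rs_setup NAME VIP: print (not run) the commands that prepare one
# DR-mode real server: a test page naming the host, Apache, and the VIP
# bound to lo with ARP replies for it suppressed.
print_rs_setup() {
    name=$1 vip=$2
    cat <<EOF
echo '<h1>LVS $name</h1>' > /var/www/html/index.html
/etc/init.d/httpd start
ip addr add $vip/32 dev lo
sysctl -w net.ipv4.conf.all.arp_ignore=1
sysctl -w net.ipv4.conf.all.arp_announce=2
EOF
}
```

Usage, assuming root SSH access: `print_rs_setup server2 172.25.61.100 | ssh server2 sh`, and the same for server3. The `ip addr` and `sysctl -w` settings do not survive a reboot.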

9. Start keepalived on server1 and server4 (the master and backup directors)

/etc/init.d/keepalived start

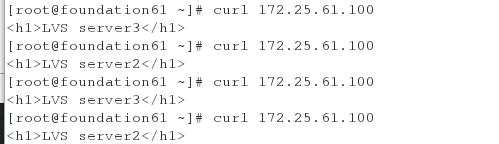
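Once keepalived is running on the master, it should have created the IPVS virtual service. A hypothetical helper, rs_count, counts the real servers listed under one virtual service in `ipvsadm -ln` output; it assumes the usual listing layout, a `TCP  VIP:PORT rr` line followed by `  -> RIP:PORT ...` lines.

```shell
# rs_count VIP:PORT: read `ipvsadm -ln` output on stdin and print how many
# real servers are listed under that virtual service.
rs_count() {
    awk -v svc="$1" '
        # A service line starts a new block; remember whether it is ours.
        $1 == "TCP" || $1 == "UDP" || $1 == "FWM" { in_svc = ($2 == svc); next }
        # Count "->" lines in our block, skipping the column header.
        in_svc && $1 == "->" && $2 != "RemoteAddress:Port" { n++ }
        END { print n + 0 }
    '
}
```

Usage on server1: `ipvsadm -ln | rs_count 172.25.61.100:80` should print 2 while both Apache servers pass TCP_CHECK. If Apache is stopped on one of them, keepalived removes it from the table and the count should drop to 1.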

10. Test

Test from the physical host:

Requests are served by server2 and server3 in turn (round robin):

[root@foundation61 ~]# curl 172.25.61.100
<h1>LVS server3</h1>
[root@foundation61 ~]# curl 172.25.61.100
<h1>LVS server2</h1>
[root@foundation61 ~]# curl 172.25.61.100
<h1>LVS server3</h1>
[root@foundation61 ~]# curl 172.25.61.100
<h1>LVS server2</h1>

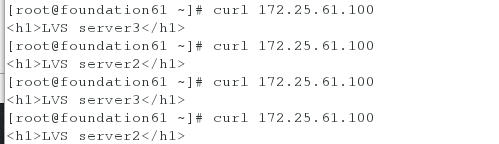
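The round-robin check can also be scripted. check_rr is a hypothetical helper that reads one response per line and succeeds only if no two consecutive responses are identical and at least two different pages were seen. It assumes a single client sending requests one after another; traffic from other clients could interleave and break the strict alternation.

```shell
# check_rr: read responses (one per line) on stdin; exit 0 if consecutive
# responses always differ and at least two distinct responses appear.
check_rr() {
    awk '
        NR > 1 && $0 == prev { repeated = 1 }
        { seen[$0] = 1; prev = $0 }
        END {
            for (k in seen) distinct++
            if (repeated || distinct < 2) exit 1
            exit 0
        }
    '
}
```

Usage on the physical host: `for i in 1 2 3 4 5 6; do curl -s 172.25.61.100; done | check_rr && echo "round robin OK"`.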

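Stop keepalived on the master director (/etc/init.d/keepalived stop) and test again: server2 and server3 still alternate, but the VIP has moved to the backup director (server4).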

[root@foundation61 ~]# curl 172.25.61.100
<h1>LVS server3</h1>
[root@foundation61 ~]# curl 172.25.61.100
<h1>LVS server2</h1>
[root@foundation61 ~]# curl 172.25.61.100
<h1>LVS server3</h1>
[root@foundation61 ~]# curl 172.25.61.100
<h1>LVS server2</h1>

[root@server4 ~]# ip a
1: lo: <LOOPBACK,UP,LOWER_UP> mtu 16436 qdisc noqueue state UNKNOWN
    link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
    inet 127.0.0.1/8 scope host lo
    inet6 ::1/128 scope host
       valid_lft forever preferred_lft forever
2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000
    link/ether 52:54:00:3f:af:58 brd ff:ff:ff:ff:ff:ff
    inet 172.25.61.4/24 brd 172.25.61.255 scope global eth0
    inet 172.25.61.100/32 scope global eth0
    inet6 fe80::5054:ff:fe3f:af58/64 scope link
       valid_lft forever preferred_lft forever
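To see which director currently holds the VIP without reading the whole `ip a` listing, a hypothetical helper has_vip can look for an exact `inet VIP/` entry. Matching up to the slash avoids false hits such as 172.25.61.10 matching 172.25.61.100.

```shell
# has_vip VIP: read `ip addr` output on stdin; succeed if VIP is assigned.
has_vip() {
    grep -qF "inet $1/"
}
```

Usage: `ip addr show eth0 | has_vip 172.25.61.100 && echo "VIP is on this director"`. Running it on both directors after stopping and restarting keepalived on server1 shows the VIP moving; since server1 has the higher priority and preemption is not disabled in this configuration, it should take the VIP back once keepalived is started again.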
