Working with HBase data in Spark

1. Create a table in HBase and add some data to it

```
hbase(main):002:0> create 'tb','info'
0 row(s) in 23.4720 seconds

=> Hbase::Table - tb

hbase(main):003:0> put 'tb','1001','info:name','Tom'
0 row(s) in 0.7480 seconds

hbase(main):004:0> put 'tb','1001','info:sex','male'
0 row(s) in 0.1530 seconds

hbase(main):005:0> put 'tb','1001','info:age','18'
0 row(s) in 0.0990 seconds
```

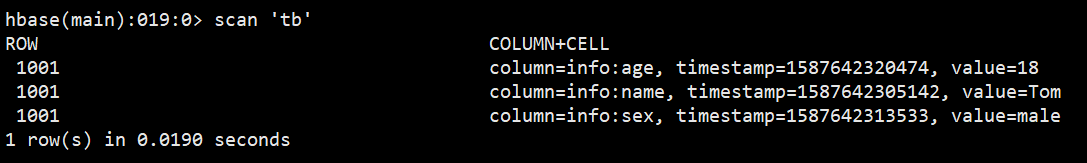
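The three `put` commands above all write to row `1001` in column family `info`. As a rough mental model (an illustration only, not how HBase actually stores data), a table behaves like a sorted map from row key to column (`family:qualifier`) to value. HBase keeps row keys and columns sorted by their bytes, which is why columns come back in sorted order when you scan. The plain-Scala sketch below replays the same puts; `HBaseModelSketch` and its `put` helper are hypothetical names used only for this illustration.

```scala
import scala.collection.immutable.SortedMap

object HBaseModelSketch {
  // Hypothetical model: column ("family:qualifier") -> value, kept sorted
  type Row = SortedMap[String, String]
  // Hypothetical model: row key -> Row, kept sorted
  type Table = SortedMap[String, Row]

  // Mimics `put 'tb', row, 'family:qualifier', value`: adds or overwrites one cell
  def put(table: Table, row: String, column: String, value: String): Table = {
    val existing: Row = table.getOrElse(row, SortedMap.empty[String, String])
    table.updated(row, existing.updated(column, value))
  }

  def main(args: Array[String]): Unit = {
    // The same three puts as in the HBase shell session
    val puts = Seq(
      ("1001", "info:name", "Tom"),
      ("1001", "info:sex", "male"),
      ("1001", "info:age", "18"))
    val tb: Table = puts.foldLeft(SortedMap.empty[String, Row]) {
      case (table, (row, column, value)) => put(table, row, column, value)
    }
    // One row with three cells; columns are listed in sorted order
    tb.foreach { case (rowKey, cells) =>
      cells.foreach { case (column, value) => println(s"$rowKey column=$column, value=$value") }
    }
  }
}
```

Running `main` prints the cells of row `1001` in the order `info:age`, `info:name`, `info:sex`.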

2. Check the table you created (for example with `scan 'tb'` or `describe 'tb'` in the HBase shell)

3. Add the dependencies to the `<dependencies>` section of pom.xml

```xml
<dependency>
    <groupId>org.scala-lang</groupId>
    <artifactId>scala-library</artifactId>
    <version>2.11.8</version>
</dependency>
<dependency>
    <groupId>com.opencsv</groupId>
    <artifactId>opencsv</artifactId>
    <version>4.0</version>
</dependency>
<dependency>
    <groupId>org.apache.spark</groupId>
    <artifactId>spark-hive_2.11</artifactId>
    <version>2.2.0</version>
</dependency>
<dependency>
    <groupId>org.apache.spark</groupId>
    <artifactId>spark-core_2.11</artifactId>
    <version>2.2.0</version>
</dependency>
<!-- <dependency>-->
<!--     <groupId>org.apache.hadoop</groupId>-->
<!--     <artifactId>hadoop-client</artifactId>-->
<!--     <version>2.7.2</version>-->
<!-- </dependency>-->
<dependency>
    <groupId>org.apache.hbase</groupId>
    <artifactId>hbase-shaded-client</artifactId>
    <version>1.3.1</version>
</dependency>
<dependency>
    <groupId>org.apache.hbase</groupId>
    <artifactId>hbase-common</artifactId>
    <version>1.3.1</version>
</dependency>
<dependency>
    <groupId>org.apache.hbase</groupId>
    <artifactId>hbase-shaded-server</artifactId>
    <version>1.3.1</version>
</dependency>
<dependency>
    <groupId>com.google.protobuf</groupId>
    <artifactId>protobuf-java</artifactId>
    <version>3.6.1</version>
</dependency>
```
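If you build with sbt rather than Maven, the same dependencies could be declared roughly as below. This is a sketch that only carries over the coordinates and versions from the pom snippet; it has not been tested against this project. `%%` appends the Scala binary suffix (`_2.11` for `scalaVersion` 2.11.x), so it is used only for the Spark artifacts, and `scala-library` is left out because sbt adds it automatically from `scalaVersion`.

```scala
// build.sbt (sketch): coordinates and versions copied from the Maven dependencies
scalaVersion := "2.11.8"

libraryDependencies ++= Seq(
  "com.opencsv"         %  "opencsv"             % "4.0",
  "org.apache.spark"    %% "spark-hive"          % "2.2.0", // resolves to spark-hive_2.11
  "org.apache.spark"    %% "spark-core"          % "2.2.0", // resolves to spark-core_2.11
  "org.apache.hbase"    %  "hbase-shaded-client" % "1.3.1",
  "org.apache.hbase"    %  "hbase-common"        % "1.3.1",
  "org.apache.hbase"    %  "hbase-shaded-server" % "1.3.1",
  "com.google.protobuf" %  "protobuf-java"       % "3.6.1"
)
```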

4. Write the Scala code

```scala
package readandsavefile

import org.apache.hadoop.conf.Configuration
import org.apache.hadoop.hbase.HBaseConfiguration
import org.apache.hadoop.hbase.client.Result
import org.apache.hadoop.hbase.io.ImmutableBytesWritable
import org.apache.hadoop.hbase.mapreduce.TableInputFormat
import org.apache.hadoop.hbase.util.Bytes
import org.apache.spark.{SparkConf, SparkContext}
import org.apache.spark.rdd.RDD

/**
 * @author shkstart
 * @date 2020-4-23 - 8:09
 */
object readHbase {

  def initSpark(): SparkContext = {
    val sc = new SparkContext(new SparkConf().setMaster("local[2]").setAppName("readHbase"))
    sc
  }

  def main(args: Array[String]): Unit = {
    val sc: SparkContext = initSpark()

    // Read data from HBase with Spark
    // Build the HBase configuration
    val hbaseConf: Configuration = HBaseConfiguration.create()
    hbaseConf.set("hbase.zookeeper.quorum", "hadoop131,hadoop132,hadoop133")
    // The key is spelled "zookeeper"; a misspelled key is silently ignored
    hbaseConf.set("hbase.zookeeper.property.clientPort", "2181")
    hbaseConf.set(TableInputFormat.INPUT_TABLE, "tb")

    /**
     * Argument 1: the HBase configuration
     * Argument 2: classOf[TableInputFormat], the InputFormat used to read the table
     * Arguments 3/4: classOf[ImmutableBytesWritable], classOf[Result],
     *   the key and value types of the tuples in the resulting RDD
     */
    val rdd: RDD[(ImmutableBytesWritable, Result)] = sc.newAPIHadoopRDD(
      hbaseConf,
      classOf[TableInputFormat],
      classOf[ImmutableBytesWritable],
      classOf[Result])

    // Spark's documentation warns that Hadoop record readers may reuse the same
    // Writable object for every record, so convert each row to plain Strings
    // first and cache that RDD instead of the raw one.
    val family = Bytes.toBytes("info")
    val rows: RDD[(String, String, String, String)] = rdd.map { case (_, result) =>
      val key: String = Bytes.toString(result.getRow)
      val name: String = Bytes.toString(result.getValue(family, Bytes.toBytes("name")))
      val sex: String = Bytes.toString(result.getValue(family, Bytes.toBytes("sex")))
      val age: String = Bytes.toString(result.getValue(family, Bytes.toBytes("age")))
      (key, name, sex, age)
    }
    // Cache before the first action so count() and foreach() reuse one scan
    rows.cache()

    val count: Long = rows.count()
    println(count)

    // In local mode this prints to the console; on a cluster it goes to the executor logs
    rows.foreach { case (key, name, sex, age) =>
      println(key + " " + name + " " + sex + " " + age)
    }

    sc.stop()
  }
}
```
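In the program, `result.getValue` returns `null` when a row has no such column, and `Bytes.toString(null)` also returns `null`, so a missing column shows up as the text `null` in the printed line. The plain-Scala sketch below illustrates the same per-row formatting with `Option` instead, so missing cells can be given a placeholder. It does not use HBase at all: `Cells` is a hypothetical stand-in for a `Result`, and `cellString`/`formatRow` are illustrative names; the UTF-8 decoding matches what `Bytes.toString` does.

```scala
import java.nio.charset.StandardCharsets.UTF_8

object RowFormatSketch {
  // Hypothetical stand-in for an HBase Result: (family, qualifier) -> raw cell bytes
  type Cells = Map[(String, String), Array[Byte]]

  // Like Bytes.toString(result.getValue(family, qualifier)), but None for a missing cell
  def cellString(cells: Cells, family: String, qualifier: String): Option[String] =
    cells.get((family, qualifier)).map(bytes => new String(bytes, UTF_8))

  // Builds the "key name sex age" line, using "-" for missing columns
  def formatRow(rowKey: String, cells: Cells): String = {
    val fields = Seq("name", "sex", "age").map(q => cellString(cells, "info", q).getOrElse("-"))
    (rowKey +: fields).mkString(" ")
  }

  def main(args: Array[String]): Unit = {
    val cells: Cells = Map(
      ("info", "name") -> "Tom".getBytes(UTF_8),
      ("info", "sex") -> "male".getBytes(UTF_8),
      ("info", "age") -> "18".getBytes(UTF_8))
    println(formatRow("1001", cells))                     // 1001 Tom male 18
    println(formatRow("1002", cells - (("info", "age")))) // 1002 Tom male -
  }
}
```

In the Spark job, the same idea would mean wrapping each `Bytes.toString(...)` call in `Option(...)` before formatting.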

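Notes

(1) If you get a ZooKeeper connection failure, or errors saying that hadoop132 (or another host) cannot be found, the likely cause is that the Windows machine running the program has no IP mapping for hadoop132. Add the mapping as described below. You can also write hadoop132's actual IP address directly in the program, but I recommend the hosts-file approach.

Open C:\Windows\System32\drivers\etc\hosts and add the IP address of each virtual machine, one line per host in the form `IP hostname`.

Once these entries are in place, the program should run successfully.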
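To check the hosts entries before starting Spark, you could try resolving every host in the quorum string from the same machine. This is a small sketch using only `java.net.InetAddress` from the JDK; `QuorumHostCheck` and `resolveQuorum` are hypothetical names, and the quorum string is the one used in the program above.

```scala
import java.net.{InetAddress, UnknownHostException}

object QuorumHostCheck {
  // Pairs each host in a comma-separated quorum string with its resolved IP, or None
  def resolveQuorum(quorum: String): Seq[(String, Option[String])] =
    quorum.split(",").map(_.trim).filter(_.nonEmpty).toSeq.map { host =>
      val ip =
        try Some(InetAddress.getByName(host).getHostAddress)
        catch { case _: UnknownHostException => None }
      host -> ip
    }

  def main(args: Array[String]): Unit = {
    resolveQuorum("hadoop131,hadoop132,hadoop133").foreach {
      case (host, Some(ip)) => println(s"$host -> $ip")
      case (host, None)     => println(s"$host -> cannot be resolved, check the hosts file")
    }
  }
}
```

A host that prints "cannot be resolved" is missing from the hosts file (or from DNS).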

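(2) If the program fails with an error like this:

```
Exception in thread "main" org.apache.hadoop.hbase.client.RetriesExhaustedException: Can't get the locations
```

it can mean that the Hadoop version and the HBase version are incompatible. Try changing the HBase dependency version.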
