Taking the MemSourceBatchOp component as an example: first create a Maven project, then add the following dependencies to pom.xml.

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>com.zhang</groupId>

<artifactId>AlinkDemo1</artifactId>

<version>1.0</version>

<properties>

<java.version>1.8</java.version>

<flink.version>1.11.2</flink.version>

<scala.binary.version>2.11</scala.binary.version>

<flink.binary.version>1.11.2</flink.binary.version>

<log4j.version>2.12.1</log4j.version>

</properties>

<dependencies>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-streaming-scala_2.11</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-table-planner_2.11</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-clients_2.11</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-java</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-streaming-java_2.11</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-table-common</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-table-api-java-bridge_${scala.binary.version}</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-table-planner-blink_${scala.binary.version}</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-kafka_${scala.binary.version}</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-jdbc_${scala.binary.version}</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-cep_${scala.binary.version}</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-csv</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-json</artifactId>

<version>${flink.version}</version>

</dependency>

<!-- https://mvnrepository.com/artifact/com.alibaba.alink/alink_core_flink-1.11 -->

<dependency>

<groupId>com.alibaba.alink</groupId>

<artifactId>alink_core_flink-1.11_2.11</artifactId>

<version>1.5.5</version>

</dependency>

<dependency>

<groupId>com.alibaba</groupId>

<artifactId>fastjson</artifactId>

<version>1.2.75</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>2.7.6</version>

</dependency>

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<version>5.1.49</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-hive_2.11</artifactId>

<version>${flink.version}</version>

</dependency>

<dependency>

<groupId>org.apache.hive</groupId>

<artifactId>hive-exec</artifactId>

<version>1.2.1</version>

</dependency>

<dependency>

<groupId>org.apache.logging.log4j</groupId>

<artifactId>log4j-slf4j-impl</artifactId>

<version>${log4j.version}</version>

</dependency>

<dependency>

<groupId>org.apache.logging.log4j</groupId>

<artifactId>log4j-api</artifactId>

<version>${log4j.version}</version>

</dependency>

<dependency>

<groupId>org.apache.logging.log4j</groupId>

<artifactId>log4j-core</artifactId>

<version>${log4j.version}</version>

</dependency>

</dependencies>

<build>

<plugins>

<!-- Java Compiler -->

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-compiler-plugin</artifactId>

<version>3.1</version>

<configuration>

<source>1.8</source>

<target>1.8</target>

</configuration>

</plugin>

<!-- Scala Compiler -->

<plugin>

<groupId>org.scala-tools</groupId>

<artifactId>maven-scala-plugin</artifactId>

<version>2.15.2</version>

<executions>

<execution>

<goals>

<goal>compile</goal>

<goal>testCompile</goal>

</goals>

</execution>

</executions>

</plugin>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-assembly-plugin</artifactId>

<version>2.4</version>

<configuration>

<descriptorRefs>

<descriptorRef>jar-with-dependencies</descriptorRef>

</descriptorRefs>

</configuration>

<executions>

<execution>

<id>make-assembly</id>

<phase>package</phase>

<goals>

<goal>single</goal>

</goals>

</execution>

</executions>

</plugin>

</plugins>

</build>

</project>

Pay attention to versions here. First check the version of your Flink cluster:

[root@master data]# flink --version

2022-06-30 16:01:16,350 INFO org.apache.flink.yarn.cli.FlinkYarnSessionCli [] - Found Yarn properties file under /tmp/.yarn-properties-root.

2022-06-30 16:01:16,350 INFO org.apache.flink.yarn.cli.FlinkYarnSessionCli [] - Found Yarn properties file under /tmp/.yarn-properties-root.

Version: 1.11.2

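My Flink version is 1.11.2, so flink.version in pom.xml is also set to 1.11.2, and the Alink dependency is the build for Flink 1.11 (alink_core_flink-1.11_2.11). These must stay consistent with the cluster.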

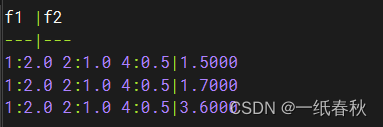
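As a small illustration of the version rule above, the sketch below derives the Alink core artifactId from the cluster's Flink version. The class and method names are hypothetical; the naming pattern is inferred only from the artifact used in this pom (alink_core_flink-1.11_2.11), so check the Maven repository for the artifacts that actually exist for your version.

```java
public class AlinkArtifact {

    // Builds an artifactId of the form alink_core_flink-<major.minor>_<scala>.
    // Assumption: the pattern seen in this pom holds for the version you need.
    public static String artifactId(String flinkVersion, String scalaBinaryVersion) {
        String[] parts = flinkVersion.trim().split("\\.");
        if (parts.length < 2) {
            throw new IllegalArgumentException("Expected a version like 1.11.2, got: " + flinkVersion);
        }
        String majorMinor = parts[0] + "." + parts[1];
        return "alink_core_flink-" + majorMinor + "_" + scalaBinaryVersion;
    }

    public static void main(String[] args) {
        // Values taken from the pom properties in this post.
        System.out.println(artifactId("1.11.2", "2.11"));
    }
}
```

With the values from this post it prints alink_core_flink-1.11_2.11, which is the artifact the pom should depend on for a 1.11.2 cluster.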

The code is as follows:

import com.alibaba.alink.operator.batch.BatchOperator;
import com.alibaba.alink.operator.batch.source.MemSourceBatchOp;
import org.apache.flink.types.Row;

import java.util.Arrays;
import java.util.List;

public class demo1 {
    public static void main(String[] args) throws Exception {
        // Three in-memory rows: one string column and one double column.
        List<Row> df = Arrays.asList(
            Row.of("1:2.0 2:1.0 4:0.5", 1.5),
            Row.of("1:2.0 2:1.0 4:0.5", 1.7),
            Row.of("1:2.0 2:1.0 4:0.5", 3.6)
        );

        // Wrap the rows in a batch source; the schema string names and types the columns.
        BatchOperator<?> data = new MemSourceBatchOp(df, "f1 string, f2 double");

        // print() triggers execution of the batch job and writes the rows to stdout.
        data.print();
    }
}

Then build the project with Maven's package phase (mvn package), which produces AlinkDemo1-1.0-jar-with-dependencies.jar.

Next, open the Alink page on GitHub.

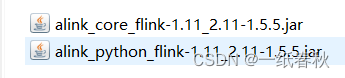

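My Alink version is 1.5.5, so open the matching release and download the corresponding file. I downloaded pyalink_flink_1.11-1.5.5-py3-none-any.whl, changed its extension to .zip, and extracted it. The extracted directory contains two JAR files under /pyalink/lib.

Copy these two JAR files into the lib directory of the Flink cluster.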
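Renaming the file works because a .whl file is an ordinary zip archive. As a rough sketch, the hypothetical helper below lists the JAR entries under pyalink/lib without renaming or extracting anything. The class and method names are made up for illustration, and the wheel path is passed in as an argument.

```java
import java.io.IOException;
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.zip.ZipEntry;
import java.util.zip.ZipFile;

public class WheelJars {

    // Keeps only the entries that are .jar files under pyalink/lib/.
    public static List<String> jarEntries(List<String> entryNames) {
        List<String> jars = new ArrayList<>();
        for (String name : entryNames) {
            if (name.startsWith("pyalink/lib/") && name.endsWith(".jar")) {
                jars.add(name);
            }
        }
        return jars;
    }

    // Opens the wheel as a zip archive and returns the JAR entries it contains.
    public static List<String> listJars(String wheelPath) throws IOException {
        List<String> names = new ArrayList<>();
        try (ZipFile wheel = new ZipFile(wheelPath)) {
            for (ZipEntry entry : Collections.list(wheel.entries())) {
                names.add(entry.getName());
            }
        }
        return jarEntries(names);
    }

    public static void main(String[] args) throws IOException {
        // Usage: java WheelJars pyalink_flink_1.11-1.5.5-py3-none-any.whl
        for (String jar : listJars(args[0])) {
            System.out.println(jar);
        }
    }
}
```

The listed entries are the files that need to end up in the cluster's lib directory.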

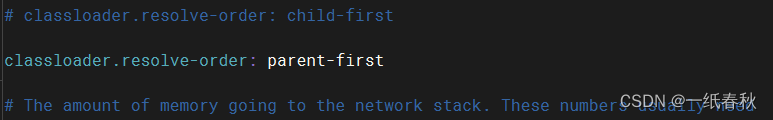

Then edit flink-conf.yaml and add the line classloader.resolve-order: parent-first
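Flink's default resolve order is child-first, which prefers classes from the submitted user JAR. With parent-first, classes already on the cluster classpath (including the JARs placed in lib) take precedence. The sketch below shows one way to apply the setting to the lines of a config file, replacing an existing active entry or appending one. The class and method names are hypothetical, and it prints the result rather than modifying the file.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;

public class ResolveOrder {

    static final String KEY = "classloader.resolve-order";

    // Returns the config lines with exactly one active "parent-first" entry.
    // Commented-out lines (starting with '#') are left untouched.
    public static List<String> withParentFirst(List<String> confLines) {
        List<String> out = new ArrayList<>();
        boolean found = false;
        for (String line : confLines) {
            if (line.trim().startsWith(KEY + ":")) {
                if (!found) {
                    out.add(KEY + ": parent-first");
                }
                found = true;
            } else {
                out.add(line);
            }
        }
        if (!found) {
            out.add(KEY + ": parent-first");
        }
        return out;
    }

    public static void main(String[] args) throws IOException {
        // Usage: java ResolveOrder /path/to/flink-conf.yaml
        List<String> lines = Files.readAllLines(Paths.get(args[0]));
        for (String line : withParentFirst(lines)) {
            System.out.println(line);
        }
    }
}
```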

Then start the Flink cluster.

Upload the JAR built earlier to the server and run the following command from the directory that contains it:

flink run -c demo1 AlinkDemo1-1.0-jar-with-dependencies.jar
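For reference, the pieces of that command come straight from the project: the JAR name is artifactId-version plus the assembly descriptor suffix jar-with-dependencies, and -c names the main class. A minimal sketch with hypothetical helper names:

```java
import java.util.Arrays;
import java.util.List;

public class SubmitCommand {

    // The assembly plugin appends the descriptorRef to <artifactId>-<version>.
    public static String assemblyJarName(String artifactId, String version) {
        return artifactId + "-" + version + "-jar-with-dependencies.jar";
    }

    // Arguments for "flink run", with -c selecting the entry-point class.
    public static List<String> flinkRun(String mainClass, String jar) {
        return Arrays.asList("flink", "run", "-c", mainClass, jar);
    }

    public static void main(String[] args) {
        String jar = assemblyJarName("AlinkDemo1", "1.0");
        System.out.println(String.join(" ", flinkRun("demo1", jar)));
    }
}
```

Run as is, it prints the same command line shown above. If the class were placed in a package, the value after -c would need to be the fully qualified class name.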

The job prints the three rows of the in-memory table to the console.
