Problem description

In IDEA, the Hive version that the spark-hive dependency in the POM pulls in by default is 2.7.3, but the Hive version on my server is 2.1.0.

Running the program fails with:

Exception in thread "main" org.apache.spark.sql.AnalysisException: org.apache.hadoop.hive.ql.metadata.HiveException: Unable to fetch table ads_pl_manf_insurance_month_di. Invalid method name: 'get_table_req';

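This points to a version mismatch: the Hive client bundled with the project calls the metastore method `get_table_req`, which the server's Hive 2.1.0 metastore does not provide.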

Reference POM file:

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>org.example</groupId>

<artifactId>project_7mo</artifactId>

<version>1.0-SNAPSHOT</version>

<repositories>

<!-- Jcenter http://jcenter.bintray.com/

Jboss http://repository.jboss.org/nexus/content/groups/public/

Maven Central http://repo2.maven.org/maven2/

Ibiblio http://mirrors.ibiblio.org/pub/mirrors/maven2

UK Maven http://uk.maven.org/maven2/-->

<!-- <repository>-->

<!-- <id>central-repos</id>-->

<!-- <name>Central Repository</name>-->

<!-- <url>http://repo.maven.apache.org/maven2</url>-->

<!-- </repository>-->

<repository>

<id>aliyun</id>

<url>http://maven.aliyun.com/nexus/content/groups/public/</url>

</repository>

<repository>

<id>activiti-repos2</id>

<name>Activiti Repository 2</name>

<url>https://app.camunda.com/nexus/content/groups/public</url>

</repository>

<repository>

<id>Ibiblio</id>

<url>http://mirrors.ibiblio.org/pub/mirrors/maven2</url>

</repository>

<repository>

<id>cloudera</id>

<url>https://repository.cloudera.com/artifactory/cloudera-repos/</url>

</repository>

<!-- <repository>-->

<!-- <id>jboss</id>-->

<!-- <url>http://repository.jboss.com/nexus/content/groups/public</url>-->

<!-- </repository>-->

</repositories>

<properties>

<maven.compiler.source>8</maven.compiler.source>

<maven.compiler.target>8</maven.compiler.target>

<scala.version>2.12.10</scala.version>

<scala.binary.version>2.12</scala.binary.version>

<spark.version>3.0.0</spark.version>

<hadoop.version>2.7.3</hadoop.version>

<hudi.version>0.9.0</hudi.version>

<mysql.version>5.1.48</mysql.version>

</properties>

<dependencies>

<!-- 依赖Scala语言 -->

<dependency>

<groupId>org.scala-lang</groupId>

<artifactId>scala-library</artifactId>

<version>${scala.version}</version>

</dependency>

<!-- Spark Core 依赖 -->

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-core_${scala.binary.version}</artifactId>

<version>${spark.version}</version>

</dependency>

<!-- Spark SQL 依赖 -->

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-sql_${scala.binary.version}</artifactId>

<version>${spark.version}</version>

</dependency>

<!-- Structured Streaming + Kafka 依赖 -->

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-sql-kafka-0-10_${scala.binary.version}</artifactId>

<version>${spark.version}</version>

</dependency>

<!-- Hadoop Client 依赖 -->

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>${hadoop.version}</version>

</dependency>

<!-- hudi-spark3 -->

<dependency>

<groupId>org.apache.hudi</groupId>

<artifactId>hudi-spark3-bundle_2.12</artifactId>

<version>${hudi.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-avro_2.12</artifactId>

<version>${spark.version}</version>

</dependency>

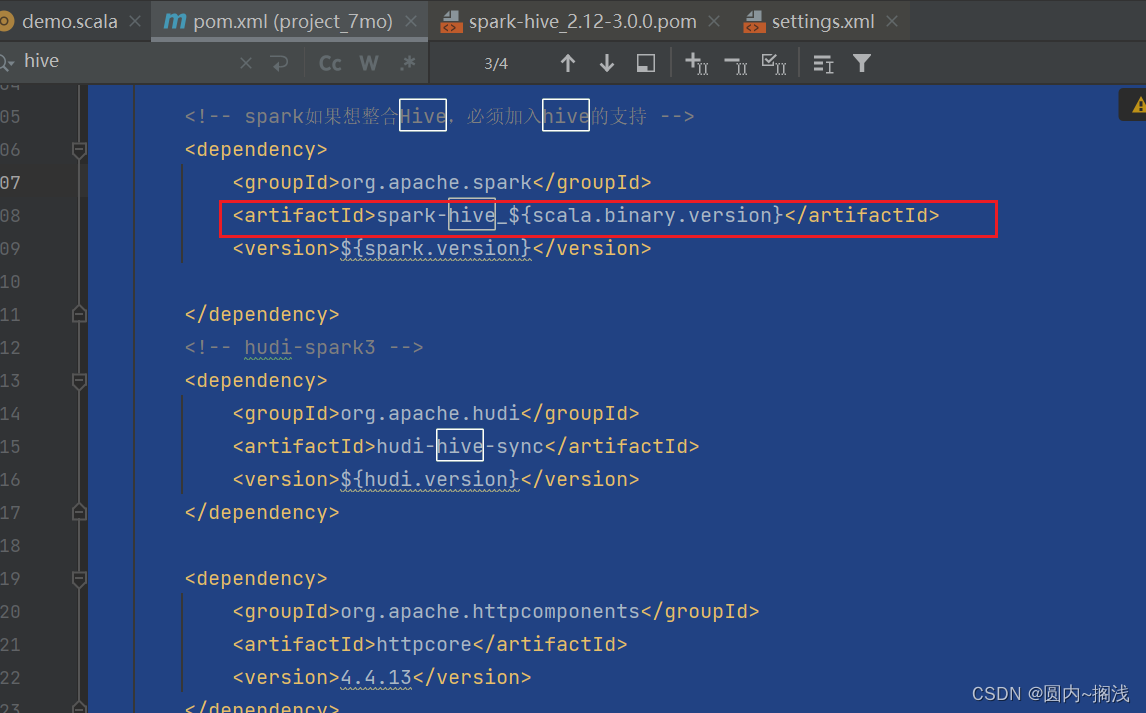

<!-- spark如果想整合Hive,必须加入hive的支持 -->

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-hive_${scala.binary.version}</artifactId>

<version>${spark.version}</version>

</dependency>

<!-- hudi-spark3 -->

<dependency>

<groupId>org.apache.hudi</groupId>

<artifactId>hudi-hive-sync</artifactId>

<version>${hudi.version}</version>

</dependency>

<dependency>

<groupId>org.apache.httpcomponents</groupId>

<artifactId>httpcore</artifactId>

<version>4.4.13</version>

</dependency>

<dependency>

<groupId>org.apache.httpcomponents</groupId>

<artifactId>httpclient</artifactId>

<version>4.5.12</version>

</dependency>

<dependency>

<groupId>org.lionsoul</groupId>

<artifactId>ip2region</artifactId>

<version>1.7.2</version>

</dependency>

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<version>${mysql.version}</version>

</dependency>

<dependency>

<groupId>org.lionsoul</groupId>

<artifactId>ip2region</artifactId>

<version>1.7.2</version>

</dependency>

</dependencies>

<build>

<outputDirectory>target/classes</outputDirectory>

<testOutputDirectory>target/test-classes</testOutputDirectory>

<resources>

<resource>

<directory>${project.basedir}/src/main/resources</directory>

</resource>

</resources>

<!-- Maven 编译的插件 -->

<plugins>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-compiler-plugin</artifactId>

<version>3.0</version>

<configuration>

<source>1.8</source>

<target>1.8</target>

<encoding>UTF-8</encoding>

</configuration>

</plugin>

<plugin>

<groupId>net.alchim31.maven</groupId>

<artifactId>scala-maven-plugin</artifactId>

<version>3.2.0</version>

<executions>

<execution>

<goals>

<goal>compile</goal>

<goal>testCompile</goal>

</goals>

</execution>

</executions>

</plugin>

</plugins>

</build>

</project>

Solution

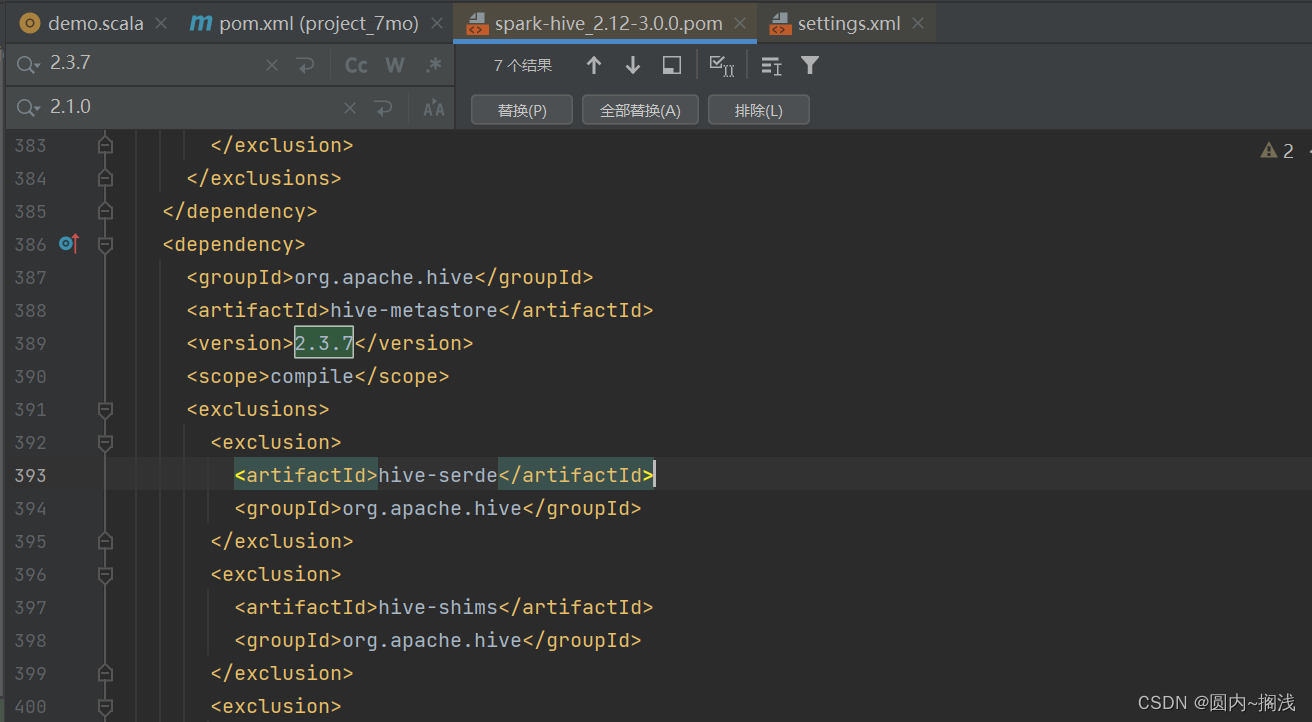

Change the default Hive version.

Hold Ctrl and click through to open the other POM file that defines the version.

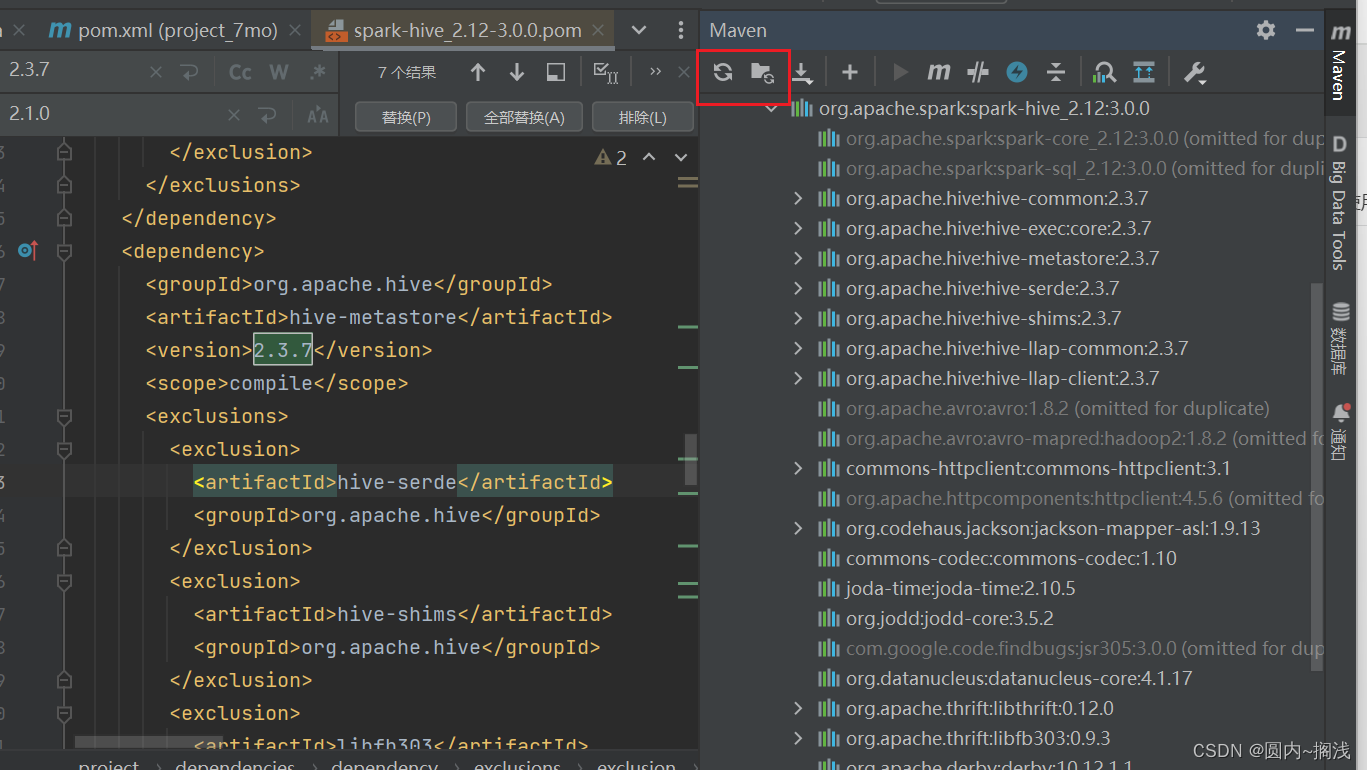

Replace the version globally in that file so that it matches the server's Hive version (2.1.0).

Reload the Maven dependencies.

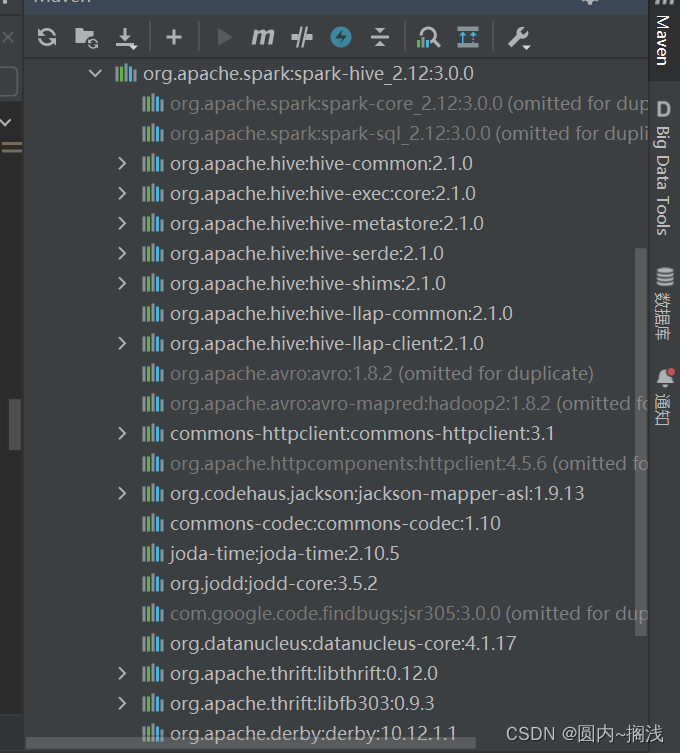

The default version has now been changed successfully.
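For reference, a minimal sketch of what the edited part of that other POM might look like after the replacement. It assumes the file reached by Ctrl+click is the POM that defines the Hive version for spark-hive (for Spark this is the `spark-parent` POM in the local Maven repository, by default under `~/.m2/repository`) and that the version is held in a property named `hive.version`; check the actual property names in your own copy before editing.

```xml
<properties>
    <!-- Assumption: Hive version property in the parent POM of spark-hive (local ~/.m2 copy),
         changed to match the server's Hive 2.1.0 -->
    <hive.version>2.1.0</hive.version>
</properties>
```

Because this edits a file in the local Maven repository rather than the project's own POM, the change only applies on this machine and is lost if that artifact is deleted and downloaded again.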

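This article describes a problem encountered when using Apache Spark: the POM in IDEA pulled in a newer Hive version (2.7.3) through spark-hive by default, while the server runs Hive 2.1.0, which caused an AnalysisException. The fix is to change the Hive version referenced by the POM so that it matches the server's version.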
