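HashMap Source Code Analysis

This article draws on the HashMap video course by 黑马程序员.

Introduction to HashMap

HashMap is a hash-table-based implementation of the Map interface. It permits null values and a null key, and it is not thread-safe.

Before JDK 1.8, HashMap consisted of an array plus linked lists: the array is the main structure, and the linked lists exist to resolve hash collisions. Since JDK 1.8, when the length of a linked list exceeds the threshold (the treeification threshold, 8 by default) and the array length is at least 64, all entries at that index are stored in a red-black tree instead.

Note: before converting a list to a red-black tree, HashMap checks the array length. Even if the list is longer than 8, when the array length is less than 64 the list is not converted to a red-black tree; the array is resized instead.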

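How HashMap stores data in its underlying structure

HashMap<String,Integer> map = new HashMap<>();

When a HashMap object is created, before JDK 8 the constructor created an Entry[] of length 16 to hold the key-value pairs. Since JDK 8 the array is no longer created in the constructor; the Node[] array is created in the put method (more precisely in putVal, which put calls).

Take the following code as an example: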

HashMap<String ,Integer> map = new HashMap<>();

map.put("k1",1);

map.put("k2",2);

map.put("k3",3);

map.put("k1",4);

System.out.println(map);

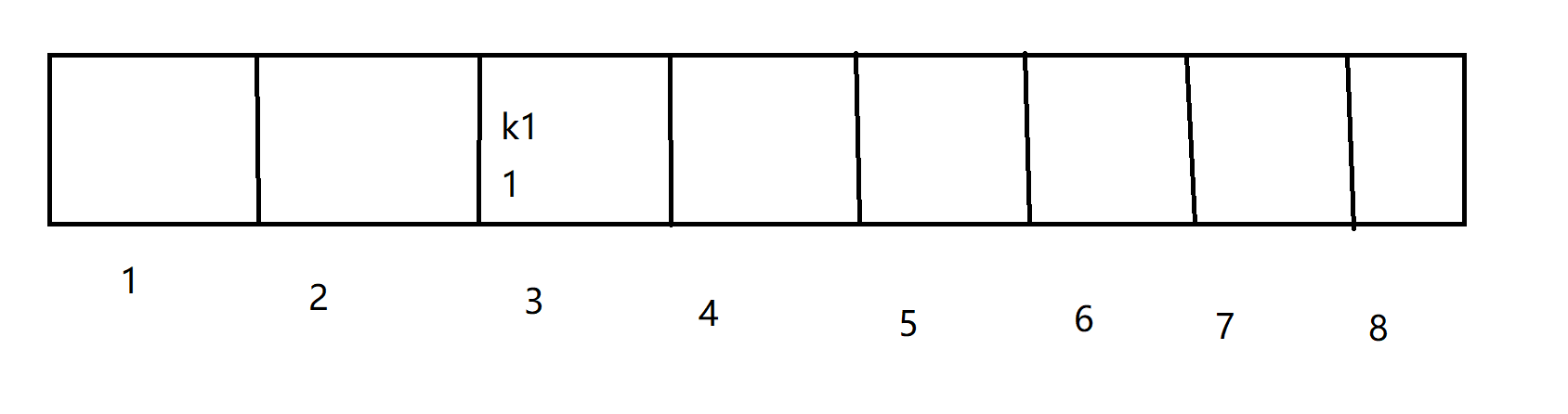

1、首先putk1这个键值对,在HashMap中先将键值的原hash码无符号右移16位,再与原hash码做异或运算得到HashMap中的哈希值

return (key == null) ? 0 : (h = key.hashCode()) ^ (h >>> 16);

计算出在node数组中的索引值,如果计算出的索引空间没有数据,则直接将k1键值对储存到数组中(假设计算出的索引是3)

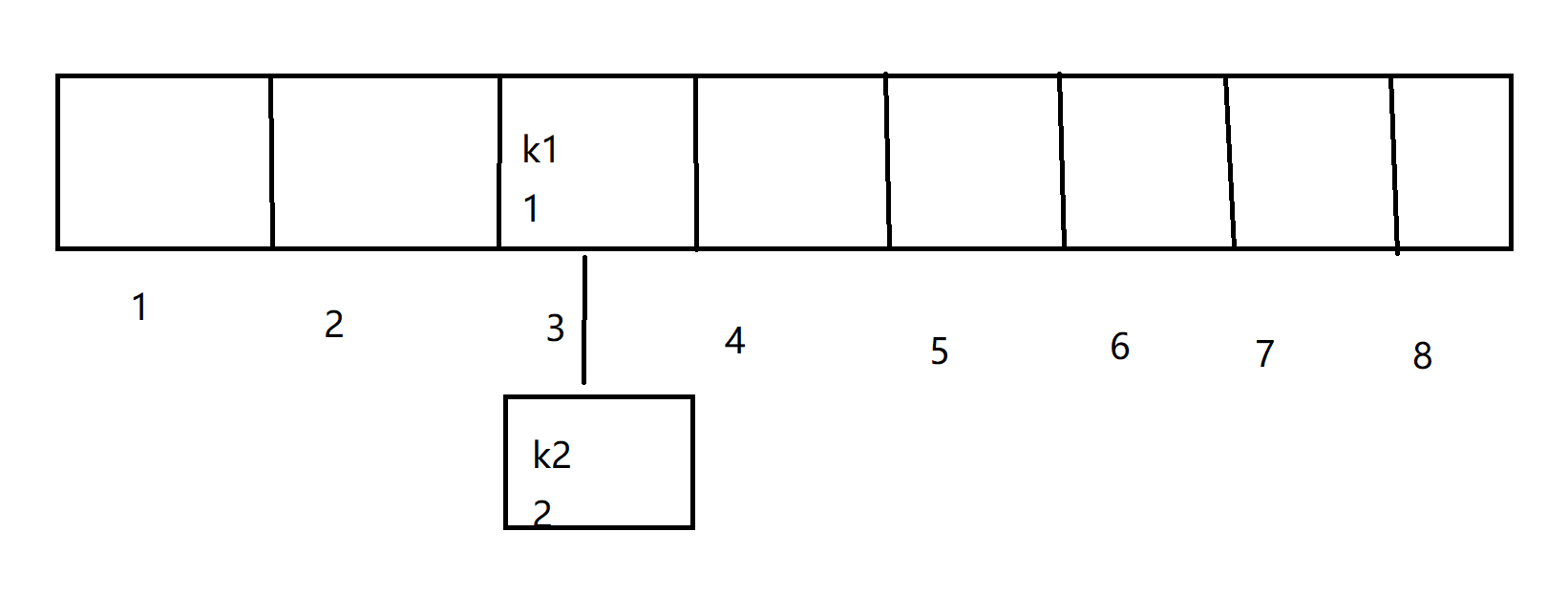

2、向Hash Map中储存k2键值对,假设k2计算出的索引值也为3,此时底层会比较k2与k1的hash值是否一致,如果不一致,则再次空间上划出一个节点来储存

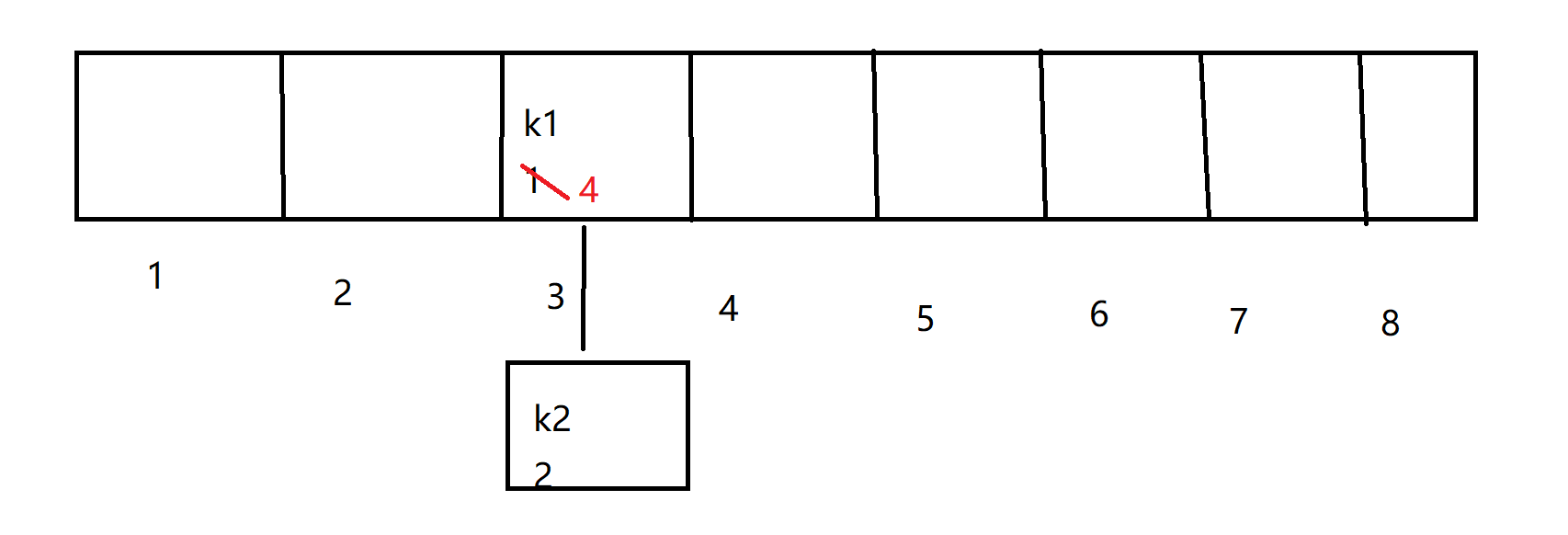

3、假设向HashMap中储存k1—4键值对,首先根据k1求出的索引也肯定是3,此时比较后储存的数据k1和已经存在的数据,如果hash值相等,此时发生哈希碰撞。 那么底层会调用所属类的equals方法比较两个内容是否相等,相等则将后来的数据的value覆盖掉之前的value,不相等那么继续向下和其他的数据的key进行比较,如果都不相等则划出一个节点储存数据。
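The overwrite in step 3 can be observed from the outside through the return value of put. The following is a minimal sketch; the class name PutOverwriteDemo and the helper build are only for illustration.

```java
import java.util.HashMap;
import java.util.Map;

class PutOverwriteDemo {
    // Builds the map from the example above.
    static Map<String, Integer> build() {
        Map<String, Integer> map = new HashMap<>();
        map.put("k1", 1);
        map.put("k2", 2);
        map.put("k3", 3);
        map.put("k1", 4); // same key: the value is replaced, no new node is added
        return map;
    }

    public static void main(String[] args) {
        Map<String, Integer> map = new HashMap<>();
        Integer first = map.put("k1", 1);  // no previous mapping, returns null
        Integer second = map.put("k1", 4); // returns the value that was replaced
        System.out.println(first + " " + second + " " + map.size()); // null 1 1
        System.out.println(build().size()); // 3
    }
}
```

put returns the previous value for the key (or null if there was none), so a non-null return value is a quick way to tell that an existing mapping was overwritten.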

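Notes:

- size is the current number of key-value pairs in the Map, not the length of the array.

- threshold = capacity * loadFactor. It is the largest number of entries the map holds before it grows: once size exceeds this threshold the table is resized, and the new capacity is twice the old capacity.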

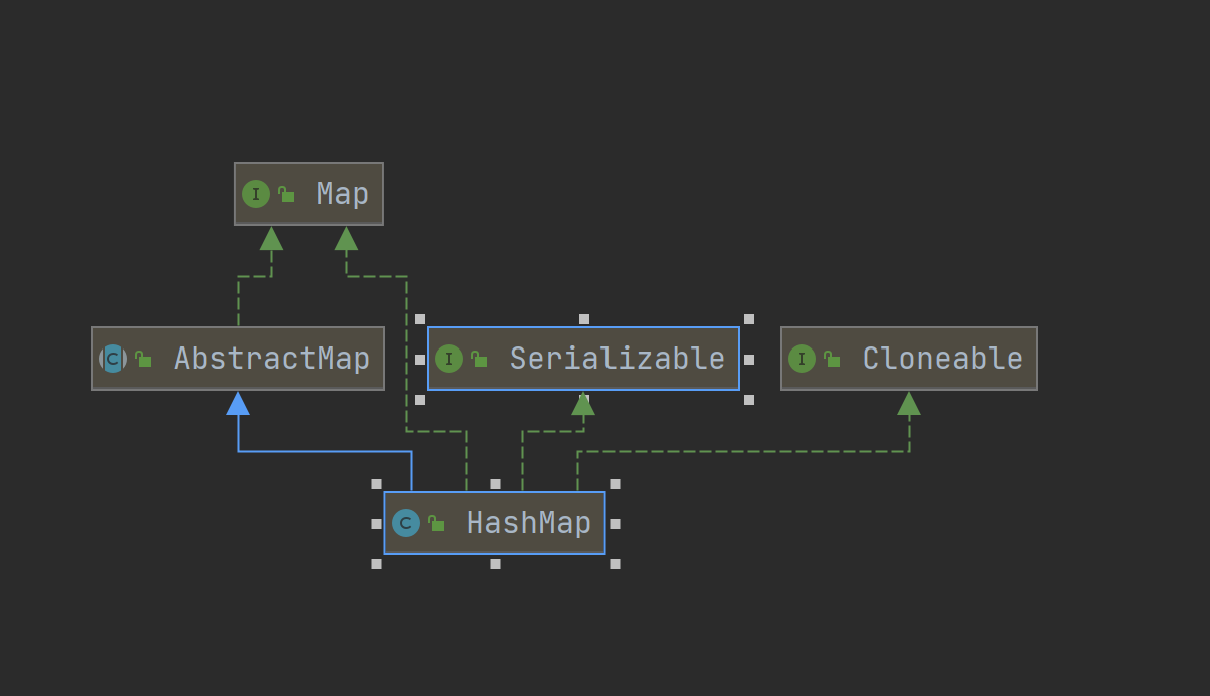

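HashMap's inheritance hierarchy

It implements the two marker interfaces Serializable and Cloneable, which indicate that it can be serialized and cloned.

Member variables

1. The initial capacity of the collection (must be a power of two)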

/**

* The default initial capacity - MUST be a power of two.

*/

static final int DEFAULT_INITIAL_CAPACITY = 1 << 4; // aka 16

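Why must it be a power of two? Because the array index is later computed as (length - 1) & hash, which works like a modulo operation. Only when length is a power of two are all the low bits of length - 1 equal to 1 in binary, so that the AND gives the same result as taking the remainder. This way every slot of the array can be reached, which reduces collisions, and a bitwise AND is cheaper than a division.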
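To make the point concrete, here is a small sketch that compares the mask with a true modulo. The class and method names (IndexMaskDemo, maskIndex) are made up for this illustration and are not part of HashMap.

```java
import java.util.Set;
import java.util.TreeSet;

class IndexMaskDemo {
    // Index computation as described above; only meaningful when length is a power of two.
    static int maskIndex(int hash, int length) {
        return (length - 1) & hash;
    }

    public static void main(String[] args) {
        int hash = 0x7A3F; // arbitrary example hash
        // Power-of-two length: the mask matches the mathematical modulo.
        System.out.println(maskIndex(hash, 16) == Math.floorMod(hash, 16)); // true

        // Length 10 is not a power of two: 10 - 1 = 0b1001, so only the
        // indices 0, 1, 8 and 9 can ever be produced and six slots stay empty.
        Set<Integer> seen = new TreeSet<>();
        for (int h = 0; h < 100; h++) {
            seen.add(maskIndex(h, 10));
        }
        System.out.println(seen); // [0, 1, 8, 9]
    }
}
```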

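What if the requested initial capacity is not a power of two?

HashMap uses bit operations to find the smallest power of two that is greater than or equal to the requested number.

The algorithm is as follows: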

static final int tableSizeFor(int cap) {

int n = cap - 1;

n |= n >>> 1;

n |= n >>> 2;

n |= n >>> 4;

n |= n >>> 8;

n |= n >>> 16;

return (n < 0) ? 1 : (n >= MAXIMUM_CAPACITY) ? MAXIMUM_CAPACITY : n + 1;

}

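This algorithm is quite clever: by repeatedly shifting right and ORing with the previous value, every bit below the highest set bit becomes 1. Adding one at the end then yields the nearest power of two.

One more detail is the cap - 1. Why subtract one? If cap is already a power of two and one is not subtracted, the result would be twice cap. Subtracting one avoids this error.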
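The behaviour is easy to check by copying the method into a standalone class. In the sketch below, tableSizeFor is the JDK 8 logic quoted above; the class name and the withoutMinusOne variant are hypothetical and exist only to show the effect of the cap - 1 step.

```java
class TableSizeForDemo {
    static final int MAXIMUM_CAPACITY = 1 << 30;

    // Same logic as the method quoted above.
    static int tableSizeFor(int cap) {
        int n = cap - 1;
        n |= n >>> 1;
        n |= n >>> 2;
        n |= n >>> 4;
        n |= n >>> 8;
        n |= n >>> 16;
        return (n < 0) ? 1 : (n >= MAXIMUM_CAPACITY) ? MAXIMUM_CAPACITY : n + 1;
    }

    // Hypothetical variant that skips "cap - 1", for comparison only.
    static int withoutMinusOne(int cap) {
        int n = cap;
        n |= n >>> 1;
        n |= n >>> 2;
        n |= n >>> 4;
        n |= n >>> 8;
        n |= n >>> 16;
        return n + 1;
    }

    public static void main(String[] args) {
        System.out.println(tableSizeFor(9));     // 16
        System.out.println(tableSizeFor(16));    // 16
        System.out.println(tableSizeFor(17));    // 32
        System.out.println(withoutMinusOne(16)); // 32, twice the requested capacity
    }
}
```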

2. The default load factor, 0.75

/**

* The load factor used when none specified in constructor.

*/

static final float DEFAULT_LOAD_FACTOR = 0.75f;

3. The maximum capacity of the collection, 2^30

/**

* The maximum capacity, used if a higher value is implicitly specified

* by either of the constructors with arguments.

* MUST be a power of two <= 1<<30.

*/

static final int MAXIMUM_CAPACITY = 1 << 30;

4. The treeify threshold (a linked list whose length exceeds 8 is converted to a red-black tree)

/**

* The bin count threshold for using a tree rather than list for a

* bin. Bins are converted to trees when adding an element to a

* bin with at least this many nodes. The value must be greater

* than 2 and should be at least 8 to mesh with assumptions in

* tree removal about conversion back to plain bins upon

* shrinkage.

*/

static final int TREEIFY_THRESHOLD = 8;

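Why is there a threshold at all?

A red-black tree has an average lookup complexity of O(log n), while a linked list has O(n), so the tree is faster. However, a tree node takes roughly twice the space of a list node, and insertions and deletions in a red-black tree require operations such as left and right rotations. The threshold exists to balance time against space.

So why exactly 8? The comments in the HashMap source give the answer:

Because TreeNodes are about twice the size of regular nodes, we use them only when bins contain enough nodes to warrant use (see TREEIFY_THRESHOLD). And when they become too small (due to removal or resizing) they are converted back to plain bins. In usages with well-distributed user hashCodes, tree bins are rarely used. Ideally, under random hashCodes, the frequency of nodes in bins follows a Poisson distribution (http://en.wikipedia.org/wiki/Poisson_distribution) with a parameter of about 0.5 on average for the default resizing threshold of 0.75, although with a large variance because of resizing granularity. Ignoring variance, the expected occurrences of list size k are (exp(-0.5) * pow(0.5, k) / factorial(k)). The first values are:

0: 0.60653066

1: 0.30326533

2: 0.07581633

3: 0.01263606

4: 0.00157952

5: 0.00015795

6: 0.00001316

7: 0.00000094

8: 0.00000006

more: less than 1 in ten million

In short, if hashCodes are well distributed, red-black trees are rarely used. Ideally the bin sizes follow a Poisson distribution. The comment lists the probabilities for sizes 0 through 8, and the probability of a bin reaching size 8 is only 0.00000006, which is extremely small. Converting to a red-black tree only at that point strikes a good balance between time and space.

As an example with a threshold of 8: for 8 elements, the average search length in a linked list is n/2 = 8/2 = 4, while for a red-black tree it is log2(8) = 3, so the tree is more efficient. With a threshold of 6, the list gives 6/2 = 3 and the tree log2(6) ≈ 2.6. The tree is still slightly faster, but converting to a tree structure also takes time, so 6 is not a suitable threshold.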
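The probabilities in the source comment can be reproduced directly from the Poisson formula it quotes. This is a minimal sketch; the class and method names are chosen for illustration.

```java
class PoissonBinDemo {
    // P(k) = exp(-lambda) * lambda^k / k!
    static double poisson(double lambda, int k) {
        double factorial = 1.0;
        for (int i = 2; i <= k; i++) {
            factorial *= i;
        }
        return Math.exp(-lambda) * Math.pow(lambda, k) / factorial;
    }

    public static void main(String[] args) {
        // Prints the probabilities for bin sizes 0 through 8 with lambda = 0.5.
        for (int k = 0; k <= 8; k++) {
            System.out.printf("%d: %.8f%n", k, poisson(0.5, k));
        }
    }
}
```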

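5. When the number of nodes in a tree bin drops to 6 or fewer (checked when the bin is split during a resize), the red-black tree is converted back to a linked list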

static final int UNTREEIFY_THRESHOLD = 6;

6. A bin is converted to a red-black tree only when the array length is at least 64; otherwise the array is resized

/**

* The smallest table capacity for which bins may be treeified.

* (Otherwise the table is resized if too many nodes in a bin.)

* Should be at least 4 * TREEIFY_THRESHOLD to avoid conflicts

* between resizing and treeification thresholds.

*/

static final int MIN_TREEIFY_CAPACITY = 64;

7. The table array that stores the key-value pairs, initialized on first use (its length is always a power of two)

/**

* The table, initialized on first use, and resized as

* necessary. When allocated, length is always a power of two.

* (We also tolerate length zero in some operations to allow

* bootstrapping mechanics that are currently not needed.)

*/

transient Node<K,V>[] table;

8. Holds the cached result of entrySet()

/**

* Holds cached entrySet(). Note that AbstractMap fields are used

* for keySet() and values().

*/

transient Set<Map.Entry<K,V>> entrySet;

9. The number of elements stored in the HashMap (important)

/**

* The number of key-value mappings contained in this map.

*/

transient int size;

10. Records the number of times the HashMap has been modified

transient int modCount;

11. The threshold

/**

* The next size value at which to resize (capacity * load factor).

*

* @serial

*/

// (The javadoc description is true upon serialization.

// Additionally, if the table array has not been allocated, this

// field holds the initial array capacity, or zero signifying

// DEFAULT_INITIAL_CAPACITY.)

int threshold;

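The threshold for the next resize: once size exceeds this value the array is resized. It is computed as capacity * load factor.

12. The load factor of the hash table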

/**

* The load factor for the hash table.

*

* @serial

*/

final float loadFactor;

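The load factor measures how full the array is allowed to get. The default is 0.75, so the first resize threshold is 16 * 0.75 = 12; in other words, the array is resized once the number of entries (size, not the array length) exceeds 12.

0.75 is the default chosen by the JDK as a good trade-off. When the number of elements reaches 75% of the array length, the array is considered too full.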
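The arithmetic for the first few capacities can be sketched as follows. The helper name threshold is illustrative; the formula is the capacity * load factor rule described above.

```java
class ThresholdDemo {
    // threshold = capacity * loadFactor, truncated to an int
    static int threshold(int capacity, float loadFactor) {
        return (int) (capacity * loadFactor);
    }

    public static void main(String[] args) {
        // Capacity doubles on every resize, and so does the threshold.
        for (int cap = 16; cap <= 128; cap <<= 1) {
            System.out.println(cap + " -> " + threshold(cap, 0.75f));
        }
        // 16 -> 12
        // 32 -> 24
        // 64 -> 48
        // 128 -> 96
    }
}
```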

Constructors

1. Constructs an empty HashMap with the default initial capacity (16) and load factor (0.75)

public HashMap() {

this.loadFactor = DEFAULT_LOAD_FACTOR; // all other fields defaulted

}

2. Constructs a HashMap with the specified initial capacity and the default load factor (0.75)

public HashMap(int initialCapacity) {

this(initialCapacity, DEFAULT_LOAD_FACTOR);

}

3. Constructs a HashMap with the specified initial capacity and load factor

public HashMap(int initialCapacity, float loadFactor) {

if (initialCapacity < 0)

throw new IllegalArgumentException("Illegal initial capacity: " +

initialCapacity);

if (initialCapacity > MAXIMUM_CAPACITY)

initialCapacity = MAXIMUM_CAPACITY;

if (loadFactor <= 0 || Float.isNaN(loadFactor))

throw new IllegalArgumentException("Illegal load factor: " +

loadFactor);

this.loadFactor = loadFactor;

this.threshold = tableSizeFor(initialCapacity);

}

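One might ask about the line this.threshold = tableSizeFor(initialCapacity);. By default the threshold is 16 * 0.75 = 12, but if the initialCapacity passed in is between 9 and 16, tableSizeFor returns 16 and threshold becomes 16. Doesn't that contradict the definition above, and shouldn't the line be this.threshold = tableSizeFor(initialCapacity) * this.loadFactor;? In fact, at this point threshold only holds the initial capacity temporarily. On the first put, putVal calls resize(), which uses that value as the array capacity and then recomputes threshold as capacity * loadFactor.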
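The hand-over from "initial capacity parked in threshold" to "real threshold" can be modelled in a few lines. This is only a simplified model, not the JDK code: the class InitialThresholdModel, its fields, and nextPowerOfTwo (a stand-in for tableSizeFor on small positive inputs) are all assumptions made for illustration.

```java
class InitialThresholdModel {
    int capacity = 0;       // 0: the table has not been allocated yet
    int threshold;          // temporarily holds the initial capacity
    final float loadFactor;

    InitialThresholdModel(int initialCapacity, float loadFactor) {
        this.loadFactor = loadFactor;
        this.threshold = nextPowerOfTwo(initialCapacity);
    }

    // Stand-in for tableSizeFor: smallest power of two >= cap (small positive cap only).
    static int nextPowerOfTwo(int cap) {
        int n = 1;
        while (n < cap) {
            n <<= 1;
        }
        return n;
    }

    // Models only the "table not allocated, threshold > 0" branch of resize().
    void firstResize() {
        capacity = threshold;                      // the parked value becomes the capacity
        threshold = (int) (capacity * loadFactor); // the real threshold is computed now
    }

    public static void main(String[] args) {
        InitialThresholdModel m = new InitialThresholdModel(10, 0.75f);
        System.out.println(m.capacity + " " + m.threshold); // 0 16
        m.firstResize();
        System.out.println(m.capacity + " " + m.threshold); // 16 12
    }
}
```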

4. The constructor that takes another Map

public HashMap(Map<? extends K, ? extends V> m) {

this.loadFactor = DEFAULT_LOAD_FACTOR;

putMapEntries(m, false);

}

final void putMapEntries(Map<? extends K, ? extends V> m, boolean evict) {

int s = m.size();

if (s > 0) {

if (table == null) { // pre-size

float ft = ((float)s / loadFactor) + 1.0F;

int t = ((ft < (float)MAXIMUM_CAPACITY) ?

(int)ft : MAXIMUM_CAPACITY);

if (t > threshold)

threshold = tableSizeFor(t);

}

else if (s > threshold)

resize();

for (Map.Entry<? extends K, ? extends V> e : m.entrySet()) {

K key = e.getKey();

V value = e.getValue();

putVal(hash(key), key, value, false, evict);

}

}

}

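Note the line float ft = ((float)s / loadFactor) + 1.0F;. Why add 1 here?

s / loadFactor may be fractional, and the cast to int rounds it down, which could leave the capacity too small. Adding 1 compensates for this, and the slightly larger capacity also helps avoid repeated resizing, which wastes time and space.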
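A case where the truncation matters is easiest to find with a non-default load factor. The sketch below uses 0.9 and 8 entries as assumed example values; presize, fits and nextPowerOfTwo are hypothetical helpers that mimic the pre-sizing arithmetic, not JDK methods.

```java
class PresizeDemo {
    // Stand-in for tableSizeFor: smallest power of two >= cap (small positive cap only).
    static int nextPowerOfTwo(int cap) {
        int n = 1;
        while (n < cap) {
            n <<= 1;
        }
        return n;
    }

    // Capacity chosen for s entries, with or without the "+ 1.0F" step.
    static int presize(int s, float loadFactor, boolean plusOne) {
        float ft = ((float) s / loadFactor) + (plusOne ? 1.0F : 0.0F);
        return nextPowerOfTwo((int) ft);
    }

    // True if s entries stay within the threshold for this capacity.
    static boolean fits(int s, int capacity, float loadFactor) {
        return s <= (int) (capacity * loadFactor);
    }

    public static void main(String[] args) {
        float lf = 0.9f;
        int s = 8;
        int withoutOne = presize(s, lf, false); // 8 / 0.9 = 8.88 is truncated to 8
        int withOne = presize(s, lf, true);     // 9.88 is truncated to 9, rounded up to 16
        System.out.println(withoutOne + " fits=" + fits(s, withoutOne, lf)); // 8 fits=false
        System.out.println(withOne + " fits=" + fits(s, withOne, lf));       // 16 fits=true
    }
}
```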

Member methods

The put (putVal) method

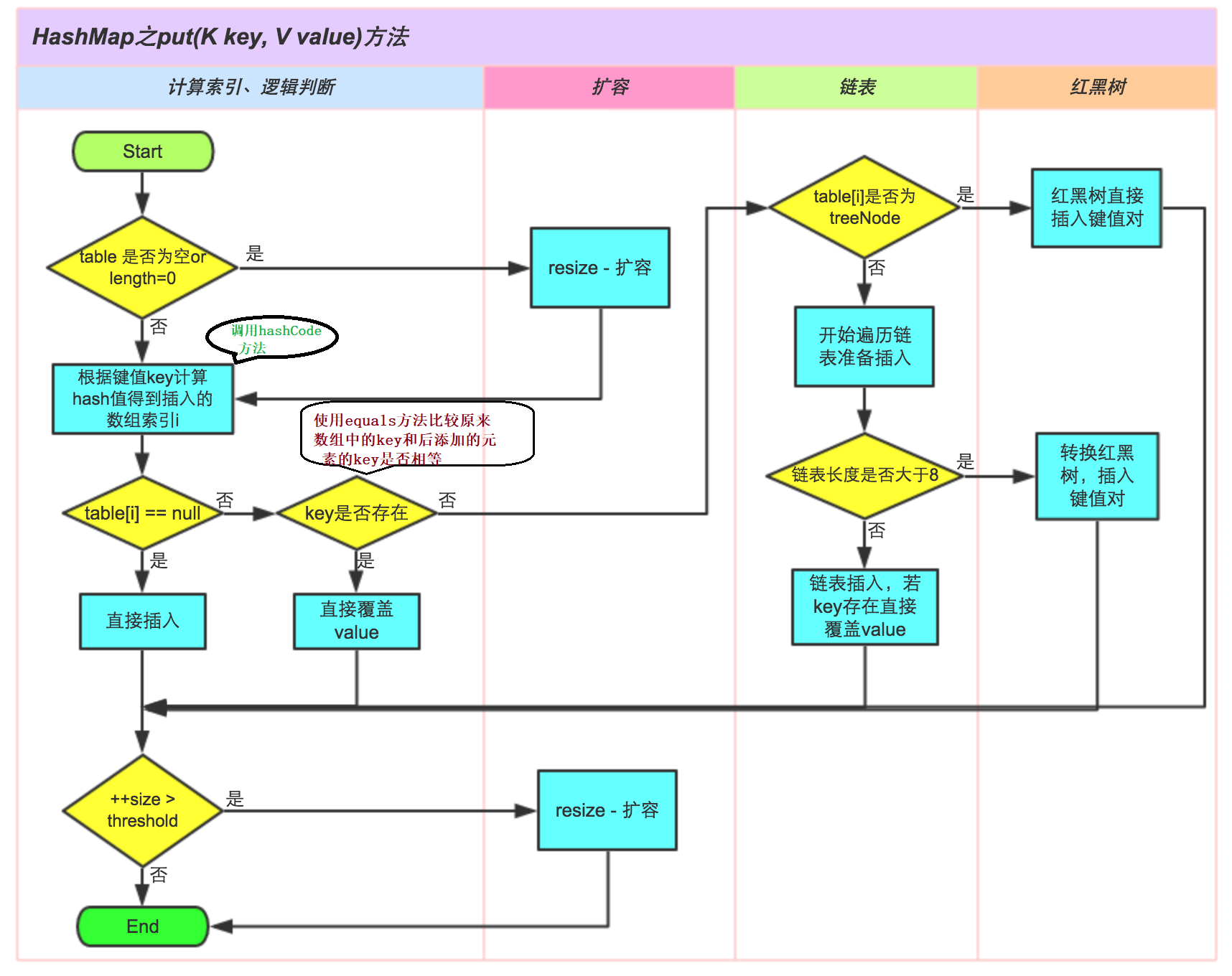

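The rough steps are:

- Compute from the hash value which bucket the key maps to

- If the bucket is empty (no collision), insert directly

- If there is a collision, it has to be handled

- If the bucket uses a red-black tree, call the tree's method to insert the data

- Otherwise insert using the traditional chaining approach; if the chain length reaches the threshold, convert the chain to a red-black tree

- If the bucket already contains the same key, replace that key's value with the new value

- If size exceeds threshold, resize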
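The steps above can be sketched as a tiny chained hash map. This is a deliberately simplified illustration, not the JDK implementation: TinyChainedMap is a made-up class with a fixed table of 16 buckets, and it leaves out treeification and resizing entirely.

```java
import java.util.Objects;

class TinyChainedMap<K, V> {
    static final class Node<K, V> {
        final int hash;
        final K key;
        V value;
        Node<K, V> next;

        Node(int hash, K key, V value) {
            this.hash = hash;
            this.key = key;
            this.value = value;
        }
    }

    @SuppressWarnings("unchecked")
    private final Node<K, V>[] table = (Node<K, V>[]) new Node[16];
    int size;

    static int hash(Object key) {
        int h;
        return (key == null) ? 0 : (h = key.hashCode()) ^ (h >>> 16);
    }

    // Returns the previous value, or null if the key was absent.
    V put(K key, V value) {
        int h = hash(key);
        int i = (table.length - 1) & h;       // pick the bucket
        if (table[i] == null) {               // empty bucket: insert directly
            table[i] = new Node<>(h, key, value);
            size++;
            return null;
        }
        Node<K, V> p = table[i];
        while (true) {                        // collision: walk the chain
            if (p.hash == h && Objects.equals(p.key, key)) {
                V old = p.value;              // same key: replace the value
                p.value = value;
                return old;
            }
            if (p.next == null) {
                break;
            }
            p = p.next;
        }
        p.next = new Node<>(h, key, value);   // no match: append at the tail
        size++;
        return null;
    }

    public static void main(String[] args) {
        TinyChainedMap<String, Integer> m = new TinyChainedMap<>();
        m.put("k1", 1);
        m.put("k2", 2);
        System.out.println(m.put("k1", 4) + " " + m.size); // 1 2
    }
}
```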

The actual method is as follows:

public V put(K key, V value) {

return putVal(hash(key), key, value, false, true);

}

Now look at the hash method:

static final int hash(Object key) {

int h;

return (key == null) ? 0 : (h = key.hashCode()) ^ (h >>> 16);

}

Why XOR with the unsigned right shift by 16 bits here?

This has to do with how putVal computes the index:

final V putVal(int hash, K key, V value, boolean onlyIfAbsent,

boolean evict) {

Node<K,V>[] tab; Node<K,V> p; int n, i;

if ((tab = table) == null || (n = tab.length) == 0)

n = (tab = resize()).length;

if ((p = tab[i = (n - 1) & hash]) == null)

tab[i] = newNode(hash, key, value, null);

else {

Node<K,V> e; K k;

if (p.hash == hash &&

((k = p.key) == key || (key != null && key.equals(k))))

e = p;

else if (p instanceof TreeNode)

e = ((TreeNode<K,V>)p).putTreeVal(this, tab, hash, key, value);

else {

for (int binCount = 0; ; ++binCount) {

if ((e = p.next) == null) {

p.next = newNode(hash, key, value, null);

if (binCount >= TREEIFY_THRESHOLD - 1) // -1 for 1st

treeifyBin(tab, hash);

break;

}

if (e.hash == hash &&

((k = e.key) == key || (key != null && key.equals(k))))

break;

p = e;

}

}

if (e != null) { // existing mapping for key

V oldValue = e.value;

if (!onlyIfAbsent || oldValue == null)

e.value = value;

afterNodeAccess(e);

return oldValue;

}

}

++modCount;

if (++size > threshold)

resize();

afterNodeInsertion(evict);

return null;

}

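The line i = (n - 1) & hash computes the index and is equivalent to hash % length. The hash value is obtained by XORing the key's hashCode with itself shifted right by 16 bits (unsigned). Why shift right by 16 bits?

If the hashCode were ANDed with length - 1 directly, without the shift and XOR, then because length is usually small only the low bits of the hash value would take part in the computation. The spread would be poor and hash collisions would be more likely. So Java XORs the hash value with itself shifted right by 16 bits: the high 16 bits stay unchanged, and the low 16 bits are now determined by both the original high 16 bits and the original low 16 bits. This improves the spread and reduces hash collisions.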
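A short sketch shows the effect on two hash codes that differ only in their high bits. The values 0x10000 and 0x20000 and the names spread and index are chosen for illustration; spread is the same expression as in the hash method.

```java
class HashSpreadDemo {
    // Same mixing step as HashMap.hash for a non-null key.
    static int spread(int h) {
        return h ^ (h >>> 16);
    }

    static int index(int hash, int n) {
        return (n - 1) & hash;
    }

    public static void main(String[] args) {
        int a = 0x10000; // the two values differ only in the high 16 bits
        int b = 0x20000;
        // Without spreading, both land in bucket 0 of a 16-slot table.
        System.out.println(index(a, 16) + " " + index(b, 16));                 // 0 0
        // With spreading, the high bits influence the index.
        System.out.println(index(spread(a), 16) + " " + index(spread(b), 16)); // 1 2
    }
}
```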

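Now look at the putVal method.

Parameters:

- hash: the hash value of the key

- key: the original key

- value: the value to store

- onlyIfAbsent: if true, an existing value is not changed

- evict: if false, the table is in creation mode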


The treeifyBin method

Converts a linked list into a red-black tree

/**

* Replaces all linked nodes in bin at index for given hash unless

* table is too small, in which case resizes instead.

*/

final void treeifyBin(Node<K,V>[] tab, int hash) {

int n, index; Node<K,V> e;

if (tab == null || (n = tab.length) < MIN_TREEIFY_CAPACITY)

resize();

else if ((e = tab[index = (n - 1) & hash]) != null) {

// hd: head of the TreeNode list, tl: tail of the TreeNode list

TreeNode<K,V> hd = null, tl = null;

do {

TreeNode<K,V> p = replacementTreeNode(e, null);

if (tl == null)

hd = p;

else {

p.prev = tl;

tl.next = p;

}

tl = p;

} while ((e = e.next) != null);

if ((tab[index] = hd) != null)

hd.treeify(tab);

}

}

// For treeifyBin

// simply converts a plain list node into a tree node

TreeNode<K,V> replacementTreeNode(Node<K,V> p, Node<K,V> next) {

return new TreeNode<>(p.hash, p.key, p.value, next);

}

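This code mainly does the following:

- Checks whether the array length is at least 64 (MIN_TREEIFY_CAPACITY); if it is not, the array is resized instead. The check that the list is longer than 8 has already been made in putVal.

- Converts each list node to a TreeNode and links the nodes together using the hd head pointer, the tl tail pointer and the prev pointers

- Builds the tree in the treeify method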
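Putting the two checks together, the decision made after a node is appended to a bucket can be sketched like this. TreeifyDecisionDemo, the Action enum and afterAppend are hypothetical names; only the two constants come from the source.

```java
class TreeifyDecisionDemo {
    static final int TREEIFY_THRESHOLD = 8;
    static final int MIN_TREEIFY_CAPACITY = 64;

    enum Action { NONE, RESIZE, TREEIFY }

    // binLength: number of nodes in the bucket after the new node was appended.
    static Action afterAppend(int binLength, int tableLength) {
        if (binLength <= TREEIFY_THRESHOLD) {
            return Action.NONE;        // list is still short enough
        }
        return tableLength < MIN_TREEIFY_CAPACITY
                ? Action.RESIZE        // small table: grow it instead
                : Action.TREEIFY;      // large table: convert the bucket
    }

    public static void main(String[] args) {
        System.out.println(afterAppend(8, 64)); // NONE
        System.out.println(afterAppend(9, 16)); // RESIZE
        System.out.println(afterAppend(9, 64)); // TREEIFY
    }
}
```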

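The resize method

When is a resize needed?

When the number of elements in the HashMap exceeds array length * loadFactor, the array is resized: its length doubles and the position of every element in the new array is recomputed. This is an expensive operation, so if the number of elements is known in advance, creating the HashMap with a suitable initial capacity can noticeably improve performance.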
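One common way to apply this advice is to derive the initial capacity from the expected number of entries. The helper capacityFor below is a hypothetical convenience function, assuming the default load factor of 0.75.

```java
import java.util.HashMap;
import java.util.Map;

class PresizedMapDemo {
    // Hypothetical helper: an initial capacity large enough that `expected`
    // entries stay within the threshold at the default load factor of 0.75.
    static int capacityFor(int expected) {
        return (int) (expected / 0.75f) + 1;
    }

    public static void main(String[] args) {
        int expected = 1000;
        Map<Integer, String> map = new HashMap<>(capacityFor(expected));
        for (int i = 0; i < expected; i++) {
            map.put(i, "v" + i); // no resize should be triggered while filling
        }
        System.out.println(capacityFor(expected) + " " + map.size()); // 1334 1000
    }
}
```

HashMap rounds the requested capacity up to a power of two (2048 here), whose threshold of 1536 comfortably covers the 1000 entries.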

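Where the existing elements go after a resize

When the array doubles in length, length - 1 gains one more 1 bit at the high end in binary, and the new index is again computed as (length - 1) & hash. If the hash value has a 1 in the bit position that corresponds to the old array length, new index = old index + old array length. If that bit is 0, new index = old index.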
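The rule can be checked against a direct recomputation of the index. In this sketch newIndex is an illustrative helper that applies the rule above; the hashes 5 and 21 are arbitrary examples that share bucket 5 in a 16-slot table.

```java
class ResizeSplitDemo {
    // New index after doubling, derived from the old index and one extra hash bit.
    static int newIndex(int hash, int oldCap) {
        int oldIndex = (oldCap - 1) & hash;
        return (hash & oldCap) == 0 ? oldIndex : oldIndex + oldCap;
    }

    public static void main(String[] args) {
        int oldCap = 16;
        int newCap = oldCap << 1;
        for (int hash : new int[] {5, 21}) {
            int direct = (newCap - 1) & hash; // recomputed from scratch
            System.out.println(hash + ": " + newIndex(hash, oldCap) + " " + direct);
        }
        // 5: 5 5     (bit 16 is 0, index unchanged)
        // 21: 21 21  (bit 16 is 1, index = 5 + 16)
    }
}
```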

Source code of resize()

/**

* Initializes or doubles table size. If null, allocates in

* accord with initial capacity target held in field threshold.

* Otherwise, because we are using power-of-two expansion, the

* elements from each bin must either stay at same index, or move

* with a power of two offset in the new table.

*

* @return the table

*/

final Node<K,V>[] resize() {

Node<K,V>[] oldTab = table;

int oldCap = (oldTab == null) ? 0 : oldTab.length;

int oldThr = threshold;

int newCap, newThr = 0;

if (oldCap > 0) {

if (oldCap >= MAXIMUM_CAPACITY) {

threshold = Integer.MAX_VALUE;

return oldTab;

}

else if ((newCap = oldCap << 1) < MAXIMUM_CAPACITY &&

oldCap >= DEFAULT_INITIAL_CAPACITY)

newThr = oldThr << 1; // double threshold

}

else if (oldThr > 0) // initial capacity was placed in threshold

newCap = oldThr;

else { // zero initial threshold signifies using defaults

newCap = DEFAULT_INITIAL_CAPACITY;

newThr = (int)(DEFAULT_LOAD_FACTOR * DEFAULT_INITIAL_CAPACITY);

}

if (newThr == 0) {

float ft = (float)newCap * loadFactor;

newThr = (newCap < MAXIMUM_CAPACITY && ft < (float)MAXIMUM_CAPACITY ?

(int)ft : Integer.MAX_VALUE);

}

threshold = newThr;

@SuppressWarnings({"rawtypes","unchecked"})

Node<K,V>[] newTab = (Node<K,V>[])new Node[newCap];

table = newTab;

if (oldTab != null) {

for (int j = 0; j < oldCap; ++j) { // move the entries of the old array into the new array

Node<K,V> e;

if ((e = oldTab[j]) != null) {

oldTab[j] = null;

if (e.next == null)

newTab[e.hash & (newCap - 1)] = e;

else if (e instanceof TreeNode) // the bucket holds a tree: split the tree

((TreeNode<K,V>)e).split(this, newTab, j, oldCap);

else { // preserve order (linked-list bucket)

Node<K,V> loHead = null, loTail = null;

Node<K,V> hiHead = null, hiTail = null;

Node<K,V> next;

do {

next = e.next;

if ((e.hash & oldCap) == 0) { // extra bit is 0: index unchanged; the original list is split into two shorter lists this way

if (loTail == null)

loHead = e;

else

loTail.next = e;

loTail = e;

}

else { // extra bit is 1: index becomes j + oldCap

if (hiTail == null)

hiHead = e;

else

hiTail.next = e;

hiTail = e;

}

} while ((e = next) != null);

if (loTail != null) { // the list whose extra bit is 0 keeps index j

loTail.next = null;

newTab[j] = loHead;

}

if (hiTail != null) { // the other list moves to index j + oldCap

hiTail.next = null;

newTab[j + oldCap] = hiHead;

}

}

}

}

}

return newTab;

}

The remove method

public V remove(Object key) {

Node<K,V> e;

return (e = removeNode(hash(key), key, null, false, true)) == null ?

null : e.value;

}

final Node<K,V> removeNode(int hash, Object key, Object value,

boolean matchValue, boolean movable) {

Node<K,V>[] tab; Node<K,V> p; int n, index;

if ((tab = table) != null && (n = tab.length) > 0 &&

(p = tab[index = (n - 1) & hash]) != null) {

Node<K,V> node = null, e; K k; V v;

if (p.hash == hash &&

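((k = p.key) == key || (key != null && key.equals(k)))) // the node to remove is the first node of the bucket

node = p; // remember the node; it is unlinked further below

else if ((e = p.next) != null) { // otherwise search the rest of the bucket

if (p instanceof TreeNode)

node = ((TreeNode<K,V>)p).getTreeNode(hash, key); // the bucket is a red-black tree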

else {

do {

if (e.hash == hash &&

((k = e.key) == key ||

(key != null && key.equals(k)))) {

node = e;

break;

}

p = e;

} while ((e = e.next) != null);

}

}

if (node != null && (!matchValue || (v = node.value) == value ||

(value != null && value.equals(v)))) {

if (node instanceof TreeNode)

((TreeNode<K,V>)node).removeTreeNode(this, tab, movable);

else if (node == p)

tab[index] = node.next;

else

p.next = node.next;

++modCount;

--size;

afterNodeRemoval(node);

return node;

}

}

return null;

}

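The get method

Because HashMap indexes by hash, the array index of an element can be computed directly, so a lookup takes O(1) time on average. If the bucket holds a linked list, the list is traversed; if it holds a red-black tree, the tree is searched.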

public V get(Object key) {

Node<K,V> e;

return (e = getNode(hash(key), key)) == null ? null : e.value;

}

final Node<K,V> getNode(int hash, Object key) {

Node<K,V>[] tab; Node<K,V> first, e; int n; K k;

if ((tab = table) != null && (n = tab.length) > 0 &&

(first = tab[(n - 1) & hash]) != null) {

if (first.hash == hash && // always check first node

((k = first.key) == key || (key != null && key.equals(k))))

return first;

if ((e = first.next) != null) {

if (first instanceof TreeNode) // the bucket is a red-black tree

return ((TreeNode<K,V>)first).getTreeNode(hash, key);

do { // the bucket is a linked list

if (e.hash == hash &&

((k = e.key) == key || (key != null && key.equals(k))))

return e;

} while ((e = e.next) != null);

}

}

return null;

}
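One practical consequence of get returning null when no node is found: since HashMap allows null values, a null result from get does not by itself say whether the key is absent. The sketch below illustrates this with containsKey; the class name, the helper sample and the keys "present" and "absent" are made up for the example.

```java
import java.util.HashMap;
import java.util.Map;

class GetNullDemo {
    // A map in which one key is explicitly mapped to null.
    static Map<String, Integer> sample() {
        Map<String, Integer> map = new HashMap<>();
        map.put("present", null);
        return map;
    }

    public static void main(String[] args) {
        Map<String, Integer> map = sample();
        System.out.println(map.get("present"));         // null (key exists, value is null)
        System.out.println(map.get("absent"));          // null (key does not exist)
        System.out.println(map.containsKey("present")); // true
        System.out.println(map.containsKey("absent"));  // false
    }
}
```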

Ways to traverse a HashMap

- Traverse the keys and the values separately

private static void method1(HashMap<String, Integer> map) {

Set<String> strings = map.keySet();

Collection<Integer> values = map.values();

for (String string : strings) {

System.out.println(string);

}

for (Integer value : values) {

System.out.println(value);

}

}

- Iterate over the entry set with an Iterator

private static void method2(HashMap<String, Integer> map) {

Set<Map.Entry<String, Integer>> entries = map.entrySet();

Iterator<Map.Entry<String, Integer>> iterator = entries.iterator();

while (iterator.hasNext()) {

Map.Entry<String, Integer> entry = iterator.next();

System.out.println(entry.getKey()+":"+entry.getValue());

}

}

- Look up each value with get (not recommended: on top of iterating over the keys, every key needs an extra lookup, which is inefficient)

private static void method3(HashMap<String, Integer> map) {

Set<String> strings = map.keySet();

for (String string : strings) {

System.out.println(string+":"+ map.get(string));

}

}

- Use the default forEach method added to the Map interface in Java 8

private static void method4(HashMap<String, Integer> map) {

map.forEach((key,value)-> System.out.println(key+":"+value));

}
