Stream Processing with Kafka + Spark Streaming (WordCount)

1. Download the required jar packages


Copy kafka-clients-3.0.0.jar from the /home/DYY/spark/kafka_2.12-3.0.0/libs/ directory into the /home/DYY/spark/spark-3.1.1-bin-hadoop2.7/jars directory.

2. Write the producer program

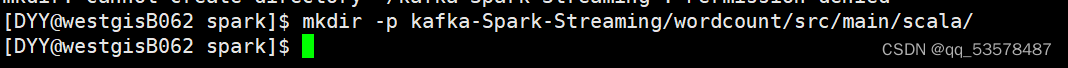

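- Create the project directories inside a folder of your choice. (Note: mkdir -p /kafka-Spark-Streaming/wordcount/src/main/scala/ is wrong, because the leading slash makes the path absolute and creates the tree under the filesystem root instead of the current folder.)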

mkdir -p kafka-Spark-Streaming/wordcount/src/main/scala/

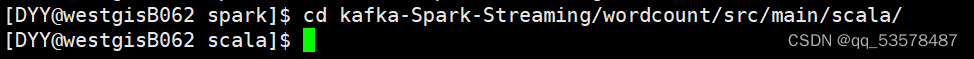

- Change into the wordcount/src/main/scala directory

cd kafka-Spark-Streaming/wordcount/src/main/scala/

- Write the producer program in KafkaWordProducer.scala

vim KafkaWordProducer.scala

import java.util.HashMap

import org.apache.kafka.clients.producer.{KafkaProducer, ProducerConfig, ProducerRecord}

import org.apache.spark.SparkConf

import org.apache.spark.streaming._

import org.apache.spark.stre
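The listing above is cut off partway through its imports, so the rest of the producer is not shown. As a rough illustration of what a word producer of this kind might look like, here is a minimal sketch. It is not the original program: the object name `KafkaWordProducerSketch`, the helper `randomMessage`, the four command-line arguments, and the choice to send random digits as "words" are all assumptions, and it configures the client with `java.util.Properties` rather than the `HashMap` imported above. It only needs the kafka-clients jar on the classpath.

```scala
import java.util.Properties
import scala.util.Random
import org.apache.kafka.clients.producer.{KafkaProducer, ProducerConfig, ProducerRecord}
import org.apache.kafka.common.serialization.StringSerializer

// Hypothetical stand-in for the truncated KafkaWordProducer.scala.
object KafkaWordProducerSketch {

  // Build one message: `wordsPerMessage` random digits (0-9) separated by spaces.
  def randomMessage(wordsPerMessage: Int, rng: Random): String =
    Seq.fill(wordsPerMessage)(rng.nextInt(10).toString).mkString(" ")

  def main(args: Array[String]): Unit = {
    if (args.length < 4) {
      System.err.println(
        "Usage: KafkaWordProducerSketch <brokers> <topic> <messagesPerSec> <wordsPerMessage>")
      sys.exit(1)
    }
    // Assumed argument layout, e.g.: localhost:9092 wordsender 3 5
    val Array(brokers, topic, messagesPerSec, wordsPerMessage) = args.take(4)

    // Producer configuration: broker address plus String serializers for key and value.
    val props = new Properties()
    props.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG, brokers)
    props.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, classOf[StringSerializer].getName)
    props.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG, classOf[StringSerializer].getName)

    val producer = new KafkaProducer[String, String](props)
    val rng = new Random()
    // Flush and close the producer when the JVM is stopped (e.g. Ctrl+C).
    sys.addShutdownHook(producer.close())

    // Send `messagesPerSec` messages, then pause for one second, forever.
    while (true) {
      (1 to messagesPerSec.toInt).foreach { _ =>
        val message = randomMessage(wordsPerMessage.toInt, rng)
        producer.send(new ProducerRecord[String, String](topic, message))
      }
      Thread.sleep(1000)
    }
  }
}
```

Keeping the message generation in a small pure function makes it easy to check on its own, without a running broker.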

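This article shows how to build a streaming WordCount application with Kafka and Spark Streaming. First, download the required jar packages, including spark-streaming-kafka-0-10 and spark-token-provider-kafka-0-10. Next, write the producer and consumer programs: the producer sends data to Kafka, and the consumer processes it with Spark Streaming. The consumer sets a checkpoint to keep the data correct. Compile and package the programs with sbt, then run the producer and the consumer separately, making sure the IP addresses match.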
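The consumer itself is not shown in this part of the article. The following is a minimal sketch of how a Spark Streaming consumer with a checkpoint could be written against the spark-streaming-kafka-0-10 API. Everything specific in it is an assumption made for illustration: the object name `KafkaWordCountSketch`, the helper `splitWords`, the broker address `localhost:9092`, the topic `wordsender`, the group id, the `checkpoint` directory, the `local[2]` master, and the window sizes. It uses a windowed count with an inverse reduce function, which is one operation for which Spark Streaming requires a checkpoint directory; the original consumer may use a different one.

```scala
import org.apache.kafka.common.serialization.StringDeserializer
import org.apache.spark.SparkConf
import org.apache.spark.streaming.{Minutes, Seconds, StreamingContext}
import org.apache.spark.streaming.kafka010.{ConsumerStrategies, KafkaUtils, LocationStrategies}

// Hypothetical consumer; not the article's original program.
object KafkaWordCountSketch {

  // Split one Kafka message into whitespace-separated words, dropping empty tokens.
  def splitWords(line: String): Seq[String] =
    line.trim.split("\\s+").filter(_.nonEmpty).toSeq

  def main(args: Array[String]): Unit = {
    val conf = new SparkConf().setAppName("KafkaWordCountSketch").setMaster("local[2]")
    val ssc = new StreamingContext(conf, Seconds(10)) // 10-second micro-batches

    // Required by the inverse-function window below (assumed directory name).
    ssc.checkpoint("checkpoint")

    // The broker address must be the same one the producer sends to (assumed value).
    val kafkaParams = Map[String, Object](
      "bootstrap.servers" -> "localhost:9092",
      "key.deserializer" -> classOf[StringDeserializer],
      "value.deserializer" -> classOf[StringDeserializer],
      "group.id" -> "wordcount-sketch",
      "auto.offset.reset" -> "latest",
      "enable.auto.commit" -> (false: java.lang.Boolean)
    )

    val stream = KafkaUtils.createDirectStream[String, String](
      ssc,
      LocationStrategies.PreferConsistent,
      ConsumerStrategies.Subscribe[String, String](Seq("wordsender"), kafkaParams)
    )

    // (word, 1) pairs from each message value.
    val pairs = stream.flatMap(record => splitWords(record.value)).map(word => (word, 1))

    // Counts over the last 2 minutes, updated every 10 seconds. The second
    // function subtracts the batches that slide out of the window.
    val counts = pairs.reduceByKeyAndWindow(
      (a: Int, b: Int) => a + b,
      (a: Int, b: Int) => a - b,
      Minutes(2),
      Seconds(10)
    )

    counts.print()
    ssc.start()
    ssc.awaitTermination()
  }
}
```

With the inverse-function form, a word that drops out of the window stays in the output with a count of 0 unless a filter function is also passed, so zeros in the printed result are expected in this sketch.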
