Reference: https://blog.csdn.net/jiecxy/article/details/78011630

1. First, create a Maven project and add the following hadoop-client dependency:

<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>

    <groupId>com.allen</groupId>
    <artifactId>JavaTest</artifactId>
    <version>1.0-SNAPSHOT</version>

    <properties>
        <kotlin.version>1.2.21</kotlin.version>
    </properties>

    <dependencies>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-client</artifactId>
            <version>2.9.0</version>
        </dependency>
    </dependencies>

    <build>
        <plugins>
            <plugin>
                <groupId>org.apache.maven.plugins</groupId>
                <artifactId>maven-compiler-plugin</artifactId>
                <version>3.6.1</version>
                <configuration>
                    <source>1.8</source>
                    <target>1.8</target>
                </configuration>
            </plugin>
        </plugins>
    </build>
</project>

2. Add the following code:

package com.allen;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.FSDataInputStream;

import org.apache.hadoop.fs.FSDataOutputStream;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import java.io.BufferedReader;

import java.io.IOException;

import java.io.InputStreamReader;

import java.net.URI;

import java.net.URISyntaxException;

public class HdfsClientDemo {

public static void main(String[] args) throws URISyntaxException, IOException {

System.out.println("Hdfs client demo");

// 设置HADOOP_USER_NAME环境变量

System.setProperty("HADOOP_USER_NAME", "hadoop");

Configuration conf = new Configuration();

conf.set("fs.hdfs.impl", "org.apache.hadoop.hdfs.DistributedFileSystem");

String filePath = "hdfs://mini1:9000/text/text.txt";

Path path = new Path(filePath);

FileSystem fs = FileSystem.get(new URI(filePath), conf);

System.out.println("Writing ==================");

byte[] buff = "This is hello world from java api!\n".getBytes();

FSDataOutputStream os = fs.create(path);

os.write(buff, 0, buff.length);

os.close();

System.out.println("Reading ================");

FSDataInputStream is = fs.open(path);

BufferedReader br = new BufferedReader(new InputStreamReader(is));

String content = br.readLine();

System.out.println(content);

br.close();

fs.close();

}

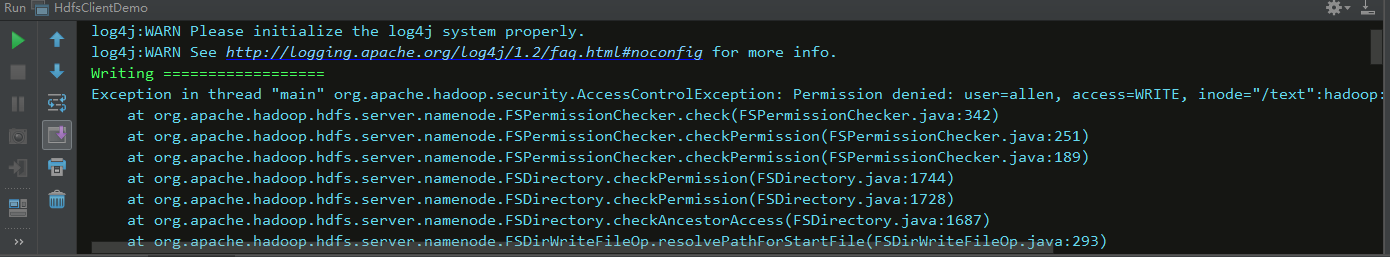

}其中 System.setProperty("HADOOP_USER_NAME", "xxxx"); 这行代码是设置程序运行时hadoop用户的身份,需要xxxx用户具有操作hdfs系统的权限,不然可能会出现Permission denied的错误
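To make it clearer why setting this property changes the HDFS user, here is a minimal, standard-library-only sketch of the lookup order that, as far as I understand it, the Hadoop client applies under simple (non-Kerberos) authentication: the HADOOP_USER_NAME environment variable first, then the Java system property of the same name, then the operating-system user. The class HadoopUserLookup and the method resolveUser are hypothetical names made up for this illustration; they are not part of the Hadoop API, and the real logic lives inside Hadoop's own login code.

```java
import java.util.Map;
import java.util.Properties;

/**
 * Simplified illustration only (hypothetical class, not Hadoop code):
 * approximates how the effective user name is chosen when security is disabled.
 */
final class HadoopUserLookup {

    static final String KEY = "HADOOP_USER_NAME";

    /** Returns the env value if present, else the system property, else the OS user. */
    static String resolveUser(Map<String, String> env, Properties props, String osUser) {
        String user = env.get(KEY);          // 1. environment variable
        if (user == null) {
            user = props.getProperty(KEY);   // 2. Java system property
        }
        if (user == null) {
            user = osUser;                   // 3. fall back to the OS login name
        }
        return user;
    }

    public static void main(String[] args) {
        // Same call as in the demo program above
        System.setProperty(KEY, "hadoop");
        String user = resolveUser(System.getenv(), System.getProperties(),
                System.getProperty("user.name"));
        System.out.println("Effective HDFS user: " + user);
    }
}
```

One consequence of this order, if the assumption holds: when the HADOOP_USER_NAME environment variable is already set in the shell or IDE run configuration, it takes precedence over the value passed to System.setProperty.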

3. Run the program. The output is as follows:

log4j:WARN No appenders could be found for logger (org.apache.hadoop.util.Shell).
log4j:WARN Please initialize the log4j system properly.
log4j:WARN See http://logging.apache.org/log4j/1.2/faq.html#noconfig for more info.
Writing ==================
Reading ================
This is hello world from java api!

Process finished with exit code 0
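A side note on the file path used in the program: the same string, hdfs://mini1:9000/text/text.txt, is passed both to new Path(...) and to FileSystem.get(new URI(...), conf). As far as I know, FileSystem.get only looks at the scheme and authority of that URI to decide which file system and which NameNode to talk to, while the path component identifies the file. The sketch below uses only java.net.URI to show how the string splits into those parts; the class name HdfsUriParts and the method describe are hypothetical and exist only for this illustration.

```java
import java.net.URI;

/** Hypothetical helper: splits an HDFS URI into the parts discussed above. */
final class HdfsUriParts {

    /** Formats the scheme, authority (host:port) and path of the given URI string. */
    static String describe(String uriString) {
        URI uri = URI.create(uriString);
        return String.format("scheme=%s, authority=%s, path=%s",
                uri.getScheme(), uri.getAuthority(), uri.getPath());
    }

    public static void main(String[] args) {
        // prints: scheme=hdfs, authority=mini1:9000, path=/text/text.txt
        System.out.println(describe("hdfs://mini1:9000/text/text.txt"));
    }
}
```

Here mini1 is the host name of the NameNode and 9000 is its RPC port in the author's cluster; both need to be replaced with the values of your own cluster.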
