Kubernetes (k8s) environment preparation

Prepare a Linux machine

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-UzrJw8In-1630113298414)(_md_images/image-20201212165356597.png)]](https://img-blog.csdnimg.cn/af262c97b50f440e9589c33861a26191.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

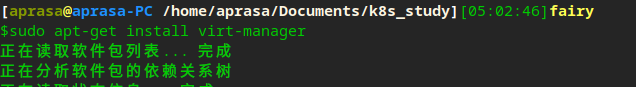

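Install KVM and initialize the k8s cluster nodes

sudo apt-get install virt-manager

Install the OS

Configure the KVM network segment

sudo vi /etc/libvirt/qemu/networks/default.xml

# After editing, restart networking

sudo systemctl restart network-manager

Set up passwordless SSH

# Generate a local key pair

ssh-keygen -t rsa -C "cat@test.com"

# Enable passwordless login

ssh-copy-id root@10.4.7.11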

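Configure basic information on each node

# Set the hostname

hostnamectl set-hostname hdss7-11.host.com

# Configure the network settings

vi /etc/sysconfig/network-scripts/ifcfg-eth0

systemctl restart network

Install base packages on the Kube nodes

# Configure the EPEL repository

yum install epel-release -y

# Install the base packages

yum install wget net-tools telnet tree nmap sysstat lrzsz dos2unix bind-utils -y

Disable SELinux and the firewall

# Put SELinux into permissive mode (setenforce 0 lasts only until the next reboot)

setenforce 0

# Stop the firewall

systemctl stop firewalld

# Keep the firewall from starting at boot

systemctl disable firewalld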
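Because `setenforce 0` does not survive a reboot, the setting can also be written to the SELinux configuration file. This is a minimal sketch, assuming the standard CentOS location `/etc/selinux/config`; `selinux_set_mode` is a hypothetical helper name, not a system command.

```shell
# Hypothetical helper: rewrite the SELINUX= line of a config file.
# $1 is the file (normally /etc/selinux/config), $2 the desired mode.
selinux_set_mode() {
    file=$1
    mode=$2
    tmp=$(mktemp) || return 1
    # Only the SELINUX= line is touched; SELINUXTYPE= stays as it is.
    sed "s/^SELINUX=.*/SELINUX=${mode}/" "$file" > "$tmp" &&
        cat "$tmp" > "$file"
    rc=$?
    rm -f "$tmp"
    return "$rc"
}

# Example (run as root on each node):
# selinux_set_mode /etc/selinux/config disabled
```

The file is rewritten in place with `cat` rather than `mv`, so its ownership and permissions are left unchanged.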

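Configure the DNS server

Install bind

yum install bind -y

Edit the DNS configuration file

vi /etc/named.conf

# Check the configuration for errors

named-checkconf

Configure the DNS zones

vi /etc/named.rfc1912.zones

# Append your own zones at the end of the file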

zone "host.com" IN {

type master;

file "host.com.zone";

allow-update { 10.4.7.11; };

};

zone "od.com" IN {

type master;

file "od.com.zone";

allow-update { 10.4.7.11; };

};

Configure the DNS zone data files

vi /var/named/host.com.zone

$ORIGIN host.com.

$TTL 1D

@ IN SOA dns.host.com. dnsadmin.host.com. (

2020121001 ; serial

1D ; refresh

1H ; retry

1W ; expire

3H ) ; minimum

@ NS dns.host.com.

@ A 127.0.0.1

@ AAAA ::1

dns A 10.4.7.11

HDSS7-11 A 10.4.7.11

HDSS7-12 A 10.4.7.12

HDSS7-21 A 10.4.7.21

HDSS7-22 A 10.4.7.22

HDSS7-200 A 10.4.7.200

vi /var/named/od.com.zone

$ORIGIN od.com.

$TTL 1D

@ IN SOA dns.od.com. dnsadmin.od.com. (

2020121001 ; serial

1D ; refresh

1H ; retry

1W ; expire

3H ) ; minimum

@ NS dns.od.com.

@ A 127.0.0.1

@ AAAA ::1

dns A 10.4.7.11

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-Eag4qlft-1630113298422)(_md_images/image-20201212055147999.png)]](https://img-blog.csdnimg.cn/feadf1fcfa854866bb99b2fd1c27acb5.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Check the configuration and start DNS

named-checkconf

systemctl start named

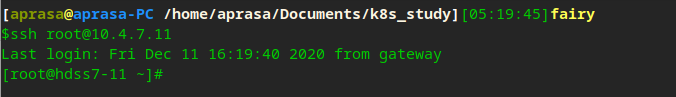

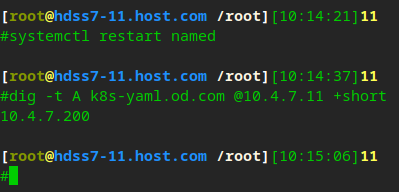

Test that DNS is working

# Check that port 53 is listening

netstat -luntp | grep 53

# Query an A record against the server at 10.4.7.11, with short output

dig -t A hdss7-200.host.com @10.4.7.11 +short
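The single `dig` query can be extended to every record in the zone. This is a minimal sketch, assuming the host records listed in host.com.zone; `resolve` and `check_records` are hypothetical helper names, not part of bind-utils.

```shell
# Resolve one name through the lab DNS server (10.4.7.11, as in this guide).
resolve() {
    dig -t A "$1" @10.4.7.11 +short
}

# Read "name expected-ip" pairs on stdin and compare each one against the
# resolver command named in $1. Returns non-zero if any record mismatches.
check_records() {
    resolver=$1
    rc=0
    while read -r name want; do
        got=$("$resolver" "$name")
        if [ "$got" = "$want" ]; then
            echo "ok   $name -> $got"
        else
            echo "FAIL $name: want $want, got '$got'"
            rc=1
        fi
    done
    return "$rc"
}

# Example, run from any node that can reach 10.4.7.11:
# check_records resolve <<'EOF'
# hdss7-11.host.com 10.4.7.11
# hdss7-200.host.com 10.4.7.200
# EOF
```

Passing the resolver as an argument keeps the comparison logic separate from `dig`, so the same loop can be pointed at another lookup command.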

Set up the certificate authority

Install cfssl

# Download the binaries

wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 -O /usr/bin/cfssl

wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 -O /usr/bin/cfssl-json

wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 -O /usr/bin/cfssl-certinfo

# Make them executable

chmod +x /usr/bin/cfssl*

Create the certificate directory

cd /opt/

mkdir certs

cd certs/

Generate the CA certificate

# Create the ca-csr.json file

vi ca-csr.json

{

"CN": "OldboyEdu",

"hosts":[

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "od",

"OU": "ops"

}

],

"ca": {

"expiry": "438000h"

}

}

# Generate the certificate

cfssl gencert -initca ca-csr.json | cfssl-json -bare ca
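The `expiry` value in ca-csr.json is given in hours, which is hard to read at a glance. A small sketch of the conversion, assuming 365-day years; `expiry_years` is a hypothetical helper name.

```shell
# Convert a cfssl-style expiry such as "438000h" to whole 365-day years.
expiry_years() {
    hours=${1%h}                  # strip the trailing "h"
    echo $(( hours / 24 / 365 ))
}

expiry_years 438000h   # prints 50
```

So the CA in this guide is valid for roughly 50 years, which is convenient for a lab but far longer than a production CA would normally use.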

Prepare the Docker environment

Prepare the environment on these three machines: hdss7-200, hdss7-21, and hdss7-22

Install docker-ce using the online script

curl -fsSL https://get.docker.com | bash -s docker --mirror Aliyun

Configure Docker

# Create the configuration file (-p avoids an error if the directory already exists)

mkdir -p /etc/docker

vi /etc/docker/daemon.json

{

"graph": "/data/docker",

"storage-driver": "overlay2",

"insecure-registries": ["registry.access.redhat.com","quay.io","harbor.od.com"],

"registry-mirrors": ["https://q2gr04ke.mirror.aliyuncs.com"],

"bip": "172.7.21.1/24",

"exec-opts": ["native.cgroupdriver=systemd"],

"live-restore": true

}

# Create the data directory

mkdir -p /data/docker

Start Docker

systemctl start docker

# Show Docker information

docker version

docker info
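The daemon.json shown uses `"bip": "172.7.21.1/24"`, which looks like the value for hdss7-21. The sketch below assumes each Docker host gets its own /24 inside 172.7.0.0/16, keyed by the last octet of the host IP. The guide does not state this convention explicitly, so treat it as an assumption; `bip_for_host` is a hypothetical helper name.

```shell
# Derive a docker0 bridge address from a host IP.
# Assumed convention: the third octet of the bridge network is the
# last octet of the host address.
bip_for_host() {
    last=${1##*.}                 # "10.4.7.21" -> "21"
    echo "172.7.${last}.1/24"
}

bip_for_host 10.4.7.21    # prints 172.7.21.1/24
bip_for_host 10.4.7.22    # prints 172.7.22.1/24
```

Giving every host a distinct bridge subnet keeps container addresses from overlapping between nodes.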

Prepare Harbor, a private Docker registry

# Official repository

https://github.com/goharbor/harbor

Download the Harbor offline installer

cd /opt/

mkdir docker_src

cd docker_src/

wget https://github.com/goharbor/harbor/releases/download/v2.0.5/harbor-offline-installer-v2.0.5.tgz

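Configure Harbor

# Extract into /opt

tar xf harbor-offline-installer-v2.0.5.tgz -C /opt/

# Tag the directory with the version number

cd /opt/

mv harbor harbor-v2.0.5

# Create a symlink to make future upgrades easier

ln -s /opt/harbor-v2.0.5/ /opt/harbor

# Create the configuration file

cd /opt/harbor

cp harbor.yml.tmpl harbor.yml

# Edit the configuration

vi harbor.yml

# Create the data and log paths (-p creates /data/harbor as well)

mkdir -p /data/harbor/logs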

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-HFLv4Aaa-1630113298429)(_md_images/image-20201212160423821.png)]](https://img-blog.csdnimg.cn/2a6a5d1d7b334aaebda91e67a72e0beb.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-jfXbxJ38-1630113298430)(_md_images/image-20201212155225456.png)]](https://img-blog.csdnimg.cn/af2d825d3c02482998124ca1924b1326.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Run Harbor

# Harbor runs inside Docker and relies on docker-compose for single-host orchestration

yum install docker-compose -y

# Run the install script

./install.sh

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-IZK3vgW2-1630113298430)(_md_images/image-20201212155804618.png)]](https://img-blog.csdnimg.cn/03dc2b1cdddc4726836ed61f85dbc983.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

# docker-compose can list the services started by this single-host orchestration

docker-compose ps

Reverse-proxy Harbor with nginx

# Install nginx

yum install nginx -y

# Write the nginx configuration file

vi /etc/nginx/conf.d/harbor.od.com.conf

# client_max_body_size 1000m: Harbor image layers vary in size, and pushes may fail without this setting

server {

listen 80;

server_name harbor.od.com;

client_max_body_size 1000m;

location / {

proxy_pass http://127.0.0.1:180;

}

}

# Check that the configuration is valid

nginx -t

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-4lPt1eh8-1630113298432)(_md_images/image-20201212162250516.png)]](https://img-blog.csdnimg.cn/9a0afae8b898489784b71e800e75a82b.png)

# Enable nginx at boot

systemctl enable nginx

# Start the nginx service

systemctl start nginx

Add the Harbor domain name to the DNS server

# At this point the domain name does not resolve

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-q4apvQtm-1630113298433)(_md_images/image-20201212162527747.png)]](https://img-blog.csdnimg.cn/74fa800166c44a44804d2ac4de891b1e.png)

# Add the domain name on hdss7-11

vi /var/named/od.com.zone

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-UiOIwa0W-1630113298434)(_md_images/image-20201212162842352.png)]](https://img-blog.csdnimg.cn/9c13a550344c4a19b25377d82da54a19.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

# Restart the named service

systemctl restart named
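When a record is added to a zone file, it is conventional to increase the SOA serial as well. The serial in these zone files (2020121001) looks like the common YYYYMMDDNN scheme. The sketch below assumes that scheme; `next_serial` is a hypothetical helper name.

```shell
# Compute the next zone serial in YYYYMMDDNN form.
# $1 is the current serial, $2 optionally overrides today's date (YYYYMMDD).
next_serial() {
    current=$1
    today=${2:-$(date +%Y%m%d)}
    if [ "${current%??}" -ge "$today" ]; then
        # Already edited today (or dated ahead): bump the counter.
        echo $(( current + 1 ))
    else
        # First edit on a new day: start again at 01.
        echo "${today}01"
    fi
}

next_serial 2020121001 20201212   # prints 2020121201
```

The result always stays larger than the previous serial, which is what matters to any server that compares serials to detect zone changes.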

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-Gx7uwK8o-1630113298435)(_md_images/image-20201212163014269.png)]](https://img-blog.csdnimg.cn/d3ab923b76104fea93e7d96e413d448d.png)

After DNS is configured, curl can reach the domain

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-3uRWjjsO-1630113298435)(_md_images/image-20201212163541204.png)]](https://img-blog.csdnimg.cn/0a4087cb4f1048f5823bc54b773f1ff7.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Access Harbor from a browser

http://harbor.od.com/

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-GCHH1l4s-1630113298436)(_md_images/image-20201212164122241.png)]](https://img-blog.csdnimg.cn/681236ebeba744ef915339e1dc6cc2a5.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-qGABLpRo-1630113298437)(_md_images/image-20201212164221415.png)]](https://img-blog.csdnimg.cn/e83e617d1e9d41f09b2bfbce31317318.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Create a new project named public

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-pQjGjVKD-1630113298437)(_md_images/image-20201212172227285.png)]](https://img-blog.csdnimg.cn/5db9cbf4cdb84c63890bd5e10f040a7b.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Test the self-hosted public Harbor project

# Pull an nginx image from the public registry

docker pull nginx:1.7.9

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-eclN6UM1-1630113298438)(_md_images/image-20201212173149642.png)]](https://img-blog.csdnimg.cn/3691f0f249364efb93154d1ac642fc2e.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

# Tag the image

docker tag 84581e99d807 harbor.od.com/public/nginx:v1.7.9

# Push to the local Harbor registry

docker login harbor.od.com

docker push harbor.od.com/public/nginx:v1.7.9

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-lxEzoJ8F-1630113298439)(_md_images/image-20201212173436608.png)]](https://img-blog.csdnimg.cn/b8e1581f990e463d8b28175e756eb5f7.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

The Harbor portal shows that the push succeeded

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-QWFifycj-1630113298440)(_md_images/image-20201212173538849.png)]](https://img-blog.csdnimg.cn/5fe31dbe48bf4adfba8ce3bd50740720.png)

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-61awbYf4-1630113298441)(_md_images/image-20201212173707254.png)]](https://img-blog.csdnimg.cn/af773924cacb42c7b81c9f03e11a8b17.png)

Install the control-plane (master) nodes

Deploy the kube master node services

Deploy the etcd nodes

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-MFCbrZvl-1630113298441)(_md_images/image-20201212173858981.png)]](https://img-blog.csdnimg.cn/5204241dcd054af79e068aa534d9f672.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Cluster plan

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-d028oD5L-1630113298442)(_md_images/image-20201212173937993.png)]](https://img-blog.csdnimg.cn/cdf4782763c040bcae26411c8438ec0e.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Create the etcd server certificate

# Create the CA config on hdss7-200

vi /opt/certs/ca-config.json

{

"signing": {

"default": {

"expiry": "438000h"

},

"profiles": {

"server": {

"expiry": "438000h",

"usages": [

"signing",

"key encipherment",

"server auth"

]

},

"client": {

"expiry": "438000h",

"usages": [

"signing",

"key encipherment",

"client auth"

]

},

"peer": {

"expiry": "438000h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

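# Create the etcd certificate signing request

vi /opt/certs/etcd-peer-csr.json

The hosts list includes the extra address 10.4.7.11, so that if one of the other three nodes goes down, 10.4.7.11 can be used as a spare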

{

"CN": "k8s-etcd",

"hosts": [

"10.4.7.11",

"10.4.7.12",

"10.4.7.21",

"10.4.7.22"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "GuangZhou",

"L": "GuangZhou",

"O": "k8s",

"OU": "yw"

}

]

}

# Issue the certificate

cd /opt/certs/

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=peer etcd-peer-csr.json | cfssl-json -bare etcd-peer

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-sTxFfytJ-1630113298443)(_md_images/image-20201212180007611.png)]](https://img-blog.csdnimg.cn/9cb57e5f70ac43d2a333dc17b1e80ec1.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-kojeeAwa-1630113298444)(_md_images/image-20201212180127711.png)]](https://img-blog.csdnimg.cn/e27dcb69a6be4371b1fd491397099642.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Create the etcd user

useradd -s /sbin/nologin -M etcd

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-EE3I9iO8-1630113298444)(_md_images/image-20201212181017932.png)]](https://img-blog.csdnimg.cn/c5ad777e031d4aac9e0e1f1ab0076fbd.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Download etcd

cd /opt/src/

# Follow the instructions at https://github.com/etcd-io/etcd/releases/tag/v3.1.20: save the download script locally, then run it

sh download.sh

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-4yJ9K5lo-1630113298445)(_md_images/image-20201212193347396.png)]](https://img-blog.csdnimg.cn/125e7459e9e04a18b269a043754e0f27.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Deploy etcd

mv etcd-download-test/ etcd-3.1.20/

ln -s /opt/etcd-3.1.20/ /opt/etcd

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-DtB6EPPp-1630113298446)(_md_images/image-20201212193557962.png)]](https://img-blog.csdnimg.cn/74f3326dab3c4d61a1fdddad1f8d46a5.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

# Create the required directories

mkdir -p /opt/etcd/certs /data/etcd /data/logs/etcd-server

# Copy the certificates to this machine

cd /opt/etcd/certs

scp hdss7-200:/opt/certs/ca.pem .

scp hdss7-200:/opt/certs/etcd-peer.pem .

scp hdss7-200:/opt/certs/etcd-peer-key.pem .

# The private key must have mode 600

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-i3sOmiKz-1630113298446)(_md_images/image-20201212182638908.png)]](https://img-blog.csdnimg.cn/a51a1d4755254c88ba21f5cfa2fc1415.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)
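The private key should end up with mode 600. A minimal sketch of setting and confirming that; `secure_key` is a hypothetical helper name, and the path in the example is the one used in this guide.

```shell
# Restrict a private key to its owner and print the resulting mode string.
secure_key() {
    chmod 600 "$1" && ls -l "$1" | cut -c1-10
}

# Example:
# secure_key /opt/etcd/certs/etcd-peer-key.pem
```

A correctly restricted key is reported as `-rw-------`.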

Create the etcd startup script

# Create the startup script

vi /opt/etcd/etcd-server-startup.sh

#!/bin/sh

/opt/etcd/etcd --name etcd-server-7-12 \

--data-dir /data/etcd/etcd-server \

--listen-peer-urls https://10.4.7.12:2380 \

--listen-client-urls https://10.4.7.12:2379,http://127.0.0.1:2379 \

--quota-backend-bytes 8000000000 \

--initial-advertise-peer-urls https://10.4.7.12:2380 \

--advertise-client-urls https://10.4.7.12:2379,http://127.0.0.1:2379 \

--initial-cluster etcd-server-7-12=https://10.4.7.12:2380,etcd-server-7-21=https://10.4.7.21:2380,etcd-server-7-22=https://10.4.7.22:2380 \

--ca-file /opt/etcd/certs/ca.pem \

--cert-file /opt/etcd/certs/etcd-peer.pem \

--key-file /opt/etcd/certs/etcd-peer-key.pem \

--client-cert-auth \

--trusted-ca-file /opt/etcd/certs/ca.pem \

--peer-ca-file /opt/etcd/certs/ca.pem \

--peer-cert-file /opt/etcd/certs/etcd-peer.pem \

--peer-key-file /opt/etcd/certs/etcd-peer-key.pem \

--peer-client-cert-auth \

--peer-trusted-ca-file /opt/etcd/certs/ca.pem \

--log-output stdout

# Make the script executable

chmod +x /opt/etcd/etcd-server-startup.sh

# Change the owner and group

chown -R etcd:etcd /opt/etcd-3.1.20/

chown -R etcd:etcd /data/etcd/

chown -R etcd:etcd /data/logs/etcd-server/
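The startup script shown is written for 10.4.7.12, so the node-specific flags have to change on the other two members. The sketch below prints those flags for a given IP, following the member names that appear in `--initial-cluster`; `etcd_name_for_ip` and `etcd_node_flags` are hypothetical helper names.

```shell
# Map a node IP to the member name used in --initial-cluster,
# for example 10.4.7.12 -> etcd-server-7-12.
etcd_name_for_ip() {
    ip=$1
    rest=${ip#*.*.}      # drop the first two octets, leaving "7.12"
    echo "etcd-server-${rest%%.*}-${rest##*.}"
}

# Print the flags of etcd-server-startup.sh that differ between nodes.
etcd_node_flags() {
    ip=$1
    echo "--name $(etcd_name_for_ip "$ip")"
    echo "--listen-peer-urls https://${ip}:2380"
    echo "--listen-client-urls https://${ip}:2379,http://127.0.0.1:2379"
    echo "--initial-advertise-peer-urls https://${ip}:2380"
    echo "--advertise-client-urls https://${ip}:2379,http://127.0.0.1:2379"
}

etcd_node_flags 10.4.7.21
```

The `--initial-cluster` value and the certificate paths stay identical on all three nodes. The supervisord program name presumably follows the same per-node suffix.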

Run etcd in the background under supervisord

# Install the process-supervision software

yum install supervisor -y

systemctl start supervisord

systemctl enable supervisord

# Create the supervisord program file

vi /etc/supervisord.d/etcd-server.ini

[program:etcd-server-7-12]

command=/opt/etcd/etcd-server-startup.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/etcd ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart at unexpected quit (default: true)

startsecs=30 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=etcd ; setuid to this UNIX account to run the program

redirect_stderr=true ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/etcd-server/etcd.stdout.log ; stdout log path, NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

killasgroup=true

stopasgroup=true

# Load the new program and check the background tasks

supervisorctl update

supervisorctl status

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-dLjzu0Qt-1630113298447)(_md_images/image-20201212225305393.png)]](https://img-blog.csdnimg.cn/88196a85d8834c74aa3fa422ac93747a.png)

You can follow the log with tail -f /data/logs/etcd-server/etcd.stdout.log; etcd comes up after a while.

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-blDEk8F8-1630113298449)(_md_images/image-20201212225436475.png)]](https://img-blog.csdnimg.cn/277b3e51770c454ea1bf0bc1e8c2f74c.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

After configuring the three machines in turn, check the cluster

./etcdctl cluster-health

./etcdctl member list
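The members take a little while to find each other, so a health check run right after startup may fail at first. This is a generic retry sketch; `retry` is a hypothetical helper, and it assumes the checked command exits non-zero while the cluster is not yet healthy.

```shell
# Run a command until it succeeds.
# $1 = maximum attempts, $2 = seconds to sleep between attempts,
# remaining arguments = the command to run.
retry() {
    attempts=$1
    delay=$2
    shift 2
    i=1
    while :; do
        "$@" && return 0
        [ "$i" -ge "$attempts" ] && return 1
        i=$(( i + 1 ))
        sleep "$delay"
    done
}

# Example:
# retry 10 3 /opt/etcd/etcdctl cluster-health
```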

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-hjcWefYM-1630113298449)(_md_images/image-20201212231629792.png)]](https://img-blog.csdnimg.cn/737f1ec95adf464abf115e0d7b835bd9.png)

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-FHiXZP49-1630113298451)(_md_images/image-20201212232302240.png)]](https://img-blog.csdnimg.cn/b31051446ce84ef8ab229011689ca135.png)

Deploy the apiserver

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-ffbM56x4-1630113298451)(_md_images/image-20201212232510867.png)]](https://img-blog.csdnimg.cn/8328f81bdcb64209b6a1360df1ff8b2d.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Download the apiserver binaries

# Find the download link on the official GitHub: https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG/CHANGELOG-1.15.md#downloads-for-v1154

wget https://dl.k8s.io/v1.15.4/kubernetes-server-linux-amd64.tar.gz

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-lHJMuWvZ-1630113298452)(_md_images/image-20201213105132494.png)]](https://img-blog.csdnimg.cn/5f0195695e7641cd959f860938c5015b.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Install the apiserver files

# Extract and deploy to the target directory

tar xf kubernetes-server-linux-amd64.tar.gz -C /opt/

mv kubernetes/ kubernetes-v1.15.4

ln -s /opt/kubernetes-v1.15.4/ /opt/kubernetes

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-G2n6kTdz-1630113298452)(_md_images/image-20201213105539687.png)]](https://img-blog.csdnimg.cn/10787b99dfeb440b98b6e6ae5a9a819c.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

The .tar and docker_tag files under /opt/kubernetes/server/bin are Docker images. Since this deployment does not use kubeadm, the Docker-related files can be deleted

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-2yrdQdmu-1630113298453)(_md_images/image-20201213105759227.png)]](https://img-blog.csdnimg.cn/bf41786f5b4a4d9a8e88e7a7f6e9cc82.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Issue the client certificate

Issue the certificate on hdss7-200

# Used for communication between the apiserver (client) and etcd (server)

vi /opt/certs/client-csr.json

{

"CN": "k8s-node",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Hangzhou",

"L": "Hangzhou",

"O": "od",

"OU": "ops"

}

]

}

cd /opt/certs

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=client client-csr.json | cfssl-json -bare client

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-uU2guxHA-1630113298453)(_md_images/image-20201213172533719.png)]](https://img-blog.csdnimg.cn/e2bf03500ec24c8e9236adc8590026d0.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Issue the apiserver certificate

vi apiserver-csr.json

{

"CN": "k8s-apiserver",

"hosts": [

"127.0.0.1",

"192.168.0.1",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local",

"10.4.7.10",

"10.4.7.21",

"10.4.7.22",

"10.4.7.23"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "ShangHai",

"L": "ShangHai",

"O": "od",

"OU": "ops"

}

]

}

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=server apiserver-csr.json |cfssl-json -bare apiserver
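The `192.168.0.1` entry in the hosts list is there because Kubernetes gives the built-in `kubernetes` service the first address of the service range, and this guide later starts the apiserver with `--service-cluster-ip-range 192.168.0.0/16`. A small sketch of that derivation, assuming the CIDR is written with its network address and a last octet below 255; `first_service_ip` is a hypothetical helper name.

```shell
# Return the first usable address of a service CIDR such as 192.168.0.0/16.
# Assumes the address part is the network address itself.
first_service_ip() {
    base=${1%/*}          # "192.168.0.0"
    prefix=${base%.*}     # "192.168.0"
    last=${base##*.}      # "0"
    echo "${prefix}.$(( last + 1 ))"
}

first_service_ip 192.168.0.0/16   # prints 192.168.0.1
```

If the service range is changed, the certificate hosts list has to be updated to match before the certificate is issued.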

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-u2utEru1-1630113298455)(_md_images/image-20201213173040034.png)]](https://img-blog.csdnimg.cn/eb35bae8e03c4a5eaad4ddc973161e36.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Copy the certificates to the apiserver

cd /opt/kubernetes/server/bin

mkdir cert

cd cert/

scp hdss7-200:/opt/certs/ca.pem .

scp hdss7-200:/opt/certs/ca-key.pem .

scp hdss7-200:/opt/certs/client.pem .

scp hdss7-200:/opt/certs/client-key.pem .

scp hdss7-200:/opt/certs/apiserver.pem .

scp hdss7-200:/opt/certs/apiserver-key.pem .

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-ck60wKdW-1630113298455)(_md_images/image-20201213173812286.png)]](https://img-blog.csdnimg.cn/583dd5db2d1345ebb4f1180e5f90f738.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Create the apiserver startup configuration

cd /opt/kubernetes/server/bin

mkdir conf

cd conf/

vi audit.yaml

apiVersion: audit.k8s.io/v1beta1 # This is required.

kind: Policy

# Don't generate audit events for all requests in RequestReceived stage.

omitStages:

  - "RequestReceived"

rules:

  # Log pod changes at RequestResponse level

  - level: RequestResponse

    resources:

    - group: ""

      # Resource "pods" doesn't match requests to any subresource of pods,

      # which is consistent with the RBAC policy.

      resources: ["pods"]

  # Log "pods/log", "pods/status" at Metadata level

  - level: Metadata

    resources:

    - group: ""

      resources: ["pods/log", "pods/status"]

  # Don't log requests to a configmap called "controller-leader"

  - level: None

    resources:

    - group: ""

      resources: ["configmaps"]

      resourceNames: ["controller-leader"]

  # Don't log watch requests by the "system:kube-proxy" on endpoints or services

  - level: None

    users: ["system:kube-proxy"]

    verbs: ["watch"]

    resources:

    - group: "" # core API group

      resources: ["endpoints", "services"]

  # Don't log authenticated requests to certain non-resource URL paths.

  - level: None

    userGroups: ["system:authenticated"]

    nonResourceURLs:

    - "/api*" # Wildcard matching.

    - "/version"

  # Log the request body of configmap changes in kube-system.

  - level: Request

    resources:

    - group: "" # core API group

      resources: ["configmaps"]

    # This rule only applies to resources in the "kube-system" namespace.

    # The empty string "" can be used to select non-namespaced resources.

    namespaces: ["kube-system"]

  # Log configmap and secret changes in all other namespaces at the Metadata level.

  - level: Metadata

    resources:

    - group: "" # core API group

      resources: ["secrets", "configmaps"]

  # Log all other resources in core and extensions at the Request level.

  - level: Request

    resources:

    - group: "" # core API group

    - group: "extensions" # Version of group should NOT be included.

  # A catch-all rule to log all other requests at the Metadata level.

  - level: Metadata

    # Long-running requests like watches that fall under this rule will not

    # generate an audit event in RequestReceived.

    omitStages:

      - "RequestReceived"

Create the apiserver startup script

cd /opt/kubernetes/server/bin

vi kube-apiserver.sh

#!/bin/bash

./kube-apiserver \

--apiserver-count 2 \

--audit-log-path /data/logs/kubernetes/kube-apiserver/audit-log \

--audit-policy-file ./conf/audit.yaml \

--authorization-mode RBAC \

--client-ca-file ./cert/ca.pem \

--requestheader-client-ca-file ./cert/ca.pem \

--enable-admission-plugins NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,MutatingAdmissionWebhook,ValidatingAdmissionWebhook,ResourceQuota \

--etcd-cafile ./cert/ca.pem \

--etcd-certfile ./cert/client.pem \

--etcd-keyfile ./cert/client-key.pem \

--etcd-servers https://10.4.7.12:2379,https://10.4.7.21:2379,https://10.4.7.22:2379 \

--service-account-key-file ./cert/ca-key.pem \

--service-cluster-ip-range 192.168.0.0/16 \

--service-node-port-range 3000-29999 \

--target-ram-mb=1024 \

--kubelet-client-certificate ./cert/client.pem \

--kubelet-client-key ./cert/client-key.pem \

--log-dir /data/logs/kubernetes/kube-apiserver \

--tls-cert-file ./cert/apiserver.pem \

--tls-private-key-file ./cert/apiserver-key.pem \

--v 2

Start the apiserver in the background under supervisord

chmod +x kube-apiserver.sh

mkdir -p /data/logs/kubernetes/kube-apiserver

vi /etc/supervisord.d/kube-apiserver.ini

[program:kube-apiserver-7-21]

command=/opt/kubernetes/server/bin/kube-apiserver.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/kubernetes/server/bin ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart at unexpected quit (default: true)

startsecs=30 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=root ; setuid to this UNIX account to run the program

redirect_stderr=true ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/kubernetes/kube-apiserver/apiserver.stdout.log ; stdout log path, NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false

supervisorctl update

# Check the status

supervisorctl status

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-XNCxdSjM-1630113298462)(_md_images/image-20201213181221949.png)]](https://img-blog.csdnimg.cn/af9fd080a72d437c973c31bbc55f64d8.png)

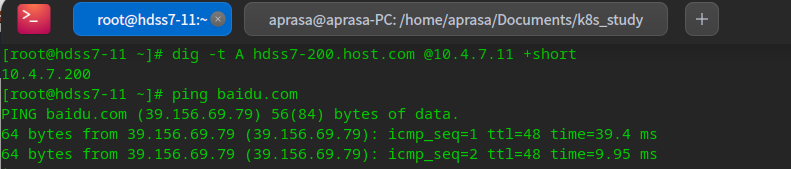

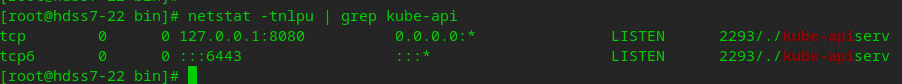

Install a proxy as a layer-4 reverse proxy

# Check which ports the apiserver is listening on

netstat -tnlpu | grep kube-api

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-8oUQzdBX-1630113298463)(_md_images/image-20201213181947745.png)]](https://img-blog.csdnimg.cn/4225c3ea44b24f1c94286c6717c73c52.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

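Install nginx

# Install nginx on 7-11 and 7-12

yum install nginx -y

Configure the layer-4 reverse proxy

# Note: append this at the end of the file, not inside the http block (layer 7); it maps port 6443 to 7443

# stream is the layer-4 configuration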

vi /etc/nginx/nginx.conf

stream {

upstream kube-apiserver {

server 10.4.7.21:6443 max_fails=3 fail_timeout=30s;

server 10.4.7.22:6443 max_fails=3 fail_timeout=30s;

}

server {

listen 7443;

proxy_connect_timeout 2s;

proxy_timeout 900s;

proxy_pass kube-apiserver;

}

}

Start nginx

# Check the nginx configuration

nginx -t

# Start nginx

systemctl start nginx

systemctl enable nginx

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-pNNdYiUb-1630113298464)(_md_images/image-20201213183407105.png)]](https://img-blog.csdnimg.cn/76a9771c34fc4063b0c623ea8477b43f.png)

Install keepalived to host the VIP

Install keepalived

yum install keepalived -y

Configure the keepalived port-check script

vi /etc/keepalived/check_port.sh

#!/bin/bash

CHK_PORT=$1

if [ -n "$CHK_PORT" ];then

PORT_PROCESS=`ss -lnt|grep $CHK_PORT|wc -l`

if [ $PORT_PROCESS -eq 0 ];then

echo "Port $CHK_PORT Is Not Used,End."

exit 1

fi

else

echo "Check Port Cant Be Empty!"

exit 1

fi

chmod +x /etc/keepalived/check_port.sh
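The check script counts lines where `grep` finds the port number anywhere in the `ss -lnt` output, so a listener on 17443 would also satisfy a check for 7443. The sketch below matches the port exactly; it is a variation under that assumption, not the script the guide deploys, and `port_listening` is a hypothetical helper name.

```shell
# Read `ss -lnt` output on stdin and succeed only if some socket
# listens on exactly port $1.
port_listening() {
    awk -v port="$1" '
        NR > 1 {
            # Column 4 is "Local Address:Port"; the port is the last
            # colon-separated field (this also handles "[::]:7443").
            n = split($4, parts, ":")
            if (parts[n] == port) found = 1
        }
        END { exit (found ? 0 : 1) }
    '
}

# Example:
# ss -lnt | port_listening 7443 || echo "port 7443 is not listening"
```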

keepalived master

# Back up the default configuration

mv /etc/keepalived/keepalived.conf /etc/keepalived/keepalived.conf.template

vi /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id 10.4.7.11

}

vrrp_script chk_nginx {

script "/etc/keepalived/check_port.sh 7443"

interval 2

weight -20

}

vrrp_instance VI_1 {

state MASTER

interface eth0

virtual_router_id 251

priority 100

advert_int 1

mcast_src_ip 10.4.7.11

nopreempt

authentication {

auth_type PASS

auth_pass 11111111

}

track_script {

chk_nginx

}

virtual_ipaddress {

10.4.7.10

}

}

keepalived backup

vi /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id 10.4.7.12

}

vrrp_script chk_nginx {

script "/etc/keepalived/check_port.sh 7443"

interval 2

weight -20

}

vrrp_instance VI_1 {

state BACKUP

interface eth0

virtual_router_id 251

priority 90

advert_int 1

mcast_src_ip 10.4.7.12

authentication {

auth_type PASS

auth_pass 11111111

}

track_script {

chk_nginx

}

virtual_ipaddress {

10.4.7.10

}

}

Start keepalived

systemctl start keepalived

systemctl enable keepalived

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-4MIHpBGI-1630113298465)(_md_images/image-20201213185659374.png)]](https://img-blog.csdnimg.cn/32b4fb33954d4d68a8dbff092b03b51e.png)

The VIP is now on the master node

The backup node does not have it

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-6qMzFLfe-1630113298466)(_md_images/image-20201213190031121.png)]](https://img-blog.csdnimg.cn/cc466ec6b3a049a2aa1d25781024d4d7.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Test VIP failover

# Stop nginx on the master node; the VIP moves to the backup node

nginx -s stop

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-wXSVfLKs-1630113298467)(_md_images/image-20201213191055362.png)]](https://img-blog.csdnimg.cn/6d5d0218054a4ee397100cc80729c055.png)

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-8tH5M9Mt-1630113298467)(_md_images/image-20201213191115306.png)]](https://img-blog.csdnimg.cn/81f9dd34f6c6495494da270aa2f60b7d.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

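# Note: do not set nopreempt on the backup node; this option controls preemption

# The reason is that after the master node fails, the VIP moves to the backup node

# Once the master node is repaired, the VIP does not move back automatically. [In production] trigger the move from the backup node back to the master only after confirming that everything is in order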

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-4br7D45S-1630113298468)(_md_images/image-20201213192015677.png)]](https://img-blog.csdnimg.cn/3776a7052ecf4bf198294ab992f3eb03.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

# After the repair, the VIP moves back only once the keepalived service has been restarted on both the master and backup nodes

systemctl restart keepalived

![[外链图片转存失败,源站可能有防盗链机制,建议将图片保存下来直接上传(img-vEOg5upX-1630113298469)(_md_images/image-20201213192235134.png)]](https://img-blog.csdnimg.cn/5d7bce1862ab4e7193645f4f7483ec4e.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Deploy kube-controller-manager

On servers 10.4.7.21 and 10.4.7.22

Create the kube-controller-manager startup script

vi /opt/kubernetes/server/bin/kube-controller-manager.sh

#!/bin/sh

./kube-controller-manager \

--cluster-cidr 172.7.0.0/16 \

--leader-elect true \

--log-dir /data/logs/kubernetes/kube-controller-manager \

--master http://127.0.0.1:8080 \

--service-account-private-key-file ./cert/ca-key.pem \

--service-cluster-ip-range 192.168.0.0/16 \

--root-ca-file ./cert/ca.pem \

--v 2

chmod +x /opt/kubernetes/server/bin/kube-controller-manager.sh

mkdir -p /data/logs/kubernetes/kube-controller-manager

Start kube-controller-manager in the background under supervisord

vi /etc/supervisord.d/kube-controller-manager.ini

[program:kube-controller-manager-7-21]

command=/opt/kubernetes/server/bin/kube-controller-manager.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/kubernetes/server/bin ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart at unexpected quit (default: true)

startsecs=30 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=root ; setuid to this UNIX account to run the program

redirect_stderr=true ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/kubernetes/kube-controller-manager/controller.stdout.log ; stdout log path, NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

# Apply the new configuration and check the background processes

supervisorctl update

supervisorctl status

![image-20201213193711911.png](https://img-blog.csdnimg.cn/1648ff4172604a5eb886a92fd9f1e53d.png)
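
The supervisord program name above ends in `7-21`, which appears to follow the last two octets of the node IP, so the name needs adjusting on 10.4.7.22. The sketch below shows one way to derive that suffix; `node_suffix` is a hypothetical helper, not part of supervisord or Kubernetes, and the naming convention itself is an assumption read off this walkthrough.

```shell
#!/bin/sh
# Hypothetical helper: derive the program-name suffix (e.g. "7-21")
# from a node IP by joining its last two octets with a dash.
node_suffix() {
    echo "$1" | awk -F. '{ print $3 "-" $4 }'
}

# Print the [program:...] header each master node would use.
for ip in 10.4.7.21 10.4.7.22; do
    echo "[program:kube-controller-manager-$(node_suffix "$ip")]"
done
```

The same suffix can be reused for the kube-scheduler, kubelet, kube-proxy and flanneld program names on each node.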

Deploy kube-scheduler

Create the kube-scheduler startup script

vi /opt/kubernetes/server/bin/kube-scheduler.sh

#!/bin/sh

./kube-scheduler \

--leader-elect \

--log-dir /data/logs/kubernetes/kube-scheduler \

--master http://127.0.0.1:8080 \

--v 2

chmod +x /opt/kubernetes/server/bin/kube-scheduler.sh

mkdir -p /data/logs/kubernetes/kube-scheduler

Run kube-scheduler in the background via supervisord

vi /etc/supervisord.d/kube-scheduler.ini

[program:kube-scheduler-7-21]

command=/opt/kubernetes/server/bin/kube-scheduler.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/kubernetes/server/bin ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart at unexpected quit (default: true)

startsecs=30 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=root ; setuid to this UNIX account to run the program

redirect_stderr=true ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/kubernetes/kube-scheduler/scheduler.stdout.log ; stdout log path, NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

# Apply the new configuration and check the background processes

supervisorctl update

supervisorctl status

![image-20201213194032145.png](https://img-blog.csdnimg.cn/173af841793247d8961dcf652712abca.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Check health status

Create a symlink for kubectl

ln -s /opt/kubernetes/server/bin/kubectl /usr/bin/kubectl

Check the cluster health status

# cs is the short name for componentstatuses

kubectl get cs

![image-20201213194445144.png](https://img-blog.csdnimg.cn/63af05d5d7c54ff88c320dccea3e5fc2.png)

Install the compute nodes (Worker)

Deploy kubelet

Issue the certificate

# First, request the certificate on hdss7-200

vi /opt/certs/kubelet-csr.json

{

"CN": "k8s-kubelet",

"hosts": [

"127.0.0.1",

"10.4.7.10",

"10.4.7.21",

"10.4.7.22",

"10.4.7.23",

"10.4.7.24",

"10.4.7.25",

"10.4.7.26",

"10.4.7.27",

"10.4.7.28"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "beijing",

"L": "beijing",

"O": "od",

"OU": "ops"

}

]

}

# Request the server certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=server kubelet-csr.json | cfssl-json -bare kubelet

![image-20201214205408906.png](https://img-blog.csdnimg.cn/4ff3c83162394f1193acf9f34c64c055.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

# Distribute the certificates to nodes 21 and 22

cd /opt/kubernetes/server/bin/cert/

scp hdss7-200:/opt/certs/kubelet.pem .

scp hdss7-200:/opt/certs/kubelet-key.pem .

Create the configuration

cd /opt/kubernetes/server/bin/conf

# Run the following commands from the conf directory, on both 21 and 22

set-cluster

kubectl config set-cluster myk8s \

--certificate-authority=/opt/kubernetes/server/bin/cert/ca.pem \

--embed-certs=true \

--server=https://10.4.7.10:7443 \

--kubeconfig=kubelet.kubeconfig

set-credentials

kubectl config set-credentials k8s-node \

--client-certificate=/opt/kubernetes/server/bin/cert/client.pem \

--client-key=/opt/kubernetes/server/bin/cert/client-key.pem \

--embed-certs=true \

--kubeconfig=kubelet.kubeconfig

set-context

kubectl config set-context myk8s-context \

--cluster=myk8s \

--user=k8s-node \

--kubeconfig=kubelet.kubeconfig

use-context

kubectl config use-context myk8s-context --kubeconfig=kubelet.kubeconfig

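Create the k8s-node cluster resource

# Run on hdss7-21 only. The resource is stored in etcd, so it is visible cluster-wide no matter which node creates it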

vi /opt/kubernetes/server/bin/conf/k8s-node.yaml

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: k8s-node
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:node
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: User
  name: k8s-node

kubectl create -f /opt/kubernetes/server/bin/conf/k8s-node.yaml

![image-20201214212326868.png](https://img-blog.csdnimg.cn/df9ba18ef8c5466f8eeb3c66d580a85a.png)

Check that the k8s-node resource was created

kubectl get clusterrolebinding k8s-node -o yaml

![image-20201214212437224.png](https://img-blog.csdnimg.cn/403551d3c5584fb195423efd41b741f0.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Create the pause base image

# On the ops host (200)

# Pull the pause image from the public registry

docker pull kubernetes/pause

# Log in to the private registry with docker login

docker login harbor.od.com

# Tag the image and push it to the private registry

docker tag f9d5de079539 harbor.od.com/public/pause:latest

docker push harbor.od.com/public/pause:latest

![image-20201214222238828.png](https://img-blog.csdnimg.cn/98a1218d8cc84ef685a3b8d7e21050e7.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

![image-20201214223105925.png](https://img-blog.csdnimg.cn/c2793dcace2744b08932cda95ab406fa.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Create the kubelet startup script

# Create the startup script on nodes 21 and 22

vi /opt/kubernetes/server/bin/kubelet.sh

#!/bin/sh

./kubelet \

--anonymous-auth=false \

--cgroup-driver systemd \

--cluster-dns 192.168.0.2 \

--cluster-domain cluster.local \

--runtime-cgroups=/systemd/system.slice \

--kubelet-cgroups=/systemd/system.slice \

--fail-swap-on="false" \

--client-ca-file ./cert/ca.pem \

--tls-cert-file ./cert/kubelet.pem \

--tls-private-key-file ./cert/kubelet-key.pem \

--hostname-override hdss7-21.host.com \

--image-gc-high-threshold 20 \

--image-gc-low-threshold 10 \

--kubeconfig ./conf/kubelet.kubeconfig \

--log-dir /data/logs/kubernetes/kube-kubelet \

--pod-infra-container-image harbor.od.com/public/pause:latest \

--root-dir /data/kubelet

chmod +x /opt/kubernetes/server/bin/kubelet.sh

mkdir -p /data/logs/kubernetes/kube-kubelet

mkdir -p /data/kubelet
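
The script above hard-codes `--hostname-override hdss7-21.host.com`, so the copy on 10.4.7.22 has to carry that node's own name. A minimal sketch of that per-node substitution follows. It assumes host names track the last two IP octets as they do in this walkthrough; `node_hostname` and `set_hostname_override` are hypothetical helpers.

```shell
#!/bin/sh
# Hypothetical helper: map a node IP such as 10.4.7.22 to the host name
# pattern used in this walkthrough (hdss7-22.host.com).
node_hostname() {
    echo "$1" | awk -F. '{ print "hdss" $3 "-" $4 ".host.com" }'
}

# Rewrite the --hostname-override value in script text read from stdin.
set_hostname_override() {
    sed "s/--hostname-override [^ ]*/--hostname-override $(node_hostname "$1")/"
}

# Demo on a single line taken from kubelet.sh.
printf '%s\n' '--hostname-override hdss7-21.host.com \' | set_hostname_override 10.4.7.22
```

On a real node the function could be fed the whole script, for example `set_hostname_override 10.4.7.22 < kubelet.sh > kubelet.sh.new`, and the result reviewed before replacing the original.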

Run kubelet in the background via supervisord

vi /etc/supervisord.d/kube-kubelet.ini

[program:kube-kubelet-7-21]

command=/opt/kubernetes/server/bin/kubelet.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/kubernetes/server/bin ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart at unexpected quit (default: true)

startsecs=30 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=root ; setuid to this UNIX account to run the program

redirect_stderr=true ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/kubernetes/kube-kubelet/kubelet.stdout.log ; stdout log path, NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

supervisorctl update

supervisorctl status

![image-20201214224522762.png](https://img-blog.csdnimg.cn/2298b2c7b4864021b453e1260c56499c.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Label the node

![image-20201214232658062.png](https://img-blog.csdnimg.cn/075d6b8acb994cd1a6a63b19dc3aba65.png)

kubectl label node hdss7-21.host.com node-role.kubernetes.io/master=

![image-20201214232958365.png](https://img-blog.csdnimg.cn/8bcea0517d4644c7b8438a80392e8bb6.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Deploy kube-proxy

Main role: connects the Pod network with the cluster (Service) network

Issue the certificate

# Request the certificate on hdss7-200

vi /opt/certs/kube-proxy-csr.json

{

"CN": "system:kube-proxy",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "beijing",

"L": "beijing",

"O": "od",

"OU": "ops"

}

]

}

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=client kube-proxy-csr.json |cfssl-json -bare kube-proxy-client

![image-20201214234851106.png](https://img-blog.csdnimg.cn/b71b8617dbf245ac9f85a734c464a5f7.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

# Copy the certificates to nodes 21 and 22

cd /opt/kubernetes/server/bin/cert/

scp hdss7-200:/opt/certs/kube-proxy-client-key.pem .

scp hdss7-200:/opt/certs/kube-proxy-client.pem .

Create the configuration

# On nodes 21 and 22

cd /opt/kubernetes/server/bin/conf

set-cluster

kubectl config set-cluster myk8s \

--certificate-authority=/opt/kubernetes/server/bin/cert/ca.pem \

--embed-certs=true \

--server=https://10.4.7.10:7443 \

--kubeconfig=kube-proxy.kubeconfig

set-credentials

kubectl config set-credentials kube-proxy \

--client-certificate=/opt/kubernetes/server/bin/cert/kube-proxy-client.pem \

--client-key=/opt/kubernetes/server/bin/cert/kube-proxy-client-key.pem \

--embed-certs=true \

--kubeconfig=kube-proxy.kubeconfig

set-context

kubectl config set-context myk8s-context \

--cluster=myk8s \

--user=kube-proxy \

--kubeconfig=kube-proxy.kubeconfig

use-context

kubectl config use-context myk8s-context --kubeconfig=kube-proxy.kubeconfig

Load the ipvs kernel modules

# On nodes 21 and 22

vi /root/ipvs.sh

#!/bin/bash
# Load every IPVS kernel module shipped with the running kernel
ipvs_mods_dir="/usr/lib/modules/$(uname -r)/kernel/net/netfilter/ipvs"
for i in $(ls "$ipvs_mods_dir" | grep -o "^[^.]*")
do
  # Only load modules that modinfo can resolve
  if /sbin/modinfo -F filename "$i" &>/dev/null; then
    /sbin/modprobe "$i"
  fi
done

chmod u+x /root/ipvs.sh

sh /root/ipvs.sh

![image-20201215000623187.png](https://img-blog.csdnimg.cn/8db8ddd6d4c5414ab73c4036927f2c7f.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)
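
After running the script it can be useful to confirm that the modules really are loaded. The sketch below counts the `ip_vs*` entries in `lsmod`-style text. `count_ipvs_mods` is a hypothetical helper, and the sample lines are made up for illustration rather than captured from a real host.

```shell
#!/bin/sh
# Count loaded kernel modules whose name starts with "ip_vs".
# Reads lsmod-style text on stdin, so it can be tried without root.
count_ipvs_mods() {
    grep -c '^ip_vs'
}

# Demo with illustrative sample text (not real lsmod output).
printf '%s\n' \
    'ip_vs_nq 12516 0' \
    'ip_vs 145497 2 ip_vs_nq' \
    'nf_conntrack 133095 1 ip_vs' | count_ipvs_mods
```

On a node, `lsmod | count_ipvs_mods` gives the real count. A result of 0 would suggest the modules were not loaded.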

Create the kube-proxy startup script

# On nodes 21 and 22

vi /opt/kubernetes/server/bin/kube-proxy.sh

#!/bin/sh

./kube-proxy \

--cluster-cidr 172.7.0.0/16 \

--hostname-override hdss7-21.host.com \

--proxy-mode=ipvs \

--ipvs-scheduler=nq \

--kubeconfig ./conf/kube-proxy.kubeconfig

chmod +x /opt/kubernetes/server/bin/kube-proxy.sh

mkdir -p /data/logs/kubernetes/kube-proxy

Run kube-proxy in the background via supervisord

vi /etc/supervisord.d/kube-proxy.ini

[program:kube-proxy-7-21]

command=/opt/kubernetes/server/bin/kube-proxy.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/kubernetes/server/bin ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart at unexpected quit (default: true)

startsecs=30 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=root ; setuid to this UNIX account to run the program

redirect_stderr=true ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/kubernetes/kube-proxy/proxy.stdout.log ; stdout log path, NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

supervisorctl update

supervisorctl status

![image-20201215002011365.png](https://img-blog.csdnimg.cn/4ea5e6396d6e4494a2229660d3f43e07.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Verify the ipvs scheduling algorithm in use after startup

Check the log: it shows that ipvs scheduling is already in use

![image-20201215002514936.png](https://img-blog.csdnimg.cn/019795d20ede488da30a9b1c8cb253f3.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

# Install ipvsadm to inspect the rules

yum install ipvsadm -y

ipvsadm -Ln

![image-20201215002934157.png](https://img-blog.csdnimg.cn/72973f0efcc844cdbd3d686689d68814.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Verify the Kubernetes cluster

On any compute node, create a resource manifest

# Running this on node 21 is enough

vi /root/nginx-ds.yaml

apiVersion: extensions/v1beta1
kind: DaemonSet
metadata:
  name: nginx-ds
spec:
  template:
    metadata:
      labels:
        app: nginx-ds
    spec:
      containers:
      - name: my-nginx
        image: harbor.od.com/public/nginx:v1.7.9
        ports:
        - containerPort: 80

kubectl create -f nginx-ds.yaml

kubectl get pods

kubectl get cs

kubectl get nodes

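At this point pods cannot yet communicate across hosts

Flannel needs to be installed to enable cross-host communication

Deploy the CNI network plugin Flannel

Deploy Flannel

Download Flannel and deploy it to its directory

# Flannel downloads: https://github.com/coreos/flannel/releases/

# Run on machines 21 and 22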

cd /opt/src/

wget https://github.com/coreos/flannel/releases/download/v0.11.0/flannel-v0.11.0-linux-amd64.tar.gz

# Create the directory and extract the archive into it

mkdir /opt/flannel-v0.11.0

tar xf flannel-v0.11.0-linux-amd64.tar.gz -C /opt/flannel-v0.11.0/

# Create a symlink as the deployment directory

ln -s /opt/flannel-v0.11.0/ /opt/flannel

![image-20201220164022405.png](https://img-blog.csdnimg.cn/c8d00c30ed824e8c84846c2afa296340.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Copy the certificates

mkdir /opt/flannel/cert

cd /opt/flannel/cert

# flannel talks to etcd to store and read its configuration, so it acts as an etcd client and needs the client certificate

scp hdss7-200:/opt/certs/ca.pem .

scp hdss7-200:/opt/certs/client.pem .

scp hdss7-200:/opt/certs/client-key.pem .

![image-20201220164254904.png](https://img-blog.csdnimg.cn/3fc2744a90384126b10746fe546ed431.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Create the configuration

cd /opt/flannel

vi subnet.env

FLANNEL_NETWORK=172.7.0.0/16

FLANNEL_SUBNET=172.7.22.1/24

FLANNEL_MTU=1500

FLANNEL_IPMASQ=false

![image-20201220164412976.png](https://img-blog.csdnimg.cn/c9c42985c5164654a731ba0b6f5185c8.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)
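
The `subnet.env` shown above is the one for node 10.4.7.22, so `FLANNEL_SUBNET` differs per node. The sketch below renders the file for a given node IP. It assumes the third octet of the pod subnet mirrors the last octet of the node IP, as the addresses in this walkthrough suggest. `flannel_subnet` and `render_subnet_env` are hypothetical helpers.

```shell
#!/bin/sh
# Hypothetical helper: derive the per-node flannel subnet from the node IP,
# assuming 10.4.7.N maps to 172.7.N.1/24 as in this walkthrough.
flannel_subnet() {
    echo "$1" | awk -F. '{ print "172.7." $4 ".1/24" }'
}

# Print a subnet.env for the given node IP.
render_subnet_env() {
    printf 'FLANNEL_NETWORK=172.7.0.0/16\n'
    printf 'FLANNEL_SUBNET=%s\n' "$(flannel_subnet "$1")"
    printf 'FLANNEL_MTU=1500\n'
    printf 'FLANNEL_IPMASQ=false\n'
}

render_subnet_env 10.4.7.21
```

Redirecting the output, for example `render_subnet_env 10.4.7.21 > /opt/flannel/subnet.env`, would produce the file for node 21.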

Create the startup script

cd /opt/flannel

vi flanneld.sh

#!/bin/sh

./flanneld \

--public-ip=10.4.7.22 \

--etcd-endpoints=https://10.4.7.12:2379,https://10.4.7.21:2379,https://10.4.7.22:2379 \

--etcd-keyfile=./cert/client-key.pem \

--etcd-certfile=./cert/client.pem \

--etcd-cafile=./cert/ca.pem \

--iface=eth0 \

--subnet-file=./subnet.env \

--healthz-port=2401

chmod u+x flanneld.sh

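Configure etcd: add the host-gw backend

# flannel relies on etcd to store its state, so the flannel network configuration must be set in etcd

# This only needs to be done once. After it is written to etcd there is no need to repeat it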

cd /opt/etcd

./etcdctl set /coreos.com/network/config '{"Network": "172.7.0.0/16", "Backend": {"Type": "host-gw"}}'

![image-20201220164757132.png](https://img-blog.csdnimg.cn/7199218558964efc8323e3f12f7a4177.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Create the supervisor configuration

vi /etc/supervisord.d/flannel.ini

[program:flanneld-7-22]

command=/opt/flannel/flanneld.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/flannel ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart at unexpected quit (default: true)

startsecs=30 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=root ; setuid to this UNIX account to run the program

redirect_stderr=true ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/flanneld/flanneld.stdout.log ; stdout log path, NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

mkdir -p /data/logs/flanneld/

supervisorctl update

![image-20201220165053628.png](https://img-blog.csdnimg.cn/04a9d30eddaa48fe998346a194fbcfd6.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

SNAT rules must be configured for containers inside k8s

# So that pods see each other's real pod IP instead of the IP of the node (physical machine)

# Pods need SNAT when reaching external networks, because the outside cannot route pod IPs. Pod-to-pod traffic, however, should show the pod's own IP

Before the configuration

The host IP is used by default

# When the upper pod accesses the lower pod,

# the source shown is the IP of the upper pod's host

![image-20201221220437355.png](https://img-blog.csdnimg.cn/fc398f5d0abb4628b946f6364b656f62.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Inspect the SNAT rules in iptables

# The SNAT rule needs to be adjusted here. Otherwise other nodes record the node IP 10.4.7.22 as the source rather than the in-cluster pod address 172.7.22.x

# Show the POSTROUTING rules of the nat table

iptables-save | grep -i postrouting

![image-20201221221109153.png](https://img-blog.csdnimg.cn/5f510438927947c5acf5f3be700b8829.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Adjust the iptables rules

# Install iptables-services, which CentOS 7 does not ship by default

yum install iptables-services -y

# Start iptables

systemctl start iptables

systemctl enable iptables

![image-20201221221655539.png](https://img-blog.csdnimg.cn/8bc286c8e64b45d297d884a98fd556c1.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

# Show the current rules

iptables-save |grep -i postrouting

# Delete the original rule

iptables -t nat -D POSTROUTING -s 172.7.21.0/24 ! -o docker0 -j MASQUERADE

# Insert the new rule: apply SNAT only when the destination is outside 172.7.0.0/16

iptables -t nat -I POSTROUTING -s 172.7.21.0/24 ! -d 172.7.0.0/16 ! -o docker0 -j MASQUERADE

![image-20201221222020928.png](https://img-blog.csdnimg.cn/5408a9cd38c744b4aaed47c43cc2cfc9.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)
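
The two rules above are written for node 21 (`172.7.21.0/24`), so node 22 needs the same pair with its own pod subnet. The sketch below only prints the commands for a given node IP so they can be reviewed before running. `snat_rules` is a hypothetical helper, and the 10.4.7.N to 172.7.N.0/24 mapping is an assumption taken from the addresses used in this walkthrough.

```shell
#!/bin/sh
# Hypothetical dry-run helper: print the delete/insert rule pair for a node.
# Nothing is executed; the output is plain text for review.
snat_rules() {
    subnet="172.7.$(echo "$1" | awk -F. '{ print $4 }').0/24"
    echo "iptables -t nat -D POSTROUTING -s $subnet ! -o docker0 -j MASQUERADE"
    echo "iptables -t nat -I POSTROUTING -s $subnet ! -d 172.7.0.0/16 ! -o docker0 -j MASQUERADE"
}

snat_rules 10.4.7.22
```

Printing rather than executing keeps the example safe to try on any machine. The printed lines would still need to be run as root on the matching node.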

# Save the rules

iptables-save > /etc/sysconfig/iptables

# Note: this must be done on every host, that is, on both machines 21 and 22

# After configuring iptables, the network is no longer reachable

![image-20201221223323752.png](https://img-blog.csdnimg.cn/8048298f3b0e448cbd82a45290cc3a30.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

# This is because the rule set that comes with the iptables installation is fairly strict

# Removing the rules that block this traffic restores connectivity

# Finally, save the rules again

iptables-save > /etc/sysconfig/iptables

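# The setup is correct once the pod IP is shown as the source

Install and deploy CoreDNS

Kubernetes service discovery: the process by which different services locate one another

From here on, services are delivered to k8s by deploying containers

Deploy the HTTP service for k8s manifests

Configure nginx

# First, create an nginx virtual host on the ops host hdss7-200 to serve the resource manifests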

vi /etc/nginx/conf.d/k8s-yaml.od.com.conf

server {

listen 80;

server_name k8s-yaml.od.com;

location / {

autoindex on;

default_type text/plain;

root /data/k8s-yaml;

}

}

mkdir -p /data/k8s-yaml/coredns

nginx -t

nginx -s reload

Add the DNS record

# On the self-hosted DNS server hdss7-11, add the DNS record

# Append one record at the end of the file

vi /var/named/od.com.zone

# Restart the service

systemctl restart named

# Resolution should now work

dig -t A k8s-yaml.od.com @10.4.7.11 +short

# The site is now reachable in a browser

Prepare the CoreDNS image

# Upstream source: https://github.com/kubernetes/kubernetes/tree/master/cluster/addons/dns/coredns

# Deploy CoreDNS from the ops host hdss7-200

cd /data/k8s-yaml/coredns

# The software is delivered into k8s as a container

# Pull the official image

docker pull docker.io/coredns/coredns:1.6.1

# Then push it to the private registry

docker tag c0f6e815079e harbor.od.com/public/coredns:v1.6.1

docker push harbor.od.com/public/coredns:v1.6.1

Prepare the resource manifests

# Official manifest

# https://github.com/kubernetes/kubernetes/blob/master/cluster/addons/dns/coredns/coredns.yaml.base

cd /data/k8s-yaml/coredns

rbac.yaml: grants the required cluster permissions

vi rbac.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: coredns
  namespace: kube-system
  labels:
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
    addonmanager.kubernetes.io/mode: Reconcile
  name: system:coredns
rules:
- apiGroups:
  - ""
  resources:
  - endpoints
  - services
  - pods
  - namespaces
  verbs:
  - list
  - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
    addonmanager.kubernetes.io/mode: EnsureExists
  name: system:coredns
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:coredns
subjects:
- kind: ServiceAccount
  name: coredns
  namespace: kube-system

cm.yaml: ConfigMap holding the CoreDNS configuration for the cluster

vi cm.yaml

apiVersion: v1
kind: ConfigMap
metadata:
  name: coredns
  namespace: kube-system
data:
  Corefile: |
    .:53 {
        errors
        log
        health
        ready
        kubernetes cluster.local 192.168.0.0/16  # cluster IP range of Service resources
        forward . 10.4.7.11  # upstream DNS server
        cache 30
        loop
        reload
        loadbalance
    }

dp.yaml: the pod controller (Deployment)

vi dp.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: coredns
  namespace: kube-system
  labels:
    k8s-app: coredns
    kubernetes.io/name: "CoreDNS"
spec:
  replicas: 1
  selector:
    matchLabels:
      k8s-app: coredns
  template:
    metadata:
      labels:
        k8s-app: coredns
    spec:
      priorityClassName: system-cluster-critical
      serviceAccountName: coredns
      containers:
      - name: coredns
        image: harbor.od.com/public/coredns:v1.6.1
        args:
        - -conf
        - /etc/coredns/Corefile
        volumeMounts:
        - name: config-volume
          mountPath: /etc/coredns
        ports:
        - containerPort: 53
          name: dns
          protocol: UDP
        - containerPort: 53
          name: dns-tcp
          protocol: TCP
        - containerPort: 9153
          name: metrics
          protocol: TCP
        livenessProbe:
          httpGet:
            path: /health
            port: 8080
            scheme: HTTP
          initialDelaySeconds: 60
          timeoutSeconds: 5
          successThreshold: 1
          failureThreshold: 5
      dnsPolicy: Default
      volumes:
      - name: config-volume
        configMap:
          name: coredns
          items:
          - key: Corefile
            path: Corefile

svc.yaml: the Service resource

vi svc.yaml

apiVersion: v1
kind: Service
metadata:
  name: coredns
  namespace: kube-system
  labels:
    k8s-app: coredns
    kubernetes.io/cluster-service: "true"
    kubernetes.io/name: "CoreDNS"
spec:
  selector:
    k8s-app: coredns
  clusterIP: 192.168.0.2
  ports:
  - name: dns
    port: 53
    protocol: UDP
  - name: dns-tcp
    port: 53
  - name: metrics
    port: 9153
    protocol: TCP

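The manifests are now available over HTTP

Create the resources to complete the deployment

# Create the resources from the YAML manifests served over HTTP. Run on any node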

kubectl create -f http://k8s-yaml.od.com/coredns/rbac.yaml

kubectl create -f http://k8s-yaml.od.com/coredns/cm.yaml

kubectl create -f http://k8s-yaml.od.com/coredns/dp.yaml

kubectl create -f http://k8s-yaml.od.com/coredns/svc.yaml

# Check that everything is running

kubectl get all -n kube-system

# Inside a pod, DNS settings such as the search domains are now in place
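
With the cluster domain set to `cluster.local`, a Service is normally resolvable as `<service>.<namespace>.svc.cluster.local`. The small sketch below builds such a name so it can be passed to a lookup tool. `svc_fqdn` is a hypothetical helper, and the default domain is taken from the `--cluster-domain` value used earlier.

```shell
#!/bin/sh
# Hypothetical helper: build the in-cluster DNS name of a Service.
# Usage: svc_fqdn <service> <namespace> [cluster-domain]
svc_fqdn() {
    echo "$1.$2.svc.${3:-cluster.local}"
}

svc_fqdn coredns kube-system
```

On a node the name could then be queried against the CoreDNS cluster IP, for example `dig -t A "$(svc_fqdn coredns kube-system)" @192.168.0.2 +short`.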

Expose services through ingress with Traefik

Deployment using Traefik as the example

Pull Traefik and push it to the private registry

# Run on hdss7-200:

# Git repository: https://github.com/traefik/traefik

docker pull traefik:v1.7.2-alpine

docker tag add5fac61ae5 harbor.od.com/public/traefik:v1.7.2

docker push harbor.od.com/public/traefik:v1.7.2

Create the resource manifests

Create rbac

mkdir -p /data/k8s-yaml/traefik

cd /data/k8s-yaml/traefik/

vi rbac.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: traefik-ingress-controller
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1beta1
kind: ClusterRole
metadata:
  name: traefik-ingress-controller
rules:
- apiGroups:
  - ""
  resources:
  - services
  - endpoints
  - secrets
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - extensions
  resources:
  - ingresses
  verbs:
  - get
  - list
  - watch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: traefik-ingress-controller
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: traefik-ingress-controller
subjects:
- kind: ServiceAccount
  name: traefik-ingress-controller
  namespace: kube-system

Create the DaemonSet

vi ds.yaml

apiVersion: extensions/v1beta1
kind: DaemonSet
metadata:
  name: traefik-ingress
  namespace: kube-system
  labels:
    k8s-app: traefik-ingress
spec:
  template:
    metadata:
      labels:
        k8s-app: traefik-ingress
        name: traefik-ingress
    spec:
      serviceAccountName: traefik-ingress-controller
      terminationGracePeriodSeconds: 60
      containers:
      - image: harbor.od.com/public/traefik:v1.7.2
        name: traefik-ingress
        ports:
        - name: controller
          containerPort: 80
          hostPort: 81
        - name: admin-web
          containerPort: 8080
        securityContext:
          capabilities:
            drop:
            - ALL
            add:
            - NET_BIND_SERVICE
        args:
        - --api
        - --kubernetes
        - --logLevel=INFO
        - --insecureskipverify=true
        - --kubernetes.endpoint=https://10.4.7.10:7443
        - --accesslog
        - --accesslog.filepath=/var/log/traefik_access.log
        - --traefiklog
        - --traefiklog.filepath=/var/log/traefik.log
        - --metrics.prometheus

Create the Service

vi svc.yaml

kind: Service
apiVersion: v1
metadata:
  name: traefik-ingress-service
  namespace: kube-system
spec:
  selector:
    k8s-app: traefik-ingress
  ports:
  - protocol: TCP
    port: 80
    name: controller
  - protocol: TCP
    port: 8080
    name: admin-web

Create the Ingress

vi ingress.yaml

apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: traefik-web-ui
  namespace: kube-system
  annotations:
    kubernetes.io/ingress.class: traefik
spec:
  rules:
  - host: traefik.od.com
    http:
      paths:
      - path: /
        backend:
          serviceName: traefik-ingress-service
          servicePort: 8080

Create the resources on a node

kubectl create -f http://k8s-yaml.od.com/traefik/rbac.yaml

kubectl create -f http://k8s-yaml.od.com/traefik/ds.yaml

kubectl create -f http://k8s-yaml.od.com/traefik/svc.yaml

kubectl create -f http://k8s-yaml.od.com/traefik/ingress.yaml

Port 81 is now listening

Configure the nginx reverse proxy

# Layer-7 reverse proxy on hdss7-11 and hdss7-12

vi /etc/nginx/conf.d/od.com.conf

upstream default_backend_traefik {

server 10.4.7.21:81 max_fails=3 fail_timeout=10s;

server 10.4.7.22:81 max_fails=3 fail_timeout=10s;

}

server {

server_name *.od.com;

location / {

proxy_pass http://default_backend_traefik;

proxy_set_header Host $http_host;

proxy_set_header x-forwarded-for $proxy_add_x_forwarded_for;

}

}

# Test the configuration and reload nginx

nginx -t

nginx -s reload
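
The upstream block lists every node that runs the Traefik DaemonSet, and the node list changes whenever a node is taken out of rotation. The sketch below renders the block for an arbitrary node list. `upstream_block` is a hypothetical helper, and the port and failure settings are copied from the configuration above.

```shell
#!/bin/sh
# Hypothetical helper: render the nginx upstream block for the given port
# and list of node IPs. Usage: upstream_block <port> <ip>...
upstream_block() {
    port="$1"
    shift
    echo "upstream default_backend_traefik {"
    for ip in "$@"; do
        echo "    server $ip:$port max_fails=3 fail_timeout=10s;"
    done
    echo "}"
}

upstream_block 81 10.4.7.21 10.4.7.22
```

Calling it with a single IP, such as `upstream_block 81 10.4.7.22`, gives the block with node 21 removed, which is the shape needed while that node is being upgraded.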

Add the DNS record

# On hdss7-11, add a record for the host value used in ingress.yaml:

vi /var/named/od.com.zone

systemctl restart named

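# The domain can now be opened in a browser from outside the cluster:

# http://traefik.od.com (this works because the host machine's virtual NIC uses the bind server as its DNS resolver)

Install the dashboard

Prepare the dashboard image

# First pull the image and push it to the private registry, on hdss7-200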

docker pull k8scn/kubernetes-dashboard-amd64:v1.8.3

docker tag fcac9aa03fd6 harbor.od.com/public/dashboard:v1.8.3

docker push harbor.od.com/public/dashboard:v1.8.3

Prepare the resource manifests

rbac.yaml

mkdir -p /data/k8s-yaml/dashboard

cd /data/k8s-yaml/dashboard

vi rbac.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: kubernetes-dashboard
    addonmanager.kubernetes.io/mode: Reconcile
  name: kubernetes-dashboard-admin
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kubernetes-dashboard-admin
  namespace: kube-system
  labels:
    k8s-app: kubernetes-dashboard
    addonmanager.kubernetes.io/mode: Reconcile
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: kubernetes-dashboard-admin
  namespace: kube-system

dp.yaml

vi dp.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: kubernetes-dashboard
  namespace: kube-system
  labels:
    k8s-app: kubernetes-dashboard
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
spec:
  selector:
    matchLabels:
      k8s-app: kubernetes-dashboard
  template:
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
      annotations:
        scheduler.alpha.kubernetes.io/critical-pod: ''
    spec:
      priorityClassName: system-cluster-critical
      containers:
      - name: kubernetes-dashboard
        image: harbor.od.com/public/dashboard:v1.8.3
        resources:
          limits:
            cpu: 100m
            memory: 300Mi
          requests:
            cpu: 50m
            memory: 100Mi
        ports:
        - containerPort: 8443
          protocol: TCP
        args:
          # PLATFORM-SPECIFIC ARGS HERE
          - --auto-generate-certificates
        volumeMounts:
        - name: tmp-volume
          mountPath: /tmp
        livenessProbe:
          httpGet:
            scheme: HTTPS
            path: /
            port: 8443
          initialDelaySeconds: 30
          timeoutSeconds: 30
      volumes:
      - name: tmp-volume
        emptyDir: {}
      serviceAccountName: kubernetes-dashboard-admin
      tolerations:
      - key: "CriticalAddonsOnly"
        operator: "Exists"

svc.yaml

vi svc.yaml

apiVersion: v1
kind: Service
metadata:
  name: kubernetes-dashboard
  namespace: kube-system
  labels:
    k8s-app: kubernetes-dashboard
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
spec:
  selector:
    k8s-app: kubernetes-dashboard
  ports:
  - port: 443
    targetPort: 8443

ingress.yaml

vi ingress.yaml

apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: kubernetes-dashboard
  namespace: kube-system
  annotations:
    kubernetes.io/ingress.class: traefik
spec:
  rules:
  - host: dashboard.od.com
    http:
      paths:
      - backend:
          serviceName: kubernetes-dashboard
          servicePort: 443

Create the resources

# Run on any node

kubectl create -f http://k8s-yaml.od.com/dashboard/rbac.yaml

kubectl create -f http://k8s-yaml.od.com/dashboard/dp.yaml

kubectl create -f http://k8s-yaml.od.com/dashboard/svc.yaml

kubectl create -f http://k8s-yaml.od.com/dashboard/ingress.yaml

Add the DNS record

vi /var/named/od.com.zone

# dashboard A 10.4.7.10

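# With a large amount of zone data, avoid restarting the service outright. Use rndc to reload only the affected zone (for example: rndc reload od.com)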

systemctl restart named

Test: open the site in a browser

Issue the certificate

# Again use cfssl to request the certificate, on hdss7-200

cd /opt/certs/

vi dashboard-csr.json

{
    "CN": "*.od.com",
    "hosts": [
    ],
    "key": {
        "algo": "rsa",
        "size": 2048
    },
    "names": [
        {
            "C": "CN",
            "ST": "beijing",
            "L": "beijing",
            "O": "od",
            "OU": "ops"
        }
    ]
}

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=server dashboard-csr.json |cfssl-json -bare dashboard
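Before copying the files to the nginx hosts, the generated certificate can be inspected to confirm its subject and validity window. This is a small sketch that assumes openssl is installed on hdss7-200; `show_cert` is a hypothetical helper name.

```shell
# Hypothetical helper: print subject, issuer and validity dates of a PEM certificate.
show_cert() {
  openssl x509 -in "$1" -noout -subject -issuer -dates
}
```

For example, `show_cert /opt/certs/dashboard.pem` should list a subject containing `CN = *.od.com` and the issuer of the private CA.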

Configure nginx to proxy HTTPS

# Copy the certificate to the nginx servers; both 7-11 and 7-12 need it

cd /etc/nginx/

mkdir certs

cd certs

scp hdss7-200:/opt/certs/dash* ./

cd /etc/nginx/conf.d/

vi dashboard.od.com.conf

server {
    listen 80;
    server_name dashboard.od.com;

    rewrite ^(.*)$ https://${server_name}$1 permanent;
}

server {
    listen 443 ssl;
    server_name dashboard.od.com;

    ssl_certificate "certs/dashboard.pem";
    ssl_certificate_key "certs/dashboard-key.pem";
    ssl_session_cache shared:SSL:1m;
    ssl_session_timeout 10m;
    ssl_ciphers HIGH:!aNULL:!MD5;
    ssl_prefer_server_ciphers on;

    location / {
        proxy_pass http://default_backend_traefik;
        proxy_set_header Host $http_host;
        proxy_set_header x-forwarded-for $proxy_add_x_forwarded_for;
    }
}

nginx -t

nginx -s reload

The browser now shows the certificate information
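The same check can be made from a shell instead of a browser. The sketch below assumes curl is available on a machine that resolves dashboard.od.com through the DNS server configured earlier; `check_dashboard_proxy` is a hypothetical helper name.

```shell
# Hypothetical helper: probe the two nginx server blocks for dashboard.od.com.
check_dashboard_proxy() {
  host="dashboard.od.com"
  # The port-80 block should answer with a 301 and an https:// redirect target.
  curl -s -o /dev/null -w '%{http_code} %{redirect_url}\n' "http://$host/"
  # -k skips CA verification because the certificate is signed by the private CA.
  curl -sk -o /dev/null -w '%{http_code}\n' "https://$host/"
}
```

To verify the chain rather than skip it, `-k` can be replaced with `--cacert` pointing at a copy of ca.pem.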

Smoothly upgrading k8s

Check the currently deployed version

Pick the node carrying the least load

kubectl get pods -A -o wide

# When a K8S vulnerability is found, or requirements change, the cluster may need to be upgraded or downgraded.

# To limit the impact on the business, do this during off-peak hours where possible. Machine 21 is handled first here.

Remove the node from the layer-4 and layer-7 load balancers

# Remove it from the layer-4 load balancer

vi /etc/nginx/nginx.conf

![image-20201231215830358.png](https://img-blog.csdnimg.cn/57619693672b48818a5fb60d825417c1.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

# Remove it from the layer-7 load balancer

vi /etc/nginx/conf.d/od.com.conf

![image-20201231220154250.png](https://img-blog.csdnimg.cn/c9ccf7a92abf4ae485a78c04bcb87cbf.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

# Reload nginx

nginx -t

nginx -s reload

Take the node offline

kubectl delete node hdss7-21.host.com

![image-20201231215051530.png](https://img-blog.csdnimg.cn/45549826cfcb444d90b579d383631fbe.png)
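Deleting the node object removes it from scheduling at once, without first evicting its pods gracefully. A more cautious variant, sketched here as an alternative to the walkthrough's own step, cordons and drains the node before deleting it. `retire_node` is a hypothetical helper name. The `--delete-local-data` flag is the spelling used by kubectl of this era; newer kubectl releases renamed it to `--delete-emptydir-data`.

```shell
# Hypothetical helper: evict workloads from a node before removing it from the cluster.
retire_node() {
  node="$1"
  # Stop new pods from being scheduled onto the node.
  kubectl cordon "$node" || return 1
  # Evict running pods; DaemonSet pods are skipped, emptyDir data is discarded.
  kubectl drain "$node" --ignore-daemonsets --delete-local-data || return 1
  # Finally remove the node object, as in the step above.
  kubectl delete node "$node"
}
```

For this upgrade the call would be `retire_node hdss7-21.host.com`.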

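Deploy the new release package

cd /opt/src/

wget https://dl.k8s.io/v1.15.2/kubernetes-server-linux-amd64.tar.gz

# Extract. The .1 suffix below presumably comes from wget, which appends it when a file with the same name already exists in /opt/src; use the plain file name if yours has no suffix

mkdir kubetmp/

tar -zxvf kubernetes-server-linux-amd64.tar.gz.1 -C kubetmp/

# Move it into the deployment directory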

mv kubetmp/kubernetes/ ../kubernetes-v1.15.2

![image-20201231221209891.png](https://img-blog.csdnimg.cn/5b34d3b1c20e4f8c8c0185edc61a74e5.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Update the configuration

# Copy the configuration over from the previous version

cd /opt/kubernetes-v1.15.2/server/bin/

mkdir conf

mkdir cert

cp /opt/kubernetes/server/bin/conf/* conf/

cp /opt/kubernetes/server/bin/cert/* cert/

cp /opt/kubernetes/server/bin/*.sh .

# Update the symlink

cd /opt/

rm -rf kubernetes

ln -s kubernetes-v1.15.2/ kubernetes

![image-20201231222207325.png](https://img-blog.csdnimg.cn/fedeadacbc45424f9165a03c75c1a620.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)
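The `rm -rf` followed by `ln -s` leaves a short window in which /opt/kubernetes does not exist. One possible alternative is to repoint the link in a single command with `ln -sfn`. The sketch below assumes /opt/kubernetes is already a symlink (if it were a real directory, the link would be created inside it instead); `switch_release` is a hypothetical helper name.

```shell
# Hypothetical helper: repoint <base>/kubernetes at a new release directory.
switch_release() {
  base="$1"
  new="$2"
  # Refuse to switch if the target release directory is missing.
  [ -d "$base/$new" ] || { echo "missing $base/$new" >&2; return 1; }
  # -f replaces the existing link, -n stops ln from following the old link into its target.
  ln -sfn "$new" "$base/kubernetes" || return 1
  # Print where the link now points.
  readlink "$base/kubernetes"
}
```

For this step the call would be `switch_release /opt kubernetes-v1.15.2`. Rolling back is the same call with the previous release directory name.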

Perform the upgrade

supervisorctl status

supervisorctl restart all

# The node now reports the new version

![image-20201231223306406.png](https://img-blog.csdnimg.cn/9b5d92f77bba421b93ee78db29b50e78.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Restore the layer-4 and layer-7 load balancers

# Uncomment the lines that were commented out earlier

vi /etc/nginx/nginx.conf

vi /etc/nginx/conf.d/od.com.conf

# Reload nginx

nginx -t

nginx -s reload

Delivering dubbo services to the k8s cluster

dubbo architecture diagram

![image-20210101174239027.png](https://img-blog.csdnimg.cn/fe9f43043dac4234bfcc42d80746961c.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

Architecture of dubbo on k8s

# zookeeper is a stateful service

# Delivering stateful services such as mysql or zk to k8s is not recommended

Deploy the dubbo services

First deploy the zk cluster. zk is a Java service and depends on the JDK; download the JDK yourself.

Cluster layout: 7-11, 7-12, 7-21

Install the JDK

mkdir /opt/src

cd /opt/src

# Copy in the package prepared beforehand

scp aprasa@10.4.7.254:/home/aprasa/Downloads/jdk-15.0.1_linux-x64_bin.tar.gz .

mkdir /usr/java

cd /opt/src

tar -xf jdk-15.0.1_linux-x64_bin.tar.gz -C /usr/java/

cd /usr/java/

# Create the jdk symlink

ln -s jdk-15.0.1/ jdk

vi /etc/profile

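# JAVA_HOME

export JAVA_HOME=/usr/java/jdk

export PATH=$JAVA_HOME/bin:$PATH

# Note: JDK 9 and later no longer ship lib/tools.jar, so that CLASSPATH entry has no effect with jdk-15.0.1

export CLASSPATH=$CLASSPATH:$JAVA_HOME/lib:$JAVA_HOME/lib/tools.jar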

source /etc/profile

java -version

Deploy zk

# Download zookeeper

cd /opt/src

wget https://archive.apache.org/dist/zookeeper/zookeeper-3.4.14/zookeeper-3.4.14.tar.gz

tar -zxf zookeeper-3.4.14.tar.gz -C ../

ln -s /opt/zookeeper-3.4.14/ /opt/zookeeper

# Create the log and data directories

mkdir -pv /data/zookeeper/data /data/zookeeper/logs

# Configure

vi /opt/zookeeper/conf/zoo.cfg

tickTime=2000

initLimit=10

syncLimit=5

dataDir=/data/zookeeper/data

dataLogDir=/data/zookeeper/logs

clientPort=2181

server.1=zk1.od.com:2888:3888

server.2=zk2.od.com:2888:3888

server.3=zk3.od.com:2888:3888

# Add the DNS records

vi /var/named/od.com.zone

systemctl restart named

![image-20210101183213959.png](https://img-blog.csdnimg.cn/a44e57617e214e8092c65d1ea80031b8.png?x-oss-process=image/watermark,type_ZHJvaWRzYW5zZmFsbGJhY2s,shadow_50,text_Q1NETiBA5aW257OW56We56ul,size_20,color_FFFFFF,t_70,g_se,x_16)

# Set myid on each node of the zk cluster

# 7-11

echo 1 > /data/zookeeper/data/myid

# 7-12

echo 2 > /data/zookeeper/data/myid

# 7-21

echo 3 > /data/zookeeper/data/myid

# Start zk

/opt/zookeeper/bin/zkServer.sh start

# Check the state of the zk cluster

/opt/zookeeper/bin/zkServer.sh status
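Checking each host by hand works, but the three status calls can also be gathered from one place. The sketch below assumes passwordless root ssh to all three zk hosts (the walkthrough only shows `ssh-copy-id` for 10.4.7.11, so the other two are an assumption); `zk_ensemble_status` is a hypothetical helper name.

```shell
# Hypothetical helper: print the zk mode reported by each ensemble member.
zk_ensemble_status() {
  for host in 10.4.7.11 10.4.7.12 10.4.7.21; do
    printf '%s: ' "$host"
    # zkServer.sh status prints a "Mode: leader" or "Mode: follower" line when the node is up.
    ssh "root@$host" /opt/zookeeper/bin/zkServer.sh status 2>/dev/null \
      | grep '^Mode:' || echo "not running"
  done
}
```

A healthy three-node ensemble reports one leader and two followers.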