from urllib.request import urlopen

from bs4 import BeautifulSoup

import re

pages = set()

def getLinks(pageUrl):

global pages

html = urlopen("http://en.wikipedia.org"+pageUrl)

bsObj = BeautifulSoup(html, "html.parser")

try:

print(bsObj.h1.get_text())

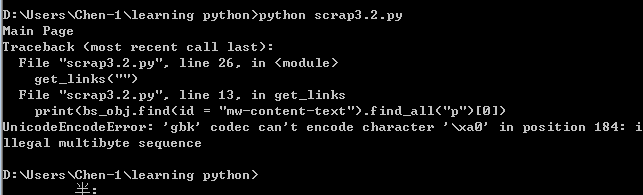

print(bsObj.find(id="mw-content-text").findAll("p")[0], decoding='utf-8')

print(bsObj.find(id="ca-edit").find("span").find("a").attrs['href'], encoding='utf-8')

except AttributeError:

print("页面缺少一些属性!不过不用担心!")

for link in bsObj.findAll("a", href=re.compile("^(/wiki/)")):

if 'href' in link.attrs:

if link.attrs['href'] not in pages:

# 我们遇到了新页面

newPage = link.attrs['href']

print("----------------\n"+newPage)

pages.add(newPage)

getLinks(newPage)

getLinks("")

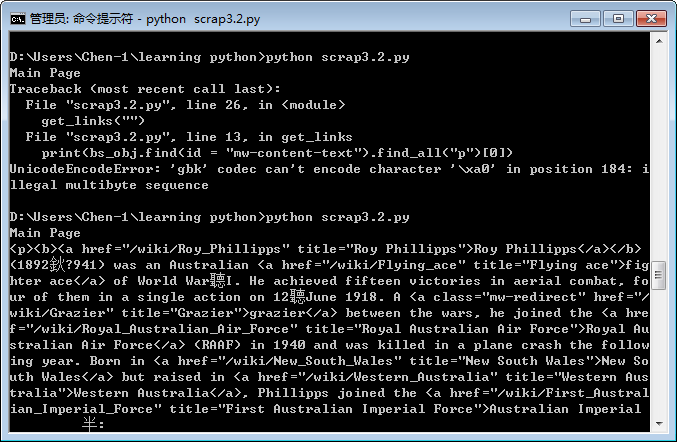

import io, sys

sys.stdout = io.TextIOWrapper(sys.stdout.buffer,encoding='utf8') #改变标准输出的默认编码

不过作为一只菜鸟,根本就不知道到底是不是成功了。。。

4万+

4万+

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?