通过思路:应用数据——>kafka——>clickhouse

例子1:数据类型:

{"b":[{"a":2,"b":"abc"}]}

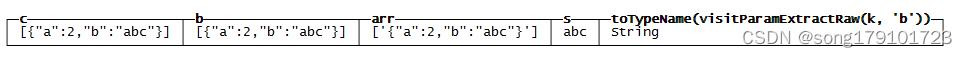

with '{"b":[{"a":2,"b":"abc"}]}' as k

SELECT

visitParamExtractRaw(k, 'b') AS c,

JSONExtractRaw(k, 'b') AS b,

JSONExtractArrayRaw(c) AS arr,

JSONExtractString(arrayJoin(arr),'b') as s,

toTypeName(c);对数据进行测试

最后一行则是对数据格式进行查看,方便建表时候建相应的数据格式。

另外在处理kafka过来的json数据的时候发现如果数据量过大的情况会无法提取数据进行入库,而且select数据的时候会显示不全,暂时没找到解决办法;

例子2:数据类型json

[{"a":"aaa","b":"bbb","c":{"d":[{"aa":1,"bb":"bbbbbb"},{"aa":2,"bb":"dddddd"}]},"e":"eeee"}]

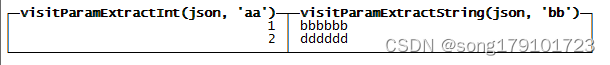

with '[{"a":"aaa","b":"bbb","c":{"d":[{"aa":1,"bb":"bbbbbb"},{"aa":2,"bb":"dddddd"}]},"e":"eeee"}]' as raw

select

visitParamExtractInt(json,'aa'),

visitParamExtractString(json,'bb')

from

(select

JSONExtractArrayRaw(raw) as a,

arrayJoin(a) as b,

JSONExtractRaw(b,'a') as a1,

JSONExtractRaw(b,'c') as c1,

JSONExtractArrayRaw(c1,'d') as d1,

arrayJoin(d1) as json);多层json数据提取。

以上是对json数据的提取,下面就需要建相应的接收表格对数据进行存储。

CREATE TABLE db_test.target

(

`aa` Int8,

`bb` String

)

ENGINE = MergeTree()

ORDER BY sendTime建kafka引擎的数据源

CREATE TABLE db_test.source

(

msg String

)

ENGINE = Kafka()

SETTINGS kafka_broker_list = '1.1.1.1:9092',

kafka_topic_list = 'JSON_TOPIC',

kafka_group_name = 'group_01',

kafka_format = 'JSONAsString',

kafka_skip_broken_messages = 1,

kafka_num_consumers = 1,

input_format_import_nested_json = 1,

input_format_allow_errors_num=100;建转换表

CREATE MATERIALIZED VIEW db_test.source_mv TO db_test.target AS

SELECT

visitParamExtractInt(json, 'aa'),

visitParamExtractString(json, 'bb')

FROM

(

SELECT

JSONExtractArrayRaw(raw) AS a,

arrayJoin(a) AS b,

JSONExtractRaw(b, 'a') AS a1,

JSONExtractRaw(b, 'c') AS c1,

JSONExtractArrayRaw(c1, 'd') AS d1,

arrayJoin(d1) AS json from db_test.source865

);例子3:数据类型json

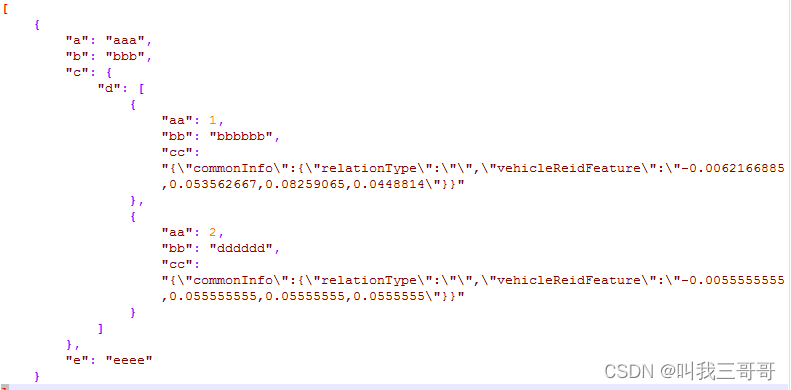

[{"a":"aaa","b":"bbb","c":{"d":[{"aa":1,"bb":"bbbbbb","cc":"{\"commonInfo\":{\"relationType\":\"\",\"vehicleReidFeature\":\"-0.0062166885,0.053562667,0.08259065,0.0448814\"}}"},{"aa":2,"bb":"dddddd","cc":"{\"commonInfo\":{\"relationType\":\"\",\"vehicleReidFeature\":\"-0.0055555555,0.055555555,0.05555555,0.0555555\"}}"}]},"e":"eeee"}]

方便观看进行格式化json格式

该数据为从kafka 中读取的数据,可以看到里面带有转义符,但是如果直接通过insert into存储到数据库中,该转义符就会消失。目前没找到好的办法直接将该数据通过clickhouse的kafka引擎直接读取该数据入库。

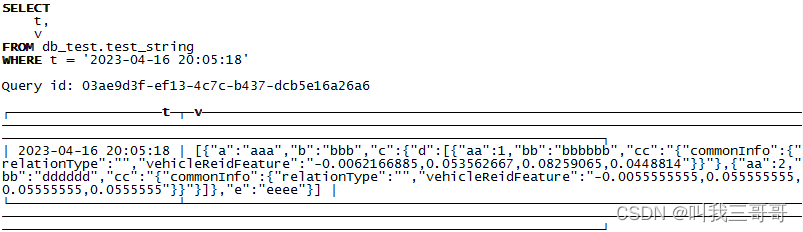

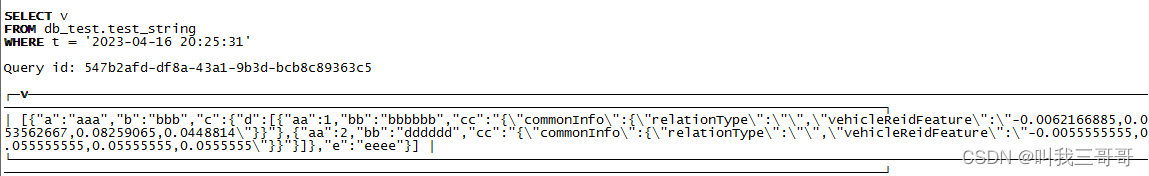

将该部分呢数据插入数据库:

INSERT INTO db_test.test_string(t,v) values(now(),'[{"a":"aaa","b":"bbb","c":{"d":[{"aa":1,"bb":"bbbbbb","cc":"{\"commonInfo\":{\"relationType\":\"\",\"vehicleReidFeature\":\"-0.0062166885,0.053562667,0.08259065,0.0448814\"}}"},{"aa":2,"bb":"dddddd","cc":"{\"commonInfo\":{\"relationType\":\"\",\"vehicleReidFeature\":\"-0.0055555555,0.055555555,0.05555555,0.0555555\"}}"}]},"e":"eeee"}]');插入结果:发现转义字符“\”已经被自动去掉。如下图

通过JSONExtractArrayRaw进行提取数据结果为空:

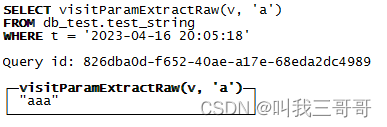

select JSONExtractArrayRaw(v) from db_test.test_string where t='2023-04-16 20:05:18';通过 visitParamExtractRaw对字段进行提取:

select visitParamExtractRaw(v,'a') from db_test.test_string where t='2023-04-16 20:05:18';

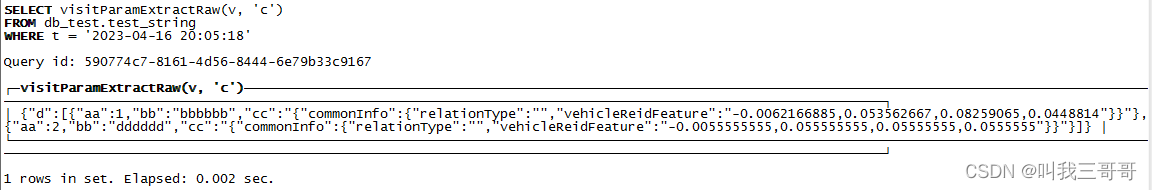

继续进行解析,发现一个奇怪现象:

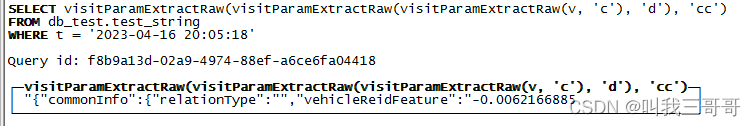

select visitParamExtractRaw(visitParamExtractRaw(visitParamExtractRaw(v,'c'),'d'),'cc') from db_test.test_string where t='2023-04-16 20:05:18';显示的结果是不全的,如下图

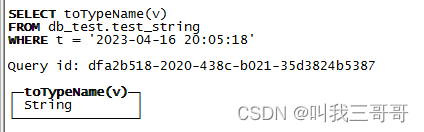

而且通过JSONExtractString均无法解析,但是通过toTypeName(raw)显示确实为string类型。

后来尝试将转义字符\转换成\\,转换后再重新插入数据库

INSERT INTO db_test.test_string(t,v) values(now(),'[{"a":"aaa","b":"bbb","c":{"d":[{"aa":1,"bb":"bbbbbb","cc":"{\\"commonInfo\\":{\\"relationType\\":\\"\\",\\"vehicleReidFeature\\":\\"-0.0062166885,0.053562667,0.08259065,0.0448814\\"}}"},{"aa":2,"bb":"dddddd","cc":"{\\"commonInfo\\":{\\"relationType\\":\\"\\",\\"vehicleReidFeature\\":\\"-0.0055555555,0.055555555,0.05555555,0.0555555\\"}}"}]},"e":"eeee"}]');

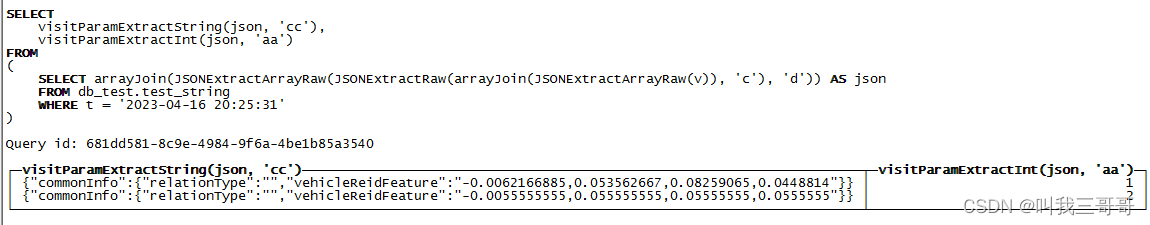

经过层层剥离:

select visitParamExtractString(json,'cc'),visitParamExtractInt(json,'aa') from (select arrayJoin(JSONExtractArrayRaw(JSONExtractRaw(arrayJoin(JSONExtractArrayRaw(v)),'c'),'d')) as json from db_test.test_string where t='2023-04-16 20:25:31');

得到想要的结果,但是问题又来了,如果该数据(带转义字符\)是从kafka过来的,要直接通过clickhouse的kafka引擎直接拉取数据入库是没办法在拉取过程中进行\转换成\\,这样就没办法进行json数据提取。甚至都没办法将原始数据入库。会一直报错。

CREATE TABLE db_test.source

(

`msg` String

)

ENGINE = Kafka()

SETTINGS kafka_broker_list = '1.1.1.1:9092',

kafka_topic_list = 'json_test',

kafka_group_name = 'my_group',

kafka_format = 'JSONAsString',

kafka_skip_broken_messages = 1,

kafka_num_consumers = 1,

input_format_import_nested_json = 1,

input_format_allow_errors_num=100;![]()

1606

1606

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?