Apache Knox Installation and Testing

1. Knox Overview

Announcing Apache Knox 1.6.1!

REST API and Application Gateway for the Apache Hadoop Ecosystem

The Apache Knox™ Gateway is an Application Gateway for interacting with the REST APIs and UIs of Apache Hadoop deployments.

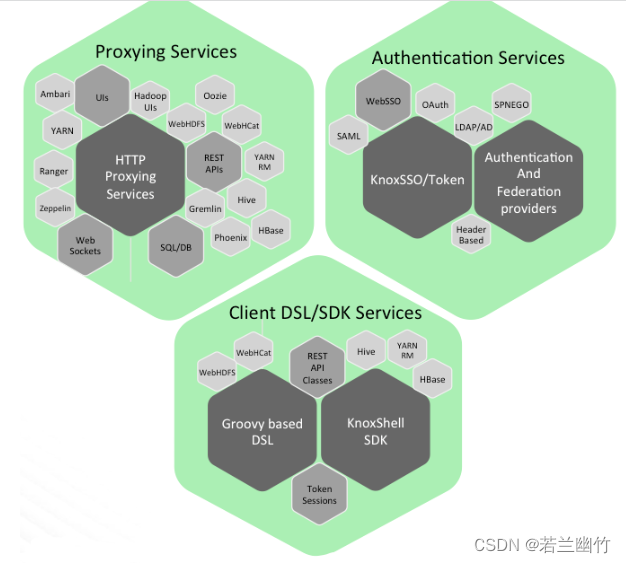

The Knox Gateway provides a single access point for all REST and HTTP interactions with Apache Hadoop clusters. Knox delivers three groups of user-facing services: proxying services, authentication services, and client services.

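From the official site:

The Apache Knox Gateway is a system that provides a single point of authentication and access for Apache Hadoop services in a cluster. Its goal is to simplify Hadoop security both for users (who access cluster data and run jobs) and for operators (who control access and manage the cluster). The gateway runs as a server (or a cluster of servers) and provides centralized access to one or more Hadoop clusters. In general, the goals of the gateway are as follows: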

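- Provide perimeter security for the Hadoop REST APIs, making Hadoop security easier to set up and use

- Provide authentication and token verification at the perimeter

- Enable authentication integration with enterprise and cloud identity management systems

- Provide service-level authorization at the perimeter

- Expose a single URL hierarchy that aggregates the REST APIs of a Hadoop cluster

- Limit the network endpoints (and therefore the firewall holes) required to access a Hadoop cluster

- Hide the internal Hadoop cluster topology from potential attackers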

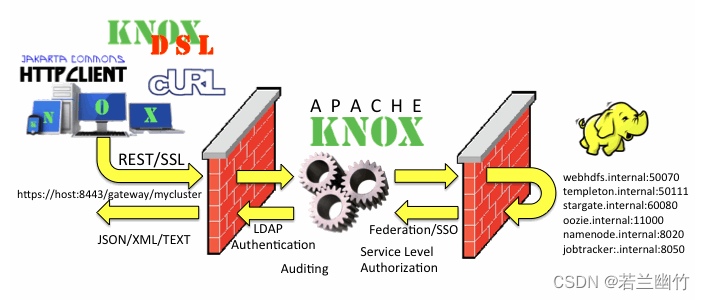

The diagram above shows the runtime logic.

2. Download Knox

- Download location: Knox download link

3. Install Hadoop and Knox

- For the Hadoop installation, see the blog post "Hadoop2.7.3全分布式环境搭建" (Hadoop 2.7.3 fully distributed environment setup).

- 安装Knox

- 注意:需要使用普通用户安装,如omm

- 新建用户组和用户,如:

groupadd knoxgroup useradd -g knoxgroup omm passwd omm

- 新建用户组和用户,如:

- 可参考官网:Quick Start

- 使用omm用户登录服务器,默认安装在/home/omm下

- 上传knox1.6.1.zip包到虚拟机中

- 解压安装:

unzip knox1.6.1.zip - 环境配置(可选)

- 启动LDAP,进入到解压之后的目录/home/omm/knox1.6.1下执行命令:

bin/ldap.sh start - 创建Master密钥,执行如下命令:

bin/knoxcli.sh create-master,回车,输入密钥密码 - 启动Knox服务,执行:

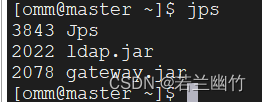

bin/gateway.sh start - 检查LDAP和knox服务是否正常启动,执行:

jps,结果如下:

- 注意:需要使用普通用户安装,如omm
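The jps check in the last step can also be scripted. The following is a minimal sketch, not part of the original post: `knox_services_up` is a hypothetical helper name, and it assumes that jps reports the two processes as `gateway.jar` and `ldap.jar` (the jar files the start scripts launch). Adjust the patterns if your jps output differs.

```shell
#!/usr/bin/env bash
# knox_services_up (hypothetical helper): succeeds only if the given jps
# output lists both the Knox gateway and the demo LDAP server.
# Assumption: jps shows them as "gateway.jar" and "ldap.jar".
knox_services_up() {
  local jps_output="$1"
  printf '%s\n' "$jps_output" | grep -q 'gateway\.jar' &&
    printf '%s\n' "$jps_output" | grep -q 'ldap\.jar'
}

# Example usage (requires a JDK on PATH):
#   knox_services_up "$(jps)" && echo "Knox and LDAP are running"
```

Passing the jps output in as an argument keeps the check separate from the JDK call, so it can be tried against saved output.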

4. Read and Write HDFS


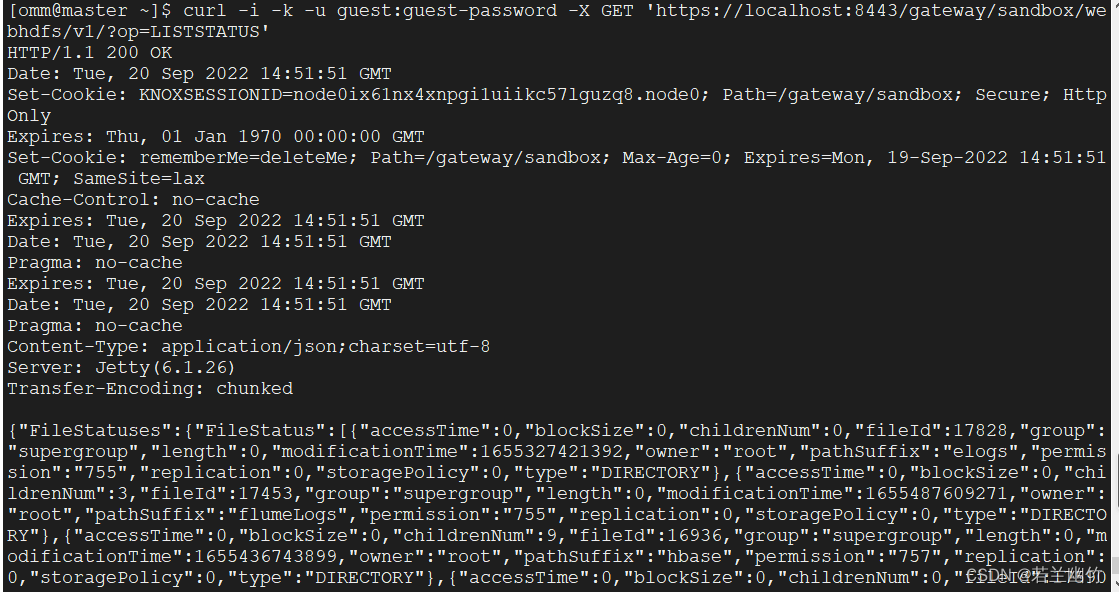

Call the LISTSTATUS operation on WebHDFS through the gateway.

Run the command:

    curl -i -k -u guest:guest-password -X GET 'https://localhost:8443/gateway/sandbox/webhdfs/v1/?op=LISTSTATUS'

The result is shown in the screenshot.

Text output:

    HTTP/1.1 200 OK
    Date: Tue, 20 Sep 2022 13:55:54 GMT
    Set-Cookie: KNOXSESSIONID=node01jvpkpq1sedtp1xcfgvzi9ok721.node0; Path=/gateway/sandbox; Secure; HttpOnly
    Expires: Thu, 01 Jan 1970 00:00:00 GMT
    Set-Cookie: rememberMe=deleteMe; Path=/gateway/sandbox; Max-Age=0; Expires=Mon, 19-Sep-2022 13:55:54 GMT; SameSite=lax
    Cache-Control: no-cache
    Expires: Tue, 20 Sep 2022 13:55:54 GMT
    Date: Tue, 20 Sep 2022 13:55:54 GMT
    Pragma: no-cache
    Expires: Tue, 20 Sep 2022 13:55:54 GMT
    Date: Tue, 20 Sep 2022 13:55:54 GMT
    Pragma: no-cache
    Content-Type: application/json;charset=utf-8
    Server: Jetty(6.1.26)
    Transfer-Encoding: chunked

    {
      "FileStatuses": {
        "FileStatus": [
          { "accessTime": 0, "blockSize": 0, "childrenNum": 0, "fileId": 17828, "group": "supergroup", "length": 0, "modificationTime": 1655327421392, "owner": "root", "pathSuffix": "elogs", "permission": "755", "replication": 0, "storagePolicy": 0, "type": "DIRECTORY" },
          { "accessTime": 0, "blockSize": 0, "childrenNum": 3, "fileId": 17453, "group": "supergroup", "length": 0, "modificationTime": 1655487609271, "owner": "root", "pathSuffix": "flumeLogs", "permission": "755", "replication": 0, "storagePolicy": 0, "type": "DIRECTORY" },
          { "accessTime": 0, "blockSize": 0, "childrenNum": 9, "fileId": 16936, "group": "supergroup", "length": 0, "modificationTime": 1655436743899, "owner": "root", "pathSuffix": "hbase", "permission": "757", "replication": 0, "storagePolicy": 0, "type": "DIRECTORY" },
          { "accessTime": 0, "blockSize": 0, "childrenNum": 0, "fileId": 17590, "group": "supergroup", "length": 0, "modificationTime": 1655281104031, "owner": "suben", "pathSuffix": "hdfsdemo", "permission": "755", "replication": 0, "storagePolicy": 0, "type": "DIRECTORY" },
          { "accessTime": 0, "blockSize": 0, "childrenNum": 6, "fileId": 16644, "group": "supergroup", "length": 0, "modificationTime": 1656012489237, "owner": "root", "pathSuffix": "inputs", "permission": "755", "replication": 0, "storagePolicy": 0, "type": "DIRECTORY" },
          { "accessTime": 0, "blockSize": 0, "childrenNum": 2, "fileId": 16658, "group": "supergroup", "length": 0, "modificationTime": 1653646793954, "owner": "root", "pathSuffix": "output", "permission": "755", "replication": 0, "storagePolicy": 0, "type": "DIRECTORY" },
          { "accessTime": 0, "blockSize": 0, "childrenNum": 4, "fileId": 17375, "group": "supergroup", "length": 0, "modificationTime": 1655463859092, "owner": "root", "pathSuffix": "outputs", "permission": "755", "replication": 0, "storagePolicy": 0, "type": "DIRECTORY" },
          { "accessTime": 0, "blockSize": 0, "childrenNum": 1, "fileId": 17570, "group": "supergroup", "length": 0, "modificationTime": 1654919667421, "owner": "root", "pathSuffix": "rdbms", "permission": "755", "replication": 0, "storagePolicy": 0, "type": "DIRECTORY" },
          { "accessTime": 0, "blockSize": 0, "childrenNum": 0, "fileId": 16641, "group": "supergroup", "length": 0, "modificationTime": 1653636523487, "owner": "root", "pathSuffix": "sparkhistory", "permission": "755", "replication": 0, "storagePolicy": 0, "type": "DIRECTORY" },
          { "accessTime": 0, "blockSize": 0, "childrenNum": 30, "fileId": 16642, "group": "supergroup", "length": 0, "modificationTime": 1656024159488, "owner": "root", "pathSuffix": "sparklogs", "permission": "755", "replication": 0, "storagePolicy": 0, "type": "DIRECTORY" },
          { "accessTime": 0, "blockSize": 0, "childrenNum": 1, "fileId": 18294, "group": "supergroup", "length": 0, "modificationTime": 1656035964378, "owner": "root", "pathSuffix": "testDataSet", "permission": "755", "replication": 0, "storagePolicy": 0, "type": "DIRECTORY" },
          { "accessTime": 0, "blockSize": 0, "childrenNum": 3, "fileId": 16575, "group": "supergroup", "length": 0, "modificationTime": 1653628122500, "owner": "root", "pathSuffix": "tmp", "permission": "733", "replication": 0, "storagePolicy": 0, "type": "DIRECTORY" },
          { "accessTime": 0, "blockSize": 0, "childrenNum": 2, "fileId": 16582, "group": "supergroup", "length": 0, "modificationTime": 1654752608696, "owner": "root", "pathSuffix": "user", "permission": "757", "replication": 0, "storagePolicy": 0, "type": "DIRECTORY" }
        ]
      }
    }
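When only the directory names matter, the `pathSuffix` values can be pulled out of the LISTSTATUS response. The sketch below is an illustration rather than something from the original post: `list_path_suffixes` is a hypothetical helper that uses plain text matching with grep and sed, so it is not a real JSON parser and assumes names contain no escaped double quotes.

```shell
#!/usr/bin/env bash
# list_path_suffixes (hypothetical helper): read a LISTSTATUS JSON body on
# stdin and print each "pathSuffix" value on its own line.
# Text matching only; assumes the values contain no escaped double quotes.
list_path_suffixes() {
  grep -o '"pathSuffix"[[:space:]]*:[[:space:]]*"[^"]*"' |
    sed 's/.*:[[:space:]]*"\([^"]*\)"$/\1/'
}

# Example usage against the gateway (no -i, so only the JSON body is piped):
#   curl -s -k -u guest:guest-password \
#     'https://localhost:8443/gateway/sandbox/webhdfs/v1/?op=LISTSTATUS' |
#     list_path_suffixes
```

For anything beyond a quick check, a proper JSON tool is the safer choice. The text-matching approach is used here only to avoid extra dependencies.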

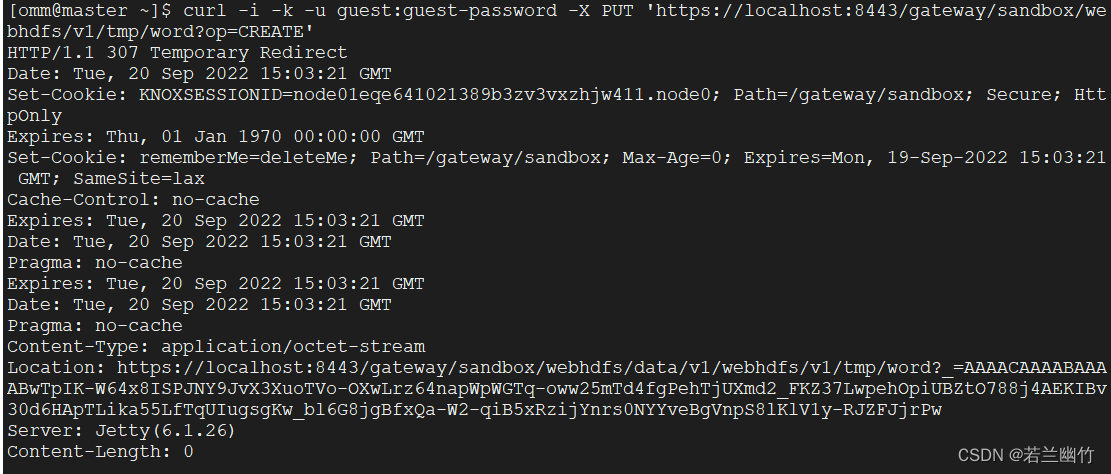

Upload a file to HDFS through the gateway.

Run the CREATE operation:

    curl -i -k -u guest:guest-password -X PUT 'https://localhost:8443/gateway/sandbox/webhdfs/v1/tmp/word?op=CREATE'

Result:

    HTTP/1.1 307 Temporary Redirect
    Date: Tue, 20 Sep 2022 15:03:21 GMT
    Set-Cookie: KNOXSESSIONID=node01eqe641021389b3zv3vxzhjw411.node0; Path=/gateway/sandbox; Secure; HttpOnly
    Expires: Thu, 01 Jan 1970 00:00:00 GMT
    Set-Cookie: rememberMe=deleteMe; Path=/gateway/sandbox; Max-Age=0; Expires=Mon, 19-Sep-2022 15:03:21 GMT; SameSite=lax
    Cache-Control: no-cache
    Expires: Tue, 20 Sep 2022 15:03:21 GMT
    Date: Tue, 20 Sep 2022 15:03:21 GMT
    Pragma: no-cache
    Expires: Tue, 20 Sep 2022 15:03:21 GMT
    Date: Tue, 20 Sep 2022 15:03:21 GMT
    Pragma: no-cache
    Content-Type: application/octet-stream
    Location: https://localhost:8443/gateway/sandbox/webhdfs/data/v1/webhdfs/v1/tmp/word?_=AAAACAAAABAAAABwTpIK-W64x8ISPJNY9JvX3XuoTVo-OXwLrz64napWpWGTq-oww25mTd4fgPehTjUXmd2_FKZ37LwpehOpiUBZtO788j4AEKIBv30d6HApTLika55LfTqUIugsgKw_bl6G8jgBfxQa-W2-qiB5xRzijYnrs0NYYveBgVnpS8lKlV1y-RJZFJjrPw
    Server: Jetty(6.1.26)
    Content-Length: 0

Copy the value of the Location header from the response above. It is needed when uploading the file in the next step:

    https://localhost:8443/gateway/sandbox/webhdfs/data/v1/webhdfs/v1/tmp/word?_=AAAACAAAABAAAABwTpIK-W64x8ISPJNY9JvX3XuoTVo-OXwLrz64napWpWGTq-oww25mTd4fgPehTjUXmd2_FKZ37LwpehOpiUBZtO788j4AEKIBv30d6HApTLika55LfTqUIugsgKw_bl6G8jgBfxQa-W2-qiB5xRzijYnrs0NYYveBgVnpS8lKlV1y-RJZFJjrPw
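Copying the Location value by hand is error-prone because the token is long. As a minimal sketch (the helper name `extract_location` is hypothetical and not part of Knox or curl), the header can be extracted from the text that `curl -i` prints:

```shell
#!/usr/bin/env bash
# extract_location (hypothetical helper): read an HTTP response as printed by
# `curl -i` on stdin and print the value of the first Location header.
# Only the spellings "Location:" and "location:" are recognised.
extract_location() {
  tr -d '\r' | sed -n 's/^[Ll]ocation:[[:space:]]*//p' | head -n 1
}

# Example usage:
#   curl -s -i -k -u guest:guest-password -X PUT \
#     'https://localhost:8443/gateway/sandbox/webhdfs/v1/tmp/word?op=CREATE' |
#     extract_location
```

The `tr -d '\r'` step strips the carriage returns that end HTTP header lines, which would otherwise stay attached to the URL.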

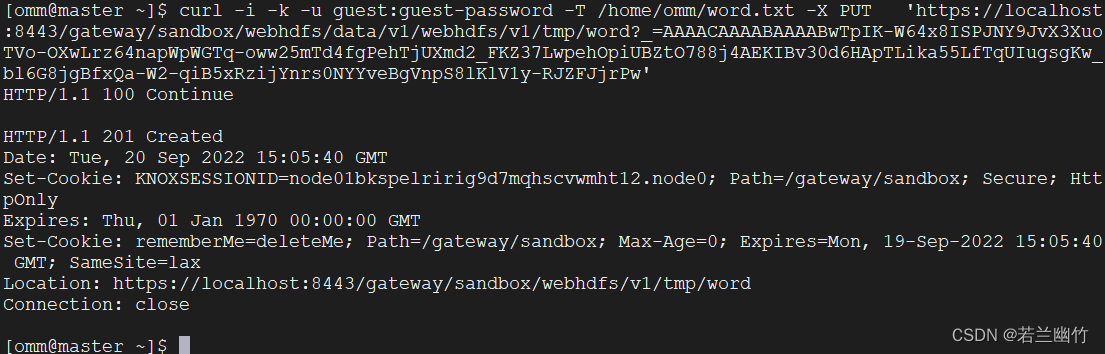

Run the PUT operation to upload the local file word.txt (create it yourself if it does not exist) to HDFS:

    curl -i -k -u guest:guest-password -T /home/omm/word.txt -X PUT 'https://localhost:8443/gateway/sandbox/webhdfs/data/v1/webhdfs/v1/tmp/word?_=AAAACAAAABAAAABwTpIK-W64x8ISPJNY9JvX3XuoTVo-OXwLrz64napWpWGTq-oww25mTd4fgPehTjUXmd2_FKZ37LwpehOpiUBZtO788j4AEKIBv30d6HApTLika55LfTqUIugsgKw_bl6G8jgBfxQa-W2-qiB5xRzijYnrs0NYYveBgVnpS8lKlV1y-RJZFJjrPw'

The result is shown in the screenshot.
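The two upload steps (CREATE, then PUT to the returned Location) can be chained in one function. This is a sketch under stated assumptions, not the author's method: `knox_webhdfs_url` and `knox_upload` are hypothetical names, the demo account guest:guest-password from the commands above is hard-coded, and `-k` still disables certificate verification as in the original commands.

```shell
#!/usr/bin/env bash
# knox_webhdfs_url (hypothetical helper): build the gateway URL for a
# WebHDFS operation.
#   $1 = gateway base URL, e.g. https://localhost:8443/gateway/sandbox
#   $2 = absolute HDFS path, e.g. /tmp/word
#   $3 = operation name, e.g. CREATE
knox_webhdfs_url() {
  printf '%s/webhdfs/v1%s?op=%s\n' "$1" "$2" "$3"
}

# knox_upload (hypothetical helper): two-step upload through the gateway.
#   $1 = gateway base URL, $2 = local file, $3 = target HDFS path
knox_upload() {
  local base="$1" local_file="$2" hdfs_path="$3" location
  # Step 1: CREATE answers with a redirect; keep only its Location header.
  location="$(curl -s -i -k -u guest:guest-password -X PUT \
      "$(knox_webhdfs_url "$base" "$hdfs_path" CREATE)" |
    tr -d '\r' | sed -n 's/^[Ll]ocation:[[:space:]]*//p' | head -n 1)"
  [ -n "$location" ] || { echo "no Location header returned" >&2; return 1; }
  # Step 2: send the file body to the URL from step 1.
  curl -i -k -u guest:guest-password -T "$local_file" -X PUT "$location"
}

# Example usage:
#   knox_upload https://localhost:8443/gateway/sandbox /home/omm/word.txt /tmp/word
```

The function stops with an error if step 1 returns no Location header, so a failed authentication does not turn into a PUT against an empty URL.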

View the file on HDFS through the gateway.

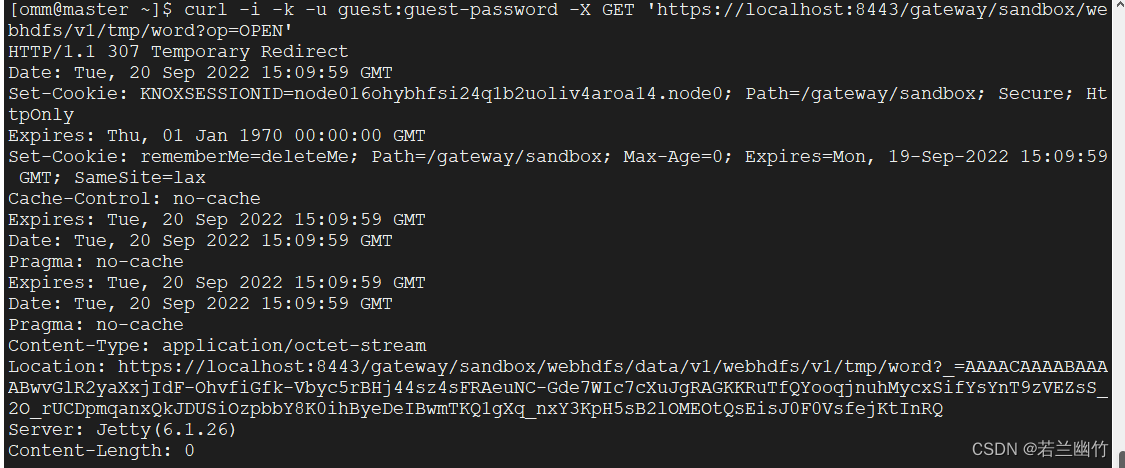

Run the OPEN operation:

    curl -i -k -u guest:guest-password -X GET 'https://localhost:8443/gateway/sandbox/webhdfs/v1/tmp/word?op=OPEN'

The result is shown in the screenshot.

Copy the value of the Location header from the response:

    https://localhost:8443/gateway/sandbox/webhdfs/data/v1/webhdfs/v1/tmp/word?_=AAAACAAAABAAAABwvGlR2yaXxjIdF-OhvfiGfk-Vbyc5rBHj44sz4sFRAeuNC-Gde7WIc7cXuJgRAGKKRuTfQYooqjnuhMycxSifYsYnT9zVEZsS_2O_rUCDpmqanxQkJDUSiOzpbbY8K0ihByeDeIBwmTKQ1gXq_nxY3KpH5sB2lOMEOtQsEisJ0F0VsfejKtInRQ

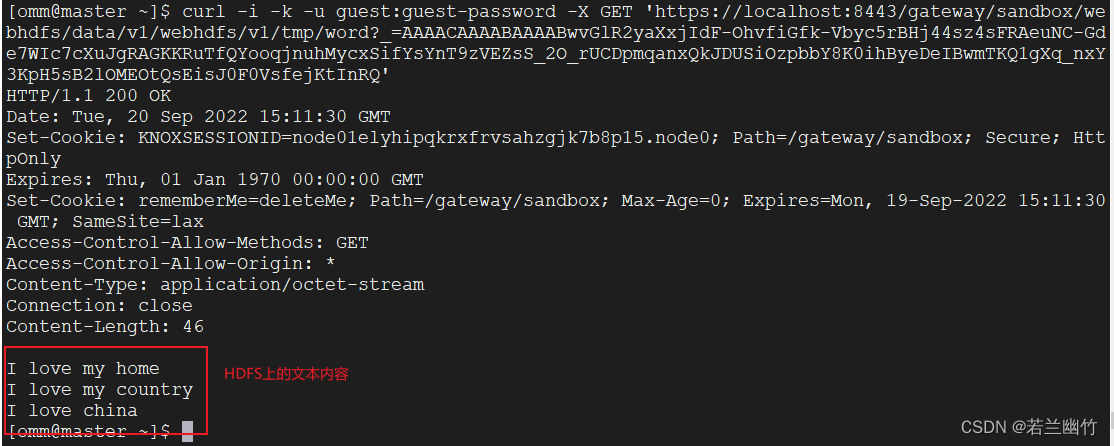

Run a GET request against that URL to read the file:

    curl -i -k -u guest:guest-password -X GET 'https://localhost:8443/gateway/sandbox/webhdfs/data/v1/webhdfs/v1/tmp/word?_=AAAACAAAABAAAABwvGlR2yaXxjIdF-OhvfiGfk-Vbyc5rBHj44sz4sFRAeuNC-Gde7WIc7cXuJgRAGKKRuTfQYooqjnuhMycxSifYsYnT9zVEZsS_2O_rUCDpmqanxQkJDUSiOzpbbY8K0ihByeDeIBwmTKQ1gXq_nxY3KpH5sB2lOMEOtQsEisJ0F0VsfejKtInRQ'

The result is shown in the screenshot.
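The OPEN request and the follow-up GET can possibly be merged by letting curl follow the redirect with `-L`. This sketch was not verified in the original post. `knox_cat` is a hypothetical helper name, and it assumes the redirect stays on the same host and port as the first request (as in the Location values above), so that curl keeps sending the `-u` credentials.

```shell
#!/usr/bin/env bash
# knox_cat (hypothetical helper): print an HDFS file through the gateway in
# one call by following the OPEN redirect with curl -L.
#   $1 = gateway base URL, e.g. https://localhost:8443/gateway/sandbox
#   $2 = absolute HDFS path, e.g. /tmp/word
# Assumption: the redirect targets the same host and port, so the
# credentials given with -u are sent again on the second request.
knox_cat() {
  local base="$1" hdfs_path="$2"
  curl -s -k -L -u guest:guest-password "${base}/webhdfs/v1${hdfs_path}?op=OPEN"
}

# Example usage:
#   knox_cat https://localhost:8443/gateway/sandbox /tmp/word
```

The two-step form shown in the post remains the more transparent option when debugging, because the intermediate response can be inspected.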

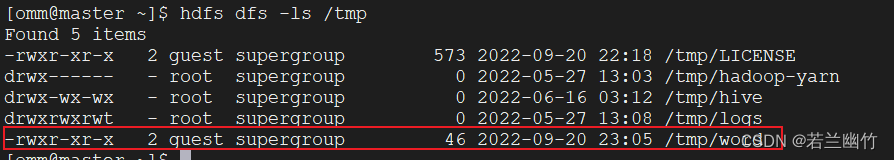

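You can also use the hdfs command to check that a new file, /tmp/word, now exists under the /tmp directory, as the screenshot shows.

To view its content, run hdfs dfs -cat /tmp/word. The output matches the result above.

That concludes this simple installation test of Apache Knox. This post follows the tutorial on the official Knox site and records the steps as they were verified, as a practice exercise. I hope it helps.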
