Step 1: Create docker-compose.yml

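Create docker-compose.yml for Docker Compose. Docker Compose is a tool for defining and running multi-container Docker applications. With the YAML file below, a single command creates and starts all of the services (in this example Fluentd, Elasticsearch, and Kibana):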

    version: '3'
    services:
      fluentd:
        build: ./fluentd
        volumes:
          - ./fluentd/conf:/fluentd/etc
        links:
          - "elasticsearch"
        ports:
          - "24224:24224"
          - "24224:24224/udp"
      elasticsearch:
        image: docker.elastic.co/elasticsearch/elasticsearch:7.2.0
        environment:
          - "discovery.type=single-node"
        expose:
          - "9200"
        ports:
          - "9200:9200"
      kibana:
        image: kibana:7.2.0
        links:
          - "elasticsearch"
        ports:
          - "5601:5601"

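If you already have Elasticsearch and Kibana installed, you can use the configuration below instead. It starts only Fluentd, so the `host` setting in fluent.conf must point to your existing Elasticsearch instance.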

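    version: '3'
    services:
      fluentd:
        # Build context; it must contain the Dockerfile
        # (use ./fluentd if the Dockerfile lives at fluentd/Dockerfile).
        build: .
        volumes:
          - ./fluentd/conf:/fluentd/etc
        privileged: true
        ports:
          - "24224:24224"
          - "24224:24224/udp"
        environment:
          - TZ=Asia/Shanghai
        restart: always
        # Cap the size of the Fluentd container's own log files.
        logging:
          driver: "json-file"
          options:
            max-size: "100m"
            max-file: "5"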

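Create the Fluentd image with your config and plugin

Create fluentd/Dockerfile with the following content. It is based on the official Fluentd Docker image and installs the Elasticsearch plugin: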

    # fluentd/Dockerfile
    FROM fluent/fluentd:v1.6-debian-1
    USER root
    RUN ["gem", "install", "fluent-plugin-elasticsearch", "--no-document", "--version", "4.2.0"]
    USER fluent

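Next, create the Fluentd configuration file fluentd/conf/fluent.conf. The forward input plugin receives logs from the Docker logging driver, and the elasticsearch output plugin forwards those logs to Elasticsearch.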

    # fluentd/conf/fluent.conf
    <source>
      @type forward
      port 24224
      bind 0.0.0.0
    </source>

    <match docker.**>
      @type copy
      <store>
        @type elasticsearch
        host elasticsearch
        port 9200
        logstash_format true
        logstash_prefix sharechef-logs
        logstash_dateformat %Y%m%d
        include_tag_key true
        tag_key @log
        type_name access_log
        flush_interval 1s
        reconnect_on_error true
        reload_on_failure true
        reload_connections false
      </store>
    </match>
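With `logstash_format true`, the elasticsearch output plugin writes to one index per day. The name is built from `logstash_prefix`, a separator (`-` by default), and the event time formatted with `logstash_dateformat`; to the best of my knowledge the date is taken in UTC unless `utc_index` is changed. The sketch below reproduces that naming in Python so you can predict which index to look for later. `expected_index_name` is a hypothetical helper written for this article, not part of Fluentd or the plugin.

```python
from datetime import datetime, timezone


def expected_index_name(prefix, dateformat, when=None, separator="-"):
    """Return the daily index name for an event that happened at `when`.

    Assumption: the plugin formats the date in UTC (its default behaviour).
    `when` should be timezone-aware; a naive datetime is treated as local
    time by astimezone(). If omitted, the current time is used.
    """
    when = when or datetime.now(timezone.utc)
    date_part = when.astimezone(timezone.utc).strftime(dateformat)
    return f"{prefix}{separator}{date_part}"


# Values taken from fluent.conf above.
print(expected_index_name("sharechef-logs", "%Y%m%d"))
```

Note that with `TZ=Asia/Shanghai` an event logged shortly after local midnight still lands in the previous day's index if the UTC assumption holds.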

Step 2: Start the containers

    $ docker-compose up
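Elasticsearch and Kibana usually need some time after the containers start before they answer requests. The following is a minimal sketch that polls their HTTP endpoints until both respond with status 200. It assumes the first compose file (ports 9200 and 5601 published on localhost) and uses Elasticsearch's `_cluster/health` and Kibana's `api/status` endpoints; `http_ready` and `wait_until_ready` are hypothetical helper names.

```python
import http.client
import time
import urllib.request

# Assumed endpoints for the first docker-compose.yml in this article.
CHECKS = {
    "elasticsearch": "http://127.0.0.1:9200/_cluster/health",
    "kibana": "http://127.0.0.1:5601/api/status",
}


def http_ready(url, timeout=2.0):
    """Return True if a GET on `url` answers with HTTP 200."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status == 200
    except (OSError, http.client.HTTPException):
        # Connection refused, timeout, reset, or a non-2xx status.
        return False


def wait_until_ready(checks, deadline=180.0, interval=3.0):
    """Poll every URL until all are ready or `deadline` seconds pass.

    Returns the sorted names of the services that are still not ready
    (an empty list means everything answered).
    """
    pending = dict(checks)
    end = time.monotonic() + deadline
    while True:
        pending = {name: url for name, url in pending.items()
                   if not http_ready(url)}
        if not pending or time.monotonic() >= end:
            return sorted(pending)
        time.sleep(interval)
```

Calling `wait_until_ready(CHECKS)` in a second terminal and getting back `[]` is a reasonable signal that it is safe to continue with the next step.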

Step 3: Start an nginx container to verify the setup

    docker run -dit -p 80:80 \
      --log-driver=fluentd \
      --log-opt fluentd-address=localhost:24224 \
      --log-opt tag="docker.{{.Name}}" \
      --name nginx \
      nginx

    # Send a request so that nginx writes an access-log entry
    curl 127.0.0.1
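The `tag` option makes Docker tag each record as `docker.` followed by the container name, so this container's logs arrive as `docker.nginx` and are picked up by `<match docker.**>` in fluent.conf. Fluentd's documentation describes `*` as matching exactly one tag part and `**` as matching zero or more parts. The sketch below is a simplified model of just those two wildcards (it does not handle `{a,b}` alternation), useful for checking a tag against a pattern before starting a container. `tag_matches` is a hypothetical helper, not Fluentd's own matcher.

```python
def tag_matches(pattern, tag):
    """Check a Fluentd-style match pattern against a tag.

    Simplified model: tags and patterns are split on "."; "*" matches
    exactly one part and "**" matches zero or more parts.
    """
    def match(p, t):
        if not p:
            return not t          # pattern used up: tag must be used up too
        head, rest = p[0], p[1:]
        if head == "**":
            # Let "**" absorb 0, 1, 2, ... leading parts of the tag.
            return any(match(rest, t[i:]) for i in range(len(t) + 1))
        if not t:
            return False
        if head == "*" or head == t[0]:
            return match(rest, t[1:])
        return False

    return match(pattern.split("."), tag.split("."))


print(tag_matches("docker.**", "docker.nginx"))  # tag produced by the command above
print(tag_matches("docker.**", "nginx"))         # a tag without the docker. prefix
```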

    # View the Fluentd container's logs
    docker logs <fluentd-container-id>

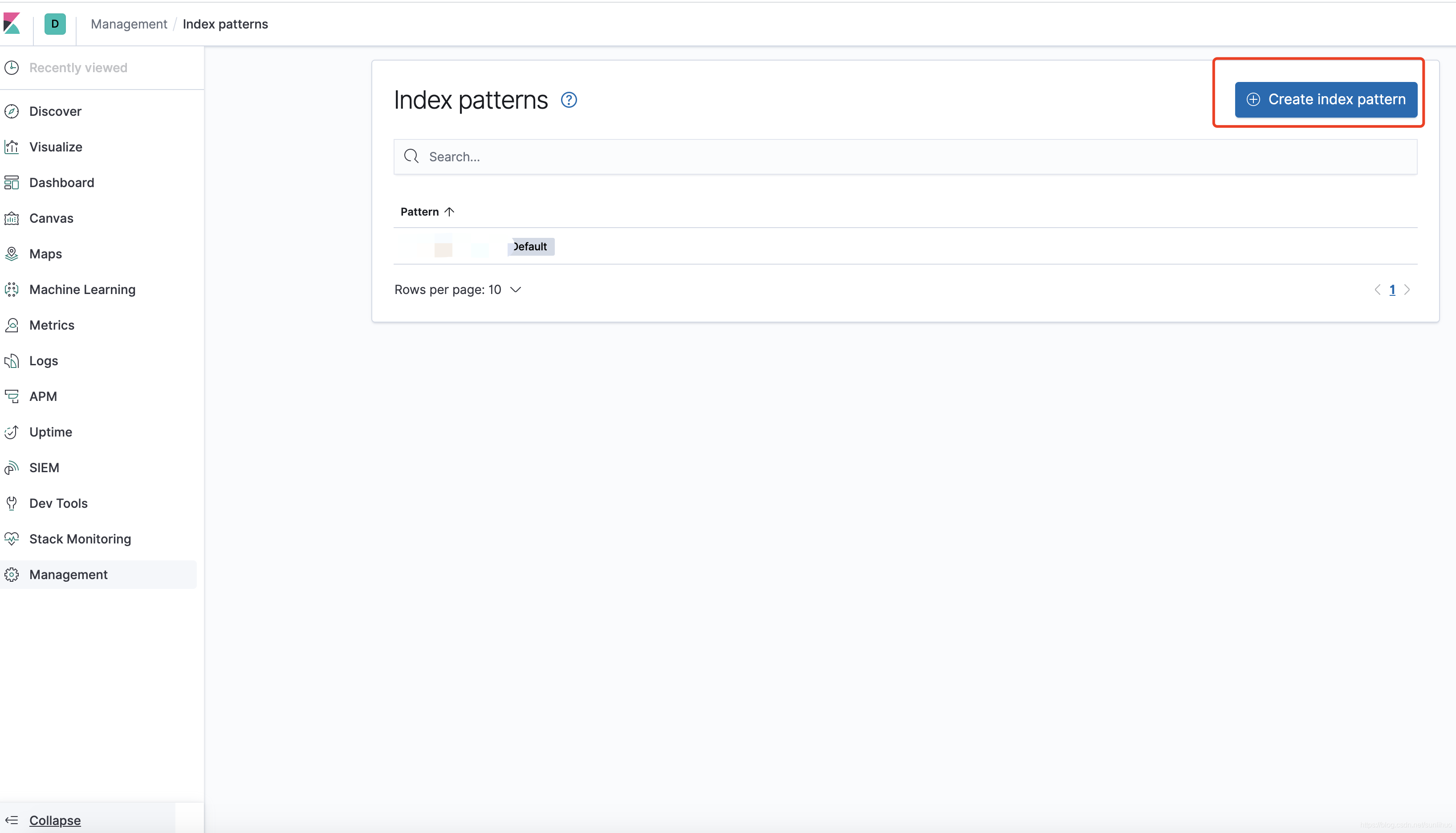
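If nothing shows up, it can help to take Docker's logging driver out of the picture and send one event straight to the forward input on port 24224. The sketch below does that with the Python standard library only. It relies on the forward input accepting a JSON array of the form `[tag, time, record]` in addition to MessagePack, which is my reading of the Fluentd `in_forward` documentation; real clients normally use MessagePack. The function names and the `docker.manual-test` tag are made up for this example.

```python
import json
import socket
import time


def build_forward_event(tag, record, timestamp=None):
    """Encode one event as the JSON array [tag, time, record]."""
    ts = int(time.time()) if timestamp is None else int(timestamp)
    return json.dumps([tag, ts, record]).encode("utf-8")


def send_test_event(tag, record, host="127.0.0.1", port=24224, timeout=5.0):
    """Send a single event to Fluentd's forward input over TCP.

    Returns the number of bytes written. The tag must match the
    <match> pattern in fluent.conf (docker.** in this article).
    """
    payload = build_forward_event(tag, record)
    with socket.create_connection((host, port), timeout=timeout) as conn:
        conn.sendall(payload)
    return len(payload)
```

For example, `send_test_event("docker.manual-test", {"log": "hello from python"})` should produce one document in the current day's index a second or so later, given the `flush_interval 1s` setting.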

Log in to Kibana at 127.0.0.1:5601 and run the following requests (for example in the Dev Tools console):

    # List all indices
    GET _cat/indices

    # Query the index; its name is <logstash_prefix>-<date>, as set in fluent.conf
    GET /sharechef-logs-20200925/_search
    {
      "query": {
        "match_all": {}
      }
    }
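The same `match_all` search can also be run without Kibana, directly against Elasticsearch's REST API. The following is a minimal sketch using only the Python standard library. It assumes Elasticsearch is reachable at `http://127.0.0.1:9200` (the port published in the first compose file) and that you pass in an index name taken from the `_cat/indices` output; `build_search_request` and `search` are hypothetical helper names.

```python
import json
import urllib.request


def build_search_request(index, base_url="http://127.0.0.1:9200", size=5):
    """Build a POST <index>/_search request with a match_all query."""
    body = {"query": {"match_all": {}}, "size": size}
    return urllib.request.Request(
        f"{base_url}/{index}/_search",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def search(index, **kwargs):
    """Run the search and return the decoded JSON response."""
    request = build_search_request(index, **kwargs)
    with urllib.request.urlopen(request, timeout=10) as resp:
        return json.load(resp)
```

With the stack running, `search(index_name)["hits"]["hits"]` returns up to five stored log documents, each carrying the nginx log line and the tag under the `@log` key configured by `tag_key`.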
