[twisted] CRITICAL: Unhandled error in Deferred

The full error output is as follows:

2024-02-22 16:38:30 [twisted] CRITICAL: Unhandled error in Deferred:

Traceback (most recent call last):

File "D:\06Anaconda\envs\site_check\lib\site-packages\scrapy\crawler.py", line 265, in crawl

return self._crawl(crawler, *args, **kwargs)

File "D:\06Anaconda\envs\site_check\lib\site-packages\scrapy\crawler.py", line 269, in _crawl

d = crawler.crawl(*args, **kwargs)

File "D:\06Anaconda\envs\site_check\lib\site-packages\twisted\internet\defer.py", line 2256, in unwindGenerator

return _cancellableInlineCallbacks(gen)

File "D:\06Anaconda\envs\site_check\lib\site-packages\twisted\internet\defer.py", line 2168, in _cancellableInlineCallbacks

_inlineCallbacks(None, gen, status, _copy_context())

--- <exception caught here> ---

File "D:\06Anaconda\envs\site_check\lib\site-packages\twisted\internet\defer.py", line 2000, in _inlineCallbacks

result = context.run(gen.send, result)

File "D:\06Anaconda\envs\site_check\lib\site-packages\scrapy\crawler.py", line 155, in crawl

self.spider = self._create_spider(*args, **kwargs)

File "D:\06Anaconda\envs\site_check\lib\site-packages\scrapy\crawler.py", line 169, in _create_spider

return self.spidercls.from_crawler(self, *args, **kwargs)

File "E:\CloudProjects\n_site_check\n_site_check\spiders\sites.py", line 20, in from_crawler

crawler.signals.connect(instance.spider_idle, signal=signals.spider_idle)

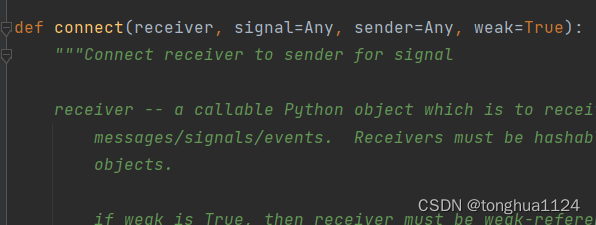

File "D:\06Anaconda\envs\site_check\lib\site-packages\scrapy\signalmanager.py", line 28, in connect

dispatcher.connect(receiver, signal, **kwargs)

File "D:\06Anaconda\envs\site_check\lib\site-packages\pydispatch\dispatcher.py", line 130, in connect

receiver = saferef.safeRef(receiver, onDelete=_removeReceiver)

File "D:\06Anaconda\envs\site_check\lib\site-packages\pydispatch\saferef.py", line 32, in safeRef

return weakref.ref(target, onDelete)

builtins.TypeError: cannot create weak reference to 'NoneType' object

2024-02-22 16:38:30 [twisted] CRITICAL:

Traceback (most recent call last):

File "D:\06Anaconda\envs\site_check\lib\site-packages\twisted\internet\defer.py", line 2000, in _inlineCallbacks

result = context.run(gen.send, result)

File "D:\06Anaconda\envs\site_check\lib\site-packages\scrapy\crawler.py", line 155, in crawl

self.spider = self._create_spider(*args, **kwargs)

File "D:\06Anaconda\envs\site_check\lib\site-packages\scrapy\crawler.py", line 169, in _create_spider

return self.spidercls.from_crawler(self, *args, **kwargs)

File "E:\CloudProjects\n_site_check\n_site_check\spiders\sites.py", line 20, in from_crawler

crawler.signals.connect(instance.spider_idle, signal=signals.spider_idle)

File "D:\06Anaconda\envs\site_check\lib\site-packages\scrapy\signalmanager.py", line 28, in connect

dispatcher.connect(receiver, signal, **kwargs)

File "D:\06Anaconda\envs\site_check\lib\site-packages\pydispatch\dispatcher.py", line 130, in connect

receiver = saferef.safeRef(receiver, onDelete=_removeReceiver)

File "D:\06Anaconda\envs\site_check\lib\site-packages\pydispatch\saferef.py", line 32, in safeRef

return weakref.ref(target, onDelete)

TypeError: cannot create weak reference to 'NoneType' object

Error analysis

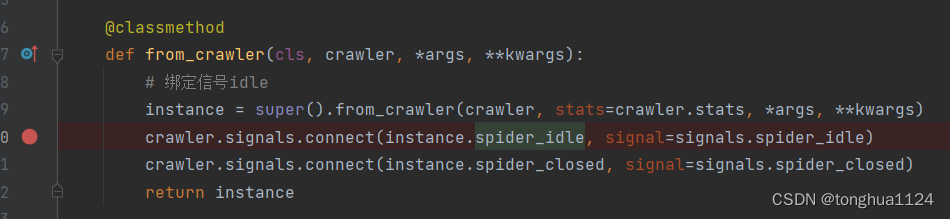

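When I studied the error log, I found that it did not make clear where exactly the problem came from, so I set breakpoints to track down the cause of the exception.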
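The last frame of the traceback does hint at what went wrong: `weakref.ref` was called with `None`. The sketch below reproduces that final error with the standard library alone. It does not use Scrapy or pydispatch, and the helper `describe_weakref_attempt` and the stand-in handler `spider_idle` are names invented for this illustration.

```python
import weakref


def describe_weakref_attempt(target):
    """Try to create a weak reference to `target` and report the outcome."""
    try:
        weakref.ref(target)
    except TypeError as exc:
        return f"TypeError: {exc}"
    return "ok"


def spider_idle():
    """Hypothetical stand-in for a real signal handler."""


# A plain function can be weak-referenced.
print(describe_weakref_attempt(spider_idle))
# None cannot, which is the TypeError seen at the bottom of the traceback.
print(describe_weakref_attempt(None))
```

So the question to answer with the debugger is not why weak references fail, but why the receiver passed to `connect` was `None` in the first place.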

Error location: unknown

Using breakpoints, I found the code block where the exception occurs:

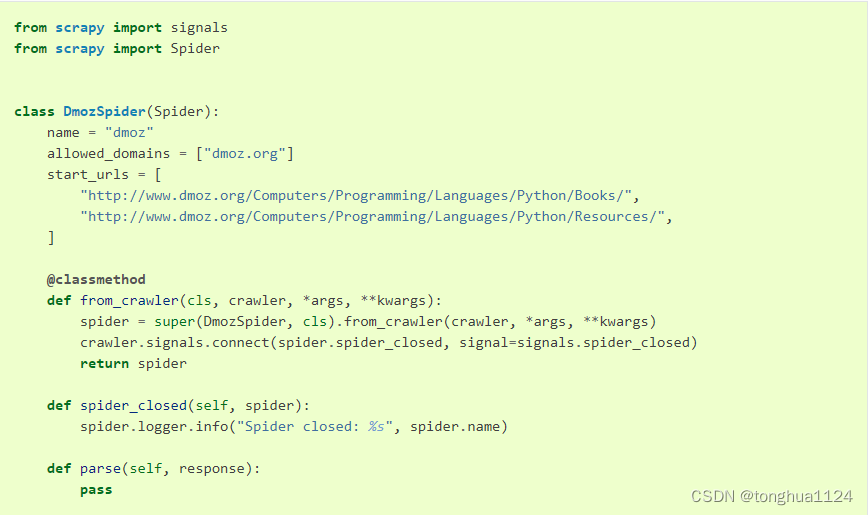

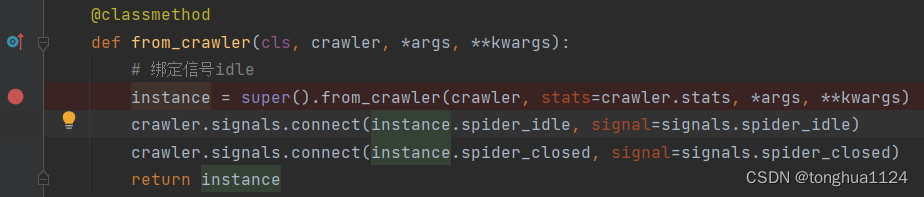

    @classmethod
    def from_crawler(cls, crawler, *args, **kwargs):
        # Bind the idle and closed signals to this spider's handlers
        instance = super().from_crawler(crawler, stats=crawler.stats, *args, **kwargs)
        crawler.signals.connect(instance.spider_idle, signal=signals.spider_idle)
        crawler.signals.connect(instance.spider_closed, signal=signals.spider_closed)
        return instance

Explanation: this snippet connects Scrapy signals to handler methods I wrote myself, so that custom processing can run whenever a signal fires.
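To make the binding pattern concrete without requiring Scrapy, here is a minimal sketch of the idea. `ToySignalManager` and `ToySpider` are hypothetical stand-ins written for this post, not Scrapy's or pydispatch's real classes. The only thing they share with the real dispatcher is that receivers are stored as weak references, which is why a receiver of `None` cannot be connected.

```python
import weakref


class ToySignalManager:
    """Hypothetical, simplified signal dispatcher (not Scrapy's real class)."""

    def __init__(self):
        # signal name -> list of weak references to bound methods
        self._receivers = {}

    def connect(self, receiver, signal):
        # WeakMethod raises TypeError unless `receiver` is a bound method.
        self._receivers.setdefault(signal, []).append(weakref.WeakMethod(receiver))

    def send(self, signal, **kwargs):
        results = []
        for ref in self._receivers.get(signal, []):
            receiver = ref()  # None once the owning object has been collected
            if receiver is not None:
                results.append(receiver(**kwargs))
        return results


class ToySpider:
    """Hypothetical spider that records which signals it has handled."""

    def __init__(self):
        self.events = []

    @classmethod
    def from_signals(cls, signals):
        # Same shape as from_crawler: build the instance, then bind handlers.
        instance = cls()
        signals.connect(instance.spider_idle, signal="spider_idle")
        signals.connect(instance.spider_closed, signal="spider_closed")
        return instance

    def spider_idle(self):
        self.events.append("idle")

    def spider_closed(self, reason):
        self.events.append(f"closed: {reason}")


signals = ToySignalManager()
spider = ToySpider.from_signals(signals)
signals.send("spider_idle")
signals.send("spider_closed", reason="finished")
print(spider.events)  # ['idle', 'closed: finished']
```

In this toy version, passing `None` to `connect` fails immediately with a `TypeError`, much like the real traceback.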

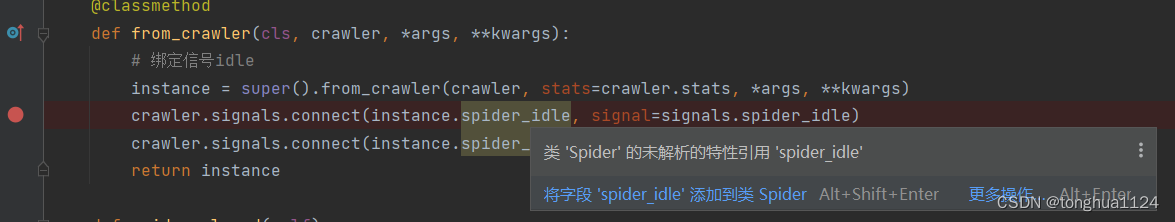

Debugging process

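1. I had never run into this situation before, and the signal-binding code itself looked perfectly fine.

2. I checked the Scrapy documentation to see whether signal binding had changed and whether a version difference could be causing the exception.

3. I created a fresh Scrapy project and bound the signals as the documentation describes; the program ran normally.

4. I copied my current binding function into the new Scrapy project; it also ran normally.

5. This confirmed that the problem was with the signal binding in my own project, so I started a second round of breakpoint debugging.

6. I stepped into the functions level by level to see where things went wrong.

7. Having narrowed it down to this function, I printed the argument values and found that receiver was None. That is abnormal: receiver should be the spider_idle method I defined, not None.

8. With a likely failure point found, I traced back up the call chain to see where exactly the problem arose.

9. I set new breakpoints and stepped down level by level.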

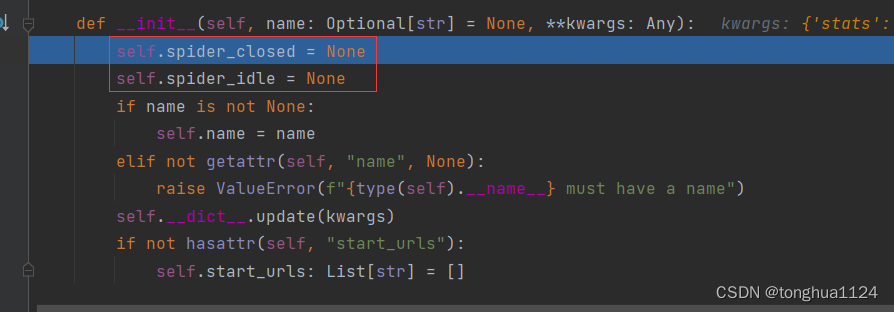

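10. I found the problem: in __init__, these two attributes had been set to None.

11. After deleting those two lines, the program ran normally.

12. After deleting them, PyCharm flagged an issue to be fixed. When I clicked the fix, the same two lines I had just deleted were added back into __init__.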
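The root cause found in steps 10 to 12 can be shown in isolation. The sketch below assumes the two offending lines had the form `self.spider_idle = None` and `self.spider_closed = None` (the screenshot is not reproduced here, so that exact form is an assumption). The classes and the `require_callable` guard are hypothetical and do not depend on Scrapy.

```python
class BrokenSpider:
    def __init__(self):
        # Assumed form of the two offending lines: instance attributes that
        # share their names with the handler methods defined below.
        self.spider_idle = None
        self.spider_closed = None

    def spider_idle(self):
        return "idle"

    def spider_closed(self):
        return "closed"


class FixedSpider:
    # No same-named attributes in __init__, so the methods stay reachable.
    def spider_idle(self):
        return "idle"

    def spider_closed(self):
        return "closed"


def require_callable(obj, name):
    """Hypothetical guard: fail early with a readable message."""
    handler = getattr(obj, name)
    if not callable(handler):
        raise TypeError(f"{type(obj).__name__}.{name} is {handler!r}, expected a method")
    return handler


# The instance attribute shadows the method, so the lookup returns None.
print(BrokenSpider().spider_idle)
# Without the shadowing attribute, the bound method is found as usual.
print(callable(FixedSpider().spider_idle))
```

Attribute lookup checks the instance's own attributes before plain methods defined on the class, so the `None` assigned in `__init__` hides the method. A guard such as `require_callable(instance, "spider_idle")` could be called in `from_crawler` before connecting, so that a reintroduced attribute (for example by an IDE quick fix) is reported at the binding site rather than deep inside the dispatcher.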
