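1. The importance of persistence

Persistence is essential in systems that must guarantee data integrity and reliability, such as message queues and databases. It matters for the following reasons: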

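- Data reliability: persistence keeps data intact across hardware failures, system crashes, and power loss. In RocketMQ, once a message has been persisted to disk, unconsumed messages can be recovered from disk even if the Broker restarts abnormally.

- Transactional consistency: for transactions that span several operations, persistence is a key step of the commit. A transaction counts as complete only after its data has been written to durable storage. This is critical for distributed transactions and for transactional messages in a message queue.

- Disaster recovery: after a major failure or disaster, persisted data can be restored from backups so that the business can resume quickly.

- Audit requirements: many business scenarios must retain historical data for compliance or for analysis, and both depend on durable storage.

- High availability: persistence is also the basis of high availability. Replicating persisted data across nodes provides redundancy and improves fault tolerance and overall availability.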

2. Key storage files in RocketMQ

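- CommitLog:

  - CommitLog is RocketMQ's core message store. Every message sent by a producer is appended sequentially to the CommitLog. Writing sequentially to large files greatly improves disk write throughput.

  - Each CommitLog file has a fixed size, typically 1 GB. When a file is full, writing switches to the next file. The file name encodes the file's starting physical offset, which makes it easy to locate a message.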

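- ConsumeQueue:

  - ConsumeQueue is the logical queue that consumers read from. It is an index built per Topic and per Message Queue, and each consume queue has its own index file.

  - A ConsumeQueue does not store message bodies. Each entry stores the message's offset in the CommitLog, its size, and other metadata. Consumers read these entries to locate the actual message in the CommitLog quickly.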

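- Storage flow:

  - When the Broker receives a message, it first appends the message body to the CommitLog and records the message's physical offset.

  - An index entry that records the message's position in the CommitLog is then added to the corresponding ConsumeQueue. This is done by a background dispatch thread rather than on the send path.

  - Depending on configuration, RocketMQ flushes to disk asynchronously or synchronously. With synchronous flush, a send is acknowledged only after the message is on disk. Asynchronous flush acknowledges earlier and accepts a small window of possible loss in exchange for lower latency.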

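- MMAP and zero copy:

  - RocketMQ uses memory-mapped files (mmap) for message storage. Mapping the file reduces the number of copies between kernel space and user space, the so-called "zero-copy" technique, which further improves storage and transfer efficiency.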
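To make the memory-mapping idea concrete, here is a minimal sketch using only the JDK. It is not RocketMQ code: the class `MmapSketch`, the method `roundTrip`, and the 4096-byte mapping size are made up for illustration. It maps a temporary file, writes through the mapping without an explicit write call, forces the dirty pages to disk, and reads the bytes back.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.MappedByteBuffer;
import java.nio.channels.FileChannel;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;

// Hypothetical illustration, not part of RocketMQ.
final class MmapSketch {
    // Writes text through a memory mapping and reads it back from the file.
    // The text must fit in the 4096-byte mapping used here.
    static String roundTrip(String text) {
        byte[] payload = text.getBytes(StandardCharsets.UTF_8);
        try {
            Path file = Files.createTempFile("mmap-sketch", ".log");
            file.toFile().deleteOnExit();
            try (FileChannel channel = FileChannel.open(file,
                    StandardOpenOption.READ, StandardOpenOption.WRITE)) {
                // Mapping in READ_WRITE mode grows the file to the mapped size.
                MappedByteBuffer mapped = channel.map(FileChannel.MapMode.READ_WRITE, 0, 4096);
                mapped.put(payload); // goes to the page cache, no write() call
                mapped.force();      // asks the OS to write dirty pages to the device
            }
            byte[] onDisk = Files.readAllBytes(file);
            return new String(onDisk, 0, payload.length, StandardCharsets.UTF_8);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

Calling `MmapSketch.roundTrip("hello")` returns the same text, read back from the file after `force()`.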

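In summary, RocketMQ's storage design combines high performance, sequential-write optimization, ordered consumption, and flexible consumption modes. It underpins RocketMQ's ability to process messages at scale.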
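The file naming and index layout described in this section can be sketched as follows. The class and method names are hypothetical. The 20-digit zero-padded file name and the 20-byte index entry (8-byte CommitLog offset, 4-byte size, 8-byte tag hash code) reflect my understanding of RocketMQ's defaults and should be treated as assumptions here.

```java
import java.nio.ByteBuffer;

// Hypothetical illustration of the on-disk layout, not RocketMQ source.
final class StoreLayoutSketch {
    static final long COMMIT_LOG_FILE_SIZE = 1024L * 1024 * 1024; // 1 GB, as in the text
    static final int CQ_UNIT_SIZE = 20; // assumed: 8-byte offset + 4-byte size + 8-byte tag hash

    // Name of the CommitLog file that contains the given physical offset:
    // the file's starting offset, zero-padded to 20 digits.
    static String fileNameFor(long physicalOffset) {
        long fileStart = physicalOffset - (physicalOffset % COMMIT_LOG_FILE_SIZE);
        return String.format("%020d", fileStart);
    }

    // Encodes one ConsumeQueue index entry.
    static ByteBuffer encodeEntry(long commitLogOffset, int size, long tagsCode) {
        ByteBuffer buf = ByteBuffer.allocate(CQ_UNIT_SIZE);
        buf.putLong(commitLogOffset).putInt(size).putLong(tagsCode);
        buf.flip();
        return buf;
    }

    // Byte position of the n-th entry inside a ConsumeQueue (entries are fixed size).
    static long entryPosition(long queueIndex) {
        return queueIndex * CQ_UNIT_SIZE;
    }
}
```

Because entries have a fixed size, a consumer that knows its queue offset can jump straight to the entry, read the CommitLog offset and size, and then fetch the message body from the file whose name covers that offset.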

----------from AI

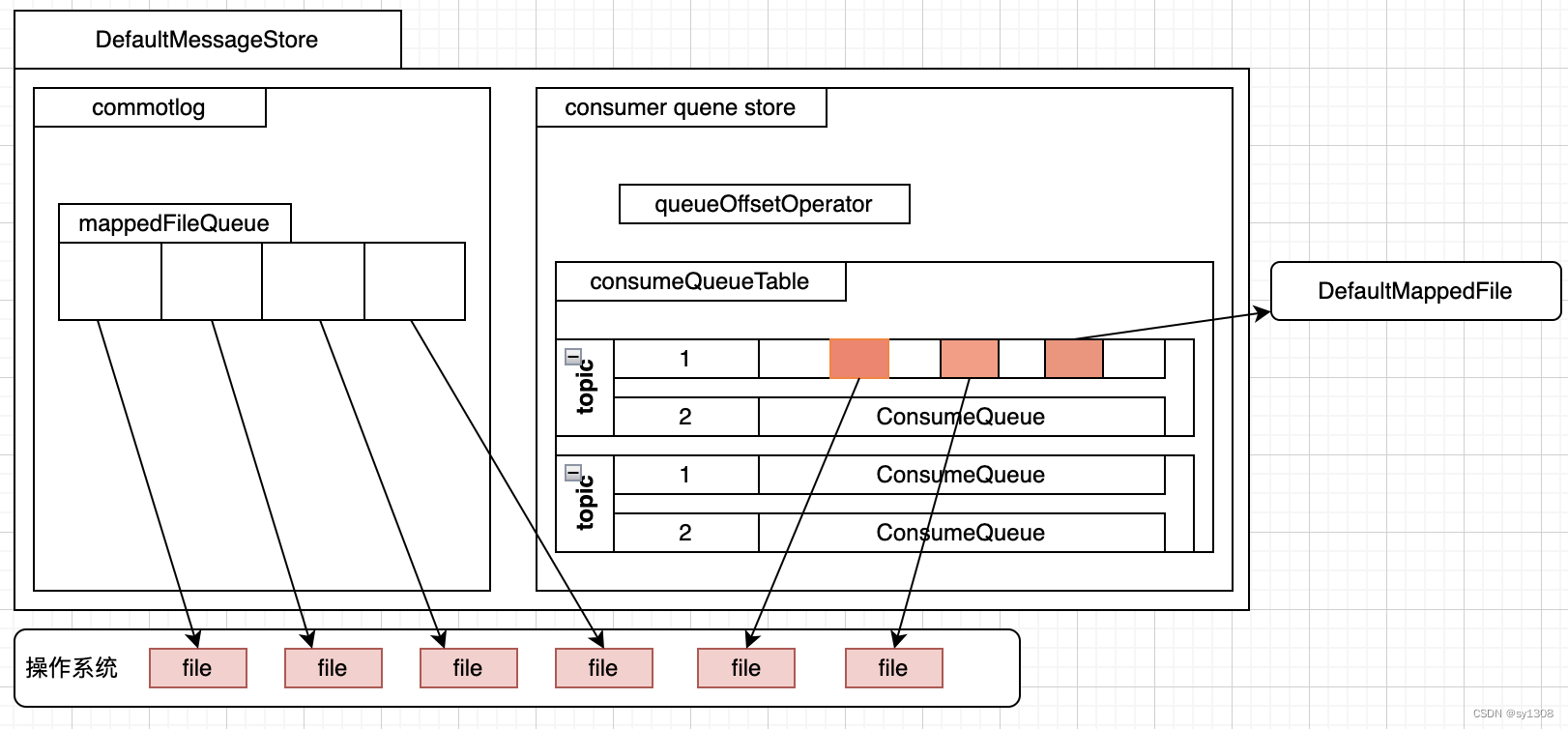

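3. Storage structure diagram

This diagram was covered in an earlier article. This time the focus is on DefaultMappedFile and on how a message is written and stored.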

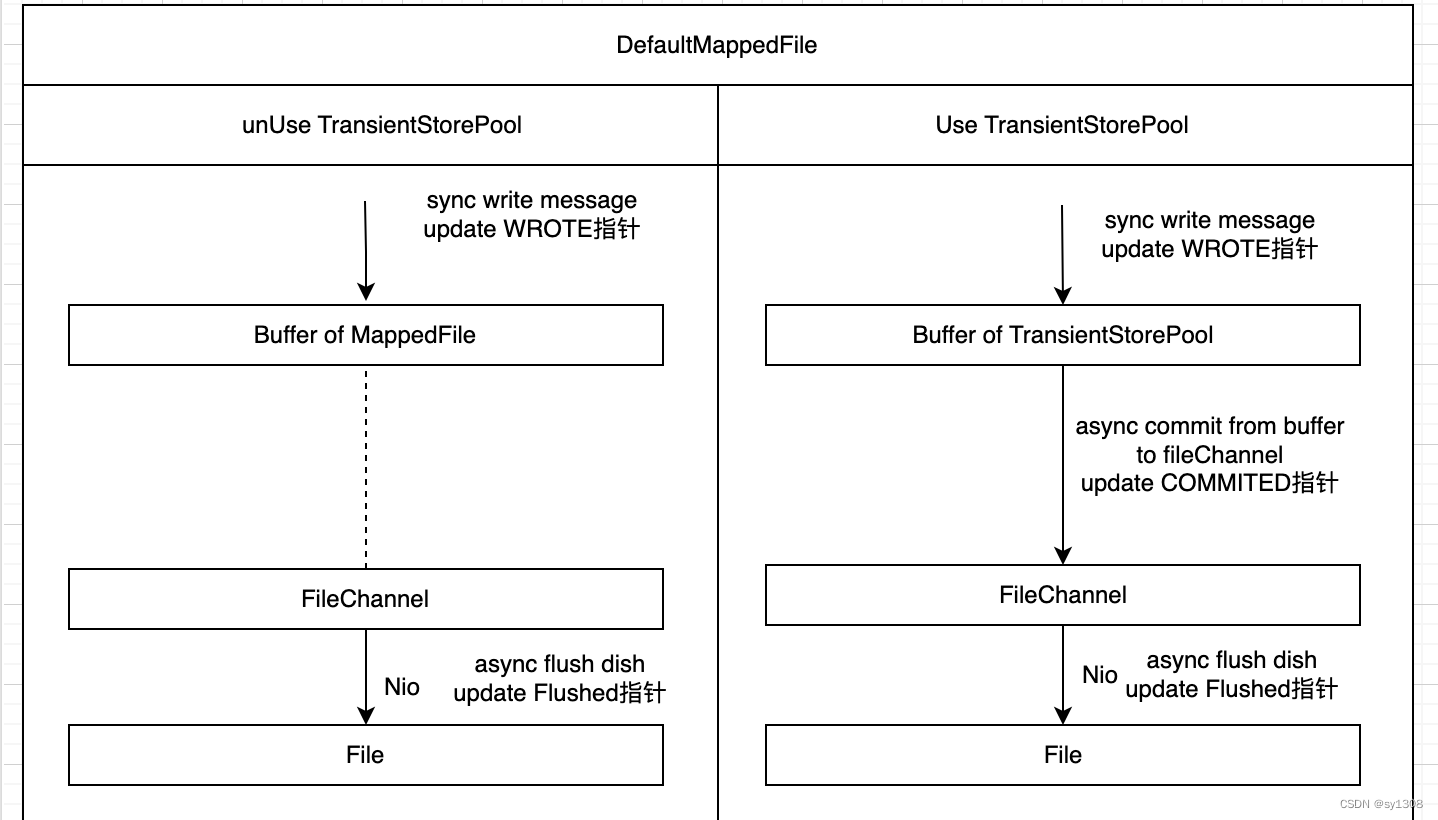

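- DefaultMappedFile structure

  - DefaultMappedFile wraps a memory-mapped file built on NIO (FileChannel and MappedByteBuffer) and adds its own handling. This includes three atomically updated position pointers, WROTE_POSITION_UPDATER, COMMITTED_POSITION_UPDATER, and FLUSHED_POSITION_UPDATER, plus methods for writing to a buffer, committing between buffers, and flushing to disk. When TransientStorePool is enabled, writes go to a separate buffer first to speed them up, and COMMITTED_POSITION_UPDATER tracks how much of that buffer has been committed.

As the diagram shows, enabling TransientStorePool adds a commit step to the flow. The following sections walk through message writing and flushing.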
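A small sketch may help fix the roles of the three pointers. It is a simplified model with hypothetical names (`PositionsSketch` and its methods), not the real DefaultMappedFile: it only tracks positions and moves no data. Each stage can only catch up to the stage before it, so flushed <= committed <= wrote always holds.

```java
import java.util.concurrent.atomic.AtomicIntegerFieldUpdater;

// Hypothetical model of the three position pointers; it moves no real data.
final class PositionsSketch {
    private static final AtomicIntegerFieldUpdater<PositionsSketch> WROTE =
        AtomicIntegerFieldUpdater.newUpdater(PositionsSketch.class, "wrotePosition");
    private static final AtomicIntegerFieldUpdater<PositionsSketch> COMMITTED =
        AtomicIntegerFieldUpdater.newUpdater(PositionsSketch.class, "committedPosition");
    private static final AtomicIntegerFieldUpdater<PositionsSketch> FLUSHED =
        AtomicIntegerFieldUpdater.newUpdater(PositionsSketch.class, "flushedPosition");

    // Field updaters require volatile int fields.
    private volatile int wrotePosition;
    private volatile int committedPosition;
    private volatile int flushedPosition;

    // A message of the given size was appended to the write buffer.
    void append(int bytes) { WROTE.addAndGet(this, bytes); }

    // Everything written so far was copied to the file channel.
    void commit() { COMMITTED.set(this, WROTE.get(this)); }

    // Everything committed so far was forced to disk.
    void flush() { FLUSHED.set(this, COMMITTED.get(this)); }

    int wrote() { return WROTE.get(this); }
    int committed() { return COMMITTED.get(this); }
    int flushed() { return FLUSHED.get(this); }
}
```

Field updaters keep the counters atomic without allocating one AtomicInteger object per pointer, which matters when many mapped files are alive at once.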

4. Message writing and flushing

1. Message write path

The broker handles incoming messages in the org/apache/rocketmq/broker/processor/SendMessageProcessor class. The relevant code:

public RemotingCommand sendMessage(final ChannelHandlerContext ctx,

final RemotingCommand request,

final SendMessageContext sendMessageContext,

final SendMessageRequestHeader requestHeader,

final TopicQueueMappingContext mappingContext,

final SendMessageCallback sendMessageCallback) throws RemotingCommandException {

// unrelated code omitted

if (brokerController.getBrokerConfig().isAsyncSendEnable()) {

CompletableFuture<PutMessageResult> asyncPutMessageFuture;

if (sendTransactionPrepareMessage) { // transactional (prepare) message, line 325

asyncPutMessageFuture = this.brokerController.getTransactionalMessageService().asyncPrepareMessage(msgInner);

} else {

// non-transactional message, line 327

asyncPutMessageFuture = this.brokerController.getMessageStore().asyncPutMessage(msgInner);

}

}

// rest of the method omitted

}

The call eventually reaches org.apache.rocketmq.store.logfile.DefaultMappedFile#appendMessagesInner, which appends the message to the buffer:

protected ByteBuffer appendMessageBuffer() {

this.mappedByteBufferAccessCountSinceLastSwap++;

// With TransientStorePool enabled, writeBuffer is non-null for newly created files and null for files recovered after a restart

return writeBuffer != null ? writeBuffer : this.mappedByteBuffer;

}

public AppendMessageResult appendMessagesInner(final MessageExt messageExt, final AppendMessageCallback cb,

PutMessageContext putMessageContext) {

assert messageExt != null;

assert cb != null;

// current write position in this file's buffer

int currentPos = WROTE_POSITION_UPDATER.get(this);

if (currentPos < this.fileSize) {

// create a new buffer view that shares the underlying content

ByteBuffer byteBuffer = appendMessageBuffer().slice();

// move the view's position to the current write position

byteBuffer.position(currentPos);

AppendMessageResult result;

if (messageExt instanceof MessageExtBatch && !((MessageExtBatch) messageExt).isInnerBatch()) {

// batch message: the append itself is done by the callback

result = cb.doAppend(this.getFileFromOffset(), byteBuffer, this.fileSize - currentPos,

(MessageExtBatch) messageExt, putMessageContext);

} else if (messageExt instanceof MessageExtBrokerInner) {

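// single message: the callback checks whether the remaining space can hold the message

// on success it returns the number of bytes written

// otherwise it writes an END_FILE marker and returns the remaining buffer size, which signals a full file; the caller then creates a new file and writes there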

result = cb.doAppend(this.getFileFromOffset(), byteBuffer, this.fileSize - currentPos,

(MessageExtBrokerInner) messageExt, putMessageContext);

} else {

return new AppendMessageResult(AppendMessageStatus.UNKNOWN_ERROR);

}

// advance the write pointer by the number of bytes written

WROTE_POSITION_UPDATER.addAndGet(this, result.getWroteBytes());

this.storeTimestamp = result.getStoreTimestamp();

return result;

}

log.error("MappedFile.appendMessage return null, wrotePosition: {} fileSize: {}", currentPos, this.fileSize);

return new AppendMessageResult(AppendMessageStatus.UNKNOWN_ERROR);

}

Up to this point, writing a message into the buffer is entirely synchronous. So where do commit and flush happen? Both run in separate, dedicated threads.
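Stripped of callbacks and message encoding, the append path above comes down to "slice, position, put, advance the pointer". The sketch below shows only that. `AppendSketch` is a hypothetical class that assumes a single writer. It returns -1 when the message does not fit, whereas the real code writes an END_FILE marker and returns a status object.

```java
import java.nio.ByteBuffer;

// Hypothetical, single-writer simplification of the append path.
final class AppendSketch {
    private final ByteBuffer buffer;
    private int wrotePosition;

    AppendSketch(int fileSize) {
        this.buffer = ByteBuffer.allocate(fileSize);
    }

    // Returns the number of bytes written, or -1 if the message does not fit,
    // in which case the caller would roll over to a new file.
    int append(byte[] message) {
        int remaining = buffer.capacity() - wrotePosition;
        if (message.length > remaining) {
            return -1;
        }
        // slice() shares the content but has its own position, so the
        // shared buffer's position is never disturbed.
        ByteBuffer view = buffer.slice();
        view.position(wrotePosition);
        view.put(message);
        wrotePosition += message.length;
        return message.length;
    }

    int wrotePosition() { return wrotePosition; }

    byte byteAt(int index) { return buffer.get(index); }
}
```

With an 8-byte "file", a 5-byte message fits, a second 5-byte message is rejected, and a 3-byte message fills the file exactly.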

2. Commit

When TransientStorePool is enabled, data has to be committed from the buffer to the fileChannel. The org.apache.rocketmq.store.CommitLog.CommitRealTimeService thread does this. When TransientStorePool is disabled, the thread does not run.
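Before reading the service loop, it may help to see the commit step on its own. The sketch below is a hypothetical, JDK-only reduction (`CommitSketch`, `commit`, and `demo` are made-up names): it copies the bytes between the committed and write positions from a staging buffer into a FileChannel and returns the new committed position.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.ByteBuffer;
import java.nio.channels.FileChannel;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;

// Hypothetical reduction of the commit step, not RocketMQ source.
final class CommitSketch {
    // Writes bytes [committed, wrote) of writeBuffer to the channel at the
    // same file offset and returns the new committed position.
    static int commit(ByteBuffer writeBuffer, FileChannel channel, int committed, int wrote)
            throws IOException {
        if (wrote <= committed) {
            return committed; // nothing new to commit
        }
        ByteBuffer view = writeBuffer.slice();
        view.position(committed);
        view.limit(wrote);
        channel.position(committed);
        while (view.hasRemaining()) {
            channel.write(view);
        }
        return wrote;
    }

    // Stages text in a direct buffer, commits it, and reads the file back.
    // The text must fit in the 1024-byte staging buffer used here.
    static String demo(String text) {
        byte[] bytes = text.getBytes(StandardCharsets.UTF_8);
        ByteBuffer writeBuffer = ByteBuffer.allocateDirect(1024);
        writeBuffer.slice().put(bytes); // write through a view, as the append path does
        try {
            Path file = Files.createTempFile("commit-sketch", ".log");
            file.toFile().deleteOnExit();
            try (FileChannel channel = FileChannel.open(file, StandardOpenOption.WRITE)) {
                commit(writeBuffer, channel, 0, bytes.length);
                channel.force(false); // the flush step: push the channel's data to disk
            }
            return new String(Files.readAllBytes(file), StandardCharsets.UTF_8);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

Note that commit only moves data into the file channel (the page cache). Durability still requires the separate force call, which is why the real commit thread wakes the flush thread afterwards.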

@Override

public void run() {

CommitLog.log.info(this.getServiceName() + " service started");

while (!this.isStopped()) {

// interval between runs, 200 ms by default

int interval = CommitLog.this.defaultMessageStore.getMessageStoreConfig().getCommitIntervalCommitLog();

// minimum number of dirty pages required before a commit

int commitDataLeastPages = CommitLog.this.defaultMessageStore.getMessageStoreConfig().getCommitCommitLogLeastPages();

// maximum time between two commits; once it has elapsed, commit regardless of the page count

int commitDataThoroughInterval =

CommitLog.this.defaultMessageStore.getMessageStoreConfig().getCommitCommitLogThoroughInterval();

long begin = System.currentTimeMillis();

if (begin >= (this.lastCommitTimestamp + commitDataThoroughInterval)) {

this.lastCommitTimestamp = begin;

commitDataLeastPages = 0;

}

try {

// commit from the TransientStorePool buffer to the FileChannel

boolean result = CommitLog.this.mappedFileQueue.commit(commitDataLeastPages);

long end = System.currentTimeMillis();

if (!result) {

this.lastCommitTimestamp = end; // result = false means some data committed.

// wake the flush thread so that the committed data is flushed to the file

CommitLog.this.flushManager.wakeUpFlush();

}

CommitLog.this.getMessageStore().getPerfCounter().flowOnce("COMMIT_DATA_TIME_MS", (int) (end - begin));

if (end - begin > 500) {

log.info("Commit data to file costs {} ms", end - begin);

}

this.waitForRunning(interval);

} catch (Throwable e) {

CommitLog.log.error(this.getServiceName() + " service has exception. ", e);

}

}

boolean result = false;

for (int i = 0; i < RETRY_TIMES_OVER && !result; i++) {

result = CommitLog.this.mappedFileQueue.commit(0);

CommitLog.log.info(this.getServiceName() + " service shutdown, retry " + (i + 1) + " times " + (result ? "OK" : "Not OK"));

}

CommitLog.log.info(this.getServiceName() + " service end");

}

The commit method of DefaultMappedFile, which mappedFileQueue.commit ends up calling:

@Override

public int commit(final int commitLeastPages) {

if (writeBuffer == null) { // nothing to commit: writes already go to the buffer mapped from the FileChannel

//no need to commit data to file channel, so just regard wrotePosition as committedPosition.

return WROTE_POSITION_UPDATER.get(this);

}

//no need to commit data to file channel, so just set committedPosition to wrotePosition.

if (transientStorePool != null && !transientStorePool.isRealCommit()) {

COMMITTED_POSITION_UPDATER.set(this, WROTE_POSITION_UPDATER.get(this));

} else if (this.isAbleToCommit(commitLeastPages)) { // check whether a commit is needed

if (this.hold()) {

commit0(); // the actual commit happens here

this.release();

} else {

log.warn("in commit, hold failed, commit offset = " + COMMITTED_POSITION_UPDATER.get(this));

}

}

// All dirty data has been committed to FileChannel.

if (writeBuffer != null && this.transientStorePool != null && this.fileSize == COMMITTED_POSITION_UPDATER.get(this)) {

this.transientStorePool.returnBuffer(writeBuffer);

this.writeBuffer = null;

}

return COMMITTED_POSITION_UPDATER.get(this);

}

// write the buffer's data to the fileChannel and advance the committed pointer

protected void commit0() {

int writePos = WROTE_POSITION_UPDATER.get(this);

int lastCommittedPosition = COMMITTED_POSITION_UPDATER.get(this);

if (writePos - lastCommittedPosition > 0) {

try {

ByteBuffer byteBuffer = writeBuffer.slice();

byteBuffer.position(lastCommittedPosition);

byteBuffer.limit(writePos);

this.fileChannel.position(lastCommittedPosition);

this.fileChannel.write(byteBuffer);

COMMITTED_POSITION_UPDATER.set(this, writePos);

} catch (Throwable e) {

log.error("Error occurred when commit data to FileChannel.", e);

}

}

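}

3. Flush

Flushing forces the data in the fileChannel (with TransientStorePool) or in the MappedByteBuffer (without TransientStorePool) to the file on disk. There are two modes, synchronous and asynchronous. Synchronous flush is handled by the org.apache.rocketmq.store.CommitLog.GroupCommitService thread. After writing to the buffer, the sending thread submits a request to that thread, which wakes up and performs the flush while the sending thread waits for the result. So synchronous flush also involves more than one thread. The benefit is a single flush path that always runs in a dedicated thread. The only difference is that synchronous mode waits for the result of that asynchronous work.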
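The hand-off between the sending thread and the flush thread can be sketched as a pair of lists that are swapped under a lock. The class below is a hypothetical, single-pass model (`GroupCommitSketch`, `submit`, and `flushOnce` are made-up names). The caller passes in the offset that is durable after the pass, standing in for a real disk force.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.CompletableFuture;

// Hypothetical model of group commit with swapped request lists.
final class GroupCommitSketch {
    static final class Request {
        final long nextOffset; // offset that must be durable for this request
        final CompletableFuture<Boolean> future = new CompletableFuture<>();

        Request(long nextOffset) { this.nextOffset = nextOffset; }
    }

    private final Object lock = new Object();
    private List<Request> requestsWrite = new ArrayList<>();
    private List<Request> requestsRead = new ArrayList<>();
    private long flushedWhere;

    // Called by sending threads: enqueue and return a future to wait on.
    CompletableFuture<Boolean> submit(long nextOffset) {
        Request request = new Request(nextOffset);
        synchronized (lock) {
            requestsWrite.add(request);
        }
        return request.future;
    }

    // One pass of the flush thread. durableUpTo stands in for the position
    // reached by the real flush.
    void flushOnce(long durableUpTo) {
        synchronized (lock) {
            // Swap the lists instead of copying requests, so producers are
            // blocked only for the duration of three assignments.
            List<Request> tmp = requestsWrite;
            requestsWrite = requestsRead;
            requestsRead = tmp;
        }
        flushedWhere = Math.max(flushedWhere, durableUpTo);
        for (Request request : requestsRead) {
            request.future.complete(flushedWhere >= request.nextOffset);
        }
        requestsRead.clear();
    }
}
```

One flush pass serves every request queued since the previous pass, which is what makes this a group commit: many senders share the cost of a single disk force.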

public void putRequest(final GroupCommitRequest request) {

lock.lock();

try {

// enqueue the request

this.requestsWrite.add(request);

} finally {

lock.unlock();

}

// wake the service thread, which then runs onWaitEnd

this.wakeup();

}

@Override

protected void onWaitEnd() {

this.swapRequests();

}

private void swapRequests() {

lock.lock();

try {

// swap the read and write lists, which avoids copying requests

LinkedList<GroupCommitRequest> tmp = this.requestsWrite;

this.requestsWrite = this.requestsRead;

this.requestsRead = tmp;

} finally {

lock.unlock();

}

}

@Override

public void run() {

CommitLog.log.info(this.getServiceName() + " service started");

while (!this.isStopped()) {

try {

this.waitForRunning(10);

this.doCommit(); // perform the flush

} catch (Exception e) {

CommitLog.log.warn(this.getServiceName() + " service has exception. ", e);

}

}

// Under normal circumstances shutdown, wait for the arrival of the

// request, and then flush

try {

Thread.sleep(10);

} catch (InterruptedException e) {

CommitLog.log.warn("GroupCommitService Exception, ", e);

}

this.swapRequests();

this.doCommit();

CommitLog.log.info(this.getServiceName() + " service end");

}

private void doCommit() {

if (!this.requestsRead.isEmpty()) {

for (GroupCommitRequest req : this.requestsRead) {

// compare the flushed position with the request's offset to see whether the request is already on disk

boolean flushOK = CommitLog.this.mappedFileQueue.getFlushedWhere() >= req.getNextOffset();

for (int i = 0; i < 1000 && !flushOK; i++) {

// perform the flush

CommitLog.this.mappedFileQueue.flush(0);

flushOK = CommitLog.this.mappedFileQueue.getFlushedWhere() >= req.getNextOffset();

if (flushOK) {

break;

} else {

// When transientStorePoolEnable is true, the messages in writeBuffer may not be committed

// to pageCache very quickly, and flushOk here may almost be false, so we can sleep 1ms to

// wait for the messages to be committed to pageCache.

try {

Thread.sleep(1);

} catch (InterruptedException ignored) {

}

}

}

req.wakeupCustomer(flushOK ? PutMessageStatus.PUT_OK : PutMessageStatus.FLUSH_DISK_TIMEOUT);

}

long storeTimestamp = CommitLog.this.mappedFileQueue.getStoreTimestamp();

if (storeTimestamp > 0) {

CommitLog.this.defaultMessageStore.getStoreCheckpoint().setPhysicMsgTimestamp(storeTimestamp);

}

this.requestsRead = new LinkedList<>();

} else {

// Because of individual messages is set to not sync flush, it

// will come to this process

CommitLog.this.mappedFileQueue.flush(0);

}

}

Asynchronous flush is handled by org.apache.rocketmq.store.CommitLog.FlushRealTimeService:
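Before the service loop itself, here is the decision it makes, reduced to two pure functions with worked numbers. The names are hypothetical, and the 4 KB page size is an assumption about OS_PAGE_SIZE.

```java
// Hypothetical reduction of the flush decision; the 4 KB page size is assumed.
final class FlushThresholdSketch {
    static final int OS_PAGE_SIZE = 4 * 1024;

    // True when a flush should run for the given positions.
    static boolean isAbleToFlush(int flushed, int write, int leastPages, boolean fileFull) {
        if (fileFull) {
            return true; // a full file is always flushed
        }
        if (leastPages > 0) {
            // enough whole dirty pages have accumulated since the last flush
            return (write / OS_PAGE_SIZE) - (flushed / OS_PAGE_SIZE) >= leastPages;
        }
        return write > flushed; // leastPages == 0: flush any dirty data
    }

    // The threshold drops to 0 once the thorough interval has elapsed, so
    // a slow trickle of messages still reaches disk.
    static int effectiveLeastPages(long now, long lastFlush, long thoroughIntervalMs,
                                   int configuredLeastPages) {
        return now >= lastFlush + thoroughIntervalMs ? 0 : configuredLeastPages;
    }
}
```

With a threshold of 4 pages, 12 KB of dirty data (3 pages) does not trigger a flush, while 16 KB does. Once the thorough interval has passed, even a single dirty byte is flushed.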

@Override

public void run() {

CommitLog.log.info(this.getServiceName() + " service started");

while (!this.isStopped()) {

boolean flushCommitLogTimed = CommitLog.this.defaultMessageStore.getMessageStoreConfig().isFlushCommitLogTimed();

int interval = CommitLog.this.defaultMessageStore.getMessageStoreConfig().getFlushIntervalCommitLog();

int flushPhysicQueueLeastPages = CommitLog.this.defaultMessageStore.getMessageStoreConfig().getFlushCommitLogLeastPages();

int flushPhysicQueueThoroughInterval =

CommitLog.this.defaultMessageStore.getMessageStoreConfig().getFlushCommitLogThoroughInterval();

boolean printFlushProgress = false;

// Print flush progress

long currentTimeMillis = System.currentTimeMillis();

if (currentTimeMillis >= (this.lastFlushTimestamp + flushPhysicQueueThoroughInterval)) {

this.lastFlushTimestamp = currentTimeMillis;

flushPhysicQueueLeastPages = 0;

printFlushProgress = (printTimes++ % 10) == 0;

}

try {

// two ways to wait: Thread.sleep, or waitForRunning, RocketMQ's own wait that can be woken up early

if (flushCommitLogTimed) {

Thread.sleep(interval);

} else {

this.waitForRunning(interval);

}

if (printFlushProgress) {

this.printFlushProgress();

}

long begin = System.currentTimeMillis();

// perform the flush

CommitLog.this.mappedFileQueue.flush(flushPhysicQueueLeastPages);

long storeTimestamp = CommitLog.this.mappedFileQueue.getStoreTimestamp();

if (storeTimestamp > 0) {

CommitLog.this.defaultMessageStore.getStoreCheckpoint().setPhysicMsgTimestamp(storeTimestamp);

}

long past = System.currentTimeMillis() - begin;

CommitLog.this.getMessageStore().getPerfCounter().flowOnce("FLUSH_DATA_TIME_MS", (int) past);

if (past > 500) {

log.info("Flush data to disk costs {} ms", past);

}

} catch (Throwable e) {

CommitLog.log.warn(this.getServiceName() + " service has exception. ", e);

this.printFlushProgress();

}

}

// Normal shutdown, to ensure that all the flush before exit

boolean result = false;

for (int i = 0; i < RETRY_TIMES_OVER && !result; i++) {

result = CommitLog.this.mappedFileQueue.flush(0);

CommitLog.log.info(this.getServiceName() + " service shutdown, retry " + (i + 1) + " times " + (result ? "OK" : "Not OK"));

}

this.printFlushProgress();

CommitLog.log.info(this.getServiceName() + " service end");

}

The flush logic in mappedFile:

@Override

public int flush(final int flushLeastPages) {

// check whether a flush is needed: async flush requires 4 dirty pages by default, sync flush passes 0

if (this.isAbleToFlush(flushLeastPages)) {

if (this.hold()) {

int value = getReadPosition();

try {

this.mappedByteBufferAccessCountSinceLastSwap++;

//We only append data to fileChannel or mappedByteBuffer, never both.

if (writeBuffer != null || this.fileChannel.position() != 0) {

// with TransientStorePool, the data is in the fileChannel

this.fileChannel.force(false);

} else {

// without TransientStorePool, or for files loaded at startup

this.mappedByteBuffer.force();

}

this.lastFlushTime = System.currentTimeMillis();

} catch (Throwable e) {

log.error("Error occurred when force data to disk.", e);

}

// update the flushed pointer

FLUSHED_POSITION_UPDATER.set(this, value);

this.release();

} else {

// hold() failed: the file is no longer available, for example because it is being shut down

log.warn("in flush, hold failed, flush offset = " + FLUSHED_POSITION_UPDATER.get(this));

FLUSHED_POSITION_UPDATER.set(this, getReadPosition());

}

}

return this.getFlushedPosition();

}

private boolean isAbleToFlush(final int flushLeastPages) {

// flushed position

int flush = FLUSHED_POSITION_UPDATER.get(this);

// write position, or committed position when writeBuffer is in use

int write = getReadPosition();

// flush if the file is full

if (this.isFull()) {

return true;

}

// async flush requires 4 pages by default; sync flush uses 0

if (flushLeastPages > 0) {

return ((write / OS_PAGE_SIZE) - (flush / OS_PAGE_SIZE)) >= flushLeastPages;

}

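// with 0, compare the write and flushed positions; async flush also takes this branch once the interval has elapsed and flushLeastPages has been reset to 0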

return write > flush;

}

4. How synchronous flush waits

At the end of org.apache.rocketmq.store.CommitLog#asyncPutMessage, handleDiskFlushAndHA handles high availability and flushing. The flush part lives in org.apache.rocketmq.store.CommitLog.DefaultFlushManager#handleDiskFlush:
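Before the actual method, the waiting step can be shown in isolation. The sketch below is a hypothetical helper (`SyncFlushWaitSketch.awaitFlush` and its `Status` enum are made-up stand-ins for PutMessageStatus): it blocks on the flush future for a bounded time and maps every failure to a timeout status.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.TimeoutException;

// Hypothetical helper; Status is a stand-in for PutMessageStatus.
final class SyncFlushWaitSketch {
    enum Status { PUT_OK, FLUSH_DISK_TIMEOUT }

    // Waits up to timeoutMs for the flush result and never blocks forever.
    static Status awaitFlush(CompletableFuture<Status> flushFuture, long timeoutMs) {
        try {
            return flushFuture.get(timeoutMs, TimeUnit.MILLISECONDS);
        } catch (TimeoutException | ExecutionException e) {
            return Status.FLUSH_DISK_TIMEOUT;
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt(); // preserve the interrupt for the caller
            return Status.FLUSH_DISK_TIMEOUT;
        }
    }
}
```

A future completed by the flush thread yields PUT_OK immediately, while a future that is never completed yields FLUSH_DISK_TIMEOUT after the timeout, so a stalled disk cannot block the sending thread indefinitely.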

public void handleDiskFlush(AppendMessageResult result, PutMessageResult putMessageResult,

MessageExt messageExt) {

// Synchronous flush

if (FlushDiskType.SYNC_FLUSH == CommitLog.this.defaultMessageStore.getMessageStoreConfig().getFlushDiskType()) {

final GroupCommitService service = (GroupCommitService) this.flushCommitLogService;

if (messageExt.isWaitStoreMsgOK()) { // does this message need to wait for the flush to complete?

GroupCommitRequest request = new GroupCommitRequest(result.getWroteOffset() + result.getWroteBytes(), CommitLog.this.defaultMessageStore.getMessageStoreConfig().getSyncFlushTimeout());

// submit a flush request

service.putRequest(request);

CompletableFuture<PutMessageStatus> flushOkFuture = request.future();

PutMessageStatus flushStatus = null;

try {

// block until the flush result arrives or the timeout expires

flushStatus = flushOkFuture.get(CommitLog.this.defaultMessageStore.getMessageStoreConfig().getSyncFlushTimeout(), TimeUnit.MILLISECONDS);

} catch (InterruptedException | ExecutionException | TimeoutException e) {

// flushStatus stays null and is reported as a timeout below

}

if (flushStatus != PutMessageStatus.PUT_OK) {

log.error("do groupcommit, wait for flush failed, topic: " + messageExt.getTopic() + " tags: " + messageExt.getTags() + " client address: " + messageExt.getBornHostString());

putMessageResult.setPutMessageStatus(PutMessageStatus.FLUSH_DISK_TIMEOUT);

}

} else {

// the message does not need to wait for the result, so just wake the flush thread

service.wakeup();

}

}

// Asynchronous flush

else {

if (!CommitLog.this.defaultMessageStore.isTransientStorePoolEnable()) {

flushCommitLogService.wakeup();

} else {

commitRealTimeService.wakeup();

}

}

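}

Conclusion:

This completes the analysis of how a sent message is written to the commitlog. But how does a consumer read messages? Scanning the entire commitlog is not practical, so an index is the natural answer. RocketMQ does have an index file that speeds up message lookup during consumption: the consumequeue, which records the offsets of each topic's messages. The next chapter looks at how this index data is built and maintained.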
