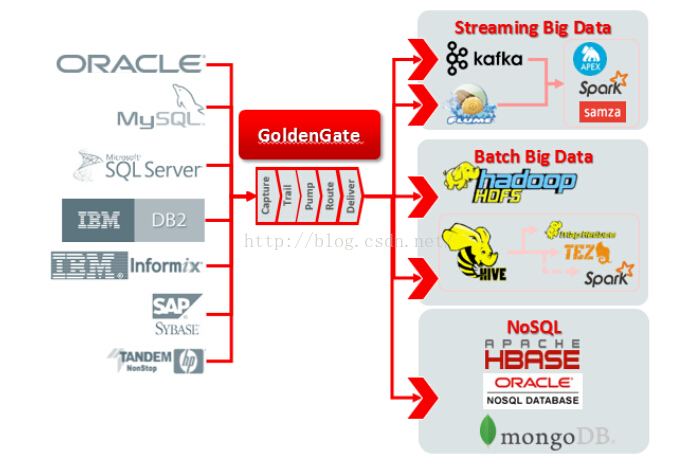

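The new GoldenGate 12.2 release supports replication to the targets shown in the figure.

Installing Kafka

Installing ZooKeeper

Configure the environment variables by adding the following to /etc/profile:

Edit the configuration file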

[root@T2 kafkaogg]# cd zookeeper-3.4.6/conf/

[root@T2 conf]# ls

configuration.xsl log4j.properties zoo_sample.cfg
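The start log further down reads conf/zoo.cfg, while the listing above shows only zoo_sample.cfg, so the sample file was presumably copied at this point. The original does not show that step. A minimal sketch, assuming the stock sample settings are acceptable; `ensure_zoo_cfg` is a hypothetical helper name:

```shell
# Hypothetical helper (not from the original post): create zoo.cfg from the
# shipped sample if it does not exist yet. The directory is passed as $1.
ensure_zoo_cfg() {
    conf_dir=$1
    if [ ! -f "$conf_dir/zoo.cfg" ]; then
        # zkServer.sh looks for conf/zoo.cfg by default
        cp "$conf_dir/zoo_sample.cfg" "$conf_dir/zoo.cfg"
    fi
}

# Usage, run from the conf directory shown above:
#   ensure_zoo_cfg .
```

An existing zoo.cfg is left untouched, so the helper is safe to run again.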

Next, start the ZooKeeper service:

[root@T2 conf]# ../bin/zkServer.sh start

JMX enabled by default

Using config: /opt/app/kafkaogg/zookeeper-3.4.6/bin/../conf/zoo.cfg

Starting zookeeper ... STARTED

Check whether it has started:

[root@T2 conf]# ../bin/zkServer.sh status

JMX enabled by default

Using config: /opt/app/kafkaogg/zookeeper-3.4.6/bin/../conf/zoo.cfg

Mode: standalone

Once the server is running, a client can connect to it. Run the client script:

[root@T2 bin]# ./zkCli.sh -server localhost:2181

Installing Kafka

A ZooKeeper server must be installed separately before installing the Kafka server, and the IP information in config/server.properties must be updated:

[root@T2 config]# pwd

/opt/app/kafkaogg/kafka_2.11-0.9.0.0/config

zookeeper.connect=localhost:2181

The default zookeeper.properties configuration also needs to be changed:

[root@T2 config]# vi zookeeper.properties

dataDir=/tmp/zkdata

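Start ZooKeeper first. Before doing so, kill the ZooKeeper service that was started earlier with zkServer.sh, since both instances use client port 2181 by default.

Start the Kafka service

Check whether the Kafka processes are running: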

16882 QuorumPeerMain

17094 Kafka

17287 Jps
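The process check above can be scripted. The sketch below is not from the original post: `check_kafka_procs` is a hypothetical helper that reads `jps` output on standard input and reports whether the two expected JVM names, QuorumPeerMain (ZooKeeper) and Kafka, appear in it.

```shell
# Hypothetical helper: reads `jps` output on stdin and checks that both the
# ZooKeeper (QuorumPeerMain) and Kafka JVMs are listed.
# Returns 0 when both are present, 1 otherwise.
check_kafka_procs() {
    listing=$(cat)
    missing=0
    for name in QuorumPeerMain Kafka; do
        # Match on the second column so that "Kafka" does not match other names
        if printf '%s\n' "$listing" |
            awk -v n="$name" '$2 == n { found = 1 } END { exit !found }'; then
            echo "$name: running"
        else
            echo "$name: NOT running"
            missing=1
        fi
    done
    return "$missing"
}

# Usage (assumes the JDK's jps tool is on PATH):
#   jps | check_kafka_procs
```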

Create a topic

[root@T2 bin]# ./kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic test

Created topic "test".

List the topics

[root@T2 bin]# ./kafka-topics.sh --list --zookeeper localhost:2181

test

[root@T2 config]# cp server.properties ./server-1.properties

[root@T2 config]# cp server.properties ./server-2.properties

Change the following settings:

[root@T2 config]# vi server-1.properties

broker.id=1

listeners=PLAINTEXT://:9093

log.dir=/tmp/kafka-logs-1

[root@T2 config]# vi server-2.properties

broker.id=2

listeners=PLAINTEXT://:9094

log.dir=/tmp/kafka-logs-2

Here broker.id is the unique identifier of each broker.
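The copy-and-edit steps above can also be done in one pass with sed. This is a sketch rather than the author's procedure: `make_broker_config` is a hypothetical helper, and it assumes the source file already contains `broker.id`, `listeners`, and a `log.dir` or `log.dirs` line, because sed only rewrites keys that are present.

```shell
# Hypothetical helper: derive server-<id>.properties from an existing
# server.properties, changing only the three per-broker settings.
#   $1 = path to server.properties, $2 = broker id, $3 = listener port
make_broker_config() {
    src=$1
    id=$2
    port=$3
    dest="$(dirname "$src")/server-$id.properties"
    # Keys missing from the source file are NOT added; whichever of
    # log.dir / log.dirs the source uses is kept as-is.
    sed -E \
        -e "s|^broker\.id=.*|broker.id=$id|" \
        -e "s|^#?listeners=.*|listeners=PLAINTEXT://:$port|" \
        -e "s|^(log\.dirs?)=.*|\1=/tmp/kafka-logs-$id|" \
        "$src" > "$dest"
    echo "$dest"
}

# Usage, from the config directory:
#   make_broker_config ./server.properties 1 9093
#   make_broker_config ./server.properties 2 9094
```

Each broker on the same host needs its own id, port, and log directory. Otherwise the brokers collide on the listener port or on the log directory lock.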

Start the other two broker processes:

[root@T2 config]# ../bin/kafka-server-start.sh ./server-1.properties &

[root@T2 config]# ../bin/kafka-server-start.sh ./server-2.properties &

Create a topic replicated across the three brokers on the same machine:

[root@T2 bin]# ./kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 3 --partitions 1 --topic fafacluster

Created topic "fafacluster".

Check the leader and replica roles among the three brokers:

[root@T2 bin]# ./kafka-topics.sh --describe --zookeeper localhost:2181 --topic fafacluster

Topic:fafacluster PartitionCount:1 ReplicationFactor:3 Configs:

Topic: fafacluster Partition: 0 Leader: 2 Replicas: 2,0,1 Isr: 2,0,1

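With this three-broker pseudo-cluster, you can try killing one or two of the broker processes and then check whether the leader, replicas, and ISR change, and whether consumers can still consume messages normally.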
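To compare the state before and after killing a broker, it helps to reduce the `--describe` output to the fields of interest. The sketch below is not from the original post: `summarize_partitions` is a hypothetical helper that reads that output on standard input and prints the leader and ISR for each partition.

```shell
# Hypothetical helper: reads `kafka-topics.sh --describe` output on stdin and
# prints one line per partition with its leader and in-sync replica set.
summarize_partitions() {
    awk '
        # Only per-partition lines contain the literal token "Partition:"
        /Partition:/ {
            part = leader = isr = "?"
            for (i = 1; i < NF; i++) {
                if ($i == "Partition:") part = $(i + 1)
                if ($i == "Leader:")    leader = $(i + 1)
                if ($i == "Isr:")       isr = $(i + 1)
            }
            printf "partition=%s leader=%s isr=%s\n", part, leader, isr
        }
    '
}

# Usage, from the Kafka bin directory:
#   ./kafka-topics.sh --describe --zookeeper localhost:2181 --topic fafacluster | summarize_partitions
```

Running it once before and once after the kill shows at a glance whether leadership moved and which brokers dropped out of the ISR.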

Produce messages:

[root@T2 bin]# ./kafka-console-producer.sh --broker-list localhost:9092 --topic fafacluster

fafa

fafa01

Kill one broker process:

[root@T2 bin]# kill -9 22497

[root@T2 bin]# ./kafka-console-consumer.sh --zookeeper localhost:2181 --from-beginning --topic fafacluster

..................................

..................................

java.nio.channels.ClosedChannelException

at kafka.network.BlockingChannel.send(BlockingChannel.scala:110)

at kafka.consumer.SimpleConsumer.liftedTree1$1(SimpleConsumer.scala:98)

at kafka.consumer.SimpleConsumer.kafka$consumer$SimpleConsumer$$sendRequest(SimpleConsumer.scala:83)

at kafka.consumer.SimpleConsumer.getOffsetsBefore(SimpleConsumer.scala:149)

at kafka.consumer.SimpleConsumer.earliestOrLatestOffset(SimpleConsumer.scala:188)

at kafka.consumer.ConsumerFetcherThread.handleOffsetOutOfRange(ConsumerFetcherThread.scala:84)

at kafka.server.AbstractFetcherThread$$anonfun$addPartitions$2.apply(AbstractFetcherThread.scala:187)

at kafka.server.AbstractFetcherThread$$anonfun$addPartitions$2.apply(AbstractFetcherThread.scala:182)

at scala.collection.TraversableLike$WithFilter$$anonfun$foreach$1.apply(TraversableLike.scala:778)

at scala.collection.immutable.Map$Map1.foreach(Map.scala:116)

at scala.collection.TraversableLike$WithFilter.foreach(TraversableLike.scala:777)

at kafka.server.AbstractFetcherThread.addPartitions(AbstractFetcherThread.scala:182)

at kafka.server.AbstractFetcherManager$$anonfun$addFetcherForPartitions$2.apply(AbstractFetcherManager.scala:88)

at kafka.server.AbstractFetcherManager$$anonfun$addFetcherForPartitions$2.apply(AbstractFetcherManager.scala:78)

at scala.collection.TraversableLike$WithFilter$$anonfun$foreach$1.apply(TraversableLike.scala:778)

at scala.collection.immutable.Map$Map1.foreach(Map.scala:116)

at scala.collection.TraversableLike$WithFilter.foreach(TraversableLike.scala:777)

at kafka.server.AbstractFetcherManager.addFetcherForPartitions(AbstractFetcherManager.scala:78)

at kafka.consumer.ConsumerFetcherManager$LeaderFinderThread.doWork(ConsumerFetcherManager.scala:95)

at kafka.utils.ShutdownableThread.run(ShutdownableThread.scala:63)

fafa

fafa01

After a long run of errors, the consumer still receives every message that was produced.

Using Kafka Connect to import and export data

bootstrap.servers=localhost:9092

key.converter=org.apache.kafka.connect.json.JsonConverter

value.converter=org.apache.kafka.connect.json.JsonConverter

key.converter.schemas.enable=true

value.converter.schemas.enable=true

internal.key.converter=org.apache.kafka.connect.json.JsonConverter
