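InternLM (书生·浦语) Large Language Model (2-1): The InternLM2-Chat-1.8B Model

Generating a 300-character short story

Use the InternLM2-Chat-1.8B model to generate a short story of about 300 Chinese characters.

Deploying InternLM2-Chat-1.8B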

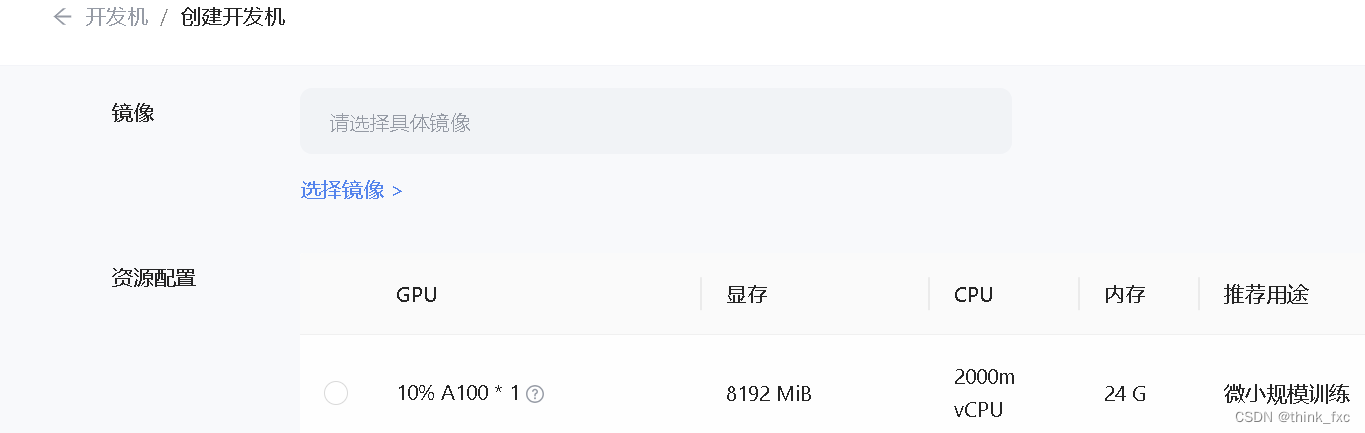

1.1 Create the virtual machine

Open the Intern Studio interface, click "Create Dev Machine" (创建开发机), and configure the development machine.

1.2 Set up the environment

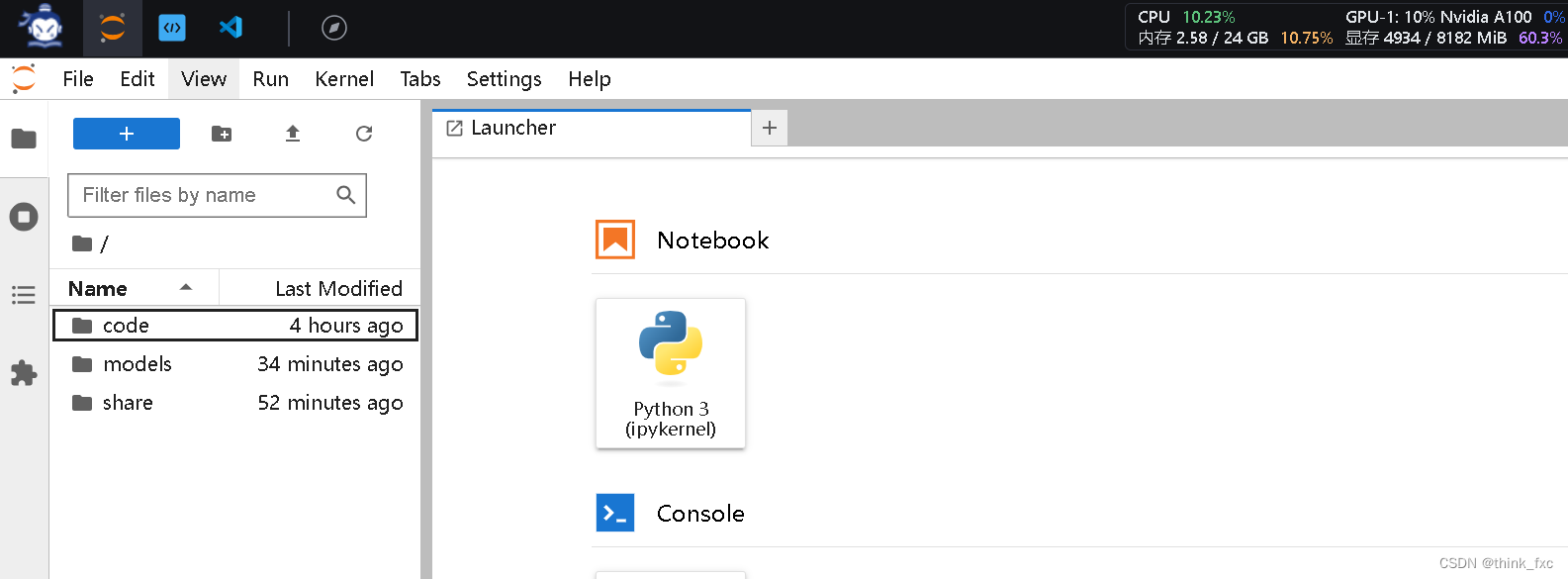

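Click the "Enter Dev Machine" (进入开发机) option to open the development machine.

Configure the environment in the terminal:

bash /root/share/install_conda_env_internlm_base.sh internlm-demo  # run this script to install the experiment environment

Activate the environment with the following command: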

conda activate internlm-demo
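Before installing anything, it can be worth confirming that the shell really is inside internlm-demo. The following is a minimal sketch, assuming conda exports the active environment name in the CONDA_DEFAULT_ENV variable; the helper name active_conda_env is illustrative and not part of the tutorial.

```python
import os


def active_conda_env(environ=None):
    """Return the name of the active conda environment, or None if there is none."""
    env = os.environ if environ is None else environ
    return env.get("CONDA_DEFAULT_ENV")


if __name__ == "__main__":
    name = active_conda_env()
    if name == "internlm-demo":
        print("internlm-demo is active")
    else:
        print(f"expected internlm-demo, but the active environment is {name!r}")
```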

Install the required dependencies in the environment:

python -m pip install --upgrade pip

pip install modelscope==1.9.5

pip install transformers==4.35.2

pip install streamlit==1.24.0

pip install sentencepiece==0.1.99

pip install accelerate==0.24.1
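Once the installs finish, the pinned versions can be checked from Python. This is a sketch only: it uses the standard-library importlib.metadata module, and the PINNED table and the check_pins helper are illustrative names that simply restate the versions listed above.

```python
from importlib import metadata

# Versions pinned by the install commands in this section.
PINNED = {
    "modelscope": "1.9.5",
    "transformers": "4.35.2",
    "streamlit": "1.24.0",
    "sentencepiece": "0.1.99",
    "accelerate": "0.24.1",
}


def check_pins(pinned, get_version=metadata.version):
    """Map each package name to (wanted, found, matches)."""
    report = {}
    for name, wanted in pinned.items():
        try:
            found = get_version(name)
        except metadata.PackageNotFoundError:
            found = None  # package is not installed in this environment
        report[name] = (wanted, found, found == wanted)
    return report


if __name__ == "__main__":
    for name, (wanted, found, ok) in check_pins(PINNED).items():
        status = "ok" if ok else "MISMATCH"
        print(f"{name}: wanted {wanted}, found {found} [{status}]")
```

Any line marked MISMATCH points to a package that should be reinstalled with the pinned version.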

1.3 Download the model

Create a models directory under /root and copy the shared model files into it:

mkdir -p /root/models/Shanghai_AI_Laboratory

cp -r /root/share/temp/model_repos/internlm2-chat-1_8b /root/models/Shanghai_AI_Laboratory

In /root/models, create a download.py file with the following content, then save it.

import os
import torch
from modelscope import snapshot_download, AutoModel, AutoTokenizer

# Download the model snapshot into /root/models
model_dir = snapshot_download('Shanghai_AI_Laboratory/internlm2-chat-1_8b', cache_dir='/root/models', revision='v1.0.3')

Run the following command to download the model:

python /root/models/download.py
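After the copy or download, a quick check that the model directory is populated can save a confusing error later. The sketch below assumes the model sits at /root/models/Shanghai_AI_Laboratory/internlm2-chat-1_8b and follows the usual Hugging Face layout with a config.json at its root; both the path and the missing_model_files helper are assumptions for illustration.

```python
from pathlib import Path

# Assumed location of the model; adjust if the files were placed elsewhere.
MODEL_DIR = "/root/models/Shanghai_AI_Laboratory/internlm2-chat-1_8b"


def missing_model_files(model_dir, required=("config.json",)):
    """Return the required file names that are not present in model_dir."""
    root = Path(model_dir)
    if not root.is_dir():
        return list(required)
    return [name for name in required if not (root / name).is_file()]


if __name__ == "__main__":
    missing = missing_model_files(MODEL_DIR)
    if missing:
        print(f"model directory incomplete, missing: {missing}")
    else:
        print("model directory looks complete")
```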

1.4 Prepare the test code

First create a code directory under /root, change into it, and clone the repository:

mkdir -p /root/code

cd /root/code

git clone https://gitee.com/internlm/InternLM.git

In /root/code/InternLM/web_demo.py, replace the model path on lines 29 and 33 with the local path /root/models/Shanghai_AI_Laboratory/internlm2-chat-1_8b
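The edit to web_demo.py can also be scripted. The following sketch replaces a string only on the given line numbers, so the rest of the file stays untouched. The source does not show what lines 29 and 33 currently contain, so the old value passed in is a hypothetical placeholder, and replace_on_lines is an illustrative helper rather than anything from the InternLM repository.

```python
def replace_on_lines(text, line_numbers, old, new):
    """Replace `old` with `new` only on the given 1-based line numbers."""
    lines = text.splitlines(keepends=True)
    for number in line_numbers:
        index = number - 1
        if 0 <= index < len(lines):
            lines[index] = lines[index].replace(old, new)
    return "".join(lines)


# Example use (OLD_MODEL_ID is a placeholder; check lines 29 and 33 first):
#   path = "/root/code/InternLM/web_demo.py"
#   with open(path, encoding="utf-8") as f:
#       source = f.read()
#   patched = replace_on_lines(source, [29, 33], "OLD_MODEL_ID", "/path/to/local/model")
#   with open(path, "w", encoding="utf-8") as f:
#       f.write(patched)
```

Restricting the replacement to specific lines avoids touching other occurrences of the same string elsewhere in the file.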

1.5 Run in the terminal

Create a cli_demo_1.8b.py file in the /root/code/InternLM directory and fill it with the following code:

import torch
from transformers import AutoTokenizer, AutoModelForCausalLM

# Local model directory prepared in section 1.3
model_name_or_path = "/root/models/Shanghai_AI_Laboratory/internlm2-chat-1_8b"

tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, trust_remote_code=True)
model = AutoModelForCausalLM.from_pretrained(model_name_or_path, trust_remote_code=True, torch_dtype=torch.bfloat16, device_map='auto')
model = model.eval()

system_prompt = """You are an AI assistant whose name is InternLM (书生·浦语).
- InternLM (书生·浦语) is a conversational language model that is developed by Shanghai AI Laboratory (上海人工智能实验室). It is designed to be helpful, honest, and harmless.
- InternLM (书生·浦语) can understand and communicate fluently in the language chosen by the user such as English and 中文.
"""

# The history starts with the system prompt as its first (user, assistant) pair
messages = [(system_prompt, '')]

print("=============Welcome to InternLM chatbot, type 'exit' to exit.=============")

while True:
    input_text = input("User >>> ")
    # Removes every space from the input, including spaces between English words
    input_text = input_text.replace(' ', '')
    if input_text == "exit":
        break
    response, history = model.chat(tokenizer, input_text, history=messages)
    messages.append((input_text, response))
    print(f"robot >>> {response}")

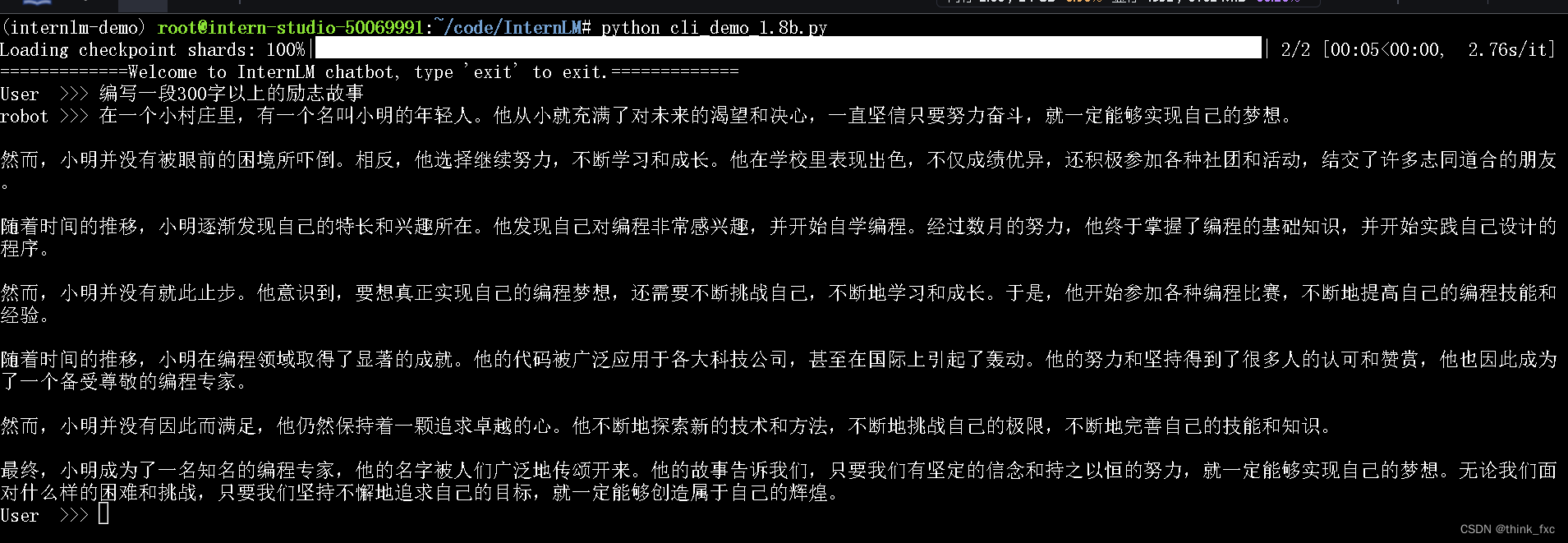

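Then run the following command in the terminal to try out the conversational ability of the InternLM2-Chat-1.8B model. The screenshot shows a sample conversation.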

python /root/code/InternLM/cli_demo_1.8b.py

Writing a 300-character story
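The story can also be requested in a single non-interactive call, which makes it easy to check the length of the result. This is a sketch under assumptions: MODEL_PATH is the assumed local model location, the prompt wording is only an example, and count_chars and generate_story are illustrative helpers. The model.chat call is the same one the CLI demo uses.

```python
# Assumed local model location; adjust to wherever the model files live.
MODEL_PATH = "/root/models/Shanghai_AI_Laboratory/internlm2-chat-1_8b"


def count_chars(text):
    """Count the characters in text, ignoring all whitespace."""
    return sum(1 for ch in text if not ch.isspace())


def generate_story(prompt, model_path=MODEL_PATH):
    """Load the model and return its single reply to `prompt`."""
    # Imported lazily so count_chars stays usable without torch installed.
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True)
    model = AutoModelForCausalLM.from_pretrained(
        model_path,
        trust_remote_code=True,
        torch_dtype=torch.bfloat16,
        device_map="auto",
    ).eval()
    # chat() returns (response, history); an empty history starts a fresh conversation.
    response, _history = model.chat(tokenizer, prompt, history=[])
    return response


# Example use on the development machine:
#   story = generate_story("请编写一段300字的故事")
#   print(story)
#   print("length:", count_chars(story))
```

A small model will not hit the requested length exactly, so the count is best treated as a rough check.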
