1. Debugging the core module

1. Log file configuration

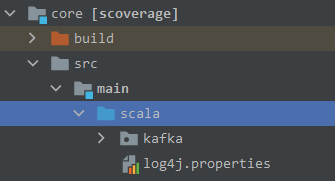

Copy config/log4j.properties into core/src/main/scala so that the log output is easy to follow.

Edit log4j.properties:

# Changed the level here from info to debug

log4j.rootLogger=debug, stdout, kafkaAppender
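In log4j 1.x properties syntax, the value of log4j.rootLogger is a level followed by a comma-separated list of appender names, so the line above sets the root level to DEBUG and attaches the stdout and kafkaAppender appenders. The minimal sketch below, using only java.util.Properties, shows how that value splits apart; the class name RootLoggerCheck is hypothetical and the file content is inlined for illustration.

```java
import java.io.IOException;
import java.io.StringReader;
import java.util.Arrays;
import java.util.List;
import java.util.Properties;

public class RootLoggerCheck {
    // First token of a log4j 1.x rootLogger value is the level.
    static String level(String value) {
        return value.split(",")[0].trim();
    }

    // Remaining tokens are appender names.
    static List<String> appenders(String value) {
        String[] parts = value.split(",");
        String[] names = Arrays.copyOfRange(parts, 1, parts.length);
        for (int i = 0; i < names.length; i++) {
            names[i] = names[i].trim();
        }
        return Arrays.asList(names);
    }

    public static void main(String[] args) throws IOException {
        // Inline copy of the edited line; a real check would load the copied log4j.properties file.
        Properties props = new Properties();
        props.load(new StringReader("log4j.rootLogger=debug, stdout, kafkaAppender\n"));
        String value = props.getProperty("log4j.rootLogger");
        System.out.println("level = " + level(value));         // level = debug
        System.out.println("appenders = " + appenders(value)); // appenders = [stdout, kafkaAppender]
    }
}
```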

2. Edit config/server.properties

broker.id=0

#delete.topic.enable=true

#listeners=PLAINTEXT://:9092

num.network.threads=3

num.io.threads=8

socket.send.buffer.bytes=102400

socket.receive.buffer.bytes=102400

socket.request.max.bytes=104857600

log.dirs=/tmp/kafka-logs

num.partitions=1

offsets.topic.replication.factor=1

transaction.state.log.replication.factor=1

transaction.state.log.min.isr=1

#log.flush.interval.messages=10000

#log.flush.interval.ms=1000

log.retention.hours=168

#log.retention.bytes=1073741824

log.segment.bytes=1073741824

log.retention.check.interval.ms=300000

zookeeper.connect=localhost:2181

zookeeper.connection.timeout.ms=6000

group.initial.rebalance.delay.ms=0

The following entries were changed:

1. log.dirs=F:\\resources\\kafka\\logs

2. zookeeper.connect=localhost:2181

# If ZooKeeper is not installed on this machine, replace localhost with the ZooKeeper address

3. zookeeper.connection.timeout.ms=60000

# Increased the ZooKeeper connection timeout (from 6000 to 60000 ms)

Where possible, use hostnames rather than IP addresses in the configuration files.
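The Windows path in log.dirs uses doubled backslashes because a backslash is an escape character in .properties files. A minimal sketch, assuming the file is read with the standard java.util.Properties parser (the class name ServerPropsCheck is hypothetical), showing how the three edited entries come out after parsing and what a single-backslash path turns into:

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Properties;

public class ServerPropsCheck {
    // Parses .properties text with java.util.Properties, where a backslash
    // escapes the next character: "\\" becomes "\", while "\r" becomes a carriage return.
    static Properties parse(String text) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(text));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return props;
    }

    public static void main(String[] args) {
        // The three edited entries, exactly as written in server.properties.
        String text = String.join("\n",
                "log.dirs=F:\\\\resources\\\\kafka\\\\logs",
                "zookeeper.connect=localhost:2181",
                "zookeeper.connection.timeout.ms=60000");
        Properties props = parse(text);
        System.out.println(props.getProperty("log.dirs"));          // F:\resources\kafka\logs
        System.out.println(props.getProperty("zookeeper.connect")); // localhost:2181
        long timeoutMs = Long.parseLong(props.getProperty("zookeeper.connection.timeout.ms"));
        System.out.println("timeout ms = " + timeoutMs);            // timeout ms = 60000

        // With single backslashes the path is silently mangled.
        String broken = parse("log.dirs=F:\\resources\\kafka\\logs").getProperty("log.dirs");
        System.out.println(broken.contains("\\") ? "backslashes kept" : "backslashes lost"); // backslashes lost
    }
}
```

Forward slashes (F:/resources/kafka/logs) are another common way to sidestep the escaping on Windows.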

3. Debug configuration


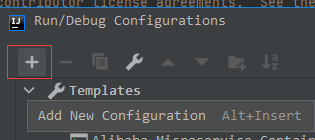

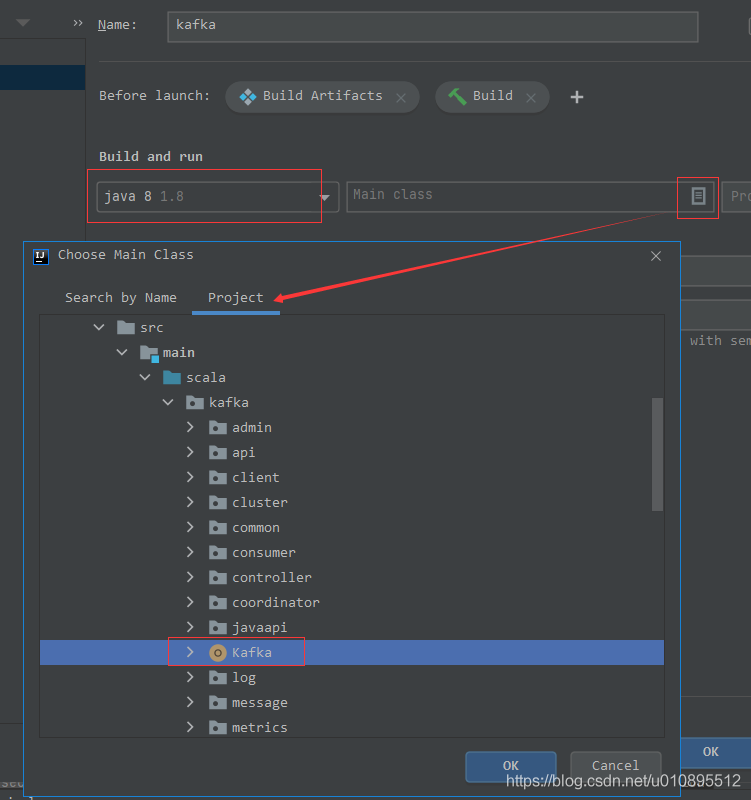

In IDEA, click Run > Edit Configurations, click the + button, and select Application.

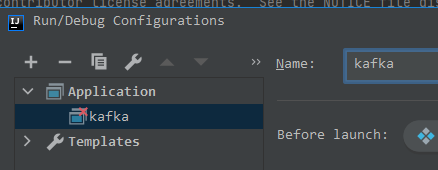

Set the name to kafka.


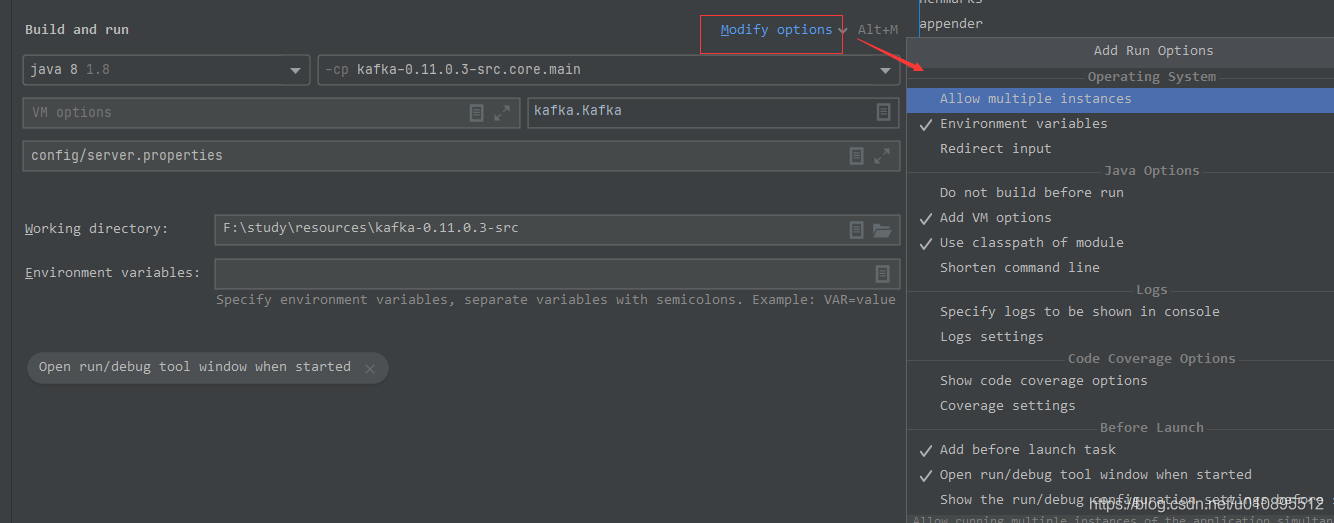

Configure the main class, kafka.Kafka:

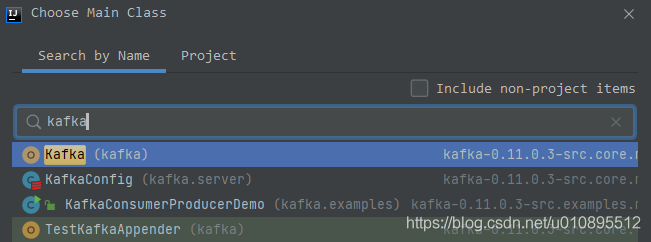

Or search for kafka in the class chooser.


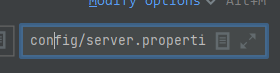

Set the program arguments to config/server.properties.

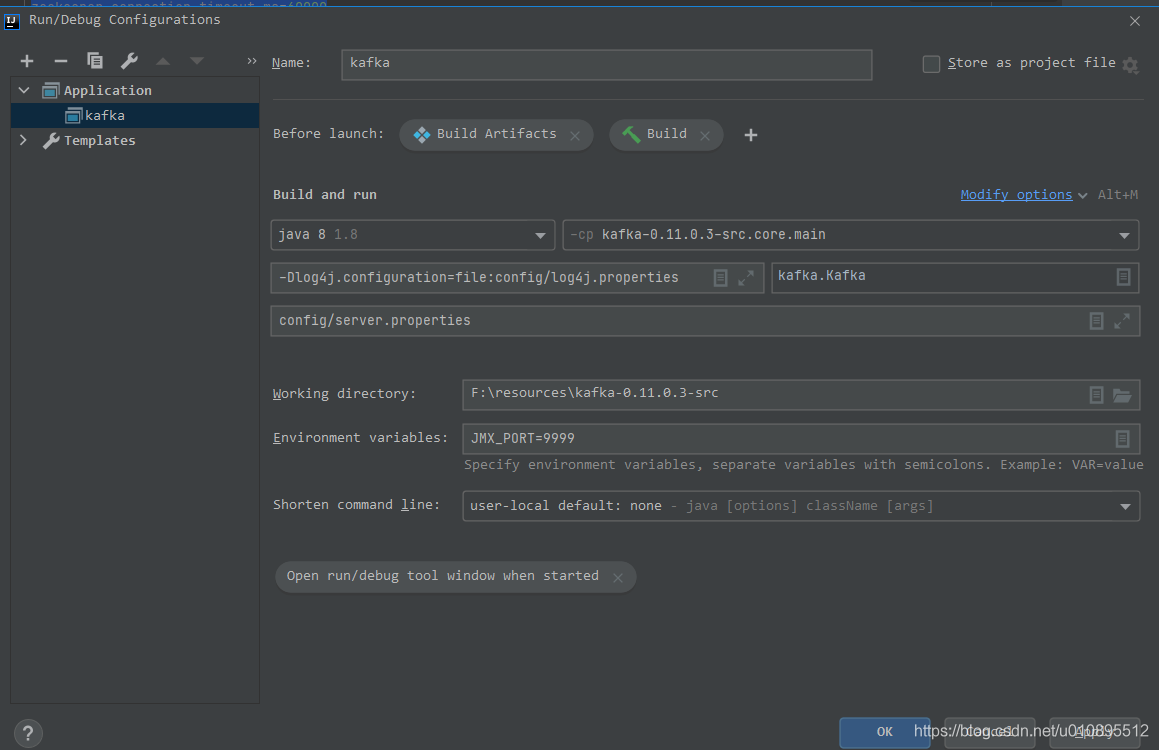
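The program argument config/server.properties is a relative path, so it is resolved against the run configuration's working directory; if that directory is not the root of the Kafka source tree, the file will not be found. A minimal sketch, assuming the working directory is meant to be the source root (the class ConfigPathCheck is hypothetical), that reports where the relative path actually points:

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;

public class ConfigPathCheck {
    // Resolves a relative program argument against a working directory,
    // the same way relative file paths are resolved against user.dir.
    static Path resolve(String workingDir, String arg) {
        return Paths.get(workingDir).resolve(arg).normalize();
    }

    public static void main(String[] args) {
        String arg = args.length > 0 ? args[0] : "config/server.properties";
        // user.dir is the JVM's working directory (the "Working directory" field of the run configuration).
        String workingDir = System.getProperty("user.dir");
        Path config = resolve(workingDir, arg);
        System.out.println(config + (Files.isRegularFile(config) ? " exists" : " NOT found"));
    }
}
```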

The complete configuration:


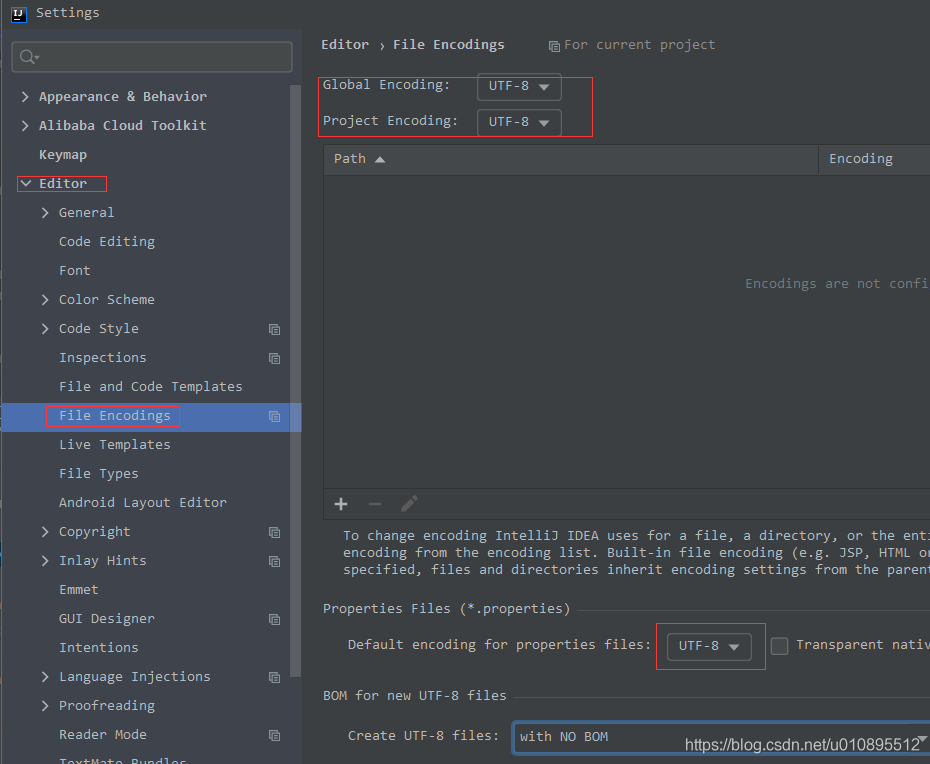

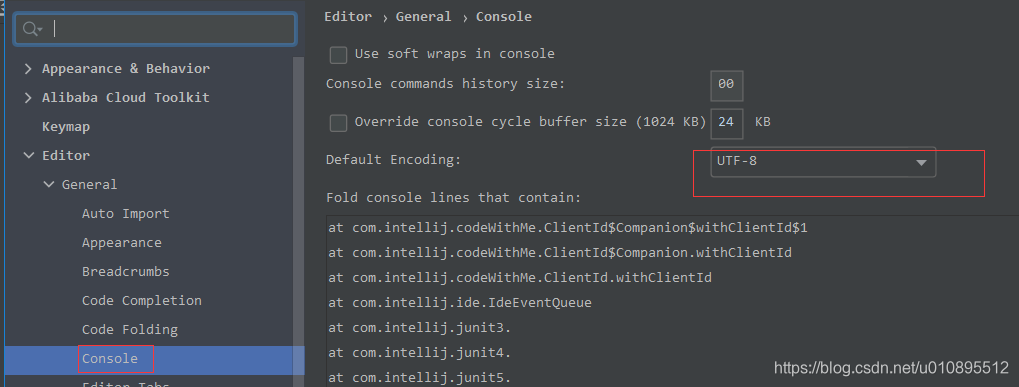

Encoding settings:

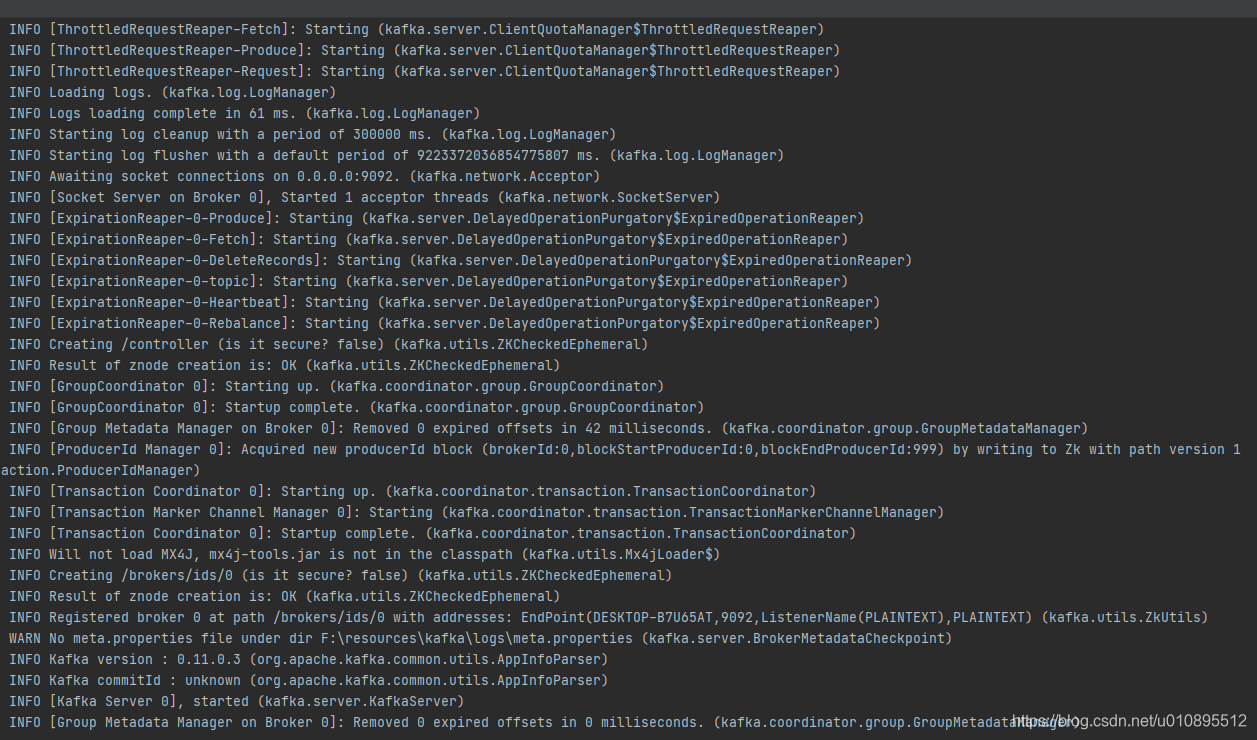
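The exact encoding setting is only visible in the screenshot. Assuming its purpose is for the JVM to use UTF-8 (on Java 8 the default charset follows the file.encoding system property, which can be set with the VM option -Dfile.encoding=UTF-8), a minimal sketch that prints the charset the debugged JVM actually picked up; the class name EncodingCheck is hypothetical:

```java
import java.nio.charset.Charset;
import java.nio.charset.StandardCharsets;

public class EncodingCheck {
    // True when the given charset is UTF-8.
    static boolean isUtf8(Charset cs) {
        return StandardCharsets.UTF_8.equals(cs);
    }

    public static void main(String[] args) {
        Charset cs = Charset.defaultCharset();
        System.out.println("file.encoding = " + System.getProperty("file.encoding"));
        System.out.println("default charset = " + cs + (isUtf8(cs) ? "" : " (not UTF-8)"));
    }
}
```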

4. Launch

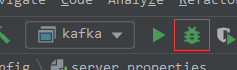

The kafka configuration created above now appears in IDEA; click the Debug icon.

It fails with the following error:

Execution failed for task ':core:Kafka.main()'.

> Process 'command 'E:/setupall/java8/bin/java.exe'' finished with non-zero exit value 1

* Try:

Run with --stacktrace option to get the stack trace. Run with --info or --debug option to get more log output. Run with --scan to get full insights.

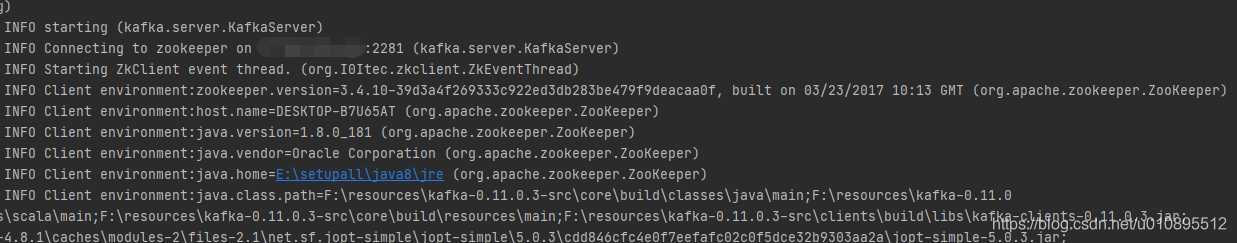

Unable to connect to zookeeper server 'ip:2281' with timeout of 60000 ms
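Before changing any access rules, it helps to confirm whether the ZooKeeper port can be reached from the development machine at all. A minimal sketch using a plain TCP connect with a timeout (java.net.Socket); the class name ZkPortCheck and its localhost:2181 defaults are hypothetical, so pass the host and port from zookeeper.connect (here the server's public IP and port 2281):

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

public class ZkPortCheck {
    // Returns true if a TCP connection to host:port succeeds within timeoutMs.
    static boolean isReachable(String host, int port, int timeoutMs) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMs);
            return true;
        } catch (IOException e) {
            // Refused, timed out, or unknown host.
            return false;
        }
    }

    public static void main(String[] args) {
        // Hypothetical defaults; pass the values from zookeeper.connect instead.
        String host = args.length > 0 ? args[0] : "localhost";
        int port = args.length > 1 ? Integer.parseInt(args[1]) : 2181;
        boolean ok = isReachable(host, port, 5000);
        System.out.println(host + ":" + port + (ok ? " is reachable" : " is NOT reachable"));
    }
}
```

If the port is unreachable while ZooKeeper is running on the server, the cause is more likely the network access rules than the Kafka configuration.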

5. Configure access rules

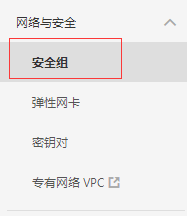

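The ip in the error message is the public IP of an Alibaba Cloud server. Log in to the Alibaba Cloud console and modify the server's access rules.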

Click Configure Rules.

Select the port range to add (for example, all ports) and click OK.

6. Launch again
