1, Ring buffer

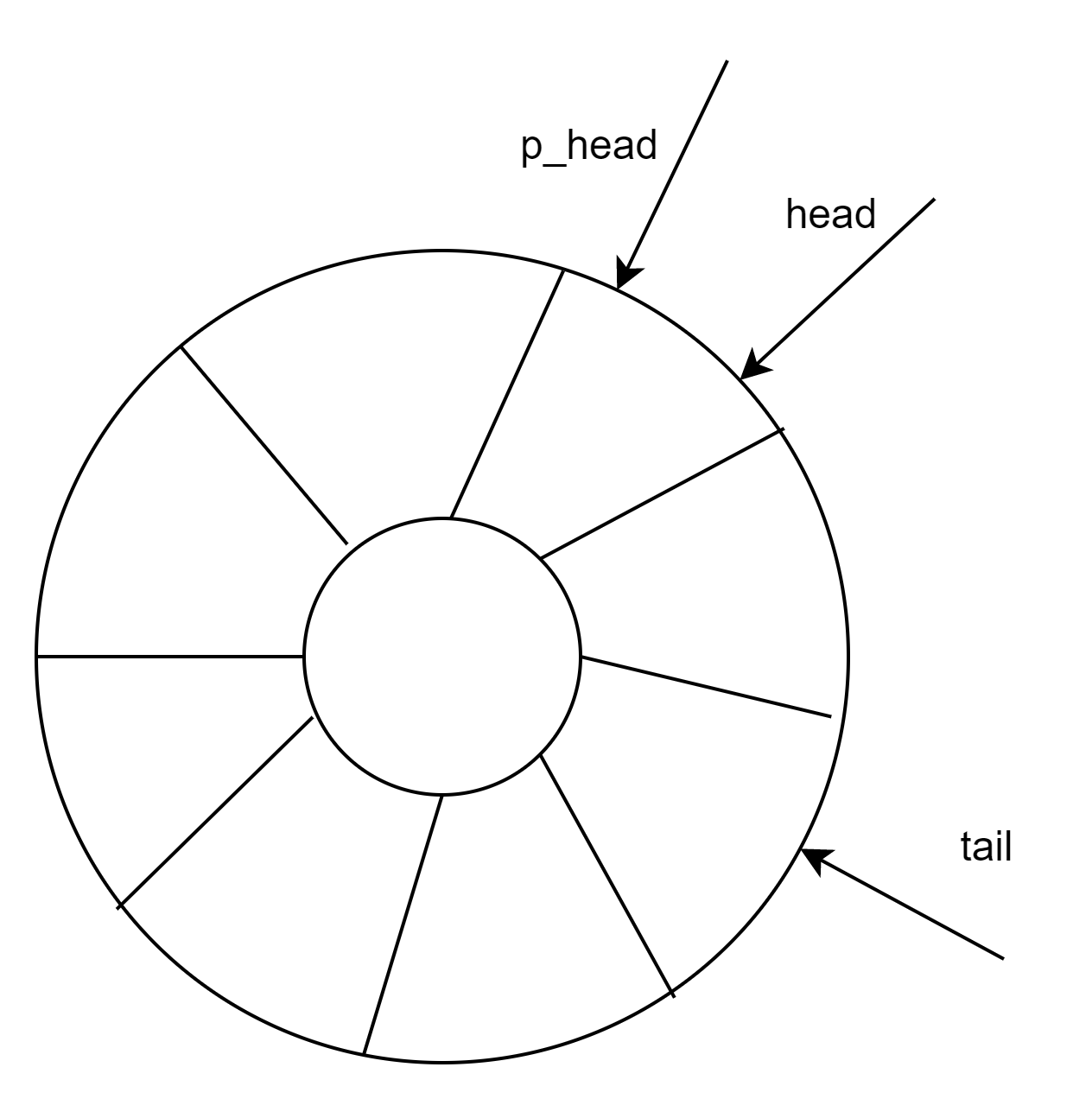

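In the event handler layer (evdev.c), struct evdev_client defines a ring buffer (circular buffer). It implements a first-in-first-out circular queue on top of an array and caches the input_event events that kernel drivers report to user space.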

struct evdev_client {

unsigned int head; // head pointer (write position)

unsigned int tail; // tail pointer (read position)

unsigned int packet_head; /* [future] position of the first element of next packet */ // packet pointer

spinlock_t buffer_lock; /* protects access to buffer, head and tail */

wait_queue_head_t wait;

struct fasync_struct *fasync;

struct evdev *evdev;

struct list_head node;

enum input_clock_type clk_type;

bool revoked;

unsigned long *evmasks[EV_CNT];

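unsigned int bufsize; // size of the circular queue, in events

struct input_event buffer[]; // array backing the circular queue

};

The evdev_client object maintains three offsets: head, tail, and packet_head. head and tail are the head and tail pointers of the circular queue and record its entry and exit offsets. What, then, is the packet pointer packet_head for?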

packet_head

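To handle one input, a kernel driver may report one or more input_event events; to mark the input as complete, it then reports a sync event (EV_SYN/SYN_REPORT). The head pointer head records the buffer's entry offset in units of single input_event events, whereas the packet pointer packet_head records it in units of "packets" (one or more input_event events closed by a sync event). Readers only see events up to packet_head, so a packet becomes readable as a whole.

Figure 1: Ring buffer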
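The difference between head and packet_head can be illustrated with a small userspace model. This is only a sketch: the demo_ names, the fixed size of 8, and the simplified event struct are assumptions made for illustration, and overflow handling is deliberately left out.

```c
#define DEMO_BUFSIZE 8u   /* hypothetical size; must be a power of two */
#define DEMO_EV_SYN  0    /* same numeric value as EV_SYN */
#define DEMO_EV_REL  2    /* same numeric value as EV_REL */

/* Simplified stand-in for struct input_event (no timestamp). */
struct demo_event {
    int type;
    int code;
    int value;
};

/* Simplified stand-in for the three offsets kept in evdev_client. */
struct demo_client {
    unsigned int head;
    unsigned int tail;
    unsigned int packet_head;
    struct demo_event buffer[DEMO_BUFSIZE];
};

/* Producer: store one event; only a sync event publishes the packet. */
static void demo_push(struct demo_client *c, struct demo_event ev)
{
    c->buffer[c->head++] = ev;
    c->head &= DEMO_BUFSIZE - 1;

    if (ev.type == DEMO_EV_SYN)
        c->packet_head = c->head;
}

/* Consumer: how many events a reader may fetch right now. */
static unsigned int demo_readable(const struct demo_client *c)
{
    return (c->packet_head - c->tail) & (DEMO_BUFSIZE - 1);
}
```

Pushing two relative-motion events leaves demo_readable() at 0, because head has advanced but packet_head has not. Pushing the sync event afterwards makes all three events readable at once.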

2, How the ring buffer works

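Enqueue algorithm of the circular queue

head++;

head &= bufsize - 1;

Dequeue algorithm of the circular queue

tail++;

tail &= bufsize - 1;

Queue-full condition (checked right after head advances)

head == tail;

Queue-empty condition (as seen by the reader)

packet_head == tail

Modulo versus bitwise AND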

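To keep the head and tail pointers from running past the ends of the array, so that the queue's element slots can be reused, circular queues usually advance their indices with a modulo operation:

head = (head + 1) % bufsize; // enqueue

tail = (tail + 1) % bufsize; // dequeue

To avoid the comparatively expensive modulo operation and keep the kernel efficient, the input subsystem's ring buffer uses a bitwise AND instead, which requires bufsize to be a power of two. evdev_compute_buffer_size() guarantees this: it takes the larger of 64 and 8 times the device's events-per-packet hint, then rounds the result up to the next power of two.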
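The relationship between the two index updates, and why the size has to be a power of two, can be checked with a short userspace sketch. The helper names below are hypothetical. round_up_pow2() is a simple stand-in for the kernel's roundup_pow_of_two(), and demo_buffer_size() assumes the sizing rule "at least 64 events, or 8 packets' worth, rounded up to a power of two".

```c
#include <stdbool.h>

/* True when n is a non-zero power of two. */
static bool is_pow2(unsigned int n)
{
    return n != 0 && (n & (n - 1)) == 0;
}

/* Smallest power of two >= n; valid for 1 <= n <= 2^31. */
static unsigned int round_up_pow2(unsigned int n)
{
    unsigned int p = 1;

    while (p < n)
        p <<= 1;
    return p;
}

/* Assumed sizing rule: max(8 * events_per_packet, 64), rounded up. */
static unsigned int demo_buffer_size(unsigned int events_per_packet)
{
    unsigned int n = events_per_packet * 8u;

    if (n < 64u)
        n = 64u;
    return round_up_pow2(n);
}

/* Advance an index with the bitwise AND form. */
static unsigned int mask_next(unsigned int i, unsigned int bufsize)
{
    return (i + 1) & (bufsize - 1);
}

/* Advance an index with the modulo form. */
static unsigned int mod_next(unsigned int i, unsigned int bufsize)
{
    return (i + 1) % bufsize;
}
```

For a size of 64 the two forms agree on every index. For a size of 72, which is a multiple of 8 but not a power of two, mask_next(71, 72) yields 64 while mod_next(71, 72) yields 0, which is why the rounding step matters.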

3, Constructing and initializing the ring buffer

When user space opens an input device node with open(), the call chain is:

open() -> sys_open() -> evdev_open()

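evdev_open() constructs and initializes the evdev_client object. Every opener of the input device node gets its own evdev_client object in the kernel, and evdev_attach_client() registers these objects on the kernel linked list of the evdev object.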

static int evdev_open(struct inode *inode, struct file *file)

{

struct evdev *evdev = container_of(inode->i_cdev, struct evdev, cdev);

// compute the ring buffer size, bufsize

unsigned int bufsize = evdev_compute_buffer_size(evdev->handle.dev);

struct evdev_client *client;

int error;

// allocate kernel memory for the evdev_client, including its event buffer

client = kvzalloc(struct_size(client, buffer, bufsize), GFP_KERNEL);

if (!client)

return -ENOMEM;

// initialize the wait queue client->wait

init_waitqueue_head(&client->wait);

client->bufsize = bufsize;

spin_lock_init(&client->buffer_lock);

client->evdev = evdev;

// add the client to the evdev's client list

evdev_attach_client(evdev, client);

error = evdev_open_device(evdev);

if (error)

goto err_free_client;

file->private_data = client;

stream_open(inode, file);

return 0;

err_free_client:

evdev_detach_client(evdev, client);

kvfree(client);

return error;

}

4, Producer/consumer model

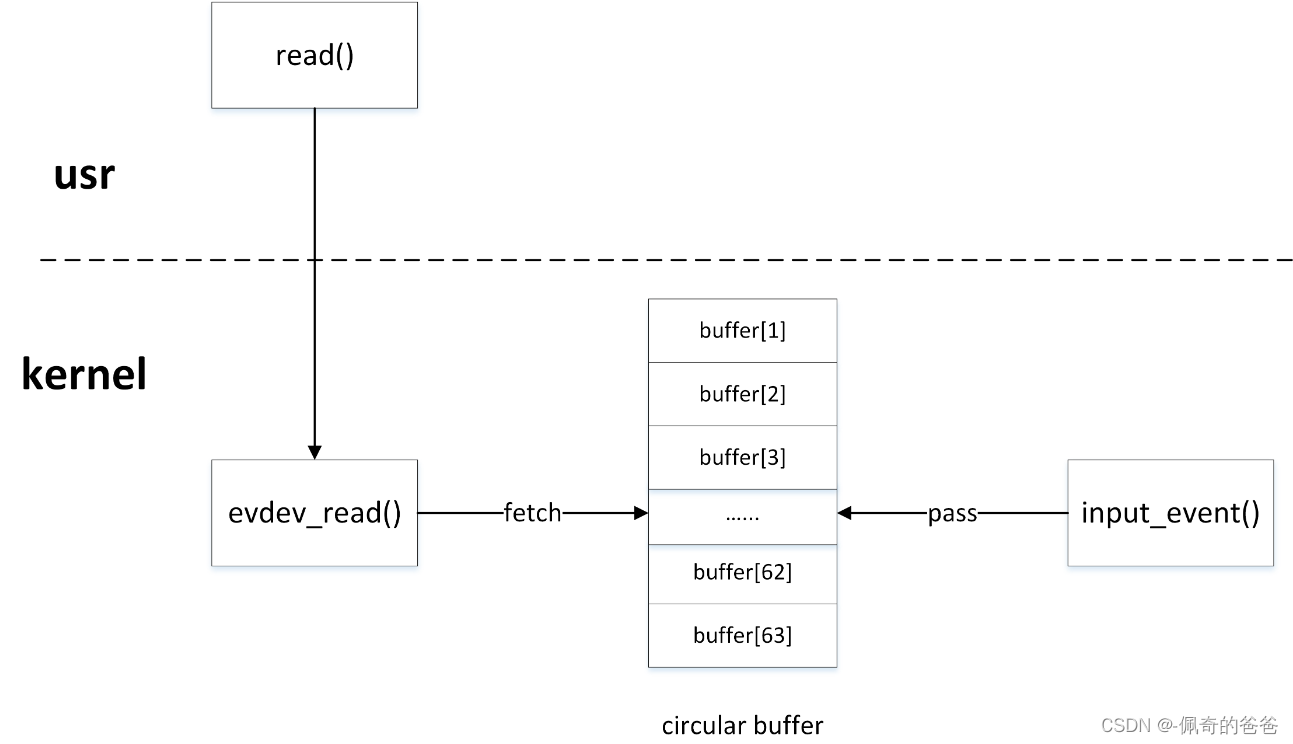

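The kernel driver and the user program form a typical producer/consumer pair: the kernel driver generates input_event events and writes them into the ring buffer through input_event(), and the user program fetches them from the ring buffer with read().

Figure 2: Producer/consumer model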
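The consumer half of this model can be sketched from user space as follows. This is a minimal example, not a complete tool: dump_events() is a hypothetical helper, and the device path passed to it (for example /dev/input/event0) is an assumption that depends on the machine.

```c
#include <fcntl.h>
#include <linux/input.h>
#include <stdio.h>
#include <unistd.h>

/*
 * Read up to max_events events from an evdev node and print them.
 * Returns the number of events read, or -1 if the node cannot be opened.
 */
static int dump_events(const char *path, int max_events)
{
    struct input_event ev;
    int n = 0;
    int fd = open(path, O_RDONLY);

    if (fd < 0) {
        perror("open");
        return -1;
    }

    while (n < max_events) {
        /* Blocking read: returns once a complete packet is queued. */
        ssize_t len = read(fd, &ev, sizeof(ev));

        if (len != (ssize_t)sizeof(ev))
            break;

        printf("type=%d code=%d value=%d\n", ev.type, ev.code, ev.value);
        n++;
    }

    close(fd);
    return n;
}
```

Calling dump_events("/dev/input/event0", 16) on a node the process is allowed to read would print the next 16 events, including the EV_SYN events that close each packet.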

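5, The producer side of the buffer

As the producer, a kernel driver reports events through input_event(); the call eventually reaches evdev_pass_values(), which in turn calls __pass_event() to write each event into the ring buffer: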

static void evdev_pass_values(struct evdev_client *client,

const struct input_value *vals, unsigned int count,

ktime_t *ev_time)

{

const struct input_value *v;

struct input_event event;

struct timespec64 ts;

bool wakeup = false;

if (client->revoked)

return;

ts = ktime_to_timespec64(ev_time[client->clk_type]);

event.input_event_sec = ts.tv_sec;

event.input_event_usec = ts.tv_nsec / NSEC_PER_USEC;

/* Interrupts are disabled, just acquire the lock. */

spin_lock(&client->buffer_lock);

for (v = vals; v != vals + count; v++) {

if (__evdev_is_filtered(client, v->type, v->code))

continue;

if (v->type == EV_SYN && v->code == SYN_REPORT) {

/* drop empty SYN_REPORT */

if (client->packet_head == client->head)

continue; // nothing has been queued since the last packet, so drop the empty SYN_REPORT

wakeup = true; // a complete packet is now queued; wake any sleeping readers afterwards

}

event.type = v->type;

event.code = v->code;

event.value = v->value;

__pass_event(client, &event);

}

spin_unlock(&client->buffer_lock);

if (wakeup)

wake_up_interruptible_poll(&client->wait,

EPOLLIN | EPOLLOUT | EPOLLRDNORM | EPOLLWRNORM); // a packet was queued; wake up sleepers on the wait queue

}static void __pass_event(struct evdev_client *client,

const struct input_event *event)

{

// 将input_event事件存入缓冲区,队头head自增指向下一个元素空间

client->buffer[client->head++] = *event;

client->head &= client->bufsize - 1;

// 当队头head与队尾tail相等时,说明缓冲区空间已满

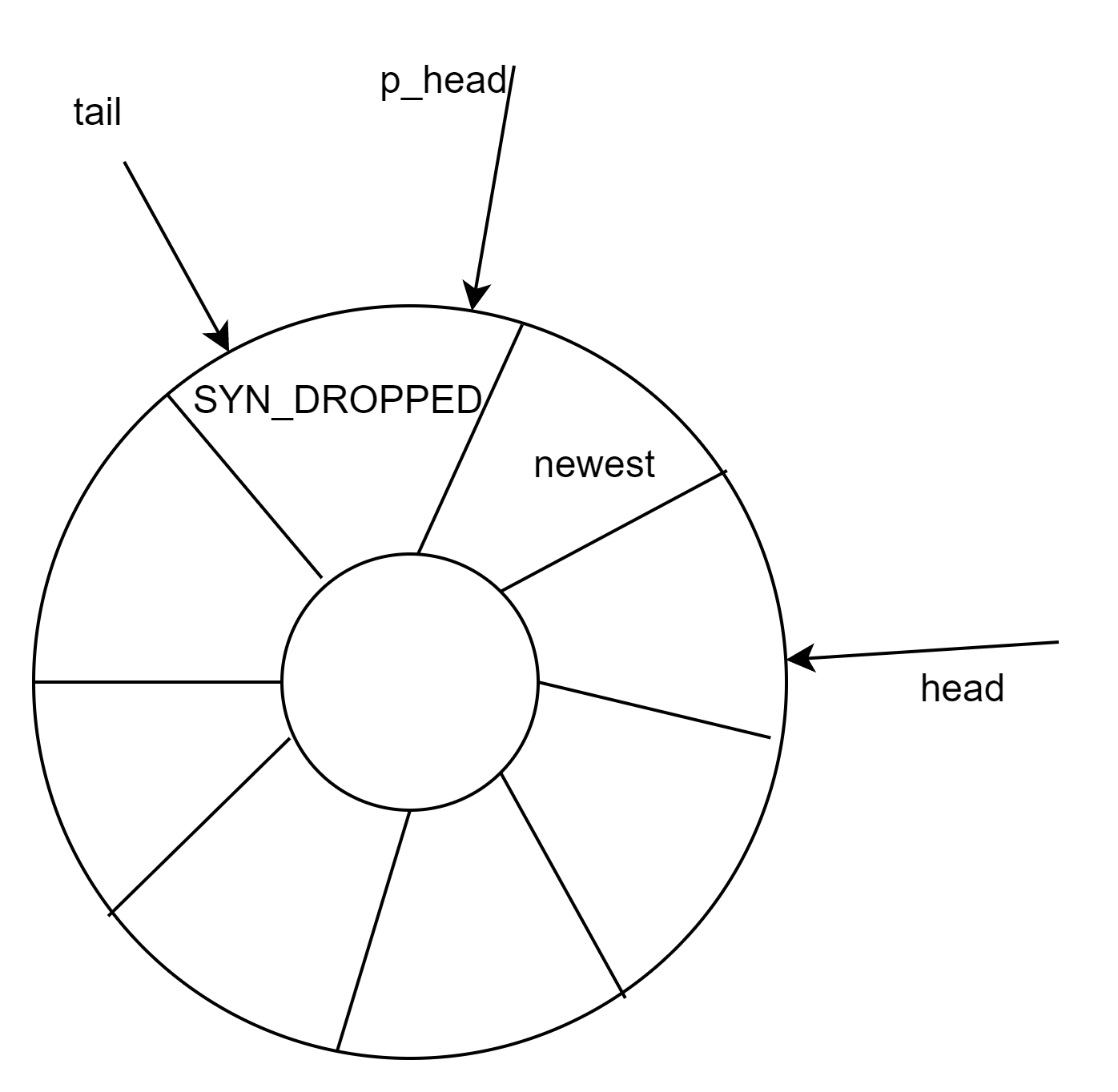

if (unlikely(client->head == client->tail)) {

/*

* This effectively "drops" all unconsumed events, leaving

* EV_SYN/SYN_DROPPED plus the newest event in the queue.

*/

client->tail = (client->head - 2) & (client->bufsize - 1); //丢弃掉环形缓冲区中的数据,只保留队列中EV_SYN/SYN_DROPPED和最新的一次event

//填充EV_SYN/SYN_DROPPED

client->buffer[client->tail] = (struct input_event) {

.input_event_sec = event->input_event_sec,

.input_event_usec = event->input_event_usec,

.type = EV_SYN,

.code = SYN_DROPPED,

.value = 0,

};

client->packet_head = client->tail;

}

// 当遇到EV_SYN/SYN_REPORT同步事件时,packet_head移动到队头head位置

if (event->type == EV_SYN && event->code == SYN_REPORT) {

client->packet_head = client->head;

kill_fasync(&client->fasync, SIGIO, POLL_IN); //异步通知:设备通知用户自身可以访问,之后用户再进行I/O处理

}

}

图3 环形缓冲区满时的处理
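The overflow path is easier to follow with concrete numbers. The sketch below replays the same index arithmetic in user space with a buffer of 8 slots. The ovf_ names and the simplified event struct are assumptions for illustration, and locking, timestamps, and kill_fasync() are omitted.

```c
#define OVF_BUFSIZE     8u  /* hypothetical size; must be a power of two */
#define OVF_EV_SYN      0   /* same numeric value as EV_SYN */
#define OVF_EV_KEY      1   /* same numeric value as EV_KEY */
#define OVF_SYN_REPORT  0   /* same numeric value as SYN_REPORT */
#define OVF_SYN_DROPPED 3   /* same numeric value as SYN_DROPPED */

/* Simplified stand-in for struct input_event (no timestamp). */
struct ovf_event {
    int type;
    int code;
    int value;
};

struct ovf_client {
    unsigned int head;
    unsigned int tail;
    unsigned int packet_head;
    struct ovf_event buffer[OVF_BUFSIZE];
};

/* Same index logic as __pass_event(), without locking or signals. */
static void ovf_pass_event(struct ovf_client *c, struct ovf_event ev)
{
    c->buffer[c->head++] = ev;
    c->head &= OVF_BUFSIZE - 1;

    if (c->head == c->tail) {
        /* Full: keep only a SYN_DROPPED marker plus the newest event. */
        c->tail = (c->head - 2) & (OVF_BUFSIZE - 1);
        c->buffer[c->tail] =
            (struct ovf_event){ OVF_EV_SYN, OVF_SYN_DROPPED, 0 };
        c->packet_head = c->tail;
    }

    if (ev.type == OVF_EV_SYN && ev.code == OVF_SYN_REPORT)
        c->packet_head = c->head;
}
```

Starting from an empty client and pushing eight key events without reading, the eighth push wraps head to 0, which equals tail. tail then moves to slot 6, slot 6 is overwritten with SYN_DROPPED, and slot 7 still holds the newest event. Because packet_head equals tail at that point, the reader sees nothing until the next SYN_REPORT arrives, after which three events (SYN_DROPPED, the newest key event, SYN_REPORT) become readable.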

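6, The consumer side of the ring buffer

As the consumer, a user program reads the input device node with read(); in the kernel this ends up calling evdev_fetch_next_event(), which takes input_event events out of the ring buffer: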

static ssize_t evdev_read(struct file *file, char __user *buffer,

size_t count, loff_t *ppos)

{

struct evdev_client *client = file->private_data;

struct evdev *evdev = client->evdev;

struct input_event event;

size_t read = 0;

int error;

if (count != 0 && count < input_event_size())

return -EINVAL;

for (;;) {

if (!evdev->exist || client->revoked)

return -ENODEV;

if (client->packet_head == client->tail &&

(file->f_flags & O_NONBLOCK)) // ring buffer empty and the read is non-blocking: return immediately

return -EAGAIN;

/*

* count == 0 is special - no IO is done but we check

* for error conditions (see above).

*/

if (count == 0)

break;

while (read + input_event_size() <= count &&

evdev_fetch_next_event(client, &event)) {

if (input_event_to_user(buffer + read, &event)) // copy the event to user space

return -EFAULT;

read += input_event_size();

}

if (read)

break;

if (!(file->f_flags & O_NONBLOCK)) { // blocking read: wait until the ring buffer has data

error = wait_event_interruptible(client->wait,

client->packet_head != client->tail ||

!evdev->exist || client->revoked);

if (error)

return error;

}

}

return read;

}

static int evdev_fetch_next_event(struct evdev_client *client,

struct input_event *event)

{

int have_event;

spin_lock_irq(&client->buffer_lock);

// check whether the buffer holds a readable input_event

have_event = client->packet_head != client->tail;

if (have_event) {

// read one input_event from the buffer, then advance tail to the next slot

*event = client->buffer[client->tail++];

client->tail &= client->bufsize - 1;

}

spin_unlock_irq(&client->buffer_lock);

return have_event;

}

The kernel-side implementation of poll():

/* No kernel lock - fine */

static __poll_t evdev_poll(struct file *file, poll_table *wait)

{

struct evdev_client *client = file->private_data;

struct evdev *evdev = client->evdev;

__poll_t mask;

// register client->wait with the poll table; this call does not sleep by itself

poll_wait(file, &client->wait, wait);

if (evdev->exist && !client->revoked)

mask = EPOLLOUT | EPOLLWRNORM;

else

mask = EPOLLHUP | EPOLLERR;

if (client->packet_head != client->tail)

mask |= EPOLLIN | EPOLLRDNORM;

return mask; // becomes the event mask reported to user-space poll()

}

7, Asynchronous notification with kill_fasync()

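Blocking and non-blocking access together with poll() already provide a good mechanism for accessing a device, but asynchronous notification makes the set complete.

Asynchronous notification means that the device itself notifies the application as soon as it is ready, so the application does not need to query the device state at all. This closely resembles a hardware interrupt; the more precise name is "signal-driven asynchronous I/O". A signal is a software-level imitation of the interrupt mechanism: in principle, a process receiving a signal is much like a processor receiving an interrupt request. Signals are asynchronous: a process does not have to do anything to wait for one, and in fact it does not know when one will arrive.

Blocking I/O waits until the device is accessible and then accesses it; non-blocking I/O with poll() queries whether the device is accessible; asynchronous notification has the device tell the user that it is accessible, after which the user performs the I/O. The three approaches therefore complement one another.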
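For comparison with the signal-driven example in the rest of this section, the poll()-based style can be sketched in user space like this. wait_one_event() is a hypothetical helper, and the path it receives (for example /dev/input/event0) is an assumption that depends on the machine.

```c
#include <fcntl.h>
#include <linux/input.h>
#include <poll.h>
#include <unistd.h>

/*
 * Wait up to timeout_ms for one event on an evdev node opened non-blocking.
 * Returns 1 if an event was read into *ev, 0 on timeout, -1 on error.
 */
static int wait_one_event(const char *path, int timeout_ms,
                          struct input_event *ev)
{
    struct pollfd pfd;
    int ret;

    pfd.fd = open(path, O_RDONLY | O_NONBLOCK);
    if (pfd.fd < 0)
        return -1;
    pfd.events = POLLIN;
    pfd.revents = 0;

    /* Sleeps in the kernel until data is readable or the timeout expires. */
    ret = poll(&pfd, 1, timeout_ms);
    if (ret > 0) {
        if (pfd.revents & POLLIN)
            ret = read(pfd.fd, ev, sizeof(*ev)) == (ssize_t)sizeof(*ev)
                      ? 1 : -1;
        else
            ret = -1;   /* POLLHUP or POLLERR: device gone or revoked */
    }

    close(pfd.fd);
    return ret;
}
```

Here the application still has to ask the kernel whether data is available, whereas with asynchronous notification the driver sends SIGIO on its own initiative.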

Handling key presses with kill_fasync() asynchronous notification:

Application layer

#include <sys/types.h>

#include <sys/stat.h>

#include <fcntl.h>

#include <stdio.h>

#include <poll.h>

#include <signal.h>

#include <unistd.h>

/* fifthdrvtest

*/

int fd;

// signal handler

void my_signal_fun(int signum)

{

unsigned char key_val;

read(fd, &key_val, 1);

printf("key_val: 0x%x\n", key_val);

}

int main(int argc, char **argv)

{

unsigned char key_val;

int ret;

int Oflags;

// catch the SIGIO signal (sent by the driver) in the application

signal(SIGIO, my_signal_fun);

fd = open("/dev/buttons", O_RDWR);

if (fd < 0)

{

printf("can't open!\n");

return 1;

}

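// register this process's PID as the recipient of the SIGIO (and SIGURG) signals that the driver behind fd will send

fcntl(fd, F_SETOWN, getpid());

// get the file status flags of fd

Oflags = fcntl(fd, F_GETFL);

// set the FASYNC flag on fd, i.e. enable asynchronous notification

// this call triggers file_operations->fasync in the driver; that function calls fasync_helper() to set up a fasync_struct describing the process to signal (fasync_struct->fa_file->f_owner->pid)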

fcntl(fd, F_SETFL, Oflags | FASYNC);

while (1)

{

sleep(1000);

}

return 0;

}

Driver layer

#include <linux/module.h>

#include <linux/kernel.h>

#include <linux/fs.h>

#include <linux/init.h>

#include <linux/delay.h>

#include <linux/irq.h>

#include <asm/uaccess.h>

#include <asm/irq.h>

#include <asm/io.h>

#include <asm/arch/regs-gpio.h>

#include <asm/hardware.h>

#include <linux/poll.h>

static struct class *fifthdrv_class;

static struct class_device *fifthdrv_class_dev;

//volatile unsigned long *gpfcon;

//volatile unsigned long *gpfdat;

static DECLARE_WAIT_QUEUE_HEAD(button_waitq);

/* interrupt event flag: set to 1 by the interrupt handler, cleared to 0 by fifth_drv_read */

static volatile int ev_press = 0;

static struct fasync_struct *button_async;

struct pin_desc{

unsigned int pin;

unsigned int key_val;

};

/* key value when pressed: 0x01, 0x02, 0x03, 0x04 */

/* key value when released: 0x81, 0x82, 0x83, 0x84 */

static unsigned char key_val;

/*

* K1, K2, K3, K4 correspond to GPG0, GPG3, GPG5, GPG6

*/

struct pin_desc pins_desc[4] = {

{S3C2410_GPG0, 0x01},

{S3C2410_GPG3, 0x02},

{S3C2410_GPG5, 0x03},

{S3C2410_GPG6, 0x04},

};

/*

* determine the key value

*/

static irqreturn_t buttons_irq(int irq, void *dev_id)

{

struct pin_desc * pindesc = (struct pin_desc *)dev_id;

unsigned int pinval;

pinval = s3c2410_gpio_getpin(pindesc->pin);

if (pinval)

{

/* released */

key_val = 0x80 | pindesc->key_val;

}

else

{

/* pressed */

key_val = pindesc->key_val;

}

ev_press = 1; /* an interrupt has occurred */

wake_up_interruptible(&button_waitq); /* wake up the sleeping process */

// send SIGIO to the PID described by the fasync_struct, triggering the application's SIGIO handler

kill_fasync (&button_async, SIGIO, POLL_IN);

return IRQ_RETVAL(IRQ_HANDLED);

}

static int fifth_drv_open(struct inode *inode, struct file *file)

{

/* GPG0, GPG3, GPG5, GPG6 are the interrupt pins: EINT8, EINT11, EINT13, EINT14 */

request_irq(IRQ_EINT8, buttons_irq, IRQT_BOTHEDGE, "K1", &pins_desc[0]);

request_irq(IRQ_EINT11, buttons_irq, IRQT_BOTHEDGE, "K2", &pins_desc[1]);

request_irq(IRQ_EINT13, buttons_irq, IRQT_BOTHEDGE, "K3", &pins_desc[2]);

request_irq(IRQ_EINT14, buttons_irq, IRQT_BOTHEDGE, "K4", &pins_desc[3]);

return 0;

}

ssize_t fifth_drv_read(struct file *file, char __user *buf, size_t size, loff_t *ppos)

{

if (size != 1)

return -EINVAL;

/* sleep if there has been no key activity */

wait_event_interruptible(button_waitq, ev_press);

/* there was key activity: return the key value */

if (copy_to_user(buf, &key_val, 1))

return -EFAULT;

ev_press = 0;

return 1;

}

int fifth_drv_close(struct inode *inode, struct file *file)

{

free_irq(IRQ_EINT8, &pins_desc[0]);

free_irq(IRQ_EINT11, &pins_desc[1]);

free_irq(IRQ_EINT13, &pins_desc[2]);

free_irq(IRQ_EINT14, &pins_desc[3]);

return 0;

}

static unsigned fifth_drv_poll(struct file *file, poll_table *wait)

{

unsigned int mask = 0;

poll_wait(file, &button_waitq, wait); // does not sleep immediately

if (ev_press)

mask |= POLLIN | POLLRDNORM;

return mask;

}

static int fifth_drv_fasync (int fd, struct file *filp, int on)

{

printk("driver: fifth_drv_fasync\n");

// set up or release the fasync_struct (fasync_struct->fa_file->f_owner->pid)

return fasync_helper (fd, filp, on, &button_async);

}

static struct file_operations sencod_drv_fops = {

.owner = THIS_MODULE, /* a macro that points to the __this_module variable created automatically when the module is built */

.open = fifth_drv_open,

.read = fifth_drv_read,

.release = fifth_drv_close,

.poll = fifth_drv_poll,

.fasync = fifth_drv_fasync,

};

int major;

static int fifth_drv_init(void)

{

major = register_chrdev(0, "fifth_drv", &sencod_drv_fops);

fifthdrv_class = class_create(THIS_MODULE, "fifth_drv");

fifthdrv_class_dev = class_device_create(fifthdrv_class, NULL, MKDEV(major, 0), NULL, "buttons"); /* /dev/buttons */

// gpfcon = (volatile unsigned long *)ioremap(0x56000050, 16);

// gpfdat = gpfcon + 1;

return 0;

}

static void fifth_drv_exit(void)

{

unregister_chrdev(major, "fifth_drv");

class_device_unregister(fifthdrv_class_dev);

class_destroy(fifthdrv_class);

// iounmap(gpfcon);

}

module_init(fifth_drv_init);

module_exit(fifth_drv_exit);

MODULE_LICENSE("GPL");
