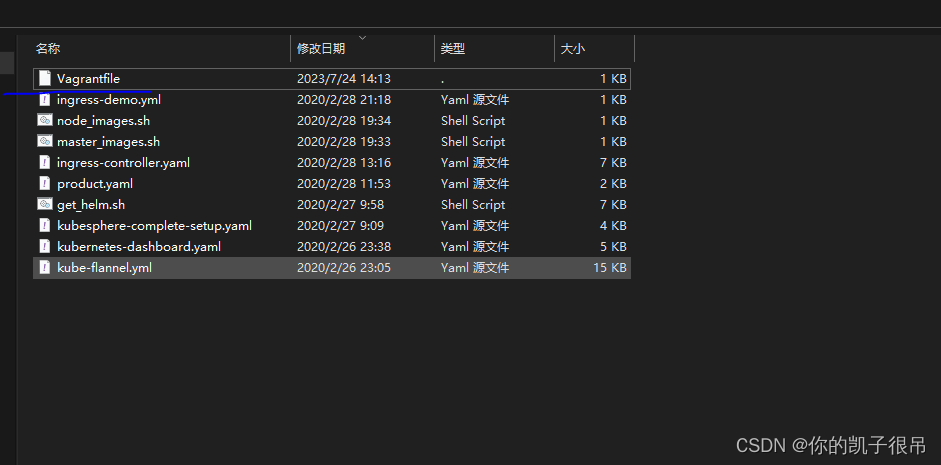

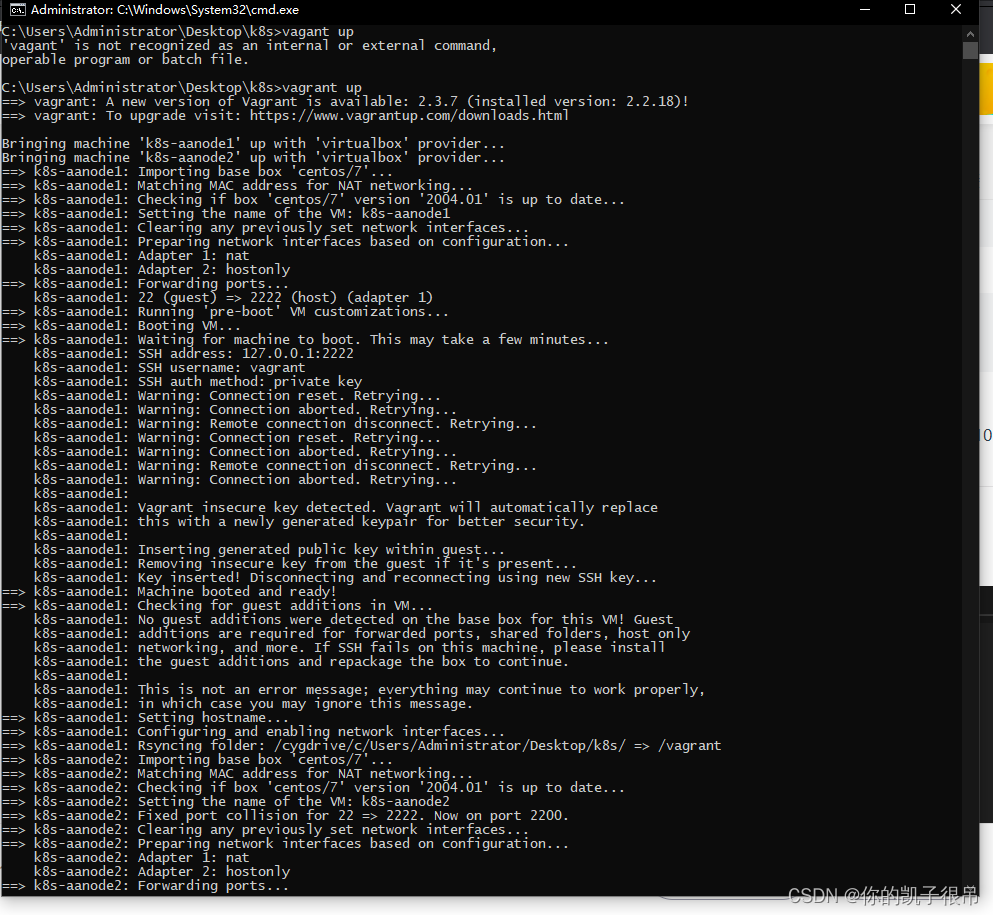

1. Quickly set up three servers with Vagrant

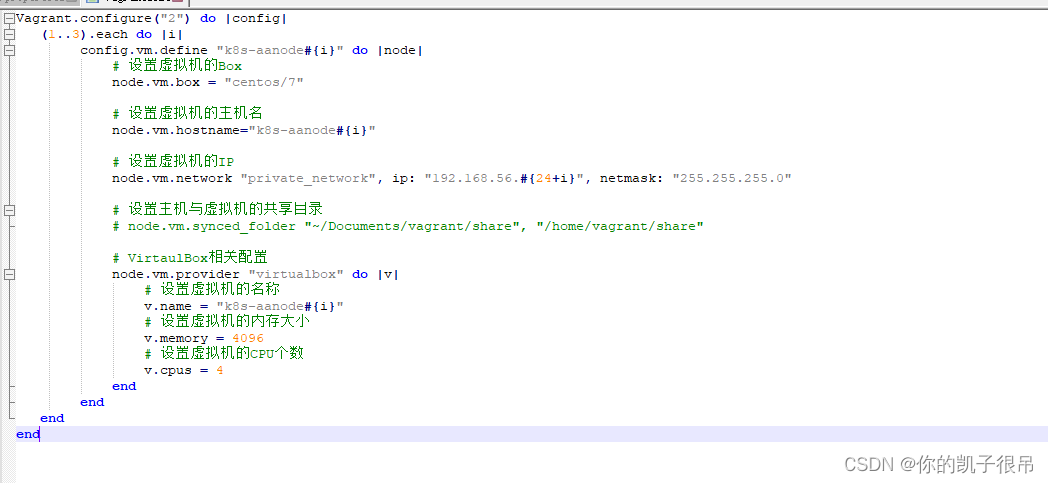

Change 3 to 5 to create five VMs instead; adjust this to your own needs

Vagrant.configure("2") do |config|

(1..3).each do |i|

config.vm.define "k8s-vanodde#{i}" do |node|

# Box used for the VM

node.vm.box = "centos/7"

# Hostname of the VM

node.vm.hostname = "k8s-vanodde#{i}"

# IP address of the VM

node.vm.network "private_network", ip: "192.168.56.#{24+i}", netmask: "255.255.255.0"

# Folder shared between the host and the VM

# node.vm.synced_folder "~/Documents/vagrant/share", "/home/vagrant/share"

# VirtualBox-specific settings

node.vm.provider "virtualbox" do |v|

# Name of the VM

v.name = "k8s-vanodde#{i}"

# Memory size of the VM (MB)

v.memory = 4096

# Number of CPUs of the VM

v.cpus = 4

end

end

end

end
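As a quick cross-check of the naming and addressing rule in the Vagrantfile above, here is a small shell sketch that prints the hostname and IP each VM should end up with. The function name node_plan and the two variables are illustrative names of my own, not part of Vagrant.

```shell
# Sketch only: mirrors the "k8s-vanodde#{i}" / "192.168.56.#{24+i}" rule from the Vagrantfile.
# NODE_PREFIX, IP_BASE and node_plan are hypothetical helper names.
NODE_PREFIX="k8s-vanodde"
IP_BASE=24

node_plan() {
    # $1 = number of VMs, the N in (1..N).each
    i=1
    while [ "$i" -le "$1" ]; do
        echo "${NODE_PREFIX}${i} 192.168.56.$((IP_BASE + i))"
        i=$((i + 1))
    done
}

# Usage: node_plan 3
```

With three VMs this yields the addresses 192.168.56.25 to 192.168.56.27, which are the ones used in the hosts file later on.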

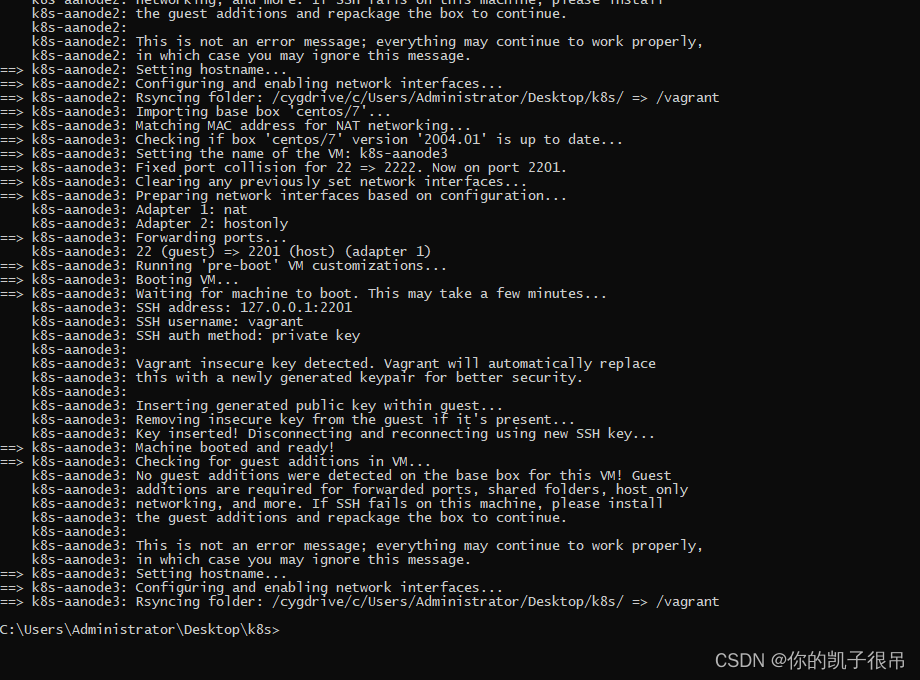

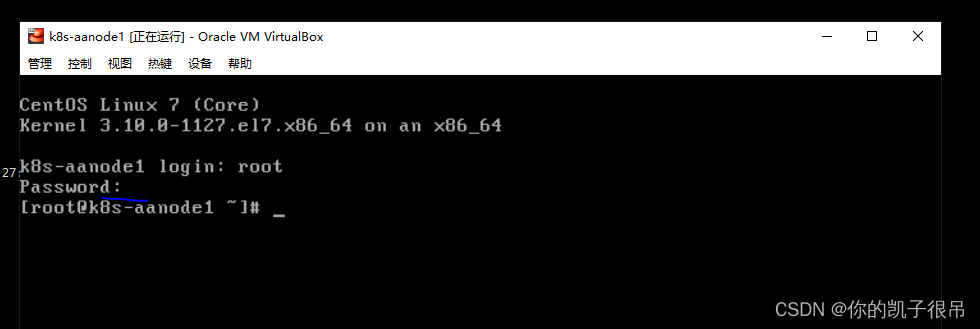

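After a successful installation, the default user is root and the password is vagrant

Edit /etc/ssh/sshd_config with vi and enable password login by changing PasswordAuthentication from no to yes,

then restart sshd (systemctl restart sshd)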
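If you prefer not to edit the file by hand on every VM, the change can be scripted. This is a minimal sketch, assuming the file already contains an uncommented PasswordAuthentication line (as on the box used here); enable_password_auth is a hypothetical helper name.

```shell
# Sketch only: switch an existing "PasswordAuthentication ..." line to "yes".
# Commented-out lines are left untouched.
enable_password_auth() {
    # $1 = path to an sshd_config file
    cfg="$1"
    tmp="${cfg}.tmp.$$"
    sed -E 's/^[[:space:]]*PasswordAuthentication[[:space:]]+.*/PasswordAuthentication yes/' "$cfg" > "$tmp" \
        && cat "$tmp" > "$cfg" && rm -f "$tmp"
}

# Usage (as root):
#   enable_password_auth /etc/ssh/sshd_config && systemctl restart sshd
```

The result is written back with cat rather than mv so the original file keeps its permissions.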

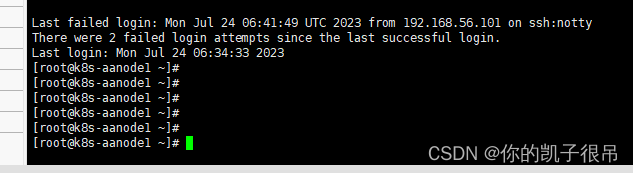

Connect with Xshell 7

The default password is vagrant

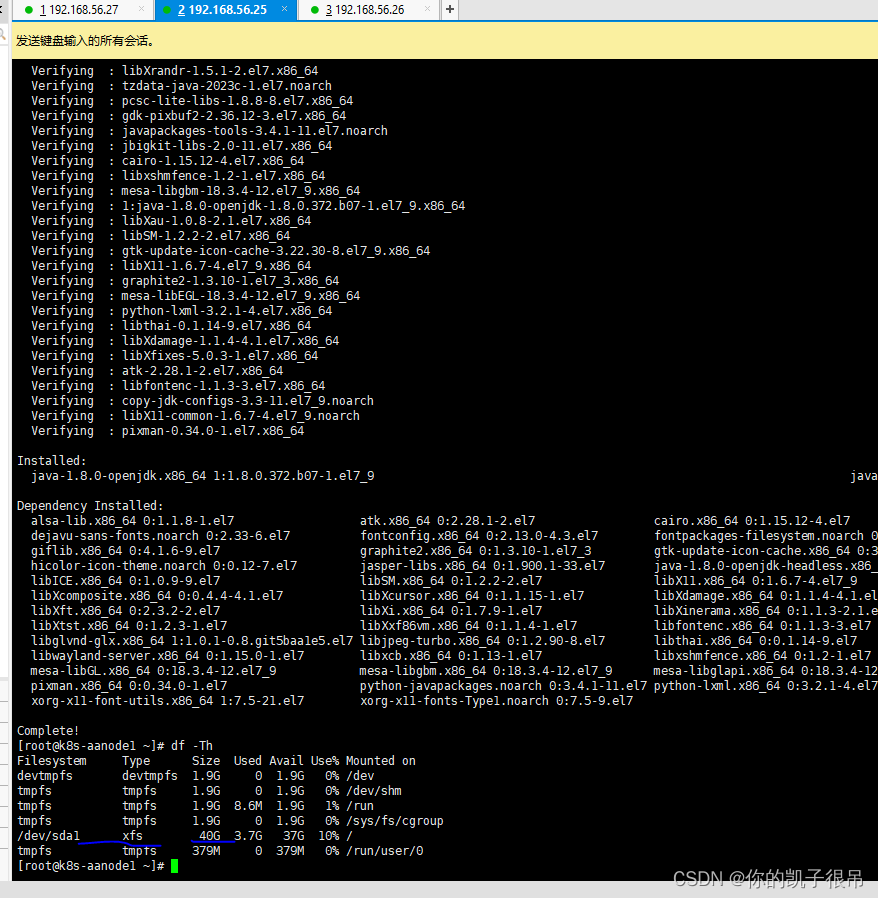

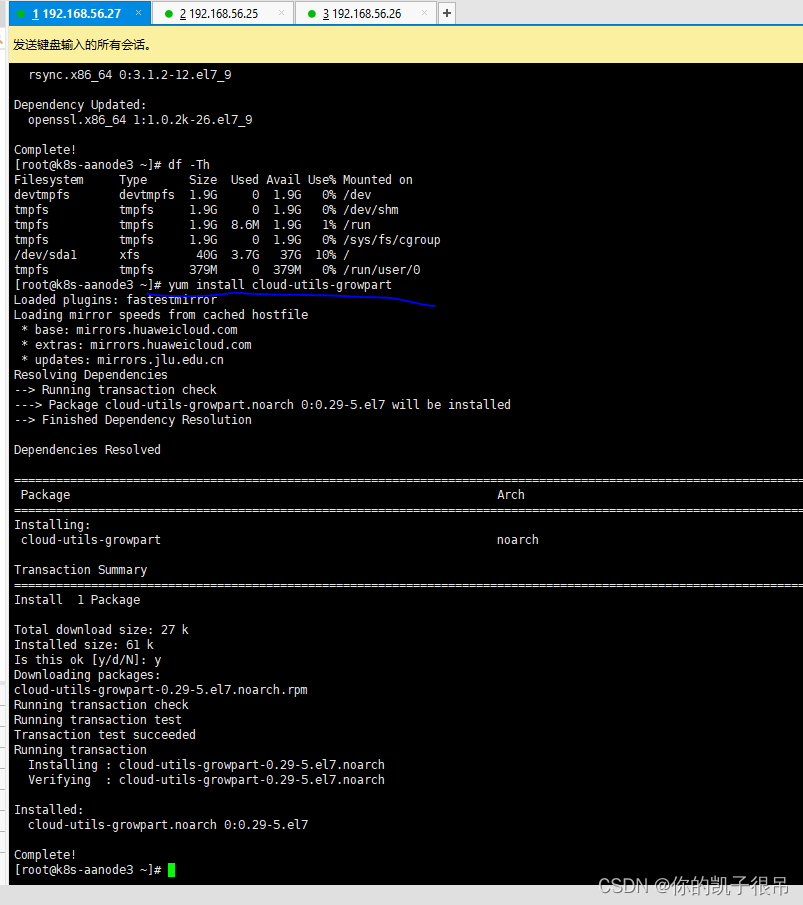

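Expanding the disk

A CDH deployment needs plenty of memory and disk space. The steps below expand the 40 GB disk to 600 GB

First look at the memory available on a single server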

free -h

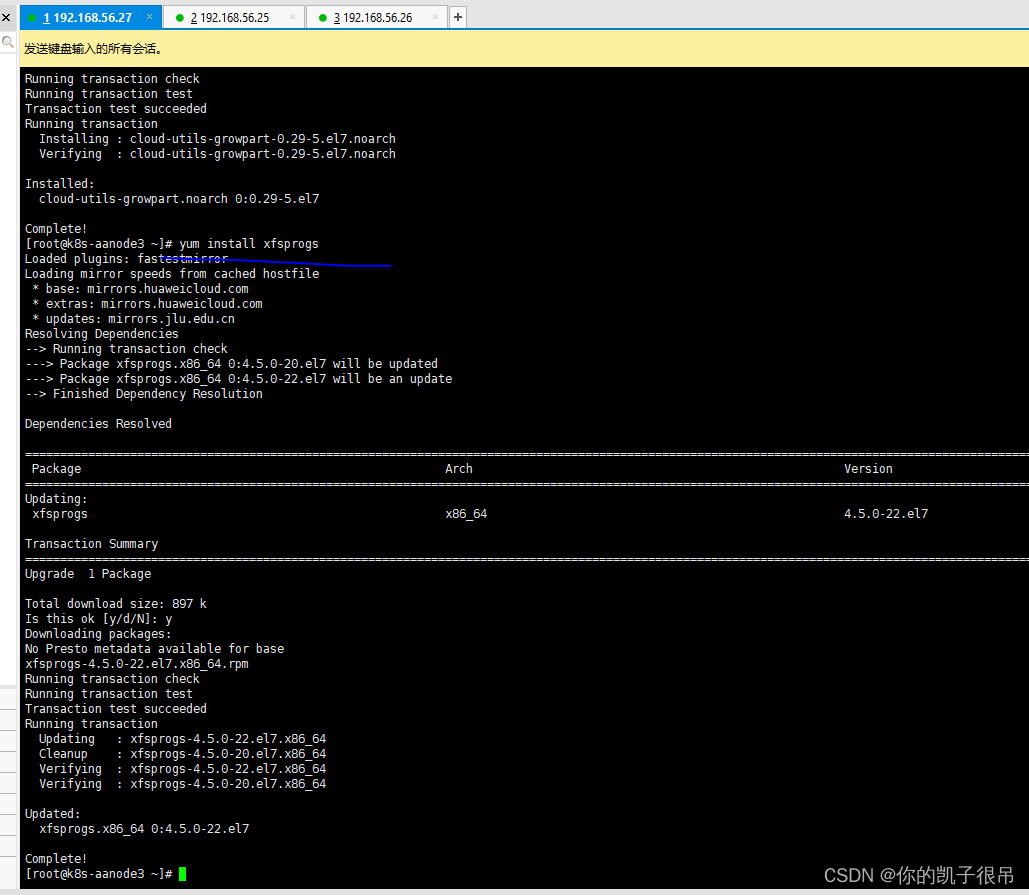

yum install cloud-utils-growpart

yum install xfsprogs

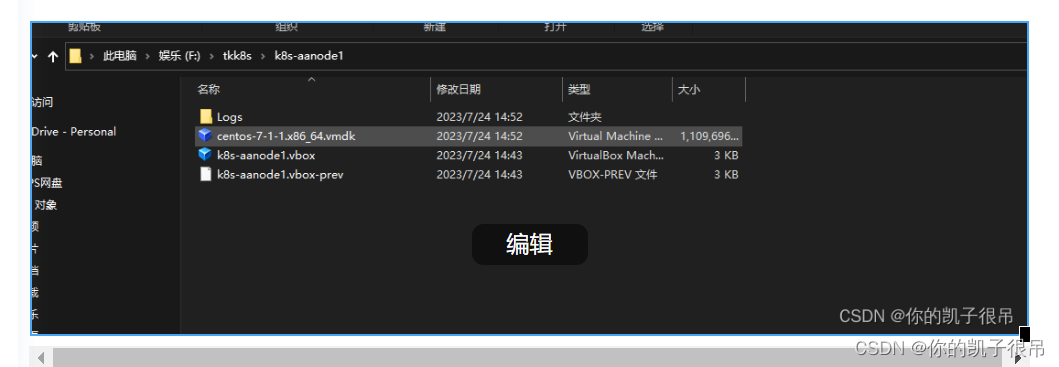

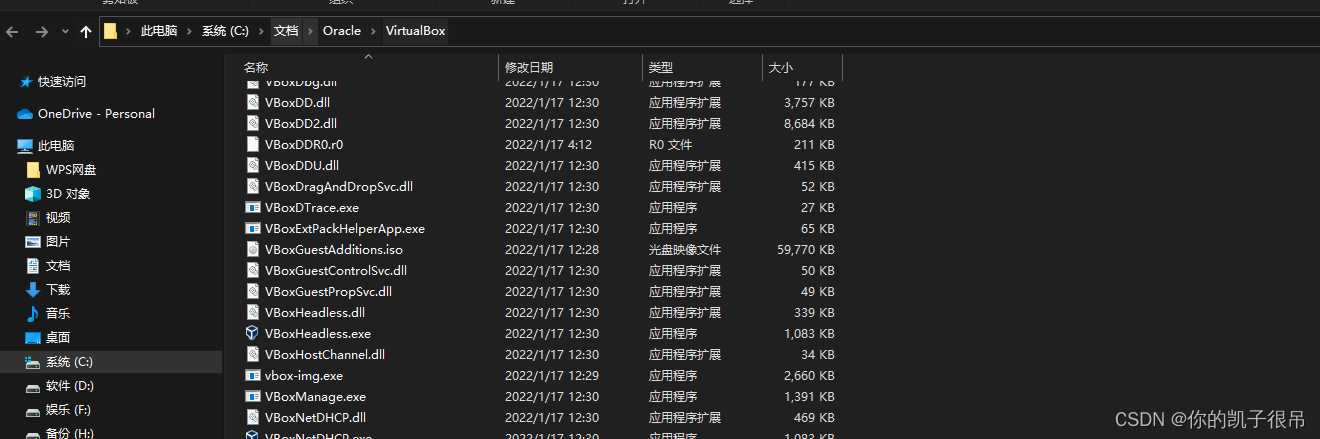

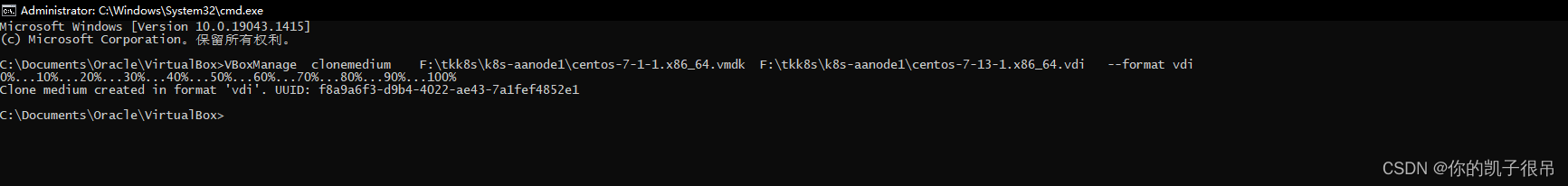

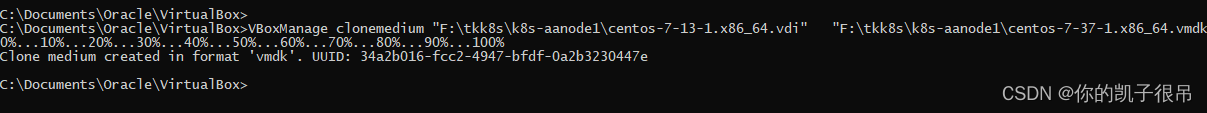

Right-click, open the file location, and then open cmd in that folder

Make sure the VM is shut down before running these commands

VBoxManage clonemedium F:\tkk8s\k8s-aanode1\centos-7-1-1.x86_64.vmdk F:\tkk8s\k8s-aanode1\centos-7-13-1.x86_64.vdi --format vdi

VBoxManage modifymedium "F:\tkk8s\k8s-aanode1\centos-7-13-1.x86_64.vdi" --resize 614400

VBoxManage clonemedium "F:\tkk8s\k8s-aanode1\centos-7-13-1.x86_64.vdi" "F:\tkk8s\k8s-aanode1\centos-7-37-1.x86_64.vmdk" --format vmdk
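The value passed to --resize is in megabytes, which is where 614400 comes from (600 × 1024). A tiny sketch of the conversion, with gb_to_mb as a hypothetical helper name:

```shell
# Sketch only: convert a size in GB to the MB value expected by
# "VBoxManage modifymedium ... --resize".
gb_to_mb() {
    echo $(( $1 * 1024 ))
}

# Usage: VBoxManage modifymedium "disk.vdi" --resize "$(gb_to_mb 600)"
```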

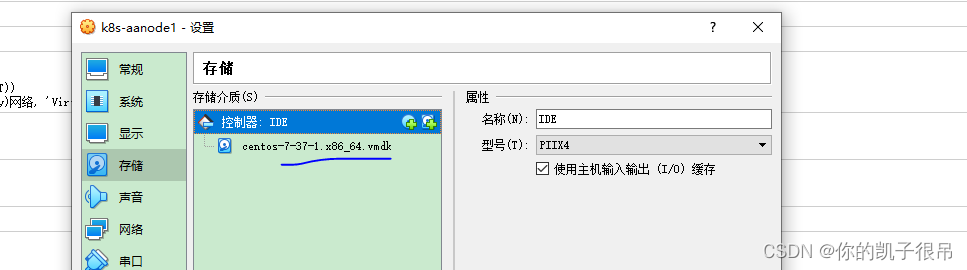

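Remove these two files

Select the newest disk and delete the original one

Check the result with fdisk -l

Run growpart /dev/sda 1 (growpart takes the disk and the partition number as two separate arguments)

Then run xfs_growfs /dev/sda1 so the filesystem grows to 600 GB. Repeat these steps on every VM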
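growpart wants the disk and the partition number separately, while fdisk -l reports the partition as a single name such as /dev/sda1. A small sketch for splitting it, assuming classic sdX/vdX style names (it would not handle names like nvme0n1p1); split_partition is a hypothetical helper name.

```shell
# Sketch only: turn "/dev/sda1" into "/dev/sda 1" for growpart.
split_partition() {
    # $1 = partition device such as /dev/sda1
    part="$1"
    num=$(printf '%s' "$part" | sed -E 's/^.*[^0-9]([0-9]+)$/\1/')
    disk=$(printf '%s' "$part" | sed -E 's/[0-9]+$//')
    echo "$disk $num"
}

# Usage (as root, inside the guest, assuming /dev/sda1 is the XFS root filesystem):
#   set -- $(split_partition /dev/sda1)
#   growpart "$1" "$2" && xfs_growfs /
```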

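System initialization

Configure hostnames

hostnamectl set-hostname n1   # on the first node

hostnamectl set-hostname n2   # on the second node

hostnamectl set-hostname n3   # on the third node

hostname

Run bash to start a new shell so the prompt picks up the new hostname

Configure system networking (all nodes)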

vi /etc/sysconfig/network

NETWORKING=yes

HOSTNAME=n1

cat /etc/sysconfig/network

Configure the hosts file

# Back up the old hosts file

mkdir -p /opt/backups && ls -l /opt/backups

cp /etc/hosts /opt/backups

ls -l /opt/backups

cat /opt/backups/hosts

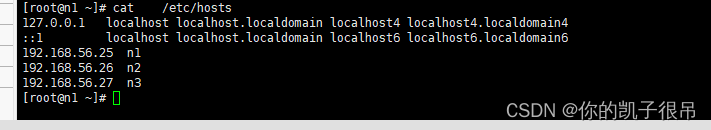

sudo tee /etc/hosts <<-'EOF'

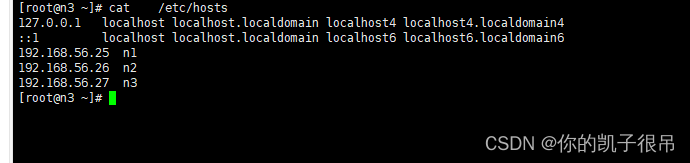

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

192.168.56.25 n1

192.168.56.26 n2

192.168.56.27 n3

EOF

cat /etc/hosts
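The hosts entries follow the same 192.168.56.(24+i) rule as the Vagrantfile, so for a different node count they can be generated instead of typed. A minimal sketch, with render_hosts as a hypothetical helper name:

```shell
# Sketch only: print a hosts file for nodes n1..nN on 192.168.56.25 onward.
render_hosts() {
    # $1 = number of nodes
    echo "127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4"
    i=1
    while [ "$i" -le "$1" ]; do
        echo "192.168.56.$((24 + i)) n${i}"
        i=$((i + 1))
    done
}

# Usage (as root): render_hosts 3 > /etc/hosts
```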

# Restart the network

/etc/init.d/network restart

vim /etc/hosts

After editing the file on n1, copy it to the other nodes with scp

scp /etc/hosts root@n2:/etc/

scp /etc/hosts root@n3:/etc/

cat /etc/hosts

Set the hostname in /etc/sysconfig/network on each node, either with vi /etc/sysconfig/network or with the tee commands below

On n1:

sudo tee /etc/sysconfig/network <<-'EOF'

NETWORKING=yes

HOSTNAME=n1

EOF

On n2:

sudo tee /etc/sysconfig/network <<-'EOF'

NETWORKING=yes

HOSTNAME=n2

EOF

On n3:

sudo tee /etc/sysconfig/network <<-'EOF'

NETWORKING=yes

HOSTNAME=n3

EOF

cat /etc/sysconfig/network

Disable the firewall

scp /opt/auto_config_system_initializ_v1.sh root@192.168.56.26:/opt

$ systemctl stop firewalld

$ systemctl disable firewalld

Disable SELinux

Run getenforce to check the SELinux status:

[root@cm-server ~]# getenforce

Permissive

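If the output is Enforcing, it needs to be changed; otherwise skip this step. Edit /etc/selinux/config and change SELINUX=enforcing to SELINUX=disabled. The commands below make the change in the file and switch SELinux to permissive mode right away (the disabled setting itself takes effect after a reboot):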

[root@cm-server ~]# sed -i s/SELINUX=enforcing/SELINUX=disabled/g /etc/selinux/config

[root@cm-server ~]# setenforce 0
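The sed one-liner above only matches SELINUX=enforcing. If some nodes are already set to permissive in the file, a slightly broader substitution covers both cases. A minimal sketch, with selinux_disable_in as a hypothetical helper name:

```shell
# Sketch only: set SELINUX=disabled whether the file currently says
# enforcing or permissive. Other lines (e.g. SELINUXTYPE) are untouched.
selinux_disable_in() {
    # $1 = path to the SELinux config file (normally /etc/selinux/config)
    cfg="$1"
    tmp="${cfg}.tmp.$$"
    sed -E 's/^SELINUX=(enforcing|permissive)$/SELINUX=disabled/' "$cfg" > "$tmp" \
        && cat "$tmp" > "$cfg" && rm -f "$tmp"
}

# Usage (as root): selinux_disable_in /etc/selinux/config && setenforce 0
```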

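Install the httpd service

yum -y install httpd httpd-devel

Start httpd: systemctl start httpd

Enable it at boot: systemctl enable httpd

Install yum-utils and createrepo

yum -y install yum-utils createrepo

First check whether the ntp package is already installed

rpm -q ntp

Install the NTP service (all nodes)

yum -y install ntp ntpdate

Start the NTP service

systemctl start ntpd

Enable it at boot: systemctl enable ntpd

ps also shows that the ntpd process is running

ps -ef | grep ntpd

Configure the time synchronization file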

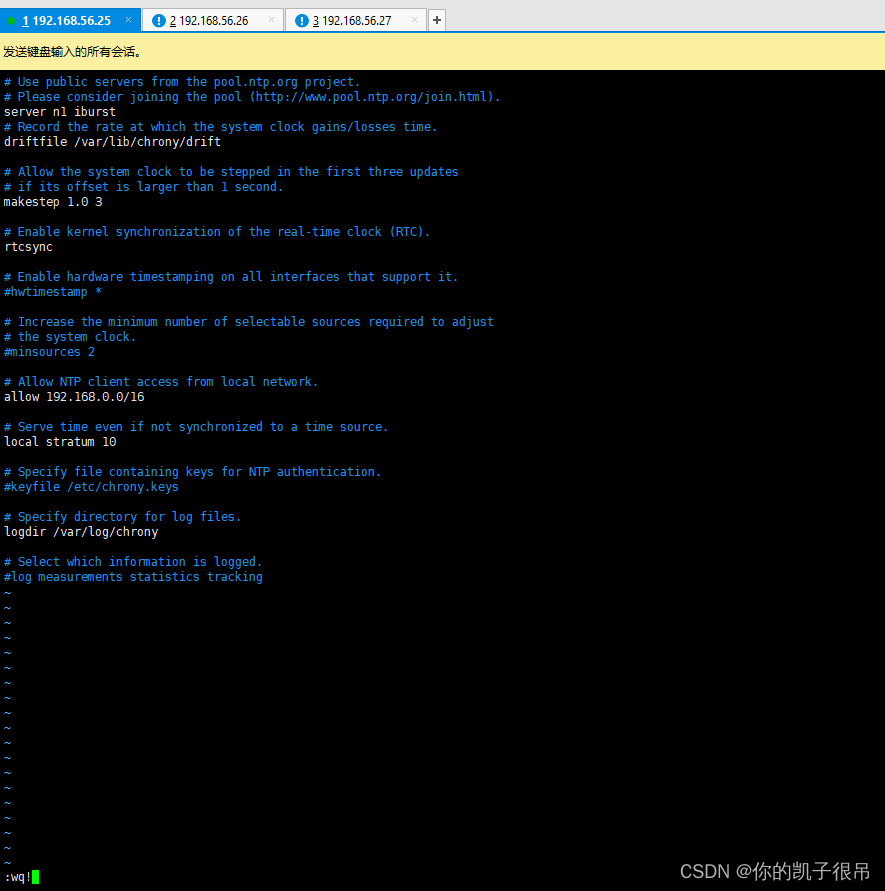

vim /etc/chrony.conf

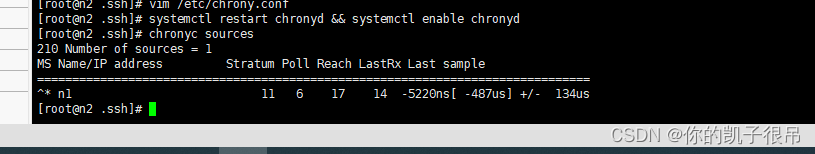

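After editing, restart the chronyd service and enable it at boot (ntpd and chronyd should not run at the same time, so keep only one of them active)

systemctl restart chronyd && systemctl enable chronyd

Check the time synchronization status: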

chronyc sources

Install Python

$ python --version   (check first; CentOS 7 normally ships Python 2.7.5, in which case the steps below can be skipped)

$ yum install -y python

$ ln -s /usr/bin/python2 /usr/bin/python

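Passwordless SSH setup

On every node, run ssh-keygen -t rsa to generate a private and public key pair and set up passwordless login (just press Enter at each prompt)

ssh-keygen -t rsa

cd /root/.ssh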

ssh-copy-id n1

ssh-copy-id n2

ssh-copy-id n3

On each node, copy the public key to a file named authorized_keys_n1, authorized_keys_n2, or authorized_keys_n3 respectively

Steps:

On n1: cp id_rsa.pub authorized_keys_n1

On n2: cp id_rsa.pub authorized_keys_n2

On n3: cp id_rsa.pub authorized_keys_n3

Send the public keys of the other nodes to the /root/.ssh directory on n1 with scp authorized_keys_n2(n3) root@n1:/root/.ssh

On n2: scp authorized_keys_n2 root@n1:/root/.ssh

On n3: scp authorized_keys_n3 root@n1:/root/.ssh

On n1, append the public keys of all three nodes to the authorized_keys file

[root@n1 .ssh]# cat authorized_keys_n1>> authorized_keys

[root@n1 .ssh]# cat authorized_keys_n2 >>authorized_keys

[root@n1 .ssh]# cat authorized_keys_n3 >>authorized_keys

Distribute this file to the other nodes (run on n1)

scp authorized_keys root@n2:/root/.ssh

scp authorized_keys root@n3:/root/.ssh

Test whether passwordless SSH login works

ssh n1

ssh n2

ssh n3
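Logging in to each node by hand works, but a loop gives a quicker yes/no answer. This is a minimal sketch: BatchMode=yes makes ssh fail instead of prompting for a password, so a node that still needs a password shows up as FAILED. The check_ssh function and the SSH_CMD override are hypothetical names of my own.

```shell
# Sketch only: report whether key-based login works for each host.
# SSH_CMD can be overridden (for example in a dry run); it defaults to ssh.
SSH_CMD="${SSH_CMD:-ssh}"

check_ssh() {
    rc=0
    for host in "$@"; do
        if $SSH_CMD -o BatchMode=yes -o ConnectTimeout=5 "$host" true </dev/null; then
            echo "$host ok"
        else
            echo "$host FAILED"
            rc=1
        fi
    done
    return $rc
}

# Usage: check_ssh n1 n2 n3
```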

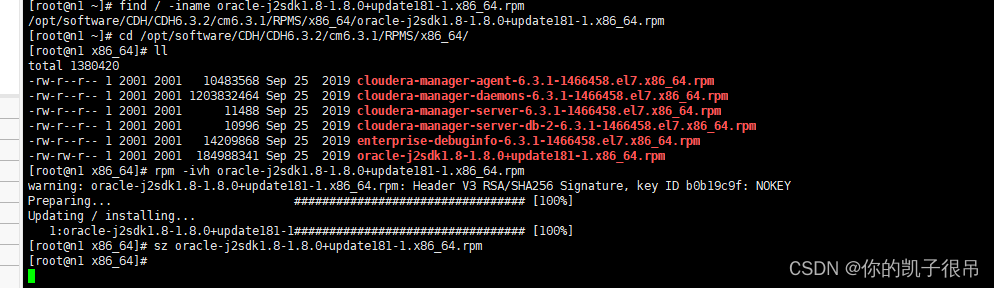

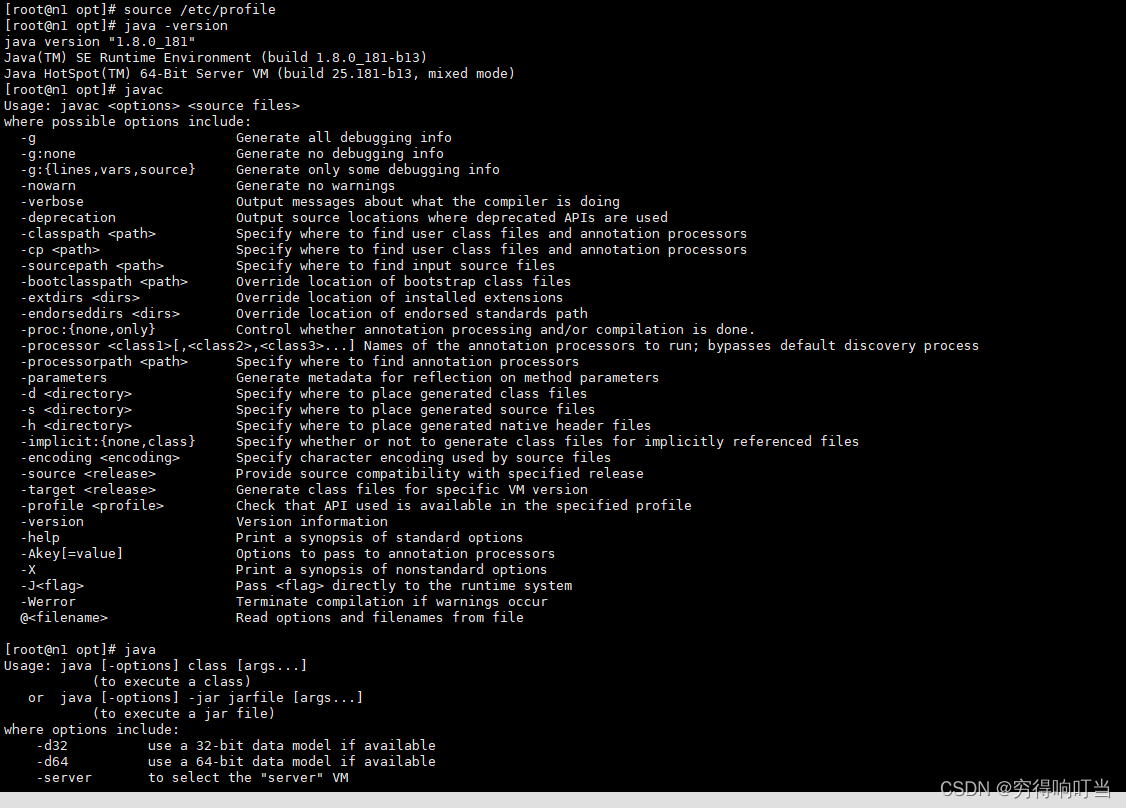

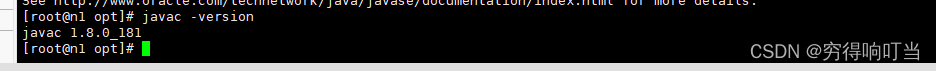

Install the JDK (deploy on all nodes)

find / -iname oracle-j2sdk1.8-1.8.0+update181-1.x86_64.rpm

cd /opt/software/CDH/CDH6.3.2/cm6.3.1/RPMS/x86_64/

rpm -ivh oracle-j2sdk1.8-1.8.0+update181-1.x86_64.rpm

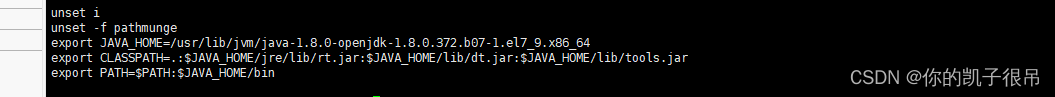

Configure environment variables (append the following lines to /etc/profile, then reload it)

export JAVA_HOME=/usr/java/jdk1.8.0_181-cloudera

export CLASSPATH=.:$JAVA_HOME/jre/lib:$JAVA_HOME/lib:$JAVA_HOME/lib/tools.jar

PATH=$PATH:$HOME/bin:$JAVA_HOME/bin

source /etc/profile
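Since the same lines have to go into /etc/profile on every node, it helps to append them in a way that is safe to run more than once. A minimal sketch, with add_java_env as a hypothetical helper name:

```shell
# Sketch only: append the JDK variables to a profile file unless
# JAVA_HOME is already exported there.
add_java_env() {
    # $1 = profile file (normally /etc/profile), $2 = JDK directory
    profile="$1"
    jdk="$2"
    if grep -q '^export JAVA_HOME=' "$profile" 2>/dev/null; then
        return 0
    fi
    {
        echo "export JAVA_HOME=$jdk"
        echo 'export CLASSPATH=.:$JAVA_HOME/jre/lib:$JAVA_HOME/lib:$JAVA_HOME/lib/tools.jar'
        echo 'export PATH=$PATH:$HOME/bin:$JAVA_HOME/bin'
    } >> "$profile"
}

# Usage (as root):
#   add_java_env /etc/profile /usr/java/jdk1.8.0_181-cloudera && source /etc/profile
```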

Verify Java

java -version

java

javac

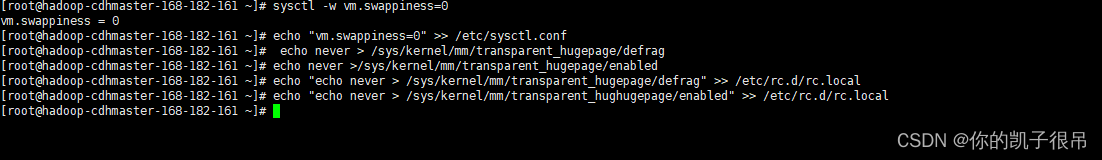

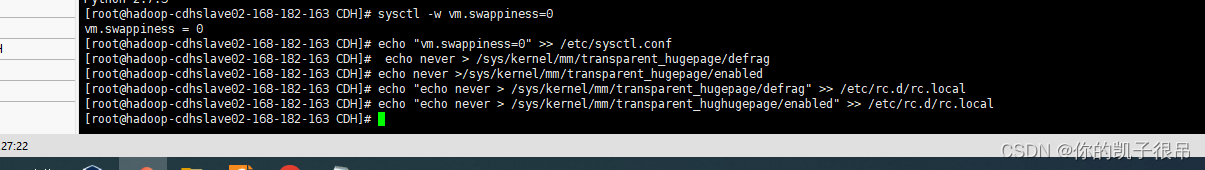

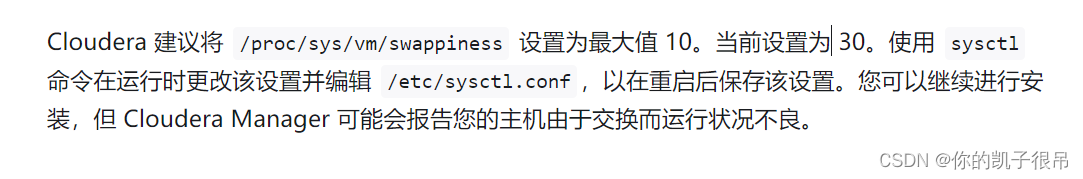

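Swap and huge page settings (run on every cluster node: minimize swapping with vm.swappiness=0 and disable transparent huge pages, otherwise the environment check during CDH cluster installation reports errors)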

$ sysctl -w vm.swappiness=0

$ echo "vm.swappiness=0" >> /etc/sysctl.conf

$ echo never > /sys/kernel/mm/transparent_hugepage/defrag

$ echo never >/sys/kernel/mm/transparent_hugepage/enabled

$ echo "echo never > /sys/kernel/mm/transparent_hugepage/defrag" >> /etc/rc.d/rc.local

$ echo "echo never > /sys/kernel/mm/transparent_hugepage/enabled" >> /etc/rc.d/rc.local

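Host parameter tuning

CDH Manager needs some Linux system-level tuning, mainly of two kinds: disabling transparent huge pages and adjusting the swap setting. See the official Cloudera site for details.

Adjust swappiness

The vm.swappiness parameter controls how readily the kernel moves memory out to the swap partition. Its value ranges from 0 to 100. A value of 0 makes the kernel use physical memory as much as possible before touching swap; a value of 100 makes it use swap aggressively, moving data out of memory early.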

[root@cm-server ~]# echo vm.swappiness=0 >> /etc/sysctl.conf

[root@cm-server ~]# sysctl -p
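Appending with >> adds a new line every time the command is run, so /etc/sysctl.conf can end up with several vm.swappiness entries. A minimal sketch that replaces any earlier entry instead; set_sysctl_conf is a hypothetical helper name, and the key is used as a plain regular expression, so the dot matches any character.

```shell
# Sketch only: keep exactly one "key=value" line for the given key.
set_sysctl_conf() {
    # $1 = config file, $2 = key, $3 = value
    conf="$1"; key="$2"; val="$3"
    tmp="${conf}.tmp.$$"
    # Drop any earlier assignment of the key, then append the new one.
    grep -v -E "^[[:space:]]*${key}[[:space:]]*=" "$conf" > "$tmp" 2>/dev/null || true
    echo "${key}=${val}" >> "$tmp"
    cat "$tmp" > "$conf" && rm -f "$tmp"
}

# Usage (as root): set_sysctl_conf /etc/sysctl.conf vm.swappiness 0 && sysctl -p
```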

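Disable transparent huge pages

Most Linux platforms include a feature called transparent huge pages, which interacts poorly with Hadoop workloads and can seriously degrade performance.

Check whether transparent huge pages are enabled: [always] madvise never means enabled, always madvise [never] means disabled.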

[root@cm-server ~]# cat /sys/kernel/mm/transparent_hugepage/defrag

always madvise [never]

If it is enabled, disable transparent huge pages as follows:

[root@cm-server ~]# echo never > /sys/kernel/mm/transparent_hugepage/enabled

[root@cm-server ~]# echo never > /sys/kernel/mm/transparent_hugepage/defrag

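Also add the commands above to /etc/rc.d/rc.local so the setting survives a reboot. On CentOS 7 that file must be executable (chmod +x /etc/rc.d/rc.local) for it to run at boot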
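The active setting is the word shown in square brackets, which is easy to misread across three nodes and two files. A minimal sketch that extracts it, with thp_state as a hypothetical helper name:

```shell
# Sketch only: print the bracketed (active) value from a
# transparent_hugepage status line such as "always madvise [never]".
thp_state() {
    # $1 = contents of the enabled or defrag file
    printf '%s\n' "$1" | sed -E 's/.*\[([a-z]+)\].*/\1/'
}

# Usage:
#   [ "$(thp_state "$(cat /sys/kernel/mm/transparent_hugepage/enabled)")" = never ] \
#       || echo "transparent huge pages are still active"
```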

Install MySQL (cdhmaster)

$ wget http://repo.mysql.com/mysql-community-release-el7-5.noarch.rpm

$ rpm -ivh mysql-community-release-el7-5.noarch.rpm

$ yum update -y

$ yum install mysql-server -y

Configure /etc/my.cnf

cat >> /etc/my.cnf << EOF

[mysqld]

datadir=/var/lib/mysql

socket=/var/lib/mysql/mysql.sock

transaction-isolation = READ-COMMITTED

# Disabling symbolic-links is recommended to prevent assorted security risks;

# to do so, uncomment this line:

symbolic-links = 0

key_buffer_size = 32M

max_allowed_packet = 16M

thread_stack = 256K

thread_cache_size = 64

query_cache_limit = 8M

query_cache_size = 64M

query_cache_type = 1

max_connections = 550

#expire_logs_days = 10

#max_binlog_size = 100M

#log_bin should be on a disk with enough free space.

#Replace '/var/lib/mysql/mysql_binary_log' with an appropriate path for your

#system and chown the specified folder to the mysql user.

log_bin=/var/lib/mysql/mysql_binary_log

#In later versions of MySQL, if you enable the binary log and do not set

#a server_id, MySQL will not start. The server_id must be unique within

#the replicating group.

server_id=1

binlog_format = mixed

read_buffer_size = 2M

read_rnd_buffer_size = 16M

sort_buffer_size = 8M

join_buffer_size = 8M

# InnoDB settings

innodb_file_per_table = 1

innodb_flush_log_at_trx_commit = 2

innodb_log_buffer_size = 64M

innodb_buffer_pool_size = 4G

innodb_thread_concurrency = 8

innodb_flush_method = O_DIRECT

innodb_log_file_size = 512M

[mysqld_safe]

log-error=/var/log/mysqld.log

pid-file=/var/run/mysqld/mysqld.pid

sql_mode=STRICT_ALL_TABLES

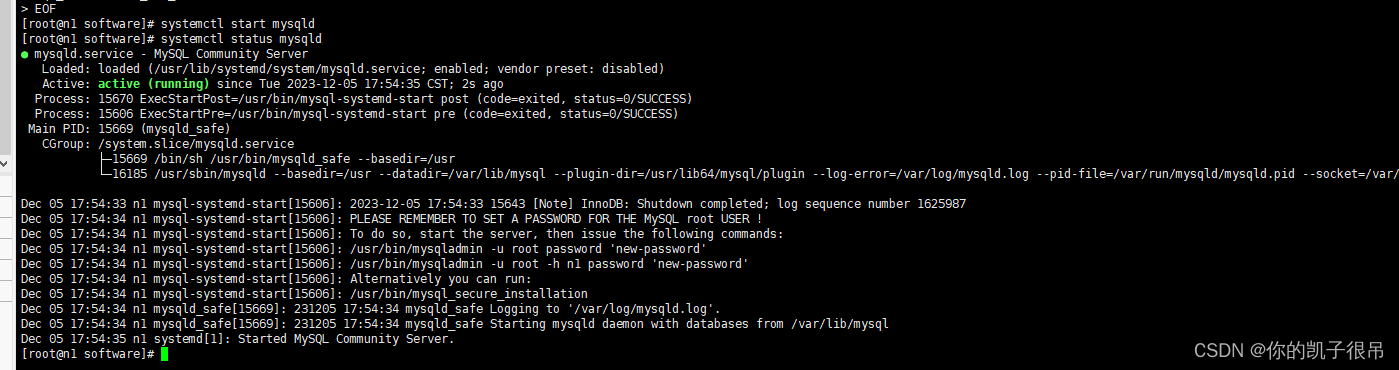

EOF启动服务

# 启动

$ systemctl start mysqld

$ systemctl status mysqld

# Enable at boot

$ systemctl enable mysqld

# Log in; there is no password by default

$ mysql

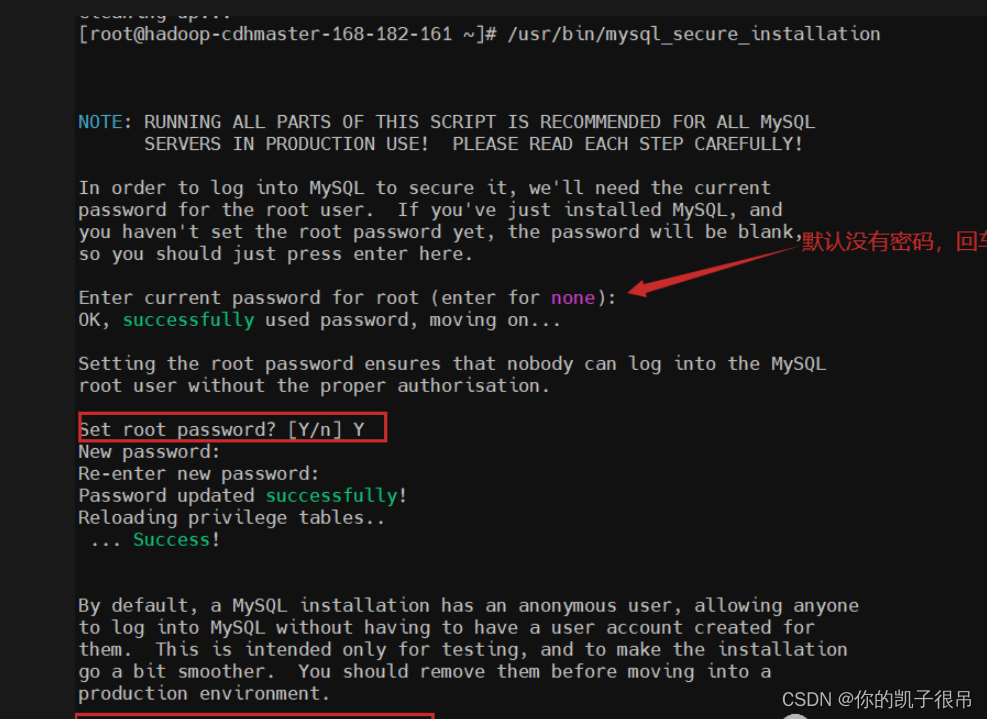

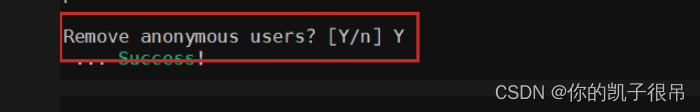

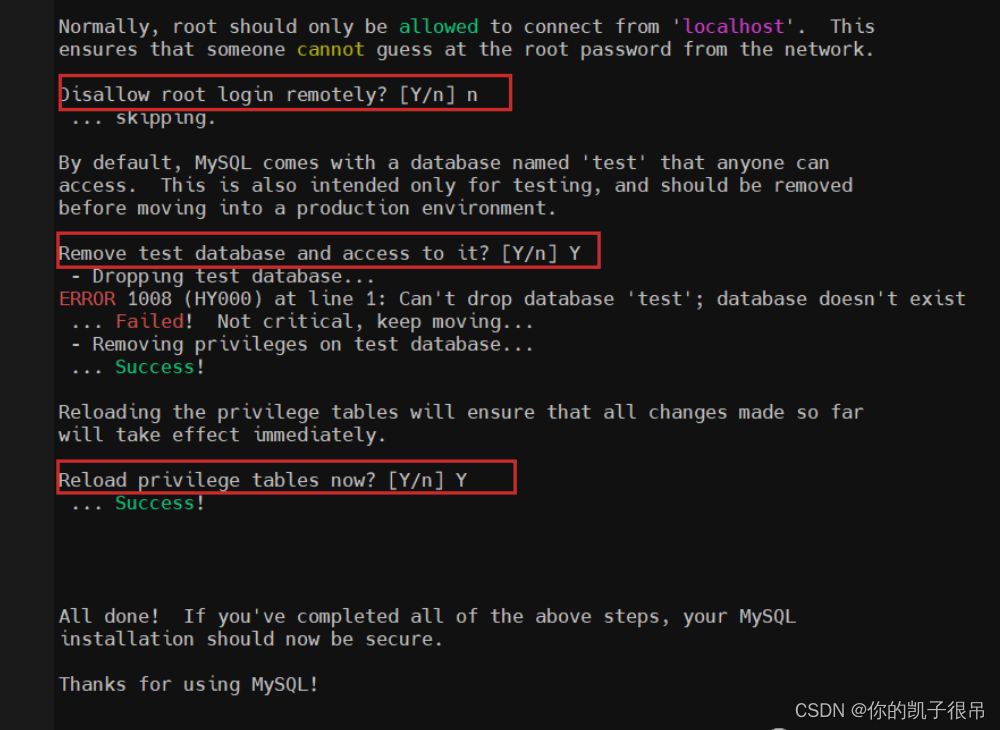

Set the root password

$ /usr/bin/mysql_secure_installation

mysql -uroot -p

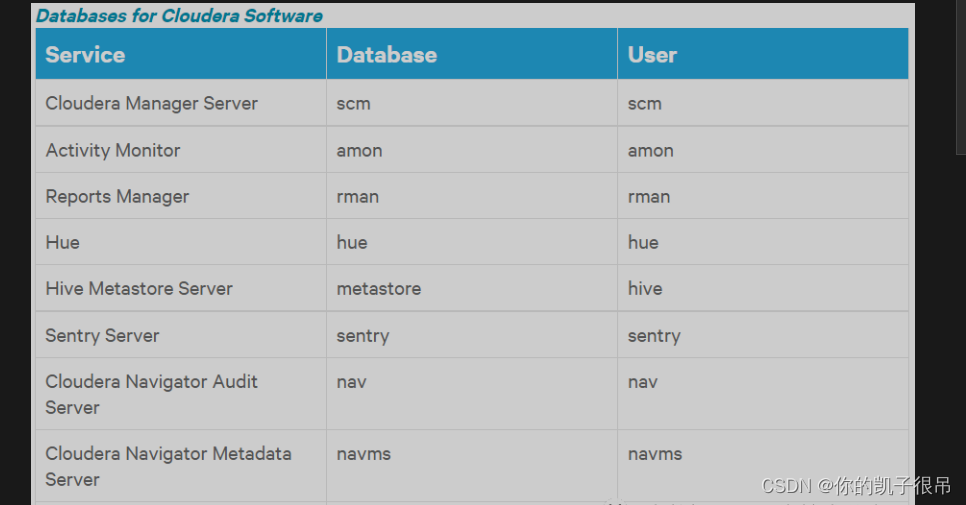

Create databases for the Cloudera services

Password: 123456@abc.COM

### scm

CREATE DATABASE scm DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;

GRANT ALL ON scm.* TO 'scm'@'%' IDENTIFIED BY '123456';

### amon

CREATE DATABASE amon DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;

GRANT ALL ON amon.* TO 'amon'@'%' IDENTIFIED BY '123456';

### rman

CREATE DATABASE rman DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;

GRANT ALL ON rman.* TO 'rman'@'%' IDENTIFIED BY '123456';

### hue

CREATE DATABASE hue DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;

GRANT ALL ON hue.* TO 'hue'@'%' IDENTIFIED BY '123456';

### hive

CREATE DATABASE hive DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;

GRANT ALL ON hive.* TO 'hive'@'%' IDENTIFIED BY '123456';

### sentry

CREATE DATABASE sentry DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;

GRANT ALL ON sentry.* TO 'sentry'@'%' IDENTIFIED BY '123456';

### nav

CREATE DATABASE nav DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;

GRANT ALL ON nav.* TO 'nav'@'%' IDENTIFIED BY '123456';

### navms

CREATE DATABASE navms DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;

GRANT ALL ON navms.* TO 'navms'@'%' IDENTIFIED BY '123456';

### oozie

CREATE DATABASE oozie DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;

GRANT ALL ON oozie.* TO 'oozie'@'%' IDENTIFIED BY '123456';

# Finally, flush the privileges

flush privileges;

### Check

show databases;
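The nine CREATE DATABASE / GRANT pairs above differ only in the name, so they can be generated from a list. A minimal sketch, assuming each service user is named after its database as above; gen_cdh_sql is a hypothetical helper name. It keeps the GRANT ... IDENTIFIED BY form used in this guide, which MySQL 5.x accepts.

```shell
# Sketch only: print the SQL for a list of service databases.
gen_cdh_sql() {
    # $1 = password for every service account; remaining args = database names
    pw="$1"
    shift
    for db in "$@"; do
        echo "CREATE DATABASE ${db} DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;"
        echo "GRANT ALL ON ${db}.* TO '${db}'@'%' IDENTIFIED BY '${pw}';"
    done
    echo "FLUSH PRIVILEGES;"
}

# Usage:
#   gen_cdh_sql 'your-password' scm amon rman hue hive sentry nav navms oozie | mysql -uroot -p
```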

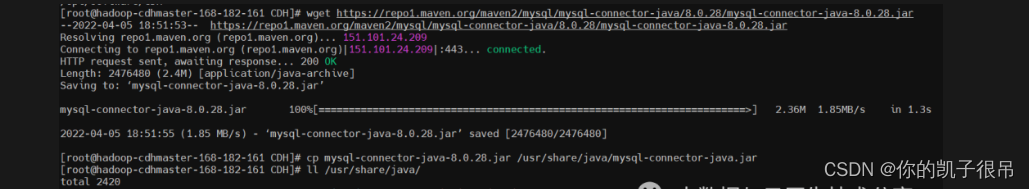

Install the MySQL JDBC driver (all nodes)

Each node uses it to connect to the database. The JDBC driver version should match the MySQL version

$ mkdir /opt/software/CDH /opt/server/CDH -p

$ cd /opt/software/CDH

$ wget https://dev.mysql.com/get/Downloads/Connector-J/mysql-connector-java-5.1.46.tar.gz

$ tar -xf mysql-connector-java-5.1.46.tar.gz

# The jar must be placed in /usr/share/java/ (create the directory if it does not exist) and must be renamed to mysql-connector-java.jar

$ mkdir -p /usr/share/java/

$ cp mysql-connector-java-5.1.46/mysql-connector-java-5.1.46.jar /usr/share/java/mysql-connector-java.jar

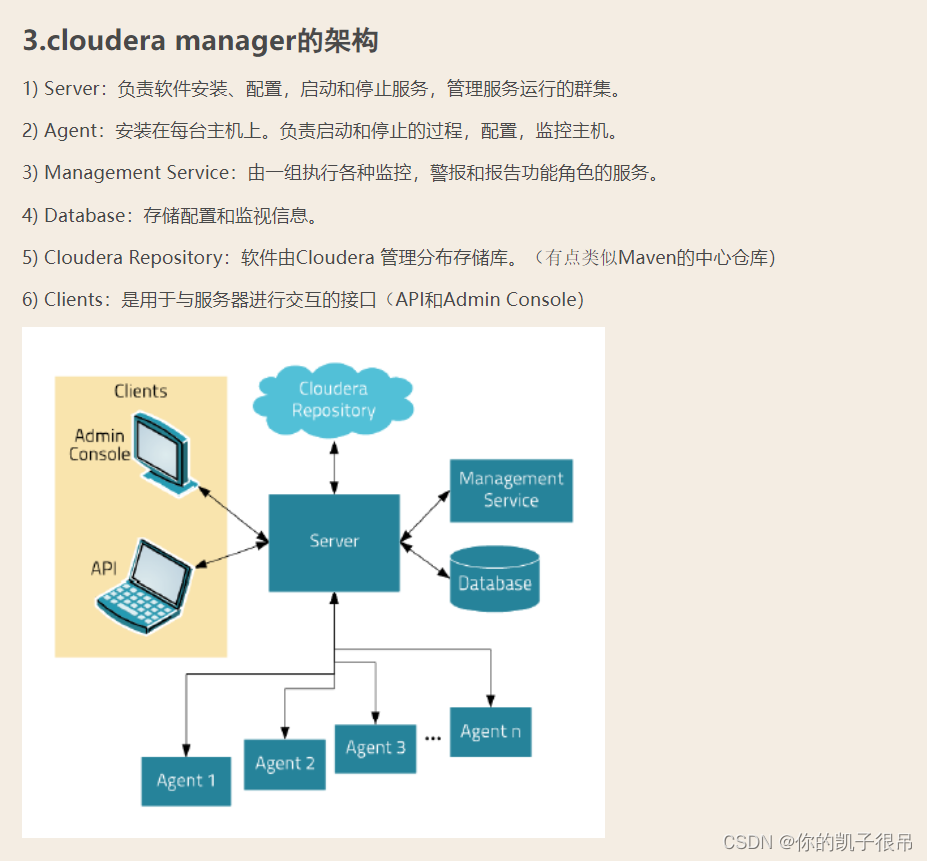

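2. Install CM Server and CM Agent

[Note] cloudera-manager-daemons provides the daemon processes and must be installed on every node.

Download the installation packages

The official CDH site no longer allows direct downloads (an account and password are required), which means the packages are no longer free. A Baidu Cloud download link is provided here.

Link: https://pan.baidu.com/s/16raZeCbAxoqx6A54Fo3-Nw

Extraction code: 6666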

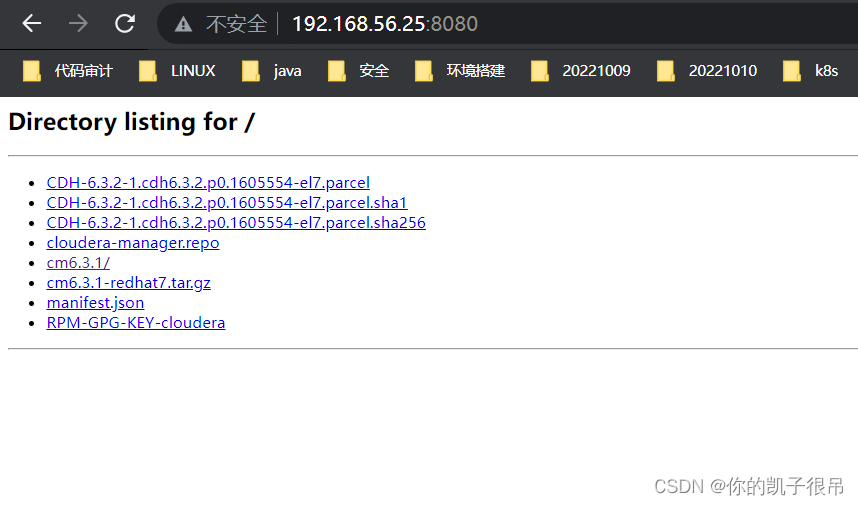

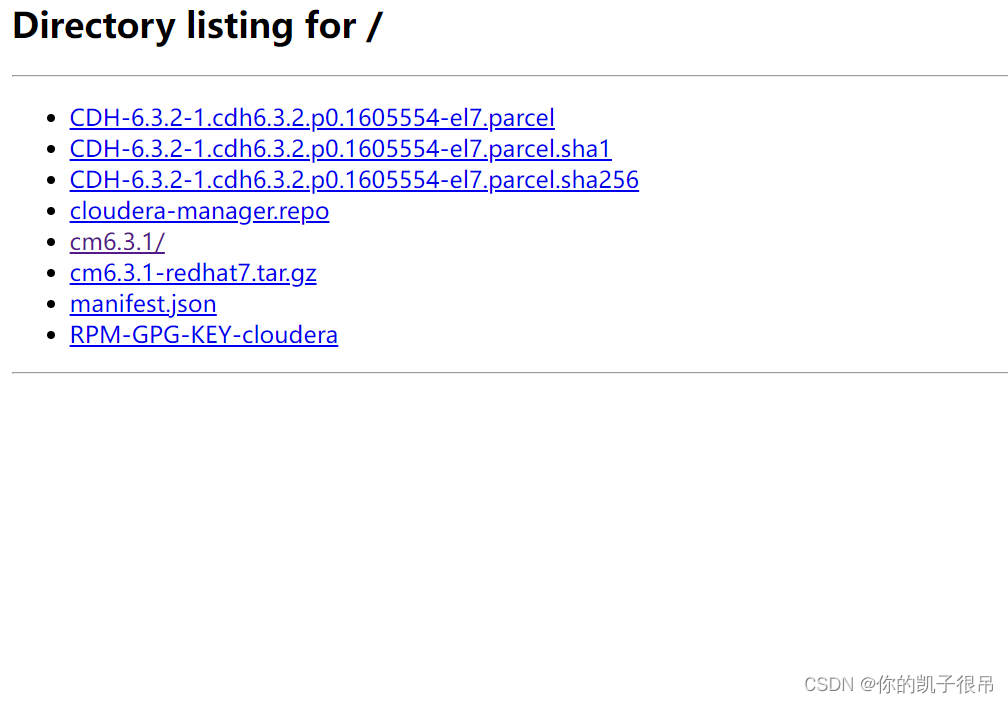

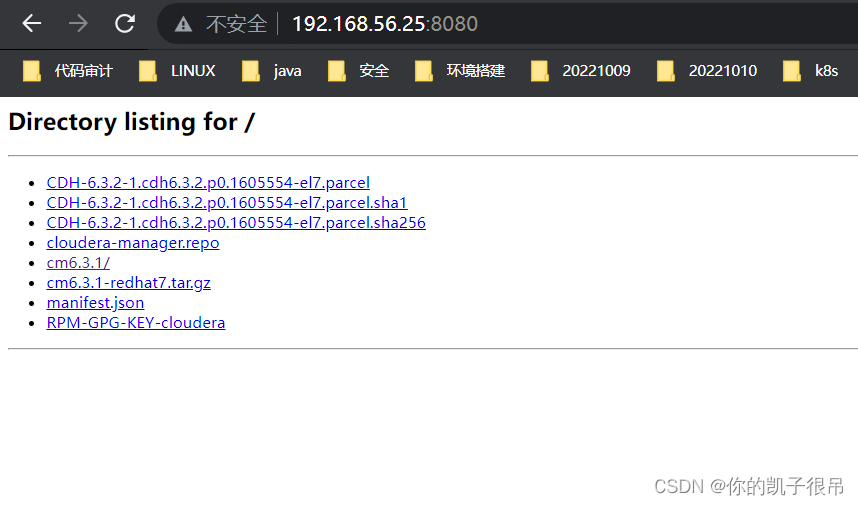

Unpack the downloaded archive and start a local HTTP server with Python to act as the local repository

$ cd /opt/software/CDH/

$ unzip CDH6.3.2.zip

$ cd CDH6.3.2

$ tar -xf cm6.3.1-redhat7.tar.gz

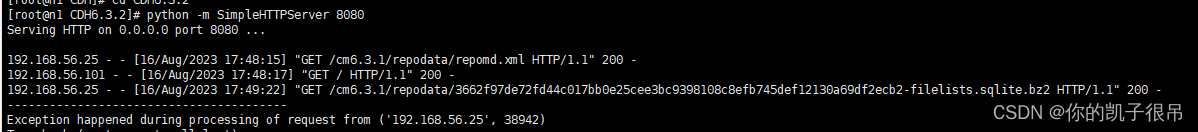

$ python -m SimpleHTTPServer 8080

Open port 8080

systemctl start firewalld

firewall-cmd --add-port=8080/tcp --zone=public --permanent

firewall-cmd --reload

List the open ports: firewall-cmd --zone=public --list-ports

Stop the firewall: systemctl stop firewalld

Configure the local yum repository (all nodes)

cat > /etc/yum.repos.d/cloudera-manager.repo << EOF

[cloudera-manager]

name=Cloudera-Manager

baseurl=http://192.168.56.25:8080/cm6.3.1/

gpgcheck=0

enabled=1

EOF

# Clear the cache and build a new one

$ yum clean all

$ yum makecache

Check that the configuration works

yum list | grep cloudera
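If the node serving the packages has a different address or port than 192.168.56.25:8080, the repo file can be generated with the values passed in. A minimal sketch, with render_cm_repo as a hypothetical helper name:

```shell
# Sketch only: print the cloudera-manager.repo contents for a given
# host and port of the local HTTP server.
render_cm_repo() {
    # $1 = host serving the packages, $2 = port
    echo "[cloudera-manager]"
    echo "name=Cloudera-Manager"
    echo "baseurl=http://$1:$2/cm6.3.1/"
    echo "gpgcheck=0"
    echo "enabled=1"
}

# Usage (as root):
#   render_cm_repo 192.168.56.25 8080 > /etc/yum.repos.d/cloudera-manager.repo
```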

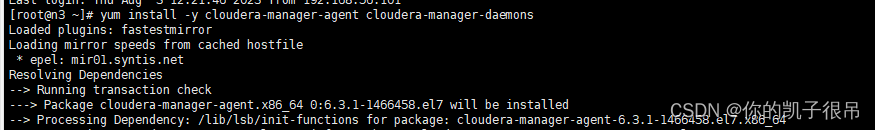

Install CM Server and Agent (cdhmaster)

yum install -y cloudera-manager-agent cloudera-manager-daemons cloudera-manager-server

Install CM Agent (other nodes)

yum install -y cloudera-manager-agent cloudera-manager-daemons

rpm -qa | grep cloudera

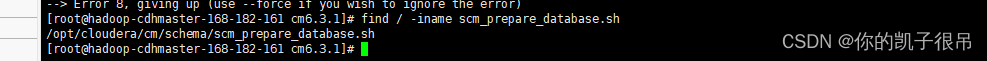

find / -iname scm_prepare_database.sh

Initialize the CM database

# /opt/cloudera/cm/schema/scm_prepare_database.sh <databaseType> <databaseName> <databaseUser> <password>

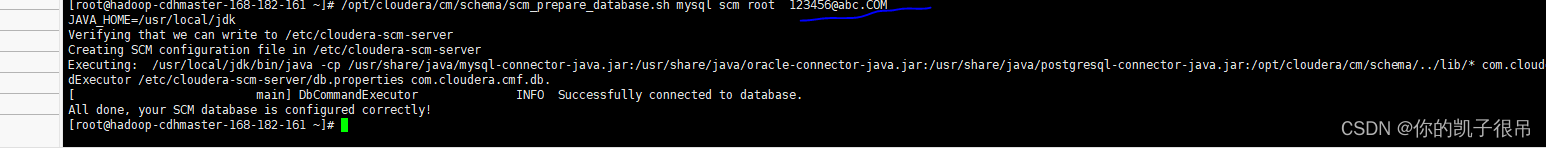

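$ /opt/cloudera/cm/schema/scm_prepare_database.sh mysql scm root 123456@abc.COM

(Replace 123456@abc.COM with your own password.)

The script updates the CM server database configuration file /etc/cloudera-scm-server/db.properties

cat -n /etc/cloudera-scm-server/db.properties

Modify the CM agent configuration

# Set server_host, which the agent uses for heartbeats to the CM server; change it to match your own hostname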

$ sed -i '/server_host=/cserver_host=n1' /etc/cloudera-scm-agent/config.ini

cat /etc/cloudera-scm-agent/config.ini | grep server_host
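The same change has to be made on every agent node. A minimal sketch of a reusable version that uses a plain substitution instead of the sed c command; set_agent_server_host is a hypothetical helper name, and it assumes the file already contains a server_host= line.

```shell
# Sketch only: point the agent at the given CM server host.
set_agent_server_host() {
    # $1 = agent config file, $2 = CM server hostname
    cfg="$1"
    tmp="${cfg}.tmp.$$"
    sed -E "s/^server_host=.*/server_host=$2/" "$cfg" > "$tmp" \
        && cat "$tmp" > "$cfg" && rm -f "$tmp"
}

# Usage (as root): set_agent_server_host /etc/cloudera-scm-agent/config.ini n1
```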

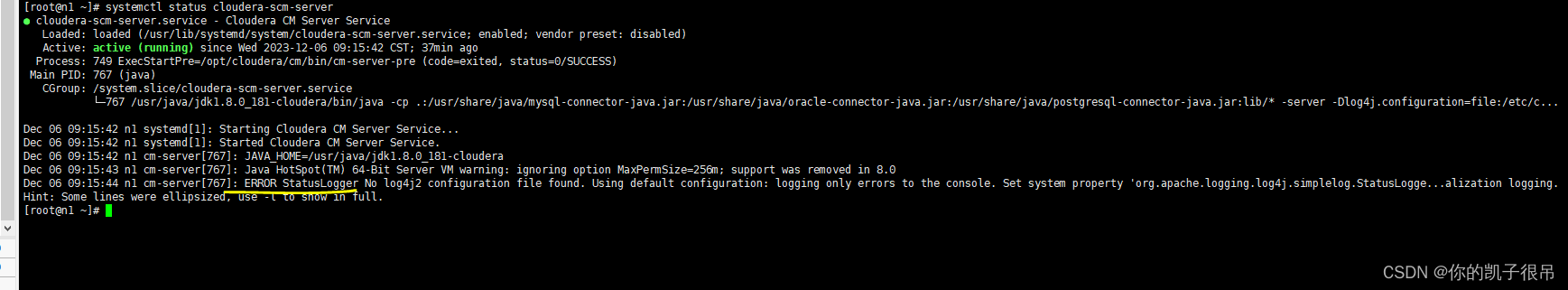

head -n 20 /etc/cloudera-scm-agent/config.ini

Start the CM service (CM node)

systemctl start cloudera-scm-server

systemctl restart cloudera-scm-server

systemctl enable cloudera-scm-server

systemctl status cloudera-scm-server

jps

# This starts the service on port 7180. Startup is slow, so allow some time

netstat -tnlp|grep 7180
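Because the server takes a while to come up, re-running netstat by hand gets tedious. A minimal sketch of a generic retry helper that repeats a command once per second until it succeeds or a timeout expires; wait_until is a hypothetical helper name.

```shell
# Sketch only: retry a command once per second until it succeeds.
wait_until() {
    # $1 = timeout in seconds; remaining args = command to retry
    remaining="$1"
    shift
    while ! "$@"; do
        remaining=$((remaining - 1))
        if [ "$remaining" -le 0 ]; then
            return 1
        fi
        sleep 1
    done
    return 0
}

# Usage: wait until something listens on port 7180, for at most five minutes
#   wait_until 300 sh -c 'netstat -tnlp | grep -q ":7180 "' && echo "CM server is up"
```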

Log directories, listed here to make troubleshooting easier:

Component logs: /var/log/

CM agent logs: /var/log/cloudera-scm-agent/

CM server logs: /var/log/cloudera-scm-server/

CM agent process logs: /var/run/cloudera-scm-agent/process/

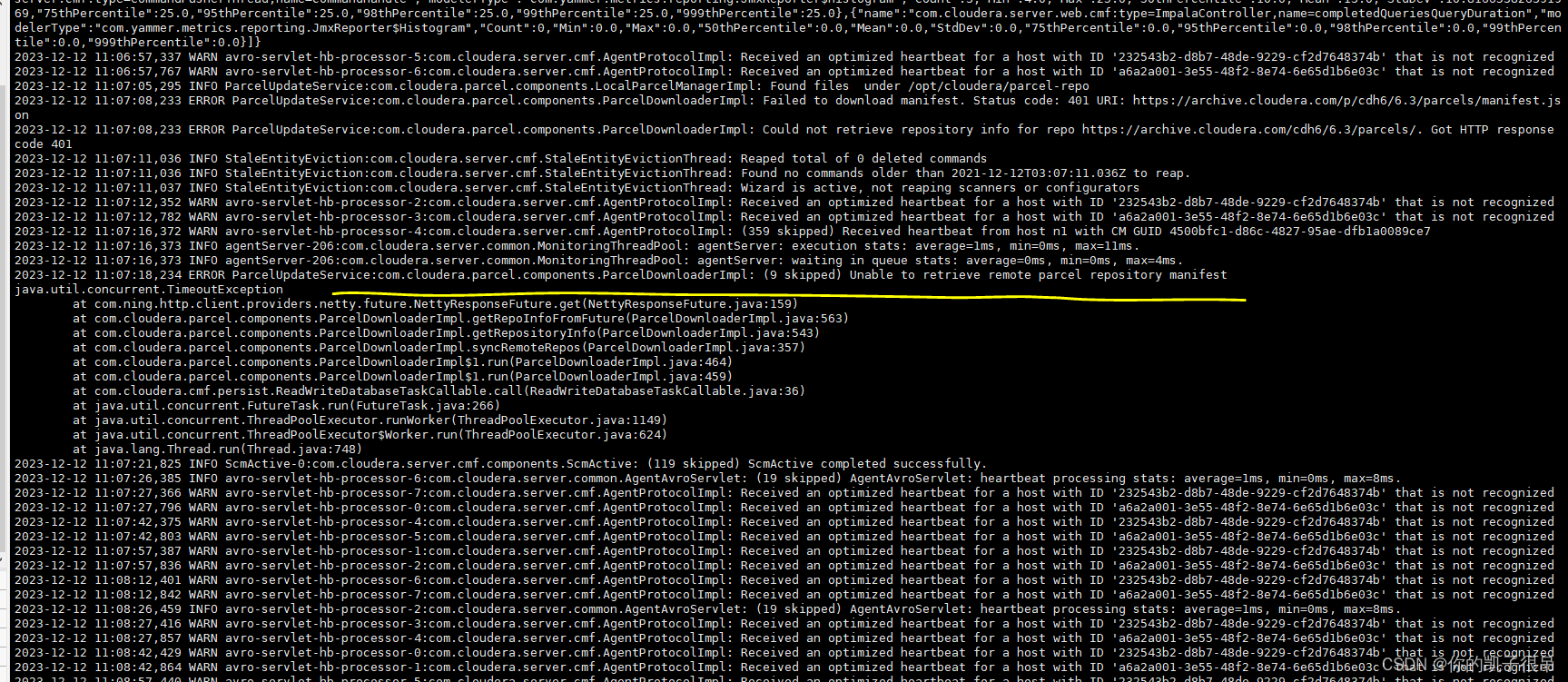

tail -f /var/log/cloudera-scm-server/cloudera-scm-server.log

tailf /var/log/cloudera-scm-server/cloudera-scm-server.log

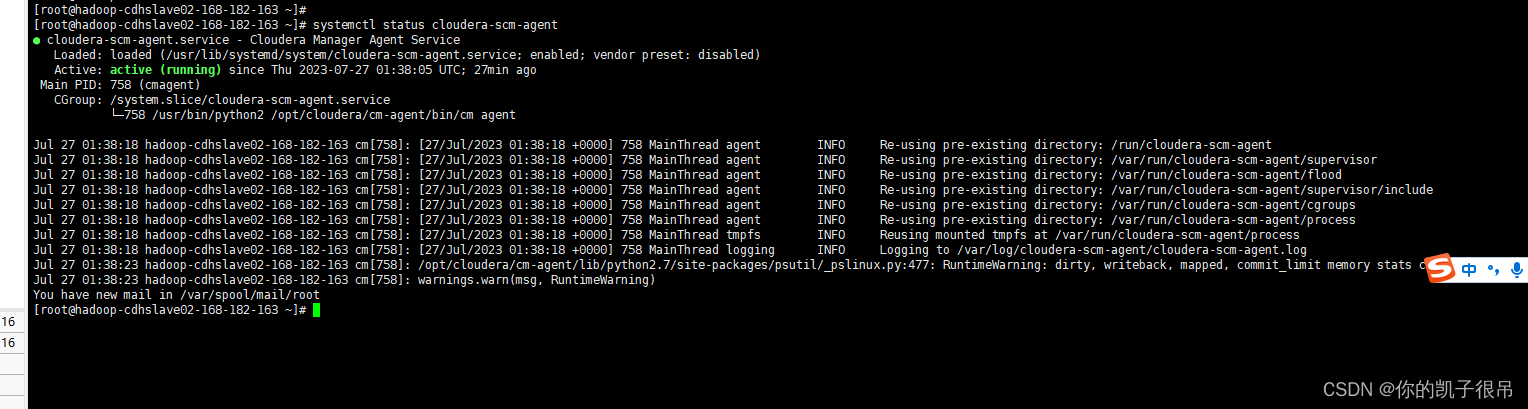

systemctl start cloudera-scm-agent

systemctl restart cloudera-scm-agent

systemctl enable cloudera-scm-agent

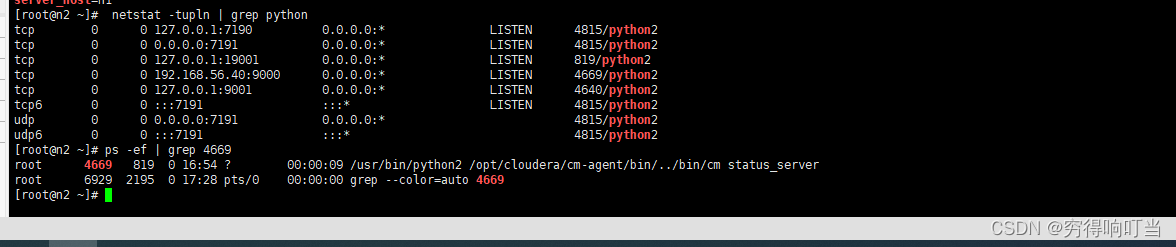

systemctl status cloudera-scm-agent

netstat -tupln | grep python

ps -ef | grep 4669

View the agent log:

tailf /var/log/cloudera-scm-agent/cloudera-scm-agent.log

tail -f /var/log/cloudera-scm-agent/cloudera-scm-agent.log

tail -1000f /var/log/cloudera-scm-agent/cloudera-scm-agent.log

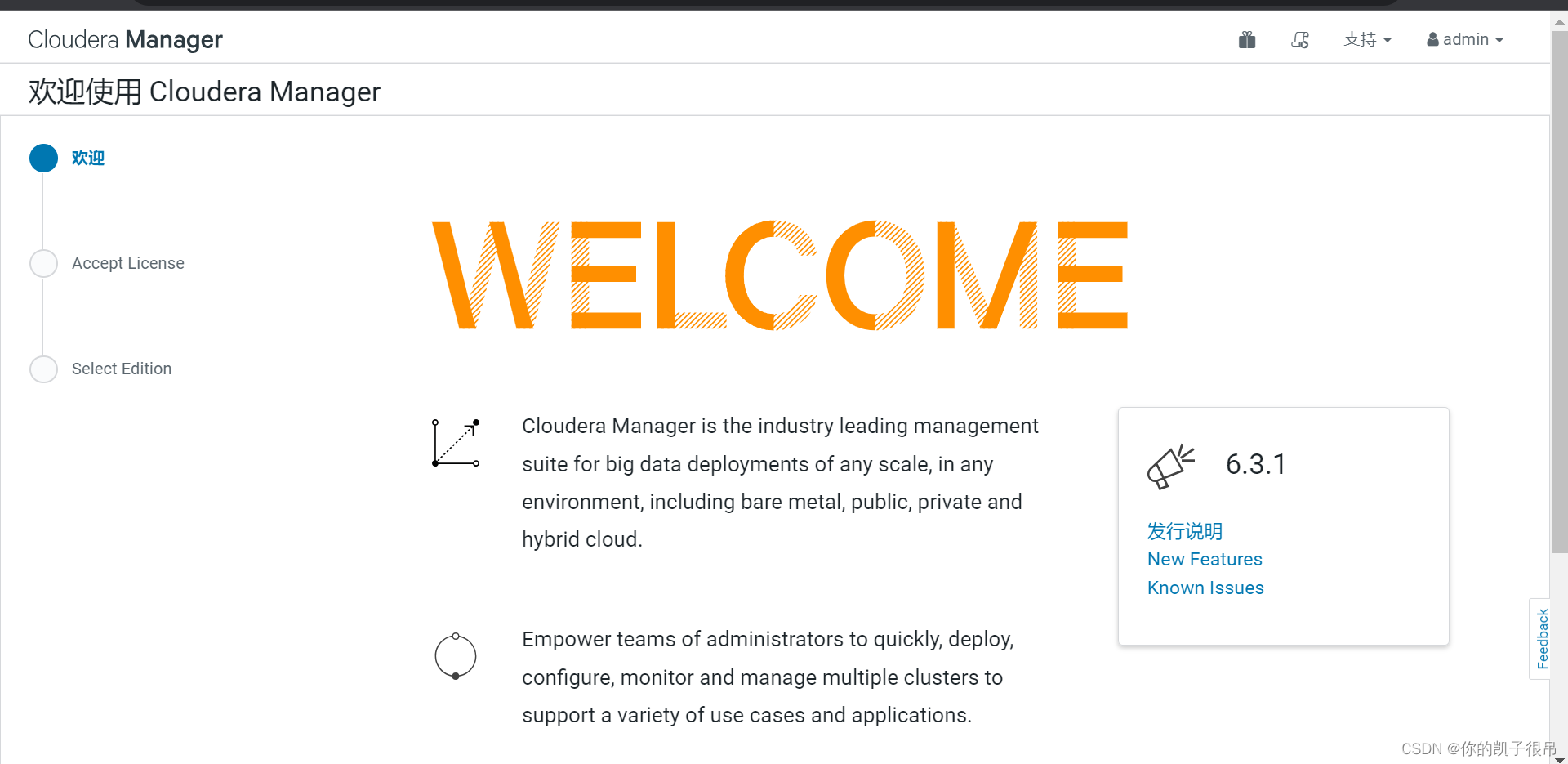

网页登录Cloudera manager

1)通过http://n1:7180访问cloudera manager,默认的用户名和密码均为admin

![]()

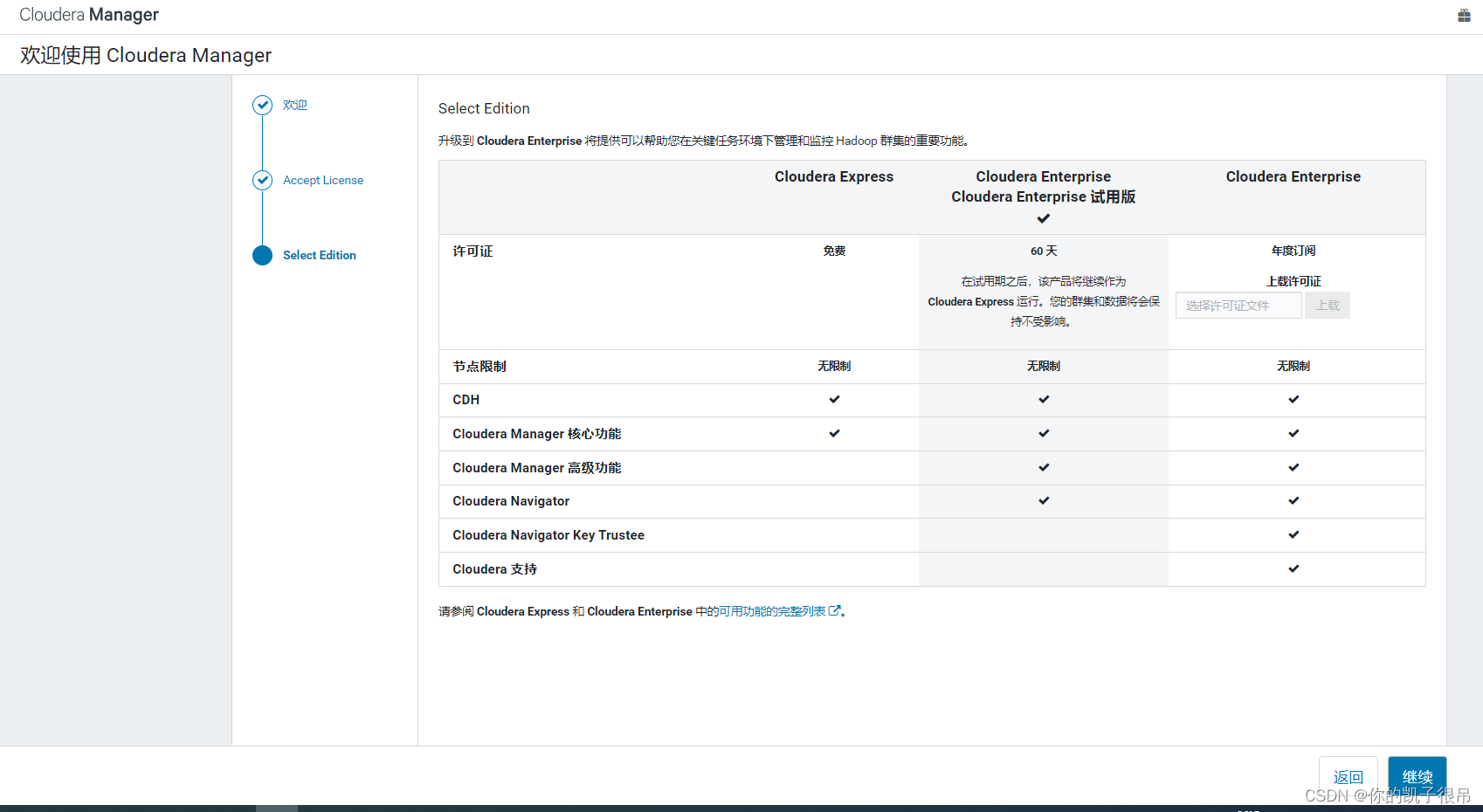

2)Hadoop及其组件安装选择Cloudera版本(如果有license请选择cloudera enterprise,上传许可证)

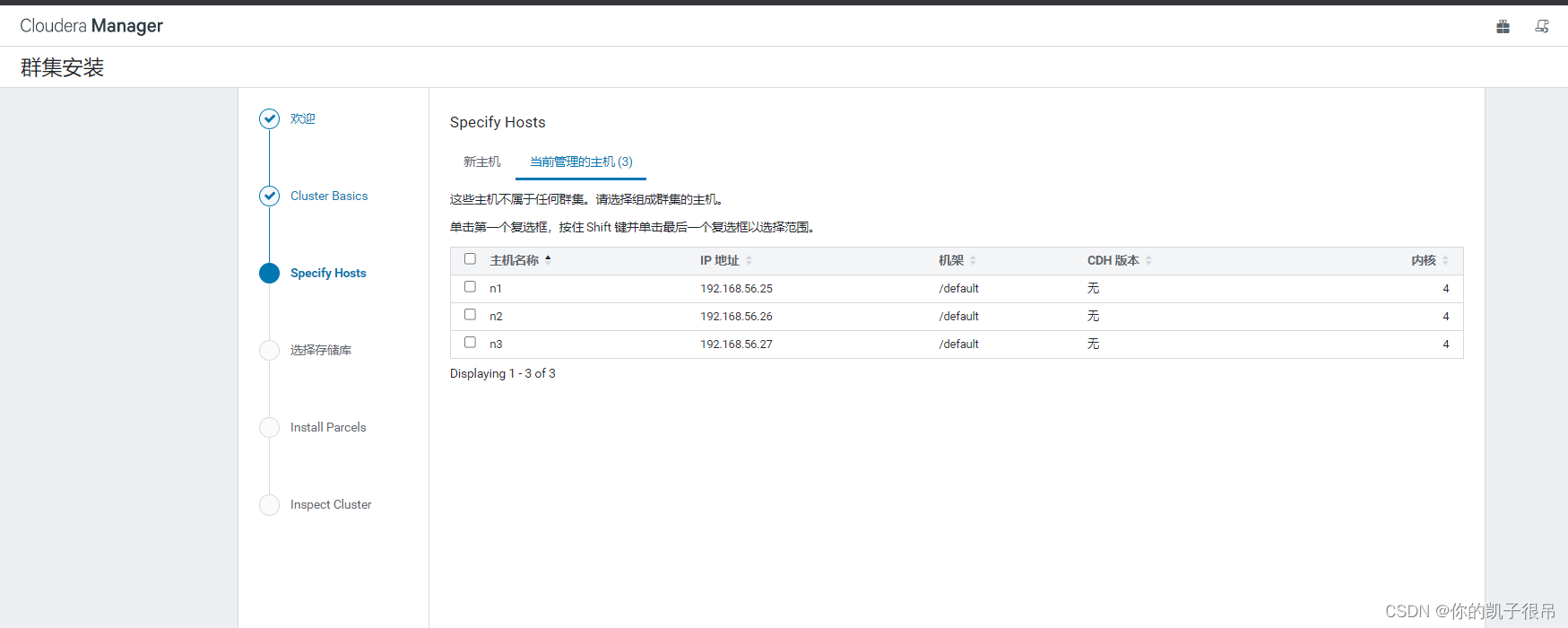

3) Search for and add the hosts (you can follow the "pattern" hint when filling in the field, e.g. 192.168.56.[25-27])

The page has changed somewhat

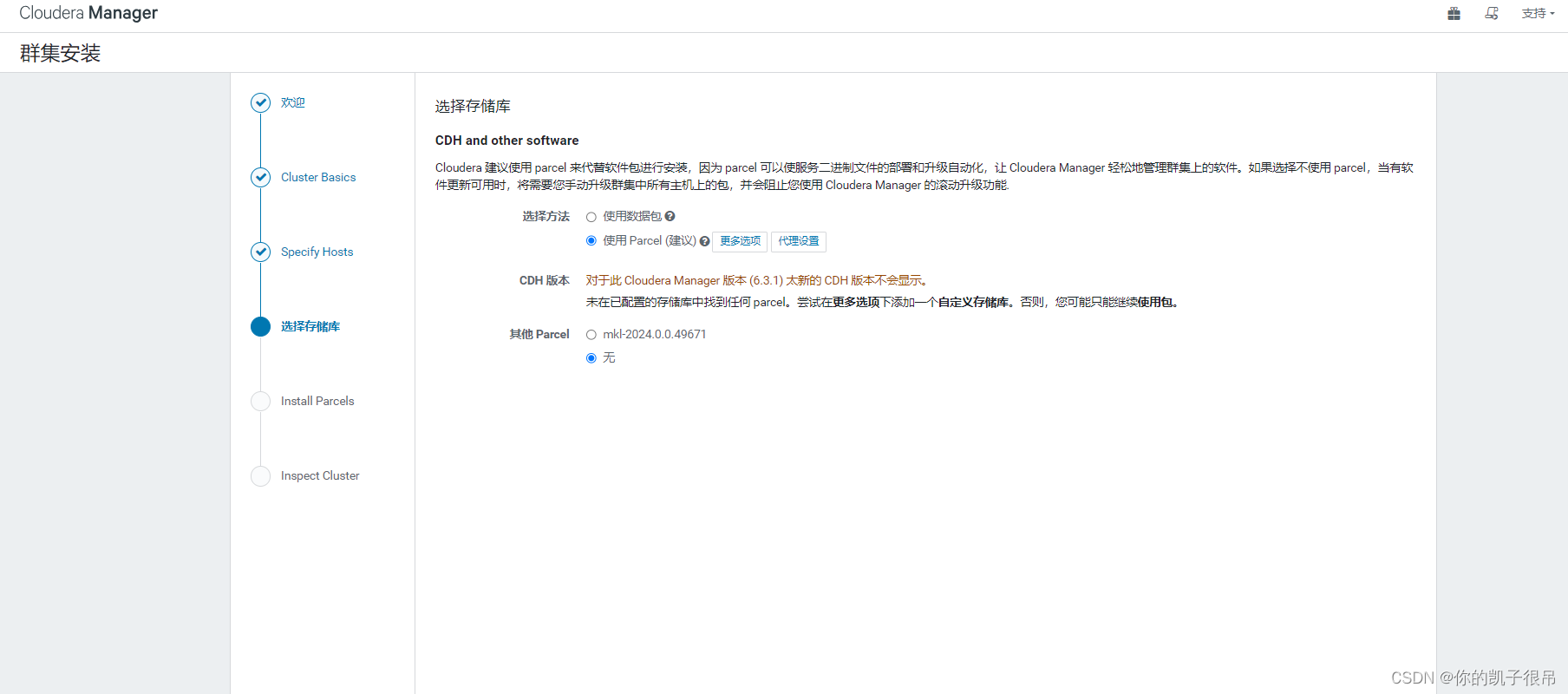

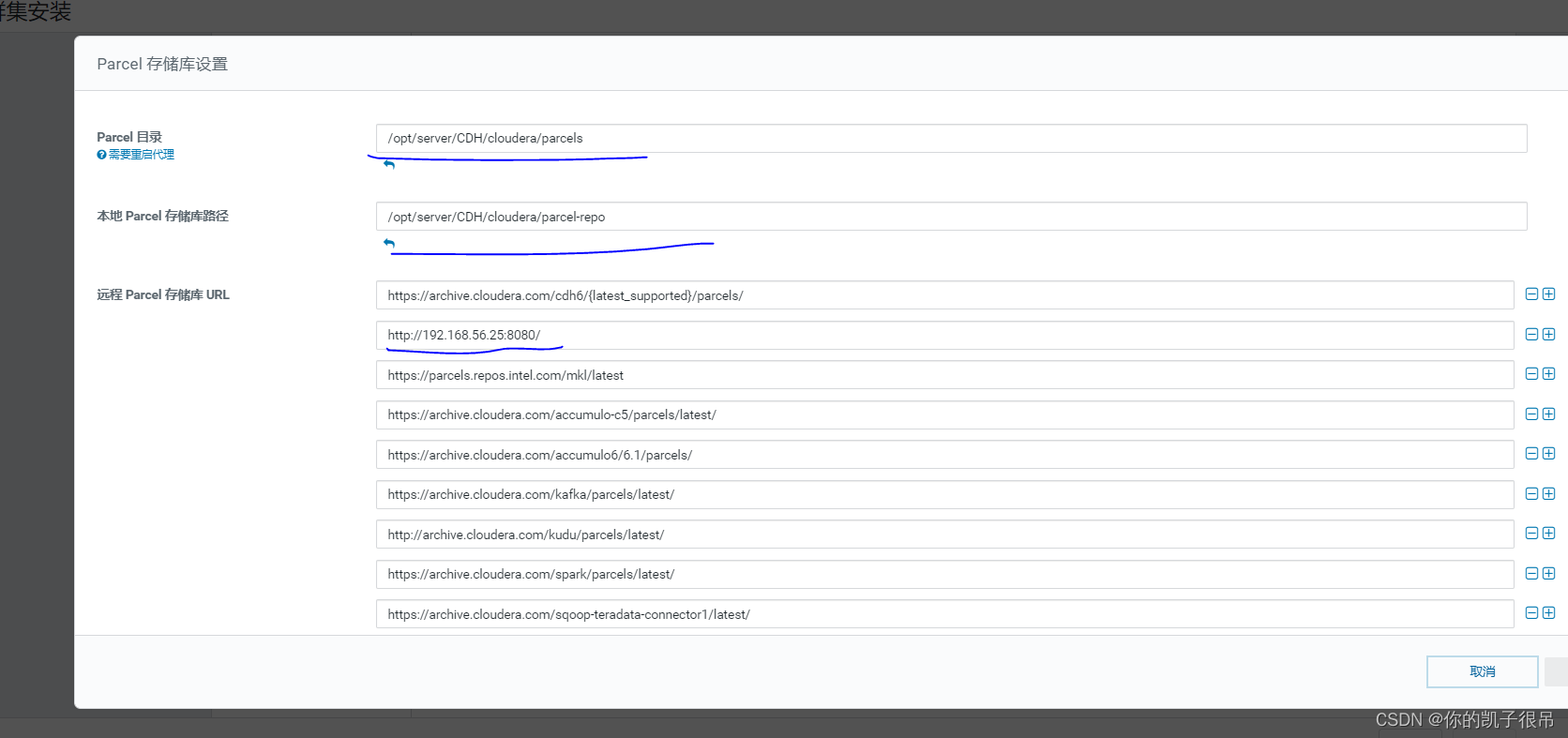

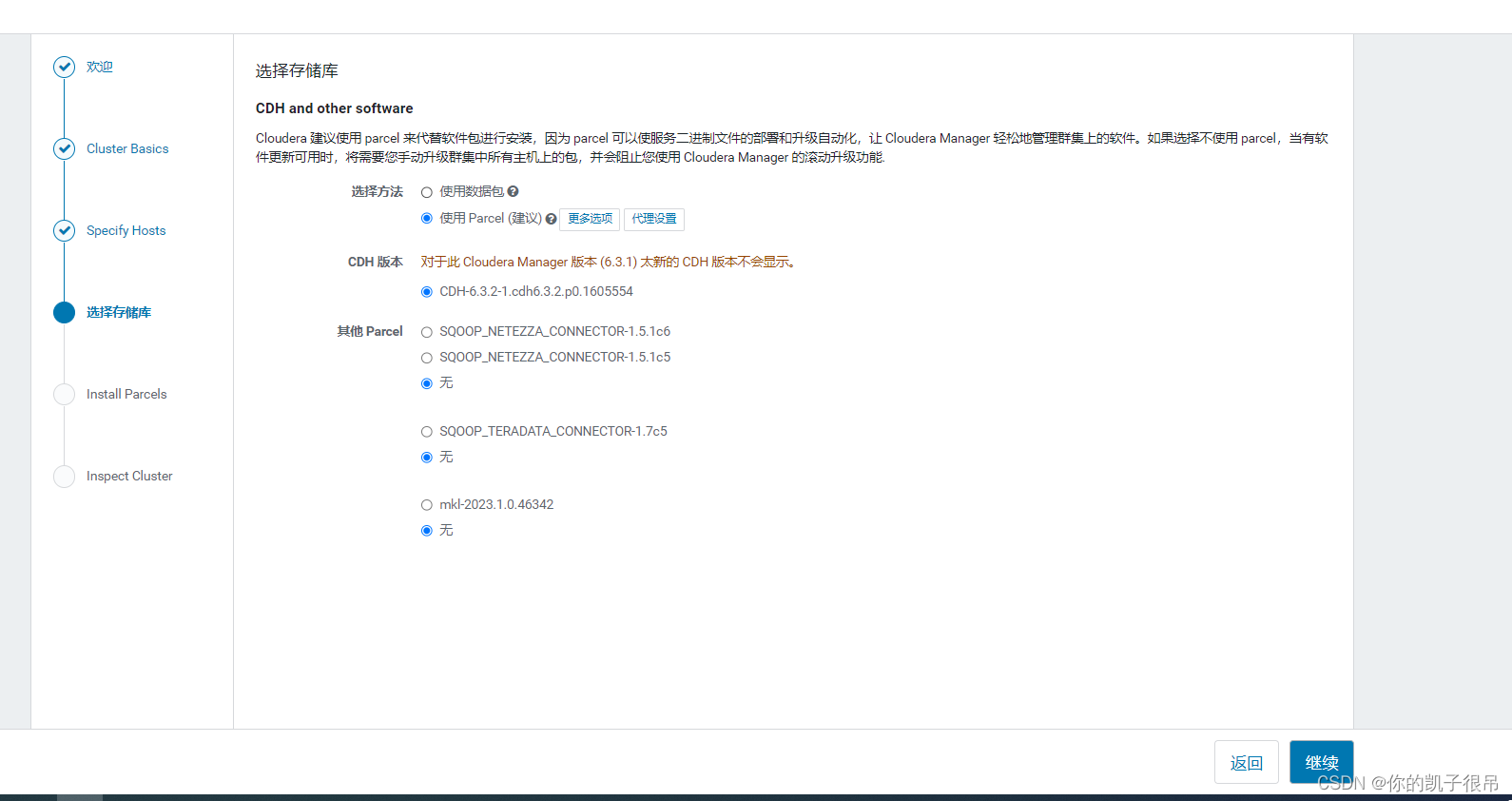

Configure the repository address; choose More Options here

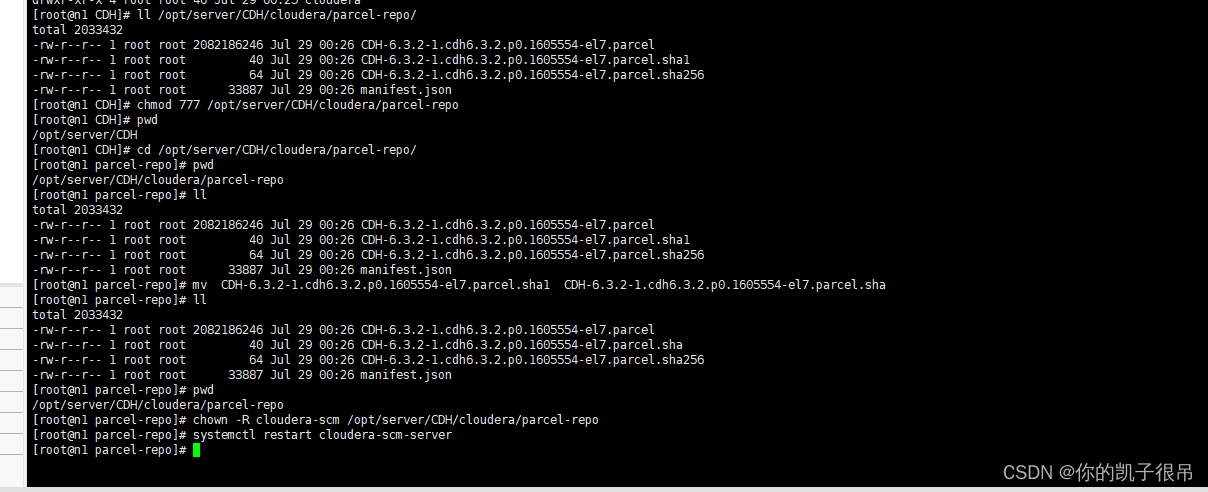

Create the local repository directory and the local installation directory

$ cd /opt/server/CDH

$ mkdir cloudera/parcels -p

$ mkdir cloudera/parcel-repo -p

$ cp /opt/software/CDH/CDH6.3.2/CDH-6.3.2-1.cdh6.3.2.p0.1605554-el7.parcel* /opt/server/CDH/cloudera/parcel-repo/

$ cp /opt/software/CDH/CDH6.3.2/manifest.json /opt/server/CDH/cloudera/parcel-repo/

$ ll /opt/server/CDH/cloudera/parcel-repo/

# The directory needs write permission

$ chmod 777 /opt/server/CDH/cloudera/parcel-repo

Fix for the error "No parcels found in the configured repositories. Try adding a custom repository under More Options." (based on a CSDN blog post by 流萤的花火):

cd /opt/server/CDH/cloudera/parcel-repo/

mv CDH-6.3.2-1.cdh6.3.2.p0.1605554-el7.parcel.sha1 CDH-6.3.2-1.cdh6.3.2.p0.1605554-el7.parcel.sha

chown -R cloudera-scm /opt/server/CDH/cloudera/parcel-repo

systemctl restart cloudera-scm-server
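If the repository holds more than one parcel, the .sha1 to .sha rename can be done in a loop. A minimal sketch, with rename_parcel_sha as a hypothetical helper name:

```shell
# Sketch only: rename every *.parcel.sha1 in a directory to *.parcel.sha.
rename_parcel_sha() {
    # $1 = parcel repository directory
    for f in "$1"/*.parcel.sha1; do
        [ -e "$f" ] || continue   # no match: the glob stays literal, skip it
        mv "$f" "${f%.sha1}.sha"
    done
}

# Usage (as root): rename_parcel_sha /opt/server/CDH/cloudera/parcel-repo
```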

The Continue button now lets you proceed to the next step

cat /etc/os-release

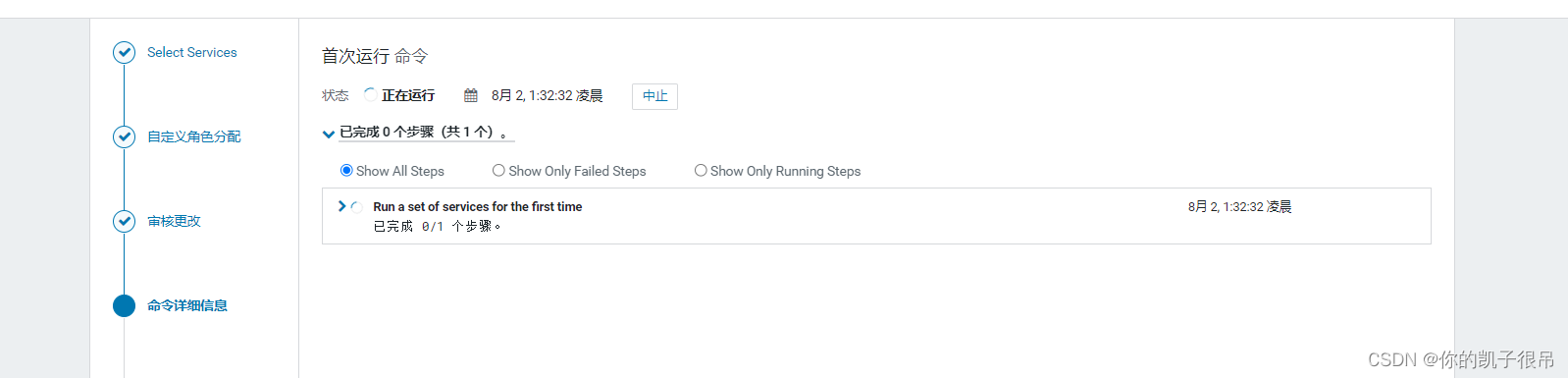

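I selected the big data components. The first time I selected everything and the VMs went straight into a suspended state, so the second time I selected only some of them. Once the connection succeeds, continue to the next step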

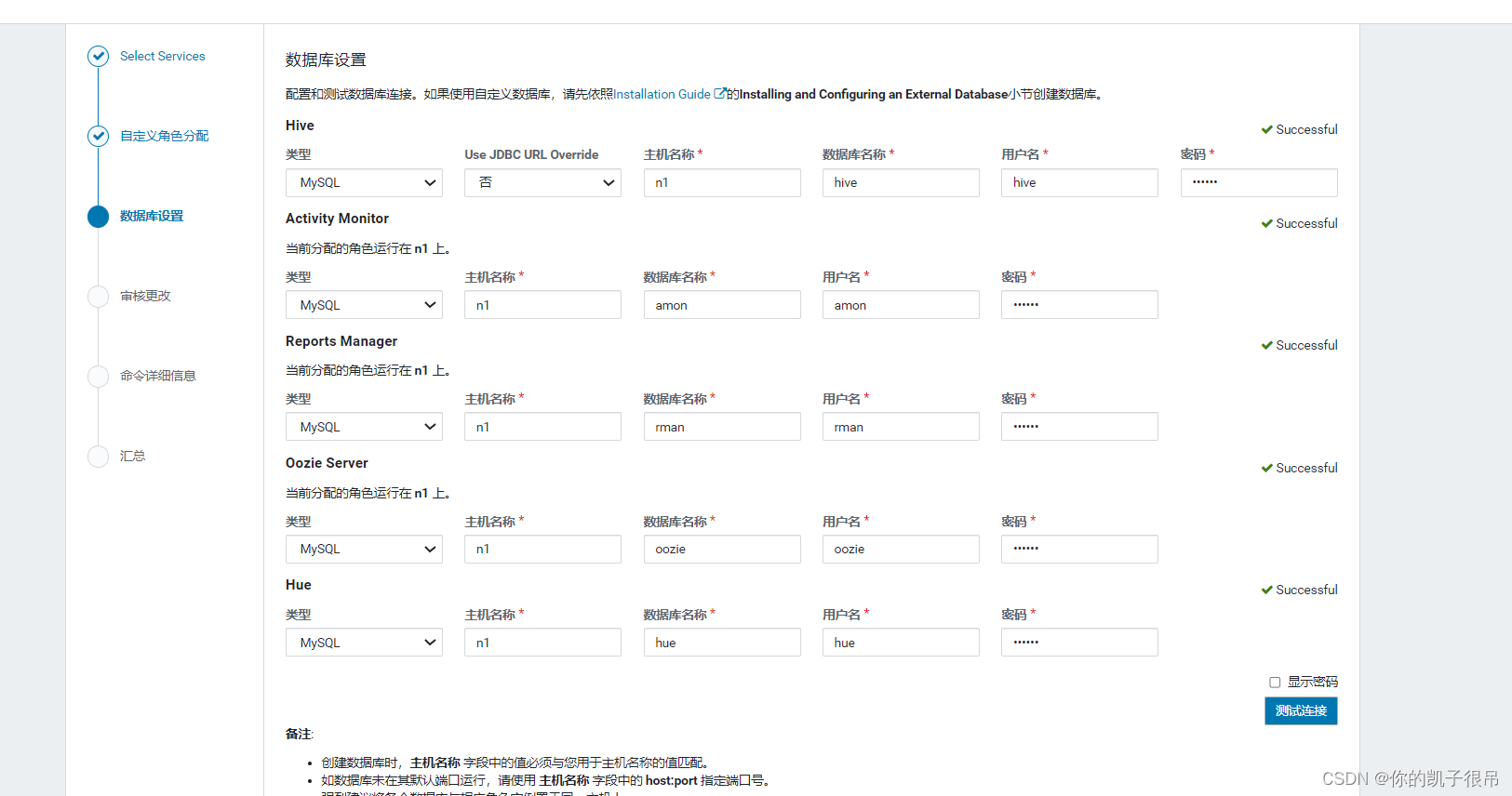

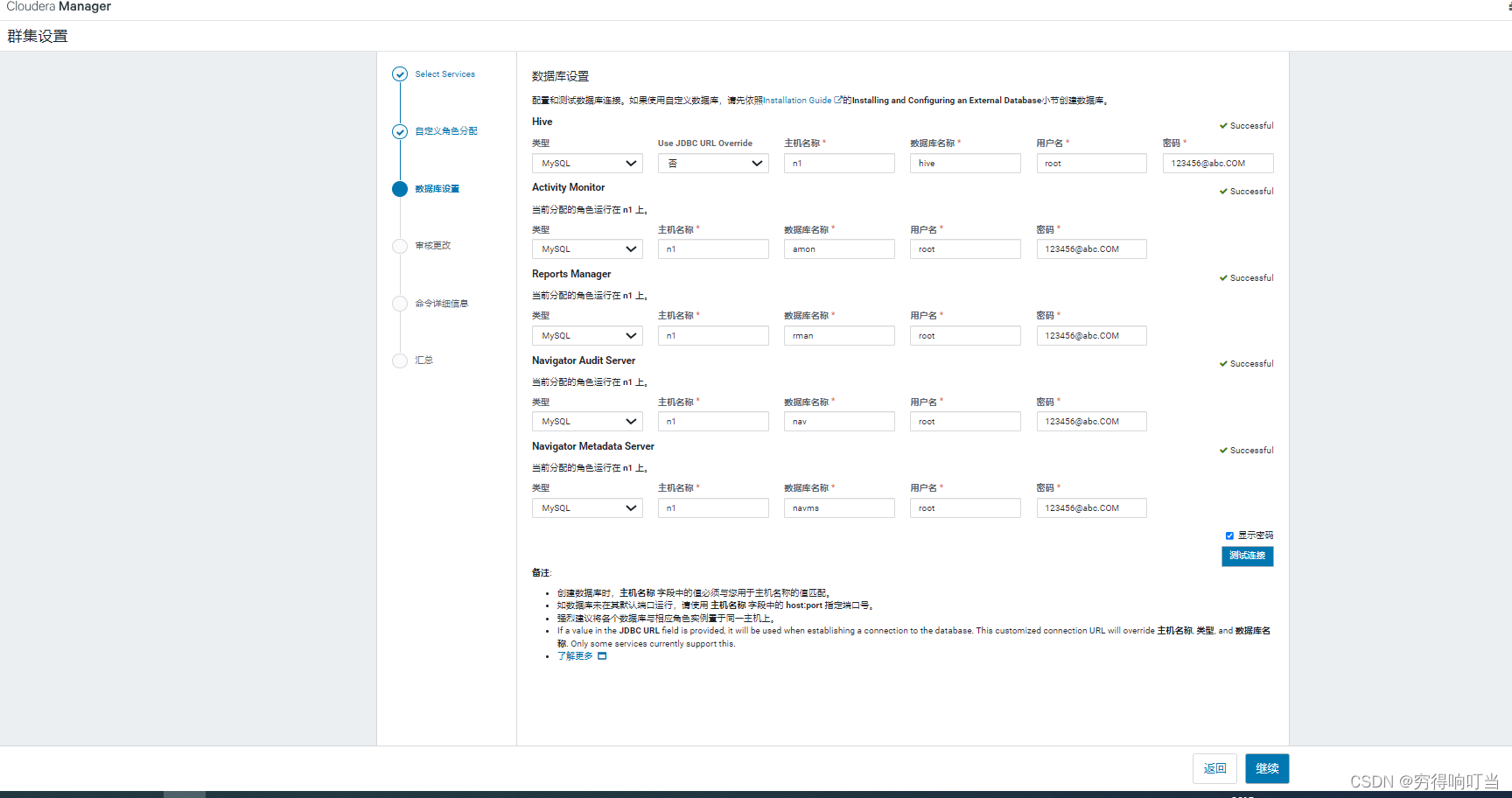

Database settings

Watch out for leading or trailing spaces in the database names

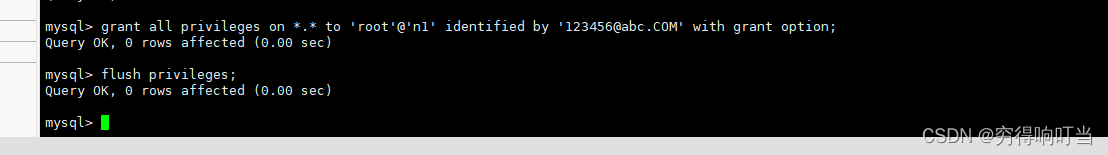

mysql> grant all privileges on *.* to 'root'@'n1' identified by '123456@abc.COM' with grant option;

Query OK, 0 rows affected (0.00 sec)

mysql> flush privileges;

Query OK, 0 rows affected (0.00 sec)

mysql>

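Review changes: the defaults are fine

If something goes wrong during installation, such as a dropped network connection or a crashed machine,

first stop all services, then clear the database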

systemctl stop cloudera-scm-server

systemctl stop cloudera-scm-agent

1> Delete the UUID on the agent nodes

# rm -rf /var/lib/cloudera-scm-agent/*

cd /var/lib/cloudera-scm-agent/

rm -rf uuid

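2> Clear the CM database on the master node

Log in to MySQL on the master node and run drop database cm; (in this guide the CM database is named scm, so drop that one instead)

3> Re-initialize the CM database on the master node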

# /opt/cm-5.7.1/share/cmf/schema/scm_prepare_database.sh mysql cm -hlocalhost -uroot -p123456 --scm-host localhost scm scm scm

Wait a moment, then open master:7180

All nodes are now deployed

https://it.cha138.com/mysql/show-112645.html

https://blog.csdn.net/a921122/article/details/51939692

https://blog.csdn.net/Keyuchen_01/article/details/128770325

3.报错问题

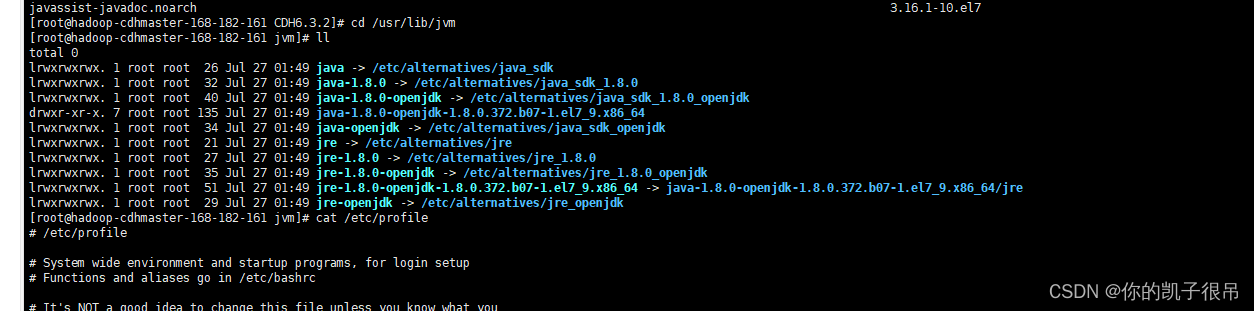

1).安装过程遇到报错没按照要求安装jdk 8

# 查看日志

journalctl -xe

yum install java-1.8.0-openjdk-devel -y

cd /usr/lib/jvm

Configure environment variables

vim /etc/profile

cat /etc/profile

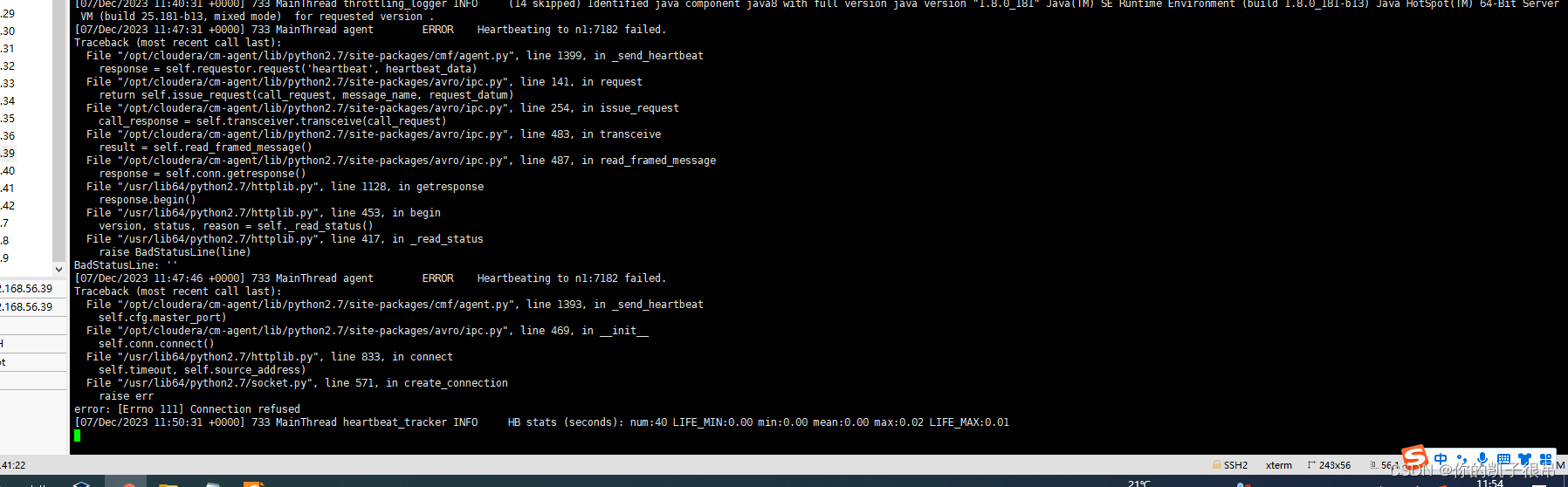

2). cloudera-scm-server on the master node reports an error

systemctl status cloudera-scm-server

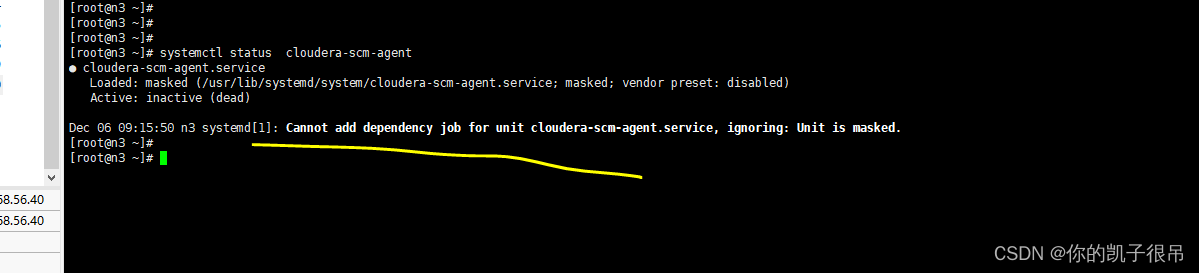

3). A cloudera-scm-agent node reports an error

systemctl status cloudera-scm-agent

4). Master node

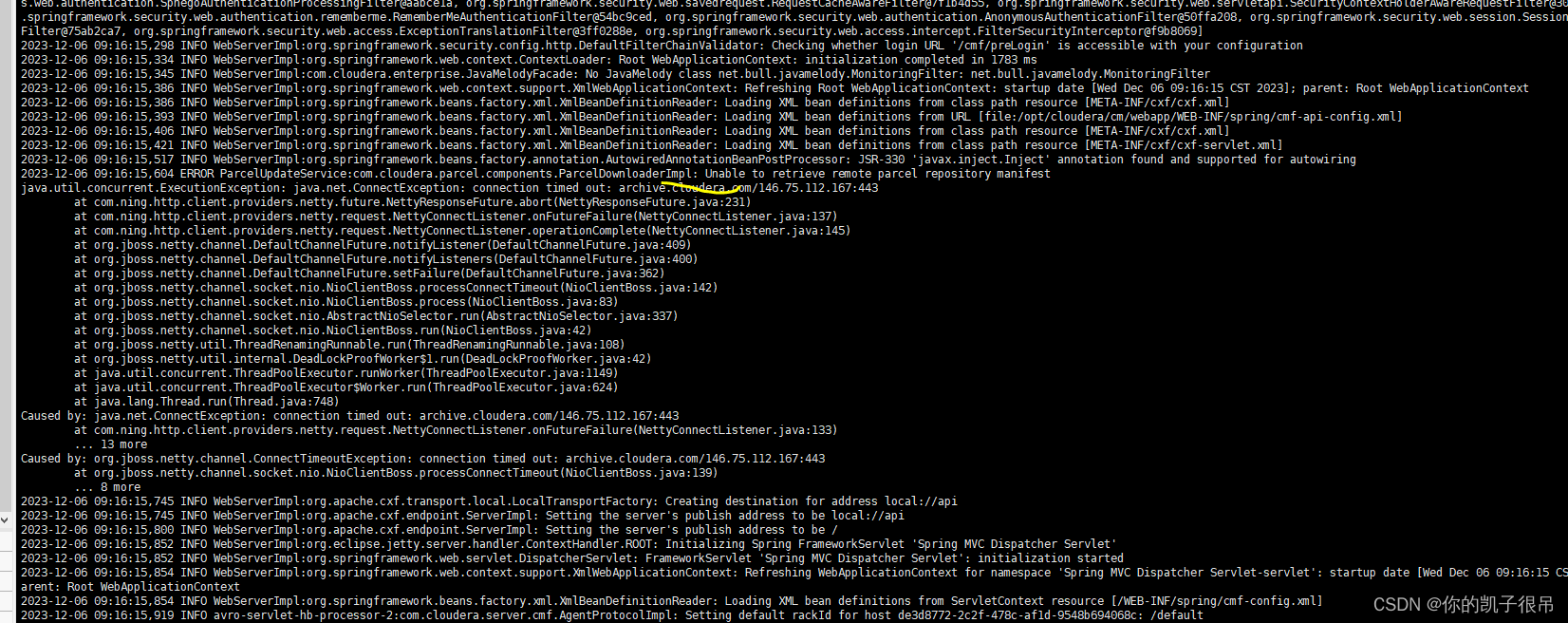

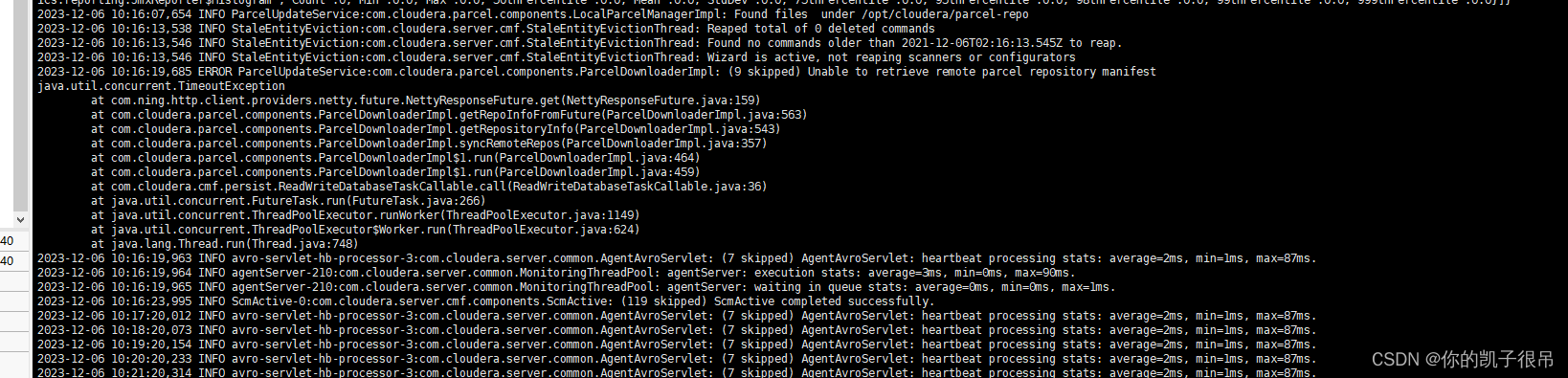

tail -f /var/log/cloudera-scm-server/cloudera-scm-server.log

cat >> /etc/hosts <<EOF

127.0.0.1 archive.cloudera.com

EOF

systemctl restart cloudera-scm-server

tailf /var/log/cloudera-scm-server/cloudera-scm-server.log

Unable to retrieve remote parcel repository manifest

General SSLEngine problem

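This can be ignored

It happens because the remote parcel repository uses HTTPS while Auto-TLS (that is, SSL) is not enabled locally, so the remote repository is unusable. Be sure to pull the remote packages to the local machine and serve them over a local HTTP service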

https://www.cnblogs.com/warren6/p/16775444.html

tailf /var/log/cloudera-scm-server/cloudera-scm-server.log

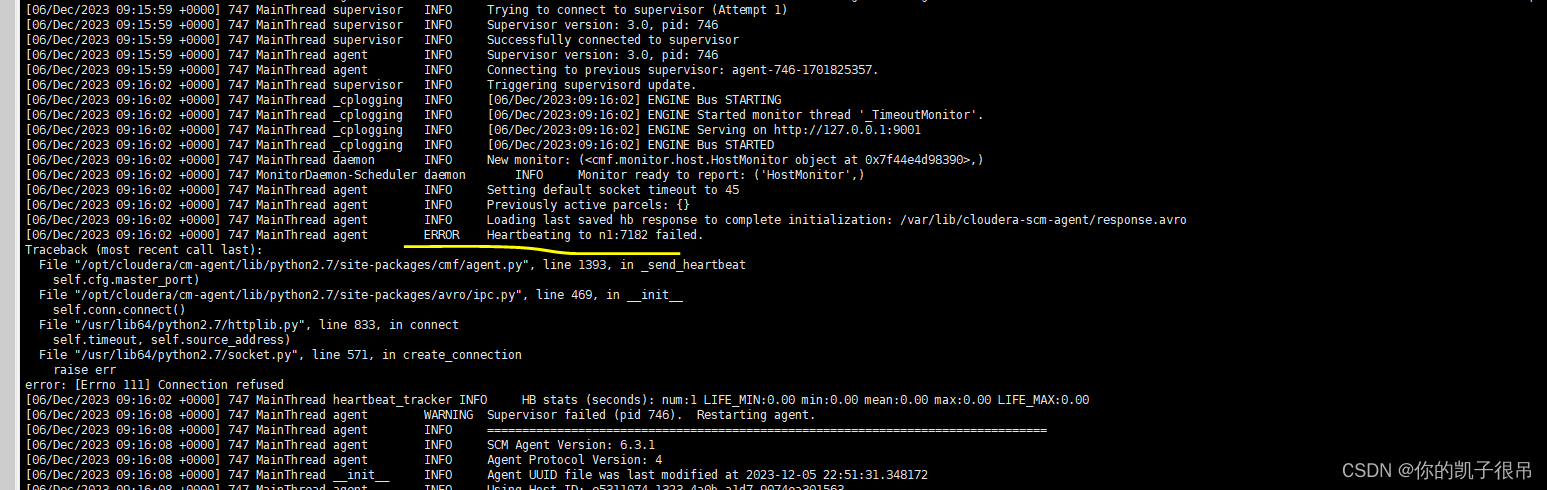

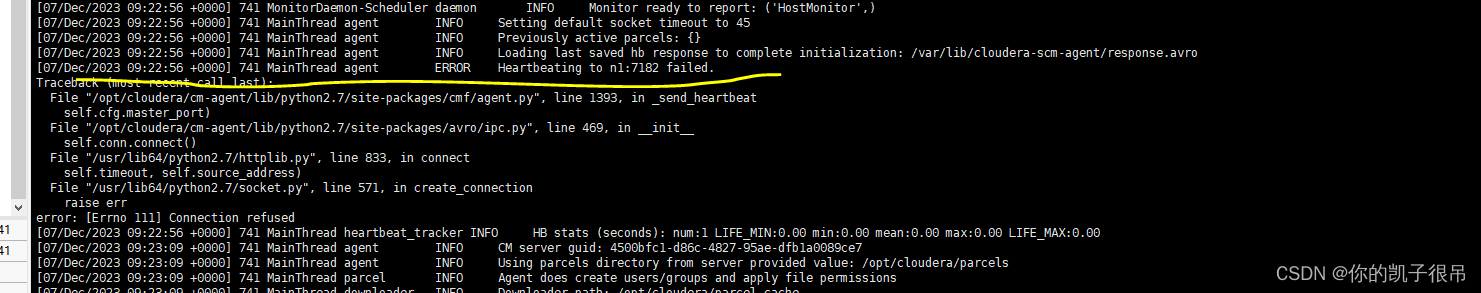

5). A cloudera-scm-agent node reports an error

# Run the following

tail -1000f /var/log/cloudera-scm-agent/cloudera-scm-agent.log

tailf /var/log/cloudera-scm-agent/cloudera-scm-agent.log

The Cloudera Manager agent reports an error and cannot connect to the cluster: Error, CM server guid updated, expected xxx , received xxx

Solution

find / -iname '*cm_guid*'

cat /var/lib/cloudera-scm-agent/cm_guid

rm -rf /var/lib/cloudera-scm-agent/cm_guid

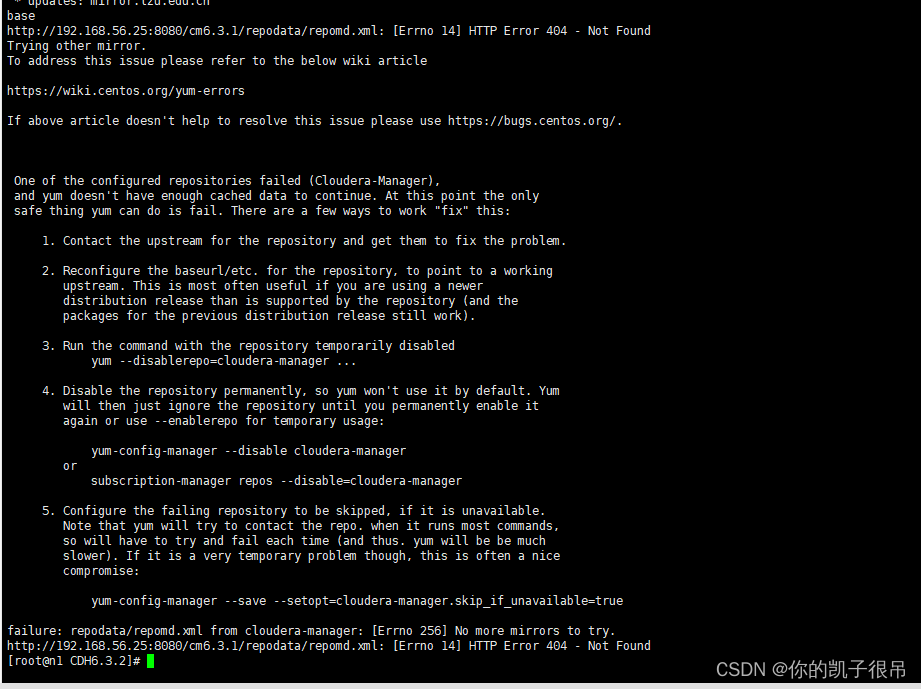

6). A cloudera-scm-agent node reports an error

yum makecache

The error above occurs because this yum repository is not enabled; the two screenshots below show the fix

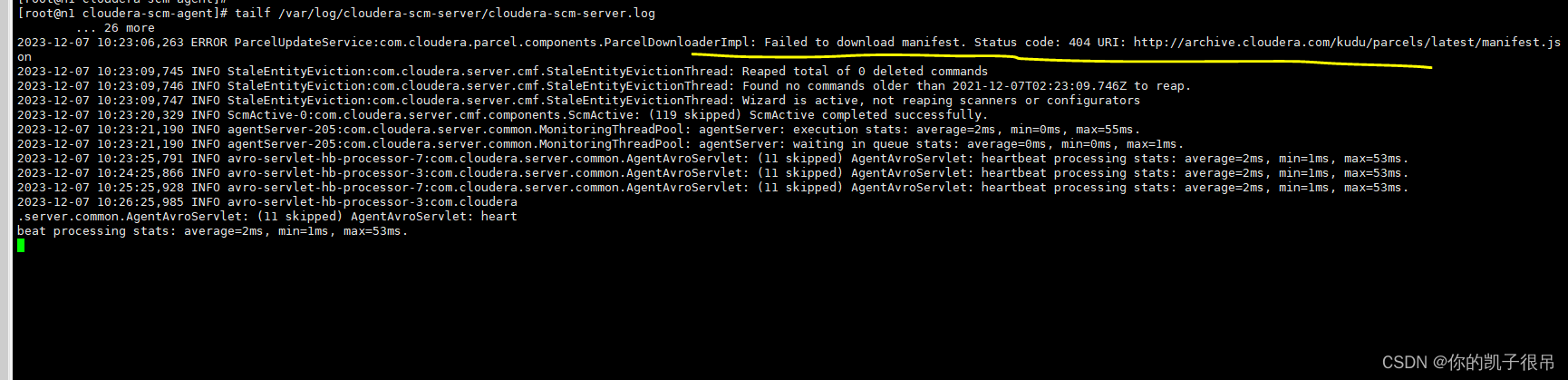

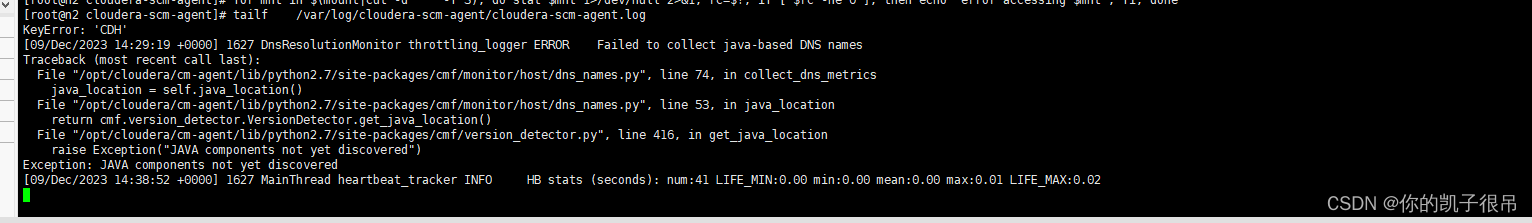

7). cloudera-scm-server节点报error

8). cloudera-scm-agent节点报error

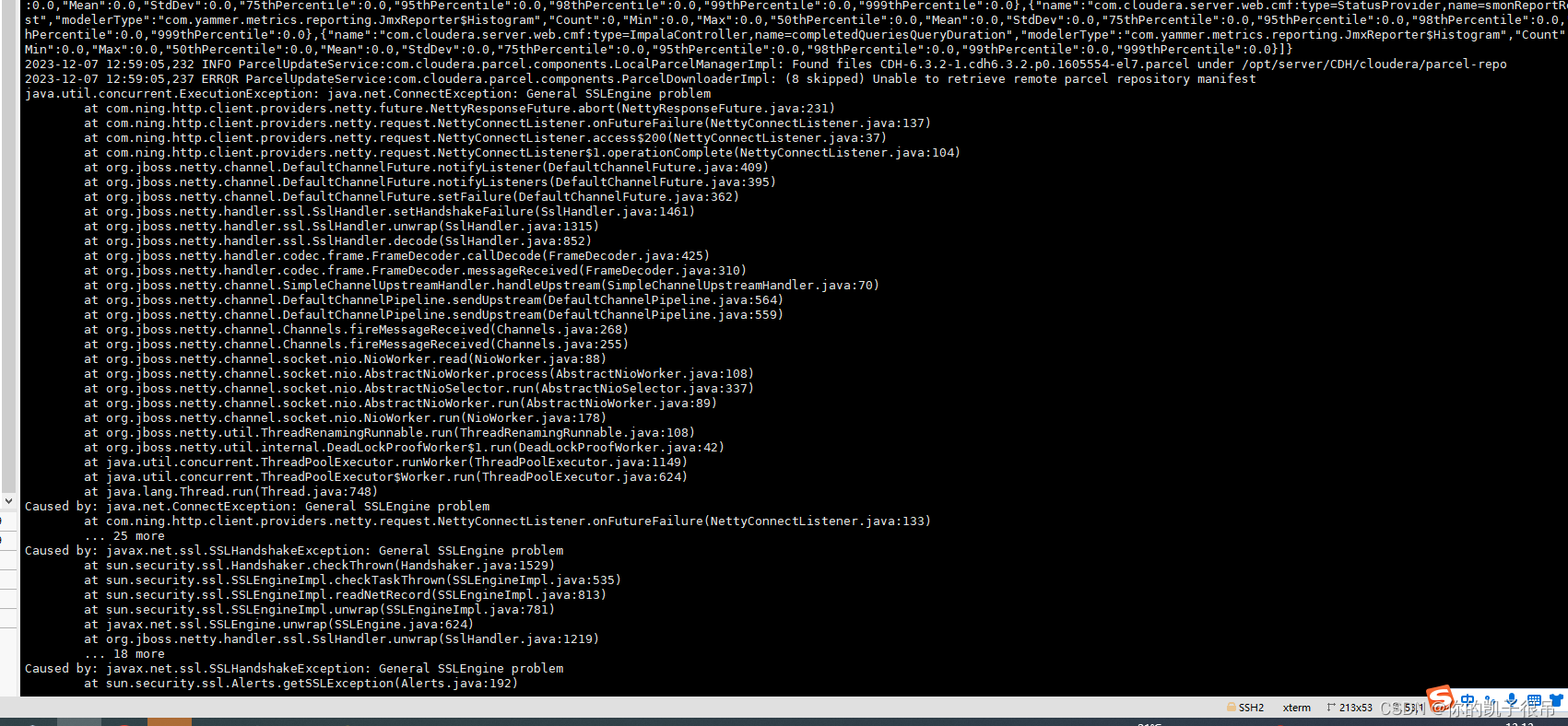

8.2023-12-12 11:07:18,234 ERROR ParcelUpdateService:com.cloudera.parcel.components.ParcelDownloaderImpl: (9 skipped) Unable to retrieve remote parcel repository manifest

解决办法

2023-12-12 14:30:24,147 WARN avro-servlet-hb-processor-4:com.cloudera.server.cmf.AgentProtocolImpl: Received an optimized heartbeat for a host with ID '232543b2-d8b7-48de-9229-cf2d7648374b' that is not recognized

2023-12-12 14:30:24,249 ERROR ParcelUpdateService:com.cloudera.parcel.components.ParcelDownloaderImpl: (6 skipped) Failed to download manifest. Status code: 401 URI: https://archive.cloudera.com/p/cdh6/6.3/parcels/manifest.json

2023-12-12 14:30:24,249 ERROR ParcelUpdateService:com.cloudera.parcel.components.ParcelDownloaderImpl: (3 skipped) Could not retrieve repository info for repo https://archive.cloudera.com/cdh6/6.3/parcels/. Got HTTP response code 401

2023-12-12 14:30:24,581 WARN avro-servlet-hb-processor-5:com.cloudera.server.cmf.AgentProtocolImpl: Received an optimized heartbeat for a host with ID 'a6a2a001-3e55-48f2-8e74-6e65d1b6e03c' that is not recognized

2023-12-12 14:30:26,824 INFO StaleEntityEviction:com.cloudera.server.cmf.StaleEntityEvictionThread: Reaped total of 0 deleted commands

2023-12-12 14:30:26,825 INFO StaleEntityEviction:com.cloudera.server.cmf.StaleEntityEvictionThread: Found no commands older than 2021-12-12T06:30:26.825Z to reap.

2023-12-12 14:30:26,825 INFO StaleEntityEviction:com.cloudera.server.cmf.StaleEntityEvictionThread: Wizard is active, not reaping scanners or configurators

2023-12-12 14:30:29,264 ERROR ParcelUpdateService:com.cloudera.parcel.components.ParcelDownloaderImpl: (9 skipped) Unable to retrieve remote parcel repository manifest

java.util.concurrent.ExecutionException: java.net.ConnectException: connection timed out: archive.cloudera.com/146.75.112.167:443

at com.ning.http.client.providers.netty.future.NettyResponseFuture.abort(NettyResponseFuture.java:231)

at com.ning.http.client.providers.netty.request.NettyConnectListener.onFutureFailure(NettyConnectListener.java:137)

at com.ning.http.client.providers.netty.request.NettyConnectListener.operationComplete(NettyConnectListener.java:145)

at org.jboss.netty.channel.DefaultChannelFuture.notifyListener(DefaultChannelFuture.java:409)

at org.jboss.netty.channel.DefaultChannelFuture.notifyListeners(DefaultChannelFuture.java:400)

at org.jboss.netty.channel.DefaultChannelFuture.setFailure(DefaultChannelFuture.java:362)

at org.jboss.netty.channel.socket.nio.NioClientBoss.processConnectTimeout(NioClientBoss.java:142)

at org.jboss.netty.channel.socket.nio.NioClientBoss.process(NioClientBoss.java:83)

at org.jboss.netty.channel.socket.nio.AbstractNioSelector.run(AbstractNioSelector.java:337)

at org.jboss.netty.channel.socket.nio.NioClientBoss.run(NioClientBoss.java:42)

at org.jboss.netty.util.ThreadRenamingRunnable.run(ThreadRenamingRunnable.java:108)

at org.jboss.netty.util.internal.DeadLockProofWorker$1.run(DeadLockProofWorker.java:42)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

Caused by: java.net.ConnectException: connection timed out: archive.cloudera.com/146.75.112.167:443

at com.ning.http.client.providers.netty.request.NettyConnectListener.onFutureFailure(NettyConnectListener.java:133)

... 13 more

Caused by: org.jboss.netty.channel.ConnectTimeoutException: connection timed out: archive.cloudera.com/146.75.112.167:443

at org.jboss.netty.channel.socket.nio.NioClientBoss.processConnectTimeout(NioClientBoss.java:139)
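With this much output, it helps to see which components are actually logging errors before reading stack traces. A minimal sketch that counts ERROR lines per logger, assuming the line layout shown above (date, time, level, thread:class); summarize_errors is a hypothetical helper name.

```shell
# Sketch only: print "<count> <thread:class>" for ERROR lines,
# most frequent first.
summarize_errors() {
    # $1 = log file, e.g. /var/log/cloudera-scm-server/cloudera-scm-server.log
    grep ' ERROR ' "$1" \
        | awk '{ sub(/:$/, "", $4); print $4 }' \
        | sort | uniq -c | sort -rn \
        | awk '{ print $1, $2 }'
}

# Usage: summarize_errors /var/log/cloudera-scm-server/cloudera-scm-server.log
```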

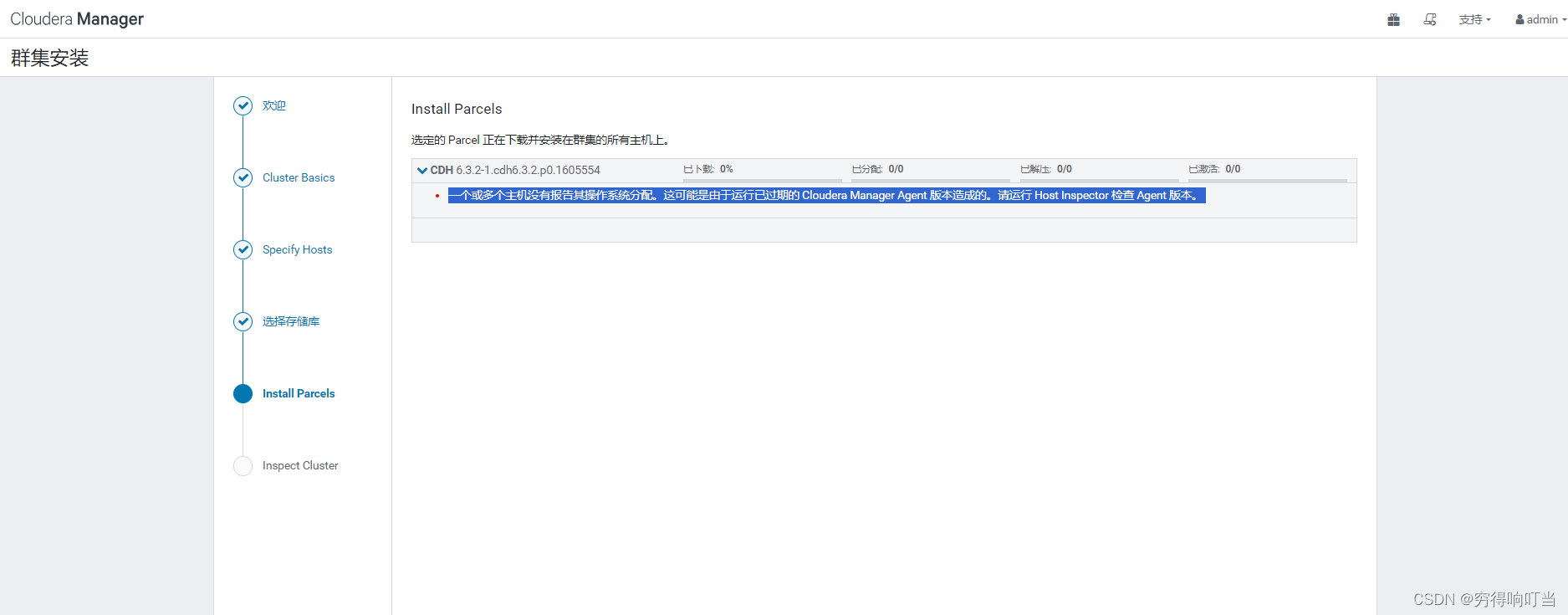

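9) One or more hosts have not reported their operating system distribution. This may be caused by running an outdated version of the Cloudera Manager Agent. Run the Host Inspector to check the agent versions.

Solution

I reinstalled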

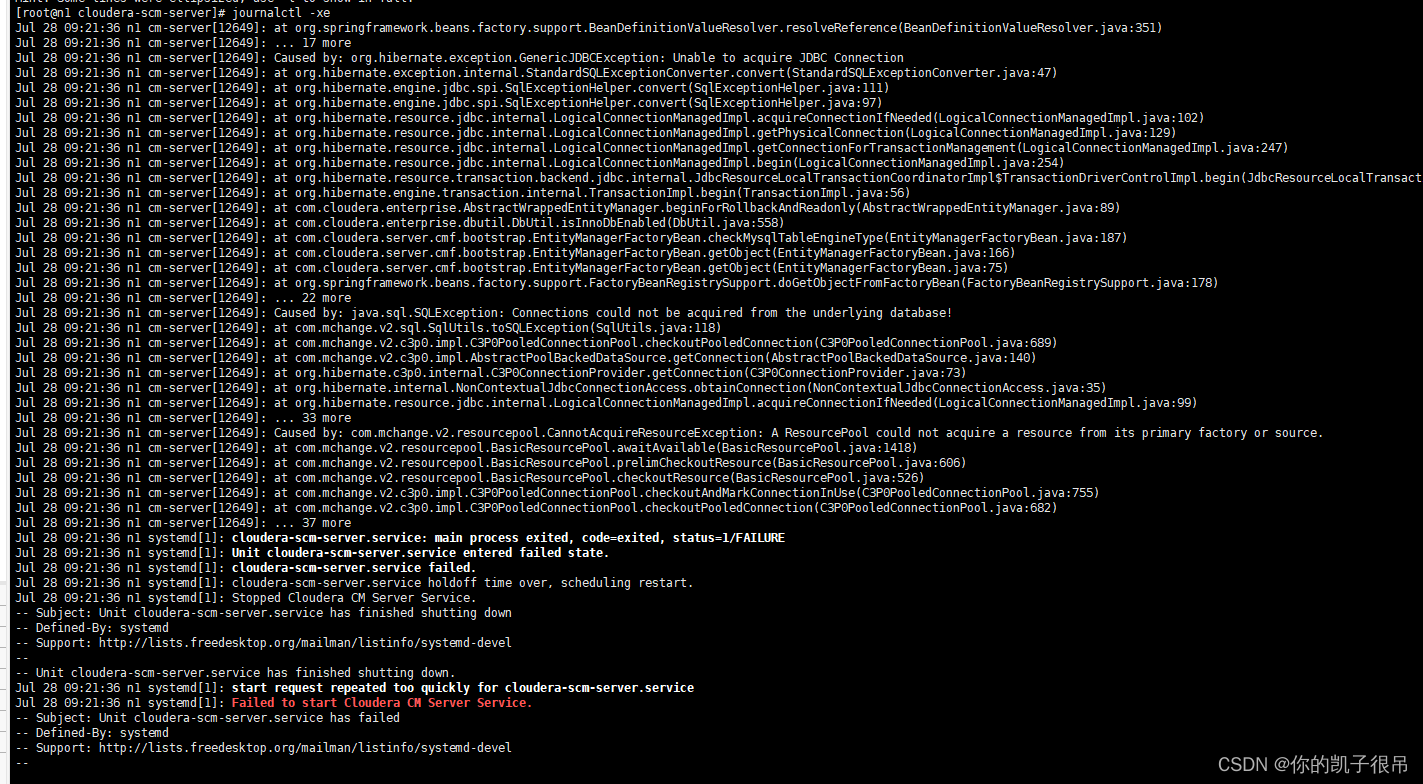

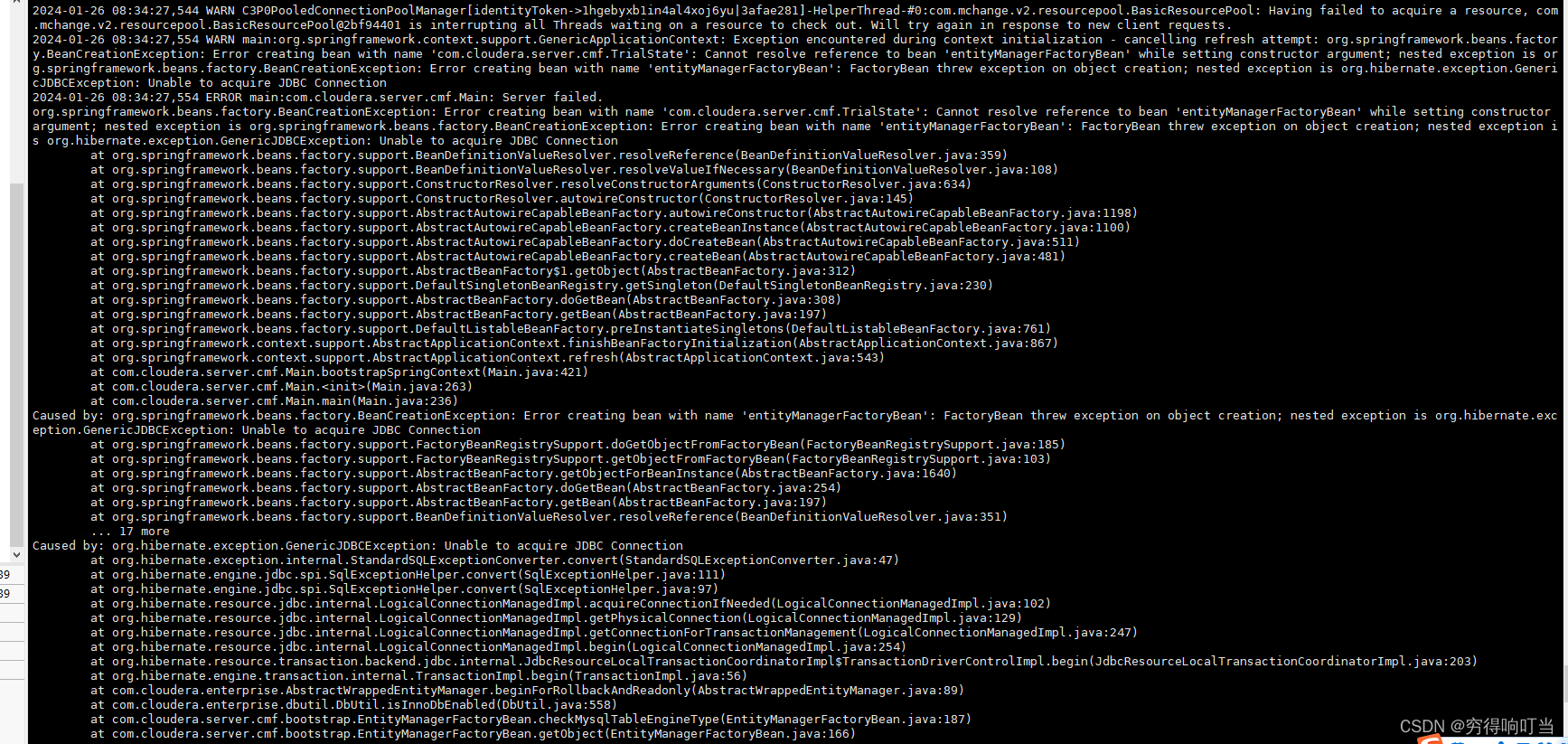

10. org.springframework.beans.factory.BeanCreationException: Error creating bean with name 'com.cloudera.server.cmf.TrialState': Cannot resolve reference to bean 'entityManagerFactoryBean' while setting constructor argument; nested exception is org.springframework.beans.factory.BeanCreationException: Error creating bean with name 'entityManagerFactoryBean': FactoryBean threw exception on object creation; nested exception is org.hibernate.exception.GenericJDBCException: Unable to acquire JDBC Connection

Solution

11. Host in bad health

Solution:

The previous installation on the node did not succeed; the cm_guid file must be deleted before installing again.

[root@cdh-70 ~]# find / -name cm_guid

/var/lib/cloudera-scm-agent/cm_guid

[root@cdh-70 ~]# rm -rf /var/lib/cloudera-scm-agent/cm_guid   # delete the file

[root@cdh-70 ~]# /etc/init.d/cloudera-scm-agent restart   # restart the service
