准备工作

执行引擎

| 引擎 | 说明 |

|---|

| mr | 默认引擎,hadoop自带的框架,在2.x版本中已不推荐使用 |

| tez | 相比于mr,减少了磁盘io,速度比mr有明显提升 |

| spark | 内存计算框架,速度最快 |

运行模式

| 模式 | 说明 | 特点 |

|---|

| 内嵌模式 | 数据保存在内嵌的 derby 数据库中 | 不支持多用户登录 |

| 本地模式 | 数据保存在本地的数据库,如mysql | 支持多用户登录 |

| 远程模式 | 数据保存在远程的数据库中,如mysql | 多个hive客户端使用同一个套元数据,节省资源 |

开始使用

解压hive,进入conf目录

- hive-env.sh.template 名称更改为 hive-env.sh

HADOOP_HOME=/opt/module/hadoop-2.10.0/

export HIVE_CONF_DIR=/opt/module/apache-hive-2.3.7-bin/conf/

export HIVE_AUX_JARS_PATH=/opt/module/apache-hive-2.3.7-bin/lib/

sbin/start-dfs.sh

sbin/start-yarn.sh

hadoop fs -mkdir /tmp

hadoop fs -mkdir -p /user/hive/warehouse

hadoop fs -chmod g+w /tmp

hadoop fs -chmod g+w /user/hive/warehouse

bin/schematool -dbType derby -initSchema

bin/hive

配置本地登录模式(远程登录模式也适用)

- hive-default.xml.template 重命名 hive-site.xml

<configuration>

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://192.168.75.136:3306/mydb?createDatabaseIfNotExist=true</value>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.cj.jdbc.Driver</value>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>mine</value>

</property>

<property>

<name>hive.exec.local.scratchdir</name>

<value>/opt/module/apache-hive-2.3.7-bin/iotmp/atguigu</value>

</property>

<property>

<name>hive.downloaded.resources.dir</name>

<value>/opt/module/apache-hive-2.3.7-bin/iotmp/${hive.session.id}_resources</value>

</property>

</configuration>

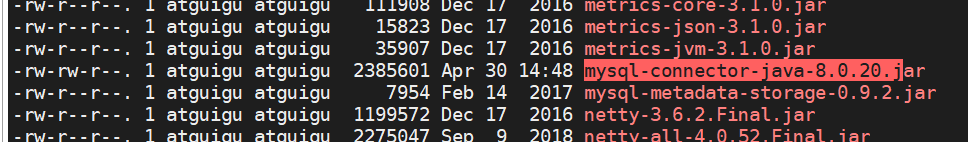

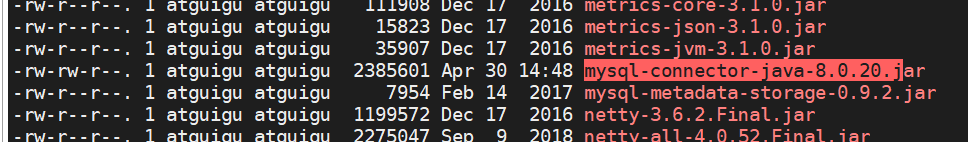

- 复制mysql驱动jar包到hive安装目录下的 lib 目录下

- 执行初始化命令

bin/schematool -dbType mysql -initSchema

- 执行成功后,mysql中成功生成表数据

复杂数据类型

| 名称 | 示例 |

|---|

| array | array<string> |

| map | map<key, value> |

| struct | struct <name:string, age:int> |

建表语句

create table student(id int,name string);

create table student1(name string,

friends array<string>,

children map<string,int>,

address struct<street:string, city:string>

)

row format delimited fields terminated by ','

collection items terminated by '_'

map keys terminated by ':'

lines terminated by '\n';

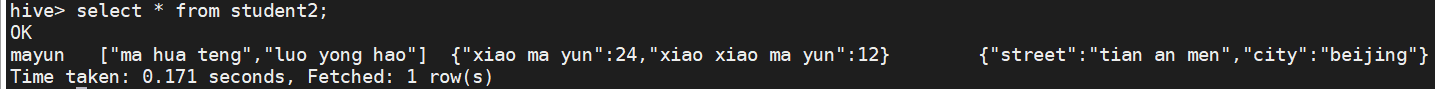

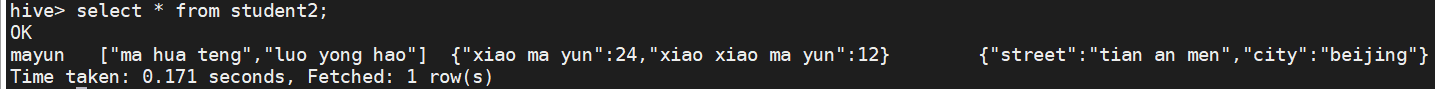

测试

mayun,ma hua teng_luo yong hao,xiao ma yun:24_xiao xiao ma yun:12,tian an men_beijing

hdfs dfs -put test.txt /user/hive/warehouse/student1

select * from student1;

- 结果

- 复杂结构的取值

select friends[1],children['xiao ma yun'],address.city from student;

常用的sql语句

< > 必须 [ ] 可选

desc database <database_name>

desc [extended] <table_name>

desc [formatted] <table_name>

create database [if not exists] <database_name> [location <'path'>];

create [external] table [if not exists] <table_name>

(<col_name> <data_type> [comment col_name_comment] [, ...])

[comment table_comment]

[partitioned by (<col_name> <data_type> [comment col_name_comment]) [, ...]]

[location <path>]

create table <table_name> as <sql>;

create table <table_name> like <table_name>;

insert <into|overwrite> <table_name> [partition (col_name="value")] values (<value> [, ...]);

insert <into|overwrite> <table_name> [partition (col_name="value")] <sql>

load data [local] inpath <'path'> [overwrite] into table <table_name> [partition (col_name="value")];

import table <table_name> from <'path'>;

insert overwrite [local] directory <'path'> [row format delimited fields terminated by <'symbol'>] <sql>;

export table <table_name> to <'path'>;

alter table <table_name> rename to <new_table_name>;

alter table <table_name> change [column] olb_col_name new_col_name data_type [comment col_name_comment];

alter table <table_name> add columns (<col_name> <data_type> [comment col_name_comment]);

alter table <table_name> replace columns (<col_name> <data_type> [comment col_name_comment]);

alter table <table_name> set tblproperties('EXTERNAL'='FALSE');

alter table <table_name> set tblproperties('EXTERNAL'='TRUE');

alter table <table_name> add partition(name="value") [partition(name="value") ...];

alter table <table_name> drop partition(name="value") [,partition(name="value")

truncate table <table_name>;

常用hive指令

hive -e <sql> [> <result_file>]

hive -f <file_name> [> <result_file>]

dfs -ls /

! ls /opt

cat /home/{user}/.hivehistory

msck repair table test990;

alter table <table_name> add partition(name="value");

load data [local] inpath <'path'> [overwrite] into table <table_name> [partition (col_name="value")];

其他实用配置

<configuration>

<property>

<name>hive.cli.print.current.db</name>

<value>true</value>

</property>

<property>

<name>hive.cli.print.header</name>

<value>true</value>

</property>

<property>

<name>hive.metastore.warehouse.dir</name>

<value>/user/hive/warehouse</value>

</property>

</configuration>

配置日志文件

property.hive.log.dir = /opt/module/apache-hive-2.3.7-bin/logs

参数动态配置的方式

bin/hive --hiveconf <property=value>

set <property=value>;

782

782

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?