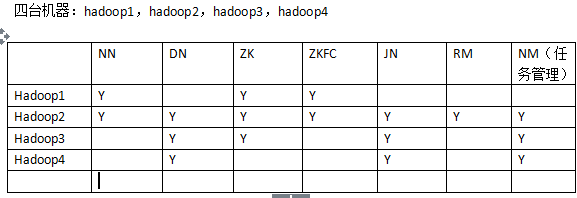

Deployment layout

1. core-site.xml

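<configuration>
  <!-- Clients address the HA pair through the logical nameservice, not a single host -->
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://bjsxt</value>
  </property>
  <!-- ZooKeeper ensemble used for automatic failover -->
  <property>
    <name>ha.zookeeper.quorum</name>
    <value>hadoop1:2181,hadoop2:2181,hadoop3:2181</value>
  </property>
  <!-- Base directory for Hadoop's local data -->
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/opt/hadoop</value>
  </property>
</configuration>

2. hdfs-site.xml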

<configuration>
  <!-- Logical nameservice name; matches the authority in fs.defaultFS -->
  <property>
    <name>dfs.nameservices</name>
    <value>bjsxt</value>
  </property>
  <!-- IDs of the two NameNodes in this nameservice -->
  <property>
    <name>dfs.ha.namenodes.bjsxt</name>
    <value>nn1,nn2</value>
  </property>
  <!-- RPC address of each NameNode -->
  <property>
    <name>dfs.namenode.rpc-address.bjsxt.nn1</name>
    <value>hadoop1:8020</value>
  </property>
  <property>
    <name>dfs.namenode.rpc-address.bjsxt.nn2</name>
    <value>hadoop2:8020</value>
  </property>
  <!-- Web UI address of each NameNode -->
  <property>
    <name>dfs.namenode.http-address.bjsxt.nn1</name>
    <value>hadoop1:50070</value>
  </property>
  <property>
    <name>dfs.namenode.http-address.bjsxt.nn2</name>
    <value>hadoop2:50070</value>
  </property>
  <!-- JournalNode quorum through which the NameNodes share the edit log -->
  <property>
    <name>dfs.namenode.shared.edits.dir</name>
    <value>qjournal://hadoop2:8485;hadoop3:8485;hadoop4:8485/bjsxt</value>
  </property>
  <!-- Class that HDFS clients use to locate the active NameNode -->
  <property>
    <name>dfs.client.failover.proxy.provider.bjsxt</name>
    <value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>
  </property>
  <!-- Fence the previously active NameNode over SSH during failover -->
  <property>
    <name>dfs.ha.fencing.methods</name>
    <value>sshfence</value>
  </property>
  <property>
    <name>dfs.ha.fencing.ssh.private-key-files</name>
    <value>/root/.ssh/id_dsa</value>
  </property>
  <!-- Local directory where each JournalNode stores edits -->
  <property>
    <name>dfs.journalnode.edits.dir</name>
    <value>/opt/hadoop/data</value>
  </property>
  <!-- Let the ZKFC fail over automatically -->
  <property>
    <name>dfs.ha.automatic-failover.enabled</name>
    <value>true</value>
  </property>
</configuration>

3. Prepare ZooKeeper

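a) Three ZooKeeper nodes: hadoop1, hadoop2, hadoop3

b) Edit the zoo.cfg configuration file

i. Set dataDir=/opt/zookeeper

ii. Add the server entries:

server.1=hadoop1:2888:3888

server.2=hadoop2:2888:3888

server.3=hadoop3:2888:3888

c) In the dataDir directory on each node, create a file named myid whose content is that node's server number: 1 on hadoop1, 2 on hadoop2, 3 on hadoop3

4. Configure the slaves file in Hadoop (one DataNode hostname per line)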
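Going back to the myid file in step 3(c): the value on each node has to equal the N in that node's own server.N line of zoo.cfg. Here is a minimal sketch of that mapping, using the three server entries shown above. The helper name `myid_for` and the temporary copy of zoo.cfg are illustrative assumptions, not part of the original setup.

```shell
#!/bin/sh
# Sketch: derive a node's myid from the server.N lines of zoo.cfg.
set -eu

# myid_for HOST CFG: print N from the "server.N=HOST:..." line in CFG
# (prints nothing if HOST is not listed).
myid_for() {
  sed -n "s/^server\.\([0-9][0-9]*\)=$1:.*/\1/p" "$2"
}

# Temporary stand-in for the real zoo.cfg, with the entries from step 3(b).
cfg=$(mktemp)
cat > "$cfg" <<'EOF'
dataDir=/opt/zookeeper
server.1=hadoop1:2888:3888
server.2=hadoop2:2888:3888
server.3=hadoop3:2888:3888
EOF

for h in hadoop1 hadoop2 hadoop3; do
  echo "$h -> myid $(myid_for "$h" "$cfg")"
done
rm -f "$cfg"
```

On a real node you would point the helper at your actual zoo.cfg and redirect the result into /opt/zookeeper/myid, for example `myid_for "$(hostname)" zoo.cfg > /opt/zookeeper/myid`, assuming `hostname` returns the same name used in zoo.cfg.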

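5. Start all three ZooKeeper servers: ./zkServer.sh start

6. Start the three JournalNodes (hadoop2, hadoop3, hadoop4 according to the qjournal URI above): ./hadoop-daemon.sh start journalnode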

7. Format HDFS on one of the NameNodes: hdfs namenode -format

8. Copy the freshly formatted metadata to the other NameNode

a) Start the NameNode that was just formatted

b) On the NameNode that was not formatted, run: hdfs namenode -bootstrapStandby

c) Start the second NameNode

9. On one of the NameNodes, initialize the ZKFC state in ZooKeeper: hdfs zkfc -formatZK

10. Stop the nodes started above: stop-dfs.sh

11. Start everything: start-dfs.sh
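The startup order in the steps above can be sketched as a dry-run script. Host roles are read off the configuration: ZooKeeper on hadoop1 to hadoop3 (ha.zookeeper.quorum), JournalNodes on hadoop2 to hadoop4 (dfs.namenode.shared.edits.dir), nn1 on hadoop1 and nn2 on hadoop2. The `run_on` and `bring_up_ha` helpers, the `RUN` switch, passwordless ssh, and having the ZooKeeper and Hadoop scripts on each host's PATH are all assumptions of this sketch. Where a step only says to start a NameNode, the sketch assumes `hadoop-daemon.sh start namenode`.

```shell
#!/bin/sh
# Dry-run sketch of the first-time HA bring-up order.
# Prints each command by default; set RUN=1 to execute over ssh.
set -eu

ZK_HOSTS="hadoop1 hadoop2 hadoop3"   # from ha.zookeeper.quorum
JN_HOSTS="hadoop2 hadoop3 hadoop4"   # from dfs.namenode.shared.edits.dir
NN1="hadoop1"                        # nn1: formatted first
NN2="hadoop2"                        # nn2: bootstrapped as standby

# run_on HOST COMMAND...: print the command, or run it over ssh when RUN=1.
run_on() {
  host=$1
  shift
  if [ "${RUN:-0}" = "1" ]; then
    ssh "$host" "$*"
  else
    echo "[$host] $*"
  fi
}

bring_up_ha() {
  # ZooKeeper and the JournalNodes must be up before any NameNode work.
  for h in $ZK_HOSTS; do run_on "$h" zkServer.sh start; done
  for h in $JN_HOSTS; do run_on "$h" hadoop-daemon.sh start journalnode; done

  # Format one NameNode, start it, then copy its metadata to the other.
  run_on "$NN1" hdfs namenode -format
  run_on "$NN1" hadoop-daemon.sh start namenode
  run_on "$NN2" hdfs namenode -bootstrapStandby
  run_on "$NN2" hadoop-daemon.sh start namenode

  # Create the failover znode in ZooKeeper, then restart HDFS as a whole.
  run_on "$NN1" hdfs zkfc -formatZK
  run_on "$NN1" stop-dfs.sh
  run_on "$NN1" start-dfs.sh
}

bring_up_ha
```

By default the script only prints one `[host] command` line per action, which makes it easy to check the order before running anything. Keep in mind that `hdfs namenode -format` wipes existing NameNode metadata, so this sequence is for first-time setup only. Once everything is up, `hdfs haadmin -getServiceState nn1` and the same for nn2 should report one active and one standby NameNode.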
