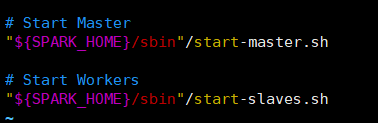

Note: Spark version 2.1.1, deploy mode: Standalone, which requires the Master and Worker daemons to be running.

I. Script analysis

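start-all.sh starts the Master with start-master.sh, then calls start-slaves.sh directly to start the Workers.

start-slaves.sh runs start-slave.sh on each worker host, which launches org.apache.spark.deploy.worker.Worker.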

II. Source code analysis

org.apache.spark.deploy.worker.Worker

1. Execution starts in the Worker class's main method, whose main job is to create the RPC environment

```scala
def main(argStrings: Array[String]) {
  Thread.setDefaultUncaughtExceptionHandler(new SparkUncaughtExceptionHandler(
    exitOnUncaughtException = false))
  Utils.initDaemon(log)
  val conf = new SparkConf
  val args = new WorkerArguments(argStrings, conf)
  val rpcEnv = startRpcEnvAndEndpoint(args.host, args.port, args.webUiPort, args.cores,
    ......
  val externalShuffleServiceEnabled = conf.getBoolean("spark.shuffle.service.enabled", false)
  val sparkWorkerInstances = scala.sys.env.getOrElse("SPARK_WORKER_INSTANCES", "1").toInt
  // At most one external shuffle service can run per host, so refuse to start
  // several workers on one host while the service is enabled
  require(externalShuffleServiceEnabled == false || sparkWorkerInstances <= 1,
    "Starting multiple workers on one host is failed because we may launch no more than one " +
      "external shuffle service on each host, please set spark.shuffle.service.enabled to " +
      "false or set SPARK_WORKER_INSTANCES to 1 to resolve the conflict.")
  rpcEnv.awaitTermination()
}
```

As with the Master, the Worker first initializes an RpcEnv. The RpcEnv initialization flow is covered in the previous post on the Master and can be compared there, so it is not repeated here. The endpoint initialization flow is also not repeated.
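In Spark's RPC layer, an endpoint's onStart() is invoked after the endpoint is registered with the RpcEnv and before it processes any other message. That is why the next step begins in Worker.onStart(). The following is a minimal single-threaded sketch of that ordering only. SketchRpcEnv, SketchEndpoint, SketchWorker and LifecycleDemo are hypothetical names, not Spark classes, and there is no networking, threading or inbox here.

```scala
import scala.collection.mutable

// Hypothetical, single-threaded stand-ins for Spark's RpcEnv / RpcEndpoint.
// They only model the ordering: onStart() runs on registration, before any message.
trait SketchEndpoint {
  def onStart(): Unit = {}
  def receive: PartialFunction[Any, Unit]
}

final class SketchRpcEnv {
  private val endpoints = mutable.LinkedHashMap.empty[String, SketchEndpoint]

  // Registering an endpoint immediately runs its onStart() hook.
  def setupEndpoint(name: String, endpoint: SketchEndpoint): Unit = {
    endpoints(name) = endpoint
    endpoint.onStart()
  }

  // Deliver a message to a named endpoint; messages it does not match are ignored.
  def send(name: String, msg: Any): Unit =
    endpoints.get(name).foreach { ep =>
      val handler = ep.receive
      if (handler.isDefinedAt(msg)) handler(msg)
    }
}

// Hypothetical Worker-like endpoint that records what happened, in order.
final class SketchWorker extends SketchEndpoint {
  val events = mutable.ArrayBuffer.empty[String]

  // The real Worker creates its work dir, binds the web UI and registers here.
  override def onStart(): Unit = events += "onStart"

  override def receive: PartialFunction[Any, Unit] = {
    case msg: String => events += s"receive:$msg"
  }
}

object LifecycleDemo {
  def run(): List[String] = {
    val env = new SketchRpcEnv
    val worker = new SketchWorker
    env.setupEndpoint("Worker", worker)
    env.send("Worker", "RegisteredWorker")
    worker.events.toList
  }
}
```

LifecycleDemo.run() returns List("onStart", "receive:RegisteredWorker"), so the start hook always precedes the first message.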

2. After the Worker is instantiated, its onStart() method runs

```scala
override def onStart() {
  assert(!registered)
  logInfo("Starting Spark worker %s:%d with %d cores, %s RAM".format(
    host, port, cores, Utils.megabytesToString(memory)))
  logInfo(s"Running Spark version ${org.apache.spark.SPARK_VERSION}")
  logInfo("Spark home: " + sparkHome)
  // Create the worker's working directory
  createWorkDir()
  // Start the external shuffle service (only if spark.shuffle.service.enabled is true)
  shuffleService.startIfEnabled()
  // Create the WorkerWebUI and bind it
  webUi = new WorkerWebUI(this, workDir, webUiPort)
  webUi.bind()
  workerWebUiUrl = s"http://$publicAddress:${webUi.boundPort}"
  // Key step: register with the master
  registerWithMaster()
  // Register the worker's metrics source with the metrics system
  metricsSystem.registerSource(workerSource)
  // Start the metrics system
  metricsSystem.start()
  // Attach the worker metrics servlet handler to the web ui after the metrics system is started.
  metricsSystem.getServletHandlers.foreach(webUi.attachHandler)
}
```

III. Summary

This summary covers only what the Worker does once it has been instantiated, after the RpcEnv has been initialized:

1. Create the worker's working directory
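The Worker's createWorkDir uses the configured work directory if one was given, and falls back to `work` under the Spark home otherwise. The sketch below models that step with plain java.io.File. WorkDirSketch and its signature are hypothetical. Where the real Worker logs an error and exits the process on failure, the sketch throws an exception instead.

```scala
import java.io.File

object WorkDirSketch {
  // Hypothetical helper modeled on the Worker's createWorkDir step: use the
  // configured directory if one is given, otherwise <sparkHome>/work.
  def createWorkDir(workDirPath: Option[String], sparkHome: File): File = {
    val dir = workDirPath.map(p => new File(p)).getOrElse(new File(sparkHome, "work"))
    // mkdirs() returns false when the directory already exists, so its result is
    // not a reliable success signal; check the directory itself afterwards.
    dir.mkdirs()
    if (!dir.isDirectory) {
      // The real Worker exits the process here; this sketch throws instead.
      throw new IllegalStateException(s"Failed to create work directory $dir")
    }
    dir
  }
}
```

Calling it twice with the same arguments is harmless, because an existing directory passes the isDirectory check.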

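2. Start the external shuffle service (only when spark.shuffle.service.enabled is true)

shuffleService.startIfEnabled() ==> ExternalShuffleService.start():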

```scala
/** Start the external shuffle service */
def start() {
  // Refuse to start a second server
  require(server == null, "Shuffle server already started")
  val authEnabled = securityManager.isAuthenticationEnabled()
  logInfo(s"Starting shuffle service on port $port (auth enabled = $authEnabled)")
  // Install an authentication bootstrap only when authentication is enabled
  val bootstraps: Seq[TransportServerBootstrap] =
    if (authEnabled) {
      Seq(new AuthServerBootstrap(transportConf, securityManager))
    } else {
      Nil
    }
  server = transportContext.createServer(port, bootstraps.asJava)
  shuffleServiceSource.registerMetricSet(server.getAllMetrics)
  shuffleServiceSource.registerMetricSet(blockHandler.getAllMetrics)
  masterMetricsSystem.registerSource(shuffleServiceSource)
  masterMetricsSystem.start()
}
```

3. Bind the Worker web UI address

```scala
webUi = new WorkerWebUI(this, workDir, webUiPort)
webUi.bind()
```

4. registerWithMaster() registers the Worker with the Master (the Master matches on the message type and replies)

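tryRegisterAllMasters uses a dedicated registration thread pool. Registering with a master is a blocking operation, so this pool must be able to run as many threads at once as there are master RPC addresses. That way the Worker can contact all masters concurrently.

Each registration attempt then calls sendRegisterMessageToMaster, which the Worker uses to communicate with the master and send it the registration information.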
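The thread-pool sizing argument can be shown with java.util.concurrent alone. In the sketch below, each registration attempt is assumed to be a blocking call. RegisterAllSketch, tryRegisterAll and the `register` callback are hypothetical names, not Spark's API. Unlike the real Worker, which learns the outcome later from the Master's reply message, the sketch simply waits for every attempt.

```scala
import java.util.concurrent.{Callable, Executors, Future, TimeUnit}

object RegisterAllSketch {
  // Hypothetical helper: one blocking registration attempt per master address.
  // The pool has masters.size threads, so every attempt can block at the same time
  // instead of queuing behind a slower master.
  def tryRegisterAll(masters: Seq[String], register: String => Boolean): Seq[Boolean] = {
    require(masters.nonEmpty, "need at least one master address")
    val pool = Executors.newFixedThreadPool(masters.size)
    try {
      val futures: Seq[Future[Boolean]] = masters.map { address =>
        pool.submit(new Callable[Boolean] {
          override def call(): Boolean = register(address)
        })
      }
      // The real Worker does not wait here; this sketch blocks (with a timeout).
      futures.map(_.get(10, TimeUnit.SECONDS))
    } finally {
      pool.shutdown()
    }
  }
}
```

As a usage check, pass three master addresses and a `register` callback that counts down a shared CountDownLatch(3) and then awaits it. Every attempt returns true only because all three run at once. With a two-thread pool, the first two attempts would wait out the await timeout and return false.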

Call chain on the Worker side:

registerWithMaster() ==> tryRegisterAllMasters() ==> sendRegisterMessageToMaster(masterEndpoint) ==>

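```scala
private def sendRegisterMessageToMaster(masterEndpoint: RpcEndpointRef): Unit = {
  masterEndpoint.send(RegisterWorker(
    workerId,               // ID of this Worker
    host,                   // host name of this node
    port,                   // port
    self,                   // this Worker's own RpcEndpointRef
    cores,                  // number of CPU cores
    memory,                 // memory size
    workerWebUiUrl,         // Worker web UI URL
    masterEndpoint.address)) // address of the master being contacted
}
```

5. The Master matches on the message type and replies with a message that tells the Worker whether registration succeeded or failed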
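The Master's RegisterWorker handler makes its decision through a fixed sequence of checks. That sequence can be summarized as a pure function. The reply types and `decide` below are hypothetical simplifications: Spark's real messages also carry endpoint refs, the master web UI URL and the master address. The sketch also ignores the case where the real registerWorker replaces a stale entry at the same address instead of rejecting the new worker.

```scala
object MasterDecisionSketch {
  // Hypothetical, simplified replies; not Spark's actual message classes.
  sealed trait RegisterReply
  case object MasterInStandby extends RegisterReply
  final case class RegisteredWorker(masterUrl: String) extends RegisterReply
  final case class RegisterWorkerFailed(message: String) extends RegisterReply

  // Same order of checks as the Master's RegisterWorker case:
  // standby master, then duplicate worker ID, then duplicate address, else accept.
  def decide(
      isStandby: Boolean,
      knownIds: Set[String],
      knownAddresses: Set[String],
      id: String,
      address: String,
      masterUrl: String): RegisterReply =
    if (isStandby) MasterInStandby
    else if (knownIds.contains(id)) RegisterWorkerFailed("Duplicate worker ID")
    else if (knownAddresses.contains(address))
      RegisterWorkerFailed(s"Attempted to re-register worker at same address: $address")
    else RegisteredWorker(masterUrl)
}
```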

```scala
override def receive: PartialFunction[Any, Unit] = {
  ......
  case RegisterWorker(
      id, workerHost, workerPort, workerRef, cores, memory, workerWebUiUrl, masterAddress) =>
    logInfo("Registering worker %s:%d with %d cores, %s RAM".format(
      workerHost, workerPort, cores, Utils.megabytesToString(memory)))
    if (state == RecoveryState.STANDBY) {
      // A standby master does not accept registrations
      workerRef.send(MasterInStandby)
    } else if (idToWorker.contains(id)) {
      // A worker with this ID is already registered
      workerRef.send(RegisterWorkerFailed("Duplicate worker ID"))
    } else {
      val worker = new WorkerInfo(id, workerHost, workerPort, cores, memory,
        workerRef, workerWebUiUrl)
      if (registerWorker(worker)) {
        // Success: persist the worker (for master recovery), reply, then reschedule resources
        persistenceEngine.addWorker(worker)
        workerRef.send(RegisteredWorker(self, masterWebUiUrl, masterAddress))
        schedule()
      } else {
        // A worker is already registered at the same address
        val workerAddress = worker.endpoint.address
        logWarning("Worker registration failed. Attempted to re-register worker at same " +
          "address: " + workerAddress)
        workerRef.send(RegisterWorkerFailed("Attempted to re-register worker at same address: "
          + workerAddress))
      }
    }
  .......
```

6. When the Master's reply arrives, the Worker handles it according to its message type. If registration succeeded, the Worker starts the forwordMessageScheduler timer and begins sending heartbeats to the Master at a fixed interval. It also reports the latest state of its executors to the Master.
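The heartbeat timer amounts to a fixed-rate task on a single-threaded scheduler. In Spark, the interval is a quarter of spark.worker.timeout, which gives 15 s with the 60 s default. The real Worker's scheduled task sends itself a SendHeartbeat message, and the Worker then sends Heartbeat(workerId, self) to the Master. The sketch below is a Spark-free model of that schedule. HeartbeatSketch, WorkerHeartbeats and the `sendHeartbeat` callback are hypothetical names.

```scala
import java.util.concurrent.{Executors, ScheduledExecutorService, ThreadFactory, TimeUnit}
import scala.util.control.NonFatal

object HeartbeatSketch {
  // Hypothetical stand-in for what the Worker does after a successful registration:
  // run sendHeartbeat() at a fixed rate on one background thread.
  final class WorkerHeartbeats(sendHeartbeat: () => Unit, intervalMs: Long) {
    private val scheduler: ScheduledExecutorService =
      Executors.newSingleThreadScheduledExecutor(new ThreadFactory {
        override def newThread(r: Runnable): Thread = {
          val t = new Thread(r, "heartbeat-sketch")
          t.setDaemon(true) // do not keep the JVM alive just for heartbeats
          t
        }
      })

    // First heartbeat immediately (initial delay 0), then one every intervalMs.
    def onRegistered(): Unit = {
      scheduler.scheduleAtFixedRate(new Runnable {
        // An exception escaping run() would cancel all future runs, so catch it.
        override def run(): Unit =
          try sendHeartbeat()
          catch { case NonFatal(e) => System.err.println(s"heartbeat failed: $e") }
      }, 0L, intervalMs, TimeUnit.MILLISECONDS)
      ()
    }

    def stop(): Unit = {
      scheduler.shutdownNow()
      ()
    }
  }

  // Interval derived from spark.worker.timeout (in seconds): a quarter of it, in ms.
  def heartbeatMillis(workerTimeoutSeconds: Long): Long = workerTimeoutSeconds * 1000 / 4
}
```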

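7. When a heartbeat arrives, the Master records the time of that Worker's latest heartbeat. Separately, the Master periodically checks the state of all Workers through the CheckForWorkerTimeOut message.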

```scala
case Heartbeat(workerId, worker) =>
  idToWorker.get(workerId) match {
    case Some(workerInfo) =>
      // Known worker: record the time of its latest heartbeat
      workerInfo.lastHeartbeat = System.currentTimeMillis()
    case None =>
      if (workers.map(_.id).contains(workerId)) {
        logWarning(s"Got heartbeat from unregistered worker $workerId." +
          " Asking it to re-register.")
        worker.send(ReconnectWorker(masterUrl))
      } else {
        logWarning(s"Got heartbeat from unregistered worker $workerId." +
          " This worker was never registered, so ignoring the heartbeat.")
      }
  }
........
case CheckForWorkerTimeOut =>
  timeOutDeadWorkers()
```

8. timeOutDeadWorkers checks the state of each Worker and removes any Worker that has lost contact

```scala
// Check for and remove workers whose heartbeats have timed out
private def timeOutDeadWorkers() {
  // Copy the workers into an array so we don't modify the hashset while iterating through it
  // Current time
  val currentTime = System.currentTimeMillis()
  // Select the workers whose last heartbeat is older than WORKER_TIMEOUT_MS (60 seconds by default)
  val toRemove = workers.filter(_.lastHeartbeat < currentTime - WORKER_TIMEOUT_MS).toArray
  // Handle each timed-out worker
  for (worker <- toRemove) {
    // Not yet marked DEAD: log a warning and remove the worker
    if (worker.state != WorkerState.DEAD) {
      logWarning("Removing %s because we got no heartbeat in %d seconds".format(
        worker.id, WORKER_TIMEOUT_MS / 1000))
      removeWorker(worker)
    } else {
      // Already DEAD: once its last heartbeat is older than (REAPER_ITERATIONS + 1) timeouts,
      // drop it from the workers set entirely
      if (worker.lastHeartbeat < currentTime - ((REAPER_ITERATIONS + 1) * WORKER_TIMEOUT_MS)) {
        workers -= worker // we've seen this DEAD worker in the UI, etc. for long enough; cull it
      }
    }
  }
}
```
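timeOutDeadWorkers works in two stages. A worker that has timed out and is not yet DEAD is passed to removeWorker. A worker that is already DEAD stays in the workers set (and the UI) until its last heartbeat is (REAPER_ITERATIONS + 1) timeouts old. The sketch below expresses the same filtering as a pure function. WorkerRecord, the Alive/Dead states and `reap` are hypothetical simplifications, and the real method mutates the Master's state instead of returning lists.

```scala
object TimeoutSketch {
  sealed trait State
  case object Alive extends State
  case object Dead extends State

  // Hypothetical, minimal worker record; Spark's WorkerInfo carries far more fields.
  final case class WorkerRecord(id: String, lastHeartbeat: Long, state: State)

  // Returns (toRemove, toCull):
  //  - toRemove: timed out but not yet DEAD (what the Master passes to removeWorker)
  //  - toCull: already DEAD and silent for (reaperIterations + 1) timeouts
  def reap(workers: Seq[WorkerRecord], now: Long, timeoutMs: Long, reaperIterations: Int)
      : (Seq[WorkerRecord], Seq[WorkerRecord]) = {
    val expired = workers.filter(_.lastHeartbeat < now - timeoutMs)
    val toRemove = expired.filter(_.state != Dead)
    val toCull = expired.filter { w =>
      w.state == Dead && w.lastHeartbeat < now - (reaperIterations + 1) * timeoutMs
    }
    (toRemove, toCull)
  }
}
```

Take Spark's defaults: spark.worker.timeout = 60 s and spark.dead.worker.persistence = 15 (the source of REAPER_ITERATIONS). Under those values, a DEAD worker is culled once its last heartbeat is more than 16 × 60 s = 16 minutes old.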

9. Register the worker source with the metricsSystem and start the metrics system

At this point the Worker has finished starting up.
