Important reference material on libnids

http://sourcecodebrowser.com/libnids/1.23/tcp_8c.html

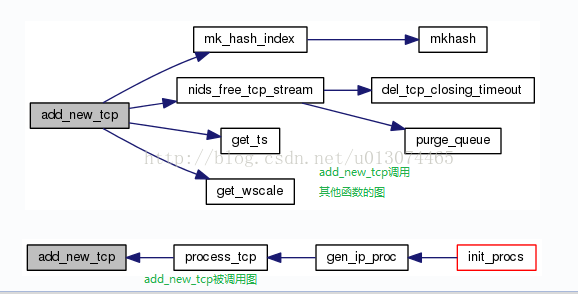

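This site provides detailed diagrams of the call relationships between functions in libnids, which is very helpful. For example, the figure shows which functions `add_new_tcp` calls when a new stream is added in tcp.c.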

tcp.c

/*

Copyright (c) 1999 Rafal Wojtczuk <nergal@7bulls.com>. All rights reserved.

See the file COPYING for license details.

*/

#include <config.h>

#include <sys/types.h>

#include <stdio.h>

#include <stdlib.h>

#include <string.h>

#include <unistd.h>

#include <netinet/in.h>

#include <netinet/in_systm.h>

#include <netinet/ip.h>

#include <netinet/tcp.h>

#include <netinet/ip_icmp.h>

#include "checksum.h"

#include "scan.h"

#include "tcp.h"

#include "util.h"

#include "nids.h"

#include "hash.h"

#if ! HAVE_TCP_STATES

enum {

TCP_ESTABLISHED = 1,

TCP_SYN_SENT,

TCP_SYN_RECV,

TCP_FIN_WAIT1,

TCP_FIN_WAIT2,

TCP_TIME_WAIT,

TCP_CLOSE,

TCP_CLOSE_WAIT,

TCP_LAST_ACK,

TCP_LISTEN,

TCP_CLOSING /* now a valid state */

};

#endif

#define FIN_SENT 120

#define FIN_CONFIRMED 121

#define COLLECT_cc 1

#define COLLECT_sc 2

#define COLLECT_ccu 4

#define COLLECT_scu 8

// Next expected sequence number: the sender's first data sequence number plus the normal and urgent bytes received so far

#define EXP_SEQ (snd->first_data_seq + rcv->count + rcv->urg_count)
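The arithmetic behind `EXP_SEQ` can be tried out in isolation. The following is a minimal sketch, assuming a simplified stand-in struct (`demo_half` and `demo_exp_seq` are hypothetical names, not part of libnids) that holds only the three fields the macro reads.

```c
#include <stdint.h>

/* Simplified stand-in for the fields EXP_SEQ reads; not the real half_stream. */
struct demo_half {
    uint32_t first_data_seq; /* sequence number of the sender's first data byte */
    uint32_t count;          /* normal data bytes received so far */
    uint32_t urg_count;      /* urgent bytes received so far */
};

/* Same sum as EXP_SEQ. The result is truncated to 32 bits, so it wraps
 * modulo 2^32 just like TCP sequence numbers do. */
static uint32_t demo_exp_seq(const struct demo_half *snd,
                             const struct demo_half *rcv)
{
    return (uint32_t)(snd->first_data_seq + rcv->count + rcv->urg_count);
}
```

For instance, with a first data sequence number of 1000, 500 normal bytes and 1 urgent byte received, the next expected sequence number is 1501.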

extern struct proc_node *tcp_procs;

static struct tcp_stream **tcp_stream_table;

static struct tcp_stream *streams_pool;

static int tcp_num = 0;

static int tcp_stream_table_size;

static int max_stream;

static struct tcp_stream *tcp_latest = 0, *tcp_oldest = 0;

static struct tcp_stream *free_streams;

static struct ip *ugly_iphdr;

struct tcp_timeout *nids_tcp_timeouts = 0;

// Free the queued segments of one direction (half_stream) of a TCP stream

static void purge_queue(struct half_stream * h)

{

struct skbuff *tmp, *p = h->list;

while (p) {

free(p->data);

tmp = p->next;

free(p);

p = tmp;

}

h->list = h->listtail = 0;

h->rmem_alloc = 0;

}

static void

add_tcp_closing_timeout(struct tcp_stream * a_tcp)

{

struct tcp_timeout *to;

struct tcp_timeout *newto;

if (!nids_params.tcp_workarounds)

return;

newto = malloc(sizeof (struct tcp_timeout));

if (!newto)

nids_params.no_mem("add_tcp_closing_timeout");

newto->a_tcp = a_tcp;

newto->timeout.tv_sec = nids_last_pcap_header->ts.tv_sec + 10;

newto->prev = 0;

for (newto->next = to = nids_tcp_timeouts; to; newto->next = to = to->next) {

if (to->a_tcp == a_tcp) {

free(newto);

return;

}

if (to->timeout.tv_sec > newto->timeout.tv_sec)

break;

newto->prev = to;

}

if (!newto->prev)

nids_tcp_timeouts = newto;

else

newto->prev->next = newto;

if (newto->next)

newto->next->prev = newto;

}
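The insertion logic of `add_tcp_closing_timeout` is easier to follow on a stripped-down list. The sketch below is an illustration under assumptions: `demo_timeout` and `demo_add_timeout` are hypothetical names, an integer `id` stands in for the `a_tcp` pointer, and the head is returned instead of being stored in a global.

```c
#include <stdlib.h>

/* Hypothetical, simplified node: `id` stands in for the a_tcp pointer. */
struct demo_timeout {
    int id;
    long deadline;
    struct demo_timeout *next;
    struct demo_timeout *prev;
};

/* Insert (id, deadline) so the list stays sorted by ascending deadline and
 * return the (possibly new) head. As in the original loop, a duplicate id is
 * only detected among the entries visited before the insertion point. */
static struct demo_timeout *
demo_add_timeout(struct demo_timeout *head, int id, long deadline)
{
    struct demo_timeout *prev = NULL;
    struct demo_timeout *cur = head;
    struct demo_timeout *node;

    while (cur) {
        if (cur->id == id)
            return head;            /* already scheduled, nothing to do */
        if (cur->deadline > deadline)
            break;                  /* found the first later entry */
        prev = cur;
        cur = cur->next;
    }

    node = malloc(sizeof *node);
    if (!node)
        return head;                /* sketch: silently give up on OOM */
    node->id = id;
    node->deadline = deadline;
    node->prev = prev;
    node->next = cur;
    if (cur)
        cur->prev = node;
    if (prev) {
        prev->next = node;
        return head;
    }
    return node;                    /* inserted at the front */
}
```

Keeping the list sorted is what lets `tcp_check_timeouts` stop at the first entry whose deadline has not yet passed.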

static void

del_tcp_closing_timeout(struct tcp_stream * a_tcp)

{

struct tcp_timeout *to;

if (!nids_params.tcp_workarounds)

return;

for (to = nids_tcp_timeouts; to; to = to->next)

if (to->a_tcp == a_tcp)

break;

if (!to)

return;

if (!to->prev)

nids_tcp_timeouts = to->next;

else

to->prev->next = to->next;

if (to->next)

to->next->prev = to->prev;

free(to);

}

// Remove a TCP stream and release its resources

void

nids_free_tcp_stream(struct tcp_stream * a_tcp)

{

// struct tcp_stream records the stream's hash index; it is needed below

int hash_index = a_tcp->hash_index;

struct lurker_node *i, *j;

del_tcp_closing_timeout(a_tcp);

// Purge the queued segments of both half_streams

purge_queue(&a_tcp->server);

purge_queue(&a_tcp->client);

// Unlink the stream from its hash table chain and free its buffers

if (a_tcp->next_node)

a_tcp->next_node->prev_node = a_tcp->prev_node;

if (a_tcp->prev_node)

a_tcp->prev_node->next_node = a_tcp->next_node;

else

tcp_stream_table[hash_index] = a_tcp->next_node;

if (a_tcp->client.data)

free(a_tcp->client.data);

if (a_tcp->server.data)

free(a_tcp->server.data);

if (a_tcp->next_time)

a_tcp->next_time->prev_time = a_tcp->prev_time;

if (a_tcp->prev_time)

a_tcp->prev_time->next_time = a_tcp->next_time;

if (a_tcp == tcp_oldest)

tcp_oldest = a_tcp->prev_time;

if (a_tcp == tcp_latest)

tcp_latest = a_tcp->next_time;

i = a_tcp->listeners;

while (i) {

j = i->next;

free(i);

i = j;

}

a_tcp->next_free = free_streams;

free_streams = a_tcp;

tcp_num--; // one stream was removed, so decrement the count of active TCP streams

}
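Note that `nids_free_tcp_stream` does not `free()` the stream itself: it pushes it onto `free_streams` through `next_free`. The sketch below illustrates that free-list pattern on a tiny fixed pool. All names (`demo_stream`, `demo_pool_init`, `demo_acquire`, `demo_release`) are hypothetical, and the acquire side is an assumption, since the allocating code is not part of the listing here.

```c
#include <stddef.h>

#define DEMO_POOL_SIZE 4

/* Hypothetical pooled object; only the free-list link matters here. */
struct demo_stream {
    int in_use;
    struct demo_stream *next_free;
};

static struct demo_stream demo_pool[DEMO_POOL_SIZE];
static struct demo_stream *demo_free_list;

/* Chain every pool slot into the free list. */
static void demo_pool_init(void)
{
    for (int i = 0; i < DEMO_POOL_SIZE; i++) {
        demo_pool[i].in_use = 0;
        demo_pool[i].next_free =
            (i + 1 < DEMO_POOL_SIZE) ? &demo_pool[i + 1] : NULL;
    }
    demo_free_list = &demo_pool[0];
}

/* Pop a slot from the free list, or return NULL when the pool is exhausted. */
static struct demo_stream *demo_acquire(void)
{
    struct demo_stream *s = demo_free_list;
    if (!s)
        return NULL;
    demo_free_list = s->next_free;
    s->in_use = 1;
    return s;
}

/* Push a slot back, mirroring the last lines of nids_free_tcp_stream. */
static void demo_release(struct demo_stream *s)
{
    s->in_use = 0;
    s->next_free = demo_free_list;
    demo_free_list = s;
}
```

Because release pushes to the front, the most recently released slot is the next one handed out, and no call to `malloc` or `free` is needed per stream.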

void

tcp_check_timeouts(struct timeval *now)

{

struct tcp_timeout *to;

struct tcp_timeout *next;

struct lurker_node *i;

for (to = nids_tcp_timeouts; to; to = next) {

if (now->tv_sec < to->timeout.tv_sec)

return;

to->a_tcp->nids_state = NIDS_TIMED_OUT;

for (i = to->a_tcp->listeners; i; i = i->next)

(i->item) (to->a_tcp, &i->data);

next = to->next;

nids_free_tcp_stream(to->a_tcp);

}

}

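// Calls mkhash to hash the source/destination IP addresses and ports into a single

// integer that is already sufficiently random, then takes the remainder to get the slot in the hash table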

static int

mk_hash_index(struct tuple4 addr)

{

int hash=mkhash(addr.saddr, addr.source, addr.daddr, addr.dest);

return hash % tcp_stream_table_size;

}
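The "hash, then take the remainder" step can be illustrated without libnids. The sketch below is not `mkhash`: `demo_hash` is a toy FNV-style mixing function chosen purely for illustration, and `demo_tuple4` and `demo_hash_index` are likewise hypothetical names. It uses unsigned arithmetic throughout so the remainder can never be negative.

```c
#include <stdint.h>

/* Hypothetical stand-in for struct tuple4. */
struct demo_tuple4 {
    uint32_t saddr, daddr;
    uint16_t source, dest;
};

/* Toy FNV-style mixing over 32-bit words. This is NOT libnids' mkhash. */
static uint32_t demo_hash(struct demo_tuple4 a)
{
    uint32_t words[3];
    uint32_t h = 2166136261u;               /* FNV offset basis */

    words[0] = a.saddr;
    words[1] = a.daddr;
    words[2] = ((uint32_t)a.source << 16) | a.dest;
    for (int i = 0; i < 3; i++) {
        h ^= words[i];
        h *= 16777619u;                     /* FNV prime */
    }
    return h;
}

/* Reduce the hash to a slot in a table of table_size buckets
 * (table_size must be non-zero). */
static unsigned demo_hash_index(struct demo_tuple4 a, unsigned table_size)
{
    return demo_hash(a) % table_size;
}
```

The same four-tuple always maps to the same slot, which is what lets later packets of a connection find the stream created by the first one.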

static int get_ts(struct tcphdr * this_tcphdr, unsigned int * ts)

{

int len = 4 * this_tcphdr->th_off;

unsigned int tmp_ts;

// Step past the fixed header to the start of the TCP options

unsigned char * options = (unsigned char*)(this_tcphdr + 1);

int ind = 0, ret = 0;

while (ind <= len - (int)sizeof (struct tcphdr) - 10 )

switch (options[ind]) {

case 0: // TCPOPT_EOL: end of the option list

return ret;

case 1: // TCPOPT_NOP: no-operation, used to pad the options to a multiple of 4 bytes

ind++;

continue;

case 8: // TCPOPT_TIMESTAMP: timestamp option

// Skip the kind and length bytes to reach the 4-byte timestamp value and copy it into tmp_ts

memcpy((char*)&tmp_ts, options + ind + 2, 4);

*ts=ntohl(tmp_ts);

ret = 1;

/* no break, intentionally */

default:

if (options[ind+1] < 2 ) /* "silly option" */

return ret;

ind += options[ind+1];

}

return ret;

}
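The option walk in `get_ts` can be reproduced on a plain byte buffer. The following is a minimal sketch under assumptions: `demo_find_tsval` is a hypothetical helper that takes the options area and its length directly instead of a `struct tcphdr`, checks bounds explicitly, and returns at the first timestamp option rather than continuing the scan as the original does.

```c
#include <stddef.h>
#include <stdint.h>

/* Scan a TCP options area for the timestamp option (kind 8, length 10).
 * Returns 1 and stores TSval in host byte order, or 0 if the option is
 * absent or the options are malformed. */
static int demo_find_tsval(const unsigned char *opt, size_t len,
                           uint32_t *tsval)
{
    size_t i = 0;

    while (i < len) {
        unsigned char kind = opt[i];
        unsigned char olen;

        if (kind == 0)              /* EOL: end of option list */
            return 0;
        if (kind == 1) {            /* NOP: single padding byte */
            i++;
            continue;
        }
        if (i + 1 >= len)           /* no room for the length byte */
            return 0;
        olen = opt[i + 1];
        if (olen < 2 || i + olen > len)
            return 0;               /* "silly" or truncated option */
        if (kind == 8 && olen == 10) {
            /* TSval is the 4 bytes after kind and length, big-endian. */
            *tsval = ((uint32_t)opt[i + 2] << 24) |
                     ((uint32_t)opt[i + 3] << 16) |
                     ((uint32_t)opt[i + 4] << 8) |
                      (uint32_t)opt[i + 5];
            return 1;
        }
        i += olen;                  /* skip any other option */
    }
    return 0;
}
```

Given the bytes `01 01 08 0a 00 00 30 39 00 00 00 00` (two NOPs followed by a timestamp option), the function stores 12345 in `*tsval`.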

static int get_wscale(struct tcphdr * this_tcphdr, unsigned int * ws)

{

int len = 4 * this_tcphdr->th_off;

unsigned int tmp_ws;

unsigned char * options = (unsigned char*)(this_tcphdr + 1);

int ind = 0, ret = 0;

*ws=1;

while (ind <= len - (int)sizeof (struct tcphdr) - 3 )

switch (options[ind]) {

case 0: /* TCPOPT_EOL */

return ret;

case 1: /* TCPOPT_NOP */

ind++;

continue;

case 3: // TCPOPT_WSCALE: window scale option

// Offset to the shift count inside the window scale option

tmp_ws=options[ind+2];

if (tmp_ws>14) // the shift count is capped at 14

tmp_ws=14;

*ws=1<<tmp_ws;

ret = 1;

/* no break, intentionally */

default

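This article walks through the tcp.c file in libnids, focusing on how it handles TCP streams, including the call structure of the key function add_new_tcp. The linked page provides detailed diagrams of the function call relationships, which help in understanding how libnids works.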
