Intellij idea

准备工作

- 在虚拟机安装hadoop集群

- 开发机配置

(1)idean版本15.0.4

(2)jdk版本1.7.0_71

(3)Mac OS X 10.11.6

(4)hadoop安装(hadoop-2.5.2.tar.gz解压)

/Users/zhangws/opt/hadoop-2.5.2 - 配置环境变量

HADOOP_HOME=/Users/zhangws/opt/hadoop-2.5.2

HADOOP_BIN_PATH=%HADOOP_HOME%\bin

HADOOP_PREFIX=/Users/zhangws/opt/hadoop-2.5.2

另外,PATH变量在最后追加;%HADOOP_HOME%\bin创建工程

pom.xml文件内容

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>com.zw</groupId>

<artifactId>hadoop-demo</artifactId>

<version>1.0-SNAPSHOT</version>

<packaging>jar</packaging>

<name>hadoop-demo</name>

<url>http://maven.apache.org</url>

<properties>

<project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>

<hadoop.version>2.5.2</hadoop.version>

</properties>

<dependencies>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-common</artifactId>

<version>${hadoop.version}</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-hdfs</artifactId>

<version>${hadoop.version}</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>${hadoop.version}</version>

</dependency>

<dependency>

<groupId>junit</groupId>

<artifactId>junit</artifactId>

<version>3.8.1</version>

<scope>test</scope>

</dependency>

</dependencies>

</project>core-site.xml配置(resources目录)

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://master:9000</value>

</property>

</configuration>注:value指定hadoop的地址(虚拟机)

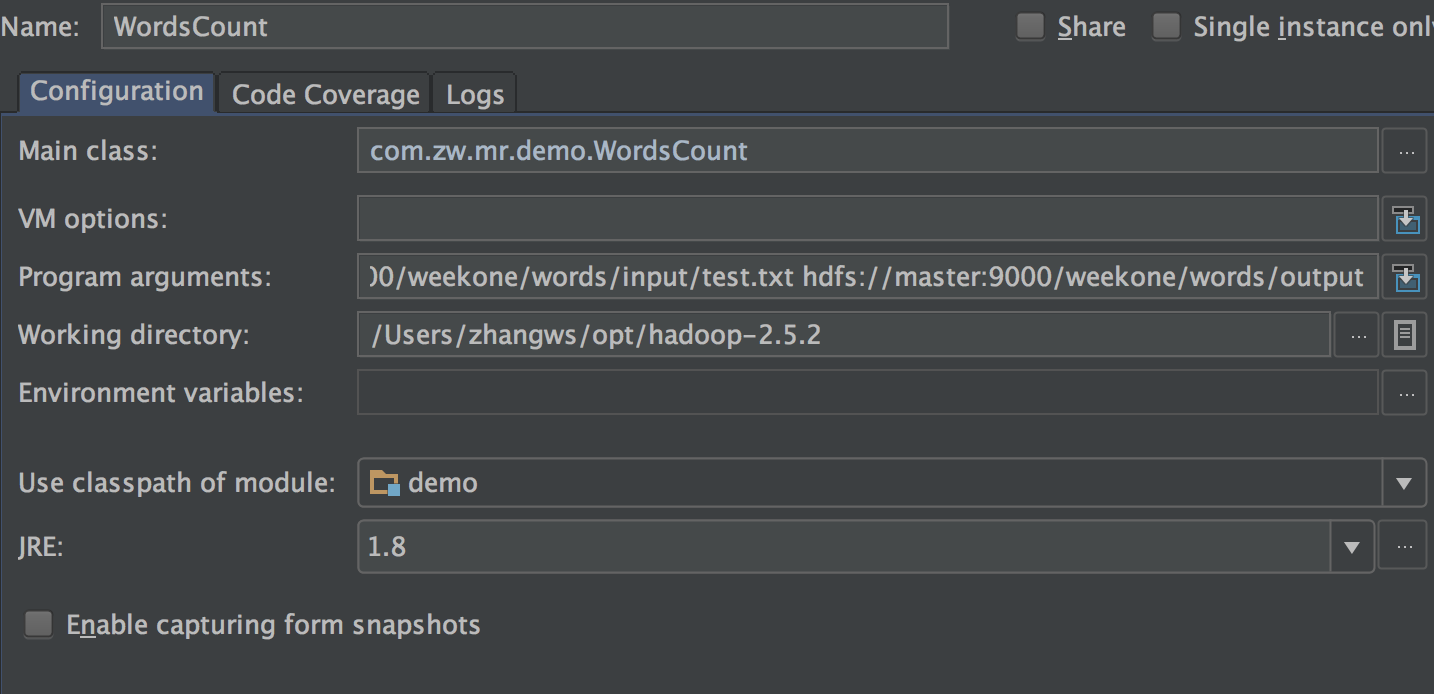

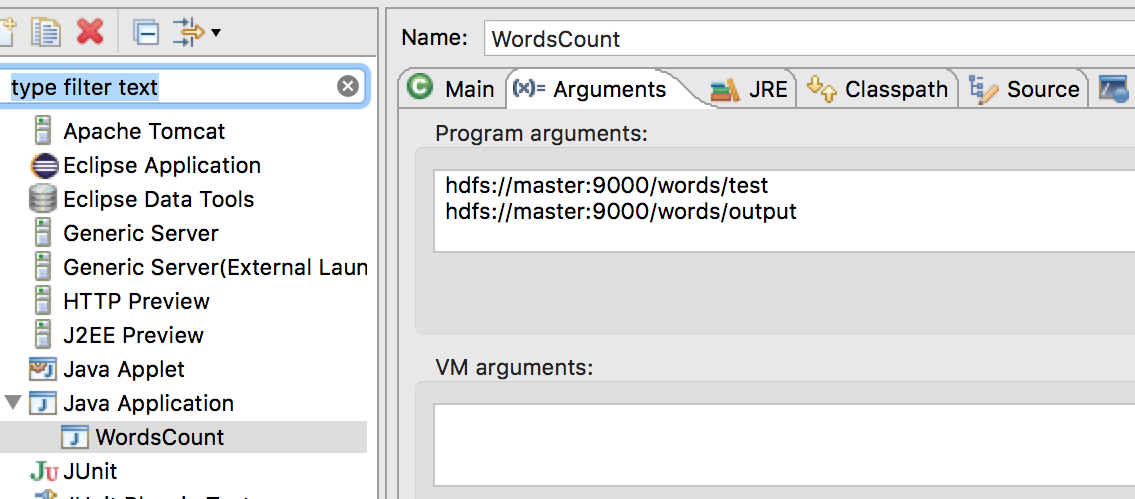

设置运行参数

Working directory是本地hadoop的home路径;

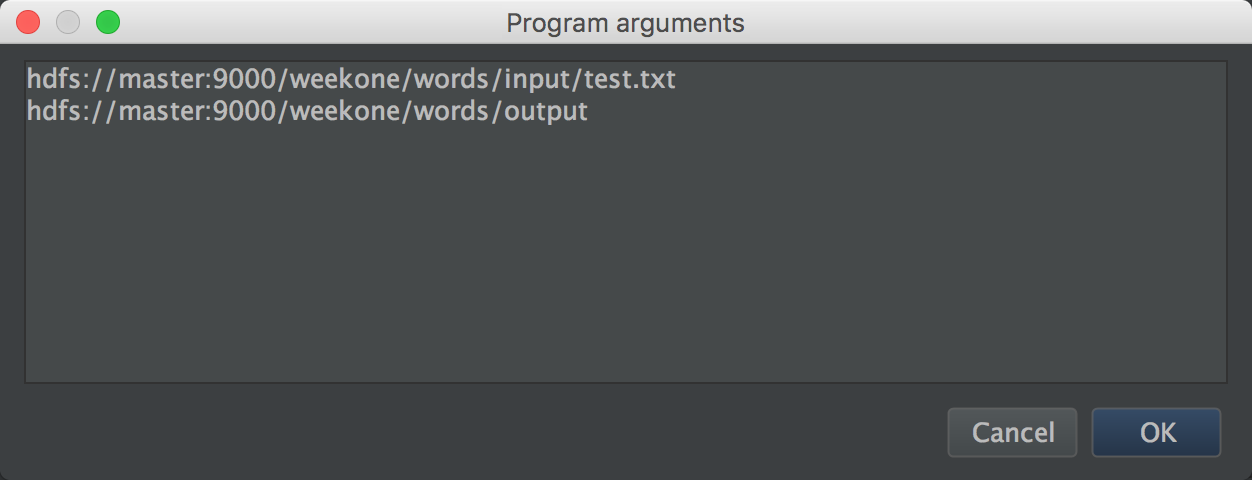

Program arguments的内容如下:

hdfs://master:9000/weekone/words/input/test.txt

hdfs://master:9000/weekone/words/output

分别为输入参数和输出参数。

注:

如果input/test.txt文件没有,请先手动上传;

/output/ 必须是不存在的,否则程序运行到最后,发现目标目录存在,也会报错;

按照上面步骤就可以在适当的位置打断点,调试了。

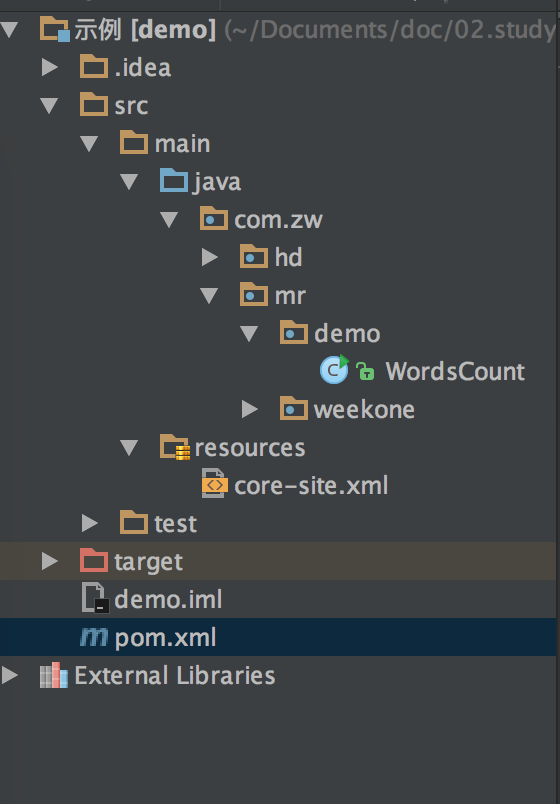

示例

package com.zw.mr.demo;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.Reducer;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import org.apache.hadoop.util.GenericOptionsParser;

import java.io.IOException;

/**

* 这是统计单词个数的例子

*

* Created by zhangws on 16/7/31.

*/

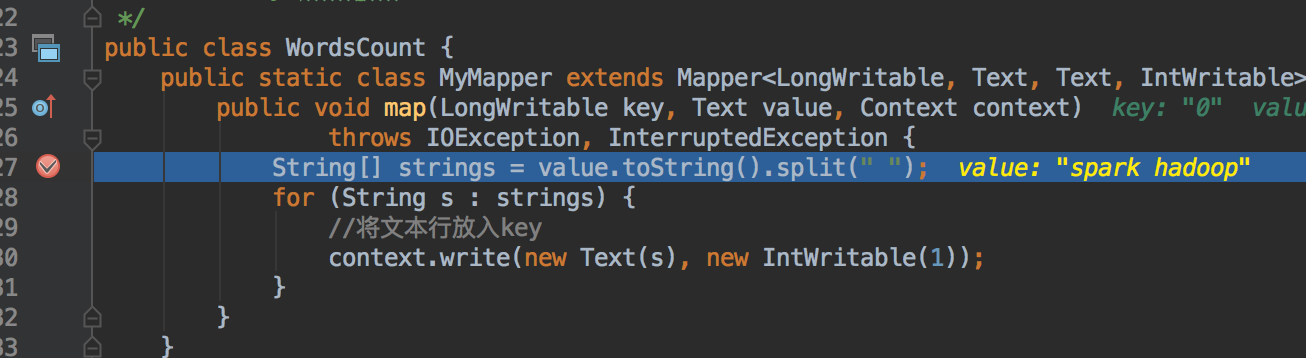

public class WordsCount {

public static class MyMapper extends Mapper<LongWritable, Text, Text, IntWritable> {

public void map(LongWritable key, Text value, Context context)

throws IOException, InterruptedException {

String[] strings = value.toString().split(" ");

for (String s : strings) {

//将文本行放入key

context.write(new Text(s), new IntWritable(1));

}

}

}

public static class MyReducer extends Reducer<Text, IntWritable, Text, IntWritable> {

public void reduce(Text key, Iterable<IntWritable> values, Context context)

throws IOException, InterruptedException {

int count = 0;

for (IntWritable v : values) {

count += v.get();

}

//输出key

context.write(key, new IntWritable(count));

}

}

public static void main(String[] args) throws Exception {

Configuration conf = new Configuration();

String[] otherArgs = new GenericOptionsParser(conf, args).getRemainingArgs();

if (otherArgs.length < 2) {

System.err.println("Usage: wordcount <in> [<in>...] <out>");

System.exit(2);

}

//先删除output目录

rmr(conf, otherArgs[otherArgs.length - 1]);

Job job = Job.getInstance(conf, "WordsCount");

job.setJarByClass(WordsCount.class);

job.setMapperClass(MyMapper.class);

job.setCombinerClass(MyReducer.class);

job.setReducerClass(MyReducer.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(IntWritable.class);

FileInputFormat.addInputPath(job, new Path(otherArgs[0]));

FileOutputFormat.setOutputPath(job, new Path(otherArgs[1]));

if (job.waitForCompletion(true)) {

cat(conf, otherArgs[1] + "/part-r-00000");

System.out.println("success");

} else {

System.out.println("fail");

}

}

/**

* 删除指定目录

*

* @param conf

* @param dirPath

*

* @throws IOException

*/

private static void rmr(Configuration conf, String dirPath) throws IOException {

boolean delResult = false;

// FileSystem fs = FileSystem.get(conf);

Path targetPath = new Path(dirPath);

FileSystem fs = targetPath.getFileSystem(conf);

if (fs.exists(targetPath)) {

delResult = fs.delete(targetPath, true);

if (delResult) {

System.out.println(targetPath + " has been deleted sucessfullly.");

} else {

System.out.println(targetPath + " deletion failed.");

}

}

return delResult;

}

/**

* 输出指定文件内容

*

* @param conf HDFS配置

* @param filePath 文件路径

*

* @return 文件内容

*

* @throws IOException

*/

public static void cat(Configuration conf, String filePath) throws IOException {

// FileSystem fileSystem = FileSystem.get(conf);

InputStream in = null;

Path file = new Path(filePath);

FileSystem fileSystem = file.getFileSystem(conf);

try {

in = fileSystem.open(file);

IOUtils.copyBytes(in, System.out, 4096, true);

} finally {

if (in != null) {

IOUtils.closeStream(in);

}

}

}

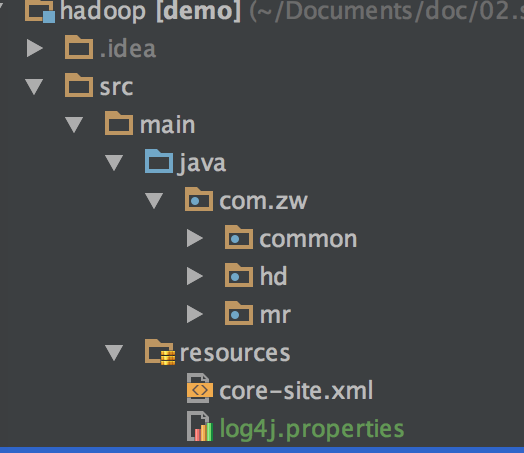

}日志文件

log4j.rootLogger=INFO, stdout

#log4j.logger.org.springframework=INFO

#log4j.logger.org.apache.activemq=INFO

#log4j.logger.org.apache.activemq.spring=WARN

#log4j.logger.org.apache.activemq.store.journal=INFO

#log4j.logger.org.activeio.journal=INFO

log4j.appender.stdout=org.apache.log4j.ConsoleAppender

log4j.appender.stdout.layout=org.apache.log4j.PatternLayout

log4j.appender.stdout.layout.ConversionPattern=%d{ABSOLUTE} | %-5.5p | %-16.16t | %-32.32c{1} | %-32.32C %4L | %m%n权限设置

由于客户端与服务器的权限问题,对输入目录等需要赋予授权

hdfs dfs -chomod 777 test/

或者hdfs-site.xml里添加

<property>

<name>dfs.permissions</name>

<value>false</value>

</property>运行结果

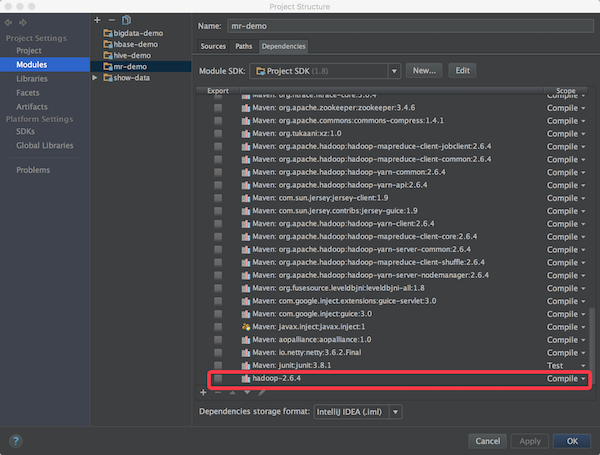

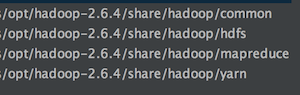

可能会遇到下面问题(本人是用自编译的hadoop-2.6.4遇到的)

java.io.IOException: No FileSystem for scheme: hdfs

Eclipse

准备工作

- 在虚拟机安装hadoop集群

- 开发机配置

(1)Eclipse Version: Mars.2 Release (4.5.2)

(2)jdk版本1.7.0_71

(3)Mac OS X 10.11.6

(4)hadoop安装(hadoop-2.5.2.tar.gz解压)

/Users/zhangws/opt/hadoop-2.5.2 - 环境变量同上

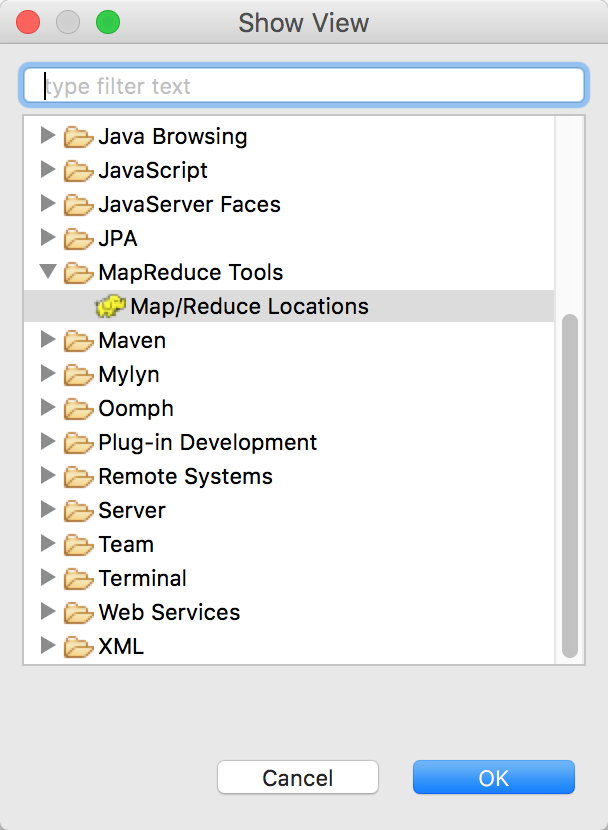

- 安装插件

https://github.com/winghc/hadoop2x-eclipse-plugin

下载hadoop-eclipse-plugin-2.6.0.jar,放入eclipse的plugins目录,启动eclipse

配置eclipse环境

preferences->hadoop map/reduce 指定mac上的hadoop根目录(即:$HADOOP_HOME)

配置hadoop-eclipse-plugin插件

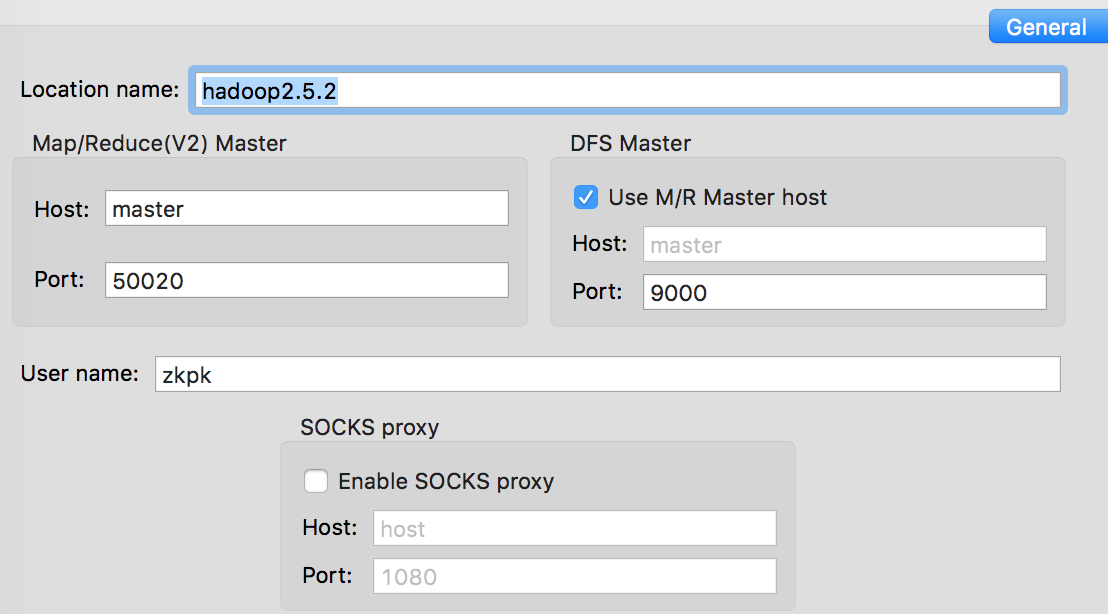

Location name 这里就是起个名字,随便起

Map/Reduce(V2) Master Host 这里就是虚拟机里hadoop master对应的IP地址,下面的端口对应 hdfs-site.xml里dfs.datanode.ipc.address属性所指定的端口,默认端口50020

DFS Master Port: 这里的端口,对应core-site.xml里fs.defaultFS所指定的端口

最后的user name要跟虚拟机里运行hadoop的用户名一致,我是用zkpk身份安装运行hadoop 2.5.2的,所以这里填写zkpk,如果你是用root安装的,相应的改成root

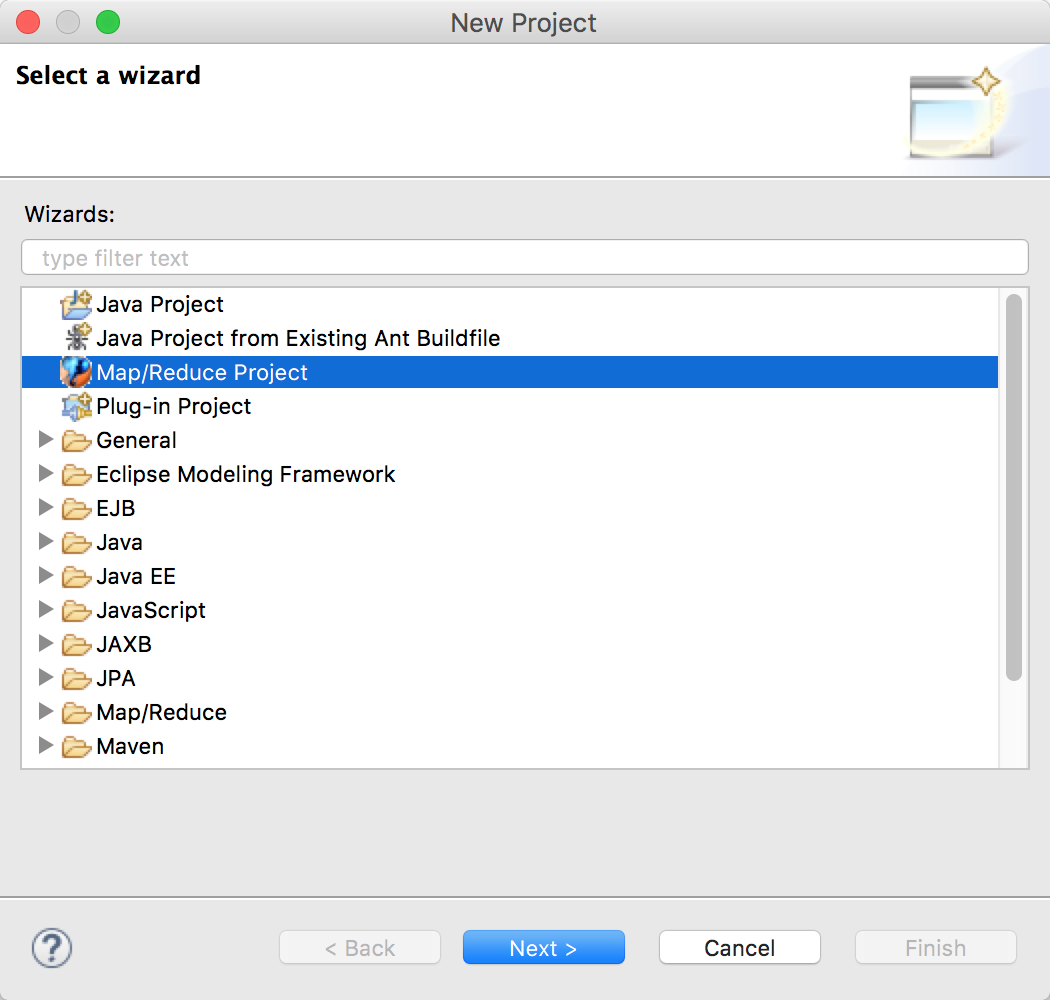

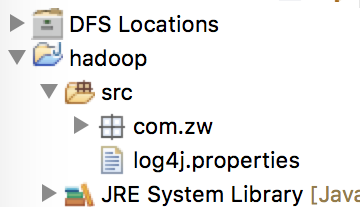

新建工程

运行参数

参考:

http://www.cnblogs.com/yjmyzz/p/how-to-remote-debug-hadoop-with-eclipse-and-intellij-idea.html

2万+

2万+

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?