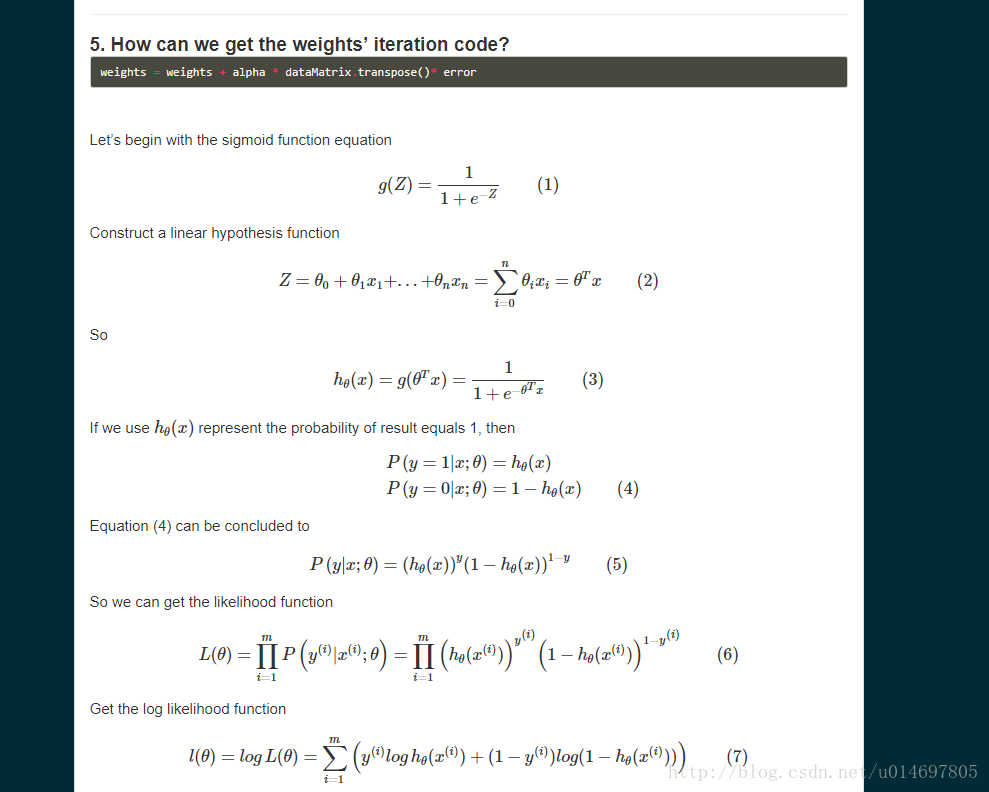

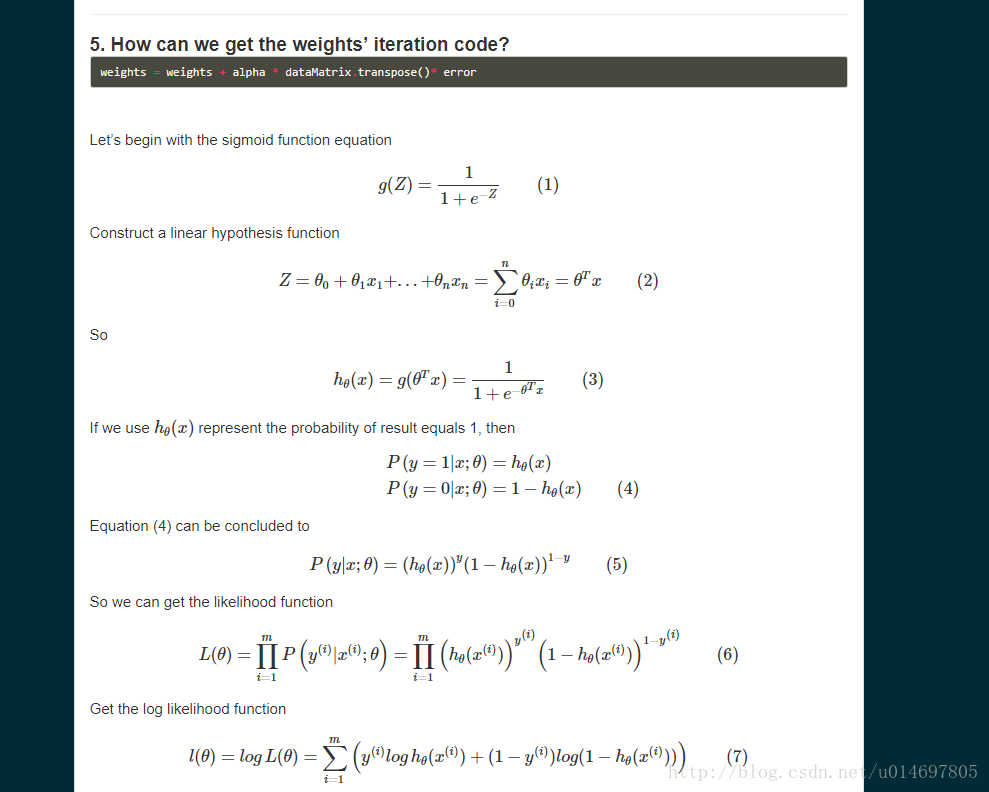

Derivation, taken from another author's reference.

Logistic regression implemented with gradient descent
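For readers who cannot view the derivation images, the textbook form of the update is sketched below. It is assumed, not confirmed, that the images use the same notation. Here $X$ is the design matrix with a leading column of ones (`dataMat` in the code), $y$ the label vector (`labelMat`), $w$ the weights, and $\alpha$ the step size.

```latex
\begin{aligned}
\sigma(z) &= \frac{1}{1 + e^{-z}}, \qquad h = \sigma(Xw) \\
\ell(w) &= \sum_{i=1}^{m} \Big[ y_i \log h_i + (1 - y_i) \log (1 - h_i) \Big] \\
\nabla_w \ell(w) &= X^{\top} (y - h) \\
w &\leftarrow w + \alpha \, X^{\top} (y - h)
\end{aligned}
```

Maximizing the log-likelihood $\ell(w)$ by gradient ascent is the same as minimizing $-\ell(w)$ by gradient descent, which is why code of this kind is often named "ascent" even when the text says "descent".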

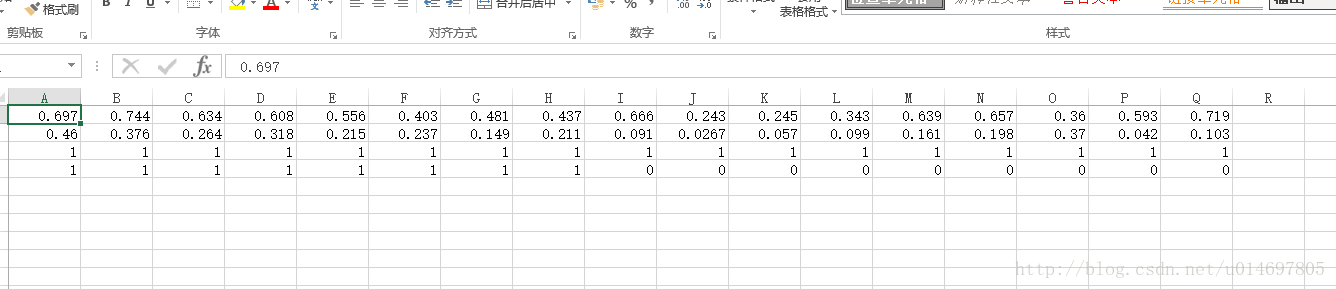

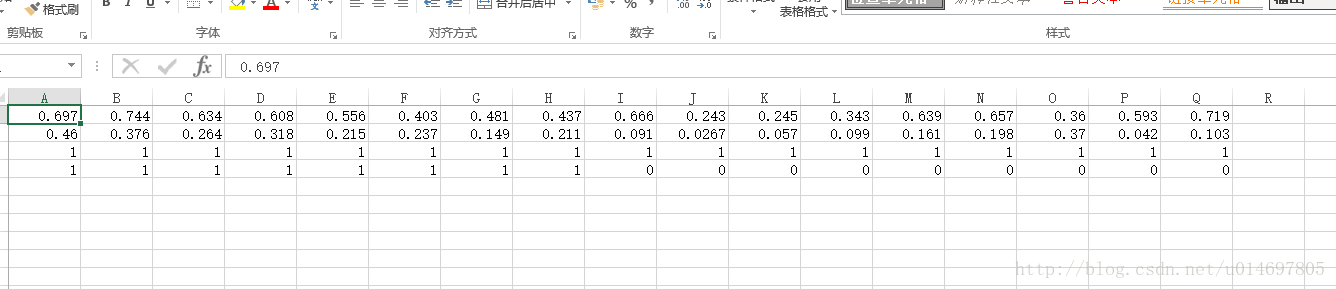

Dataset

Code

# -*- coding: utf-8 -*-
import numpy as np
from numpy import *
import pandas as pd
import matplotlib.pyplot as plt

# Read the data into X and y. The slicing below takes rows 0-1 as the two
# features and row 3 as the label, so the CSV is assumed to store one
# variable per row.
dataset = np.loadtxt(r'C:\Users\zmy\Desktop\titanic\watermelon.csv', delimiter=",")
X = dataset[0:2, :].transpose()   # shape (m, 2)
y = dataset[3, :]                 # shape (m,)

df = pd.DataFrame(X, columns=['density', 'ratio_sugar'])
m, n = df.shape
df['norm'] = np.ones(m)           # constant column for the bias term

# Design matrix with the bias column first, plus the label vector
dataMat = df[['norm', 'density', 'ratio_sugar']].values
labelMat = np.array(y)

def sigmoid(inX):
    # Logistic function, applied element-wise
    return 1.0 / (1 + np.exp(-inX))

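# Gradient descent, written here as gradient ascent on the log-likelihood
def gradAscend(dataMatIn, classLabels):
    dataMatrix = np.asarray(dataMatIn, dtype=float)
    # Column vector of labels, so that (labels - h) stays m x 1
    labels = np.asarray(classLabels, dtype=float).reshape(-1, 1)
    m, n = dataMatrix.shape
    alpha = 0.1
    maxCycle = 500
    weights = np.ones((n, 1))
    for i in range(maxCycle):
        a = np.dot(dataMatrix, weights)
        h = sigmoid(a)
        error = labels - h
        # The matrix product X^T (y - h) is the gradient of the log-likelihood
        weights = weights + alpha * np.dot(dataMatrix.transpose(), error)
    return weights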
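The batch update is sensitive to array shapes: the labels need to be an m×1 column so that the error term stays m×1, and the gradient needs a matrix product rather than an element-wise `*`. The following is a minimal self-contained sketch of that update. The function name `batch_grad_ascent` and the toy data are made up for illustration and are not the watermelon data.

```python
import numpy as np

def sigmoid(z):
    # Logistic function, applied element-wise
    return 1.0 / (1.0 + np.exp(-z))

def batch_grad_ascent(X, y, alpha=0.1, max_cycle=500):
    """Full-batch gradient ascent on the logistic log-likelihood."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float).reshape(-1, 1)   # m x 1 column vector
    w = np.ones((X.shape[1], 1))                    # n x 1 weights
    for _ in range(max_cycle):
        h = sigmoid(X @ w)                          # m x 1 predictions
        w = w + alpha * (X.T @ (y - h))             # n x 1 gradient step
    return w

# Hypothetical toy data: bias column plus two features, linearly separable
X_toy = np.array([[1.0, 0.1, 0.2],
                  [1.0, 0.2, 0.1],
                  [1.0, 0.8, 0.9],
                  [1.0, 0.9, 0.7]])
y_toy = np.array([0, 0, 1, 1])

w_toy = batch_grad_ascent(X_toy, y_toy)
pred_toy = (sigmoid(X_toy @ w_toy) >= 0.5).astype(int).ravel()
```

If the labels are left as a 1-D array of shape `(m,)`, NumPy broadcasting turns `y - h` into an m×m matrix instead of raising an error, so the explicit `reshape(-1, 1)` is worth keeping.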

# Stochastic gradient descent

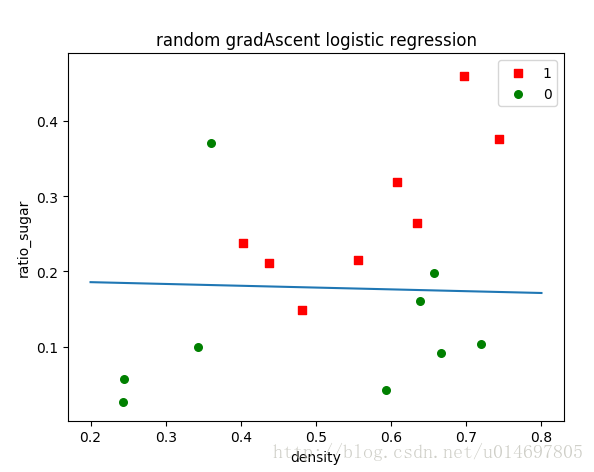

def stocGradAscend1(dataMat, labelMat, numIter=50):
    print(dataMat)
    print(labelMat)
    m, n = np.shape(dataMat)
    weights = np.ones(n)
    for j in range(numIter):
        # Indices not yet visited in this pass; list() so entries can be deleted
        dataIndex = list(range(m))
        for i in range(m):
            # Step size shrinks as j and i grow but never drops below 0.2
            alpha = 40 / (1.0 + j + i) + 0.2
            # Draw one of the remaining samples at random
            randIndex_temp = int(np.random.uniform(0, len(dataIndex)))
            randIndex = dataIndex[randIndex_temp]
            h = sigmoid(np.sum(dataMat[randIndex] * weights))
            error = labelMat[randIndex] - h
            weights = weights + alpha * error * dataMat[randIndex]
            del dataIndex[randIndex_temp]
    return weights
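Drawing a random index and then deleting it from a list amounts to visiting the samples in a random order once per pass. As a hedged alternative, the same idea can be written with a shuffled index array. The sketch below swaps in `numpy.random.default_rng` and `Generator.permutation` for the index-list bookkeeping and keeps the step-size schedule from `stocGradAscend1`. The name `sgd_logistic`, the `seed` parameter, and the toy data are assumptions added for illustration.

```python
import numpy as np

def sigmoid(z):
    # Logistic function, applied element-wise
    return 1.0 / (1.0 + np.exp(-z))

def sgd_logistic(X, y, num_iter=50, seed=0):
    """Stochastic gradient ascent; each pass visits every sample once in random order."""
    rng = np.random.default_rng(seed)       # seeded so runs are repeatable
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    m, n = X.shape
    w = np.ones(n)
    for j in range(num_iter):
        # A fresh random ordering of 0..m-1 replaces the pick-and-delete list
        for i, idx in enumerate(rng.permutation(m)):
            alpha = 40 / (1.0 + j + i) + 0.2    # same schedule as stocGradAscend1
            h = sigmoid(X[idx] @ w)             # scalar prediction for one sample
            w = w + alpha * (y[idx] - h) * X[idx]
    return w

# Hypothetical toy data: bias column plus two features
X_toy = np.array([[1.0, 0.1, 0.2],
                  [1.0, 0.2, 0.1],
                  [1.0, 0.8, 0.9],
                  [1.0, 0.9, 0.7]])
y_toy = np.array([0, 0, 1, 1])

w_sgd = sgd_logistic(X_toy, y_toy)
```

The initial step size of about 40 is large, so the weights can swing widely in the first passes; lowering the constant is a reasonable thing to try if the fitted line looks unstable between runs.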

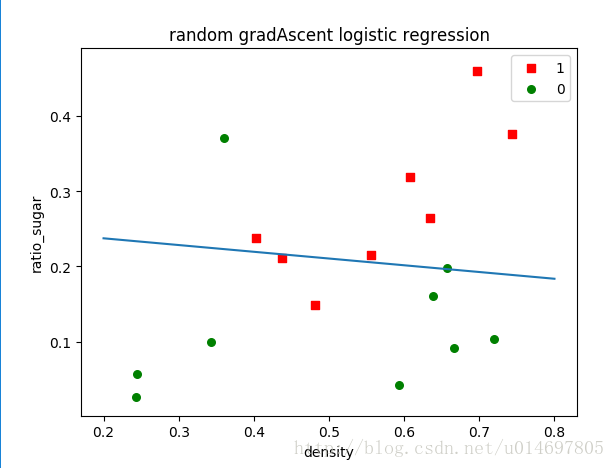

def plotBestFit(weights):
    # Reads the module-level dataMat and labelMat
    dataArr = np.array(dataMat)
    n = np.shape(dataArr)[0]
    xcord1 = []
    ycord1 = []
    xcord2 = []
    ycord2 = []
    for i in range(n):
        if int(labelMat[i]) == 0:
            xcord2.append(dataArr[i, 1])
            ycord2.append(dataArr[i, 2])
        else:
            xcord1.append(dataArr[i, 1])
            ycord1.append(dataArr[i, 2])
    fig = plt.figure()
    ax = fig.add_subplot(111)
    # Scatter plot of the two classes
    ax.scatter(xcord1, ycord1, s=30, c='red', marker='s', label='1')
    ax.scatter(xcord2, ycord2, s=30, c='g', label='0')
    # Points on the decision boundary w0 + w1*x + w2*y = 0
    x = np.arange(0.2, 0.8, 0.1)
    y = np.array((-weights[0] - weights[1] * x) / weights[2])
    ax.plot(x, y)
    plt.xlabel('density')
    plt.ylabel('ratio_sugar')
    plt.legend(loc='upper right')
    plt.title("random gradAscent logistic regression")
    plt.show()
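The straight line drawn by `plotBestFit` is the set of points where the model outputs exactly 0.5. A short derivation, with $x_1$ standing for density and $x_2$ for sugar ratio (symbols chosen here for illustration) and assuming $w_2 \neq 0$:

```latex
\begin{aligned}
\sigma(w_0 + w_1 x_1 + w_2 x_2) = \tfrac{1}{2}
\;&\Longleftrightarrow\; w_0 + w_1 x_1 + w_2 x_2 = 0 \\
\;&\Longleftrightarrow\; x_2 = -\frac{w_0 + w_1 x_1}{w_2}
\end{aligned}
```

This is the expression `(-weights[0] - weights[1]*x) / weights[2]` evaluated in the plotting code.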

# Function calls
weights = stocGradAscend1(dataMat, labelMat)
plotBestFit(weights)
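The post judges the fit from the plot alone. A numeric check can complement it; the sketch below is one possible way to turn weights into 0/1 predictions and a training accuracy. The helpers `predict` and `accuracy`, the 0.5 threshold, and the demo numbers are all hypothetical and are not taken from the original code or data.

```python
import numpy as np

def predict(X, weights, threshold=0.5):
    """Return 0/1 labels for a design matrix whose first column is the bias term."""
    X = np.asarray(X, dtype=float)
    w = np.asarray(weights, dtype=float).reshape(-1)   # accepts shape (n,) or (n, 1)
    prob = 1.0 / (1.0 + np.exp(-(X @ w)))
    return (prob >= threshold).astype(int)

def accuracy(X, y, weights):
    """Fraction of samples whose predicted label equals y."""
    return float(np.mean(predict(X, weights) == np.asarray(y).astype(int)))

# Made-up weights and points, for illustration only
w_demo = np.array([-1.0, 1.0, 1.0])
X_demo = np.array([[1.0, 0.2, 0.3],
                   [1.0, 0.9, 0.8],
                   [1.0, 0.4, 0.4]])
y_demo = np.array([0, 1, 1])

pred_demo = predict(X_demo, w_demo)
acc_demo = accuracy(X_demo, y_demo, w_demo)
```

With the variables from this post, the equivalent call would be `accuracy(dataMat, labelMat, weights)`, which works for both the `(n,)` weights from `stocGradAscend1` and the `(n, 1)` weights from `gradAscend`.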

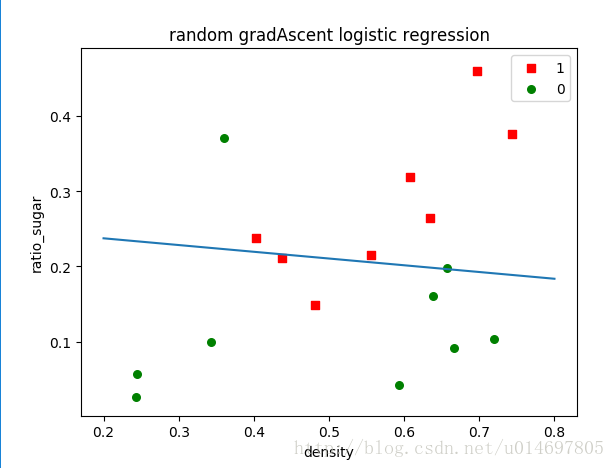

Result of the gradient descent method:

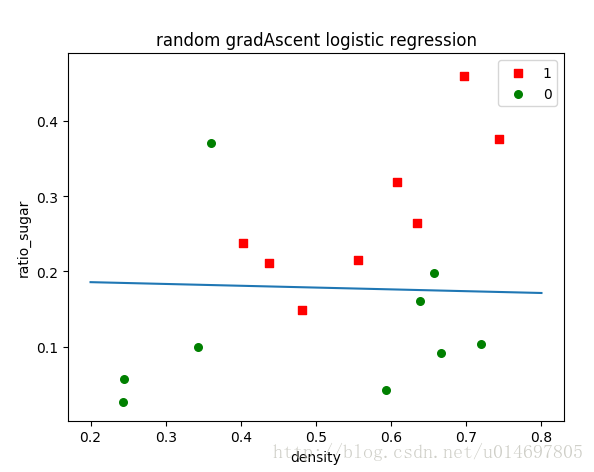

Result of the stochastic gradient descent method:
